Distribution-Specific Hardness of Learning Neural Networks

Although neural networks are routinely and successfully trained in practice using simple gradient-based methods, most existing theoretical results are negative, showing that learning such networks is difficult, in a worst-case sense over all data dis…

Authors: Ohad Shamir

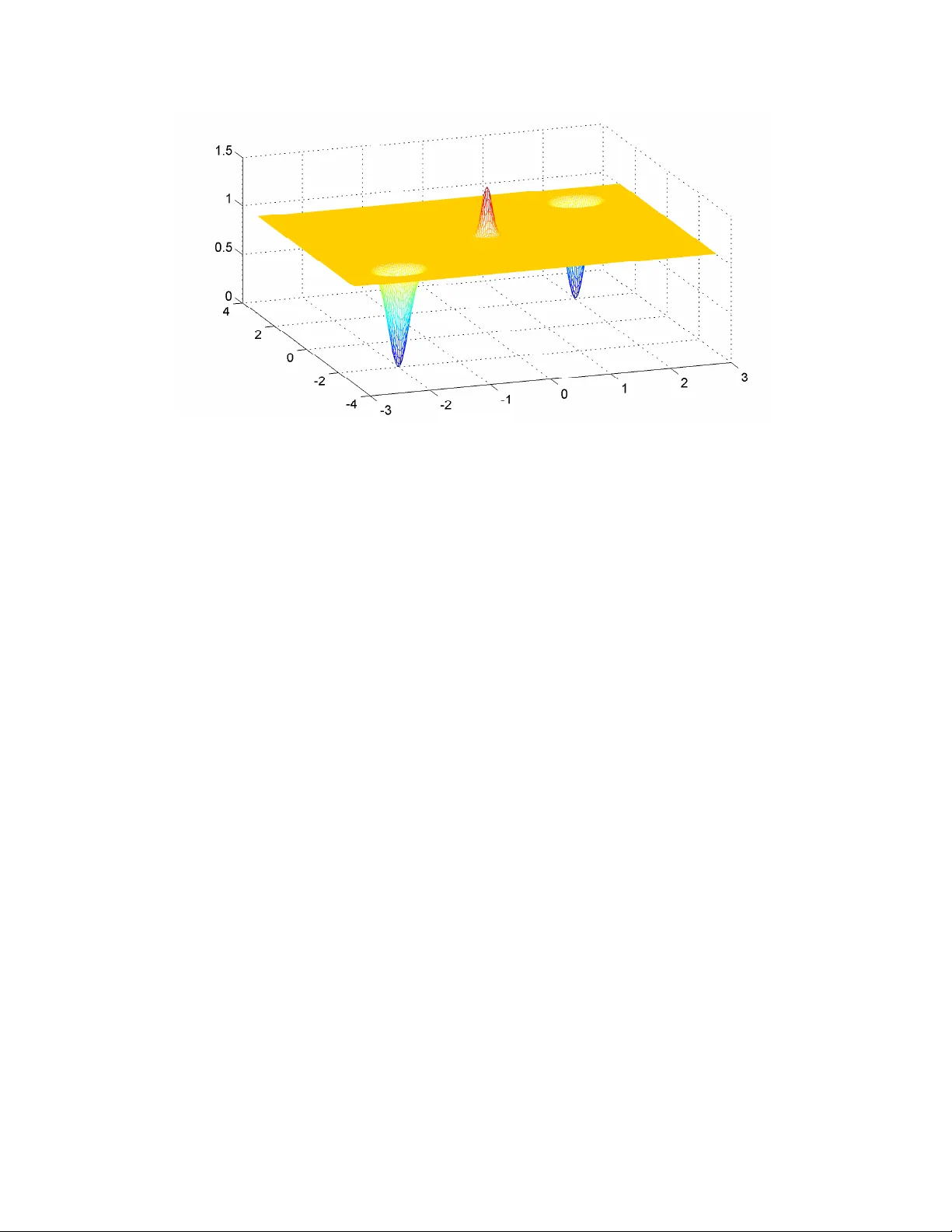

Ohad Shamir
Weizmann Institute of Science
ohad.shamir@weizmann.ac.il

Abstract

Although neural networks are routinely and successfully trained in practice using simple gradient-based methods, most existing theoretical results are negative, showing that learning such networks is difficult, in a worst-case sense over all data distributions. In this paper, we take a more nuanced view, and consider whether specific assumptions on the “niceness” of the input distribution, or “niceness” of the target function (e.g. in terms of smoothness, non-degeneracy, incoherence, random choice of parameters etc.), are sufficient to guarantee learnability using gradient-based methods. We provide evidence that neither class of assumptions alone is sufficient: On the one hand, for any member of a class of “nice” target functions, there are difficult input distributions. On the other hand, we identify a family of simple target functions, which are difficult to learn even if the input distribution is “nice”. To prove our results, we develop some tools which may be of independent interest, such as extending Fourier-based hardness techniques developed in the context of statistical queries [3], from the Boolean cube to Euclidean space and to more general classes of functions.

1 Introduction

Artificial neural networks have seen a dramatic resurgence in recent years, and have proven to be a highly effective machine learning method in computer vision, natural language processing, and other challenging AI problems. Moreover, successfully training such networks is routinely performed using simple and scalable gradient-based methods, in particular stochastic gradient descent. Despite this practical success, our theoretical understanding of the computational tractability of such methods is quite limited, with most results being negative.
For example, as discussed in [17], learning even depth-2 networks in a formal PAC learning framework is computationally hard in the worst case, and even if the algorithm is allowed to return arbitrary predictors. As common in such worst-case results, these are proven using rather artificial constructions, quite different than the real-world problems on which neural networks are highly successful. In particular, since the PAC framework focuses on distribution-free learning (where the distribution generating the examples is unknown and rather arbitrary), the hardness results rely on carefully crafted distributions, which allow one to relate the learning problem to (say) an NP-hard problem or breaking a cryptographic system.

However, what if we insist on “natural” distributions? Is it possible to show that neural network learning becomes computationally tractable? Can we show that such networks can be learned using the standard heuristics employed in practice, such as stochastic gradient descent?

To understand what a “natural” distribution refers to, we need to separate the distribution over examples (given as input-output pairs $(\mathbf{x}, y)$) into two components:

• The input distribution $p(\mathbf{x})$: “Natural” input distributions on Euclidean space tend to have properties such as smoothness, non-degeneracy, incoherence etc.

• The target function $h(\mathbf{x})$: In PAC learning, it is assumed that the output $y$ equals $h(\mathbf{x})$, where $h$ is some unknown target function from the hypothesis class we are considering. In studying neural networks, it is common to consider the class of all networks which share some fixed architecture (e.g. feedforward networks of a given depth and width). However, one may argue that the parameters of real-world networks (e.g. the weights of each neuron) are not arbitrary, but exhibit various features such as non-degeneracy or some “random like” appearance.
Indeed, networks with a random structure have been shown to be more amenable to analysis in various situations (see for instance [6, 2, 5] and references therein).

Empirical evidence clearly suggests that many pairs of input distributions and target functions are computationally tractable, using standard methods. However, how do we characterize these pairs? Would appropriate assumptions on one of them be sufficient to show learnability?

In this paper, we investigate these two components, and provide evidence that neither one of them alone is enough to guarantee computationally tractable learning, at least with methods resembling those used in practice. Specifically, we focus on simple, shallow ReLU networks, assume that the data can be perfectly predicted by some such network, and even allow over-specification (a.k.a. improper learning), in the sense that we allow the learning algorithm to output a predictor which is possibly larger and more complex than the target function (this technique increases the power of the learner, and was shown to make the learning problem easier in theory and in practice [17, 20, 21]). Even under such favorable conditions, we show the following:

• Hardness for “natural” target functions. For each individual target function coming from a simple class of small, shallow ReLU networks (even if its parameters are chosen randomly or in some other oblivious way), we show that no algorithm invariant to linear transformations can successfully learn it w.r.t. all input distributions in polynomial time (this corresponds, for instance, to standard gradient-based methods together with data whitening or preconditioning). This result is based on a reduction from learning intersections of halfspaces.
Although that problem is known to be hard in the worst-case over both input distributions and target functions, we essentially show that invariant algorithms as above do not “distinguish” between worst-case and average-case: If one can learn a particular target function with such an algorithm, then the algorithm can learn nearly all target functions in that class.

• Hardness for “natural” input distributions. We show that target functions of the form $\mathbf{x} \mapsto \psi(\langle \mathbf{w}, \mathbf{x} \rangle)$ for any periodic $\psi$ are generally difficult to learn using gradient-based methods, even if the input distribution is fixed and belongs to a very broad class of smooth input distributions (including, for instance, Gaussians and mixtures of Gaussians). Note that such functions can be constructed by simple shallow networks, and can be seen as an extension of generalized linear models [18]. Unlike the previous result, which relies on a computational hardness assumption, the results here are geometric in nature, and imply that the gradient of the objective function, nearly everywhere, contains virtually no signal on the underlying target function. Therefore, any algorithm which relies on gradient information cannot learn such functions. Interestingly, the difficulty here is not in having a plethora of spurious local minima or saddle points: the associated stochastic optimization problem may actually have no such critical points. Instead, the objective function may exhibit properties such as flatness nearly everywhere, unless one is already very close to the global optimum. This highlights a potential pitfall in non-convex learning, which occurs already for a slight extension of generalized linear models, and even for “nice” input distributions.
Together, these results indicate that in order to explain the practical success of neural network learning with gradient-based methods, one would need to employ a careful combination of assumptions on both the input distribution and the target function, and that results with even a “partially” distribution-free flavor (which are common, for instance, in convex learning problems) may be difficult to attain here.

To prove our results, we develop some tools which may be of independent interest. In particular, the techniques used to prove hardness of learning functions of the form $\mathbf{x} \mapsto \psi(\langle \mathbf{w}, \mathbf{x} \rangle)$ are based on Fourier analysis, and have some close connections to hardness results on learning parities in the well-known framework of learning from statistical queries [14]: In both cases, one essentially shows that the Fourier transform of the target function has very small support, and hence does not “correlate” with most functions, making it difficult to learn using certain methods. However, we consider a more general and arguably more natural class of input distributions over Euclidean space, rather than distributions on the Boolean cube. In a sense, we show that learning general periodic functions over Euclidean space is difficult (at least with gradient-based methods), for the same reasons that learning parities over the Boolean cube is difficult in the statistical queries framework.

Related Work

Recent years have seen quite a few papers on the theory of neural network learning. Below, we only briefly mention those most relevant to our paper.

In a very elegant work, Janzamin et al. [13] have shown that a certain method based on tensor decompositions allows one to provably learn simple neural networks by a combination of assumptions on the input distribution and the target function.
However, a drawback of their method is that it requires rather precise knowledge of the input distribution and its derivatives, which is rarely available in practice. In contrast, our focus is on algorithms which do not utilize such knowledge. Other works which show computationally-efficient learnability of certain neural networks under sufficiently strong distributional assumptions include [2, 17, 1, 22].

In the context of learning functions over the Boolean cube, it is known that even if we restrict ourselves to a particular input distribution (as long as it satisfies some mild conditions), it is difficult to learn parity functions using statistical query algorithms [14, 3]. Moreover, it was recently shown that stochastic gradient descent methods can be approximately posed as such algorithms [9]. Since parities can be implemented with small real-valued networks, this implies that for “most” input distributions on the Boolean cube, there are neural networks which are unlikely to be learnable with gradient-based methods. However, data provided to neural networks in practice are not in the form of Boolean vectors, but rather vectors of floating-point numbers. Moreover, some assumptions on the input distribution, such as smoothness and Gaussianity, only make sense once we consider the support to be Euclidean space rather than the Boolean cube. Perhaps these are enough to guarantee computational tractability? A contribution of this paper is to show that this is not the case, and to formally demonstrate how phenomena similar to the Boolean case also occur in Euclidean space, using appropriate target functions and distributions.

Finally, we note that [15] provides improper-learning hardness results, which hold even for a standard Gaussian distribution on Euclidean space, and for any algorithm.
However, unlike our paper, their focus is on hardness of agnostic learning (where the target function is arbitrary and does not have to correspond to a given class), the results are specific to the standard Gaussian distribution, and the proofs are based on a reduction from the Boolean case.

The paper is structured as follows: In Sec. 2, we formally present some notation and concepts used throughout the paper. In Sec. 4, we provide our hardness results for natural input distributions, and in Sec. 3, we provide our hardness results for natural target functions. All proofs are presented in Sec. 5.

2 Preliminaries

We generally let bold-faced letters denote vectors. Given a complex-valued number $z = a + ib$, we let $\bar{z} = a - ib$ denote its complex conjugate, and $|z| = \sqrt{a^2 + b^2}$ denote its modulus. Given a function $f$, we let $\nabla f$ denote its gradient and $\nabla^2 f$ denote its Hessian (assuming they exist).

Neural Networks. The focus of our results will be on learning predictors which can be described by simple and shallow (depth 2 or 3) neural networks. A standard feedforward neural network is composed of neurons, each of which computes the mapping $\mathbf{x} \mapsto \sigma(\langle \mathbf{w}, \mathbf{x} \rangle + b)$, where $\mathbf{w}, b$ are parameters and $\sigma$ is a scalar activation function, for example the popular ReLU function $[z]_+ = \max\{0, z\}$. These neurons are arranged in parallel in layers, so the output of each layer can be compactly represented as $\mathbf{x} \mapsto \sigma(W^\top \mathbf{x} + \mathbf{b})$, where $W$ is a matrix (each column corresponding to the parameter vector of one of the neurons), $\mathbf{b}$ is a vector, and $\sigma$ applies an activation function on the coordinates of $W^\top \mathbf{x}$. In vanilla feedforward networks, such layers are connected to each other, so given an input $\mathbf{x}$, the output equals

$$\sigma_k\left(W_k^\top \sigma_{k-1}\left(W_{k-1}^\top \ldots \sigma_2\left(W_2^\top \sigma_1\left(W_1^\top \mathbf{x} + \mathbf{b}_1\right) + \mathbf{b}_2\right) \ldots + \mathbf{b}_{k-1}\right) + \mathbf{b}_k\right),$$

where $W_i, \mathbf{b}_i, \sigma_i$ are the parameters of the $i$-th layer.
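The layered mapping above can be sketched in a few lines of plain Python. This is only an illustrative sketch and not code from the paper: the function names `layer` and `forward`, the list-of-lists matrix layout, and the toy depth-2 network (which computes $|x|$ with two ReLU neurons) are all assumptions made for the example.

```python
from typing import Callable, List, Tuple

Vector = List[float]
Matrix = List[List[float]]  # W[r][j]: row r, column j; column j holds neuron j's weights
Layer = Tuple[Matrix, Vector, Callable[[float], float]]


def relu(z: float) -> float:
    """The ReLU activation [z]_+ = max{0, z}."""
    return max(0.0, z)


def identity(z: float) -> float:
    return z


def layer(W: Matrix, b: Vector, sigma: Callable[[float], float], x: Vector) -> Vector:
    """Compute sigma(W^T x + b), applying sigma coordinate-wise."""
    return [
        sigma(sum(W[r][j] * x[r] for r in range(len(x))) + b[j])
        for j in range(len(b))
    ]


def forward(layers: List[Layer], x: Vector) -> Vector:
    """Feed x through the layers in order (layer 1 first, layer k last)."""
    for W, b, sigma in layers:
        x = layer(W, b, sigma, x)
    return x


# Hypothetical depth-2 network on scalar inputs: |x| = [x]_+ + [-x]_+
abs_net: List[Layer] = [
    ([[1.0, -1.0]], [0.0, 0.0], relu),   # W_1 is 1 x 2: two ReLU neurons
    ([[1.0], [1.0]], [0.0], identity),   # W_2 is 2 x 1: sum the two outputs
]

print(forward(abs_net, [-3.0]))
```

In this sketch the depth is the length of the `layers` list and the width is the largest number of columns among the matrices.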
The number of layers $k$ is denoted as the depth of the network, and the maximal number of columns in $W_i$ is denoted as the width of the network. For simplicity, in this paper we focus on networks which output a real-valued number, and measure our performance with respect to the squared loss (that is, given an input-output example $(\mathbf{x}, y)$, where $\mathbf{x}$ is a vector and $y \in \mathbb{R}$, the loss of a predictor $p$ on the example is $(p(\mathbf{x}) - y)^2$).

Gradient-Based Methods. Gradient-based methods are a class of optimization algorithms for solving problems of the form $\min_{\mathbf{w} \in \mathcal{W}} F(\mathbf{w})$ (for some given function $F$ and assuming $\mathbf{w}$ is a vector in Euclidean space), based on computing $\nabla F(\mathbf{w})$ or approximations of $\nabla F(\mathbf{w})$ at various points $\mathbf{w}$. Perhaps the simplest such algorithm is gradient descent, which initializes deterministically or randomly at some point $\mathbf{w}_1$, and iteratively performs updates of the form $\mathbf{w}_{t+1} = \mathbf{w}_t - \eta_t \nabla F(\mathbf{w}_t)$, where $\eta_t > 0$ is a step size parameter. In the context of statistical supervised learning problems, we are usually interested in solving problems of the form $\min_{\mathbf{w} \in \mathcal{W}} \mathbb{E}_{\mathbf{x} \sim \mathcal{D}}[\ell(f(\mathbf{w}, \mathbf{x}), h(\mathbf{x}))]$, where $\{\mathbf{x} \mapsto f(\mathbf{w}, \mathbf{x}) : \mathbf{w} \in \mathcal{W}\}$ is some class of predictors, $h$ is a target function, and $\ell$ is some loss function. Since the distribution $\mathcal{D}$ is generally unknown, one cannot compute the gradient of this function w.r.t. $\mathbf{w}$ directly, but can still compute approximations, e.g. by sampling one $\mathbf{x}$ at random and computing the gradient (or sub-gradient) of $\ell(f(\mathbf{w}, \mathbf{x}), h(\mathbf{x}))$. The same approach can be used to solve empirical approximations of the above, i.e. $\min_{\mathbf{w} \in \mathcal{W}} \frac{1}{m} \sum_{i=1}^{m} \ell(f(\mathbf{w}, \mathbf{x}_i), h(\mathbf{x}_i))$ for some dataset $\{(\mathbf{x}_i, h(\mathbf{x}_i))\}_{i=1}^{m}$. These are generally known as stochastic gradient methods, and are one of the most popular and scalable machine learning methods in practice.

PAC Learning. For the results of Sec.
3, we will rely on the following standard definition of PAC learning with respect to Boolean functions: Given a hypothesis class $\mathcal{H}$ of functions from $\{0,1\}^d$ to $\{0,1\}$, we say that a learning algorithm PAC-learns $\mathcal{H}$ if for any $\epsilon \in (0,1)$, any distribution $\mathcal{D}$ over $\{0,1\}^d$, and any $h^\star \in \mathcal{H}$, if the algorithm is given oracle access to i.i.d. samples $(\mathbf{x}, h^\star(\mathbf{x}))$ where $\mathbf{x}$ is sampled according to $\mathcal{D}$, then in time $\text{poly}(d, 1/\epsilon)$, the algorithm returns a function $f : \{0,1\}^d \mapsto \{0,1\}$ such that $\Pr_{\mathbf{x} \sim \mathcal{D}}(f(\mathbf{x}) \neq h^\star(\mathbf{x})) \leq \epsilon$ with high probability (for our purposes, it will be enough to consider any constant close to 1). Note that in the definition above, we allow $f$ not to belong to the hypothesis class $\mathcal{H}$. This is often denoted as “improper” learning, and allows the learning algorithm more power than in “proper” learning, where $f$ must be a member of $\mathcal{H}$.

Fourier Analysis on $\mathbb{R}^d$. In the analysis of Sec. 4, we will consider functions from $\mathbb{R}^d$ to the reals $\mathbb{R}$ or complex numbers $\mathbb{C}$, and view them as elements in the Hilbert space $L^2(\mathbb{R}^d)$ of square integrable functions, equipped with the inner product

$$\langle f, g \rangle = \int_{\mathbf{x}} f(\mathbf{x}) \cdot \overline{g(\mathbf{x})} \, d\mathbf{x}$$

and the norm $\|f\| = \sqrt{\langle f, f \rangle}$. We use $fg$ or $f \cdot g$ as shorthand for the function $\mathbf{x} \mapsto f(\mathbf{x}) g(\mathbf{x})$. Any function $f \in L^2(\mathbb{R}^d)$ has a Fourier transform $\hat{f} \in L^2(\mathbb{R}^d)$, which for absolutely integrable functions can be defined as

$$\hat{f}(\mathbf{w}) = \int \exp(-2\pi i \langle \mathbf{x}, \mathbf{w} \rangle) f(\mathbf{x}) \, d\mathbf{x}, \qquad (1)$$

where $\exp(iz) = \cos(z) + i \cdot \sin(z)$, $i$ being the imaginary unit. In the proofs, we will use the following well-known properties of the Fourier transform:

• Linearity: For scalars $a, b$ and functions $f, g$, $\widehat{af + bg} = a\hat{f} + b\hat{g}$.

• Isometry: $\langle f, g \rangle = \langle \hat{f}, \hat{g} \rangle$ and $\|f\| = \|\hat{f}\|$.

• Convolution: $\widehat{fg} = \hat{f} * \hat{g}$, where $*$ denotes the convolution operation: $(f * g)(\mathbf{w}) = \int f(\mathbf{z}) \cdot g(\mathbf{w} - \mathbf{z}) \, d\mathbf{z}$.
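As a sanity check on the convention in Eq. (1), the transform and the isometry property can be verified numerically in one dimension. The sketch below is an illustration only and is not taken from the paper: the helper names, the integration ranges and the grid sizes are assumptions. It relies on the standard fact that, under this convention, the function $x \mapsto \exp(-\pi x^2)$ is its own Fourier transform.

```python
import cmath
import math


def fourier_transform(f, w, lo=-6.0, hi=6.0, n=1000):
    """Midpoint-rule approximation of Eq. (1) in one dimension."""
    dx = (hi - lo) / n
    total = 0j
    for k in range(n):
        x = lo + (k + 0.5) * dx
        total += cmath.exp(-2j * math.pi * x * w) * f(x)
    return total * dx


def squared_norm(g, lo=-4.0, hi=4.0, n=200):
    """Midpoint-rule approximation of ||g||^2, the integral of |g(x)|^2."""
    dx = (hi - lo) / n
    return sum(abs(g(lo + (k + 0.5) * dx)) ** 2 for k in range(n)) * dx


def gaussian(x):
    """exp(-pi x^2), which equals its own transform under Eq. (1)."""
    return math.exp(-math.pi * x * x)


def gaussian_hat(w):
    """Numerical Fourier transform of `gaussian`."""
    return fourier_transform(gaussian, w)


# Pointwise check: the transform at 0.7 should match gaussian(0.7).
pointwise_error = abs(gaussian_hat(0.7) - gaussian(0.7))

# Isometry check: ||f||^2 and ||f_hat||^2 should both be close to 1/sqrt(2).
norm_f = squared_norm(gaussian)
norm_f_hat = squared_norm(gaussian_hat)

print(pointwise_error, norm_f, norm_f_hat)
```

The truncation to a bounded interval is harmless here only because the Gaussian decays so quickly; other functions would need different ranges and grids.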
3 Natural Target Functions

In this section, we consider simple target functions of the form $\mathbf{x} \mapsto \left[\sum_{i=1}^{n} [\langle \mathbf{w}_i, \mathbf{x} \rangle]_+\right]_{[0,1]}$, where $[z]_+ = \max\{0, z\}$ is the ReLU function, and $[z]_{[0,1]} = \min\{1, \max\{0, z\}\}$ is the clipping operation on the interval $[0,1]$. This corresponds to depth-2 networks with no bias in the first layer, and where the outputs of the first layer are simply summed and moved through a clipping non-linearity (this operation can also be easily implemented using a second layer composed of two ReLU neurons). Letting $W = [\mathbf{w}_1, \ldots, \mathbf{w}_n]$, we can write such predictors as $\mathbf{x} \mapsto h(W^\top \mathbf{x})$ for an appropriate fixed function $h$.

Our goal would be to show that for such a target function, with virtually any choice of $W$ (essentially, as long as its columns are linearly independent), and any polynomial-time learning algorithm satisfying some conditions, there exists an input distribution on which it must fail.

As the careful reader may have noticed, it is impossible to provide such a target-function-specific result which holds for any algorithm. Indeed, if we fix the target function in advance, we can always “learn” by returning the target function, regardless of the training data. Thus, imposing some constraints on the algorithm is necessary. Specifically, we will consider algorithms which exhibit certain natural invariances to the coordinate system used. One very natural invariance is with respect to orthogonal transformations: For example, if we rotate the input instances $\mathbf{x}_i$ in a fixed manner, then an orthogonally-invariant algorithm will return a predictor which still makes the same predictions on those instances. Formally, this invariance is defined as follows:

Definition 1. Let $\mathcal{A}$ be an algorithm which inputs a dataset $\{\mathbf{x}_i, y_i\}_{i=1}^{m}$ (where $\mathbf{x}_i \in \mathbb{R}^d$) and outputs a predictor $\mathbf{x} \mapsto f(W^\top \mathbf{x})$ (for some function $f$ and matrix $W$ dependent on the dataset).
We say that $\mathcal{A}$ is orthogonally-invariant, if for any orthogonal matrix $M \in \mathbb{R}^{d \times d}$, if we feed the algorithm with $\{M\mathbf{x}_i, y_i\}_{i=1}^{m}$, the algorithm returns a predictor $\mathbf{x} \mapsto f(W_M^\top \mathbf{x})$, where $f$ is the same as before and $W_M$ is such that $W_M^\top M \mathbf{x}_i = W^\top \mathbf{x}_i$ for all $\mathbf{x}_i$.

Remark 1. The definition as stated refers to deterministic algorithms. For stochastic algorithms, we will understand orthogonal invariance to mean orthogonal invariance conditioned on any realization of the algorithm’s random coin flips.

For example, standard gradient and stochastic gradient descent methods (possibly with coordinate-oblivious regularization, such as $L_2$ regularization) can be easily shown to be orthogonally-invariant (see footnote 1). However, for our results we will need to make a somewhat stronger invariance assumption, namely invariance to general invertible linear transformations of the data (not necessarily just orthogonal). This is formally defined as follows:

Definition 2. An algorithm $\mathcal{A}$ is linearly-invariant, if it satisfies Definition 1 for any invertible matrix $M \in \mathbb{R}^{d \times d}$ (rather than just orthogonal ones).

One well-known example of such an algorithm (which is also invariant to affine transformations) is the Newton method [4]. More relevant to our purposes, linear invariance occurs whenever an orthogonally-invariant algorithm preconditions or “whitens” the data so that its covariance has a fixed structure (e.g. the identity matrix, possibly after a dimensionality reduction if the data is rank-deficient). For example, even though gradient descent methods are not linearly invariant, they become so if we precede them by such a preconditioning step. This is formalized in the following theorem:

Theorem 1. Let $\mathcal{A}$ be any algorithm which given $\{\mathbf{x}_i, y_i\}_{i=1}^{m}$, computes the whitening matrix $P = D^{-1} U^\top$ (where $X = [\mathbf{x}_1 \ \mathbf{x}_2 \ \ldots \ \mathbf{x}_m]$, and $X = U D V^\top$ is a thin SVD decomposition of $X$; see footnote 2), feeds $\{P\mathbf{x}_i, y_i\}_{i=1}^{m}$ to an orthogonally-invariant algorithm, and given the output predictor $\mathbf{x} \mapsto f(W^\top \mathbf{x})$, returns the predictor $\mathbf{x} \mapsto f((P^\top W)^\top \mathbf{x})$. Then $\mathcal{A}$ is linearly-invariant.

It is easily verified that the covariance matrix of the transformed instances $P\mathbf{x}_1, \ldots, P\mathbf{x}_m$ is the $r \times r$ identity matrix (where $r = \text{Rank}(X)$), so this is indeed a whitening transform. We note that whitening is a very common preprocessing heuristic, and even when not done explicitly, scalable approximate whitening and preconditioning methods (such as Adagrad [8] and batch normalization [12]) are very common and widely recognized as useful for training neural networks.

To show our result, we rely on a reduction from a PAC-learning problem known to be computationally hard, namely learning intersections of halfspaces. These are Boolean predictors parameterized by $\mathbf{w}_1, \ldots, \mathbf{w}_n \in \mathbb{R}^d$ and $b_1, \ldots, b_n \in \mathbb{R}$, which compute a mapping of the form

$$\mathbf{x} \mapsto \bigwedge_{i=1}^{n} \left(\langle \mathbf{w}_i, \mathbf{x} \rangle \geq b_i\right)$$

(where we let 1 correspond to ‘true’ and 0 to ‘false’). The problem of PAC-learning intersections of halfspaces over the Boolean cube ($\mathbf{x} \in \{0,1\}^d$) has been well-studied. In particular, two known hardness results are the following:

• Klivans and Sherstov [16] show that under a certain well-studied cryptographic assumption (hardness of finding unique shortest vectors in a high-dimensional lattice), no algorithm can PAC-learn intersections of $n_d = d^\delta$ halfspaces (where $\delta$ is any positive constant), even if the coordinates of $\mathbf{w}_i$ and $b_i$ are all integers, and $\max_i \|(\mathbf{w}_i, b_i)\| \leq \text{poly}(d)$.
• Daniely and Shalev-Shwartz [7] show that under an assumption related to the hardness of refuting random K-SAT formulas, no algorithm can PAC-learn intersections of $n_d = \omega(1)$ halfspaces (as $d \to \infty$), even if the coordinates of $\mathbf{w}_i$ and $b_i$ are all integers, and $\max_i \|(\mathbf{w}_i, b_i)\| \leq O(d)$.

Footnote 1: Essentially, this is because the gradient of any function $g(W^\top \mathbf{x}) = g(\langle \mathbf{w}_1, \mathbf{x} \rangle, \ldots, \langle \mathbf{w}_k, \mathbf{x} \rangle)$ w.r.t. any $\mathbf{w}_i$ is proportional to $\mathbf{x}$. Thus, if we multiply $\mathbf{x}$ by an orthogonal $M$, the gradient also gets multiplied by $M$. Since $M^\top M = I$, the inner products of instances $\mathbf{x}$ and gradients remain the same. Therefore, by induction, it can be shown that any algorithm which operates by incrementally updating some iterate by linear combinations of gradients will be rotationally invariant.

Footnote 2: That is, if $X$ is of size $d \times m$, then $U$ is of size $d \times \text{Rank}(X)$, $D$ is of size $\text{Rank}(X) \times \text{Rank}(X)$, and $V$ is of size $m \times \text{Rank}(X)$.

In the theorem below, we will use the result of [7], which applies to an intersection of a smaller number of halfspaces, and with smaller norms. However, similar results can be shown using [16], at the cost of worse polynomial dependencies on $d$.

The main result of this section is the following:

Theorem 2. Consider any network $h(W_\star^\top \mathbf{x}) = \left[\sum_{i=1}^{n_d} [\langle \mathbf{w}^\star_i, \mathbf{x} \rangle]_+\right]_{[0,1]}$ (where $W_\star = [\mathbf{w}^\star_1, \ldots, \mathbf{w}^\star_n]$), which satisfies the following:

• $n_d \geq \omega(1)$ as $d \to \infty$

• $\max_i \|\mathbf{w}^\star_i\| \leq O(d)$

• $\mathbf{w}^\star_1 \ldots \mathbf{w}^\star_n$ are linearly independent, so the smallest singular value $s_{\min}(W_\star)$ of $W_\star$ is strictly positive.

Then under the assumption stated in [7], there is no linearly-invariant algorithm which for any $\epsilon > 0$ and any distribution $\mathcal{D}$ over vectors of norm at most $\frac{O(d\sqrt{d n_d})}{\min\{1, s_{\min}(W_\star)\}}$, given only access to samples $(\mathbf{x}, h(W_\star^\top \mathbf{x}))$ where $\mathbf{x} \sim \mathcal{D}$, runs in time $\text{poly}(d, 1/\epsilon)$ and returns with high probability a predictor $\mathbf{x} \mapsto f(W^\top \mathbf{x})$ such that

$$\mathbb{E}_{\mathbf{x} \sim \mathcal{D}}\left[\left(f(W^\top \mathbf{x}) - h(W_\star^\top \mathbf{x})\right)^2\right] \leq \epsilon.$$

Note that the result holds even if the returned predictor $f(W^\top \mathbf{x})$ has a different structure than $h_{W_\star}(\cdot)$, and $W$ is of a larger size than $W_\star$. Thus, it applies even if the algorithm is allowed to train a larger network or more complicated predictor than $h_{W_\star}(\cdot)$.

The proof (which is provided in Sec. 5) can be sketched as follows: First, the hardness assumption for learning intersections of halfspaces is shown to imply hardness of learning networks $\mathbf{x} \mapsto h(W^\top \mathbf{x})$ as described above (and even if $W$ has linearly independent columns, a restriction which will be important later). However, this only implies that no algorithm can learn $\mathbf{x} \mapsto h(W^\top \mathbf{x})$ for all $W$ and all input distributions $\mathcal{D}$. In contrast, we want to show that learning would be difficult even for some fixed $W_\star$. To do so, we show that if an algorithm is linearly invariant, then the ability to learn with respect to some $W$ and all distributions $\mathcal{D}$ means that we can learn with respect to all $W$ and all $\mathcal{D}$. Roughly speaking, we argue that for linearly-invariant algorithms, “average-case” and “worst-case” hardness are the same here. Intuitively, this is because given some arbitrary $W, \mathcal{D}$, we can create a different input distribution $\tilde{\mathcal{D}}$, so that $W, \tilde{\mathcal{D}}$ “look like” $W_\star, \mathcal{D}$ under some linear transformation (see Figure 1 for an illustration). Therefore, a linearly-invariant algorithm which succeeds on one will also succeed on the other.

A bit more formally, let us fix some $W_\star$ (with linearly independent columns), and suppose we have a linearly-invariant algorithm which can successfully learn $\mathbf{x} \mapsto h(W_\star^\top \mathbf{x})$ with respect to any input distribution. Let $W, \mathcal{D}$ be some other matrix and distribution with respect to which we wish to learn (where $W$ has full column rank and is of the same size as $W_\star$). Then it can be shown that there is an invertible matrix $M$ such that $W = M^\top W_\star$. Since the algorithm successfully learns $\mathbf{x} \mapsto h(W_\star^\top \mathbf{x})$ with respect to any input distribution, it would also successfully learn if we use the input distribution $\tilde{\mathcal{D}}$ defined by sampling $\mathbf{x} \sim \mathcal{D}$ and returning $M\mathbf{x}$. This means that the algorithm would successfully learn from data distributed as

$$\left(\mathbf{x}, h(W_\star^\top \mathbf{x})\right),\ \mathbf{x} \sim \tilde{\mathcal{D}} \iff \left(M\mathbf{x}, h(W_\star^\top (M\mathbf{x}))\right),\ \mathbf{x} \sim \mathcal{D} \iff \left(M\mathbf{x}, h(W^\top \mathbf{x})\right),\ \mathbf{x} \sim \mathcal{D},$$

where the last step uses $W^\top = W_\star^\top M$. Since the algorithm is linearly-invariant, it can be shown that this implies successful learning from $(\mathbf{x}, h(W^\top \mathbf{x}))$ where $\mathbf{x} \sim \mathcal{D}$, as required.

In the sketch above, we have ignored some technical issues. For example, we need to be careful that $M$ has a bounded spectral norm, so that it induces a linear transformation which does not distort norms by too much (as all our arguments apply for input distributions supported on a bounded domain). A second issue is that if we apply a linearly-invariant algorithm on a dataset transformed by $M$, then the invariance is only with respect to the data, not necessarily with respect to new instances $\mathbf{x}$ sampled from the same distribution (and this restriction is necessary for results such as Thm. 1 to hold without further assumptions). However, it can be shown that if the dataset is large enough, invariance will still occur with high probability over the sampling of $\mathbf{x}$, which is sufficient for our purposes.

Figure 1: Correspondence between $W_\star, \mathcal{D}$ (left figure) and $W, \tilde{\mathcal{D}}$ (right figure). Arrows correspond to columns of $W_\star$ and $W$, and dots correspond to the support of $\mathcal{D}$ and $\tilde{\mathcal{D}}$. $\tilde{\mathcal{D}}$ is constructed so that the same linear transformation mapping $W_\star$ to $W$ also maps $\mathcal{D}$ to $\tilde{\mathcal{D}}$.

4 Natural Input Distributions

In this section, we consider the difficulty of gradient-based methods to learn certain target functions, even with respect to smooth, well-behaved distributions over $\mathbb{R}^d$. Specifically, we will consider functions of the form $\mathbf{x} \mapsto \psi(\langle \mathbf{w}^\star, \mathbf{x} \rangle)$, where $\mathbf{w}^\star$
is a vector of bounded norm and $\psi$ is a periodic function. Note that if $\psi$ is continuous and piecewise linear, then $\psi(\langle \mathbf{w}^\star, \mathbf{x} \rangle)$ can be implemented by a depth-2 neural ReLU network on any bounded subset of the domain. More generally, any continuous periodic function can be approximated arbitrarily well by such networks.

Our formal results rely on Fourier analysis and are a bit technical. Hence, we precede them with an informal description, outlining the main ideas and techniques, and presenting a specific case study which may be of independent interest (Subsection 4.1). The formal results are presented in Subsection 4.2.

4.1 Informal Description of Results and Techniques

Consider a target function of the form $\mathbf{x} \mapsto \psi(\langle \mathbf{w}^\star, \mathbf{x} \rangle)$, and any input distribution whose density function can be written as the square $\varphi^2$ of some function $\varphi$ (the reason for this will become apparent shortly). Suppose we attempt to learn this target function (with respect to the squared loss) using some hypothesis class, which can be parameterized by a bounded-norm vector $\mathbf{w}$ in some subset $\mathcal{W}$ of a Euclidean space (not necessarily of the same dimensionality as $\mathbf{w}^\star$), so each predictor in the class can be written as $\mathbf{x} \mapsto f(\mathbf{w}, \mathbf{x})$ for some fixed mapping $f$. Thus, our goal is essentially to solve the stochastic optimization problem

$$\min_{\mathbf{w} : \mathbf{w} \in \mathcal{W}} \mathbb{E}_{\mathbf{x} \sim \varphi^2}\left[\left(f(\mathbf{w}, \mathbf{x}) - \psi(\langle \mathbf{w}^\star, \mathbf{x} \rangle)\right)^2\right]. \qquad (2)$$

Figure 2: Graphical depiction of the objective function in Eq. (3), in 2 dimensions and where $\mathbf{w}^\star = (2, 2)$.

In this section, we study the geometry of this objective function, and show that under mild conditions on $f$, and assuming the norm of $\mathbf{w}^\star$ is reasonably large, the following holds:

• For any fixed $\mathbf{w}$, the value of the objective function is almost independent of $\mathbf{w}^\star$, in the sense that if we pick the direction of $\mathbf{w}^\star$ uniformly at random, the value is extremely concentrated around a fixed value independent of $\mathbf{w}^\star$ (e.g. exponentially small in $\|\mathbf{w}^\star\|^2$ for a Gaussian or a mixture of Gaussians).

• Similarly, the gradient of the objective function with respect to $\mathbf{w}$ is almost independent of $\mathbf{w}^\star$, and is extremely concentrated around a fixed value (again, exponentially small in $\|\mathbf{w}^\star\|^2$ for, say, a mixture of Gaussians).

Therefore, assuming $\|\mathbf{w}^\star\|$ is reasonably large, any standard gradient-based method will follow a trajectory nearly independent of $\mathbf{w}^\star$. In fact, in practice we do not even have access to exact gradients of Eq. (2), but only to noisy and biased versions of it (e.g. if we perform stochastic gradient descent, and certainly if we use finite-precision computations). In that case, the noise will completely obliterate the exponentially small signal about $\mathbf{w}^\star$ in the gradients, and will make the trajectory essentially independent of $\mathbf{w}^\star$. As a result, assuming $\psi$ and the distribution are such that the function $\psi(\langle \mathbf{w}^\star, \mathbf{x} \rangle)$ is sensitive to the direction of $\mathbf{w}^\star$, it follows that these methods will fail to optimize Eq. (2) successfully. Finally, we note that in practice, it is common to solve not Eq. (2) directly, but rather its empirical approximation with respect to some fixed finite training set. Still, by concentration of measure, this empirical objective would converge to the one in Eq. (2) given enough data, so the same issues will occur.

An important feature of our results is that they make virtually no structural assumptions on the predictors $\mathbf{x} \mapsto f(\mathbf{w}, \mathbf{x})$. In particular, they can represent arbitrary classes of neural networks (as well as other predictor classes). Thus, our results imply that target functions of the form $\mathbf{x} \mapsto \psi(\langle \mathbf{w}^\star, \mathbf{x} \rangle)$, where $\psi$ is periodic, would be difficult to learn using gradient-based methods, even if we allow improper learning and consider predictor classes of a different structure.

To explain how such results are attained, let us study a concrete special case (not necessarily in the context of neural networks).
Consider the target function $\mathbf{x} \mapsto \cos(2\pi\langle \mathbf{w}^\star, \mathbf{x}\rangle)$, and the hypothesis class (parameterized by $\mathbf{w}$) of functions $\mathbf{x} \mapsto \cos(2\pi\langle \mathbf{w}, \mathbf{x}\rangle)$. Thus, Eq. (2) takes the form

$$\min_{\mathbf{w}} \; \mathbb{E}_{\mathbf{x} \sim \varphi^2}\left[\left(\cos(2\pi\langle \mathbf{w}, \mathbf{x}\rangle) - \cos(2\pi\langle \mathbf{w}^\star, \mathbf{x}\rangle)\right)^2\right]. \tag{3}$$

Furthermore, suppose the input distribution $\varphi^2$ is a standard Gaussian on $\mathbb{R}^d$. In two dimensions and for $\mathbf{w}^\star = (2, 2)$, the objective function in Eq. (3) turns out to have the form illustrated in Figure 2. This objective function has only three critical points: a global maximum at $\mathbf{0}$, and two global minima at $\mathbf{w}^\star$ and $-\mathbf{w}^\star$. Nevertheless, it would be difficult to optimize using gradient-based methods, since it is extremely flat everywhere except close to the critical points. As we will see shortly, the same phenomenon occurs in higher dimensions. In high dimensions, if the direction of $\mathbf{w}^\star$ is chosen randomly, we will be overwhelmingly likely to initialize far from the global minima, and hence will start in a flat plateau in which most gradient-based methods will stall (see footnote 3).

We now turn to explain why Eq. (3) has the form shown in Figure 2. This will also help to illustrate our proof techniques, which apply much more generally. The main idea is to analyze the Fourier transform of Eq. (3). Letting $\cos_{\mathbf{w}}$ denote the function $\mathbf{x} \mapsto \cos(2\pi\langle \mathbf{w}, \mathbf{x}\rangle)$, we can write Eq. (3) as

$$\int \left(\cos(2\pi\langle \mathbf{w}, \mathbf{x}\rangle) - \cos(2\pi\langle \mathbf{w}^\star, \mathbf{x}\rangle)\right)^2 \varphi^2(\mathbf{x})\, d\mathbf{x} = \left\|\cos_{\mathbf{w}} \cdot \varphi - \cos_{\mathbf{w}^\star} \cdot \varphi\right\|^2,$$

where $\|\cdot\|$ is the standard norm over the space $L^2(\mathbb{R}^d)$ of square integrable functions. By standard properties of the Fourier transform (as described in Sec. 2), this squared norm of a function equals the squared norm of the function's Fourier transform, which equals in turn

$$\left\|\widehat{\cos_{\mathbf{w}} \cdot \varphi} - \widehat{\cos_{\mathbf{w}^\star} \cdot \varphi}\right\|^2 = \left\|\widehat{\cos_{\mathbf{w}}} * \hat{\varphi} - \widehat{\cos_{\mathbf{w}^\star}} * \hat{\varphi}\right\|^2.$$
$\widehat{\cos_{\mathbf{w}}}(\boldsymbol{\xi})$ can be shown to equal $\frac{1}{2}\left(\delta(\boldsymbol{\xi} - \mathbf{w}) + \delta(\boldsymbol{\xi} + \mathbf{w})\right)$, where $\delta(\cdot)$ is Dirac's delta function (a "generalized" function which satisfies $\delta(\mathbf{z}) = 0$ for all $\mathbf{z} \neq \mathbf{0}$, and $\int \delta(\mathbf{z})\, d\mathbf{z} = 1$). Plugging this into the above and simplifying, we get

$$\frac{1}{4}\left\|\hat{\varphi}(\cdot - \mathbf{w}) + \hat{\varphi}(\cdot + \mathbf{w}) - \hat{\varphi}(\cdot - \mathbf{w}^\star) - \hat{\varphi}(\cdot + \mathbf{w}^\star)\right\|^2 = \frac{1}{4}\int_{\boldsymbol{\xi}} \left|\hat{\varphi}(\boldsymbol{\xi} - \mathbf{w}) + \hat{\varphi}(\boldsymbol{\xi} + \mathbf{w}) - \hat{\varphi}(\boldsymbol{\xi} - \mathbf{w}^\star) - \hat{\varphi}(\boldsymbol{\xi} + \mathbf{w}^\star)\right|^2 d\boldsymbol{\xi}. \tag{4}$$

If $\varphi^2$ is a standard Gaussian, $\hat{\varphi}(\boldsymbol{\xi})$ can be shown to equal the Gaussian-like function $(4\pi)^{d/2} a^{-\|\boldsymbol{\xi}\|^2}$ where $a = \exp(4\pi^2)$. Plugging back, the expression above is proportional to

$$\int_{\boldsymbol{\xi}} \left(\left(a^{-\|\boldsymbol{\xi} - \mathbf{w}\|^2} + a^{-\|\boldsymbol{\xi} + \mathbf{w}\|^2}\right) - \left(a^{-\|\boldsymbol{\xi} - \mathbf{w}^\star\|^2} + a^{-\|\boldsymbol{\xi} + \mathbf{w}^\star\|^2}\right)\right)^2 d\boldsymbol{\xi}. \tag{5}$$

The expression in each inner parenthesis can be viewed as a mixture of two Gaussian-like functions, with centers at $\mathbf{w}, -\mathbf{w}$ (or $\mathbf{w}^\star, -\mathbf{w}^\star$). Thus, if $\mathbf{w}$ is far from $\mathbf{w}^\star$, these two mixtures will have nearly disjoint support, and Eq. (5) will have nearly the same value regardless of $\mathbf{w}$. In other words, it is very flat. Since this equation is nothing more than a re-formulation of the original objective function in Eq. (3) (up to a constant), we get a similar behavior for Eq. (3) as well.

Footnote 3: Although there are techniques to overcome flatness (e.g. by normalizing the gradient [19, 10]), in our case the normalization factor will be huge and require extremely precise gradient information, which, as discussed earlier, is unrealistic here.

This behavior extends, however, much more generally than the specific objective in Eq. (3). First of all, we can replace the standard Gaussian distribution $\varphi^2$ by any distribution such that $\hat{\varphi}$ has a localized support. This would still imply that Eq. (4) refers to the difference of two functions with nearly disjoint support, and the same flatness phenomenon will occur. Second, we can replace the cos function by any periodic function $\psi$.
By properties of the Fourier transform of periodic functions, we still get localized functions in the Fourier domain (more precisely, the Fourier transform will be localized around integer multiples of $\mathbf{w}$, up to scaling). Finally, instead of considering hypothesis classes of predictors $\mathbf{x} \mapsto \psi(\langle \mathbf{w}, \mathbf{x}\rangle)$ similar to the target function, we can consider quite arbitrary mappings $\mathbf{x} \mapsto f(\mathbf{w}, \mathbf{x})$. Even though this function may no longer be localized in the Fourier domain, it is enough that the target function $\mathbf{x} \mapsto \psi(\langle \mathbf{w}^\star, \mathbf{x}\rangle)$ alone is localized: this implies that regardless of what $f$ looks like, under a random choice of $\mathbf{w}^\star$, only a minuscule portion of the $L^2$ mass of $f$ overlaps with the target function, hence getting sufficient signal on $\mathbf{w}^\star$ will be difficult.

As mentioned in the introduction, these techniques and observations have some close resemblance to hardness results for learning parities over the Boolean cube in the statistical queries learning model [3]. There as well, one considers a Fourier transform (but on the Boolean cube rather than Euclidean space), and essentially shows that functions with a "localized" Fourier transform are difficult to "detect" using any fixed function. However, our results are different and more general, in the sense that they apply to generic smooth distributions over Euclidean space, and to a general class of periodic functions, rather than just parities. On the flip side, our results are constrained to methods which are based on gradients of the objective, whereas the statistical queries framework is more general and considers algorithms which are based on computing (approximate) expectations of arbitrary functions of the data. Extending our results to this generality is an interesting topic for future research.

4.2 Formal Results

We now turn to provide a more formal statement of our results.
The distributions we will consider consist of arbitrary mixtures of densities, whose square roots have rapidly decaying tails in the Fourier domain. More precisely, we have the following definition:

Definition 3. Let $\epsilon(r)$ be some function from $[0, \infty)$ to $[0, 1]$. A function $\varphi^2 : \mathbb{R}^d \to \mathbb{R}$ is $\epsilon(r)$ Fourier-concentrated if its square root $\varphi$ belongs to $L^2(\mathbb{R}^d)$, and satisfies

$$\left\|\hat{\varphi} \cdot \mathbf{1}_{\geq r}\right\| \leq \|\hat{\varphi}\|\, \epsilon(r),$$

where $\mathbf{1}_{\geq r}$ is the indicator function of $\{\mathbf{x} : \|\mathbf{x}\| \geq r\}$.

A canonical example is Gaussian distributions: given a (non-degenerate, zero-mean) Gaussian density function $\varphi^2$ with covariance matrix $\Sigma$, its square root $\varphi$ is proportional to a Gaussian with covariance $2\Sigma$, and its Fourier transform $\hat{\varphi}$ is well-known to be proportional to a Gaussian with covariance $(2\Sigma)^{-1}$. By standard Gaussian concentration results, it follows that $\varphi^2$ is Fourier-concentrated with $\epsilon(r) = \exp(-\Omega(\lambda_{\min} r^2))$, where $\lambda_{\min}$ is the minimal eigenvalue of $\Sigma$. A similar bound can be shown when the Gaussian has some arbitrary mean. More generally, it is well-known that smooth functions (differentiable to sufficiently high order with integrable derivatives) have Fourier transforms with rapidly decaying tails. For example, if we consider the broad class of Schwartz functions (characterized by having values and all derivatives decaying faster than polynomially in $r$), then the Fourier transform of any such function is also a Schwartz function, which implies super-polynomial decay of $\epsilon(r)$ (see for instance [11], Chapter 11 and Proposition 11.25).

We now formally state our main result for this section. We consider any predictor of the form $\mathbf{x} \mapsto f(\mathbf{w}, \mathbf{x})$, where $f$ is some fixed function and $\mathbf{w}$ is a parameter vector coming from some domain $\mathcal{W}$, which we will assume w.l.o.g. to be a subset of some Euclidean space (see footnote 4). For example, $f$ can represent a network of a given architecture, with weights specified by $\mathbf{w}$. When learning $f$ based on data coming from an underlying distribution, we are essentially attempting to solve the optimization problem

$$\min_{\mathbf{w} \in \mathcal{W}} F_{\mathbf{w}^\star}(\mathbf{w}) := \mathbb{E}_{\mathbf{x} \sim \varphi^2}\left[\left(f(\mathbf{w}, \mathbf{x}) - \psi(\langle \mathbf{w}^\star, \mathbf{x}\rangle)\right)^2\right].$$

Footnote 4: More generally, our analysis is applicable to any separable Hilbert space.

Assuming that $F_{\mathbf{w}^\star}$ is differentiable w.r.t. $\mathbf{w}$, any gradient-based method to solve this problem proceeds by computing (or approximating) $\nabla F_{\mathbf{w}^\star}(\mathbf{w})$ at various points $\mathbf{w}$. However, the following theorem shows that at any $\mathbf{w}$, and regardless of the type of predictor or network one is attempting to train, the gradient at $\mathbf{w}$ is virtually independent of the underlying target function, and hence provides very little signal:

Theorem 3. Suppose that

• $\psi : \mathbb{R} \to [-1, +1]$ is a periodic function of period 1, which has bounded variation on every finite interval.

• $\varphi^2$ is a density function on $\mathbb{R}^d$, which can be written as a (possibly infinite) mixture $\varphi^2 = \sum_i \alpha_i \varphi_i^2$, where each $\varphi_i^2$ is an $\epsilon(r)$ Fourier-concentrated density function.

• At some fixed $\mathbf{w}$, $\mathbb{E}_{\mathbf{x} \sim \varphi^2}\left\|\frac{\partial}{\partial \mathbf{w}} f(\mathbf{w}, \mathbf{x})\right\|^2 \leq G_{\mathbf{w}}$ for some $G_{\mathbf{w}}$.

Then for some universal positive constants $c_1, c_2, c_3$, if $d \geq c_1$, and $\mathbf{w}^\star \in \mathbb{R}^d$ is a vector of norm $2r$ chosen uniformly at random, then

$$\mathrm{Var}_{\mathbf{w}^\star}\left(\nabla F_{\mathbf{w}^\star}(\mathbf{w})\right) := \mathbb{E}_{\mathbf{w}^\star}\left\|\nabla F_{\mathbf{w}^\star}(\mathbf{w}) - \mathbb{E}_{\mathbf{w}^\star}\left[\nabla F_{\mathbf{w}^\star}(\mathbf{w})\right]\right\|^2 \leq c_2\, G_{\mathbf{w}} \left(\exp(-c_3 d) + \sum_{n=1}^{\infty} \epsilon(nr)\right).$$

We note that bounded variation is weaker than, say, Lipschitz continuity. Assuming $\epsilon(r)$ decays rapidly with $r$ (say, exponentially in $r^2$, as is the case for a Gaussian mixture), we get that the bound in the theorem is on the order of $\exp(-\Omega(\min\{d, r^2\}))$. Overall, the theorem implies that if $r, d$ are moderately large, the gradient of $F_{\mathbf{w}^\star}$ at any point $\mathbf{w}$ is extremely concentrated around a fixed value, independent of $\mathbf{w}^\star$. This implies that gradient-based methods, which attempt to optimize $F_{\mathbf{w}^\star}$ via gradient information, are unlikely to succeed.
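To get a feel for the scale of the quantity controlled by the theorem, the sketch below revisits the cosine case study of Subsection 4.1 with standard Gaussian inputs. It is only an illustration under stated assumptions: the gradient formula is obtained by differentiating the closed form $1 + \tfrac{1}{2}e^{-8\pi^2\|\mathbf{w}\|^2} + \tfrac{1}{2}e^{-8\pi^2\|\mathbf{w}^\star\|^2} - e^{-2\pi^2\|\mathbf{w}-\mathbf{w}^\star\|^2} - e^{-2\pi^2\|\mathbf{w}+\mathbf{w}^\star\|^2}$ of that particular objective (derived for this sketch, not stated in the text), and the helper names (`gradient_parts`, `random_target`, `max_signal_norm`) are hypothetical. The gradient is split into a part that depends on $\mathbf{w}$ only and a part that depends on $\mathbf{w}^\star$; the latter is all a gradient-based method can learn about the target.

```python
import math
import random


def sq_norm(u):
    """Squared Euclidean norm."""
    return sum(c * c for c in u)


def gradient_parts(w, w_star):
    """Split the gradient (w.r.t. w) of the closed-form cosine objective.

    Returns (own, signal): `own` depends on w only, `signal` is the part
    depending on w_star. Both formulas are derived for this sketch.
    """
    k = 2.0 * math.pi ** 2
    own_scale = -4.0 * k * math.exp(-4.0 * k * sq_norm(w))
    own = [own_scale * a for a in w]
    diff = [a - b for a, b in zip(w, w_star)]
    summ = [a + b for a, b in zip(w, w_star)]
    e_diff = 2.0 * k * math.exp(-k * sq_norm(diff))
    e_summ = 2.0 * k * math.exp(-k * sq_norm(summ))
    signal = [e_diff * p + e_summ * q for p, q in zip(diff, summ)]
    return own, signal


def random_target(d, r, rng):
    """Vector of norm 2r whose direction is uniform on the sphere."""
    g = [rng.gauss(0.0, 1.0) for _ in range(d)]
    scale = 2.0 * r / math.sqrt(sq_norm(g))
    return [scale * c for c in g]


def max_signal_norm(w, r, trials=1000, seed=0):
    """Largest norm of the w_star-dependent gradient part over random targets."""
    rng = random.Random(seed)
    worst = 0.0
    for _ in range(trials):
        _, signal = gradient_parts(w, random_target(len(w), r, rng))
        worst = max(worst, math.sqrt(sq_norm(signal)))
    return worst


if __name__ == "__main__":
    w = [0.1] + [0.0] * 9          # a fixed point in dimension d = 10
    own, _ = gradient_parts(w, random_target(10, 1.0, random.Random(1)))
    print("norm of w-only part:     ", math.sqrt(sq_norm(own)))
    print("max norm of w_star part: ", max_signal_norm(w, r=1.0))
```

In this instance the $\mathbf{w}^\star$-dependent part is many orders of magnitude below double-precision resolution relative to the $\mathbf{w}$-only part, so gradients computed in floating point would be identical for every sampled target direction.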
One way to formalize this is to consider any iterative algorithm (possibly randomized), which relies on an $\varepsilon$-approximate gradient oracle to optimize $F_{\mathbf{w}^\star}$: at every iteration $t$, the algorithm chooses a point $\mathbf{w}_t \in \mathcal{W}$, and receives a vector $\mathbf{g}_t$ such that $\|\nabla F_{\mathbf{w}^\star}(\mathbf{w}_t) - \mathbf{g}_t\| \leq \varepsilon$. In our case, we will be interested in $\varepsilon$ such that $\varepsilon^3$ is on the order of the bound in Thm. 3. Since the bound is extremely small for moderate $d, r$ (say, smaller than machine precision), this is a realistic model of gradient-based methods on finite-precision machines, even if one attempts to compute the gradients accurately. The following theorem implies that if the number of iterations is not extremely large (on the order of $1/\varepsilon$, e.g. $\exp(\Omega(\min\{d, r^2\}))$ iterations for Gaussian mixtures), then with high probability, a gradient-based method will return the same predictor independent of $\mathbf{w}^\star$. However, since the objective function $F_{\mathbf{w}^\star}$ is highly sensitive to the choice of $\mathbf{w}^\star$, this means that no such gradient-based method can train a reasonable predictor.

Theorem 4. Assume the conditions of Thm. 3, and let

$$\varepsilon = \sqrt[3]{c_2 \left(\sup_{\mathbf{w} \in \mathcal{W}} G_{\mathbf{w}}\right)\left(\exp(-c_3 d) + \sum_{n=1}^{\infty} \epsilon(nr)\right)}$$

be the cube root of the bound specified there (uniformly over all $\mathbf{w} \in \mathcal{W}$). Then for any algorithm as above and any $p \in (0, 1)$, conditioned on an event which holds with probability $1 - p$ over the choice of $\mathbf{w}^\star$, its output after at most $p/\varepsilon$ iterations will be independent of $\mathbf{w}^\star$.

5 Proofs

5.1 Proof of Thm. 1

Let $P_M$ denote the whitening matrix employed if we transform the instances $X$ by some invertible $d \times d$ matrix $M$ (that is, $X$ becomes $MX$), and $P$ the whitening matrix employed for the original data. Using the same notation as in the theorem, it is easily verified that $PX = V^\top$, and $P_M M X = V_M^\top$, where $U_M D_M V_M^\top$ is an SVD decomposition of the matrix $MX$.
Since both $V^\top$ and $V_M^\top$ are $\mathrm{Rank}(X) \times m$ matrices with rows consisting of orthonormal vectors, they are related by an orthogonal transformation (i.e. there is an orthogonal matrix $R_M$ such that $R_M V^\top = V_M^\top$). Therefore, $R_M P X = P_M M X$. Since the data is fed to an orthogonally-invariant algorithm, its output $W_M$ satisfies $W_M^\top P_M M X = W^\top P X$. This in turn implies $W_M^\top R_M P X = W^\top P X$, and hence $W_M^\top R_M V^\top = W^\top V^\top$. Multiplying both sides on the right by $V$ and taking a transpose, we get that $R_M^\top W_M = W$, and hence $W_M = R_M W$. In words, $W$ and $W_M$ are the same up to an orthogonal transformation $R_M$ depending on $M$. Therefore,

$$(P_M^\top W_M)^\top M X = W_M^\top P_M M X = W^\top R_M^\top R_M P X = W^\top P X = (P^\top W)^\top X,$$

so we see that the returned predictor makes the same predictions over the dataset, independent of the transformation matrix $M$.

5.2 Proof of Thm. 2

We start with the following auxiliary theorem, which reduces the hardness result of [7] to one about neural networks of the type we discuss here:

Theorem 5. Under the assumption stated in [7], the following holds for any $n_d = \omega(1)$ (as $d \to \infty$): There is no algorithm running in time $\mathrm{poly}(d, 1/\epsilon)$, which for any distribution $\mathcal{D}$ on $\{0, 1\}^d$, and any $h(W^\top \mathbf{x}) = \sigma\left(\sum_{i=1}^{n_d} [\langle \mathbf{w}_i, \mathbf{x}\rangle]_+\right)$ (where $W = [\mathbf{w}_1, \mathbf{w}_2, \ldots, \mathbf{w}_{n_d}]$ and $\max_i \|\mathbf{w}_i\| \leq O(d)$), given only access to samples $(\mathbf{x}, h(W^\top \mathbf{x}))$ where $\mathbf{x} \sim \mathcal{D}$, returns with high probability a function $f$ such that

$$\mathbb{E}_{\mathbf{x} \sim \mathcal{D}}\left(f(\mathbf{x}) - h(W^\top \mathbf{x})\right)^2 \leq \epsilon.$$

Proof. Suppose by contradiction that there exists an algorithm $\mathcal{A}$ which for any distribution and $h$ as described in the theorem, returns a function $f$ such that $\mathbb{E}_{\mathbf{x} \sim \mathcal{D}}\left[\left(f(\mathbf{x}) - h(W^\top \mathbf{x})\right)^2\right] \leq \epsilon$ with high probability.

In particular, let us focus on distributions $\mathcal{D}$ supported on $\{0, 1\}^{d-1} \times \{1\}$. For these distributions, we argue that any intersection of halfspaces on $\mathbb{R}^{d-1}$ specified by $\mathbf{w}_1, \ldots, \mathbf{w}_{n_d} \in \mathbb{R}^{d-1}$ with integer coordinates, and integer $b_1, \ldots, b_{n_d}$, can be specified as $\mathbf{x} \mapsto 1 - h(W^\top \mathbf{x})$ for some function $h$ as described in the theorem statement. To see this, note that for any $\mathbf{w}_i, b_i$ and $\mathbf{x} = (\mathbf{x}', 1)$ in the support of $\mathcal{D}$, $\langle (-\mathbf{w}_i, b_i), \mathbf{x}\rangle = -\langle \mathbf{w}_i, \mathbf{x}'\rangle + b_i$ is an integer, hence

$$\sigma\left(\sum_{i=1}^{n_d} [\langle (-\mathbf{w}_i, b_i), \mathbf{x}\rangle]_+\right) = \sigma\left(\sum_{i=1}^{n_d} [-\langle \mathbf{w}_i, \mathbf{x}'\rangle + b_i]_+\right) = \bigvee_{i=1}^{n_d} \left(-\langle \mathbf{w}_i, \mathbf{x}'\rangle + b_i > 0\right) = \bigvee_{i=1}^{n_d} \left(\langle \mathbf{w}_i, \mathbf{x}'\rangle < b_i\right) = \neg\left(\bigwedge_{i=1}^{n_d} \left(\langle \mathbf{w}_i, \mathbf{x}'\rangle \geq b_i\right)\right).$$

Therefore, for any distribution over examples labelled by an intersection of halfspaces $\mathbf{x} \mapsto 1 - h(W^\top \mathbf{x})$ (with integer-valued coordinates and bounded norms), by feeding $\mathcal{A}$ with $\{\mathbf{x}_i, 1 - y_i\}_{i=1}^m$, the algorithm returns a function $f$, such that with high probability, $\mathbb{E}_{\mathbf{x}}\left(f(\mathbf{x}) - h(W^\top \mathbf{x})\right)^2 \leq \epsilon$, and therefore $\mathbb{E}_{\mathbf{x}}\left((1 - f(\mathbf{x})) - (1 - h(W^\top \mathbf{x}))\right)^2 \leq \epsilon$. In particular, if we consider the Boolean function $\tilde{f}(\mathbf{x}) = 1 - \mathrm{rnd}(f(\mathbf{x}))$, where $\mathrm{rnd}(z) = 0$ if $z \leq 1/2$ and $\mathrm{rnd}(z) = 1$ if $z > 1/2$, we argue that $\Pr_{\mathbf{x}}\left(\tilde{f}(\mathbf{x}) \neq 1 - h(W^\top \mathbf{x})\right) \leq 8\epsilon$. Since $\epsilon$ is arbitrary, and $1 - h(W^\top \mathbf{x})$ specifies an intersection of halfspaces, this would contradict the hardness result of [7], and therefore prove the theorem. This argument follows from the following chain of inequalities, where $g(\mathbf{x})$ is shorthand for $1 - h(W^\top \mathbf{x})$ and $\mathbf{1}$ denotes the indicator function:

$$\begin{aligned}
\Pr\left(\tilde{f}(\mathbf{x}) \neq g(\mathbf{x})\right) &= \Pr\left(f(\mathbf{x}) > 1/2 \wedge g(\mathbf{x}) = 1\right) + \Pr\left(f(\mathbf{x}) \leq 1/2 \wedge g(\mathbf{x}) = 0\right) \\
&= \mathbb{E}\left[\mathbf{1}\left(f(\mathbf{x}) > 1/2 \wedge g(\mathbf{x}) = 1\right)\right] + \mathbb{E}\left[\mathbf{1}\left(f(\mathbf{x}) \leq 1/2 \wedge g(\mathbf{x}) = 0\right)\right] \\
&\leq \mathbb{E}\left[4\left((1 - f(\mathbf{x})) - g(\mathbf{x})\right)^2\right] + \mathbb{E}\left[4\left((1 - f(\mathbf{x})) - g(\mathbf{x})\right)^2\right] \\
&\leq 8 \cdot \mathbb{E}\left[\left((1 - f(\mathbf{x})) - g(\mathbf{x})\right)^2\right] \leq 8\epsilon.
\end{aligned}$$

Proposition 1. Thm. 5 holds even if we restrict $\mathbf{w}_1, \ldots, \mathbf{w}_{n_d}$ to be linearly independent, with $s_{\min}(W) \geq 1$.

Proof. Suppose by contradiction that there exists an algorithm $\mathcal{A}$ which succeeds for any $W$ as stated above.
We will describe how to use $\mathcal{A}$ to get an algorithm which succeeds for any $W$ as described in Thm. 5, hence reaching a contradiction. Specifically, suppose we have access to samples $(\mathbf{x}, h(W^\top \mathbf{x}))$, where $\mathbf{x}$ is supported on $\{0, 1\}^d$, and where $W$ is any matrix as described in Thm. 5. We do the following: we map every $\mathbf{x}$ to $\tilde{\mathbf{x}} \in \{0, 1\}^{d + n_d}$ by $\tilde{\mathbf{x}} = (\mathbf{x}, 0, \ldots, 0)$, run $\mathcal{A}$ on the transformed samples $(\tilde{\mathbf{x}}, h(W^\top \mathbf{x}))$ to get some predictor $\tilde{f} : \{0, 1\}^{d + n_d} \mapsto \mathbb{R}$, and return the predictor $f(\mathbf{x}) = \tilde{f}((\mathbf{x}, 0, \ldots, 0))$.

To see why this reduction works, we note that the mapping $\mathbf{x} \mapsto \tilde{\mathbf{x}}$ we have defined, where $\mathbf{x}$ is distributed according to $\mathcal{D}$, induces a distribution $\tilde{\mathcal{D}}$ on $\{0, 1\}^{d + n_d}$. Let $\tilde{W}$ be the $(d + n_d) \times n_d$ matrix $[W; I_{n_d}]$ (that is, we add another $n_d \times n_d$ unit matrix below $W$). We have $\tilde{W}^\top \tilde{W} = W^\top W + I_{n_d}$, so the minimal eigenvalue of $\tilde{W}^\top \tilde{W}$ is at least 1, hence $s_{\min}(\tilde{W}) \geq 1$, so $\tilde{W}$ satisfies the conditions in the proposition. Moreover, the norm of each column of $\tilde{W}$ is larger than the norm of the corresponding column in $W$ by at most 1, so the norm constraint in Thm. 5 still holds. Finally, $\tilde{W}^\top \tilde{\mathbf{x}} = W^\top \mathbf{x}$ for all $\mathbf{x}$, and therefore $h(\tilde{W}^\top \tilde{\mathbf{x}}) = h(W^\top \mathbf{x})$. Thus, the distribution of $(\tilde{\mathbf{x}}, h(W^\top \mathbf{x})) = (\tilde{\mathbf{x}}, h(\tilde{W}^\top \tilde{\mathbf{x}}))$ (which is used to feed the algorithm $\mathcal{A}$) is a valid distribution corresponding to the conditions of the proposition and Thm. 5 (only in dimension $d + n_d \leq 2d$ instead of $d$), so $\mathcal{A}$ returns with high probability a predictor $\tilde{f}$ such that

$$\mathbb{E}_{\tilde{\mathbf{x}} \sim \tilde{\mathcal{D}}}\left(\tilde{f}(\tilde{\mathbf{x}}) - h(\tilde{W}^\top \tilde{\mathbf{x}})\right)^2 \leq \epsilon.$$

However, $\tilde{f}(\tilde{\mathbf{x}}) = f(\mathbf{x})$ and $h(\tilde{W}^\top \tilde{\mathbf{x}}) = h(W^\top \mathbf{x})$, so the returned predictor $f$ satisfies

$$\mathbb{E}_{\mathbf{x} \sim \mathcal{D}}\left(f(\mathbf{x}) - h(W^\top \mathbf{x})\right)^2 \leq \epsilon.$$

This contradicts Thm. 5, which states that no efficient algorithm can return such a predictor for any sufficiently large dimension $d$ and norm bound $O(d)$.
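The padding construction in this proof is easy to check on a toy instance. The sketch below is illustrative only: matrices are plain lists of rows, the helper names (`pad_weights`, `pad_input`, `gram`, `apply_transpose`, `quadratic_form`) are hypothetical, and the small integer matrix is an arbitrary example rather than anything taken from the paper. It confirms the identities $\tilde{W}^\top \tilde{W} = W^\top W + I_{n_d}$ and $\tilde{W}^\top \tilde{\mathbf{x}} = W^\top \mathbf{x}$, and spot-checks the consequence $\mathbf{v}^\top \tilde{W}^\top \tilde{W} \mathbf{v} \geq \|\mathbf{v}\|^2$, which is what $s_{\min}(\tilde{W}) \geq 1$ means.

```python
def pad_weights(W):
    """Stack an n x n identity below the d x n matrix W (list of rows)."""
    n = len(W[0])
    identity = [[1 if i == j else 0 for j in range(n)] for i in range(n)]
    return [row[:] for row in W] + identity


def pad_input(x, n):
    """Append n zeros to the input vector x."""
    return list(x) + [0] * n


def gram(W):
    """Gram matrix W^T W of the columns of W."""
    n = len(W[0])
    return [[sum(row[i] * row[j] for row in W) for j in range(n)]
            for i in range(n)]


def apply_transpose(W, x):
    """Compute W^T x."""
    return [sum(W[k][i] * x[k] for k in range(len(W)))
            for i in range(len(W[0]))]


def quadratic_form(G, v):
    """Compute v^T G v."""
    return sum(v[i] * G[i][j] * v[j]
               for i in range(len(v)) for j in range(len(v)))


if __name__ == "__main__":
    W = [[1, -2], [0, 3], [2, 1]]   # toy example with d = 3, n_d = 2
    x = [1, 0, 1]
    W_pad = pad_weights(W)
    print(gram(W), gram(W_pad))
    print(apply_transpose(W, x), apply_transpose(W_pad, pad_input(x, 2)))
```

The design choice mirrors the text: padding the inputs with zeros leaves the network output unchanged, while the appended identity block alone guarantees the singular-value lower bound.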
In the definitions of orthogonal invariance and linear invariance, we only required the invariance to hold with respect to instances $\mathbf{x}_i$ in the dataset. A stronger condition is that the invariance is satisfied for any $\mathbf{x} \in \mathbb{R}^d$. However, the following lemma shows that invariance w.r.t. a dataset sampled i.i.d. from some distribution is sufficient to imply invariance w.r.t. "nearly all" $\mathbf{x}$ (under the same distribution):

Lemma 1. Suppose the dataset $\{\mathbf{x}_i, y_i\}_{i=1}^m$ is sampled i.i.d. from some distribution (where $\mathbf{x}_i \in \mathbb{R}^d$), then the following holds with probability at least $1 - \delta$ for any $\delta \in (0, 1)$: For any invertible $M$ and linearly-invariant algorithm (or orthogonal $M$ and orthogonally-invariant algorithm), conditioned on the algorithm's internal randomness, the returned matrices $W$ and $W_M$ (with respect to the original data and the data transformed by $M$ respectively) satisfy

$$\Pr_{\mathbf{x}}\left(W_M^\top M \mathbf{x} \neq W^\top \mathbf{x}\right) \leq \frac{d}{\delta(m + 1)}.$$

Proof. It is enough to prove that with probability at least $1 - \delta$ over the sampling of $\mathbf{x}_1, \ldots, \mathbf{x}_m$,

$$\Pr_{\mathbf{x}}\left(\mathbf{x} \notin \mathrm{span}(\mathbf{x}_1, \ldots, \mathbf{x}_m)\right) \leq \frac{d}{\delta(m + 1)}. \tag{6}$$

This is because the event $W_M^\top M \mathbf{x}_i = W^\top \mathbf{x}_i$ for all $i$ means that $W_M^\top M \mathbf{x} = W^\top \mathbf{x}$ for any $\mathbf{x}$ in the span of $\mathbf{x}_1, \ldots, \mathbf{x}_m$.

Let $\mathbf{x}_1, \ldots, \mathbf{x}_{m+1}$ be sampled i.i.d. according to $\mathcal{D}$. Considering probabilities over this sample, we have

$$\sum_{j=1}^{m+1} \Pr\left(\mathbf{x}_j \notin \mathrm{span}(\mathbf{x}_1, \ldots, \mathbf{x}_{j-1})\right) = \mathbb{E}\left[\sum_{j=1}^{m+1} \mathbf{1}\left(\mathbf{x}_j \notin \mathrm{span}(\mathbf{x}_1, \ldots, \mathbf{x}_{j-1})\right)\right] \leq d, \tag{7}$$

where the latter inequality is because each $\mathbf{x}_j$ is a $d$-dimensional vector, hence the number of times we can get a vector not in the span of the previous ones is at most $d$. Moreover, since the vectors are sampled i.i.d., we have

$$\Pr\left(\mathbf{x}_{j+1} \notin \mathrm{span}(\mathbf{x}_1, \ldots, \mathbf{x}_j)\right) \leq \Pr\left(\mathbf{x}_{j+1} \notin \mathrm{span}(\mathbf{x}_1, \ldots, \mathbf{x}_{j-1})\right) = \Pr\left(\mathbf{x}_j \notin \mathrm{span}(\mathbf{x}_1, \ldots, \mathbf{x}_{j-1})\right),$$

so the probabilities in Eq. (7) monotonically decrease with $j$. Thus, Eq. (7) implies

$$(m + 1) \Pr\left(\mathbf{x}_{m+1} \notin \mathrm{span}(\mathbf{x}_1, \ldots, \mathbf{x}_m)\right) \leq d \;\Rightarrow\; \Pr\left(\mathbf{x}_{m+1} \notin \mathrm{span}(\mathbf{x}_1, \ldots, \mathbf{x}_m)\right) \leq \frac{d}{m + 1}.$$

This is equivalent to

$$\mathbb{E}_{\mathbf{x}_1, \ldots, \mathbf{x}_m \sim \mathcal{D}}\left[\Pr_{\mathbf{x}_{m+1}}\left(\mathbf{x}_{m+1} \notin \mathrm{span}(\mathbf{x}_1, \ldots, \mathbf{x}_m) \,\middle|\, \mathbf{x}_1, \ldots, \mathbf{x}_m\right)\right] \leq \frac{d}{m + 1},$$

so by Markov's inequality, with probability at least $1 - \delta$ over the sampling of $\mathbf{x}_1, \ldots, \mathbf{x}_m$,

$$\Pr_{\mathbf{x}_{m+1}}\left(\mathbf{x}_{m+1} \notin \mathrm{span}(\mathbf{x}_1, \ldots, \mathbf{x}_m)\right) \leq \frac{d}{\delta(m + 1)}.$$

Since $\mathbf{x}_{m+1}$ is sampled independently, Eq. (6) and hence the lemma follows.

With these results in hand, we can finally turn to prove Thm. 2. Suppose by contradiction that there exists an efficient linearly-invariant algorithm $\mathcal{A}$, which for any distribution $\mathcal{D}$ supported on vectors of norm at most $O\left(d\sqrt{2 d n_d}\right) / \min\{1, s_{\min}(W_\star)\}$, returns w.h.p. a predictor $\mathbf{x} \mapsto f(\tilde{W}^\top \mathbf{x})$ such that

$$\mathbb{E}_{\mathbf{x} \sim \mathcal{D}}\left(f(\tilde{W}^\top \mathbf{x}) - h(W_\star^\top \mathbf{x})\right)^2 \leq \epsilon.$$

We will show that the very same algorithm, if given $\mathrm{poly}(d, 1/\epsilon)$ samples, can successfully learn w.r.t. any $d \times n_d$ matrix $W$ and any distribution $\mathcal{D}$ satisfying Proposition 1 and Thm. 5, contradicting those results.

Indeed, let $W$ and $\mathcal{D}$ be an arbitrary matrix and distribution as above. We first argue that there exists a $d \times d$ invertible matrix $M$ such that

$$W = M^\top W_\star, \qquad \|M\| \leq \frac{O\left(d\sqrt{2 n_d}\right)}{\min\{1, s_{\min}(W_\star)\}}. \tag{8}$$

To see this, note that $W$ and $W_\star$ are of the same size and our conditions imply that both of them have full column rank. Thus, we can simply augment them to invertible $d \times d$ matrices $[W \; \hat{W}]$ and $[W_\star \; \hat{W}_\star]$, where the columns of $\hat{W}$ (respectively $\hat{W}_\star$) are an orthonormal basis for the subspace orthogonal to the column space of $W$ (respectively $W_\star$), and choose $M^\top = [W \; \hat{W}][W_\star \; \hat{W}_\star]^{-1}$. Thus, $\|M\| \leq \left\|[W \; \hat{W}]\right\| \cdot \left\|[W_\star \; \hat{W}_\star]^{-1}\right\|$. The spectral norm of $[W \; \hat{W}]$ can be bounded by the Frobenius norm, which by the assumption on $W$ from Thm. 5 and the fact that $\hat{W}$ consists of an orthonormal basis, is at most $\sqrt{O(d)^2 \cdot n_d + 1 \cdot (d - n_d)} = O\left(\sqrt{d^2 n_d}\right) = O(d\sqrt{n_d})$. The spectral norm of $[W_\star \; \hat{W}_\star]^{-1}$ can be bounded by $1 / s_{\min}([W_\star \; \hat{W}_\star])$, where $s_{\min}([W_\star \; \hat{W}_\star])$ equals the square root of the smallest eigenvalue of $[W_\star \; \hat{W}_\star]^\top [W_\star \; \hat{W}_\star]$. Since this matrix is block-diagonal with blocks $W_\star^\top W_\star$ and the identity, $s_{\min}([W_\star \; \hat{W}_\star])$ can be easily verified to be $\min\{1, s_{\min}(W_\star)\}$.

Now, consider the following thought experiment: suppose we would run the algorithm $\mathcal{A}$ with samples $\left(M\mathbf{x}_i, h(W_\star^\top (M\mathbf{x}_i))\right)$, $i = 1, 2, \ldots, m$, where $\mathbf{x}_i$ is sampled from $\mathcal{D}$ (which by the assumptions of Thm. 5, is supported on vectors of norm at most $\sqrt{d}$). By Eq. (8), $M\mathbf{x}_i$ is supported on the set of vectors of norm at most

$$\|M\| \|\mathbf{x}_i\| \leq \|M\| \sqrt{d} \leq O\left(d\sqrt{d n_d}\right) / \min\{1, s_{\min}(W_\star)\},$$

and the outputs correspond to the network specified by $W_\star$. Therefore, by assumption, the algorithm $\mathcal{A}$ would return w.h.p. a matrix $\tilde{W}_M$ such that

$$\mathbb{E}_{\mathbf{x} \sim \mathcal{D}}\left(f(\tilde{W}_M^\top (M\mathbf{x})) - h(W_\star^\top (M\mathbf{x}))\right)^2 \leq \epsilon.$$

By Eq. (8), $W_\star^\top M = (M^\top W_\star)^\top = W^\top$, so this is equivalent to

$$\mathbb{E}_{\mathbf{x} \sim \mathcal{D}}\left(f(\tilde{W}_M^\top M \mathbf{x}) - h(W^\top \mathbf{x})\right)^2 \leq \epsilon. \tag{9}$$

Let $\tilde{W}_I$ be the matrix returned by $\mathcal{A}$ if we had fed it with the samples $\{(\mathbf{x}_i, h(W^\top \mathbf{x}_i))\}_{i=1}^m$ (or equivalently, $\{(\mathbf{x}_i, h(W_\star^\top (M\mathbf{x}_i)))\}_{i=1}^m$; see footnote 5). Let $E_{\mathbf{x}}$ be the event (conditioned on the samples used by the algorithm) that a freshly sampled $\mathbf{x} \sim \mathcal{D}$ satisfies $\tilde{W}_M^\top M \mathbf{x} = \tilde{W}_I^\top \mathbf{x}$. By Lemma 1, w.h.p. over the samples fed to the algorithm, $\Pr_{\mathbf{x}}(E_{\mathbf{x}}) \geq 1 - O(d/m)$.

Footnote 5: Note that if the algorithm is stochastic, both $\tilde{W}_M$ and $\tilde{W}_I$ are not fixed given the data, but also depend on the algorithm's internal randomness. However, the proof will still follow by conditioning on any possible realization of this randomness.

Therefore, w.h.p. over the samples $\mathbf{x}_1, \ldots, \mathbf{x}_m$,

$$\begin{aligned}
\mathbb{E}_{\mathbf{x} \sim \mathcal{D}}\left(f(\tilde{W}_I^\top \mathbf{x}) - h(W^\top \mathbf{x})\right)^2
&= \Pr_{\mathbf{x}}(E_{\mathbf{x}}) \cdot \mathbb{E}_{\mathbf{x}}\left[\left(f(\tilde{W}_I^\top \mathbf{x}) - h(W^\top \mathbf{x})\right)^2 \,\middle|\, E_{\mathbf{x}}\right] + \Pr_{\mathbf{x}}(\neg E_{\mathbf{x}}) \cdot \mathbb{E}_{\mathbf{x}}\left[\left(f(\tilde{W}_I^\top \mathbf{x}) - h(W^\top \mathbf{x})\right)^2 \,\middle|\, \neg E_{\mathbf{x}}\right] \\
&\leq \Pr_{\mathbf{x}}(E_{\mathbf{x}}) \cdot \mathbb{E}_{\mathbf{x}}\left[\left(f(\tilde{W}_M^\top M \mathbf{x}) - h(W^\top \mathbf{x})\right)^2 \,\middle|\, E_{\mathbf{x}}\right] + \Pr_{\mathbf{x}}(\neg E_{\mathbf{x}}) \cdot 1 \\
&= \mathbb{E}_{\mathbf{x}}\left[\left(f(\tilde{W}_M^\top M \mathbf{x}) - h(W^\top \mathbf{x})\right)^2 \mathbf{1}(E_{\mathbf{x}})\right] + O\left(\frac{d}{m}\right) \\
&\leq \mathbb{E}_{\mathbf{x}}\left[\left(f(\tilde{W}_M^\top M \mathbf{x}) - h(W^\top \mathbf{x})\right)^2\right] + O\left(\frac{d}{m}\right) \leq \epsilon + O\left(\frac{d}{m}\right),
\end{aligned}$$

where we used the fact that $h$ maps $\mathbf{x}$ to $[0, 1]$, a union bound and Eq. (9).

Now, recall that $\tilde{W}_I$ refers to the output of the algorithm, given samples $\{(\mathbf{x}_i, h(W^\top \mathbf{x}_i))\}_{i=1}^m$ where $m = \mathrm{poly}(d, 1/\epsilon)$. Thus, we have shown that w.h.p., as long as the algorithm is fed with $m \geq d/\epsilon$ samples (see footnote 6), the algorithm returns $\tilde{W}_I$ which satisfies

$$\mathbb{E}_{\mathbf{x} \sim \mathcal{D}}\left(f(\tilde{W}_I^\top \mathbf{x}) - h(W^\top \mathbf{x})\right)^2 \leq O(\epsilon).$$

This means that the algorithm successfully learns the hypothesis $\mathbf{x} \mapsto h(W^\top \mathbf{x})$ with respect to the distribution $\mathcal{D}$. Since $\epsilon$ is arbitrarily small and $W, \mathcal{D}$ were chosen arbitrarily, the result follows.

5.3 Proof of Thm. 3

We note that for any function $q$, $\mathbb{E}_{\mathbf{x} \sim \varphi^2}[q(\mathbf{x})] = \sum_i \alpha_i \cdot \mathbb{E}_{\mathbf{x} \sim \varphi_i^2}[q(\mathbf{x})]$. Thus, it is enough to prove the bound in the theorem when $\varphi^2$ consists of a single element whose square root is Fourier-concentrated. For a mixture $\varphi^2 = \sum_i \alpha_i \varphi_i^2$, the result follows by applying the bound for each $\varphi_i^2$ individually, and using Jensen's inequality.

To simplify notation a bit, we let $\psi_{\mathbf{w}}(\cdot)$ stand for the function $\psi(\langle \mathbf{w}, \cdot\rangle)$, and let $h(\cdot - \mathbf{v})$ (where $\mathbf{v}$ is some vector and $h$ is a function on $\mathbb{R}^d$) stand for the function $\mathbf{x} \mapsto h(\mathbf{x} - \mathbf{v})$. Also, we will use several times the fact that for any two $L^2(\mathbb{R}^d)$ functions $h_1, h_2$,

$$\langle h_1(\cdot - \mathbf{v}), h_2(\cdot - \mathbf{v})\rangle = \int h_1(\mathbf{x} - \mathbf{v}) h_2(\mathbf{x} - \mathbf{v})\, d\mathbf{x} = \int h_1(\mathbf{x}) h_2(\mathbf{x})\, d\mathbf{x} = \langle h_1, h_2\rangle.$$

In other words, inner products (and hence also norms) in $L^2(\mathbb{R}^d)$ are invariant to a shift in coordinates.

The proof is a combination of a few lemmas, presented below.

Lemma 2.
For any $\mathbf{w}$, it holds that $\psi_{\mathbf{w}} \varphi \in L^2(\mathbb{R}^d)$, and satisfies

$$\widehat{\psi_{\mathbf{w}} \varphi}(\mathbf{x}) = \sum_{z \in \mathbb{Z}} a_z \cdot \hat{\varphi}(\mathbf{x} - z\mathbf{w})$$

for any $\mathbf{x}$, where $\mathbb{Z}$ is the set of integers and $a_z$ are complex-valued coefficients (corresponding to the Fourier series expansion of $\psi$, hence depending only on $\psi$) which satisfy $\sum_{z \in \mathbb{Z}} |a_z|^2 \leq 1$.

Footnote 6: Even if the algorithm does not require that many samples, we can still artificially add more samples. These are merely used to ensure that its linear invariance is with respect to a sufficiently large dataset.

Proof. First, we note that $\psi_{\mathbf{w}} \varphi \in L^2(\mathbb{R}^d)$, since both $\psi_{\mathbf{w}}$ and $\varphi$ are locally integrable by the theorem's conditions, and satisfy

$$\|\psi_{\mathbf{w}} \varphi\|^2 = \int \psi_{\mathbf{w}}^2(\mathbf{x}) \varphi^2(\mathbf{x})\, d\mathbf{x} = \int \psi^2(\langle \mathbf{w}, \mathbf{x}\rangle) \varphi^2(\mathbf{x})\, d\mathbf{x} \leq \int \varphi^2(\mathbf{x})\, d\mathbf{x} = 1 < \infty.$$

As a result, $\widehat{\psi_{\mathbf{w}} \varphi}$ exists as a function in $L^2(\mathbb{R}^d)$. Since $\psi$ is a bounded variation function, it is equal everywhere to its Fourier series expansion:

$$\psi(x) = \sum_{z \in \mathbb{Z}} a_z \exp(2\pi i z x),$$

where $i$ is the imaginary unit (note that since $\psi$ is real-valued, the imaginary components eventually cancel out, but it will be more convenient for us to represent the Fourier series in this compact form). By Parseval's identity, $\sum_z |a_z|^2 = \int_{-1/2}^{1/2} \psi^2(x)\, dx$, which is at most 1 (since $\psi(x) \in [-1, +1]$). Based on this equation, we have

$$\psi_{\mathbf{w}}(\mathbf{x}) = \psi(\langle \mathbf{w}, \mathbf{x}\rangle) = \sum_{z \in \mathbb{Z}} a_z \exp(2\pi i z \langle \mathbf{w}, \mathbf{x}\rangle).$$

We now wish to compute the Fourier transform of the above (see footnote 7). First, we note that the Fourier transform of $\exp(2\pi i \langle \mathbf{v}, \cdot\rangle)$ is given by $\delta(\cdot - \mathbf{v})$, where $\delta$ is the Dirac delta function (a so-called generalized function which satisfies $\delta(\mathbf{x}) = 0$ for all $\mathbf{x} \neq \mathbf{0}$, and $\int \delta(\mathbf{x})\, d\mathbf{x} = 1$).
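Based on this and the linearity of the Fourier transform, we have that

$$\hat{\psi}_{\mathbf{w}}(\mathbf{x}) = \sum_{z \in \mathbb{Z}} a_z \cdot \delta(\mathbf{x} - z\mathbf{w}),$$

and therefore, by the convolution property of the Fourier transform, we have

$$\widehat{\psi_{\mathbf{w}} \varphi}(\mathbf{x}) = \left(\hat{\psi}_{\mathbf{w}} * \hat{\varphi}\right)(\mathbf{x}) = \int \hat{\psi}_{\mathbf{w}}(\mathbf{z}) \cdot \hat{\varphi}(\mathbf{x} - \mathbf{z})\, d\mathbf{z} = \sum_{z \in \mathbb{Z}} a_z \cdot \int \delta(\mathbf{z} - z\mathbf{w}) \cdot \hat{\varphi}(\mathbf{x} - \mathbf{z})\, d\mathbf{z} = \sum_{z \in \mathbb{Z}} a_z \cdot \hat{\varphi}(\mathbf{x} - z\mathbf{w})$$

as required.

Footnote 7: Strictly speaking, this function does not have a Fourier transform in the sense of Eq. (1), since the associated integrals do not converge. However, the function still has a well-defined Fourier transform in the more general sense of a generalized function or distribution (see e.g. [11] for a survey). In the derivation below, we will simply rely on some standard formulas from the Fourier analysis literature, and refer to [11] for their formal justifications.

Lemma 3. For any distinct integers $z_1 \neq z_2$ and any $\mathbf{w}$ such that $\|\mathbf{w}\| = 2r$, it holds that

$$\left\langle |\hat{\varphi}(\cdot - z_1 \mathbf{w})|, |\hat{\varphi}(\cdot - z_2 \mathbf{w})| \right\rangle \leq 2 \cdot \epsilon(|z_1 - z_2| r).$$

Proof. Let $\Delta = |z_2 - z_1| r$, and $\mathbf{v} = (z_2 - z_1)\mathbf{w}$, so $\mathbf{v}$ is a vector of norm $2\Delta$. Since the inner product is invariant to shifting the coordinates, we can assume without loss of generality that $z_1 = 0$, and our goal is to bound $\langle |\hat{\varphi}|, |\hat{\varphi}(\cdot - \mathbf{v})| \rangle$.

Using the convention that $\mathbf{1}_{\leq \Delta}$ is the indicator of $\{\mathbf{x} : \|\mathbf{x}\| \leq \Delta\}$, and $\mathbf{1}_{> \Delta}$ is the indicator for its complement, we have

$$\begin{aligned}
\langle |\hat{\varphi}|, |\hat{\varphi}(\cdot - \mathbf{v})| \rangle &= \langle |\hat{\varphi}|, |\hat{\varphi}(\cdot - \mathbf{v})| \mathbf{1}_{\leq \Delta} \rangle + \langle |\hat{\varphi}|, |\hat{\varphi}(\cdot - \mathbf{v})| \mathbf{1}_{> \Delta} \rangle \\
&= \langle |\hat{\varphi}|, |\hat{\varphi}(\cdot - \mathbf{v})| \mathbf{1}_{\leq \Delta} \rangle + \langle |\hat{\varphi}| \mathbf{1}_{> \Delta}, |\hat{\varphi}(\cdot - \mathbf{v})| \rangle \\
&\leq \|\hat{\varphi}\| \left\|\hat{\varphi}(\cdot - \mathbf{v}) \mathbf{1}_{\leq \Delta}\right\| + \left\|\hat{\varphi} \mathbf{1}_{> \Delta}\right\| \left\|\hat{\varphi}(\cdot - \mathbf{v})\right\|,
\end{aligned}$$

where in the last step we used Cauchy-Schwarz. Using the fact that norms and inner products are invariant to coordinate shifting, the above is at most

$$\|\hat{\varphi}\| \left\|\hat{\varphi}\, \mathbf{1}_{\leq \Delta}(\cdot + \mathbf{v})\right\| + \left\|\hat{\varphi} \mathbf{1}_{> \Delta}\right\| \|\hat{\varphi}\| = \|\hat{\varphi}\| \left(\sqrt{\int |\hat{\varphi}(\mathbf{x})|^2\, \mathbf{1}_{\|\mathbf{x} + \mathbf{v}\| \leq \Delta}\, d\mathbf{x}} + \sqrt{\int |\hat{\varphi}(\mathbf{x})|^2\, \mathbf{1}_{> \Delta}(\mathbf{x})\, d\mathbf{x}}\right).$$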
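By the triangle inequality and the assumption $\|\mathbf{v}\| = 2\Delta$, the event $\|\mathbf{x} + \mathbf{v}\| \leq \Delta$ implies $\|\mathbf{x}\| \geq \Delta$. Therefore, the above can be upper bounded by

$$2\|\hat{\varphi}\| \sqrt{\int |\hat{\varphi}(\mathbf{x})|^2\, \mathbf{1}_{\geq \Delta}(\mathbf{x})\, d\mathbf{x}} = 2\|\hat{\varphi}\| \cdot \left\|\hat{\varphi} \cdot \mathbf{1}_{\geq \Delta}\right\|.$$

Since $\varphi^2$ is Fourier-concentrated, this is at most $2\epsilon(\Delta)\|\hat{\varphi}\|^2 = 2\epsilon(\Delta)\|\varphi\|^2 = 2\epsilon(\Delta)$, where we use the isometry of the Fourier transform and the assumption that $\|\varphi\|^2 = \int \varphi^2(\mathbf{x})\, d\mathbf{x} = 1$. Plugging back the definition of $\Delta$, the result follows.

Lemma 4. It holds that

$$\sum_{z_1 \neq z_2 \in \mathbb{Z}} |a_{z_1}| \cdot |a_{z_2}| \cdot \epsilon(r|z_1 - z_2|) \leq 2\sum_{n=1}^{\infty} \epsilon(nr).$$

Proof. For simplicity, define $\epsilon'(x) = \epsilon(x)$ for all $x > 0$, and $\epsilon'(0) = 0$. Then the expression in the lemma equals

$$\begin{aligned}
\sum_{z_1, z_2 \in \mathbb{Z}} |a_{z_1}| \cdot |a_{z_2}| \cdot \epsilon'(|z_1 - z_2| r) &= \sum_{z_1, z_2 \in \mathbb{Z}} \left(|a_{z_1}| \sqrt{\epsilon'(|z_1 - z_2| r)}\right)\left(|a_{z_2}| \sqrt{\epsilon'(|z_1 - z_2| r)}\right) \\
&\leq \sqrt{\sum_{z_1, z_2 \in \mathbb{Z}} |a_{z_1}|^2 \epsilon'(|z_1 - z_2| r)} \sqrt{\sum_{z_1, z_2 \in \mathbb{Z}} |a_{z_2}|^2 \epsilon'(|z_1 - z_2| r)} \\
&= \sum_{z_1, z_2 \in \mathbb{Z}} |a_{z_1}|^2 \epsilon'(|z_1 - z_2| r),
\end{aligned}$$

where in the last step we used the fact that the two inner square roots are the same up to a different indexing. Recalling the definition of $\epsilon'$ and that $\sum_z |a_z|^2 \leq 1$, the above is at most

$$\sum_{z_1 \in \mathbb{Z}} |a_{z_1}|^2 \sum_{z_2 \in \mathbb{Z}} \epsilon'(|z_1 - z_2| r) \leq \sup_{z_1 \in \mathbb{Z}} \sum_{z_2 \in \mathbb{Z}} \epsilon'(|z_1 - z_2| r) = \epsilon'(0) + 2\sum_{n=1}^{\infty} \epsilon'(nr) = 2\sum_{n=1}^{\infty} \epsilon(nr).$$

Lemma 5. For any $g \in L^2(\mathbb{R}^d)$, if $d \geq c'$ (for some universal constant $c'$), and we sample $\mathbf{w}$ uniformly at random from $\{\mathbf{w} : \|\mathbf{w}\| = 2r\}$, it holds that

$$\mathbb{E}\left[\left(\left\langle g, \widehat{\psi_{\mathbf{w}} \varphi}\right\rangle - a_0 \langle g, \hat{\varphi}\rangle\right)^2\right] \leq 10\|g\|^2 \left(\exp(-cd) + \sum_{n=1}^{\infty} \epsilon(nr)\right),$$

where $a_0$ is the coefficient from Lemma 2 and $c$ is a universal positive constant.

Proof.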
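By symmetry, given any function $f$ of $\mathbf{w}$, the expectation $\mathbb{E}_{\mathbf{w}}[f(\mathbf{w})]$ (where $\mathbf{w}$ is uniform on a sphere) can be equivalently written as $\mathbb{E}_{\mathbf{w} \in \mathcal{W}} \mathbb{E}_U[f(U\mathbf{w})] = \mathbb{E}_U \mathbb{E}_{\mathbf{w} \in \mathcal{W}}[f(U\mathbf{w})]$, where $U$ is a rotation matrix chosen uniformly at random (so that for any $\mathbf{w}$, $U\mathbf{w}$ is uniformly distributed on the sphere of radius $\|\mathbf{w}\|$), and $\mathbb{E}_{\mathbf{w} \in \mathcal{W}}$ refers to a uniform distribution of $\mathbf{w}$ over some finite set $\mathcal{W}$ of vectors of norm $2r$. In particular, we will choose $\mathcal{W} = \{\mathbf{w}_1, \ldots, \mathbf{w}_{\lceil \exp(cd) \rceil}\}$ (where $c$ is some positive universal constant) which satisfies the following:

$$\forall i \;\; \|\mathbf{w}_i\| = 2r, \qquad \forall i \neq j \;\; |\langle \mathbf{w}_i, \mathbf{w}_j\rangle| < 2r^2. \tag{10}$$

The existence of such a set follows from standard concentration of measure arguments (i.e. if we pick that many vectors uniformly at random from $\left\{-\frac{2r}{\sqrt{d}}, +\frac{2r}{\sqrt{d}}\right\}^d$, and $c$ is small enough, the vectors will satisfy the above with overwhelming probability, hence such a set must exist).

Thus, our goal is to bound

$$\mathbb{E}_U \mathbb{E}_{\mathbf{w} \in \mathcal{W}}\left[\left(\left\langle g, \widehat{\psi_{U\mathbf{w}} \varphi}\right\rangle - a_0 \langle g, \hat{\varphi}\rangle\right)^2\right].$$

In fact, we will prove the bound stated in the lemma for any $U$, and will focus on $U = I$ without loss of generality (the argument for other $U$ is exactly the same). First, by applying Lemma 2, we have

$$\mathbb{E}_{\mathbf{w} \in \mathcal{W}}\left(\left\langle g, \widehat{\psi_{\mathbf{w}} \varphi}\right\rangle - a_0 \langle g, \hat{\varphi}\rangle\right)^2 = \mathbb{E}_{\mathbf{w} \in \mathcal{W}}\left(\left\langle g, \sum_{z \in \mathbb{Z}} a_z \hat{\varphi}(\cdot - z\mathbf{w})\right\rangle - a_0 \langle g, \hat{\varphi}\rangle\right)^2 = \mathbb{E}_{\mathbf{w} \in \mathcal{W}}\left(\left\langle g, \sum_{z \in \mathbb{Z} \setminus \{0\}} a_z \hat{\varphi}(\cdot - z\mathbf{w})\right\rangle\right)^2. \tag{11}$$

For any $\mathbf{w} \in \mathcal{W}$, let

$$A_{\mathbf{w}} = \left\{\mathbf{x} \in \mathbb{R}^d : \exists z \in \mathbb{Z} \setminus \{0\} \text{ s.t. } \|\mathbf{x} - z\mathbf{w}\| < r\right\}.$$

In words, each $A_{\mathbf{w}}$ corresponds to the union of open balls of radius $r$ around $\pm\mathbf{w}, \pm 2\mathbf{w}, \pm 3\mathbf{w}, \ldots$. An important property of these sets is that they are disjoint: $A_{\mathbf{w}} \cap A_{\mathbf{w}'} = \emptyset$ for any distinct $\mathbf{w}, \mathbf{w}' \in \mathcal{W}$. To see why, note that if there was some $\mathbf{x}$ in both of them, it would imply $\|\mathbf{x} - z_1 \mathbf{w}\| < r$ and $\|\mathbf{x} - z_2 \mathbf{w}'\| < r$ for some non-zero $z_1, z_2 \in \mathbb{Z}$, hence $\|z_1 \mathbf{w} - z_2 \mathbf{w}'\| < 2r$ by the triangle inequality.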
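Squaring both sides gives $4r^2 z_1^2 + 4r^2 z_2^2 - 2 z_1 z_2 \langle w, w' \rangle < 4r^2$. Rearranging, and using the facts that $\|w\| = \|w'\| = 2r$ and $|z_1|, |z_2| \ge 1$ (so that $z_1^2 + z_2^2 - 1 \ge \tfrac{1}{2}(z_1^2 + z_2^2)$), we would get
\[
2|z_1 z_2| \cdot |\langle w, w' \rangle| > 4r^2 (z_1^2 + z_2^2 - 1) \ge 2r^2 (z_1^2 + z_2^2)
\;\;\Rightarrow\;\;
|\langle w, w' \rangle| \ge r^2 \left(\left|\frac{z_1}{z_2}\right| + \left|\frac{z_2}{z_1}\right|\right) \ge 2r^2,
\]
where we used the fact that $x + 1/x \ge 2$ for all $x > 0$. This contradicts the assumption on $\mathcal{W}$ (see Eq. (10)), and establishes that $\{A_w\}_{w \in \mathcal{W}}$ are indeed disjoint sets.

We now continue by analyzing Eq. (11). Letting $\mathbf{1}_{A_w}$ be the indicator function of the set $A_w$, and $\mathbf{1}_{A_w^C}$ be the indicator of its complement, and recalling that $(a + b)^2 \le 2(a^2 + b^2)$, we can upper bound Eq. (11) by
\[
2 \cdot \mathbb{E}_{w \in \mathcal{W}} \left\langle g, \mathbf{1}_{A_w} \sum_{z \in \mathbb{Z} \setminus \{0\}} a_z \hat{\varphi}(\cdot - zw)\right\rangle^2
+ 2 \cdot \mathbb{E}_{w \in \mathcal{W}} \left\langle g, \mathbf{1}_{A_w^C} \sum_{z \in \mathbb{Z} \setminus \{0\}} a_z \hat{\varphi}(\cdot - zw)\right\rangle^2. \tag{12}
\]
We consider each expectation separately. Starting with the first one, we have
\[
\begin{aligned}
\mathbb{E}_{w \in \mathcal{W}} \left\langle g, \mathbf{1}_{A_w} \sum_{z \in \mathbb{Z} \setminus \{0\}} a_z \hat{\varphi}(\cdot - zw)\right\rangle^2
&= \mathbb{E}_{w \in \mathcal{W}} \left\langle g, \mathbf{1}_{A_w}\left(\widehat{\psi_w \varphi} - a_0 \hat{\varphi}\right)\right\rangle^2
= \mathbb{E}_{w \in \mathcal{W}} \left\langle \mathbf{1}_{A_w} g, \widehat{\psi_w \varphi} - a_0 \hat{\varphi}\right\rangle^2 \\
&\le \mathbb{E}_{w \in \mathcal{W}} \left[\|\mathbf{1}_{A_w} g\|^2 \left\|\widehat{\psi_w \varphi} - a_0 \hat{\varphi}\right\|^2\right] \\
&\le 2 \cdot \mathbb{E}_{w \in \mathcal{W}} \left[\|\mathbf{1}_{A_w} g\|^2 \left(\left\|\widehat{\psi_w \varphi}\right\|^2 + \|a_0 \hat{\varphi}\|^2\right)\right] \\
&= 2 \cdot \mathbb{E}_{w \in \mathcal{W}} \left[\|\mathbf{1}_{A_w} g\|^2 \left(\|\psi_w \varphi\|^2 + |a_0|^2 \cdot \|\hat{\varphi}\|^2\right)\right].
\end{aligned}
\]
Since we have $\|\hat{\varphi}\| = \|\varphi\| = 1$, $|a_0|^2 \le \sum_z |a_z|^2 \le 1$, and $\|\psi_w \varphi\|^2 = \int \psi_w^2(x) \varphi^2(x)\, dx \le \int \varphi^2(x)\, dx = 1$, the above is at most
\[
4 \cdot \mathbb{E}_{w \in \mathcal{W}} \left[\|\mathbf{1}_{A_w} g\|^2\right]
\le \frac{4}{|\mathcal{W}|} \sum_{w \in \mathcal{W}} \int \mathbf{1}_{A_w}(x) |g(x)|^2\, dx
= \frac{4}{|\mathcal{W}|} \int \left(\sum_{w \in \mathcal{W}} \mathbf{1}_{A_w}(x)\right) |g(x)|^2\, dx.
\]
Since the $A_w$ are disjoint sets, $\sum_{w \in \mathcal{W}} \mathbf{1}_{A_w}(x) \le 1$ for any $x$, so the above is at most
\[
\frac{4}{|\mathcal{W}|} \int |g(x)|^2\, dx \le 4 \exp(-cd) \|g\|^2. \tag{13}
\]
We now turn to analyze the second expectation in Eq. (12), namely
\[
\mathbb{E}_{w \in \mathcal{W}} \left\langle g, \mathbf{1}_{A_w^C} \sum_{z \in \mathbb{Z} \setminus \{0\}} a_z \hat{\varphi}(\cdot - zw)\right\rangle^2.
\]
We will upper bound the expression deterministically for any $w$, so we may drop the expectation.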
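As an informal numerical check of the existence claim behind Eq. (10), the following sketch draws random sign vectors from $\{-2r/\sqrt{d}, +2r/\sqrt{d}\}^d$ and verifies both conditions. It is only an illustration under stated assumptions: the values of `d`, `r`, `num_vectors` and the seed are arbitrary choices made here, the function names are hypothetical, and the number of vectors is far smaller than the $\lceil \exp(cd) \rceil$ used in the proof.

```python
import itertools
import math
import random


def sample_sign_vectors(num_vectors, d, r, seed=0):
    """Draw vectors uniformly from {-2r/sqrt(d), +2r/sqrt(d)}^d (illustrative helper)."""
    rng = random.Random(seed)
    scale = 2.0 * r / math.sqrt(d)
    return [[scale * rng.choice((-1.0, 1.0)) for _ in range(d)]
            for _ in range(num_vectors)]


def max_abs_inner_product(vectors):
    """Largest |<w_i, w_j>| over all pairs i != j."""
    return max(abs(sum(a * b for a, b in zip(u, v)))
               for u, v in itertools.combinations(vectors, 2))


def satisfies_eq10(vectors, r, tol=1e-9):
    """Check ||w_i|| = 2r for all i and |<w_i, w_j>| < 2r^2 for all i != j."""
    norms_ok = all(abs(math.sqrt(sum(a * a for a in w)) - 2.0 * r) <= tol
                   for w in vectors)
    return norms_ok and max_abs_inner_product(vectors) < 2.0 * r * r


# Assumed illustrative parameters, not taken from the paper.
d, r, num_vectors = 400, 1.0, 40
W = sample_sign_vectors(num_vectors, d, r)
print(satisfies_eq10(W, r), max_abs_inner_product(W))
```

Every such vector has norm exactly $2r$ by construction, and each pairwise inner product is $4r^2/d$ times a sum of $d$ independent random signs, which is why the inner products concentrate well below $2r^2$ once $d$ is moderately large.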
Applying Cauchy-Schwarz, this second term is at most
\[
\|g\|^2 \cdot \left\|\mathbf{1}_{A_w^C} \sum_{z \in \mathbb{Z} \setminus \{0\}} a_z \hat{\varphi}(\cdot - zw)\right\|^2
= \|g\|^2 \sum_{z_1, z_2 \in \mathbb{Z} \setminus \{0\}} a_{z_1} a_{z_2} \left\langle \mathbf{1}_{A_w^C} \hat{\varphi}(\cdot - z_1 w), \hat{\varphi}(\cdot - z_2 w)\right\rangle. \tag{14}
\]
We now divide the terms in the sum above into two cases:

• If $z_1 = z_2$, then
\[
\left\langle \mathbf{1}_{A_w^C} \hat{\varphi}(\cdot - z_1 w), \hat{\varphi}(\cdot - z_2 w)\right\rangle
= \int \mathbf{1}_{A_w^C}(x) |\hat{\varphi}(x - z_1 w)|^2\, dx
= \int \mathbf{1}_{A_w^C}(x + z_1 w) |\hat{\varphi}(x)|^2\, dx,
\]
and by definition of $A_w^C$ and the assumption $z_1 \ne 0$, we have $\mathbf{1}_{A_w^C}(x + z_1 w) = 1$ only if $\|x\| \ge r$. Therefore, as $\varphi$ is Fourier-concentrated, the above is at most
\[
\int_{x : \|x\| \ge r} |\hat{\varphi}(x)|^2\, dx \le \epsilon^2(r) \cdot \|\hat{\varphi}\|^2 = \epsilon^2(r) \cdot \|\varphi\|^2 = \epsilon^2(r).
\]

• If $z_1 \ne z_2$, then by Lemma 3,
\[
\left\langle \mathbf{1}_{A_w^C} \hat{\varphi}(\cdot - z_1 w), \hat{\varphi}(\cdot - z_2 w)\right\rangle
\le \left\langle |\hat{\varphi}(\cdot - z_1 w)|, |\hat{\varphi}(\cdot - z_2 w)|\right\rangle
\le 2\epsilon(|z_1 - z_2| r).
\]

Plugging these two cases back into Eq. (14), we get the upper bound
\[
\|g\|^2 \left(\sum_{z \in \mathbb{Z} \setminus \{0\}} |a_z|^2 \epsilon^2(r) + 2 \sum_{z_1 \ne z_2 \in \mathbb{Z}} |a_{z_1}| \cdot |a_{z_2}| \cdot \epsilon(|z_1 - z_2| r)\right).
\]
Noting that $\sum_z |a_z|^2 \le 1$, and applying Lemma 4, the above is at most
\[
\|g\|^2 \left(\epsilon^2(r) + 4\sum_{n=1}^{\infty} \epsilon(nr)\right) \le 5\|g\|^2 \sum_{n=1}^{\infty} \epsilon(nr),
\]
where we used the fact that $\epsilon^2(r) \le \epsilon(r) \le \sum_{n=1}^{\infty} \epsilon(nr)$. Recalling that this is an upper bound on the second expectation in Eq. (12), and that the first expectation is upper bounded as in Eq. (13), we get that Eq. (12) (and hence the expression in the lemma statement) is at most
\[
10\|g\|^2 \left(\exp(-cd) + \sum_{n=1}^{\infty} \epsilon(nr)\right)
\]
as required.

With these lemmas in hand, we can now turn to prove the theorem. We have that
\[
\mathrm{Var}_{w^\star}[\nabla F_{w^\star}(w)]
= \mathbb{E}_{w^\star} \left\|\nabla F_{w^\star}(w) - \mathbb{E}_{w^\star}[\nabla F_{w^\star}(w)]\right\|^2
\le \mathbb{E}_{w^\star} \left\|\nabla F_{w^\star}(w) - p\right\|^2
\]
for any vector $p$ which does not depend on $w^\star$ (this $p$ will be determined later). Recalling the definition of the objective function $F$, and letting $g(x) = (g_1(x), g_2(x), \ldots) = \frac{\partial}{\partial w} f(w, x)$, the above equals
\[
\begin{aligned}
\mathbb{E}_{w^\star} \left\|\mathbb{E}_{x \sim \varphi^2}\left[\left(f(w, x) - \psi(\langle w^\star, x \rangle)\right) g(x)\right] - p\right\|^2
&= \sum_i \mathbb{E}_{w^\star} \left(\mathbb{E}_{x \sim \varphi^2}\left[f(w, x) g_i(x) - \psi(\langle w^\star, x \rangle) g_i(x)\right] - p_i\right)^2 \\
&= \sum_i \mathbb{E}_{w^\star} \left(\langle \varphi g_i, \varphi f(w, \cdot) \rangle - \langle \varphi g_i, \varphi \psi_{w^\star} \rangle - p_i\right)^2.
\end{aligned}
\]
Let us now choose $p$ so that $p_i = \langle \varphi g_i, \varphi f(w, \cdot) \rangle - \langle \varphi g_i, a_0 \varphi \rangle$ (note that this choice is indeed independent of $w^\star$). Plugging back and applying Lemma 5 (using the $L^2$ function $\widehat{\varphi g_i}$ for each $i$), we get
\[
\begin{aligned}
\sum_i \mathbb{E}_{w^\star} \left(\langle \varphi g_i, \varphi \psi_{w^\star} \rangle - \langle \varphi g_i, a_0 \varphi \rangle\right)^2
&= \sum_i \mathbb{E}_{w^\star} \left(\left\langle \widehat{\varphi g_i}, \widehat{\varphi \psi_{w^\star}}\right\rangle - \left\langle \widehat{\varphi g_i}, a_0 \hat{\varphi}\right\rangle\right)^2 \\
&\le 10 \sum_i \left\|\widehat{\varphi g_i}\right\|^2 \left(\exp(-cd) + \sum_{n=1}^{\infty} \epsilon(nr)\right),
\end{aligned}
\]
and since $\|\widehat{\varphi g_i}\| = \|\varphi g_i\|$ by the isometry of the Fourier transform, and
\[
\sum_{i=1}^{d} \|\varphi g_i\|^2 = \sum_{i=1}^{d} \int g_i^2(x) \varphi^2(x)\, dx = \int \|g(x)\|^2 \varphi^2(x)\, dx = \mathbb{E}_{x \sim \varphi^2} \|g(x)\|^2 \le G_w^2,
\]
the theorem follows.

5.4 Proof of Thm. 4

We will assume w.l.o.g. that the algorithm is deterministic: if it is randomized, we can simply prove the statement for any possible realization of its random coin flips. We consider an oracle which, given a point $w$, returns $\mathbb{E}_{w^\star}[\nabla F_{w^\star}(w)]$ if $\|\nabla F_{w^\star}(w) - \mathbb{E}_{w^\star}[\nabla F_{w^\star}(w)]\| \le \varepsilon$, and $\nabla F_{w^\star}(w)$ otherwise. Thus, it is enough to show that with probability at least $1 - p$, the oracle will only return responses of the form $\mathbb{E}_{w^\star}[\nabla F_{w^\star}(w)]$, which is clearly independent of $w^\star$. Since the algorithm's output can depend on $w^\star$ only through the oracle responses, this will prove the theorem.

The algorithm's first point $w_1$ is fixed before receiving any information from the oracle, and is therefore independent of $w^\star$. By Thm. 3, we have that $\mathrm{Var}_{w^\star}(\nabla F_{w^\star}(w_1)) \le \varepsilon^3$, which by Chebyshev's inequality implies that
\[
\Pr\left(\left\|\nabla F_{w^\star}(w_1) - \mathbb{E}_{w^\star}[\nabla F_{w^\star}(w_1)]\right\| > \varepsilon\right) \le \varepsilon,
\]
where the probability is over the choice of $w^\star$. Assuming the event above does not occur, the oracle returns $\mathbb{E}_{w^\star}[\nabla F_{w^\star}(w_1)]$, which does not depend on the actual choice of $w^\star$. This means that the next point $w_2$ chosen by the algorithm is fixed independently of $w^\star$. Again by Thm.
3 and Chebyshev's inequality,
\[
\Pr\left(\left\|\nabla F_{w^\star}(w_2) - \mathbb{E}_{w^\star}[\nabla F_{w^\star}(w_2)]\right\| > \varepsilon\right) \le \varepsilon.
\]
Repeating this argument and applying a union bound, it follows that as long as the number of iterations $T$ satisfies $T\varepsilon \le p$ (or equivalently $T \le p/\varepsilon$), then with probability at least $1 - p$ the oracle reveals no information whatsoever on the choice of $w^\star$, and all points chosen by the algorithm (and hence also its output) are independent of $w^\star$, as required.

Acknowledgements

This research is supported in part by an FP7 Marie Curie CIG grant, Israel Science Foundation grant 425/13, and the Intel ICRI-CI Institute.

References

[1] Alexandr Andoni, Rina Panigrahy, Gregory Valiant, and Li Zhang. Learning polynomials with neural networks. In Proceedings of the 31st International Conference on Machine Learning (ICML-14), pages 1908–1916, 2014.
[2] Sanjeev Arora, Aditya Bhaskara, Rong Ge, and Tengyu Ma. Provable bounds for learning some deep representations. In ICML, pages 584–592, 2014.
[3] Avrim Blum, Merrick Furst, Jeffrey Jackson, Michael Kearns, Yishay Mansour, and Steven Rudich. Weakly learning DNF and characterizing statistical query learning using Fourier analysis. In Proceedings of the twenty-sixth annual ACM symposium on Theory of computing, pages 253–262. ACM, 1994.
[4] Stephen Boyd and Lieven Vandenberghe. Convex optimization. Cambridge University Press, 2004.
[5] Anna Choromanska, Mikael Henaff, Michael Mathieu, Gérard Ben Arous, and Yann LeCun. The loss surfaces of multilayer networks. In AISTATS, 2015.
[6] Amit Daniely, Roy Frostig, and Yoram Singer. Toward deeper understanding of neural networks: The power of initialization and a dual view on expressivity. arXiv preprint arXiv:1602.05897, 2016.
[7] Amit Daniely and Shai Shalev-Shwartz. Complexity theoretic limitations on learning DNF's. In 29th Annual Conference on Learning Theory, pages 815–830, 2016.
[8] John Duchi, Elad Hazan, and Yoram Singer.
Adaptive subgradient methods for online learning and stochastic optimization. Journal of Machine Learning Research, 12(Jul):2121–2159, 2011.
[9] Vitaly Feldman, Cristobal Guzman, and Santosh Vempala. Statistical query algorithms for stochastic convex optimization. arXiv preprint arXiv:1512.09170, 2015.
[10] Elad Hazan, Kfir Levy, and Shai Shalev-Shwartz. Beyond convexity: Stochastic quasi-convex optimization. In Advances in Neural Information Processing Systems, pages 1594–1602, 2015.
[11] John K. Hunter and Bruno Nachtergaele. Applied analysis. World Scientific Publishing Co., Inc., River Edge, NJ, 2001.
[12] Sergey Ioffe and Christian Szegedy. Batch normalization: Accelerating deep network training by reducing internal covariate shift. In Proceedings of the 32nd International Conference on Machine Learning (ICML-15), pages 448–456, 2015.
[13] Majid Janzamin, Hanie Sedghi, and Anima Anandkumar. Beating the perils of non-convexity: Guaranteed training of neural networks using tensor methods. CoRR abs/1506.08473, 2015.
[14] Michael Kearns. Efficient noise-tolerant learning from statistical queries. Journal of the ACM (JACM), 45(6):983–1006, 1998.
[15] Adam R. Klivans and Pravesh Kothari. Embedding hard learning problems into Gaussian space. In APPROX/RANDOM, 2014.
[16] Adam R. Klivans and Alexander A. Sherstov. Cryptographic hardness for learning intersections of halfspaces. Journal of Computer and System Sciences, 75(1):2–12, 2009.
[17] Roi Livni, Shai Shalev-Shwartz, and Ohad Shamir. On the computational efficiency of training neural networks. In Advances in Neural Information Processing Systems, pages 855–863, 2014.
[18] Peter McCullagh and John A. Nelder. Generalized linear models, volume 37. CRC Press, 1989.
[19] Yurii E. Nesterov. Minimization methods for nonsmooth convex and quasiconvex functions. Matekon, 29:519–531, 1984.
[20] Itay Safran and Ohad Shamir.
On the quality of the initial basin in overspecified neural networks. In ICML, 2016.
[21] Daniel Soudry and Yair Carmon. No bad local minima: Data independent training error guarantees for multilayer neural networks. arXiv preprint arXiv:1605.08361, 2016.
[22] Yuchen Zhang, Jason D. Lee, Martin J. Wainwright, and Michael I. Jordan. Learning halfspaces and neural networks with random initialization. arXiv preprint arXiv:1511.07948, 2015.
