Query Expansion Based on Crowd Knowledge for Code Search

Because code search is a frequent developer activity in software development, improving its performance is a critical task. In the text-retrieval-based techniques used for code search, the term mismatch problem is a critical language issue for retrieval effectiveness. By reformulating queries, query expansion provides an effective way to address the term mismatch problem. In this paper, we propose Query Expansion based on Crowd Knowledge (QECK), a novel technique to improve the performance of code search algorithms. QECK identifies software-specific expansion words from high-quality pseudo relevance feedback question and answer pairs on Stack Overflow to automatically generate the expansion queries. Furthermore, we incorporate QECK into the classic Rocchio’s model and propose a QECK-based code search method, QECKRocchio. We conduct three experiments to evaluate our QECK technique and investigate QECKRocchio on a large-scale corpus containing real-world code snippets and a collection of question and answer pairs. The results show that QECK improves the performance of three code search algorithms by up to 64 percent in Precision and 35 percent in NDCG. Meanwhile, compared with the state-of-the-art query expansion method, QECKRocchio improves Precision by 22 percent and NDCG by 16 percent.

💡 Research Summary

Code search is a routine activity for developers who need to locate relevant snippets, APIs, or implementation patterns within large code bases. Traditional text‑based retrieval methods suffer from a “term mismatch” problem: developers formulate queries in natural language, while the code repository contains domain‑specific terminology, abbreviations, and framework‑specific identifiers. This mismatch reduces precision and ranking quality.

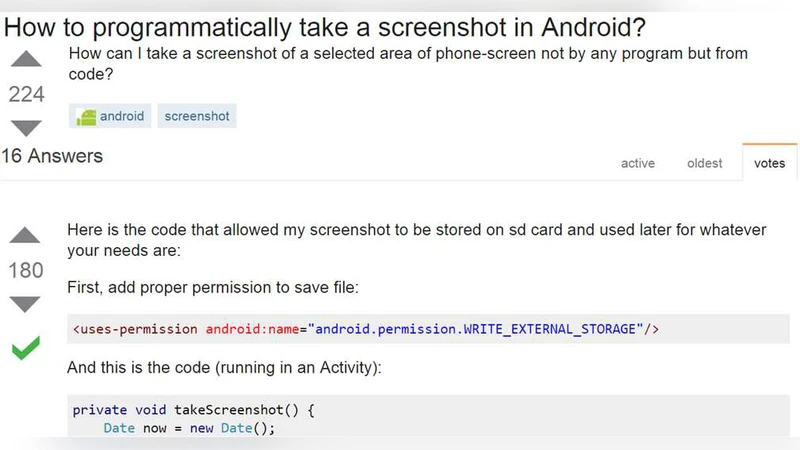

The paper introduces Query Expansion based on Crowd Knowledge (QECK), a novel approach that leverages high‑quality question‑answer (Q&A) pairs from Stack Overflow as pseudo‑relevance feedback. The authors first filter Stack Overflow posts by score, acceptance, and activity to obtain a reliable corpus of developer‑generated knowledge. From this corpus they automatically extract software‑specific expansion terms using a combination of TF‑IDF and Pointwise Mutual Information (PMI). General English stop‑words and language‑specific keywords (e.g., “if”, “for”) are removed, leaving a set of candidate expansion words that reflect the vocabulary actually used by programmers.
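The term-extraction step can be illustrated with a minimal sketch. It covers only a TF‑IDF ranking of candidate words from the feedback Q&A pairs; the PMI component is omitted, and the stop-word list, the tokenizer, the smoothed IDF formula, and the function name `expansion_terms` are all assumptions made for illustration, not the authors' implementation.

```python
import math
import re
from collections import Counter

# Tiny illustrative stop-word list (assumption); a real pipeline would use a
# fuller list plus programming-language keywords.
STOP_WORDS = {"a", "an", "the", "to", "in", "of", "how", "do", "i", "is",
              "if", "for", "and", "you", "can", "use", "with"}

def tokenize(text):
    """Lowercase the text and keep identifier-like tokens that are not stop words."""
    tokens = re.findall(r"[a-z][a-z0-9_]*", text.lower())
    return [t for t in tokens if t not in STOP_WORDS]

def expansion_terms(query, feedback_docs, corpus_docs, top_n=2):
    """Rank non-query terms in the feedback documents by summed TF-IDF."""
    query_terms = set(tokenize(query))
    corpus_tokens = [set(tokenize(d)) for d in corpus_docs]
    n_docs = len(corpus_tokens)
    # Document frequency of every term over the whole Q&A corpus.
    df = Counter(t for doc in corpus_tokens for t in doc)

    scores = Counter()
    for doc in feedback_docs:
        tf = Counter(tokenize(doc))
        for term, count in tf.items():
            if term in query_terms:
                continue  # the query already contains this word
            idf = math.log((1 + n_docs) / (1 + df[term])) + 1  # smoothed IDF
            scores[term] += count * idf
    return [term for term, _ in scores.most_common(top_n)]

corpus = [
    "How do I read a file in Java? Use BufferedReader with FileReader.",
    "Wrap a FileReader in a BufferedReader and call readLine in a loop.",
    "How to sort a list in Python? Use sorted or list.sort.",
    "Parse JSON in Java with a library such as Jackson.",
]
# Assume the first two Q&A pairs were retrieved as pseudo-relevance feedback.
terms = expansion_terms("read file java", corpus[:2], corpus)
expanded_query = "read file java " + " ".join(terms)
```

In this toy corpus the two highest-scoring candidates are the API names that recur across the feedback posts, which is the kind of software-specific vocabulary the summary describes.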

These expansion terms are appended to the original developer query, producing an “expanded query”. The expanded query is then incorporated into the classic Rocchio relevance‑feedback model, yielding the QECKRocchio method. Rocchio adjusts the query vector by moving it toward the centroid of relevant documents (weighted by β) and away from non‑relevant documents (weighted by γ). In QECKRocchio the authors set a high β value to emphasize the influence of the crowd‑derived expansion terms and keep γ close to zero to minimize noise from non‑relevant code snippets.
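The Rocchio update described above can be sketched over sparse term-weight vectors. The standard formulation also scales the original query by a weight α, which the summary does not mention; the default values of `alpha`, `beta`, and `gamma` below are placeholders chosen for illustration (a relatively large β and γ at zero), not the settings reported in the paper.

```python
from collections import Counter

def rocchio(query_vec, relevant, non_relevant, alpha=1.0, beta=0.75, gamma=0.0):
    """Return alpha*q + beta*centroid(relevant) - gamma*centroid(non_relevant).

    All vectors are dicts mapping a term to its weight. Terms whose final
    weight is not positive are dropped, as is common for Rocchio.
    """
    updated = Counter()
    for term, weight in query_vec.items():
        updated[term] += alpha * weight
    for doc in relevant:
        for term, weight in doc.items():
            updated[term] += beta * weight / len(relevant)
    for doc in non_relevant:
        for term, weight in doc.items():
            updated[term] -= gamma * weight / len(non_relevant)
    return {term: weight for term, weight in updated.items() if weight > 0}

# Toy example: the feedback documents stand in for crowd-derived expansion terms.
query = {"read": 1.0, "file": 1.0}
feedback = [
    {"bufferedreader": 2.0, "file": 1.0},
    {"bufferedreader": 1.0, "readline": 1.0},
]
new_query = rocchio(query, feedback, [], alpha=1.0, beta=0.5, gamma=0.0)
```

With γ at zero the non-relevant set has no effect, so the query only moves toward the feedback centroid: terms absent from the original query, such as `bufferedreader`, enter the new query with weight proportional to β.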

Three large‑scale experiments were conducted. A code‑snippet corpus comprising millions of lines from multiple programming languages served as the target collection, and more than ten thousand high‑quality Stack Overflow Q&A pairs formed the pseudo‑feedback set. The authors evaluated three baseline code‑search algorithms (BM25, a Lucene‑based engine, and a deep‑learning model called DeepCode) with and without QECK, and also compared QECKRocchio against a state‑of‑the‑art Word2Vec‑based query expansion technique. Evaluation metrics were Precision at 10 and 20 (P@10, P@20) and Normalized Discounted Cumulative Gain at 10 and 20 (NDCG@10, NDCG@20).
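For readers unfamiliar with the two metrics, the sketch below computes them for one ranked result list. It assumes the linear-gain form of DCG, with each gain divided by log2(rank + 1); a common alternative uses 2^rel − 1 as the gain, and the summary does not say which variant the paper adopts. The relevance labels are invented for illustration.

```python
import math

def precision_at_k(relevance, k):
    """Fraction of the top-k results that are relevant (grade > 0)."""
    return sum(1 for grade in relevance[:k] if grade > 0) / k

def dcg_at_k(relevance, k):
    """Discounted cumulative gain with linear gain and a log2(rank + 1) discount."""
    return sum(grade / math.log2(rank + 2)
               for rank, grade in enumerate(relevance[:k]))

def ndcg_at_k(relevance, k):
    """DCG normalized by the DCG of the ideal (descending) ordering."""
    ideal = dcg_at_k(sorted(relevance, reverse=True), k)
    return dcg_at_k(relevance, k) / ideal if ideal > 0 else 0.0

# Relevance grades of the returned snippets in ranked order (illustrative data).
ranked = [1, 0, 1, 0, 0]
p5 = precision_at_k(ranked, 5)
n5 = ndcg_at_k(ranked, 5)
```

Precision ignores where the relevant snippets appear within the top k, whereas NDCG rewards placing them earlier, which is why the two metrics are usually reported together.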

Results show that QECK consistently improves the baselines: precision improves by up to 64 % and NDCG by up to 35 %, with average gains of 48 % in precision and 27 % in NDCG across the three algorithms. Compared with the Word2Vec expansion, QECKRocchio achieved a further 22 % increase in precision and a 16 % increase in NDCG, which suggests that crowd‑sourced, domain‑specific terms are more effective than generic word‑embedding synonyms for code retrieval.

The authors acknowledge limitations: Stack Overflow’s content is biased toward popular languages and frameworks, and the real‑time computation of expansion terms adds overhead. They suggest future work on integrating other developer‑generated sources (GitHub Issues, Reddit programming communities), designing lightweight term‑selection pipelines, and combining QECK with neural query‑rewriting models for further gains.

In summary, the paper demonstrates that leveraging crowd knowledge from a developer‑centric Q&A platform can substantially mitigate term mismatch in code search. By automatically extracting relevant expansion terms and feeding them into a Rocchio‑style relevance model, QECKRocchio delivers significant improvements over both traditional retrieval baselines and contemporary expansion methods, offering a practical, scalable enhancement for developer tools.
