The Mythos of Model Interpretability

Supervised machine learning models boast remarkable predictive capabilities. But can you trust your model? Will it work in deployment? What else can it tell you about the world? We want models to be not only good, but interpretable. And yet the task …

Authors: Zachary C. Lipton

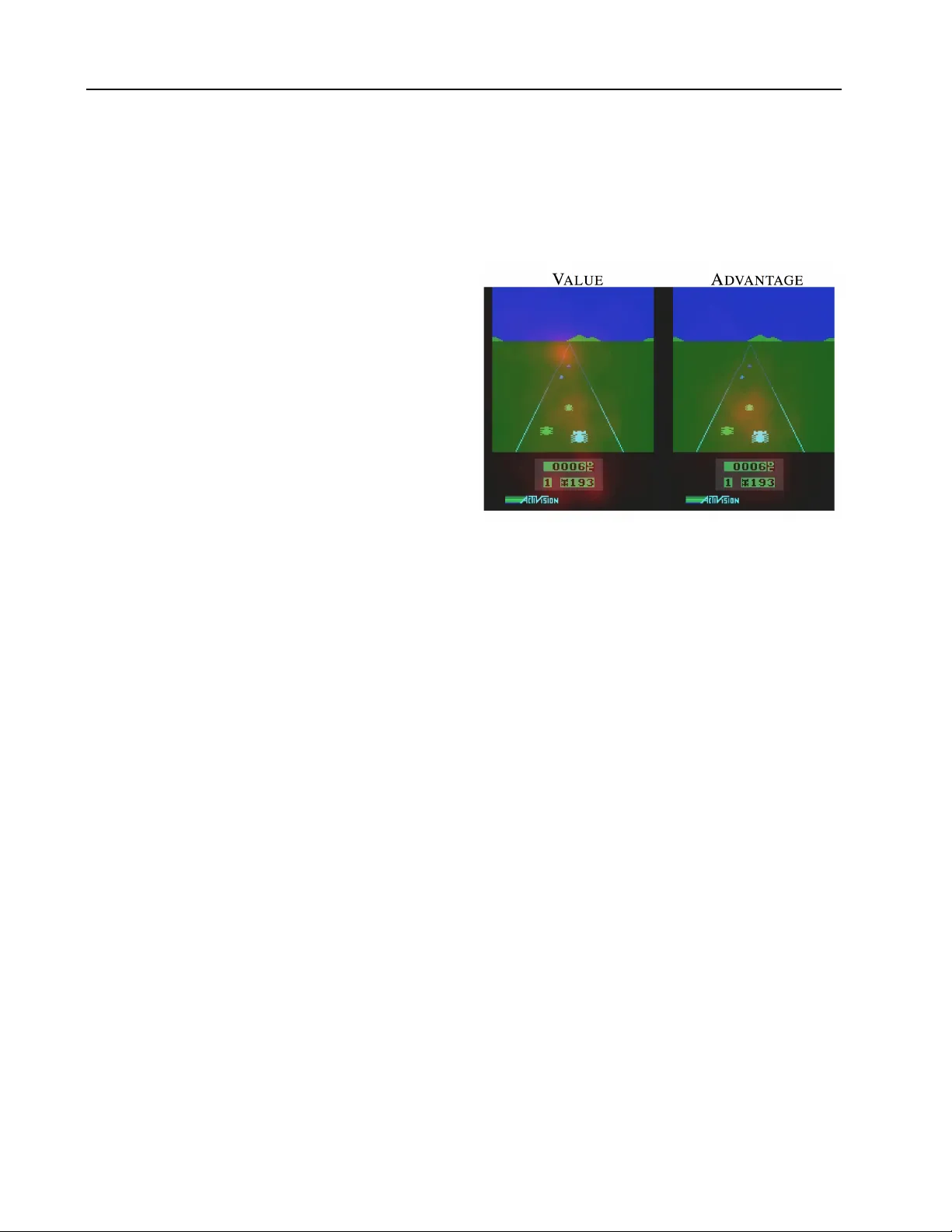

The Mythos of Model Interpr etability Zachary C. Lipton 1 Abstract Supervised machine learning models boast re- markable predicti ve capabilities. But can you trust your model? W ill it work in deployment? What else can it tell you about the world? W e want models to be not only good, but inter- pretable. And yet the task of interpr etation ap- pears underspecified. Papers pro vide div erse and sometimes non-ov erlapping motiv ations for in- terpretability , and offer myriad notions of what attributes render models interpretable. Despite this ambiguity , many papers proclaim inter- pretability axiomatically , absent further explana- tion. In this paper , we seek to refine the dis- course on interpretability . First, we examine the motiv ations underlying interest in interpretabil- ity , finding them to be div erse and occasionally discordant. Then, we address model properties and techniques thought to confer interpretability , identifying transparency to humans and post-hoc explanations as competing notions. Throughout, we discuss the feasibility and desirability of dif- ferent notions, and question the oft-made asser- tions that linear models are interpretable and that deep neural networks are not. 1. Introduction As machine learning models penetrate critical areas lik e medicine, the criminal justice system, and financial mar- kets, the inability of humans to understand these mod- els seems problematic ( Caruana et al. , 2015 ; Kim , 2015 ). Some suggest model interpr etability as a remedy , but few articulate precisely what interpretability means or why it is important. Despite the absence of a definition, papers fre- quently make claims about the interpretability of v arious models. From this, we might conclude that either: (i) the definition of interpretability is uni versally agreed upon, but 1 Univ ersity of California, San Diego. Correspondence to: Zachary C. Lipton < zlipton@cs.ucsd.edu > . 2016 ICML W orkshop on Human Interpretability in Machine Learning (WHI 2016), New Y ork, NY , USA. Copyright by the author(s). no one has managed to set it in writing, or (ii) the term in- terpretability is ill-defined, and thus claims regarding inter - pretability of various models may e xhibit a quasi-scientific character . Our in vestigation of the literature suggests the latter to be the case. Both the moti ves for interpretability and the technical descriptions of interpretable models are div erse and occasionally discordant, suggesting that inter- pretability refers to more than one concept. In this paper, we seek to clarify both, suggesting that interpr etability is not a monolithic concept, but in fact reflects sev eral dis- tinct ideas. W e hope, through this critical analysis, to bring focus to the dialogue. Here, we mainly consider supervised learning and not other machine learning paradigms, such as reinforcement learn- ing and interactiv e learning. This scope deriv es from our original interest in the oft-made claim that linear models are preferable to deep neural networks on account of their interpretability ( Lou et al. , 2012 ). T o gain conceptual clar- ity , we ask the refining questions: What is interpretability and why is it important? Broadening the scope of discus- sion seems counterproductive with respect to our aims. For research inv estigating interpretability in the context of rein- forcement learning, we point to ( Dragan et al. , 2013 ) which studies the human interpretability of robot actions. By the same reasoning, we do not delve as much as other papers might into Bayesian methods, ho wev er try to draw these connections where appropriate. T o ground any discussion of what might constitute inter- pretability , we first consider the v arious desiderata put forth in work addressing the topic (expanded in § 2 ). Many pa- pers propose interpretability as a means to engender trust ( Kim , 2015 ; Ridgeway et al. , 1998 ). But what is trust? Does it refer to faith in a model’ s performance ( Ribeiro et al. , 2016 ), robustness, or to some other property of the decisions it makes? Does interpretability simply mean a low-le vel mechanistic understanding of our models? If so does it apply to the features, parameters, models, or training algorithms? Other papers suggest a connection between an interpretable model and one which uncovers causal structure in data ( Athey & Imbens , 2015 ). The legal notion of a right to explanation offers yet another lens on interpretability . Often, our machine learning problem formulations are im- The Mythos of Model Interpr etability perfect matches for the real-life tasks they are meant to solve. This can happen when simplified optimization ob- jectiv es fail to capture our more comple x real-life goals. Consider medical research with longitudinal data. Our real goal may be to discover potentially causal associations, as with smoking and cancer ( W ang et al. , 1999 ). But the opti- mization objective for most supervised learning models is simply to minimize error , a feat that might be achieved in a purely correlativ e fashion. Another such diver gence of real-life and machine learning problem formulations emerges when the off-line training data for a supervised learner is not perfectly representative of the likely deployment en vironment. For example, the en vironment is typically not stationary . This is the case for product recommendation, as new products are introduced and preferences for some items shift daily . In more ex- treme cases, actions influenced by a model may alter the en vironment, in validating future predictions. Discussions of interpretability sometimes suggest that hu- man decision-makers are themselves interpretable because they can explain their actions ( Ridgew ay et al. , 1998 ). But precisely what notion of interpretability do these expla- nations satisfy? The y seem unlikely to clarify the mech- anisms or the precise algorithms by which brains work. Nev ertheless, the information conferred by an interpreta- tion may be useful. Thus, one purpose of interpretations may be to con ve y useful information of any kind. After addressing the desiderata of interpretability , we con- sider what properties of models might render them in- terpretable (expanded in § 3 ). Some papers equate in- terpretability with understandability or intelligibility ( Lou et al. , 2013 ), i.e., that we can grasp how the models work . In these papers, understandable models are some- times called transpar ent , while incomprehensible models are called black boxes . But what constitutes transparenc y? W e might look to the algorithm itself. W ill it con ver ge? Does it produce a unique solution? Or we might look to its parameters: do we understand what each represents? Al- ternativ ely , we could consider the model’ s complexity . Is it simple enough to be examined all at once by a human? Other papers in vestigate so-called post-hoc interpretations. These interpretations might explain predictions without elucidating the mechanisms by which models work. Ex- amples of post-hoc interpretations include the verbal ex- planations produced by people or the saliency maps used to analyze deep neural networks. Thus, humans decisions might admit post-hoc interpretability despite the black box nature of human brains, re vealing a contradiction between two popular notions of interpretability . 2. Desiderata of Interpr etability Research At present, interpretability has no formal technical mean- ing. One aim of this paper is to propose more specific def- initions. Before we can determine which meanings might be appropriate, we must ask what the real-world objecti ves of interpretability research are. In this section we spell out the various desiderata of interpretability research through the lens of the literature. While these desiderata are diverse, it might be instructive to first consider a common thread that persists throughout the literature: The demand for interpretability arises when there is a mismatch between the formal objecti ves of super - vised learning (test set predictive performance) and the real world costs in a deployment setting. Evaluation Metric Interpretation Figure 1. T ypically , ev aluation metrics require only predictions and gr ound truth labels. When stakeholders additionally demand interpr etability , we might infer the existence of desiderata that cannot be captured in this fashion. Consider that most common ev aluation metrics for su- pervised learning require only predictions, together with ground truth, to produce a score. These metrics can be be assessed for ev ery supervised learning model. So the very desire for an interpretation suggests that in some sce- narios, predictions alone and metrics calculated on these predictions do not suffice to characterize the model (Figure 1 ). W e should then ask, what are these other desiderata and under what circumstances are they sought? Howe ver incon veniently , it turns out that many situations arise when our real world objecti ves are difficult to en- code as simple real-valued functions. For example, an al- gorithm for making hiring decisions should simultaneously optimize producti vity , ethics, and legality . But typically , ethics and legality cannot be directly optimized. The prob- lem can also arise when the dynamics of the deployment en vironment dif fer from the training environment. In all cases, interpr etations serve those objectives that we deem important but struggle to model formally . The Mythos of Model Interpr etability 2.1. T rust Some papers moti vate interpretability by suggesting it to be prerequisite for trust ( Kim , 2015 ; Ribeiro et al. , 2016 ). But what is trust? Is it simply confidence that a model will per- form well? If so, a sufficiently accurate model should be demonstrably trustworthy and interpretability would serve no purpose. Trust might also be defined subjecti vely . For example, a person might feel more at ease with a well- understood model, e ven if this understanding served no ob- vious purpose. Alternativ ely , when the training and deploy- ment objecti ves di ver ge, trust might denote confidence that the model will perform well with respect to the real objec- tiv es and scenarios. For example, consider the growing use of machine learning models to forecast crime rates for purposes of allocating police of ficers. W e may trust the model to make accurate predictions but not to account for racial biases in the train- ing data for the model’ s own ef fect in perpetuating a cy- cle of incarceration by over -policing some neighborhoods. Another sense in which we might trust a machine learn- ing model might be that we feel comfortable relinquishing control to it. In this sense, we might care not only about how often a model is right but also for which examples it is right . If the model tends to make mistakes in regions of input space where humans also make mistakes, and is typically accurate when humans are accurate, then it may be considered trustworthy in the sense that there is no ex- pected cost of relinquishing control. But if a model tends to make mistakes for inputs that humans classify accurately , then there may always be an advantage to maintaining hu- man supervision of the algorithms. 2.2. Causality Although supervised learning models are only optimized directly to make associations, researchers often use them in the hope of inferring properties or generating h ypotheses about the natural world. For example, a simple regression model might rev eal a strong association between thalido- mide use and birth defects or smoking and lung cancer ( W ang et al. , 1999 ). The associations learned by supervised learning algorithms are not guaranteed to reflect causal relationships. There could always exist unobserved causes responsible for both associated v ariables. One might hope, howe ver , that by interpreting supervised learning models, we could gener- ate hypotheses that scientists could then test e xperimen- tally . Liu et al. ( 2005 ), for e xample, emphasizes regression trees and Bayesian neural networks, suggesting that mod- els are interpretable and thus better able to provide clues about the causal relationships between physiologic signals and affecti ve states. The task of inferring causal relation- ships from observational data has been extensi vely studied ( Pearl , 2009 ). But these methods tend to rely on strong assumptions of prior knowledge. 2.3. T ransferability T ypically we choose training and test data by randomly par- titioning e xamples from the same distribution. W e then judge a model’ s generalization error by the gap between its performance on training and test data. Howe ver , hu- mans exhibit a far richer capacity to generalize, transfer - ring learned skills to unfamiliar situations. W e already use machine learning algorithms in situations where such abil- ities are required, such as when the en vironment is non- stationary . W e also deploy models in settings where their use might alter the en vironment, in v alidating their future predictions. Along these lines, Caruana et al. ( 2015 ) de- scribe a model trained to predict probability of death from pneumonia that assigned less risk to patients if they also had asthma. In fact, asthma was predictive of lo wer risk of death. This o wed to the more aggressive treatment these patients receiv ed. But if the model were deployed to aid in triage, these patients would then receiv e less aggressi ve treatment, in validating the model. Even worse, we could imagine situations, like machine learning for security , where the en vironment might be ac- tiv ely adversarial. Consider the recently discov ered sus- ceptibility of con volutional neural networks (CNNs) to ad- versarial examples. The CNNs were made to misclassify images that were imperceptibly (to a human) perturbed ( Szegedy et al. , 2013 ). Of course, this isn’t overfitting in the classical sense. The results achiev ed on training data generalize well to i.i.d. test data. But these are mistakes a human wouldn’ t make and we would prefer models not to make these mistakes either . Already , supervised learning models are regularly subject to such adversarial manipulation. Consider the models used to generate credit ratings, scores that when higher should signify a higher probability that an indi vidual re- pays a loan. According to their own technical report, FICO trains credit models using logistic regression ( Fair Isaac Corporation , 2011 ), specifically citing interpretability as a motiv ation for the choice of model. Features include dummy variables representing binned v alues for average age of accounts, debt ratio, and the number of late pay- ments, and the number of accounts in good standing. Sev eral of these factors can be manipulated at will by credit-seekers. F or example, one’ s debt ratio can be im- prov ed simply by requesting periodic increases to credit lines while keeping spending patterns constant. Similarly , the total number of accounts can be increased by simply applying for new accounts, when the probability of accep- tance is reasonably high. Indeed, FICO and Experian both acknowledge that credit ratings can be manipulated, ev en The Mythos of Model Interpr etability suggesting guides for improving one’ s credit rating. These rating improvement strate gies do not fundamentally change one’ s underlying ability to pay a debt. The fact that indi vid- uals actively and successfully game the rating system may in validate its predicti ve po wer . 2.4. Informati veness Sometimes we apply decision theory to the outputs of su- pervised models to take actions in the real world. Howe ver , in another common use paradigm, the supervised model is used instead to provide information to human decision makers, a setting considered by Kim et al. ( 2015 ); Huys- mans et al. ( 2011 ). While the machine learning objec- tiv e might be to reduce error, the real-world purpose is to provide useful information. The most obvious way that a model conv eys information is via its outputs. Howe ver , it may be possible via some procedure to conv ey additional information to the human decision-maker . By analogy , we might consider a PhD student seeking ad- vice from her advisor . Suppose the student asks what venue would best suit a paper . The advisor could simply name one conference, but this may not be especially useful. Even if the advisor is reasonably intelligent, the terse reply doesn’ t enable the student to meaningfully combine the advisor’ s knowledge with her o wn. An interpretation may prove informativ e ev en without shedding light on a model’ s inner workings. F or exam- ple, a diagnosis model might provide intuition to a human decision-maker by pointing to similar cases in support of a diagnostic decision. In some cases, we train a supervised learning model, but our real task more closely resembles unsupervised learning. Here, our real goal is to explore the data and the objectiv e serves only as weak supervision . 2.5. Fair and Ethical Decision-Making At present, politicians, journalists and researchers have expressed concern that we must produce interpretations for the purpose of assessing whether decisions produced automatically by algorithms conform to ethical standards ( Goodman & Flaxman , 2016 ). The concerns are timely: Algorithmic decision-making mediates more and more of our interactions, influencing our social experiences, the ne ws we see, our finances, and our career opportunities. W e task computer programs with approving lines of credit, curating news, and filtering job applicants. Courts e ven deplo y computerized algorithms to predict risk of recidi vism, the probability that an individual relapses into criminal beha vior ( Chouldechova , 2016 ). It seems likely that this trend will only accelerate as break- throughs in artificial intelligence rapidly broaden the capa- bilities of software. Recidivism predictions are already used to determine who to release and who to detain, raising ethical concerns. 1 How can we be sure that predictions do not discriminate on the basis of race? Conv entional ev aluation metrics such as accuracy or A UC of fer little assurance that a model and via decision theory , its actions, beha ve acceptably . Thus de- mands for fairness often lead to demands for interpr etable models. New regulations in the European Union propose that indi- viduals affected by algorithmic decisions ha ve a right to explanation ( Goodman & Flaxman , 2016 ). Precisely what form such an explanation might take or how such an ex- planation could be proven correct and not merely appeas- ing remain open questions. Moreover the same regulations suggest that algorithmic decisions should be contestable . So in order for such explanations to be useful it seems the y must (i) present clear reasoning based on falsifiable propo- sitions and (ii) offer some natural way of contesting these propositions and modifying the decisions appropriately if they are falsified. 3. Properties of Inter pretable Models W e turn now to consider the techniques and model proper- ties that are proposed either to enable or to comprise inter- pr etations . These broadly fall into two categories. The first relates to transpar ency , i.e., how does the model work? The second consists of post-hoc explanations , i.e., what else can the model tell me? This division is a useful organi- zationally , but we note that it is not absolute. For example post-hoc analysis techniques attempt to uncov er the signif- icance of various of parameters, an aim we group under the heading of transparency . 3.1. T ransparency Informally , transpar ency is the opposite of opacity or blackbox-ness . It connotes some sense of understanding the mechanism by which the model works. W e consider transparency at the level of the entire model ( simulatabil- ity ), at the le vel of indi vidual components (e.g. parameters) ( decomposability ), and at the lev el of the training algorithm ( algorithmic transpar ency ). 3 . 1 . 1 . S I M U L A TA B I L I T Y In the strictest sense, we might call a model transparent if a person can contemplate the entire model at once. This definition suggests that an interpretable model is a simple model. W e might think, for example that for a model to be fully understood, a human should be able to take the 1 It seems reasonable to argue that under most circumstances, risk-based punishment is fundamentally unethical, b ut this discus- sion requires exceeds the present scope. The Mythos of Model Interpr etability input data together with the parameters of the model and in r easonable time step through ev ery calculation required to produce a prediction. This accords with the common claim that sparse linear models, as produced by lasso regression ( T ibshirani , 1996 ), are more interpretable than dense linear models learned on the same inputs. Ribeiro et al. ( 2016 ) also adopt this notion of interpretability , suggesting that an interpretable model is one that “can be readily presented to the user with visual or textual artifacts. ” For some models, such as decision trees, the size of the model (total number of nodes) may gro w much faster than the time to perform inference (length of pass from root to leaf). This suggests that simulatability may admit two sub- types, one based on the total size of the model and another based on the computation required to perform inference. Fixing a notion of simulatability , the quantity denoted by r easonable is subjectiv e. But clearly , given the limited capacity of human cognition, this ambiguity might only span several orders of magnitude. In this light, we suggest that neither linear models, rule-based systems, nor deci- sion trees are intrinsically interpretable. Sufficiently high- dimensional models, unwieldy rule lists, and deep decision trees could all be considered less transparent than compar- ativ ely compact neural networks. 3 . 1 . 2 . D E C O M P O S A B I L I T Y A second notion of transparency might be that each part of the model - each input, parameter , and calculation - admits an intuitive explanation. This accords with the property of intelligibility as described by ( Lou et al. , 2012 ). For exam- ple, each node in a decision tree might correspond to a plain text description (e.g. all patients with diastolic blood pr es- sur e over 150 ). Similarly , the parameters of a linear model could be described as representing strengths of association between each feature and the label. Note that this notion of interpretability requires that in- puts themselves be indi vidually interpretable, disqualifying some models with highly engineered or anonymous fea- tures. While this notion is popular , we shouldn’t accept it blindly . The weights of a linear model might seem intuiti ve, but they can be fragile with respect to feature selection and pre-processing. For example, associations between flu risk and vaccination might be positive or negati ve depending on whether the feature set includes indicators of old age, infancy , or immunodeficiency . 3 . 1 . 3 . A L G O R I T H M I C T R A N S PA R E N C Y A final notion of transparency might apply at the level of the learning algorithm itself. For example, in the case of linear models, we understand the shape of the error surface. W e can prove that training will con verge to a unique solu- tion, e ven for previously unseen datasets. This may gi ve some confidence that the model might behav e in an online setting requiring programmatic retraining on pre viously unseen data. On the other hand, modern deep learning methods lack this sort of algorithmic transparency . While the heuristic optimization procedures for neural networks are demonstrably powerful, we don’t understand ho w they work, and at present cannot guarantee a priori that they will work on ne w problems. Note, howe ver , that humans exhibit none of these forms of transparency . 3.2. Post-hoc Inter pretability Post-hoc interpretability presents a distinct approach to e x- tracting information from learned models. While post- hoc interpretations often do not elucidate precisely how a model works, they may nonetheless confer useful infor - mation for practitioners and end users of machine learn- ing. Some common approaches to post-hoc interpreta- tions include natural language explanations, visualizations of learned representations or models, and explanations by example (e.g. this tumor is classified as malignant because to the model it looks a lot like these other tumors ). T o the extent that we might consider humans to be inter- pretable, it is this sort of interpretability that applies. For all we know , the processes by which we humans make de- cisions and those by which we explain them may be dis- tinct. One advantage of this concept of interpretability is that we can interpret opaque models after-the-fact, without sacrificing predictiv e performance. 3 . 2 . 1 . T E X T E X P L A N A T I O N S Humans often justify decisions verbally . Similarly , we might train one model to generate predictions and a sep- arate model, such as a recurrent neural network language model, to generate an e xplanation. Such an approach is taken in a line of work by Krening et al. ( 2016 ). They pro- pose a system in which one model (a reinforcement learner) chooses actions to optimize cumulativ e discounted return. They train another model to map a model’ s state represen- tation onto verbal explanations of strategy . These explana- tions are trained to maximize the likelihood of previously observed ground truth explanations from human players, and may not f aithfully describe the agent’ s decisions, ho w- ev er plausible they appear . W e note a connection between this approach and recent work on neural image caption- ing in which the representations learned by a discriminativ e con volutional neural network (trained for image classifica- tion) are co-opted by a second model to generate captions. These captions might be regarded as interpretations that ac- company classifications. In work on recommender systems, McAuley & Leskov ec ( 2013 ) use text to explain the decisions of a latent factor The Mythos of Model Interpr etability model. Their method consists of simultaneously training a latent factor model for rating prediction and a topic model for product re views. During training they alternate between decreasing the squared error on rating prediction and in- creasing the lik elihood of re vie w text. The models are con- nected because they use normalized latent factors as topic distributions. In other words, latent factors are regularized such that they are also good at explaining the topic distri- butions in revie w text. The authors then explain user-item compatibility by examining the top w ords in the topics cor- responding to matching components of their latent factors. Note that the practice of interpreting topic models by pre- senting the top words is itself a post-hoc interpretation tech- nique that has in vited scrutiny ( Chang et al. , 2009 ). 3 . 2 . 2 . V I S U A L I Z A T I O N Another common approach to generating post-hoc interpre- tations is to render visualizations in the hope of determin- ing qualitativ ely what a model has learned. One popular approach is to visualize high-dimensional distributed rep- resentations with t-SNE ( V an der Maaten & Hinton , 2008 ), a technique that renders 2D visualizations in which nearby data points are likely to appear close together . Mordvintsev et al. ( 2015 ) attempt to explain what an im- age classification network has learned by altering the input through gradient descent to enhance the activ ations of cer- tain nodes selected from the hidden layers. An inspection of the perturbed inputs can giv e clues to what the model has learned. Likely because the model was trained on a large corpus of animal images, they observed that enhanc- ing some nodes caused the dog faces to appear throughout the input image. In the computer vision community , similar approaches hav e been explored to in vestig ate what information is re- tained at various layers of a neural network. Mahendran & V edaldi ( 2015 ) pass an image through a discriminativ e con volutional neural network to generate a representation. They then demonstrate that the original image can be re- cov ered with high fidelity ev en from reasonably high-lev el representations (lev el 6 of an AlexNet) by performing gra- dient descent on randomly initialized pixels. 3 . 2 . 3 . L O C A L E X P L A N A T I O N S While it may be difficult to succinctly describe the full mapping learned by a neural network, some papers focus instead on explaining what a neural network depends on locally . One popular approach for deep neural nets is to compute a saliency map. T ypically , they take the gradient of the output corresponding to the correct class with re- spect to a gi ven input vector . For images, this gradient can be applied as a mask (Figure 2 ), highlighting regions of the input that, if changed, would most influence the output ( Si- monyan et al. , 2013 ; W ang et al. , 2015 ). Note that these explanations of what a model is focusing on may be misleading. The saliency map is a local expla- nation only . Once you move a single pixel, you may get a very different saliency map. This contrasts with linear models, which model global relationships between inputs and outputs. Figure 2. Saliency map by W ang et al. ( 2015 ) to conv ey intuition ov er what the value function and advantage function portions of their deep Q-network are focusing on. Another attempt at local explanations is made by Ribeiro et al. ( 2016 ). In this work, the authors explain the deci- sions of an y model in a local region near a particular point, by learning a separate sparse linear model to explain the decisions of the first. 3 . 2 . 4 . E X P L A N A T I O N B Y E X A M P L E One post-hoc mechanism for explaining the decisions of a model might be to report (in addition to predictions) which other examples the model considers to be most similar , a method suggested by Caruana et al. ( 1999 ). After training a deep neural network or latent variable model for a dis- criminativ e task, we then hav e access not only to predic- tions but also to the learned representations. Then, for any example, in addition to generating a prediction, we can use the acti vations of the hidden layers to identify the k -nearest neighbors based on the proximity in the space learned by the model. This sort of explanation by example has prece- dent in how humans sometimes justify actions by analogy . For example, doctors often refer to case studies to support a planned treatment protocol. In the neural network literature, Mikolov et al. ( 2013 ) use such an approach to e xamine the learned representations of words after word2vec training. While their model is trained for discriminative skip-gram prediction, to examine what relationships the model has learned, they enumerate near- The Mythos of Model Interpr etability est neighbors of words based on distances calculated in the latent space. W e also point to related work in Bayesian methods: Kim et al. ( 2014 ) and Doshi-V elez et al. ( 2015 ) in vestigate cased-base reasoning approaches for interpret- ing generativ e models. 4. Discussion The concept of interpretability appears simultaneously im- portant and slippery . Earlier, we analyzed both the motiv a- tions for interpretability and some attempts by the research community to confer it. In this discussion, we consider the implications of our analysis and offer sev eral takeaways to the reader . 4.1. Linear models are not strictly mor e interpretable than deep neural networks Despite this claim’ s enduring popularity , its truth content varies depending on what notion of interpretability we em- ploy . W ith respect to algorithmic transpar ency , this claim seems uncontroversial, but giv en high dimensional or hea v- ily engineered features, linear models lose simulatability or decomposability , respectiv ely . When choosing between linear and deep models, we must often make a trade-off between algorithic transpar ency and decomposability . This is because deep neural networks tend to operate on raw or lightly processed features. So if nothing else, the features are intuitively meaningful, and post-hoc reasoning is sensible. Howe ver , in order to get comparable performance, linear models often must operate on heavily hand-engineered features. Lipton et al. ( 2016 ) demonstrates such a case where linear models can only ap- proach the performance of RNNs at the cost of decompos- ability . For some kinds of post-hoc interpretation, deep neural net- works exhibit a clear adv antage. They learn rich represen- tations that can be visualized, verbalized, or used for clus- tering. Considering the desiderata for interpretability , lin- ear models appear to have a better track record for studying the natural world but we do not kno w of a theoretical reason why this must be so. Conceiv ably , post-hoc interpretations could prov e useful in similar scenarios. 4.2. Claims about interpr etability must be qualified As demonstrated in this paper , the term does not reference a monolithic concept. T o be meaningful, any assertion regarding interpretability should fix a specific definition. If the model satisfies a form of transparency , this can be shown directly . For post-hoc interpretability , papers ought to fix a clear objectiv e and demonstrate evidence that the offered form of interpretation achie ves it. 4.3. In some cases, transparency may be at odds with the broader objecti ves of AI Some arguments against black-box algorithms appear to preclude any model that could match or surpass our abil- ities on complex tasks. As a concrete example, the short- term goal of building trust with doctors by developing transparent models might clash with the longer-term goal of improving health care. W e should be careful when gi v- ing up predictive power , that the desire for transparency is justified and isn’t simply a concession to institutional bi- ases against ne w methods. 4.4. Post-hoc inter pretations can potentially mislead W e caution against blindly embracing post-hoc notions of interpretability , especially when optimized to placate sub- jectiv e demands. In such cases, one might - deliberately or not - optimize an algorithm to present misleading b ut plau- sible explanations. As humans, we are known to engage in this behavior , as evidenced in hiring practices and college admissions. Several journalists and social scientists have demonstrated that acceptance decisions attributed to virtues like leadership or originality often disguise racial or gender discrimination ( Mounk , 2014 ). In the rush to gain accep- tance for machine learning and to emulate human intelli- gence, we should be careful not to reproduce pathological behavior at scale. 4.5. Future W ork W e see several promising directions for future work. First, for some problems, the discrepancy between real-life and machine learning objectives could be mitigated by develop- ing richer loss functions and performance metrics. Exem- plars of this direction include research on sparsity-inducing regularizers and cost-sensiti ve learning. Second, we can expand this analysis to other ML paradigms such as rein- forcement learning. Reinforcement learners can address some (but not all) of the objecti ves of interpretability re- search by directly modeling interaction between models and environments. Howev er , this capability may come at the cost of allowing models to e xperiment in the world, in- curring real consequences. Notably , reinforcement learners are able to learn causal relationships between their actions and real world impacts. Howe ver , like supervised learn- ing, reinforcement learning relies on a well-defined scalar objectiv e. For problems like fairness, where we struggle to verbalize precise definitions of success, a shift of ML paradigm is unlikely to eliminate the problems we face. 5. Contributions This paper identifies an important but under-recognized problem: the term interpr etability holds no agreed upon The Mythos of Model Interpr etability meaning, and yet machine learning conferences fre- quently publish papers which wield the term in a quasi- mathematical way . For these papers to be meaningful and for this field to progress, we must critically engage the is- sue of problem formulation. Moreover , we identify the in- compatibility of most presently inv estigated interpretability techniques with pressing problems facing machine learning in the wild. For example, little in the published work on model intepretability addresses the idea of contestability . This paper makes a first step to wards providing a compre- hensiv e taxonomy of both the desiderata and methods in interpretability research. W e argue that the paucity of criti- cal writing in the machine learning community is problem- atic. When we hav e solid problem formulations, flaws in methodology can be addressed by articulating new meth- ods. But when the problem formulation itself is fla wed, neither algorithms nor experiments are suf ficient to address the underlying problem. Moreov er , as machine learning continues to exert influence upon society , we must be sure that we are solving the right problems. While lawmakers and policymak ers must in- creasingly consider the impact of machine learning, the re- sponsibility to account for the impact of machine learning and to ensure its alignment with societal desiderata must ultimately be shared by practitioners and researchers in the field. Thus, we belie ve that such critical writing ought to hav e a voice at machine learning conferences. References Athey , Susan and Imbens, Guido W . Machine learn- ing methods for estimating heterogeneous causal ef fects. Stat , 2015. Caruana, Rich, Kangarloo, Hooshang, Dionisio, JD, Sinha, Usha, and Johnson, David. Case-based explanation of non-case-based learning methods. In Proceedings of the AMIA Symposium , pp. 212, 1999. Caruana, Rich, Lou, Y in, Gehrk e, Johannes, K och, Paul, Sturm, Marc, and Elhadad, No ´ emie. Intelligible models for healthcare: Predicting pneumonia risk and hospital 30-day readmission. In KDD , 2015. Chang, Jonathan, Gerrish, Sean, W ang, Chong, Boyd- Graber , Jordan L, and Blei, David M. Reading tea leav es: How humans interpret topic models. In NIPS , 2009. Chouldechov a, Alexandra. Fair prediction with disparate impact: A study of bias in recidivism prediction instru- ments. , 2016. Doshi-V elez, Finale, W allace, Byron, and Adams, Ryan. Graph-sparse lda: a topic model with structured sparsity . AAAI , 2015. Dragan, Anca D, Lee, Kenton CT , and Srini vasa, Sid- dhartha S. Legibility and predictability of robot motion. In Human-Robot Interaction (HRI), 2013 8th A CM/IEEE International Confer ence on . IEEE, 2013. Fair Isaac Corporation. Introduction to scorecard for fico model builder , 2011. URL http://www. fico.com/en/node/8140?file=7900 . Ac- cessed: 2017-02-22. Goodman, Bryce and Flaxman, Seth. European union reg- ulations on algorithmic decision-making and a” right to explanation”. , 2016. Huysmans, Johan, Dejaeger , Karel, Mues, Christophe, V anthienen, Jan, and Baesens, Bart. An empirical e v alu- ation of the comprehensibility of decision table, tree and rule based predicti ve models. Decision Support Systems , 2011. Kim, Been. Interactive and interpretable machine learning models for human machine collaboration . PhD thesis, Massachusetts Institute of T echnology , 2015. Kim, Been, Rudin, Cynthia, and Shah, Julie A. The Bayesian Case Model: A generati ve approach for case- based reasoning and prototype classification. In NIPS , 2014. Kim, Been, Glassman, Elena, Johnson, Brittney , and Shah, Julie. ibcm: Interacti ve bayesian case model empower- ing humans via intuitiv e interaction. 2015. Krening, Samantha, Harrison, Brent, Feigh, Karen, Isbell, Charles, Riedl, Mark, and Thomaz, Andrea. Learning from explanations using sentiment and advice in rl. IEEE T ransactions on Cognitive and Developmental Systems , 2016. Lipton, Zachary C, Kale, David C, and W etzel, Randall. Modeling missing data in clinical time series with rnns. In Machine Learning for Healthcar e , 2016. Liu, Changchun, Rani, Pramila, and Sarkar , Nilanjan. An empirical study of machine learning techniques for af- fect recognition in human-robot interaction. In Inter - national Confer ence on Intelligent Robots and Systems . IEEE, 2005. Lou, Y in, Caruana, Rich, and Gehrke, Johannes. Intelli- gible models for classification and regression. In KDD , 2012. Lou, Y in, Caruana, Rich, Gehrke, Johannes, and Hooker , Giles. Accurate intelligible models with pairwise inter- actions. In KDD , 2013. The Mythos of Model Interpr etability Mahendran, Aravindh and V edaldi, Andrea. Understanding deep image representations by in verting them. In CVPR , 2015. McAuley , Julian and Leskov ec, Jure. Hidden factors and hidden topics: understanding rating dimensions with re- view te xt. In RecSys . ACM, 2013. Mikolov , T omas, Sutskev er , Ilya, Chen, Kai, Corrado, Greg S, and Dean, Jef f. Distributed representations of words and phrases and their compositionality . In NIPS , pp. 3111–3119, 2013. Mordvintsev , Alexander , Olah, Christopher , and T yka, Mike. Inceptionism: Going deeper into neural networks. Google Resear ch Blo g. Retrieved J une , 2015. Mounk, Y ascha. Is Harvard unfair to asian- americans?, 2014. URL http://www. nytimes.com/2014/11/25/opinion/ is- harvard- unfair- to- asian- americans. html?_r=0 . Pearl, Judea. Causality . Cambridge univ ersity press, 2009. Ribeiro, Marco T ulio, Singh, Sameer , and Guestrin, Carlos. ”why should i trust you?”: Explaining the predictions of any classifier . KDD , 2016. Ridgew ay , Greg, Madigan, David, Richardson, Thomas, and O’Kane, John. Interpretable boosted na ¨ ıve bayes classification. In KDD , 1998. Simonyan, Karen, V edaldi, Andrea, and Zisserman, An- drew . Deep inside con volutional networks: V isual- ising image classification models and saliency maps. arXiv:1312.6034 , 2013. Szegedy , Christian, Zaremba, W ojciech, Sutskev er , Ilya, Bruna, Joan, Erhan, Dumitru, Goodfellow , Ian, and Fer- gus, Rob. Intriguing properties of neural networks. arXiv:1312.6199 , 2013. T ibshirani, Robert. Regression shrinkage and selection via the lasso. Journal of the Royal Statistical Society . Series B (Methodological) , 1996. V an der Maaten, Laurens and Hinton, Geoffrey . V isualizing data using t-sne. JMLR , 2008. W ang, Hui-Xin, Fratiglioni, Laura, Frisoni, Giov anni B, V iitanen, Matti, and Winblad, Bengt. Smoking and the occurence of alzheimer’ s disease: Cross-sectional and longitudinal data in a population-based study . American journal of epidemiology , 1999. W ang, Ziyu, de Freitas, Nando, and Lanctot, Marc. Dueling network architectures for deep reinforcement learning. arXiv:1511.06581 , 2015.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment