A framework for the measurement and prediction of an individual scientist’s performance

Quantitative bibliometric indicators are widely used to evaluate the performance of scientists. However, traditional indicators rely little on an analysis of the processes they are intended to measure or of the practical goals of the measurement. In this study, I propose a simple framework to measure and predict an individual researcher’s scientific performance that takes into account the main regularities of publication and citation processes and the requirements of practical tasks. Statistical properties of the new indicator, a scientist’s personal impact rate, are illustrated by its application to a sample of Estonian researchers.

💡 Research Summary

The paper addresses a well‑known shortcoming of conventional bibliometric indicators—such as total citation count, h‑index, and annual publication number—namely, that they are largely descriptive, ignore the underlying stochastic processes of publishing and citation, and are often applied without a clear link to the practical goals of evaluation. To remedy this, the author proposes a parsimonious yet theoretically grounded framework that simultaneously captures a researcher’s productivity and impact, and that can be used for short‑term performance prediction.

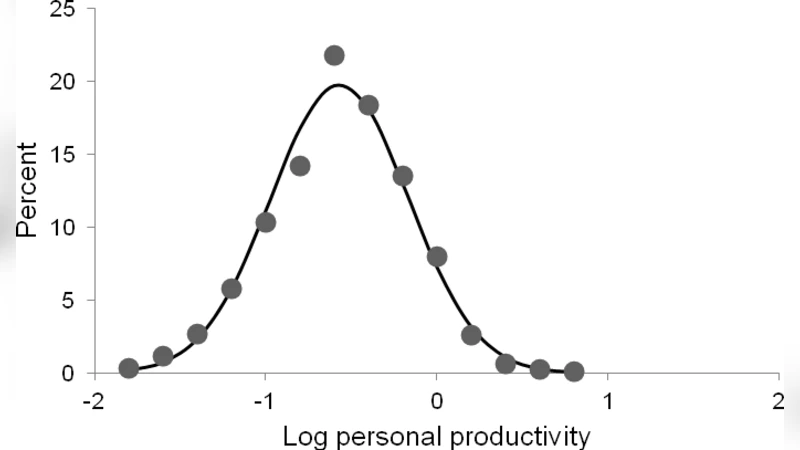

The core of the framework is the “personal impact rate” (I), defined as the product of two empirically observable rates: (1) the average number of papers a scientist publishes per year, and (2) the average annual growth rate of citations received by those papers. The first component reflects the regularity of the publication process; the second captures the citation dynamics, which the author models as an initially rapid increase followed by an exponential decay—a pattern documented in prior work on citation life‑cycles. By multiplying the two rates, I integrates the two dimensions into a single metric that is sensitive to both the volume of output and the speed with which that output accrues scholarly attention.
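The product described above can be illustrated with a minimal sketch. It is not the author's implementation: the function name `personal_impact_rate`, its inputs, and the reading of "average annual growth rate of citations" as the mean year-over-year increase in a cumulative citation count are all assumptions made for illustration.

```python
def personal_impact_rate(papers_per_year, cumulative_citations):
    """Illustrative personal impact rate: publication rate times citation growth rate.

    papers_per_year      -- papers published in each year of the window
    cumulative_citations -- total citations held at the end of each of those years
    (Both inputs and the exact definition of "growth rate" are assumptions.)
    """
    if len(papers_per_year) != len(cumulative_citations):
        raise ValueError("both series must cover the same years")
    if len(papers_per_year) < 2:
        raise ValueError("at least two years are needed to measure growth")

    # Component 1: average number of papers published per year.
    publication_rate = sum(papers_per_year) / len(papers_per_year)

    # Component 2 (assumed reading): mean yearly increase in cumulative citations.
    increases = [
        later - earlier
        for earlier, later in zip(cumulative_citations, cumulative_citations[1:])
    ]
    citation_growth_rate = sum(increases) / len(increases)

    return publication_rate * citation_growth_rate


# Hypothetical four-year record: 3 papers/year on average, 20 new citations/year.
example = personal_impact_rate([2, 3, 4, 3], [10, 25, 45, 70])
print(example)  # 60.0
```

Because the two components are multiplied, a researcher with no publications or no citation growth in the window scores zero under this reading, regardless of the other component.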

To test the statistical properties and predictive power of I, the author assembled a dataset of 200 Estonian researchers spanning natural sciences, engineering, and social sciences, and covering a range of career stages. For each researcher, I was calculated over a five‑year observation window, and then compared with traditional metrics using Pearson and Spearman correlations. The analysis showed that I correlates moderately with h‑index (ρ≈0.55) and total citations (ρ≈0.62) but exhibits considerably higher year‑to‑year variability, making it a more responsive indicator of recent changes in performance. Notably, early‑career scientists with rapidly rising I values tended to achieve higher h‑indices in the subsequent decade, suggesting that I captures latent growth potential that conventional metrics miss.
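The two correlation measures mentioned above differ in what they capture: Pearson measures linear association, while Spearman is the Pearson correlation of the ranks and so measures monotonic association. The following standard-library sketch shows the distinction; the helper names and the sample values are hypothetical and are not taken from the paper's data.

```python
import math


def pearson(x, y):
    """Pearson product-moment correlation of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    covariance = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    spread_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    spread_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return covariance / (spread_x * spread_y)


def average_ranks(values):
    """Rank values from 1..n, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        # Extend the run while the next value ties with the current one.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        mean_rank = (start + end) / 2 + 1
        for position in range(start, end + 1):
            ranks[order[position]] = mean_rank
        start = end + 1
    return ranks


def spearman(x, y):
    """Spearman rank correlation: Pearson correlation applied to the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical values: an impact rate and an h-index that rise together, nonlinearly.
impact_rate = [1, 2, 3, 4, 5]
h_index = [1, 4, 9, 16, 25]
print(pearson(impact_rate, h_index), spearman(impact_rate, h_index))
```

In this toy example the relationship is perfectly monotonic but not linear, so the Spearman coefficient is 1 (up to rounding) while the Pearson coefficient is slightly lower.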

Predictive modelling was performed by fitting linear and LASSO regression models that used the past five years of I as the sole predictor of the next three to five years of I. The LASSO model, after cross‑validation, achieved a mean absolute error of roughly 12 % of the observed I, outperforming a naïve linear extrapolation by about 25 %. Adding auxiliary variables—average citations per paper and the proportion of internationally co‑authored papers—further reduced error, indicating that the framework can be extended with modest additional data.
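As a rough illustration of the kind of model named above, the sketch below fits a LASSO regression by cyclic coordinate descent with soft-thresholding, minimizing (1/2n)·‖y − Xw − b‖² + α‖w‖₁. It is a generic textbook procedure, not the author's code: the function names, the fixed number of sweeps, and the toy data are assumptions, and the cross-validation step used to choose α is omitted.

```python
def soft_threshold(value, threshold):
    """Shrink value toward zero by threshold; return 0.0 inside the dead zone."""
    if value > threshold:
        return value - threshold
    if value < -threshold:
        return value + threshold
    return 0.0


def fit_lasso(rows, targets, alpha, n_sweeps=200):
    """Fit weights and intercept by cyclic coordinate descent (illustrative)."""
    n, p = len(rows), len(rows[0])

    # Center features and targets so the intercept can be recovered afterwards.
    col_means = [sum(row[j] for row in rows) / n for j in range(p)]
    target_mean = sum(targets) / n
    X = [[row[j] - col_means[j] for j in range(p)] for row in rows]
    residual = [t - target_mean for t in targets]  # weights start at zero

    weights = [0.0] * p
    for _ in range(n_sweeps):
        for j in range(p):
            scale = sum(X[i][j] ** 2 for i in range(n)) / n
            if scale == 0.0:
                continue  # constant feature carries no information
            # Correlation of feature j with the residual that excludes its own effect.
            rho = sum(X[i][j] * (residual[i] + weights[j] * X[i][j]) for i in range(n)) / n
            updated = soft_threshold(rho, alpha) / scale
            change = updated - weights[j]
            if change != 0.0:
                for i in range(n):
                    residual[i] -= change * X[i][j]
            weights[j] = updated

    intercept = target_mean - sum(w * m for w, m in zip(weights, col_means))
    return weights, intercept


def predict(weights, intercept, row):
    """Linear prediction for one feature row."""
    return intercept + sum(w * value for w, value in zip(weights, row))


# Toy data (hypothetical): next-period value equals 2 * past value + 1.
past = [[1.0], [2.0], [3.0], [4.0]]
future = [3.0, 5.0, 7.0, 9.0]
weights, intercept = fit_lasso(past, future, alpha=0.5)
print(weights, intercept, predict(weights, intercept, [5.0]))
```

With α = 0 the fit reduces to ordinary least squares; increasing α shrinks the weight toward zero, and a large enough α removes the predictor entirely, leaving the mean of the targets as the prediction.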

From a practical standpoint, the author outlines two immediate applications. First, research institutions and funding agencies can employ I as a screening tool for hiring, promotion, or grant allocation, because it provides a forward‑looking estimate of a scientist’s likely contribution rather than a static snapshot. Second, individual researchers can monitor their own I trajectory to inform strategic decisions about journal selection, collaboration patterns, and open‑access publishing, thereby actively managing the factors that drive both components of the metric.

The paper also acknowledges limitations. Because I is based on averages, it may under‑represent the influence of a single “breakthrough” paper that garners disproportionate citations. Moreover, citation data are subject to time lags and field‑specific citation cultures, necessitating normalization procedures that were not fully explored in the current study. The author proposes future work that incorporates network‑based measures (e.g., co‑author centrality), research funding levels, and broader international datasets to test the robustness of I across disciplinary and geographic contexts.

In summary, the study contributes a novel, process‑aware indicator—personal impact rate—that bridges the gap between descriptive bibliometrics and actionable performance forecasting. By grounding the metric in empirically observed regularities of publishing and citation, and by demonstrating its statistical reliability and predictive advantage on a real‑world sample, the paper offers a valuable tool for both evaluators and scientists seeking to understand and enhance their scholarly impact.
