Item2Vec: Neural Item Embedding for Collaborative Filtering

Many Collaborative Filtering (CF) algorithms are item-based in the sense that they analyze item-item relations in order to produce item similarities. Recently, several works in the field of Natural Language Processing (NLP) suggested to learn a latent representation of words using neural embedding algorithms. Among them, the Skip-gram with Negative Sampling (SGNS), also known as word2vec, was shown to provide state-of-the-art results on various linguistics tasks. In this paper, we show that item-based CF can be cast in the same framework of neural word embedding. Inspired by SGNS, we describe a method we name item2vec for item-based CF that produces embedding for items in a latent space. The method is capable of inferring item-item relations even when user information is not available. We present experimental results that demonstrate the effectiveness of the item2vec method and show it is competitive with SVD.

💡 Research Summary

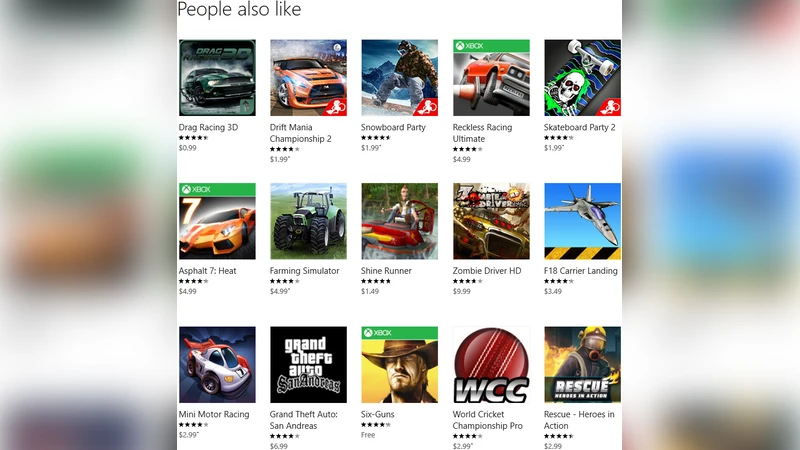

The paper “Item2Vec: Neural Item Embedding for Collaborative Filtering” proposes viewing item‑based collaborative filtering (CF) through the lens of neural word‑embedding techniques that have become standard in natural‑language processing (NLP). The authors observe that the Skip‑gram with Negative Sampling (SGNS) model, popularly known as word2vec, learns dense vector representations of words by assigning high scores to word pairs that appear together in a context window and low scores to randomly sampled word pairs, which pulls co‑occurring words together in the embedding space and pushes unrelated ones apart. By treating each item as a “word” and each user session (e.g., a purchase basket, a click session, a listening session) as a “context”, the same objective can be applied to learn item embeddings.
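The item-as-word, session-as-context mapping can be made concrete with a minimal sketch. It assumes that the whole session acts as the context window, so every ordered pair of distinct items in a session counts as a positive example; the summary does not state the window size, so treat that as an assumption. The function name `positive_pairs` and the toy item names are hypothetical.

```python
from itertools import permutations


def positive_pairs(sessions):
    """Yield (target, context) pairs for every two distinct items sharing a session."""
    for session in sessions:
        # Drop repeated items while keeping first-seen order (assumption: a
        # session is treated as a set, so duplicates add no extra pairs).
        items = list(dict.fromkeys(session))
        # Ordered pairs, so both (a, b) and (b, a) are produced.
        yield from permutations(items, 2)


# Hypothetical toy sessions; the item names are made up for illustration.
sessions = [["guitar", "strings", "tuner"], ["strings", "capo"]]
pairs = list(positive_pairs(sessions))
```

Items that never share a session, such as `guitar` and `capo` here, produce no positive pair; any similarity between them would have to emerge indirectly through shared neighbours like `strings`.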

Methodology

The core idea is to construct a training set of positive item pairs \((i, j)\) that co‑occur in the same session and a set of negative pairs \((i, k)\) drawn from a noise distribution proportional to the 3/4 power of item frequencies (the same heuristic used in word2vec). The SGNS objective is then maximized:

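\[
\sum_{(i, j)} \Big[ \log \sigma\big(u_i^{\top} v_j\big) + \sum_{k} \log \sigma\big(-u_i^{\top} v_k\big) \Big]
\]

Here \(\sigma\) is the logistic sigmoid, \(u_i\) and \(v_j\) are the target and context embeddings of items \(i\) and \(j\), the outer sum runs over the positive pairs \((i, j)\), and the inner sum runs over the negative items \(k\) sampled for each positive pair.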
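The noise distribution and the per-pair objective described in this section can be sketched in plain Python. This is an illustrative sketch under stated assumptions, not the authors' implementation: the function names, the toy sessions, and the two-dimensional vectors are hypothetical, and the separate target vector `u_i` and context vectors `v_j`, `v_k` follow the usual word2vec convention rather than anything spelled out in the summary.

```python
import math
import random
from collections import Counter


def noise_distribution(sessions, power=0.75):
    """Return {item: probability}, proportional to item frequency ** power."""
    counts = Counter(item for session in sessions for item in session)
    weights = {item: count ** power for item, count in counts.items()}
    total = sum(weights.values())
    return {item: weight / total for item, weight in weights.items()}


def log_sigmoid(x):
    """Numerically stable log(sigmoid(x))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))


def dot(a, b):
    """Inner product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def sgns_pair_objective(u_i, v_j, negatives):
    """Objective for one positive pair (i, j) plus its sampled negative vectors."""
    positive_term = log_sigmoid(dot(u_i, v_j))
    negative_term = sum(log_sigmoid(-dot(u_i, v_k)) for v_k in negatives)
    return positive_term + negative_term


# Hypothetical toy data for illustration only.
sessions = [["guitar", "strings", "tuner"], ["strings", "capo"]]
noise = noise_distribution(sessions)

# Draw negative items from the noise distribution (seeded for repeatability).
rng = random.Random(0)
sampled = rng.choices(list(noise), weights=list(noise.values()), k=5)

# The objective is higher when the negative points away from the target.
u = [1.0, 0.0]
good = sgns_pair_objective(u, [1.0, 0.0], [[-1.0, 0.0]])
bad = sgns_pair_objective(u, [1.0, 0.0], [[1.0, 0.0]])
```

Raising frequencies to the 3/4 power flattens the distribution: in the toy data `strings` appears twice as often as `guitar`, yet it is sampled only about 1.68 times as often (2^0.75), so rarer items still show up as negatives.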
