Multigrid with rough coefficients and Multiresolution operator decomposition from Hierarchical Information Games

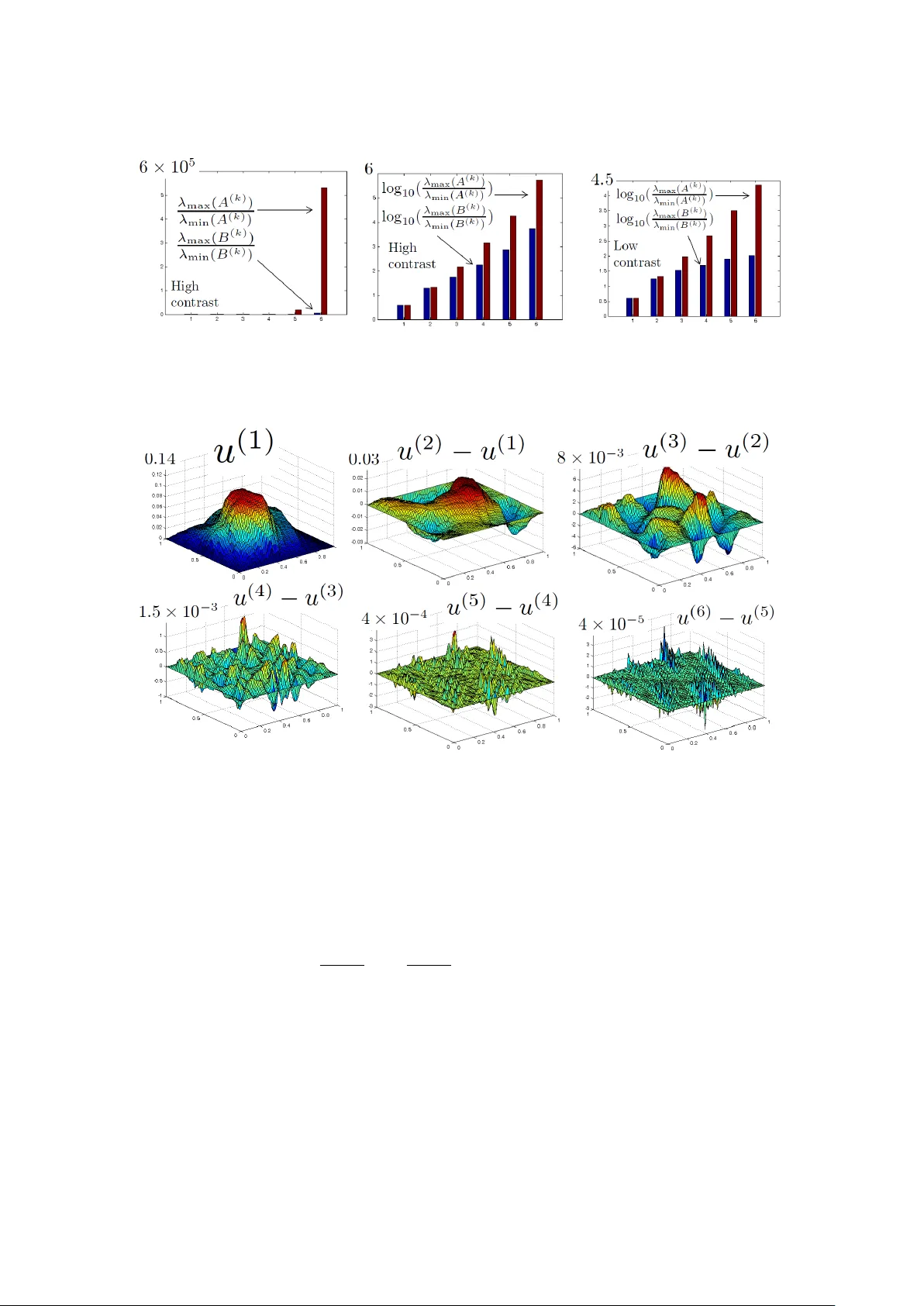

We introduce a near-linear complexity (geometric and meshless/algebraic) multigrid/multiresolution method for PDEs with rough ($L^\infty$) coefficients, with rigorous a priori accuracy and performance estimates. The method is discovered through a deci…

Authors: Houman Owhadi