A Historical Review of Forty Years of Research on CMAC

The Cerebellar Model Articulation Controller (CMAC) is an influential brain-inspired computing model in many relevant fields. Since its inception in the 1970s, the model has been intensively studied, and many variants of the prototype, such as Kernel-CMAC, Self-Organizing Map CMAC, and Linguistic CMAC, have been proposed. This review article focuses on how the CMAC model has been gradually developed and refined to meet the demand for fast, adaptive, and robust control. Two perspectives, CMAC as a neural network and CMAC as a table look-up technique, are presented. Three aspects of the model are discussed: the architecture, learning algorithms, and applications. Finally, some potential directions for future research on this model are suggested.

💡 Research Summary

This paper provides a comprehensive historical review of the Cerebellar Model Articulation Controller (CMAC) spanning four decades since its introduction by J. S. Albus in 1975. The authors frame CMAC from two complementary perspectives: as a neural‑network‑like function approximator and as a table‑lookup mechanism that exploits localized generalization. The review is organized chronologically, covering three major dimensions of the model: architecture, learning algorithms, and applications.

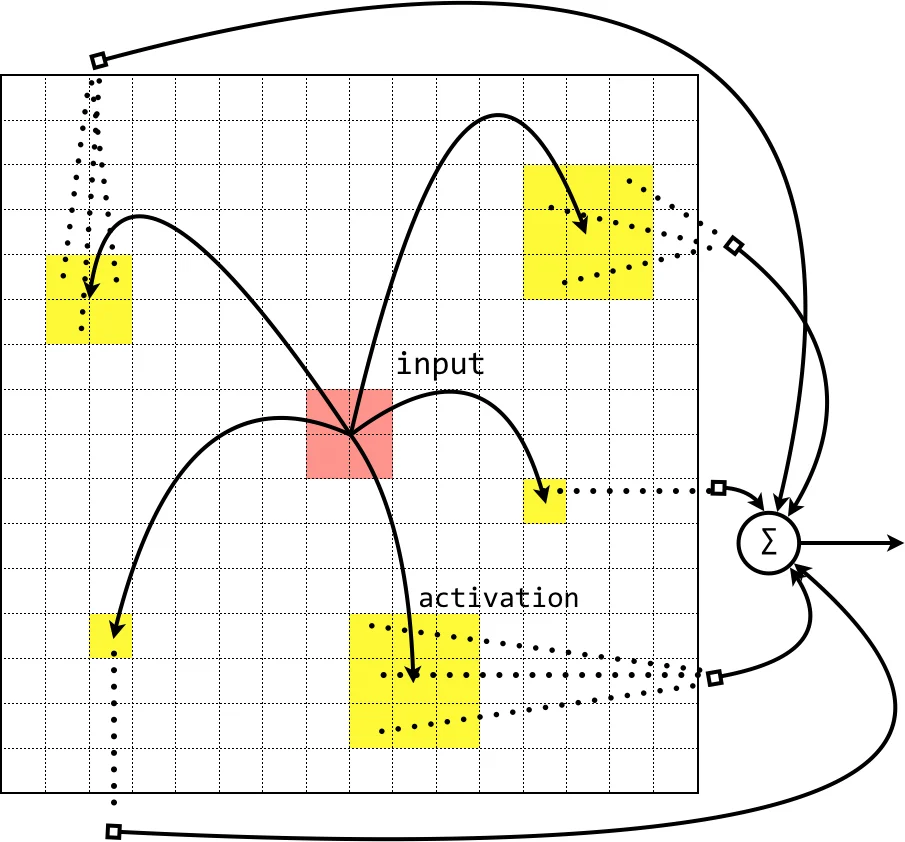

In the architectural section, the original two‑stage mapping (sensory input → association cells → output) is described, emphasizing how the restriction of each association cell to a limited receptive field dramatically speeds up learning compared with fully connected multilayer perceptrons. The authors then trace a series of structural refinements aimed at mitigating the curse of dimensionality and memory explosion. Early solutions such as tile coding and hash‑based address compression are discussed, together with their practical limitations (collision handling, loss of locality). Subsequent innovations include the addition of a “conceptual memory” layer, cascade CMAC (sequentially adding low‑dimensional CMAC modules), hierarchical CMAC (multi‑level voting and gating networks), and high‑order CMAC that replaces binary association cells with spline functions to obtain differentiable outputs. The review also surveys fuzzy and linguistic extensions (FC‑CMAC, LC‑CMAC) that embed membership functions or evidence‑theoretic focal elements, thereby providing probabilistic or fuzzy interpretations of the memory activation. Hybridizations with radial‑basis‑function networks, wavelet networks, and recurrent structures are highlighted as ways to improve performance on time‑varying nonlinear systems.
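To make the table-lookup view concrete, the following is a minimal sketch of how an input vector might be mapped to a small set of active memory cells using overlapping tilings and hashing. It is an illustration under stated assumptions, not the scheme of any particular paper covered by the review: the function name `active_cells`, the parameter values, the uniform diagonal offsets, and the use of Python's built-in `hash` as the compression function are all hypothetical choices.

```python
import numpy as np

def active_cells(x, n_tilings=8, resolution=0.25, table_size=4096):
    """Return one hashed memory index per tiling for the input vector x.

    Assumed (hypothetical) design: every tiling uses the same tile width
    `resolution` and is shifted diagonally by a fraction of that width.
    """
    x = np.asarray(x, dtype=float)
    indices = []
    for t in range(n_tilings):
        # Shift tiling t by t/n_tilings of one tile width in every dimension.
        offset = t * resolution / n_tilings
        coords = np.floor((x + offset) / resolution).astype(int)
        # Compress (tiling id, tile coordinates) into a fixed-size table.
        # Distinct tiles can collide here, which is the locality loss
        # associated with hash-based address compression.
        indices.append(hash((t, *coords.tolist())) % table_size)
    return indices
```

In this sketch, nearby inputs activate mostly the same cells, which is what produces local generalization, while the memory footprint is fixed by `table_size` rather than growing with the input dimensionality.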

The learning‑algorithm portion begins with Albus’s original error‑correction rule, which distributes the output error equally over the activated weights, and then systematically presents a family of enhancements. Adaptive learning‑rate schemes gradually reduce the step size, while weight‑smoothing techniques average activated weights to suppress zig‑zag artifacts in sparse data regimes. Credit‑assignment methods adjust the magnitude of error updates based on how often a memory cell has been activated, effectively giving more “credibility” to frequently used weights. Momentum, neighborhood learning, and fuzzy‑output averaging are incorporated in the MC‑CMAC variant, and a self‑organizing feature map (SOFM) interpretation leads to competitive learning rules (MCMA‑CMAC). Convergence conditions (e.g., a learning rate in the range 0 < α < 2) and theoretical guarantees are briefly outlined.
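The basic update can be sketched as follows for a one‑dimensional input. This is a minimal illustration assuming binary receptive fields, no hashing, and non‑negative inputs that stay inside the table; the class name `TinyCMAC` and all parameter values are hypothetical and are not taken from the reviewed paper.

```python
import numpy as np

class TinyCMAC:
    """Hypothetical 1-D CMAC: one weight per tile, one active tile per tiling."""

    def __init__(self, n_tilings=8, resolution=0.5, n_tiles=32, alpha=0.5):
        self.n_tilings = n_tilings
        self.resolution = resolution
        self.alpha = alpha  # learning rate
        self.w = np.zeros((n_tilings, n_tiles))
        self._rows = np.arange(n_tilings)

    def _active(self, x):
        # Column index of the active tile in each shifted tiling (x >= 0 assumed).
        offsets = self._rows * self.resolution / self.n_tilings
        return np.floor((x + offsets) / self.resolution).astype(int)

    def predict(self, x):
        # Output is the sum of the weights of all active cells.
        return self.w[self._rows, self._active(x)].sum()

    def train(self, x, target):
        # Spread the scaled output error equally over the active cells.
        cols = self._active(x)
        error = target - self.predict(x)
        self.w[self._rows, cols] += self.alpha * error / self.n_tilings
        return error  # error measured before the update
```

In this sketch, presenting the same sample repeatedly multiplies the remaining error by (1 − α) at each step, so the error shrinks only when 0 < α < 2, and α = 1 removes it in a single update. With many different samples the updates interfere through shared cells, which is where the refinements described above come in.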

Application examples demonstrate CMAC’s versatility in real‑time control tasks: printer calibration, robotic arm trajectory tracking, PID‑assisted regulation, and adaptive modeling of nonlinear dynamic plants. The authors note a recurring pattern where CMAC initially serves as an error‑compensator for a primary controller and, after sufficient training, gradually assumes the main control role.

Finally, the paper acknowledges persistent challenges: exponential growth of weight tables with input dimensionality, interpolation errors when data are sparse, and the inherently discrete nature of the original model that precludes analytical derivatives. Future research directions are suggested, including dynamic hashing or multi‑resolution storage schemes, automatic learning‑rate and credibility estimation, hardware acceleration on FPGA/ASIC platforms, and deeper integration of contemporary cerebellar neuroscience findings to inspire more biologically plausible yet computationally efficient variants.

Overall, the review offers a valuable chronological synthesis of CMAC’s evolution, clarifies the trade‑offs among its many extensions, and outlines promising avenues for continued advancement in fast, adaptive, and robust control systems.
