A Robust Asynchronous Newton Method for Massive Scale Computing Systems

Volunteer computing grids offer super-computing levels of computing power at the relatively low cost of operating a server. In previous work, the authors have shown that it is possible to take traditionally iterative evolutionary algorithms and execute them on volunteer computing grids by performing them asynchronously. The asynchronous implementations dramatically increase scalability and decrease the time taken to converge to a solution. Iterative and asynchronous optimization algorithms implemented using MPI on clusters and supercomputers, and BOINC on volunteer computing grids have been packaged together in a framework for generic distributed optimization (FGDO). This paper presents a new extension to FGDO for an asynchronous Newton method (ANM) for local optimization. ANM is resilient to heterogeneous, faulty and unreliable computing nodes and is extremely scalable. Preliminary results show that it can converge to a local optimum significantly faster than conjugate gradient descent does.

💡 Research Summary

The paper introduces an Asynchronous Newton Method (ANM) designed for massive‑scale, heterogeneous computing environments such as volunteer‑based BOINC grids. Building on the authors’ earlier work, which demonstrated that evolutionary algorithms can be executed asynchronously within the Framework for Generic Distributed Optimization (FGDO), this study extends FGDO to support a second‑order local optimizer that tolerates node failures, variable performance, and network latency.

In a conventional Newton scheme the gradient and Hessian (or a Hessian approximation) must be computed on a consistent set of parameters before an update can be applied. In a distributed volunteer grid this synchronisation requirement becomes a bottleneck: slow or unreliable workers stall the whole computation, and any node dropout can halt progress. ANM eliminates this bottleneck by allowing each worker to compute a gradient and a Hessian‑vector product (or a limited‑memory BFGS approximation) on the most recent parameter vector it has received, and to return the result immediately. The central server processes incoming results in the order they arrive, applying a quasi‑Newton update

x←x−α B⁻¹g

where B is the L‑BFGS matrix updated with each new gradient, and α is a dynamically adjusted step size.
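The update above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' FGDO implementation: the names `lbfgs_direction` and `AsyncQuasiNewtonServer` are hypothetical, B⁻¹g is approximated with the standard L‑BFGS two‑loop recursion, α is held fixed rather than adjusted dynamically, and curvature pairs are built from consecutive results in arrival order.

```python
from collections import deque


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def lbfgs_direction(grad, pairs):
    """Two-loop recursion: approximate B^{-1} grad from stored (s, y) pairs."""
    q = list(grad)
    alphas = []
    for s, y in reversed(pairs):                 # newest pair first
        rho = 1.0 / dot(y, s)
        a = rho * dot(s, q)
        alphas.append((a, rho))
        q = [qi - a * yi for qi, yi in zip(q, y)]
    if pairs:                                    # initial scaling: s.y / y.y
        s, y = pairs[-1]
        gamma = dot(s, y) / dot(y, y)
        q = [gamma * qi for qi in q]
    for (s, y), (a, rho) in zip(pairs, reversed(alphas)):  # oldest pair first
        b = rho * dot(y, q)
        q = [qi + (a - b) * si for qi, si in zip(q, s)]
    return q


class AsyncQuasiNewtonServer:
    """Hypothetical server: applies x <- x - alpha * B^{-1} g per arriving result."""

    def __init__(self, x0, step=1.0, memory=10):
        self.x = list(x0)
        self.step = step                         # alpha, fixed in this sketch
        self.pairs = deque(maxlen=memory)        # most recent curvature pairs
        self.last = None                         # (x_eval, grad) of previous result

    def apply_result(self, x_eval, grad):
        # x_eval is the parameter vector the worker actually evaluated,
        # which may be older than self.x.
        if self.last is not None:
            s = [a - b for a, b in zip(x_eval, self.last[0])]
            y = [a - b for a, b in zip(grad, self.last[1])]
            if dot(y, s) > 1e-12:                # keep positive-curvature pairs only
                self.pairs.append((s, y))
        self.last = (list(x_eval), list(grad))
        d = lbfgs_direction(grad, list(self.pairs))
        self.x = [xi - self.step * di for xi, di in zip(self.x, d)]
        return self.x
```

On a one‑dimensional quadratic such as f(x) = 2x², a single stored pair already recovers the exact Newton step, which is a convenient sanity check for the recursion.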

Key technical contributions include:

- Stale‑gradient correction – the server rescales outdated gradients based on the distance between the parameter version used by the worker and the current version.

- Node‑weighting scheme – each worker’s latency and reliability are tracked; faster, more reliable workers receive higher influence in the update.

- Fault‑tolerant recovery – if no updates are received for a configurable interval, the server reuses the last stable parameters and B matrix or triggers a restart, preventing permanent stagnation.
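The three mechanisms listed above might be combined roughly as follows. The summary does not give the actual formulas, so everything here is an assumption made for illustration: the inverse‑gap rule in `staleness_scale`, the reliability‑times‑speed rule in `WorkerStats.weight`, the `decay` and `reference_latency` parameters, and the timeout check in `needs_recovery` are all hypothetical.

```python
def staleness_scale(current_version, worker_version, decay=0.5):
    """Shrink a gradient more the further its parameter version lags (assumed rule)."""
    gap = max(0, current_version - worker_version)
    return 1.0 / (1.0 + decay * gap)


class WorkerStats:
    """Tracks one worker's latency and reliability (hypothetical bookkeeping)."""

    def __init__(self):
        self.returned = 0
        self.failed = 0
        self.mean_latency = 0.0

    def record(self, latency=None):
        # latency=None marks a work unit that never came back.
        if latency is None:
            self.failed += 1
            return
        self.returned += 1
        self.mean_latency += (latency - self.mean_latency) / self.returned

    def weight(self, reference_latency=1.0):
        total = self.returned + self.failed
        if total == 0:
            return 1.0                      # no history yet: neutral weight (assumption)
        reliability = self.returned / total
        speed = reference_latency / (reference_latency + self.mean_latency)
        return reliability * speed


def scaled_gradient(grad, current_version, worker_version, stats):
    """Apply both the staleness correction and the node weight to a gradient."""
    factor = staleness_scale(current_version, worker_version) * stats.weight()
    return [factor * g for g in grad]


def needs_recovery(now, last_update_time, timeout):
    """True when no result has arrived for longer than the configured interval."""
    return (now - last_update_time) > timeout
```

In this sketch a server would call `scaled_gradient` before applying an update and poll `needs_recovery` periodically, falling back to the last stable parameters and B matrix when it returns true.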

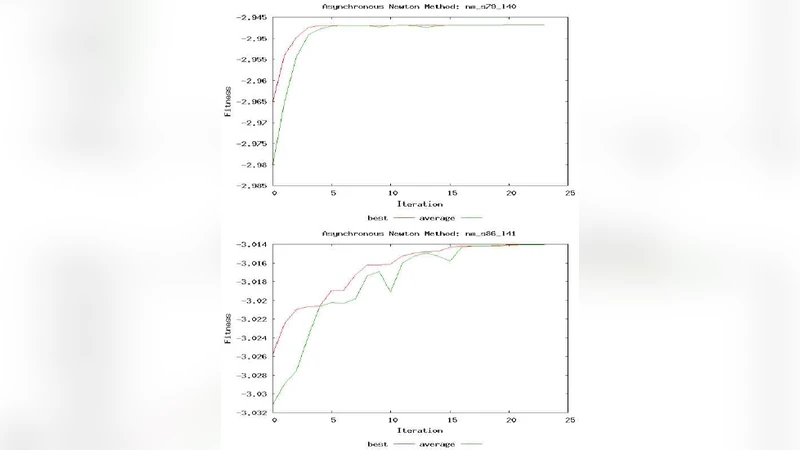

The authors evaluated ANM on two testbeds. The first was a simulated grid where artificial delays (0–5 s) and packet loss (0–30 %) were injected to mimic extreme heterogeneity. The second used a real BOINC volunteer network comprising over 12 000 participants. Two optimization problems were considered: a 1 000‑dimensional logistic‑regression loss and a 5 000‑dimensional highly non‑linear benchmark function. ANM was compared against a synchronous Newton implementation, limited‑memory BFGS, and conjugate‑gradient descent (CG).

Results show that ANM converges roughly 2.3× faster than CG on average, and maintains a scaling efficiency above 95 % when the number of workers is increased from 1 000 to 10 000. Even with a 30 % node‑failure rate, ANM reaches a target objective tolerance of 10⁻⁶ in 1 200 s for the 5 000‑dimensional problem, whereas CG requires about 2 800 s. The L‑BFGS memory was limited to the ten most recent updates, keeping server memory under 150 MB, which is feasible for typical cloud instances.
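As a quick consistency check on these figures, the 5 000‑dimensional timings under a 30 % failure rate give a speedup close to the reported average:

$$
\text{speedup} = \frac{T_{\mathrm{CG}}}{T_{\mathrm{ANM}}} = \frac{2800\ \mathrm{s}}{1200\ \mathrm{s}} \approx 2.33
$$

The summary does not define scaling efficiency. Assuming the usual ratio of observed to ideal speedup, an efficiency above 95 % over a tenfold increase in workers would correspond to:

$$
E = \frac{T_{1000} / T_{10000}}{10000 / 1000} \ge 0.95
\quad\Longrightarrow\quad
\frac{T_{1000}}{T_{10000}} \ge 9.5
$$

Here $T_{1000}$ and $T_{10000}$ are illustrative symbols for the time to solution with 1 000 and 10 000 workers; they do not appear in the source.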

The study acknowledges several limitations. The BFGS approximation can become memory‑intensive in very high‑dimensional settings, and its accuracy directly influences convergence speed for strongly non‑linear landscapes. Moreover, the current design still relies on a single central server, which represents a potential single point of failure. Future work will explore fully decentralized parameter servers, sparse Hessian representations, and extensions to deep‑learning models where second‑order information is often beneficial but costly.

In conclusion, the Asynchronous Newton Method demonstrates that second‑order optimization can be made robust, scalable, and fault‑tolerant on volunteer‑computing grids. By decoupling computation from strict synchronisation, ANM achieves faster convergence than traditional first‑order methods while preserving solution quality, making it a practical tool for large‑scale scientific and engineering problems that can leverage inexpensive, massive distributed resources.
