Characterisation of speech diversity using self-organising maps

We report investigations into speaker classification of larger quantities of unlabelled speech data using small sets of manually phonemically annotated speech. The Kohonen speech typewriter is a semi-supervised method composed of self-organising maps (SOMs) that achieves low phoneme error rates. A SOM is a 2D array of cells that learn vector representations of the data based on neighbourhoods. In this paper, we report a method to evaluate pronunciation using multilevel SOMs with /hVd/ single-syllable utterances for the study of vowels in Australian pronunciation.

💡 Research Summary

This paper presents a methodological investigation into characterizing pronunciation diversity within Australian English using a semi-supervised learning approach based on Self-Organising Maps (SOMs). The core research question explores whether a small set of manually phonemically annotated speech data can be leveraged to effectively classify and analyze larger quantities of unlabelled speech data from different speaker groups.

The study builds on the framework of the Kohonen phonetic typewriter. SOMs are neural network models that project high-dimensional input data onto a low-dimensional (typically 2D) grid of units while preserving topological relationships. The audio data consist of 18 /hVd/ single-syllable words (e.g., “had,” “heed,” “hod”) extracted from the AusTalk corpus of Australian English. Speech signals are parameterized as 39-dimensional feature vectors of Mel-Frequency Cepstral Coefficients (MFCCs), including energy and delta features.
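To make the SOM idea concrete, here is a minimal sketch of online SOM training in NumPy. It is not the authors' implementation: the function names, the Gaussian neighbourhood, the linear decay schedules, and the small default grid are all assumptions chosen for illustration (the paper's base map is 25x25 and its inputs are 39-dimensional MFCC frames).

```python
import numpy as np


def best_matching_unit(weights, x):
    """Return the (row, col) of the unit whose weight vector is closest to x."""
    dists = np.linalg.norm(weights - x, axis=-1)
    row, col = np.unravel_index(np.argmin(dists), dists.shape)
    return int(row), int(col)


def train_som(frames, rows=5, cols=5, epochs=10, lr0=0.5, sigma0=None, seed=0):
    """Train a SOM on frames of shape (n, dim); returns weights (rows, cols, dim).

    Hypothetical helper: learning rate and neighbourhood width both decay
    linearly, which is one common choice rather than the paper's schedule.
    """
    rng = np.random.default_rng(seed)
    dim = frames.shape[1]
    weights = rng.normal(size=(rows, cols, dim))
    # Grid coordinates of every unit, used to measure neighbourhood distance.
    grid = np.stack(
        np.meshgrid(np.arange(rows), np.arange(cols), indexing="ij"), axis=-1
    )
    sigma0 = max(rows, cols) / 2.0 if sigma0 is None else sigma0
    n_steps = epochs * len(frames)
    step = 0
    for _ in range(epochs):
        for x in frames[rng.permutation(len(frames))]:
            frac = step / n_steps
            lr = lr0 * (1.0 - frac)
            sigma = sigma0 * (1.0 - frac) + 1e-3
            bmu = np.array(best_matching_unit(weights, x))
            # Units close to the winner on the grid are pulled harder towards x.
            d2 = np.sum((grid - bmu) ** 2, axis=-1)
            h = np.exp(-d2 / (2.0 * sigma**2))
            weights += lr * h[..., None] * (x - weights)
            step += 1
    return weights
```

Calling `train_som(frames, rows=25, cols=25)` on an array of MFCC frames would give a base map of the size described in the summary; the neighbourhood update is what lets nearby units end up representing acoustically similar frames.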

The experimental design is central to the paper’s contribution. The researchers compare three speaker groups: General Australian English speakers, educated Melbournian speakers (aged 25-34), and Australian English speakers with a Chinese language background. The methodology involves a multi-level or “boosted” SOM architecture:

- A base 25x25 unit SOM is trained on all audio frames from the unlabelled data of one group.

- Three-quarters of a separate set of manually annotated data (from three other speakers) is used to assign phoneme labels to the units on this base map.

- This labelled map segments the unlabelled input data for training subsequent, more specialized SOMs.
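The labelling and segmentation steps above can be sketched as follows. The summary does not say how labels are assigned to units, so this sketch assumes a majority vote over the annotated frames that land on each unit, and it assumes that frames falling on units with no label are simply dropped. `nearest_unit`, `label_units`, and `segment_frames` are hypothetical names.

```python
from collections import Counter, defaultdict

import numpy as np


def nearest_unit(weights, x):
    """Grid position (row, col) of the unit closest to frame x."""
    dists = np.linalg.norm(weights - x, axis=-1)
    row, col = np.unravel_index(np.argmin(dists), dists.shape)
    return int(row), int(col)


def label_units(weights, frames, phonemes):
    """Give each unit the most common phoneme among annotated frames it wins.

    Assumption: majority vote. Units that win no annotated frame stay unlabelled.
    """
    votes = defaultdict(Counter)
    for x, phoneme in zip(frames, phonemes):
        votes[nearest_unit(weights, x)][phoneme] += 1
    return {unit: counts.most_common(1)[0][0] for unit, counts in votes.items()}


def segment_frames(weights, unit_labels, frames):
    """Split unlabelled frames by the label of their nearest unit."""
    groups = defaultdict(list)
    for x in frames:
        label = unit_labels.get(nearest_unit(weights, x))
        if label is not None:  # assumption: skip frames on unlabelled units
            groups[label].append(x)
    return {label: np.array(xs) for label, xs in groups.items()}
```

The dictionary returned by `segment_frames` is the kind of per-label partition that could then feed the training of the more specialized maps.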

Two distinct strategies for creating these secondary “submaps” are tested:

- Kohonen’s Method: Training three 20x20 submaps on subsets of vowels that were frequently confused with each other on the base map. The analysis identified three such confusion groups (e.g., Group 1: /oI/ and /o:/).

- Linguistic Method: Training three 20x20 submaps based on the linguistic position within the /hVd/ syllable: one for /h/ (initial), one for the vowel (nucleus), and one for /d/ (final).
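The difference between the two strategies comes down to how a phoneme label is routed to a submap. The sketch below illustrates that routing only; the function names and the label strings are hypothetical, and only confusion Group 1 is filled in because it is the only group the summary spells out.

```python
def linguistic_submap(label):
    """Route a label by its position in the /hVd/ syllable."""
    if label == "h":
        return "initial"
    if label == "d":
        return "final"
    return "nucleus"  # every other label is treated as the vowel


def confusion_submap(label, groups):
    """Route a label to the confusion group that contains it, if any."""
    for name, members in groups.items():
        if label in members:
            return name
    return None


# Only Group 1 is given in the summary; the other two groups are not listed here.
confusion_groups = {"group1": {"oI", "o:"}}
```

Under the linguistic routing every frame has a destination, whereas under the confusion-group routing a label outside all groups has none, which is one practical difference between the two designs in this sketch.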

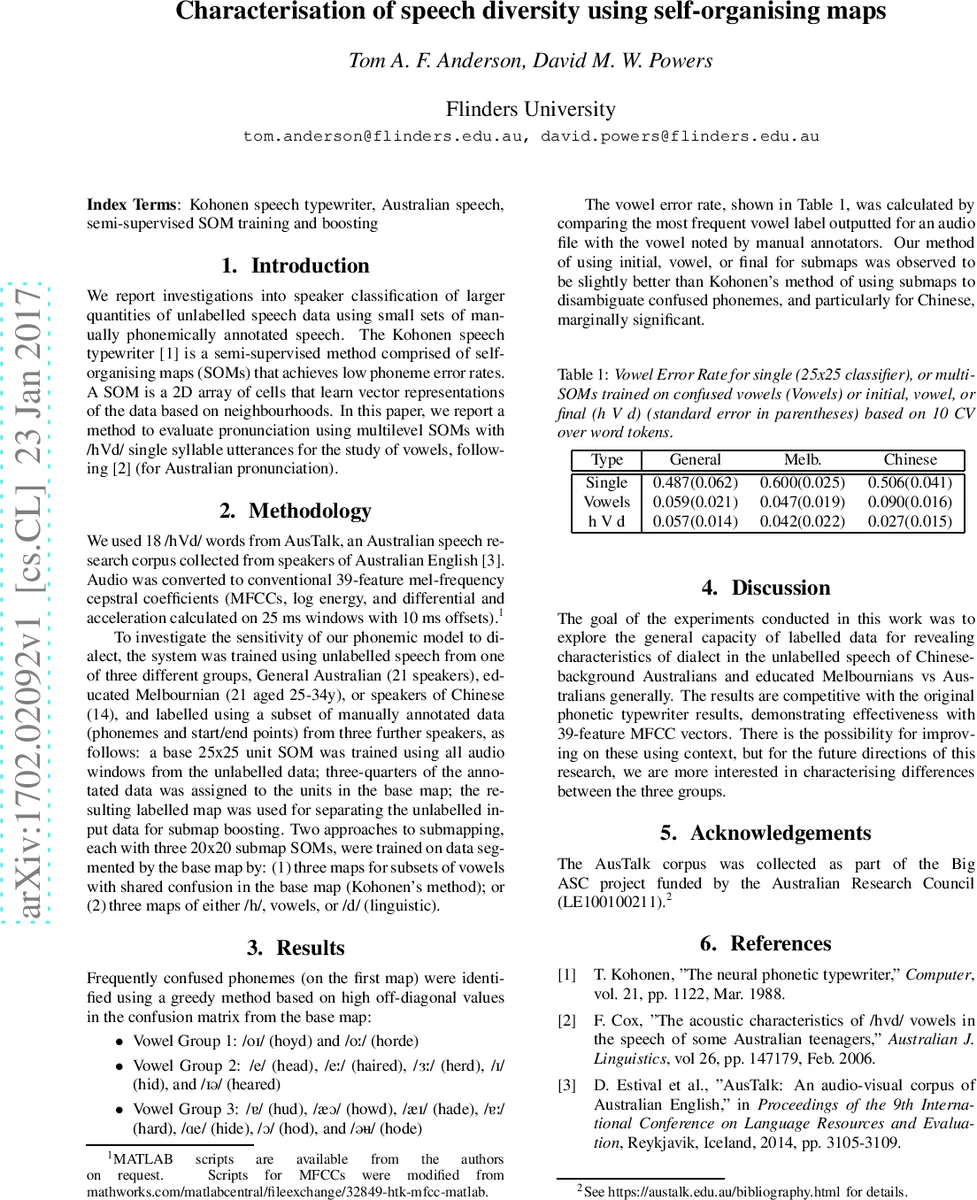

The key results are presented in terms of vowel error rate, calculated by comparing the most frequent vowel label output by the system for a given audio file with the manual annotation. The single base SOM yielded relatively high error rates (0.487 to 0.600). Both multi-SOM approaches reduced error rates to below 0.10. The linguistic method of using initial/vowel/final submaps consistently outperformed Kohonen’s confusion-group method across all speaker groups. This advantage was most pronounced, and marginally statistically significant, for the Chinese-background speaker data, where the error rate dropped to 0.027.
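The error metric described above can be sketched in a few lines. This assumes that non-vowel labels are filtered out before taking the most frequent label, and that a file with no vowel frames counts as an error; both are assumptions, as are the function names and label strings.

```python
from collections import Counter


def predicted_vowel(frame_labels, vowels):
    """Most frequent vowel label among a file's frame labels, or None."""
    counts = Counter(label for label in frame_labels if label in vowels)
    # Ties go to the vowel seen first, which is how Counter.most_common orders them.
    return counts.most_common(1)[0][0] if counts else None


def vowel_error_rate(predictions, references):
    """Fraction of files whose predicted vowel differs from the annotation."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have equal length")
    errors = sum(p != r for p, r in zip(predictions, references))
    return errors / len(references)
```

For example, with predictions `["I", "e", "a", None]` and annotations `["I", "e", "e", "a"]`, two of the four files are wrong, so `vowel_error_rate` returns 0.5.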

The discussion clarifies that the primary goal was not necessarily to achieve state-of-the-art phoneme recognition accuracy (though the results are competitive with the original phonetic typewriter) but to explore the capacity of this semi-supervised, SOM-based framework to reveal characteristic dialectal differences in unlabelled speech. The success of the linguistically structured submaps suggests that positional context within a syllable can be a more robust basis for disambiguation than acoustic similarity between confused phonemes, especially for analyzing L2-influenced speech patterns. The paper concludes by positioning this work as a foundation for future research focused on characterizing pronunciation variation rather than recognition alone, with potential applications in dialectology and personalized speech technology.
