GuessWhat?! Visual object discovery through multi-modal dialogue

We introduce GuessWhat?!, a two-player guessing game as a testbed for research on the interplay of computer vision and dialogue systems. The goal of the game is to locate an unknown object in a rich image scene by asking a sequence of questions. Higher-level image understanding, like spatial reasoning and language grounding, is required to solve the proposed task. Our key contribution is the collection of a large-scale dataset consisting of 150K human-played games with a total of 800K visual question-answer pairs on 66K images. We explain our design decisions in collecting the dataset and introduce the oracle and questioner tasks that are associated with the two players of the game. We prototyped deep learning models to establish initial baselines of the introduced tasks.

💡 Research Summary

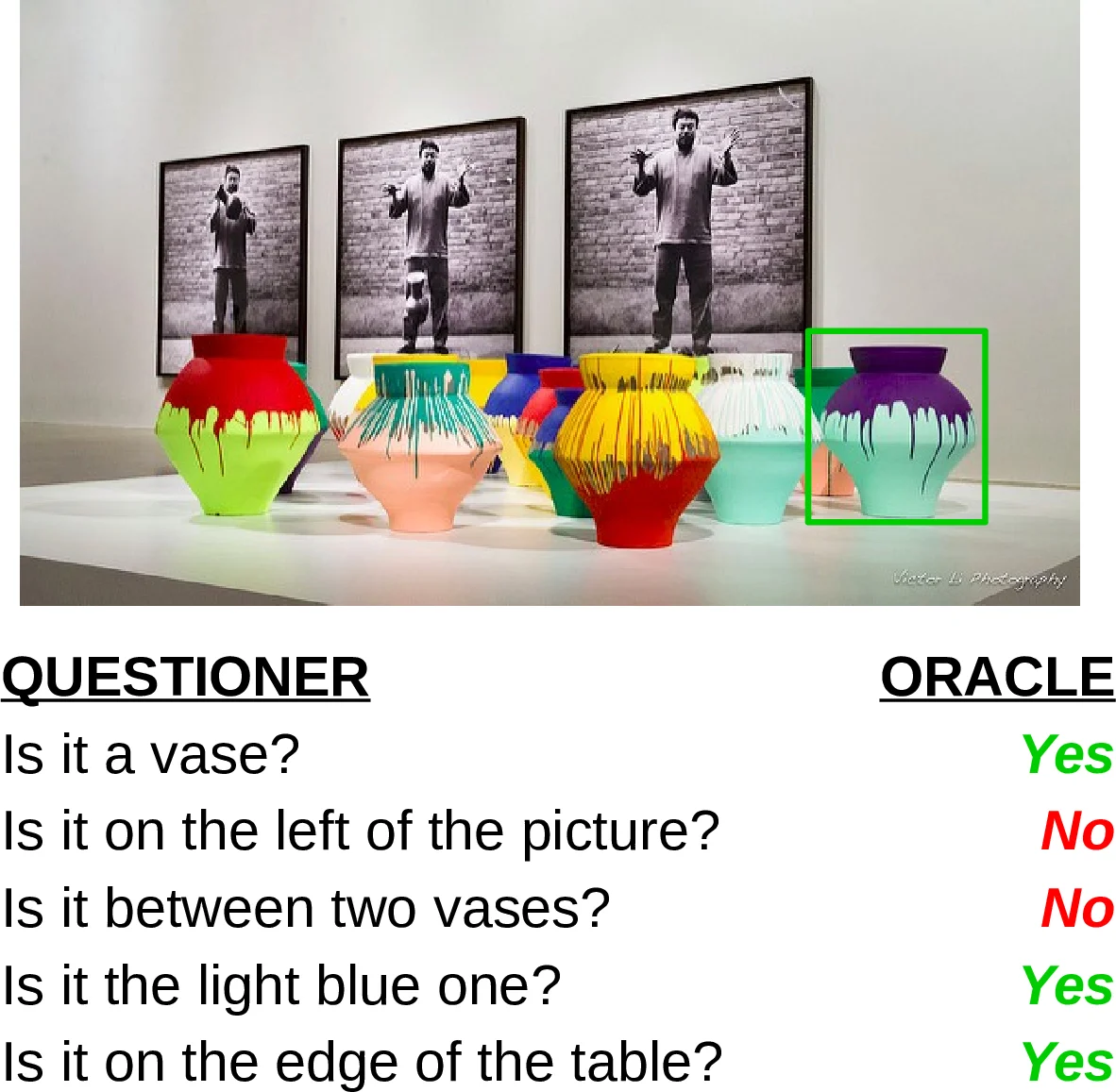

GuessWhat?! introduces a cooperative two‑player game that serves as a testbed for integrating visual perception and natural‑language dialogue. In each game, both participants view the same rich image (drawn from the MS COCO dataset). The “oracle” is secretly assigned a target object, while the “questioner” must locate this object by asking a sequence of yes/no questions. The oracle answers with “Yes”, “No”, or “N/A” (when the question is ambiguous or unanswerable). After a series of questions, the questioner makes a final guess; the game is marked successful if the guessed object matches the hidden target.

To create a large‑scale benchmark, the authors collected 155,280 games (821,889 question‑answer pairs) covering 66,537 images and 134,073 distinct objects. They filtered COCO images to retain only those containing 3–20 objects of sufficient size (area ≥ 500 px²) to ensure human recognizability. Data collection was performed on Amazon Mechanical Turk with separate HITs for the two roles. Workers first passed a qualification round (10 flawless games) and then completed batches of ten games, receiving modest bonuses for high success rates. Quality control included mutual reporting, bans for repeated infractions, and post‑hoc spelling correction of the free‑form questions. The resulting dataset is split into “full”, “finished” (successful + unsuccessful), and “successful” subsets; 84.6 % of finished games are successful. Answers are roughly balanced (≈45 % Yes, 52 % No, 2 % N/A). The average dialogue contains 5.2 questions, and the number of questions grows between logarithmic and linear with the number of objects in the image, indicating that human questioners employ a hybrid strategy between exhaustive listing and optimal binary search.

The paper defines two learning tasks. The oracle task requires a model to predict the correct answer given an image, the target object’s bounding box, and a question. The authors implement a CNN‑based visual encoder combined with an LSTM for the question, feeding both into a three‑way classifier (Yes/No/N/A). The questioner task is more complex: the model must (a) generate the next question conditioned on the dialogue history and the image, and (b) after a fixed number of turns, infer the target object. For question generation they employ a sequence‑to‑sequence architecture, optionally fine‑tuned with reinforcement learning to maximize information gain. For object inference they maintain a candidate set and iteratively prune it based on the accumulated yes/no answers, mimicking a binary‑search policy.

Baseline results show that the oracle model reaches around 70 % accuracy, confirming that the visual‑question pairing is learnable. In contrast, the questioner models lag behind human performance; generated questions often lack semantic coherence or fail to efficiently reduce the candidate set, leading to a sharp drop in success as dialogue length increases. This gap highlights the difficulty of learning an effective questioning policy that balances relevance, informativeness, and linguistic naturalness.

Beyond the dataset itself, the work contributes a clear experimental framework for multi‑modal, goal‑directed dialogue. It bridges three research areas—image captioning, visual question answering, and task‑oriented dialogue—by requiring agents to ground language in visual context while pursuing a concrete objective. The authors discuss several avenues for future research: (1) improving question generation via advanced attention mechanisms or hierarchical policy learning; (2) joint training of oracle and questioner agents in a multi‑agent reinforcement learning setting; (3) incorporating external knowledge (e.g., object taxonomies) to enable higher‑level reasoning such as “Is it a vehicle?”. The GuessWhat?! dataset, being the first large‑scale, multi‑modal, goal‑oriented dialogue corpus, opens the door to more sophisticated visual‑language agents capable of interactive, human‑like reasoning about the world.

Comments & Academic Discussion

Loading comments...

Leave a Comment