Probabilistic Sensor Fusion for Ambient Assisted Living

There is a widely-accepted need to revise current forms of health-care provision, with particular interest in sensing systems in the home. Given a multiple-modality sensor platform with heterogeneous network connectivity, as is under development in t…

Authors: Tom Diethe, Niall Twomey, Meelis Kull

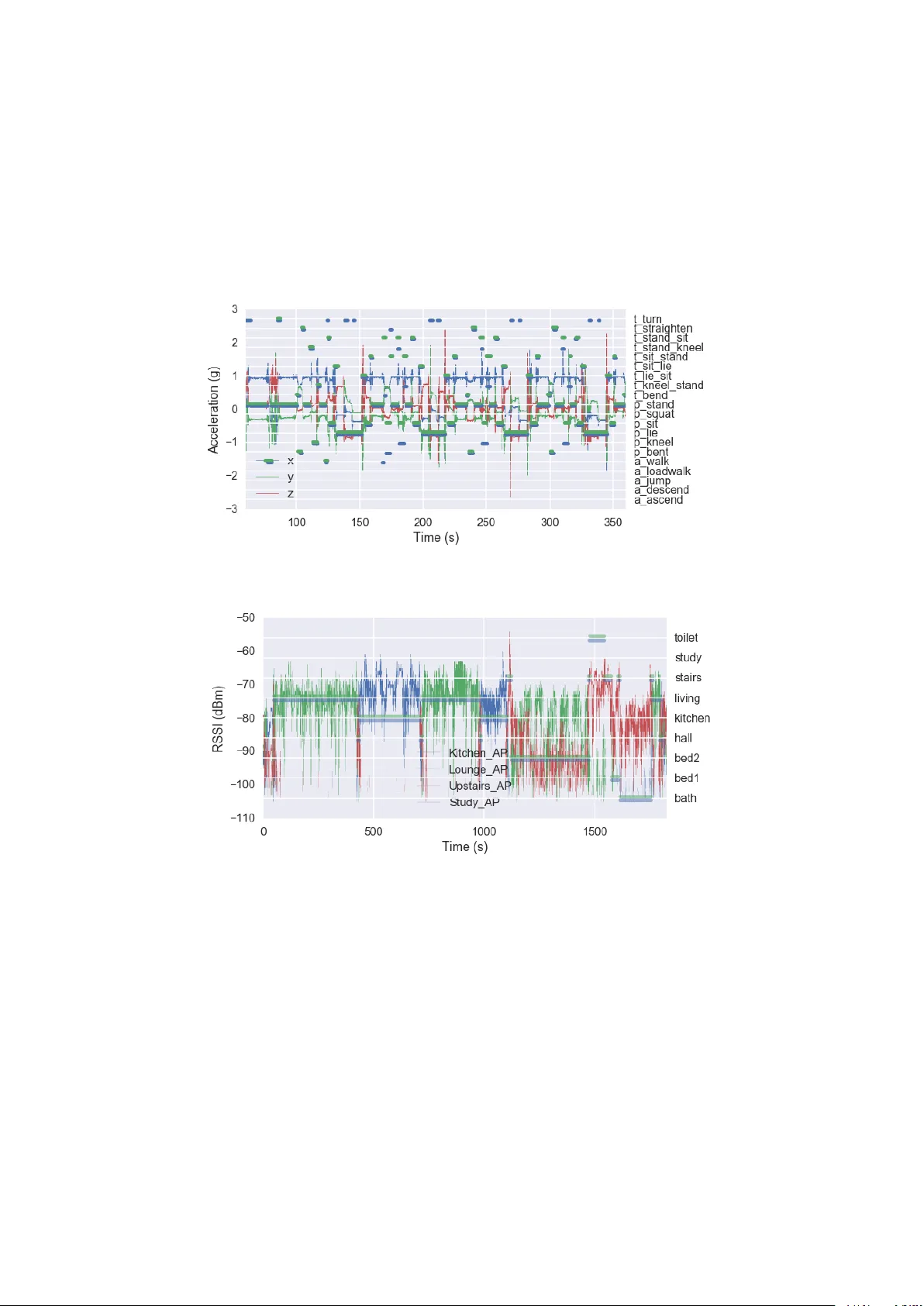

Probabilistic Sensor Fusion for Ambient Assisted Li ving T om Diethe a, ∗ , Niall T wome y a , Meelis Kull a , Peter Flach a , Ian Craddock a a Intelligent Systems Laboratory , University of Bristol Abstract There is a widely-accepted need to re vise current forms of health-care provision, with particular interest in sensing systems in the home. Gi ven a multiple-modality sensor platform with hetero- geneous network connecti vity , as is under de velopment in the Sensor Platform for HEalthcare in Residential En vironment (SPHERE) Interdisciplinary Research Collaboration (IRC), we face specific challenges relating to the fusion of the heterogeneous sensor modalities. W e introduce Bayesian models for sensor fusion, which aims to address the challenges of fusion of heterogeneous sensor modalities. Using this approach we are able to identify the modal- ities that hav e most utility for each particular acti vity , and simultaneously identify which features within that acti vity are most relev ant for a gi ven acti vity . W e further sho w ho w the two separate tasks of location prediction and acti vity recognition can be fused into a single model, which allo ws for simultaneous learning an prediction for both tasks. W e analyse the performance of this model on data collected in the SPHERE house, and show its utility . W e also compare against some benchmark models which do not hav e the full structure, and sho w how the proposed model compares f av ourably to these methods. K e ywor ds: Bayesian, Sensor Fusion, Smart Homes, Ambient Assisted Li ving 1. Introduction 1.1. Ambient and Assisted Living Due to well-known demographic challenges, traditional regimes of health-care are in need of re-examination. Many countries are experiencing the ef fects of an ageing population, which coupled with a rise in chronic health conditions is expediting a shift to wards the management of a wide variety of health related issues in the home. In this context, adv ances in Ambient Assisted Li ving (AAL) are providing resources to improv e the experience of patients, as well as informing necessary interventions from relati ves, carers and health-care professionals. ∗ I am corresponding author Email addr esses: tom.diethe@bristol.ac.uk (T om Diethe), niall.twomey@bristol.ac.uk (Niall T womey), meelis.kull@bristol.ac.uk (Meelis Kull), peter.flach@bristol.ac.uk (Peter Flach), ian.craddock@bristol.ac.uk (Ian Craddock) URL: www.tomdiethe.com (T om Diethe) Pr eprint submitted to Information Fusion F ebruary 7, 2017 T o this end the EPSRC-funded “Sensor Platform for HEalthcare in Residential Environment (SPHERE)” Interdisciplinary Research Collaboration (IRC) [9, 26, 27, 25] has designed a multi- modal system dri ven by data analytics requirements. The system is under test in a single house, and deployment to a general population of 100 homes in Bristol (UK) is underway at the time of writing. Wherev er possible, the data collected will be made a vailable to researchers in a v ariety of communities. Data fusion and machine learning in this setting is required to address two main challenges: transparent decision making under uncertainty; and adapting to multiple operating contexts. Here we focus on the first of these challenges, by describing an approach to sensor fusions that takes a principled approach to the quantification of uncertainty whilst maintaining the ability to introspect on the decisions being made. 1.2. Quantification of Uncertainty Multiple heterogeneous sensors in a real world en vironment introduce different sources of uncertainty . At a basic le vel, we might hav e sensors that are simply not working, or that are gi ving incorrect readings. More generally , a giv en sensor will at any giv en time hav e a particular signal to noise ratio, and the types of noise that are corrupting the signal might also v ary . As a result we need to be able to handle quantities whose values are uncertain, and we need a principled framew ork for quantifying uncertainty which will allow us to build solutions in ways that can represent and process uncertain values. A compelling approach is to build a model of the data-generating process, which directly incorporates the noise models for each of the sensors. Probabilistic (Bayesian) graphical models, coupled with ef ficient inference algorithms, provide a principled and flexible modelling frame work [2, 23]. 1.3. F eatur e Construction, Selection, and Fusion Gi ven an understanding of data generation processes, the sensor data can be interpreted for the identification of meaningful features. Hence it is important that this is closely coupled to the de- velopment of the indi vidual sensing modalities [15], e.g. it may be that sensors ha ve strong spatial or temporal correlations or that specific combinations of sensors are particularly meaningful. One of the main hypotheses underlying the SPHERE project [9] is that once calibrated, many weak signals from particular sensors can be fused into a strong signal allo wing meaningful health- related interventions [13]. Based on the calibrated and fused signals, the system must decide whether intervention is required and which intervention to recommend; interventions will need to be information gathering as well as health providing. This is known as the exploration-e xploitation dilemma, which must be extended to address the challenges of costly interv entions and complex data-structures [16]. Continuous streams of data can be mined for temporal patterns that vary between indi viduals. These temporal patterns can be directly built into the model-based framework, and additionally can be learnt on both group-wide and individual le vels to learn context sensiti ve and specific patterns. For a recent revie w of methods for dealing with multiple heterogeneous streams of data in an online setting see [6]. 2 2. Related W ork W e consider data- or sensor - fusion in the setting of supervised learning. There are se veral dif ferent approaches to this, which hav e subtle distinctions in their motiv ation. In Multi-V iew Learning (MVL), we have multiple views of the same underlying semantic object, which may be deri ved from dif ferent sensors, or dif ferent sensing techniques [7, 8]. In Multi-Source Learning (MSL), we hav e multiple sources of data which come from different sources b ut whose label space is aligned. Finally , in Multiple K ernel Learning (MKL) [1], we ha ve multiple kernels built from dif ferent feature mappings of the same data source. In general, any algorithm b uilt to solve an y of the three problems will also solve the others. Probabilistic approaches have been de veloped for the MKL problem [5], which gi ves the adv antages of full distributions ov er the parameters, but also remov es the need for heuristic methods for selecting hyperparameters. Our approach is closest in flav our to this last method. 3. The SPHERE Challenge In this work, we use the SPHERE challenge dataset [22] as our primary source of data. This dataset contains synchronised accelerometer , en vironmental and video data that w as recorded in a smart home by the SPHERE project [27, 25]. A number of features make this dataset v aluable and interesting to activity recognition re- searchers and more generally to machine learning researchers: • It features missing data. • The data are temporal, and correlations in the data must be captured either in the features or in the modelling frame work [21]. • There are man y correlations between activities. • The problem can be modelled in a hierarchical classification frame work. • The tar gets are probabilistic (since the annotations of multiple annotators were av eraged). • The optimal solution will need to consider sensor fusion techniques. Three primary sensing modalities were collected in this dataset: 1) en vironmental sensor data; 2) accelerometer and Receiv ed Signal Strength Indication (RSSI) data; and 3) video and depth data. Accompanying these data are annotations on location within the smart home, as well as annotations relating to the Activities of Daily Living (ADLs) that were being performed at the time. The floorplan of the smart en vironment is shown in Figure 1, and we can see that nine rooms are marked in this figure: 3 (a) Ground floor (li ving room, study , kitchen, downstairs hall way) (b) Second floor (toilet, master bedroom, second bathroom) // (c) First floor (bathroom only) Figure 1: Floor plan of the SPHERE house. A staircase joins the ground floor to the second floor , with the bathroom half-way up. 4 1. bathroom 2. bedroom 1 3. bedroom 2 4. hallw ay 5. kitchen 6. li ving room 7. stairs 8. study 9. toilet to predict posture and amb ulation labels gi ven the sensor data from recruited participants as they perform acti vities of daily living in a smart en vironment. Additionally , twenty activities of daily living are annotated in this dataset. These are enumer- ated belo w: 1. ascent stairs; 2. descent stairs; 3. jump; 4. walk wit h load; 5. walk; 6. bending; 7. kneeling; 8. lying; 9. sitting; 10. squatting; 11. standing; 12. stand-to-bend; 13. kneel-to-stand; 14. lie-to-sit; 15. sit-to-lie; 16. sit-to-stand; 17. stand-to-kneel; 18. stand-to-sit; 19. bend-to-stand; and 20. turn Three main categories of ADL are found here: 1) ambulation acti vities ( i.e. an activity requir - ing of continuing movement, e.g . walking), 2) static postures ( i.e. times when the participants are stationary , e.g . standing, sitting); and 3) posture-to-posture transitions ( e.g . stand-to-sit, stand-to- bend). By considering the floorplan and the list of ADLs together , it should be clear to see that there are correlations between these: since there is no seating area in the kitchen, it is v ery unlikely that somebody will sit in the kitchen. Howe ver , since there are many seats in the living room, it is much more likely that somebody will sit in the li ving room than in the kitchen. Additionally , one can only ascent and descent stairs when one is on the staircase. 3.1. Sensor data In this section we outline the individual sensor modalities in greater details. Additionally , we indicate whether each modality will be suitable for the prediction location and ADLs. 3.1.1. En vir onmental data In Figure 2, we sho w the Passi ve Infra-Red (PIR) data that was recorded and localisation labels that were annotated as part of the data collection. In this image, the black se gments indicate the times during which motion was detected by the PIR sensor . The remaining line segments ( e.g . the transparent blue and green segments) represent the ground truth location annotations. In this example, two colours (blue and green) are sho wn since two annotators labelled this segment. Overall, these localisation annotations are very similar o verall, and the main dif ferences are on the 5 Figure 2: Example PIR for training record 00001. The black lines indicate the time durations where a PIR is activ ated. The blue and green wide horizontal lines indicate the room occupancy labels as gi ven by the two annotators that labelled this sequence. precise specification of ‘start’ and ‘end’ times in each room, see for example the ‘hall’ annotation at approximately 1 000 seconds. The PIR data is suitable only for prediction of localisation since it cannot report on fine-grained mov ements. Ho wev er , this sensor provides useful information pertaining to localisation since each of the nine rooms contains these sensors. It is worth noting that under certain circumstances the PIR data may report ‘false positi ve’ triggers, e.g. when bright sunshine is present. For this reason, it is necessary to fuse additional modalities together to produce accurate localisation. 3.1.2. Acceler ometer and RSSI data The accelerometer signal trace and the annotated ADLs are shown in 3a. The continuous blue, green and red traces here are related to the x , y , and z axes of the accelerometer . These signals are assigned to the left hand axis. Overlaid on this image are discontinuous line segments in blue and green, and these are the ADL annotations that were produced from two annotators. One should confer with the right hand axis to identify the label at a particular time point. In general, there is good agreement between these two annotators. Howe ver , some annotators naturally produced greater resolution than others ( e .g. the ‘blue’ annotator annotated more turning acti vities than the ‘green’ annotator). Since the accelerometer data are transmitted to central access points via a wireless Bluetooth connection, we also hav e access to the relati ve strength of the wireless connection at each of the access points. Four access points were distributed throughout the smart home: one each in the kitchen, living room, master bedroom, and the study . The RSSI signals that were recorded by the four access points are shown in 3b, and the localisation labels are also ov erliad on this image ( c.f. to Figure 2). This image sho ws that there is a close relationship between the values of RSSI and location. T aking a concrete example, we can see that during the first 500 seconds, a significant proportion of time is spent in the living room. The access point in the li ving room ( i.e. the green trace) reports the highest response, and the remaining access points have not registered the connection. 6 (a) Acceleration signal trace shown over a 5 minute time period. Annotations are ov erlaid in blue and green. // (b) RSSI values from the four access points. Room occupancy labels are shown by the the horizontal lines. Figure 3: Example acceleration and RSSI signals for training record 00001. The line traces indi- cate the accelerometer/RSSI v alues recorded by the access points. The horizontal lines indicate the ground-truth as provided by the annotators (two annotators annotated this record, and their annotations are depicted by the green and blue traces respecti vely . 7 Consequently , the RSSI and the PIR are both valuable signals for localisation. It is worth noting that the Bluetooth link is set up in ‘connectionless’ mode. This mode in- creases the liv e cycle of the wearable battery , and also allows the wearable data to be acquired simultaneously by multiple access points. Ho we ver , the data are also transmitted with a lower Quality of Service (QoS) which increases the risk of lost packets. This is particularly clear in 3b since at no time is data recei ved on all access points. Similarly , in 3a acceleration packets are lost. 3.1.3. V ideo data V ideo recordings were taken using ASUS Xtion PR O RGB-Depth (RGBD) cameras 1 . Auto- matic detection of humans was performed using the OpenNI library 2 . F alse positi ve detections were manually remov ed by the organisers by visual inspection. Three RGBD cameras are in- stalled in the SPHERE house, and these are located in the li ving room, do wnstairs hallw ay , and the kitchen. No cameras are located elsewhere in the residence. In order to preserve the anonymity of the participants the raw video data are not av ailable, and instead the coordinates of the 2D bounding box, 2D centre of mass, 3D bounding box and 3D centre of mass are provided. The units of 2D coordinates are in pixels (i.e. number of pixels do wn and right from the upper left hand corner) from an image of size 640 × 480 pixels. The coordinate system of the 3D data is axis aligned with the 2D bounding box, with a supplementary dimension that projects from the central position of the video frames. The first two dimensions specify the vertical and horizontal displacement of a point from the central vector (in millimetres), and the final dimension specifies the projection of the object along the central vector (again, in millimetres). Examples of the centre of the 3D bounding box are sho wn in Figure 4 where the three video locations are separated according to their location. V ideo data is rich in the information that it pro- vides, and it is informati ve for predicting location and ADLs. Room-lev el location can be inferred since each camera is situated in a room. Additionally , with appropriate feature engineering, it is possible to determine acti vities of daily li ving. Howe ver , since cameras are only a vailable in three rooms, we cannot rely purely on RGBD features for localisation or ADL. 3.2. Recruitment and Sensor Layout T wenty participants were recruited to perform acti vities of daily li ving in the SPHERE house while the data recorded by the sensors was logged to a database. Ethical approv al was secured from the Univ ersity of Bristol’ s ethics committee to conduct data collection, and informed consent was obtained from healthy v olunteers. In the first stage of data collection, participants were requested to follow a pre-defined script. 1a 1b and 1c sho w the floor plan of the ground, first and second floors of the smart en vironment respecti vely . 1 https://www.asus.com/3D- Sensor/Xtion_PRO/ 2 https://github.com/OpenNI/OpenNI 8 Figure 4: Example centre 3d for training record 00001. The horizontal lines indicate annotated room occupancy . The blue, green and red traces are the x , y , and z values for the 3D centre of mass. 3.3. Annotation A team of 12 annotators were recruited and trained to annotate the locations of ADLs. T o support the annotation process, a head mounted camera (Panasonic HX-A500E-K 4K W earable Action Camera Camcorder) recorded 4K video at 25 FPS to an SD-card. This data is not shared in this dataset, and is used only to assist the annotators. Synchronisation between the network time protocol (NTP) clock and the head-mounted camera was achie ved by focusing the camera on an NTP-synchronised digital clock at the beginning and end of the recording sequences. An annotation tool called ELAN 3 was used for annotation. ELAN is a tool for the creation of complex annotations on video and audio resources, dev eloped by the Max Planck Institute for Psycholinguistics in Nijmegen, The Netherlands. Room occupancy labels are also giv en in the training sequences. While performance ev aluation is not directly af fected by room prediction on this, participants may find that modelling room occupancy may be informati ve for prediction of posture and ambulation. 3.4. P erformance evaluation As both the targets and predictions are probabilistic, the standard classification performance e valuation metrics are inappropriate. Hence, classification performance is e valuated with weighted 3 https://tla.mpi.nl/tools/tla- tools/elan/ 9 Brier score [4]: B S = 1 N N X n =1 C X c =1 w c ( p n,c − y n,c ) 2 (1) where N is the number of test sequences, C is the number of classes, w c is the weight for each class, p n,c is the predicted probability of instance n being from class c , and y n,c is the proportion of annotators that labelled instance n as arising from class c . Lower Brier score values indicate better performance, with optimal performance achie ved with a Brier score of 0 . 4. Bayesian Sensor Fusion In this section we will dev elop a class of models that may be used to tackle the two sensor fusion tasks described above. Critically , we will also show ho w these two can be combined into a single model. 4.1. Pr eliminaries W e will begin by assuming that distributions (or densities) over a set of variables x = ( x 1 , . . . x D ) of interest can be represented as factor graphs, i.e . p ( x ) = 1 Z J Y j =1 ψ j ( x ne( ψ j ) ) , (2) where ψ j are the factors : non-negati ve functions defined ov er subsets of the variables x ne( ψ j ) , the neighbours of the factor node ψ j in the graph, where we use ne( ψ j ) to denote the set indices of the variables of factor ψ j ). Z is the normalisation constant. W e only consider here directed factors ψ ( x out | x in ) which specify a conditional distribution ov er v ariables x out gi ven x in (hence x ne( ψ ) = ( x out , x in )) . For more details see e.g . [14, 3]. 4.2. Multi-Class Bayes P oint Machine W e begin by describing a Bayesian model for classification known as the multi-class Bayes Point Machine (BPM) [12], which will form the basis of our modelling approach. The BPM makes the follo wing assumptions: 1. The feature values x are always fully observ ed. 2. The order of instances does not matter . 3. The predictiv e distribution is a linear discriminant of the form p ( y i | x i , w ) = p ( y i | s i = w 0 x i ) where w are the weights and s i is the score for instance i . 4. The scores are subject to additiv e Gaussian noise. 5. The features are as uncorrelated as possible. This allo ws modelling p ( w ) as fully factorised 6. (optional) A factorised heavy-tailed prior distrib utions over the weights w 10 For the purposes of both location prediction and activity recognition, assumption 2 may be problematic, since the data is clearly sequential in nature. Intuitiv ely , we might imagine that the strength of the temporal dependence in the sequence will determine ho w costly this approximation is, and this will in turn depend on how the data is preprocessed ( i.e. is raw data presented to the classifier , or are features instead computed from the time series?). It has been shown [21] that under certain conditions structured models and unstructured models can yield equiv alent predictiv e performance on sequential tasks, whilst unstructured models are also typically much cheaper to compute. W e follow the guidance set out there and use conte xtual information from neighbouring time-points to construct our features. The factor graph for the basic multi-class BPM model is illustrated in 5a, where N denotes a Gaussian density for a giv en mean µ and precision τ , and Γ denotes a Gamma density for giv en shape k and scale θ . The factor indicated by is the arg-max factor , which is like a probabilistic multi-class switch. The additi ve Gaussian noise from assumption 4 results in the v ariable ˜ s , which is a noisy version of the score s . An alternati ve to the arg-max factor is the softmax function, or normalised exponential function, which is a generalisation of the logistic function that transforms a K -dimensional vector z of arbitrary real values to a K -dimensional vector σ ( z ) of real v alues in the range (0 , 1) that sum to 1. The function is giv en by σ ( z j ) = e z j P K k =1 e z k j = 1 , . . . , K. Multi-class regression in volves constructing a separate linear regression for each class, the output of which is known as an auxiliary variable. The vector of auxiliary variables for all classes is put through the softmax function to gi ve a probability vector , corresponding to the probability of being in each class. Multi-class softmax regression is very similar in spirit to the multi-class BPM. The key dif ferences are that where the BPM uses a max operation constructed from multiple “greater than” factors, softmax regression uses the softmax factor , and V ariational Message Passing (VMP) (see subsection 5.1) is currently the only option when using the softmax factor . T wo conse- quences of these dif ferences are that softmax regression scales computationally much better with the number of classes than the BPM (linear rather quadratic complexity) and although not rele vant here it is possible to ha ve multiple counts for a single sample (multinomial regression). In this case the number of classes C is not so large, meaning that the quadratic O ( C 2 ) scaling of the BPM is not so problematic. W e also propose to use a heavy-tailed prior , a Gaussian distribution whose precision is itself a mixture of Gamma-Gamma distributions. This is illustrated in the factor graph in 5b, where N denotes a Gaussian density for a gi ven mean µ and precision τ , and Γ denotes a Gamma density for giv en shape and rate). The v ariable a is a common precision that is shared by all features, and the variable b represents the precision rate, which adapts to the scaling of the features. Compared with a Gaussian prior , the hea vy-tailed prior is more robust to wards outliers, i.e. feature v alues which are far from the mean of the weight distribution, 0. It is in variant to rescaling the feature v alues, but not in variant to their shifting, which is achie ved by adding a constant feature value 11 (bias) for all instances. y ˜ s N s 1 × w x 0 1 N N D C (a) y ˜ s N s 1 × w x 0 τ N a b / ( 1 , 1 ) 1 r ( 1 , 1 ) 0 N N D C (b) y ˜ s BPM w x N D C (c) Figure 5: (a) Multi-class Bayes Point Machine; (b) heavy-tailed version; and (c) simplified repre- sentation. C : Number of classes; N : Number of examples; D : Number of features. See text for details. In 5c we also giv e a simplified form of the factor graph, where the essential parts of the BPM hav e been collapsed into a single bpm factor . W e will use the simplified form in further models to aid the presentation, but should be born in mind that this actually represents 5a or 5b depending on the context. In this form, the model is not identifiable, since the addition of a constant value to all the score v ariables s will not change the output of the prediction. T o make the model identifiable we enforce that the score v ariable corresponding to the last class will be zero, by constraining the last coef ficient vector and mean to be zero. 4.3. Fused Bayes P oint Machine The simplest method for fusing multiple sensing modalities is to concatenate all of the features from each modality for each e xample into a single long feature v ector . This can then be presented 12 to the BPM as described abov e, and will serve as a baseline model. Here we present three Bayesian models for sensor fusion. the first of these, sho wn in 6a, is the simplest of these, and corresponds quite closesly to the unweighted probabilistic MKL model gi ven in [5]. T aking the simplified factor graph in 5c, we hav e an additional plate ov er the sources/modalities S , for the entire BPM model, which results in a noisy score ˜ s for each source. These are then summed together to get a fused score per class, and the standard arg-max or softmax link function can then be used. The first modification of this is the weighted additi ve fusion model sho wn in 6b. Here we hav e additional v ariables β per source, which are than multiplied with the noisy scores before addition to gi ve fused scores. This model provides extra interpretability since the means of the β v ariables indicate the influence of the source associated with that v ariable, and the variances of these variables indicate the ov erall uncertainty about that source. Howe ver this model also introduces extra symmetries that can cause dif ficulties in inference. The second modification is the switching model presented in 6c. In this model, we hav e a single switching variable z which follows a categorical distribution that is defined ov er the range of the sources. This categorical distribution is parametrised by the probability vector θ , which follo ws a uniform Dirichlet distribution. y f + ˜ s BPM w x N D C S (a) y t + f × ˜ s BPM β ( 0 , 1 ) w x N D C S (b) y t Switch ˜ s BPM z Cat θ Dir w x N D C S (c) Figure 6: Bayesian multi-class fusion classification models: (a) unweighted additi ve version; (b) weighted additi ve version; and (c) fused switching model. S : Number of sources. See text for details. 13 4.4. Stack ed classifiers The models described thus far have all been designed to tackle fusion of multiple sources to achie ve a single multi-class classification task. Howe ver , in the SPHERE challenge setting, there are in fact two classification tasks: firstly , there is the classification of location, based on the fusion of RSSI values from the wearable device along with the stationary PIR sensors. Secondly , there is the classification of the acti vity being performed, based on the fusion of the wearable accelerometer readings and the video bounding boxes. It is a reasonable hypothesis that certain acti vities are much more likely to occur in some locations than others: hence, we propose another form of fusion, to train acti vity recognition models for each location use the location prediction model to switch ov er these models. This could of course be done in a two stage process, where the switch could take the a weighted combination of the predictions from the location predictions to produce a fused prediction (again this can form a strong baseline). Ho we ver we also surmise that there may be an advantage to be gained by learning both stages in a single model, since the activity recognition models could inform the location prediction as well. This model is depicted in Figure 7. Note that the location predictions y L no w act as a probabilistic switching variable ov er the activity BPMs, of which there is one per location. For simplicity , we ha ve omitted the fusion parts of this model, b ut note that we can incorporate any of the 3 kinds of fusion found in Figure 6. y L ˜ s BPM w x y A ˜ s A BPM w A x A N D L D A L A Figure 7: Stacked classification model for simultaneous location prediction and acti vity recogni- tion. L : Number of locations; A : Number of activities N : Number of examples; D L : Number of location features. D A : Number of activity features. See text for details. 14 5. Methodology 5.1. Infer ence The Belief Propagation (BP) (or sum-product) algorithm computes mar ginal distrib utions o ver subsets of variables by iterativ ely passing messages between v ariables and factors within a giv en graphical model, ensuring consistency of the obtained mar ginals at con vergence. Unfortunately , for all but trivial models, exact inference using BP is intractable. In this work, we employ two separate inference algorithms, both of which are efficient deterministic approximations based on message-passing ov er the factor graphs we ha ve seen before. Expectation Propagation (EP) [17] introduces an approximation in the case when the messages from factors to variables do not hav e a simple parametric form, by projecting the exact marginal onto a member of some class of kno wn parametric distributions, typically in the exponential fam- ily , e.g. the set of Gaussian or Beta distrib utions. VMP applies v ariational inference to Bayesian graphical models [24]. VMP proceeds by send- ing messages between nodes in the network and updating posterior beliefs using local operations at each node. Each such update increases a lo wer bound on the log e vidence at that node, (unless already at a local maximum). VMP can be applied to a very general class of conjug ate-exponential models because it uses a factorised variational approximation, and by introducing additional v ari- ational parameters, VMP can be applied to models containing non-conjugate distrib utions. EP and VMP has a major advantage ov er sampling methods in this setting, which is that it is relati vely easy to compute model evidence (see Equation ( ?? ) in belo w). In the case of VMP, this is directly a vailable from the lower bound. The e vidence computations can be used both for model comparison and for detection of con vergence. 5.2. Model Comparison W e would also like perform Bayesian model comparison, in which we marginalise ov er the parameters for the type of model being used, with the remaining variable being the identity of the model itself. The resulting marginalised likelihood, known as the model evidence, is the probability of the data giv en the model type, not assuming any particular model parameters. Using D for data, θ to denote model parameters, H as the hypothesis, the marginal likelihood for the model H is p ( D | H ) = Z p ( D | θ , H ) p ( θ | H ) d θ (3) This quantity can then be used to compute the Bayes factor [11], which is the posterior odds ratio for a model H 1 against another model H 2 , p ( H 1 | D ) p ( H 2 | D ) = p ( H 1 ) p ( D | H 1 ) p ( H 2 ) p ( D | H 2 ) . (4) 5.3. Batch and Online Learning There may be occasions when the full model will not fit in memory at once (either at train time or prediction time), as is the case for the SPHERE challenge dataset when performing inference 15 on a standard desktop computer . In this case, there are two options: batched inference and online learning. Infer .NET pro vides a Shar edV ariable class, supports sharing between models of dif ferent structures and also across multiple data batches, and makes it easy to implement batched inference. Online learning is performed using the standard assumed-density filtering method of [18], which in the case of the weight v ariables which are marginally Gaussian distributed, simply equates to setting the priors to be the poteriors from the pre vious round of inference. 5.4. Data pr e-pr ocessing W e further perform standardisation (whitening) for each feature within each source indepen- dently . Whilst this is not strictly necessary for the heavy-tailed models, it will not negati vely impact on their performance so is done for all models to ensure consistency . W e further add a constant bias feature (equal to 1) per source. W e also augment the feature space by adding polynomial degree 2 interaction features for each source, meaning that we in effect performing polynomial regression. This is equiv alent to the implicit feature space of a degree 2 polynomial kernel in kernel methods, which intuitiv ely , means that both features and pairwise combinations of features are taken into account. When the input features are binary-valued, then the features correspond to logical conjunctions of input features. Note that this method roughly doubles the number of variables in the model, b ut in this case the feature spaces are of relati vely lo w dimension. 6. Discussion Prediction performance is the one clear motiv ation for the use of models for fusion. Ho wev er , e ven if prediction performance is not improved (or degraded in non-significant ways) it can provide other benefits. These include extra interpretability of the models by looking at ho w the modalities are used by the models, and potentially robustness to missing modalities, since the models can be encouraged to use all of the modalities ev en when they provide less information for predictions. It might be possible to achieve such rob ustness with a simple BPM through re-weighting features on the basis of the posteriors from training, but it would clearly be more satisfying if this could achie ved only by changing mixing coef ficients to get the same effect. 6.1. Early versus late fusion Generally speaking, the literature describes early and late fusion strategies [20]. Early fusion is a usually described as a scheme that integrates unimodal features before learning concepts. This would include strate gies such as concatenating feature spaces and (probabilistic) MKL [1, 5]. Late fusion is a scheme that first reduces unimodal features to separately inferred concept scores ( e.g . through independent classifiers), after which these scores are integrated to learn concepts, typically using a further classification algorithm. [20] showed empirically that late fusion tended to giv e slightly better performance for most concepts, but for those concepts where early fusion performs better the dif ference was more significant. The methods described in this paper fit more in line with the late fusion scheme under this definition, since the fusion is occurring at the score lev el. Ho wev er the framew ork laid out here is more principled, since there is no recourse to two sets of classification algorithms and the heuristic manipulations that they entail. 16 6.2. T ransfer Learning As shown in [10], it is possible to extend the multi-class BPM [12] to the transfer learning setting by adding an additional layer of hierarchy to the model. T o deal with the transfer of learning between indi viduals (residents), we would ha ve an e xtra plate around the indi viduals that are present in the training set ( R ), who form the community , allo wing us to learning shared weights for the community . T o apply our learnt community weight posteriors to a new indi vidual we can use the same model configured for a single individual with the priors over weight mean and weight precision replaced by the Gaussian and Gamma posteriors learnt from the individuals in the training set respecti vely . This model is able to make predictions e ven when we hav e not seen any data for the new individual, b ut it is also possible to do online training as we recei ve labelled data for the indi vidual. By doing so, we can smoothly e volve from making generic predictions that may apply to any indi vidual to making personalised predictions specific to the ne w individual. Note that as stated this only applies to the version of the model without hea vy-tailed priors, but can be extended to this setting as well. Note that it is also possible to separate the community priors into groups ( e.g. by demographics or medical condition) by ha ving hyper -priors for each group, a separate indicator v ariable indicat- ing group membership, acting as a gating v ariable to select the appropriate hyper-priors. The separate transfer learning problem from house-to-house is achiev ed through the method of introducing meta-features of [19], and then the feature space is automatically mapped from the source domain to the target domain. In order to apply this to the models discussed in section 4, we would assume that the features x hav e already been mapped to these meta-features, and that similarly for the personalisation phase the mapping has already taken place. 7. Conclusions There is a widely-accepted need to revise current forms of health-care provision, with par- ticular interest in sensing systems in the home. Giv en a multiple-modality sensor platform with heterogeneous network connectivity , as is under dev elopment in the Sensor Platform for HEalth- care in Residential En vironment (SPHERE) Interdisciplinary Research Collaboration (IRC), we face specific challenges relating to the fusion of the heterogeneous sensor modalities. W e further will require the transfer of learnt models to a deployment context that may dif fer from the training context. W e introduce Bayesian models for sensor fusion, which aim to address the challenges of fusion of heterogeneous sensor modalities.Using this approach we are able to identify the modalities that hav e most utility for each particular acti vity , and simultaneously identify which features within that acti vity are most relev ant for a given acti vity . W e further show ho w the two separate tasks of location prediction and activity recognition can be fused into a single model, which allo ws for simultaneous learning an prediction for both tasks. W e analyse the performance of this model on data collected in the SPHERE house, and show its utility . W e also compare against some benchmark models which do not hav e the full structure, and sho w how the proposed model compares f av ourably to these methods. 17 Acknowledg ements This work was performed under the SPHERE IRC funded by the UK Engineering and Physical Sciences Research Council (EPSRC), Grant EP/K031910/1. The project is acti vely working to- wards releasing high-quality data sets to encourage community participation in tackling the issues outlined here. References [1] Bach, F . R., Lanckriet, G. R., Jordan, M. I., 2004. Multiple k ernel learning, conic duality , and the smo algorithm. In: Proceedings of the twenty-first international conference on Machine learning. A CM, p. 6. [2] Bishop, C., 2013. Model-based machine learning. Phil Trans R Soc A 371. [3] Bishop, C. M., et al., 2006. Pattern recognition and machine learning. V ol. 4. springer New Y ork. [4] Brier , G. W ., 1950. V erification of forecasts expressed in terms of probability . Monthly weather revie w 78 (1), 1–3. [5] Damoulas, T ., Girolami, M. A., 2008. Probabilistic multi-class multi-kernel learning: on protein fold recognition and remote homology detection. Bioinformatics 24 (10), 1264–1270. [6] Diethe, T ., Girolami, M., 2013. Online learning with (multiple) kernels: A revie w . Neural Computation 25, 567–625. [7] Diethe, T ., Hardoon, D. R., Shawe-T aylor , J., 2008. Multivie w Fisher discriminant analysis. In: NIPS 2008 workshop “Learning from Multiple Sources”. [8] Diethe, T ., Hardoon, D. R., Shawe-T aylor , J., 2010. Constructing nonlinear discriminants from multiple data views. In: ECML/PKDD. V ol. 1. pp. 328–343. [9] Diethe, T ., T womey , N., Flach, P ., 2014. SPHERE: A sensor platform for healthcare in a residential environment. In: Proceedings of Large-scale Online Learning and Decision Making W orkshop. [10] Diethe, T ., T womey , N., Flach, P ., 2016. Activ e transfer learning for activity recognition. In: European Sympo- sium on Artificial Neural Networks, Computational Intelligence and Machine Learning. [11] Goodman, S. N., 1999. T ow ard evidence-based medical statistics. 2: The Bayes factor . Annals of internal medicine 130 (12), 1005–1013. [12] Herbrich, R., Graepel, T ., Campbell, C., January 2001. Bayes point machines. Journal of Machine Learning Research 1, 245–279. [13] Klein, L. A., 2004. Sensor and data fusion: a tool for information assessment and decision making. V ol. 324. Spie Press Bellingham, W A. [14] Kschischang, F . R., Frey , B. J., Loeliger , H.-A., 2001. Factor graphs and the sum-product algorithm. IEEE T ransactions on information theory 47 (2), 498–519. [15] Liu, H., Motoda, H., 1998. Feature extraction, construction and selection: A data mining perspectiv e. Springer . [16] May , B. C., K orda, N., Lee, A., Leslie, D. S., Jun. 2012. Optimistic bayesian sampling in contextual-bandit problems. J. Mach. Learn. Res. 13, 2069–2106. [17] Minka, T . P ., 2001. Expectation propagation for approximate Bayesian inference. In: Proceedings of the Sev en- teenth conference on Uncertainty in artificial intelligence. Morgan Kaufmann Publishers Inc., pp. 362–369. [18] Opper , M., 1998. A Bayesian approach to on-line learning. In: Saad, D. (Ed.), On-line Learning in Neural Networks. Cambridge Uni versity Press, Ne w Y ork, NY , USA, pp. 363–378. [19] Rashidi, P ., Cook, D. J., Jun. 2011. Activity knowledge transfer in smart en vironments. Perv asiv e Mob . Comput. 7 (3), 331–343. [20] Snoek, C. G., W orring, M., Smeulders, A. W ., 2005. Early versus late fusion in semantic video analysis. In: Proceedings of the 13th annual A CM international conference on Multimedia. A CM, pp. 399–402. [21] T womey , N., Diethe, T ., Flach, P ., 2016. On the need for structure modelling in sequence prediction. Machine Learning 104 (2), 291–314. URL http://dx.doi.org/10.1007/s10994- 016- 5571- y [22] T womey , N., Diethe, T ., Kull, M., Song, H., Camplani, M., Hannuna, S., Fafoutis, X., Zhu, N., W oznowski, P ., 18 Flach, P ., Craddock, I., 2016. The SPHERE challenge: Activity recognition with multimodal sensor data. arXiv preprint [23] W inn, J., Bishop, C. M., Diethe, T ., 2015. Model-Based Machine Learning. Microsoft Research Cambridge. URL http://www.mbmlbook.com [24] W inn, J. M., Bishop, C. M., 2005. V ariational message passing. In: Journal of Machine Learning Research. pp. 661–694. [25] W oznowski, P ., Burro ws, A., Camplani, M., Diethe, T ., Fafoutis, X., Hall, J., Hannuna, S., Kozlo wski, M., T womey , N., T an, B., Zhu, N., Elsts, A., V afeas, A., Mirmehdi, M., Burghardt, T ., Damen, D., Paiement, A., T ao, L., Flach, P ., Oikonomou, G., Piechocki, R., Craddock., I., 2016. SPHERE: A Sensor Platform for HEalthcare in a Residential En vironment. In: Angelakis, V ., T ragos, E., P ¨ ohls, H., Kapo vits, A., Bassi, A. (Eds.), Designing and Dev eloping and and Facilitating Smart Cities: Urban Design to IoT Solutions. Springer . [26] W oznowski, P ., Fafoutis, X., Song, T ., Hannuna, S., Camplani, M., T ao, L., P aiement, A., Mellios, E., Haghighi, M., Zhu, N., et al., 2015. A multi-modal sensor infrastructure for healthcare in a residential environment. In: Communication W orkshop (ICCW), 2015 IEEE International Conference on. IEEE, pp. 271–277. [27] Zhu, N., Diethe, T ., Camplani, M., T ao, L., Burrows, A., T womey , N., Kaleshi, D., Mirmehdi, M., Flach, P ., Craddock, I., 2015. Bridging e-health and the internet of things: The SPHERE project. Intelligent Systems, IEEE 30 (4), 39–46. 19

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment