Gaussian-binary Restricted Boltzmann Machines on Modeling Natural Image Statistics

We present a theoretical analysis of Gaussian-binary restricted Boltzmann machines (GRBMs) from the perspective of density models. The key aspect of this analysis is to show that GRBMs can be formulated as a constrained mixture of Gaussians, which gives a much better insight into the model’s capabilities and limitations. We show that GRBMs are capable of learning meaningful features both in a two-dimensional blind source separation task and in modeling natural images. Further, we show that reported difficulties in training GRBMs are due to the failure of the training algorithm rather than the model itself. Based on our analysis we are able to propose several training recipes, which allowed successful and fast training in our experiments. Finally, we discuss the relationship of GRBMs to several modifications that have been proposed to improve the model.

💡 Research Summary

This paper provides a thorough theoretical and empirical investigation of Gaussian‑binary Restricted Boltzmann Machines (GRBMs) as density estimators for natural image statistics. The authors first reformulate the GRBM energy function to show that, conditioned on a binary hidden vector h, the visible units follow a multivariate Gaussian distribution with mean b + Wh and isotropic variance σ². Consequently, the marginal distribution over visible units can be written as a mixture of 2ᴺ Gaussians, each corresponding to a particular hidden configuration. However, unlike an unrestricted Gaussian mixture model, the component means are not independent; they are constrained to be linear combinations of a small set of “anchor” and “first‑order” components (the bias vector b and the weight vectors w₁,…,w_N). Higher‑order components are therefore deterministic functions of these bases, which limits the expressive power of GRBMs but still allows them to capture the low‑dimensional linear structure typical of natural images.

The paper then addresses the widely reported difficulty of training GRBMs. By dissecting the contrastive divergence (CD‑k) learning rule, the authors identify several practical pitfalls: poor initialization of the visible bias b and variance σ, overly aggressive sparsity that drives hidden activations to zero, and static learning‑rate schedules that fail to accommodate the rapidly changing energy landscape. To remedy these issues they propose a concrete training recipe: (1) initialize b to the data mean and σ to a small fraction of the data standard deviation; (2) start with a large learning rate and a relatively high CD‑k (e.g., k = 10) to explore the parameter space, then decay the learning rate exponentially; (3) apply both L2 weight decay and a modest sparsity penalty to keep hidden activation probabilities in a useful range (≈0.1–0.3); (4) periodically orthogonalize the weight matrix (≈ WᵀW ≈ I) to encourage decorrelated filters, similar to ICA. With these adjustments the authors achieve stable and fast convergence on both synthetic and real‑world data.

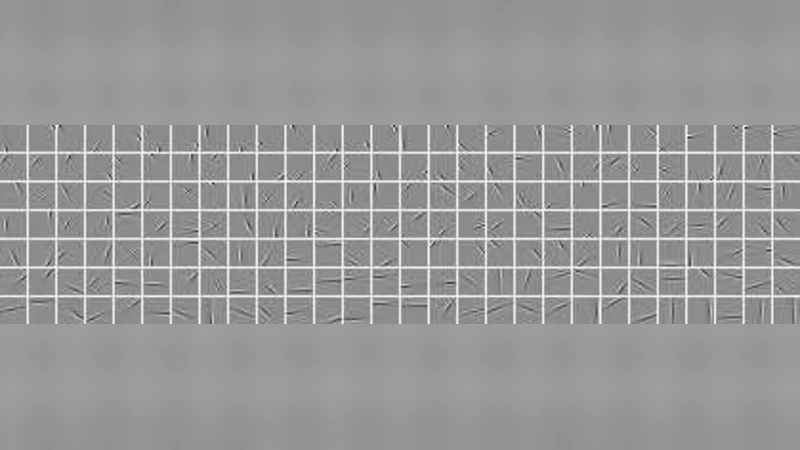

Empirical validation is performed on two fronts. First, a two‑dimensional blind‑source‑separation (BSS) task uses a mixture of independent Laplacian sources. After whitening, GRBMs are trained 200 times; about 55 % of the runs recover the true independent components, and the average log‑likelihood is only slightly worse than that of Independent Component Analysis (ICA) but substantially better than a simple isotropic Gaussian baseline. Visualizations of the learned weight vectors confirm that GRBMs capture the same edge‑like structures as ICA, albeit without the strict orthogonality constraint. Second, the authors train GRBMs on 8 × 8 natural‑image patches. Using the proposed recipe, log‑likelihood stabilizes within 50 epochs, and the learned filters resemble Gabor‑like edge detectors and color‑frequency patterns commonly observed in early visual cortex models. The experiments demonstrate that, when properly trained, GRBMs can model the statistical regularities of natural images effectively.

Finally, the paper situates GRBMs among several proposed modifications—sparse RBMs, scaled‑Gaussian noise models, deep Boltzmann machines, etc.—and argues that many of these extensions merely relax the mixture‑of‑Gaussians constraints (e.g., by allowing non‑isotropic covariances or increasing the number of components) without addressing the core training shortcomings. The authors conclude that the apparent limitations of GRBMs stem from suboptimal learning procedures rather than intrinsic model deficiencies. Their analysis not only clarifies the theoretical relationship between GRBMs, product‑of‑experts models, and constrained Gaussian mixtures, but also provides practical guidance that can be directly applied to future deep generative‑model research.

Comments & Academic Discussion

Loading comments...

Leave a Comment