Learning Criticality in an Embodied Boltzmann Machine

Many biological and cognitive systems do not operate deep within one or another regime of activity. Instead, they exploit critical surfaces poised at transitions in their parameter space. The pervasiveness of criticality in natural systems suggests that there may be general principles inducing this behaviour. However, there is a lack of conceptual models explaining how embodied agents propel themselves towards these critical points. In this paper, we present a learning model that drives an embodied Boltzmann Machine towards critical behaviour by maximizing the heat capacity of the network. We test and corroborate the model by implementing an embodied agent in the mountain car benchmark, controlled by a Boltzmann Machine that adjusts its weights according to the model. We find that the neural controller reaches a point of criticality, which coincides with a transition in the agent's behaviour between two regimes and maximizes the synergistic information between its sensors and the hidden and motor neurons. Finally, we discuss the potential of our learning model to study the contribution of criticality to the behaviour of embodied living systems in scenarios not necessarily constrained by the biological restrictions of the examples of criticality we find in nature.

💡 Research Summary

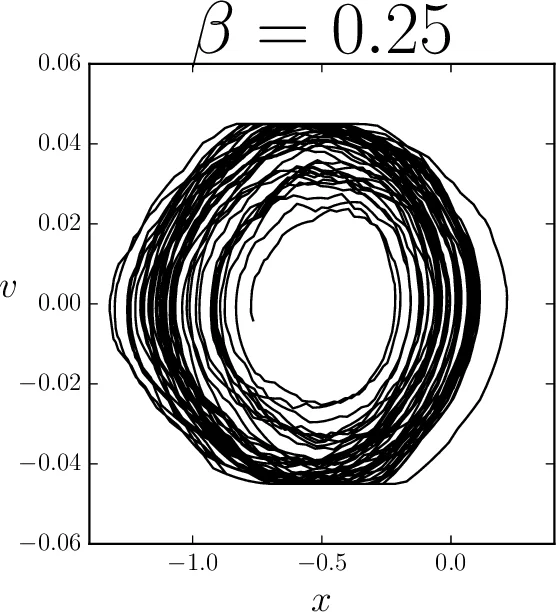

The paper tackles the pervasive yet poorly understood phenomenon of criticality—states poised at phase transitions—in biological and cognitive systems. It proposes a principled, mechanistic learning rule that drives an embodied neural controller toward such critical points by maximizing the system’s heat capacity, a thermodynamic quantity that diverges at criticality. The authors formulate the controller as a Boltzmann Machine (BM) with binary units, bias terms h_i and symmetric couplings J_ij, whose joint distribution follows the maximum‑entropy form P(s)=Z⁻¹exp(−βE(s)). Because the global heat capacity depends on the full state distribution, they introduce a local proxy: the path‑entropy of each neuron’s transition probabilities. From this they derive an explicit expression for the neuron‑wise heat capacity C_i and its gradients with respect to h_i and J_ij. The resulting gradient‑ascent update (augmented with L2 regularization) can be computed using only locally available quantities (the current state of neighboring units), making it suitable for online learning in an embodied setting.
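To make the role of heat capacity concrete, the following is a minimal sketch, not the authors' learning rule. It computes the global heat capacity of a very small Boltzmann Machine by brute-force enumeration, using the standard identity C = β²·Var(E) (with k_B = 1). Several choices here are assumptions for illustration: units take values in {−1, +1}, the energy is E(s) = −Σ_i h_i s_i − Σ_{i<j} J_ij s_i s_j, and the function name `heat_capacity` is hypothetical. The paper's neuron-wise quantity C_i, derived from path entropy, is a local proxy and is not reproduced here.

```python
import itertools
import numpy as np


def heat_capacity(h, J, beta=1.0):
    """Exact heat capacity C = beta^2 * Var(E) of a small Boltzmann Machine.

    Assumes units in {-1, +1} and a symmetric J with zero diagonal.
    Enumerates all 2^n states, so it is only practical for small n.
    """
    n = len(h)
    states = np.array(list(itertools.product([-1, 1], repeat=n)), dtype=float)
    # The quadratic form counts each pair (i, j) twice, hence the factor 0.5.
    energies = -states @ h - 0.5 * np.einsum("ki,ij,kj->k", states, J, states)
    # Boltzmann weights, shifted by the maximum for numerical stability.
    log_w = -beta * energies
    log_w -= log_w.max()
    p = np.exp(log_w)
    p /= p.sum()
    mean_e = p @ energies
    var_e = p @ (energies - mean_e) ** 2
    return beta**2 * var_e


# Illustrative scan: locate the inverse temperature where C peaks
# for one random symmetric coupling matrix (hypothetical parameters).
rng = np.random.default_rng(0)
n = 6
A = rng.normal(size=(n, n))
J = np.triu(A, 1)
J = J + J.T                      # symmetric, zero diagonal
h = np.zeros(n)
betas = np.linspace(0.05, 3.0, 60)
caps = np.array([heat_capacity(h, J, b) for b in betas])
beta_peak = betas[np.argmax(caps)]
```

In a finite network the heat capacity shows a peak rather than a true divergence, so a scan like this only indicates where the system is closest to critical behaviour. The summary's learning rule instead ascends the gradient of a locally computable proxy, which avoids the exponential enumeration used in this sketch.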

To test the theory, the authors embed the BM in the classic Mountain Car reinforcement‑learning benchmark (implemented via OpenAI Gym). The controller receives six binary sensor inputs encoding the car’s horizontal and vertical accelerations (each discretized into three bits) and contains six internal units, two of which are directly linked to the motor output (action a∈{−1,0,1}). Ten agents are initialized with small random weights (h, J ∈
