Pose-Selective Max Pooling for Measuring Similarity

In this paper, we deal with two challenges for measuring the similarity of the subject identities in practical video-based face recognition - the variation of the head pose in uncontrolled environments and the computational expense of processing vide…

Authors: Xiang Xiang, Trac D. Tran

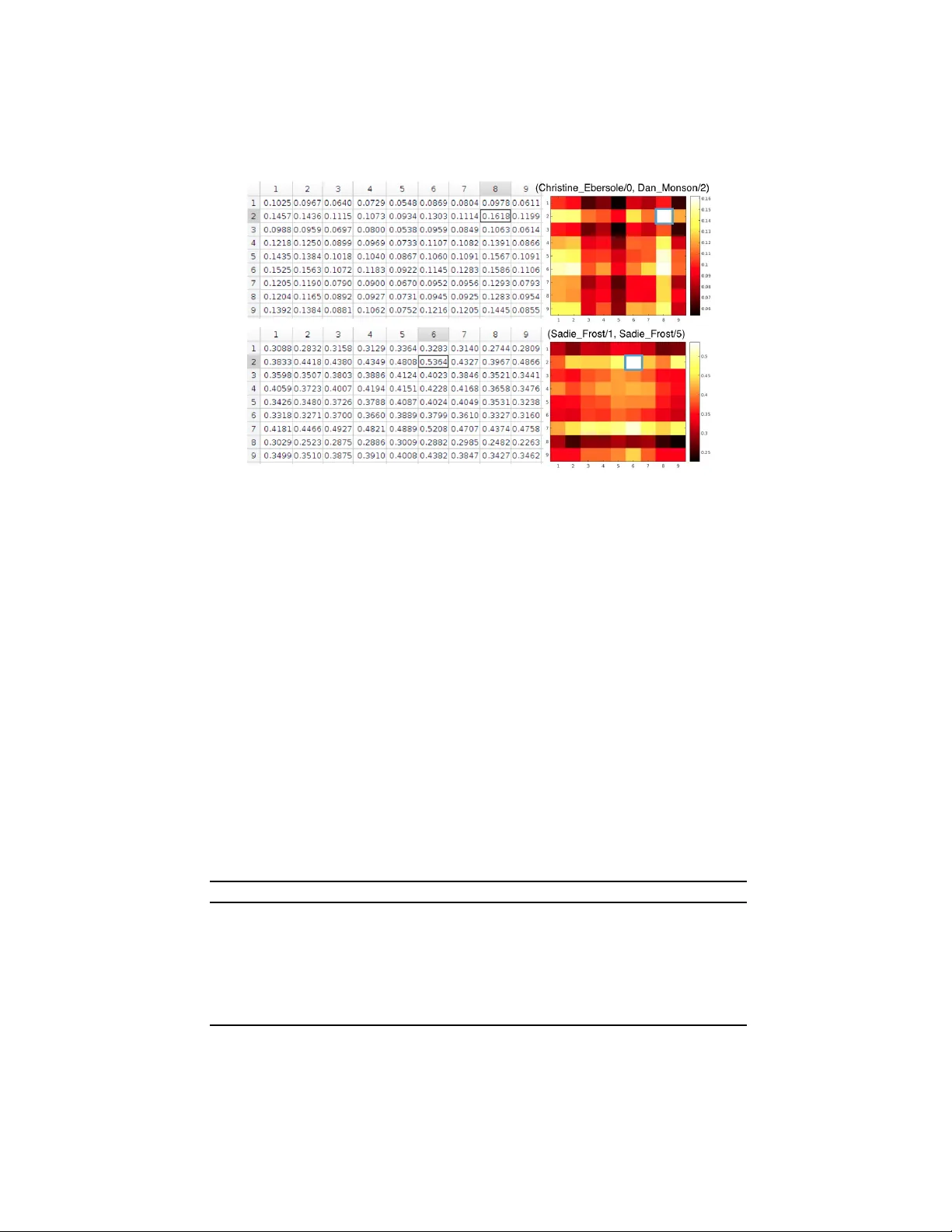

Xiang Xiang (1) and Trac D. Tran (2)
(1) Dept. of Computer Science, (2) Dept. of Electrical & Computer Engineering
Johns Hopkins University, 3400 N. Charles St, Baltimore, MD 21218, USA
xxiang@cs.jhu.edu

Abstract. In this paper, we deal with two challenges for measuring the similarity of the subject identities in practical video-based face recognition - the variation of the head pose in uncontrolled environments and the computational expense of processing videos. Since the frame-wise feature mean is unable to characterize the pose diversity among frames, we define and preserve the overall pose diversity and closeness in a video. Then, identity will be the only source of variation across videos since the pose varies even within a single video. Instead of simply using all the frames, we select those faces whose pose point is closest to the centroid of the K-means cluster containing that pose point. Then, we represent a video as a bag of frame-wise deep face features while the number of features has been reduced from hundreds to K. Since the video representation can well represent the identity, we now measure the subject similarity between two videos as the max correlation among all possible pairs in the two bags of features. On the official 5,000 video-pairs of the YouTube Face dataset for face verification, our algorithm achieves a comparable performance with VGG-face that averages over deep features of all frames. Other vision tasks can also benefit from the generic idea of employing geometric cues to improve the descriptiveness of deep features.

1 Introduction

In this paper, we are interested in measuring the similarity of one source of variation among videos, the subject identity in particular. The motivation of this work is as follows.
Given a face video visually affected by confounding factors such as the identity and the head pose, we compare it against another video by hopefully only measuring the similarity of the subject identity, even if the frame-level feature characterizes mixed information. Indeed, deep features from Convolutional Neural Networks (CNN) trained on face images with identity labels are generally not robust to the variation of the head pose, which refers to the face's relative orientation with respect to the camera and is the primary challenge in uncontrolled environments. Therefore, the emphasis of this paper is not the deep learning of frame-level features. Instead, we care about how to improve the descriptiveness of the video-level representation, which rules out confusing factors (e.g., pose) and induces the similarity of the factor of interest (e.g., identity).

Fig. 1. Example of the chosen key faces. The top row shows the first 10 frames of a 49-frame YTF sequence of Woody Allen, who sometimes looks right and down; most of the time his face is slightly slanted. The bottom row shows 9 frames selected according to the variation of 3D poses. Disclaimer: the source owning this YouTube video allows republishing the face images.

If we treat the frame-level feature vector of a video as a random vector, we may assume that the highly-correlated feature vectors are identically distributed. When the task is to represent the whole image sequence instead of modeling the temporal dynamics such as the state transition, we may use the sample mean and variance to approximate the true distribution, which is implicitly assumed to be a normal distribution. While this assumption might hold given natural image statistics, it can be untrue for a particular video. Even if the features are Gaussian random vectors, taking the mean makes sense only if the frame-level feature characterizes just the identity.
That is because, by construction, there is no variation of the identity within a video. However, even CNN face features normally still contain both the identity and the pose cues. Surely, the feature mean will still characterize both the identity and the pose. What is even worse, there is no way to decouple the two cues once we take the mean. Instead, if we want the video feature to represent only the subject identity, we had better preserve the overall pose diversity that very likely exists among frames. Disregarding minor factors, the identity will be the only source of variation across videos since pose varies even within a single video.

The proposed K frame selection algorithm retains frames that preserve the pose diversity. Based on the selection, we further design an algorithm to compute the identity similarity between two sets of deep face features by pooling the max correlation.

Instead of pooling from all the frames, the K frame selection algorithm first quantizes the poses via K-means and then selects poses using their distances to the K-means centroids. It reduces the number of features from tens or hundreds to K while still preserving the overall pose diversity, which makes it possible to process a video stream in real time. Fig. 1 shows an example sequence in the YouTube Face (YTF) dataset [16]. This algorithm also serves as a way to sample the video frames (to K images).

Once the key frames are chosen, we pool a single similarity score between two videos from many pairs of images. The metric used to pool from many correlations is normally the mean or the max. Taking the max is essentially finding the nearest neighbor, which is a typical metric for measuring the similarity or closeness of two point sets. In our work, the max correlation between two bags of frame-wise CNN features is employed to measure how likely two videos represent the same person.
In the end, a video is represented by a single frame's feature which induces nearest neighbors between two sets of selected frames if we treat each frame as a data point. This is essentially a pairwise max pooling process. On the official 5,000 video-pairs of the YTF dataset [16], our algorithm achieves a comparable performance with the state of the art that averages over deep features of all frames.

Fig. 2. Analysis of rank-1 identification under varying poses for Google's FaceNet [12] on the recently established MegaFace 1 million face benchmark [8]. Yaw is examined as it is the primary variation, such as looking left/right inducing a profile. The colors represent identification accuracy going from 0 (blue, none of the true pairs were matched) to 1 (red, all possible combinations of probe and gallery were matched). White color indicates combinations of poses that did not exist in the test set. (a) 1K distractors (people in the gallery yet not in the probe). (b) 1M distractors. This figure is adapted from MegaFace's FaceScrub results.

2 Related Works

The cosine similarity and the correlation are both well-defined metrics for measuring the similarity of two images. A simple adaptation to videos is to randomly sample a frame from each video. However, the correlation between two random image samples might characterize cues other than identity (say, the pose similarity). There are existing works on measuring the similarity of two videos using manifold-to-manifold distance [6]. However, the straightforward extension of image-based correlation, such as temporal max or mean pooling [11], is preferred for its simplicity. The impact of different spatial pooling methods in CNN, such as mean pooling, max pooling and L-2 pooling, has been discussed in the literature [3,2]. However, pooling over the time domain is not as straightforward as spatial pooling.
The frame-wise feature mean is a straightforward video-level representation and yet not a robust statistic. Despite that, temporal mean pooling is the conventional way to represent a video, such as average pooling for video-level representation [1], mean encoding for face recognition [4], feature averaging for action recognition [5] and mean pooling for video captioning [15].

Measuring the similarity of subject identity is useful for face recognition, both for face verification and for face identification. Face verification is to decide whether two modalities containing faces represent the same person or two different people and thus is important for access control or re-identification tasks. Face identification involves one-to-many similarity, namely a ranked list of one-to-one similarities, and thus is important for watch-list surveillance or forensic search tasks. In identification, we gather information about a specific set of individuals to be recognized (i.e., the gallery). At test time, a new image or group of images is presented (i.e., the probe).

In this deep learning era, face verification on a number of benchmarks such as the Labeled Faces in the Wild (LFW) dataset [9] has been well solved by DeepFace [14], DeepID [13], FaceNet [12] and so on. The Visual Geometry Group at the University of Oxford released their deep face model called VGG-Face Descriptor [10], which also gives a comparable performance on LFW. However, in the real world, pictures are often taken in uncontrolled environments (the so-called in-the-wild versus in-the-lab setting). Considering the number of image parameters that are allowed to vary simultaneously, it is logical to consider a divide-and-conquer approach - studying each source of variation separately and keeping all other variations constant in a controlled experiment. Such a separation of variables has been widely used in Physics and Biology for multivariate problems.
In this data-driven machine learning era, it seems fine to retain all variations in realistic data, given the idea of letting the deep neural networks learn the variations existing in the enormous amount of data. For example, FaceNet [12], trained using a private dataset of over 200M subjects, is indeed robust to poses, as illustrated in Fig. 2. However, the CNN features from conventional networks such as DeepFace [14] and VGG-Face [10] are normally not. Moreover, the unconstrained data with fused variations may contain biases towards factors other than identity, since the feature might characterize mixed information of identity and low-level factors such as pose, illumination, expression, motion and background. For instance, pose similarities normally outweigh subject identity similarities, leading to matching based on pose rather than identity. As a result, it is critical to decouple pose and identity. If the facial expression confuses the identity as well, it is also necessary to decouple them. In this paper, the facial expression is not considered as it is minor compared with pose. Similarly, if we want to measure the similarity of the facial expression, we need to decouple it from the identity. For example, in [?] for facial expression recognition, one class of training data is formed by face videos with the same expression yet across different people.

Moreover, there are many different application scenarios for face verification. For Web-based applications, verification is conducted by comparing images to images. The images may be of the same person but taken at different times or under different conditions. Other than the identity, high-level factors such as age, gender, ethnicity and so on are not considered in this paper as they remain the same in a video. For online face verification, a live video rather than still images is used.
More specifically, the existing video-based verification solutions assume that gallery face images are taken under controlled conditions [6]. However, the gallery is often built under uncontrolled conditions. In practice, a camera could take a picture as well as capture a video. While a video carries more information describing identities than an image, using a fully live video stream requires expensive computational resources. Normally we need video sampling or a temporal sliding window.

3 Pose Selection by Diversity-Preserving K-Means

In this section, we explain our treatment particularly for real-world images with various head poses such as images in YTF. Many existing methods such as [?] make a certain assumption which holds only when faces are properly aligned.

Fig. 3. An example of 3-D pose space, shown for the 49-frame Woody Allen sequence in YTF. The three axes represent rotation angles of yaw (looking left or right), pitch (looking up or down) and roll (twisting left or right so that the face is slanted), respectively. The primary variation is the yaw, such as turning left/right inducing a profile. A pattern exists in the pose distribution: there are obviously two clusters for this sequence, so in the extreme case, to reduce computation, we can set K = 2.

By construction (say, face tracking by detection), each video contains a single subject. Each video is formalised as a set $V = \{x_1, x_2, ..., x_m\}$ of frames where each frame $x_i$ contains a face. Given the homography $H$ and correspondence of facial landmarks, it is entirely possible to estimate the 3D rotation angles (yaw, pitch and roll) for each 2D face frame. Concretely, some head pose estimator $p(V)$ gives a set $P = \{p_1, p_2, ..., p_m\}$ where $p_i$ is a 3D rotation-angle vector $(\alpha_{yaw}, \alpha_{pitch}, \alpha_{roll})$. After pose estimation, we would like to select key frames with significant head poses.
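As a small illustration of what a pose vector $p_i$ contains, the sketch below converts a 3×3 rotation matrix into (yaw, pitch, roll) angles and back. It assumes a Z-Y-X Euler convention, R = Rz(roll) · Ry(yaw) · Rx(pitch), with yaw about the vertical axis; the paper does not state which convention it uses, and the function names `euler_to_rotation` and `rotation_to_pose` are hypothetical. In a real pipeline the rotation would come from a pose estimator such as the OpenCV solvePnP routine mentioned in Sec. 5.1.

```python
import math


def euler_to_rotation(yaw, pitch, roll):
    """Build R = Rz(roll) * Ry(yaw) * Rx(pitch); angles in radians.

    The axis convention is an assumption made for this sketch only.
    """
    cy, sy = math.cos(yaw), math.sin(yaw)
    cp, sp = math.cos(pitch), math.sin(pitch)
    cr, sr = math.cos(roll), math.sin(roll)
    return [
        [cr * cy, cr * sy * sp - sr * cp, cr * sy * cp + sr * sp],
        [sr * cy, sr * sy * sp + cr * cp, sr * sy * cp - cr * sp],
        [-sy, cy * sp, cy * cp],
    ]


def rotation_to_pose(R):
    """Recover (yaw, pitch, roll) in radians from R.

    Valid away from the gimbal-lock case yaw = +/-90 degrees.
    """
    # Clamp guards against tiny numerical overshoot outside [-1, 1].
    yaw = math.asin(max(-1.0, min(1.0, -R[2][0])))
    pitch = math.atan2(R[2][1], R[2][2])
    roll = math.atan2(R[1][0], R[0][0])
    return (yaw, pitch, roll)


# Round trip on one toy pose: 30 deg yaw, -10 deg pitch, 5 deg roll.
true_pose = (math.radians(30.0), math.radians(-10.0), math.radians(5.0))
recovered_pose = rotation_to_pose(euler_to_rotation(*true_pose))
```

Stacking one such triple per frame gives the point set $P$ that the rest of this section clusters.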
Our intuition is to preserve pose diversity while downsampling the video in the time domain. We learn from the FaceNet results in Fig. 2 that face features learned from a deep CNN trained on identity-labelled data can be invariant to head poses as long as the training inputs for a particular identity class include almost all possible poses. That is also true for other minor sources of variation such as illumination, expression, motion and background, among others. Then, identity will be the only source of variation across classes since any factor other than identity varies even within a single class.

Without such huge training data as Google has, we instead hope that the testing inputs for a particular identity class include poses as diverse as possible. A straightforward way is to use the full video, which indeed preserves all possible pose variations in that video, but computing deep features for all the frames is computationally expensive. Taking the representation of a line in a 2D coordinate system as an example, we only need two parameters, such as the intercept and gradient, or any two points on that line. Similarly, our problem now becomes finding a compact pose representation of a testing video, which involves the following two criteria.

First, the pose representation is compact in terms of non-redundancy and closeness. For non-redundancy, we hope to retain as few frames as possible. For pose closeness, we observe from Fig. 3 that certain patterns exist in the head pose distribution - close points tend to cluster together. That observation holds for other sequences as well. As a result, we want to select key frames out of a video by clustering the 3D head poses. The widely-used K-means clustering aims to partition the point set into K subsets so as to minimize the within-cluster Sum of Squared Distances (SSD). If we treat each cluster as a class, we want to minimize the intra-class or within-cluster distance.
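To make the within-cluster SSD concrete, here is a minimal pure-Python sketch of Lloyd-style K-means on 3D pose points. The toy pose values (in degrees), the deterministic initialisation from the first K points and all function names are illustrative assumptions, not the authors' implementation.

```python
def squared_distance(p, q):
    """Squared Euclidean distance between two pose points."""
    return sum((a - b) ** 2 for a, b in zip(p, q))


def mean_point(points):
    """Coordinate-wise mean of a non-empty list of points."""
    return tuple(sum(coords) / len(points) for coords in zip(*points))


def kmeans(poses, k, max_iterations=100):
    """Return (labels, centroids) after Lloyd iterations.

    Centroids start at the first k poses (an assumption of this sketch;
    random restarts are more common in practice).
    """
    centroids = [tuple(p) for p in poses[:k]]
    labels = None
    for _ in range(max_iterations):
        # Assignment step: each pose goes to its nearest centroid.
        new_labels = [
            min(range(k), key=lambda c: squared_distance(p, centroids[c]))
            for p in poses
        ]
        if new_labels == labels:
            break  # assignments stopped changing, so we have converged
        labels = new_labels
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, label in zip(poses, labels) if label == c]
            if members:
                centroids[c] = mean_point(members)
    return labels, centroids


def within_cluster_ssd(poses, labels, centroids):
    """Sum of squared distances from each pose to its own centroid."""
    return sum(squared_distance(p, centroids[l]) for p, l in zip(poses, labels))


# Toy (yaw, pitch, roll) poses forming two visible groups.
poses = [
    (-32.0, 5.0, 1.0), (-30.0, 4.0, 0.0), (-28.0, 6.0, 2.0),
    (20.0, -3.0, 8.0), (22.0, -2.0, 9.0), (18.0, -4.0, 10.0),
]
labels, centroids = kmeans(poses, k=2)
ssd_within = within_cluster_ssd(poses, labels, centroids)
```

On this toy input the two groups separate by yaw, which mirrors the two-cluster pattern described for Fig. 3.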
Second, the pose representation is representative in terms of diversity (i.e., difference, distance). Intuitively, we want to retain the key faces that have poses as different as possible. If we treat each frame's estimated 3D pose as a point, then the approximate polygon formed by the selected points should be as close as possible to the true polygon formed by all the points. We measure the diversity using the SSD between any two selected key points (the SSD within the set formed by centroids if we use them as key points). And we want to maximize such an inter-class or between-cluster distance.

Now, we put all criteria together in a single objective. Given a set $P = \{p_1, p_2, ..., p_m\}$ of pose observations, we aim to partition the $m$ observations into $K$ ($\leq m$) disjoint subsets $S = \{S_1, S_2, ..., S_K\}$ so as to minimize the within-cluster SSD as well as maximize the between-cluster SSD while still minimizing the number of clusters:

$$\min_{K, S} \frac{SSD_{within}}{SSD_{between}} := \sum_{k=1}^{K} \frac{\sum_{i=1}^{m} \| p_i - \mu_k \|^2}{\sum_{j=1, j \neq k}^{K} \| \mu_j - \mu_k \|^2} \tag{1}$$

where $\mu_j$ and $\mu_k$ are the means of the points in $S_j$ and $S_k$, respectively. This objective differs from that of K-means only in considering the between-cluster distance, which makes it somewhat similar to multi-class LDA (Linear Discriminant Analysis). However, it is still essentially K-means. To solve it, we do not really need alternating minimization because K, which has a limited number of choices, is empirically enumerated by cross validation. Once K is fixed, solving Eqn. 1 follows a procedure similar to multi-class LDA, while there is no mixture of classes or clusters because every point is hard-assigned to a single cluster as done in K-means. The subsequent selection of key poses is straightforward (by the distances to K-means centroids).
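The objective and the selection step can be sketched as follows for a clustering that is already given. This is an illustration under stated assumptions: the numerator of Eqn. 1 is read as the SSD of the points assigned to cluster k, the toy poses and labels are made up, K must be at least 2 with no empty cluster, and the function names are hypothetical.

```python
def squared_distance(p, q):
    """Squared Euclidean distance between two pose points."""
    return sum((a - b) ** 2 for a, b in zip(p, q))


def centroid(points):
    """Coordinate-wise mean of a non-empty list of points."""
    return tuple(sum(coords) / len(points) for coords in zip(*points))


def ssd_ratio_objective(poses, labels, k):
    """Sum over clusters of (within-cluster SSD) / (SSD to other centroids).

    Assumes k >= 2 and every cluster is non-empty.
    """
    clusters = [[p for p, l in zip(poses, labels) if l == c] for c in range(k)]
    mus = [centroid(members) for members in clusters]
    total = 0.0
    for c in range(k):
        within = sum(squared_distance(p, mus[c]) for p in clusters[c])
        between = sum(squared_distance(mus[j], mus[c]) for j in range(k) if j != c)
        total += within / between
    return total


def select_key_poses(poses, labels, k):
    """Return the K-sparse 0/1 activation vector (Omega in the text).

    For each cluster, the pose closest to the cluster centroid is activated.
    """
    omega = [0] * len(poses)
    for c in range(k):
        members = [i for i, l in enumerate(labels) if l == c]
        mu = centroid([poses[i] for i in members])
        best = min(members, key=lambda i: squared_distance(poses[i], mu))
        omega[best] = 1
    return omega


# Toy (yaw, pitch, roll) poses in degrees with a hand-made 2-cluster labelling.
poses = [
    (-32.0, 5.0, 1.0), (-30.0, 4.0, 0.0), (-28.0, 6.0, 2.0),
    (20.0, -3.0, 8.0), (22.0, -2.0, 9.0), (18.0, -4.0, 10.0),
]
labels = [0, 0, 0, 1, 1, 1]
objective = ssd_ratio_objective(poses, labels, k=2)
omega = select_key_poses(poses, labels, k=2)
key_frame_indices = [i for i, flag in enumerate(omega) if flag == 1]
```

Because the centroid may be a pseudo pose that no frame actually shows, the selection returns indices of real frames, which is what the frame selection needs. Evaluating `ssd_ratio_objective` for a few candidate values of K is one simple way to compare them.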
The selected key poses form a subset $P_\Omega$ of $P$ where $\Omega$ is an $m$-dimensional $K$-sparse impulse vector of binary values 1/0 indicating whether the index is chosen or not, respectively. The selection of frames follows the index activation vector $\Omega$ as well. Such a selection reduces the number of images required to represent the face from tens or hundreds to K while preserving the pose diversity, which is considered in the formation of clusters.

Now we frontalize the chosen faces, which is called face alignment or pose correction/normalization. All the above operations are summarized in Algorithm 1. Note that not all landmarks can be perfectly aligned. Priority is given to salient ones such as the eye centers and corners, the nose tip, the mouth corners and the chin. Other properties such as symmetry are also preserved. For example, we mirror the detected eye horizontally. However, a profile will not be frontalized.

Algorithm 1: K frame selection.
Input: face video $V = \{x_1, x_2, ..., x_m\}$.
Output: pose-corrected down-sampled face video $V^c_\Omega = \{x^c_{(1)}, x^c_{(2)}, ..., x^c_{(K)}\}$.
(1) Landmark detection: detect facial landmarks per frame in $V$ so that correspondence between frames is known.
(2) Homography estimation: estimate an approximate 3D model (say, homography $H$) from the sequence of faces in $V$ with known correspondence from landmarks.
(3) Pose estimation: compute the rotation angles $p_i$ for each frame using landmark correspondence and obtain a set of sequential head poses $P = \{p_1, p_2, ..., p_m\}$.
(4) Pose quantization: cluster $P$ into $K$ subsets $S_1, S_2, ..., S_K$ by solving Eqn. 1, with estimated pose centroids $\{c_1, ..., c_K\}$ which might be pseudo poses (non-existing poses).
(5) Pose selection: for each cluster, compute the distances from each pose point $p \in S_k$ to the pose centroid $c_k$ and then select the closest pose point to represent the cluster $S_k$. The selected key poses form a subset $P_\Omega$ of $P$ where $\Omega$ is the index activation vector.
(6) Face selection: follow $\Omega$ to select the key frames and form a subset $V_\Omega = \{x_{(1)}, x_{(2)}, ..., x_{(K)}\}$ of $V$ where $V_\Omega \subset V$.
(7) Face alignment: warp each face in $V_\Omega$ according to $H$ so that landmarks are fixed to canonical positions.

4 Pooling Max Correlation for Measuring Similarity

In this section, we explain our max-correlation-guided pooling from a set of deep face features and verify whether the selected key frames are able to represent identity well regardless of pose variation.

After face alignment, some feature descriptor, a function $f(\cdot)$, maps each corrected frame $x^c_{(i)}$ to a $d \times 1$ feature vector $f(x^c_{(i)}) \in \mathbb{R}^d$ with dimensionality $d$ and unit Euclidean norm. Then the video is represented as a bag of normalized frame-wise CNN features $X := \{f_1, f_2, ..., f_K\} := \{f(x^c_{(1)}), f(x^c_{(2)}), ..., f(x^c_{(K)})\}$. We can also arrange the feature vectors column by column to form a matrix $X = [f_1 | f_2 | ... | f_K]$. For example, the VGG-face network [10] has been verified to be able to produce features well representing the identity information. It has 24 layers including several stacked convolution-pooling layers, 2 fully-connected layers and one softmax layer. Since the model was trained for face identification with respect to 2,622 identities, we use the output of the second-last fully-connected layer as the feature descriptor, which returns a 4,096-dim feature vector for each input face.

Given a pair of videos $(V_a, V_b)$ of subjects $a$ and $b$ respectively, we want to measure the similarity between $a$ and $b$.
Since we claim the proposed bag of CNN features can well represent the identity, we instead measure the similarity between two sets of CNN features, $Sim(X_a, X_b)$, which is defined as the max correlation among all possible pairs of CNN features, namely the max element in the correlation matrix (see Fig. 4):

$$Sim(X_a, X_b) := \max_{n_a, n_b} \left( {f^{a}_{n_a}}^{T} \cdot f^{b}_{n_b} \right) = \max \left( X_a^{T} X_b \right)(:) \tag{2}$$

where $n_a = 1, 2, ..., K_a$ and $n_b = 1, 2, ..., K_b$. Notably, the notation $(:)$ indicates all elements in a matrix following the MATLAB convention. Now, instead of comparing $m_a \times m_b$ pairs, with Sec. 3 we only need to compute $K_a \times K_b$ correlations, from which we further pool a single ($1 \times 1$) number as the similarity measure. In the time domain, it also serves as pushing from K images to just 1 image. The metric can be the mean, median, max or the majority from a histogram, while the mean and max are more widely used.

Fig. 4. Max pooling from the correlation matrix, with each axis indexing the time step in one video. The top row gives an example of different subjects while the bottom row shows that of the same person. Max responses are highlighted by boxes. Faces are not shown due to copyright considerations.

The insight of not taking the mean is that a frame highly correlated with another video usually does not appear twice in a temporal sliding window. If we plot the two bags of features in the common feature space, the similarity is essentially the closeness between the two sets of points. If the two sets are non-overlapping, one measure of the closeness between two point sets is the distance between nearest neighbors, which is essentially pooling the max correlation. Similar to spatial pooling for invariance, taking the max from the correlation matrix shown in Fig. 4 preserves the temporal invariance that the largest correlation can appear at any time step among the selected frames.
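A minimal sketch of the pooling in Eqn. 2, written in pure Python so that it stays self-contained. The 3-dimensional toy vectors stand in for the 4,096-dim CNN features, and the function names are hypothetical; mean pooling is included only for comparison.

```python
import math


def l2_normalize(v):
    """Scale a feature vector to unit Euclidean norm."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def correlation_matrix(bag_a, bag_b):
    """Entry [i][j] is the inner product of unit-norm features a_i and b_j."""
    return [[sum(x * y for x, y in zip(fa, fb)) for fb in bag_b] for fa in bag_a]


def max_correlation(bag_a, bag_b):
    """Eqn. 2: the largest entry of the K_a x K_b correlation matrix."""
    return max(max(row) for row in correlation_matrix(bag_a, bag_b))


def mean_correlation(bag_a, bag_b):
    """Mean pooling over the same matrix, shown only for comparison."""
    corr = correlation_matrix(bag_a, bag_b)
    return sum(sum(row) for row in corr) / (len(bag_a) * len(bag_b))


# Toy bags: K_a = 2 and K_b = 3 key-frame features (made-up values).
bag_a = [l2_normalize(v) for v in ([1.0, 0.0, 0.0], [0.0, 1.0, 0.0])]
bag_b = [l2_normalize(v) for v in ([0.0, 2.0, 0.0], [0.0, 0.0, 3.0], [1.0, 1.0, 0.0])]

sim_max = max_correlation(bag_a, bag_b)
sim_mean = mean_correlation(bag_a, bag_b)
```

In this toy case one pair of frames matches perfectly, so the max is high while the mean is pulled down by the unrelated pairs, which is the behaviour the text argues for.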
Since the identity is consistent in one video, we can claim two videos contain a similar person as long as one pair of frames, one from each video, is highly correlated. The computation of two videos' identity similarity is summarized in Algorithm 2.

Algorithm 2: Video-based identity similarity measurement.
Input: a pair of face videos $V_a$ and $V_b$.
Output: the similarity score $Sim(X_a, X_b)$ of their subject identity.
(1) Face selection and alignment: run Algorithm 1 for each video to obtain key frames with faces aligned.
(2) Deep video representation: generate deep face features of the key frames to obtain two sets of features $X_a$ and $X_b$.
(3) Pooling max correlation: compute the similarity $Sim(X_a, X_b)$ according to Eqn. 2.

5 Experiments

5.1 Implementation

We develop the programs using resources (footnote 1) such as OpenCV, DLib and VGG-Face.

- Face detection: frame-by-frame detection using DLib's HOG+SVM based detector trained on 3,000 cropped face images from LFW. It works better for faces in the wild than OpenCV's cascaded Haar-like+boosting based (Viola-Jones) detector.
- Facial landmarking: DLib's landmark model trained via regression tree ensemble.
- Head pose estimation: OpenCV's solvePnP recovering 3D coordinates from 2D coordinates using Direct Linear Transform + Levenberg-Marquardt optimization.
- Face alignment: OpenCV's warpAffine by affine-warping to center eyes and mouth.
- Deep face representation (footnote 2): second-last layer output (4,096-dim) of VGG-Face [10] using Caffe [7]. For your convenience, you may consider using MatConvNet-VLFeat instead of Caffe.

VGG-Face has been trained using face images of size 224 × 224 with the average face image subtracted and is then used for our verification purpose without any re-training. However, such average face subtraction is unavailable and unnecessary given a new input image.
As a result, we directly input the face image to the VGG-Face network without any mean face subtraction.

5.2 Evaluation on video-based face verification

Among video-based face recognition databases, EPFL captures 152 people facing web-cam and mobile-phone cameras in controlled environments. However, they are frontal faces and thus of no use to us. University of Surrey and University of Queensland capture 295 and 45 subjects under various well-quantized poses in controlled environments, respectively. Since the poses are well quantized, we can hardly verify our pose quantization and selection algorithm on them. McGill and NICTA capture 60 videos of 60 subjects and 48 surveillance videos of 29 subjects in uncontrolled environments, respectively. However, the database sizes are way too small. The YouTube Faces (YTF) dataset and the Indian Movie Face Database (IMFDB) collect 3,425 videos of 1,595 people and 100 videos of 100 actors in uncontrolled environments, respectively. There are few existing works verified on IMFDB. As a result, the YTF dataset (footnote 3) [16] is chosen to verify the proposed video-based similarity measure for face verification. YTF was built by using the 5,749 names of subjects included in the LFW dataset [9] to search YouTube for videos of these same individuals. Then, a screening process reduced the original set from 18,899 videos of 3,345 subjects to 3,425 videos of 1,595 subjects.

In the same way as LFW, the creator of YTF provides an initial official list of 5,000 video pairs with ground truth (same person or not, as shown in Fig. 5). Our experiments can be replicated by following our tutorial (footnote 4). K = 9 turns out to be the best on average for the YTF dataset. Fig. 6 presents the Receiver Operating Characteristic (ROC) curve obtained after we compute the 5,000 video-video similarity scores. One way to look at a ROC curve is to first fix the level of false positive rate that we can bear (say, 0.1) and then see how high the true positive rate is (say, roughly 0.9). Another way is to see how close the curve is to the top-left corner. Namely, we measure the Area Under the Curve (AUC) and hope it to be as large as possible. In this testing, the AUC is 0.9419, which is quite close to that of VGG-Face [10], which uses temporal mean pooling. However, our selective pooling strategy requires much less computation thanks to the key face selection. We do run cross validations here as we do not have any training.

Later on, the creator of YTF sent a list of errors in the ground-truth label file and provided a corrected list of video pairs with updated ground-truth labels. As a result, we ran the proposed algorithm again on the corrected 4,999 video pairs. Fig. 7 updates the ROC curve with an AUC of 0.9418, which is nearly identical to the result on the initial list.

Fig. 5. Examples of YTF video-pairs. Instead of using the full video in the top row, we choose key faces in the bottom row. Disclaimer: this figure is adapted from VGG-face's presentation (see also http://www.robots.ox.ac.uk/~vgg/publications/2015/Parkhi15/presentation.pptx) and follows VGG-face's republishing permission.

Footnote 1: http://opencv.org/, http://dlib.net/ and http://www.robots.ox.ac.uk/~vgg/software/vgg_face/, respectively.
Footnote 2: Codes are available at https://github.com/eglxiang/vgg_face
Footnote 3: Dataset is available at http://www.cs.tau.ac.il/~wolf/ytfaces/
Footnote 4: Codes with a tutorial at https://github.com/eglxiang/ytf

6 Conclusion

In this work, we propose a K frame selection algorithm and an identity similarity measure which employs simple correlations and no learning. It is verified on fast video-based face verification on YTF and achieves comparable performance with VGG-face.
Particularly, the selection and pooling significantly reduce the computational expense of processing videos. Further verification of the proposed algorithm includes the evaluation of video-based facial expression recognition. As shown in Fig. 5 of [?], the assumption of group sparsity might not hold under imperfect alignment. The extended Cohn-Kanade dataset includes mostly well-aligned frontal faces and thus is not suitable for our research purpose. Our further experiments are being conducted on the BU-4DFE database (footnote 5), which contains 101 subjects, each one displaying 6 acted facial expressions with moderate head pose variations. A generic problem underneath is variable disentanglement in real data, and a take-home message is that employing geometric cues can improve the descriptiveness of deep features.

Footnote 5: http://www.cs.binghamton.edu/~lijun/Research/3DFE/3DFE_Analysis.html

Fig. 6. ROC curve of running our algorithm on the YTF initial official list of 5,000 pairs.

Fig. 7. ROC curve of running our algorithm on the YTF corrected official list of 4,999 video pairs.

References

1. Abu-El-Haija, S., Kothari, N., Lee, J., Natsev, P., Toderici, G., Varadarajan, B., Vijayanarasimhan, S.: Youtube-8m: A large-scale video classification benchmark. arXiv:1609.08675 (September 2016)
2. Boureau, Y.L., Bach, F., LeCun, Y., Ponce, J.: Learning mid-level features for recognition. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (2010)
3. Boureau, Y.L., Ponce, J., LeCun, Y.: A theoretical analysis of feature pooling in visual recognition. In: Proceedings of the International Conference on Machine Learning (2010)
4. Crosswhite, N., Byrne, J., Parkhi, O.M., Stauffer, C., Cao, Q., Zisserman, A.: Template adaptation for face verification and identification. arXiv (April 2016)
5. Donahue, J., Hendricks, L.A., Guadarrama, S., Rohrbach, M., Venugopalan, S., Saenko, K., Darrell, T.: Long-term recurrent convolutional networks for visual recognition and description. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. pp. 2625–2634 (2015)
6. Huang, Z., Shan, S., Wang, R., Zhang, H., Lao, S., Kuerban, A., Chen, X.: A benchmark and comparative study of video-based face recognition on cox face database. IEEE Transactions on Image Processing 24, 5967–5981 (2015)
7. Jia, Y., Shelhamer, E., Donahue, J., Karayev, S., Long, J., Girshick, R., Guadarrama, S., Darrell, T.: Caffe: Convolutional architecture for fast feature embedding. arXiv:1408.5093 (2014)
8. Kemelmacher-Shlizerman, I., Seitz, S.M., Miller, D., Brossard, E.: The megaface benchmark: 1 million faces for recognition at scale. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (2016)
9. Learned-Miller, E., Huang, G.B., RoyChowdhury, A., Li, H., Hua, G.: Labeled faces in the wild: A survey. Advances in Face Detection and Facial Image Analysis pp. 189–248 (2016)
10. Parkhi, O.M., Vedaldi, A., Zisserman, A.: Deep face recognition. In: British Machine Vision Conference (2015)
11. Pigou, L., van den Oord, A., Dieleman, S., Herreweghe, M.V., Dambre, J.: Beyond temporal pooling: Recurrence and temporal convolutions for gesture recognition in video. arXiv (June 2015)
12. Schroff, F., Kalenichenko, D., Philbin, J.: Facenet: A unified embedding for face recognition and clustering. In: Proceedings of the IEEE International Conference on Computer Vision (2015)
13. Sun, Y., Chen, Y., Wang, X., Tang, X.: Deep learning face representation by joint identification-verification. In: Advances in Neural Information Processing Systems (2014)
14. Taigman, Y., Yang, M., Ranzato, M., Wolf, L.: Deepface: Closing the gap to human-level performance in face verification. In: Proceedings of the IEEE International Conference on Computer Vision (2014)
15. Venugopalan, S., Xu, H., Donahue, J., Rohrbach, M., Mooney, R., Saenko, K.: Translating videos to natural language using deep recurrent neural networks. In: Proceedings of the Annual Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (2014)
16. Wolf, L., Hassner, T., Maoz, I.: Face recognition in unconstrained videos with matched background similarity. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (2011)
