Socio-Affective Agents as Models of Human Behaviour in the Networked Prisoner’s Dilemma

Affect Control Theory (ACT) is a powerful and general sociological model of human affective interaction. ACT provides an empirically derived mathematical model of culturally shared sentiments as heuristic guides for human decision making. BayesACT, a variant of classical ACT, combines affective reasoning with cognitive (denotative or logical) reasoning as is traditionally found in AI. BayesACT allows for the creation of agents that are both emotionally guided and goal-directed. In this work, we simulate BayesACT agents in the Iterated Networked Prisoner’s Dilemma (INPD), and we show that four out of five known properties of human play in INPD are replicated by these socio-affective agents. In particular, we show how the observed human behaviours of network structure invariance, anti-correlation of cooperation and reward, and player type stratification are all clearly emergent properties of the networked BayesACT agents. We further show that decision hysteresis (Moody Conditional Cooperation) is replicated by BayesACT agents in over $2/3$ of the cases we have considered. In contrast, previously used imitation-based agents are only able to replicate one of the five properties. We discuss the implications of these findings for the development of human-agent societies.

💡 Research Summary

The paper investigates whether agents built on Affect Control Theory (ACT) can emulate the characteristic patterns of human behavior observed in the Iterated Networked Prisoner’s Dilemma (INPD). ACT posits that every social concept is associated with a culturally shared affective sentiment expressed in a three‑dimensional EPA (Evaluation, Potency, Activity) space. Human interaction is driven by a desire to minimize “deflection,” the squared Euclidean distance between the fundamental sentiments attached to the elements of an Actor‑Behaviour‑Object (A‑B‑O) event and the transient sentiments that the event generates. BayesACT extends ACT by treating EPA values as probability distributions, integrating denotative state, and adding an explicit reward function. The resulting model is a partially observable Markov decision process (POMDP) solved with a Monte‑Carlo Tree Search (MCTS) algorithm: nodes with lower expected deflection are explored more frequently, but the final action is chosen to maximize expected reward.
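As a rough illustration of the deflection idea, the sketch below computes deflection in the form conventionally used in ACT: the sum of squared differences between fundamental and transient EPA vectors over the actor, behaviour, and object of an event. The function name `deflection` and all EPA values are hypothetical and chosen only for illustration. The transient sentiments are supplied by hand here, whereas ACT derives them from empirically estimated impression-formation equations that this sketch does not model.

```python
from typing import Sequence

# An EPA vector is (Evaluation, Potency, Activity).
EPA = Sequence[float]


def deflection(fundamentals: Sequence[EPA], transients: Sequence[EPA]) -> float:
    """Sum of squared EPA differences across the elements of one event.

    `fundamentals` and `transients` each hold one EPA vector per event
    element (actor, behaviour, object), in the same order.
    """
    if len(fundamentals) != len(transients):
        raise ValueError("need one transient vector per fundamental vector")
    total = 0.0
    for f, t in zip(fundamentals, transients):
        if len(f) != 3 or len(t) != 3:
            raise ValueError("EPA vectors must have exactly three components")
        total += sum((fi - ti) ** 2 for fi, ti in zip(f, t))
    return total


# Hypothetical sentiments for actor, behaviour, object (illustrative only).
fundamental_abo = [(2.0, 1.0, 1.0), (1.5, 0.5, 0.0), (2.0, 1.0, 1.0)]
transient_abo = [(1.0, 1.0, 0.5), (1.5, 0.0, 0.0), (2.0, 0.5, 1.0)]

event_deflection = deflection(fundamental_abo, transient_abo)
print(event_deflection)
```

An event whose transient sentiments match the fundamentals exactly has zero deflection; larger values indicate an event that fits cultural expectations less well.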

The authors adapt BayesACT to the networked version of the Prisoner’s Dilemma. Each agent holds a self‑identity distribution and a perception of its opponent’s identity. To handle multiple neighbors, they aggregate the EPA vectors of all neighbors into a single “average opponent” EPA, allowing each agent to operate within a single POMDP while still reflecting the affective influence of the whole neighborhood. Two initialization schemes are examined: (1) a synthetic mixture of “friend” (high E, P, A) and “scrooge” (low P, A) identities, and (2) empirically derived EPA distributions obtained from prior human PD experiments.
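The neighbour aggregation step can be sketched as follows. The summary does not say how the aggregate is weighted, so this example assumes a plain unweighted mean of the neighbours' EPA vectors. The function name `average_opponent_epa` and the example values are hypothetical.

```python
from typing import Sequence, Tuple

EPAVector = Tuple[float, float, float]  # (Evaluation, Potency, Activity)


def average_opponent_epa(neighbor_epas: Sequence[EPAVector]) -> EPAVector:
    """Collapse several neighbours' EPA vectors into one averaged opponent.

    Assumption: an unweighted arithmetic mean per EPA dimension.
    """
    if not neighbor_epas:
        raise ValueError("an agent needs at least one neighbour")
    n = len(neighbor_epas)
    evaluation = sum(v[0] for v in neighbor_epas) / n
    potency = sum(v[1] for v in neighbor_epas) / n
    activity = sum(v[2] for v in neighbor_epas) / n
    return (evaluation, potency, activity)


# Two hypothetical neighbours with opposing sentiments.
neighbors = [(2.0, 1.0, 1.0), (0.0, -1.0, -1.0)]
print(average_opponent_epa(neighbors))
```

The example also shows the cost of this simplification: two very different neighbours average to a near-neutral opponent, so pairwise affective differences are lost.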

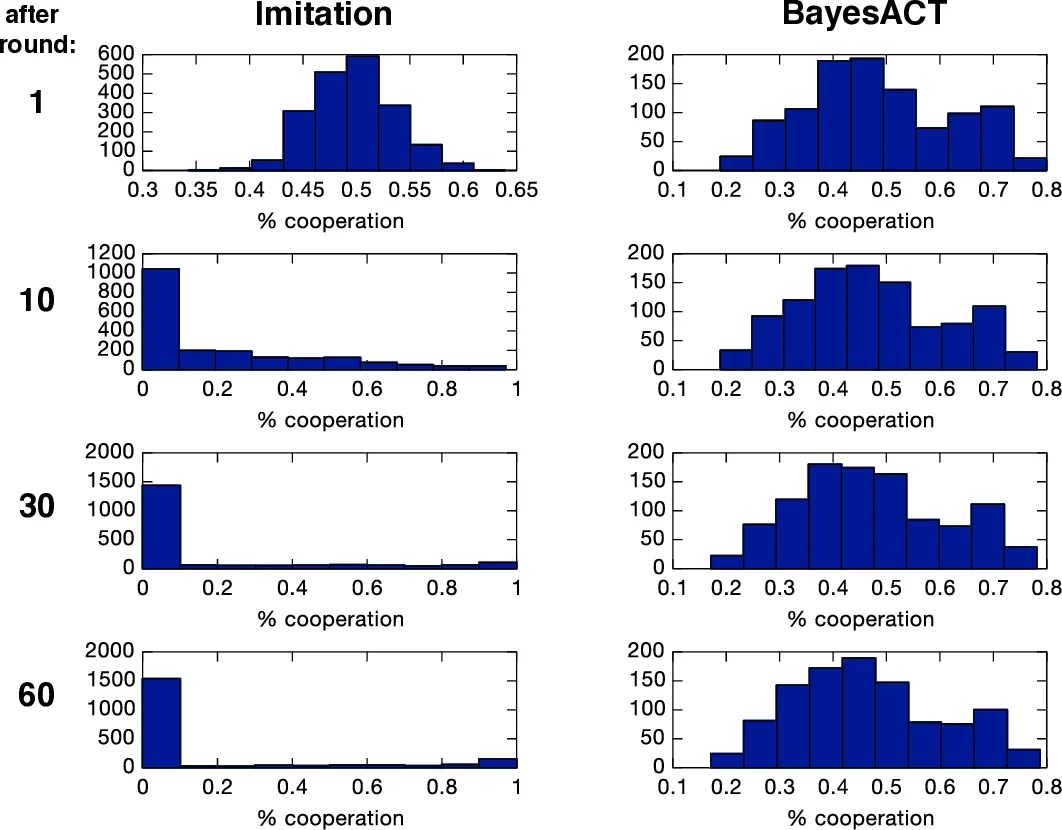

Experiments are conducted on three network topologies (grid, random, scale‑free) and several payoff matrices that satisfy the Prisoner’s Dilemma inequalities (T > R > P > S). The authors compare BayesACT agents against a baseline of imitation‑based agents previously used in the literature. They evaluate five well‑documented properties of human play from Grujić et al. (2010): (1) invariance of cooperation rates to network structure, (2) a gradual decline of cooperation over time, (3) an anti‑correlation between cooperation frequency and individual payoff, (4) Moody Conditional Cooperation (MCC), a decision hysteresis in which a player’s likelihood of cooperating depends on its own action in the previous round, and (5) stratification of players into four behavioral clusters.
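To make the payoff condition concrete, the sketch below checks the inequality T > R > P > S and scores one round for a single player. It assumes each player picks one action per round and plays it against every neighbour, with the round payoff being the sum over neighbours. The payoff values and function names are hypothetical and are not taken from the paper.

```python
from typing import Dict, Sequence, Tuple


def is_prisoners_dilemma(T: float, R: float, P: float, S: float) -> bool:
    """True when temptation > reward > punishment > sucker's payoff."""
    return T > R > P > S


def round_payoff(my_action: str, neighbor_actions: Sequence[str],
                 T: float, R: float, P: float, S: float) -> float:
    """Total payoff for one player who plays `my_action` against all neighbours.

    Actions are "C" (cooperate) or "D" (defect).
    """
    table: Dict[Tuple[str, str], float] = {
        ("C", "C"): R,  # mutual cooperation
        ("C", "D"): S,  # cooperator exploited by a defector
        ("D", "C"): T,  # defector exploiting a cooperator
        ("D", "D"): P,  # mutual defection
    }
    return sum(table[(my_action, other)] for other in neighbor_actions)


# Hypothetical payoff values that satisfy the inequality.
T, R, P, S = 5.0, 3.0, 1.0, 0.0
neighbors = ["C", "D", "C"]

print(is_prisoners_dilemma(T, R, P, S))
print(round_payoff("C", neighbors, T, R, P, S))
print(round_payoff("D", neighbors, T, R, P, S))
```

In any single round, defecting earns more than cooperating against the same neighbourhood, which is what makes sustained cooperation in human play notable.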

Results show that BayesACT agents successfully reproduce four of the five properties. They maintain similar cooperation levels across all network structures, exhibit the expected anti‑correlation between cooperation and reward, display MCC in more than two‑thirds of the cases considered, and naturally form four distinct clusters when agents are grouped by their long‑term cooperation rates. The only property not fully captured is the long‑term decline in cooperation: BayesACT agents remain more cooperative than human subjects, likely because the model’s primary drive, to minimize deflection, overrides the pure reward‑maximizing incentives that cause humans to defect over time. In contrast, the imitation agents replicate only the anti‑correlation property and fail on the others.
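One simple way to test for the anti‑correlation property is a Pearson correlation between each agent's cooperation frequency and its mean payoff. The sketch below is not the authors' analysis: the function `pearson_r` is a hand-written helper, and the per-agent numbers are toy values invented for illustration, not results from the paper.

```python
import math
from typing import Sequence


def pearson_r(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length samples."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two samples of equal length >= 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sample")
    return cov / math.sqrt(var_x * var_y)


# Toy per-agent data (illustrative only): fraction of rounds cooperated
# and mean payoff per round.
cooperation_rate = [0.1, 0.3, 0.5, 0.7, 0.9]
mean_payoff = [9.0, 8.0, 6.0, 5.0, 3.0]

r = pearson_r(cooperation_rate, mean_payoff)
print(f"r = {r:.3f}")
```

A clearly negative coefficient would indicate that agents who cooperate more often earn less, which is the pattern reported for human players.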

The discussion highlights that integrating affective reasoning yields agents whose behavior aligns closely with human social intuition, suggesting a pathway toward socially acceptable AI in mixed human‑machine societies. Limitations include the simplification of multiple opponents into a single averaged EPA, which discards nuanced pairwise affective differences, and the inability of the current BayesACT implementation to represent dynamic identity mixtures beyond the initial distribution. Future work could explore hierarchical POMDPs or multi‑agent belief‑sharing mechanisms to capture richer social dynamics.

In conclusion, the study demonstrates that affect‑driven, probabilistic agents can model key aspects of human cooperation in complex networked games, outperforming traditional imitation‑based approaches. This provides empirical support for the claim that embedding sociologically grounded affective models into AI agents can enhance their predictability, trustworthiness, and effectiveness in collaborative environments.
