Network Backboning with Noisy Data

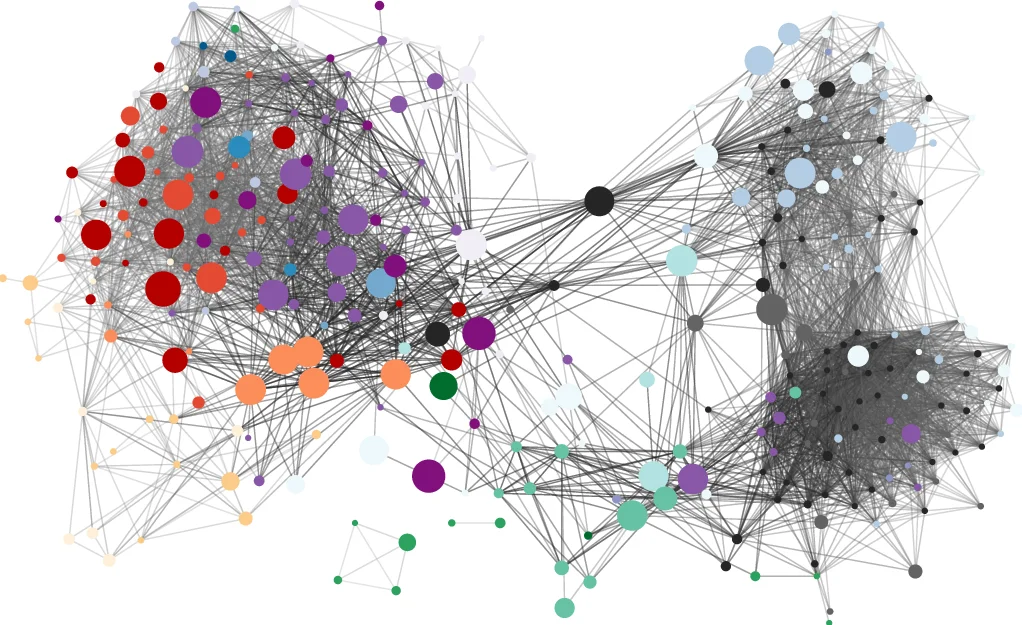

Networks are powerful instruments to study complex phenomena, but they become hard to analyze in data that contain noise. Network backbones provide a tool to extract the latent structure from noisy networks by pruning non-salient edges. We describe a new approach to extract such backbones. We assume that edge weights are drawn from a binomial distribution, and estimate the error-variance in edge weights using a Bayesian framework. Our approach uses a more realistic null model for the edge weight creation process than prior work. In particular, it simultaneously considers the propensity of nodes to send and receive connections, whereas previous approaches only considered nodes as emitters of edges. We test our model with real-world networks of different types (flows, stocks, co-occurrences, directed, undirected) and show that our Noise-Corrected approach returns backbones that outperform other approaches on a number of criteria. Our approach is scalable and can handle networks with millions of edges.

💡 Research Summary

The paper addresses the problem of extracting a meaningful backbone from dense, noisy weighted networks. Existing general-purpose methods such as the Disparity Filter (DF) evaluate edge significance only from the perspective of the source node. This can lead to systematic biases, in particular the over-retention of peripheral-to-hub edges and the under-retention of peripheral-to-peripheral edges. To overcome this limitation, the authors propose a Noise-Corrected (NC) backbone extraction algorithm that models each edge weight as a draw from a binomial distribution and estimates its posterior variance within a Bayesian framework.
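To make the source-side bias concrete, here is a minimal sketch of a Disparity Filter score in Python. It assumes the commonly cited form of the DF p-value, \((1 - w_{ij}/s_i)^{k_i - 1}\), where \(s_i\) is the total outgoing weight of node \(i\) and \(k_i\) its number of outgoing edges. The summary above does not spell this formula out, and the function name `disparity_filter_pvalues` is hypothetical.

```python
import numpy as np

def disparity_filter_pvalues(W):
    """Source-side Disparity Filter p-values for a dense weight matrix.

    W[i, j] is the weight of edge i -> j; zero means "no edge".
    Assumed formula (not given in the summary): (1 - w_ij / s_i) ** (k_i - 1).
    Returns a matrix of p-values, with NaN where there is no edge.
    """
    W = np.asarray(W, dtype=float)
    strength = W.sum(axis=1, keepdims=True)      # s_i: total outgoing weight per source
    degree = (W > 0).sum(axis=1, keepdims=True)  # k_i: number of outgoing edges per source
    with np.errstate(divide="ignore", invalid="ignore"):
        share = W / strength                     # fraction of i's weight carried by i -> j
    pvals = (1.0 - share) ** (degree - 1)
    pvals[W <= 0] = np.nan                       # no edge, no score
    return pvals

# Toy example: node 0 sends most of its weight to node 1.
W = np.array([
    [0, 8, 1, 1],
    [2, 0, 2, 2],
    [0, 5, 0, 0],
    [1, 1, 1, 0],
])
pvals = disparity_filter_pvalues(W)
```

In this toy matrix the edge 0 -> 1 (weight 8 out of 10) scores about 0.04, while the weight-1 edges from node 0 score about 0.81. Only row totals enter the calculation, so how much weight the target node receives from everyone else plays no role; that is the one-sidedness the NC approach is meant to address.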

Formally, let \(N_{ij}\) be the observed weight of edge \((i,j)\), \(N_{i.}\) the total outgoing weight of node \(i\), \(N_{.j}\) the total incoming weight of node \(j\), and \(N_{..}\) the sum of all weights. The expected weight under the null model is (E
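The marginal totals defined above can be computed directly from a weight matrix. The sketch below does so and then forms an expected weight under one assumption that the text here does not confirm, because its formula is cut off: that the null model distributes weight in proportion to both marginals, giving \(N_{i.} N_{.j} / N_{..}\). The function name `expected_weights` is hypothetical.

```python
import numpy as np

def expected_weights(N):
    """Expected edge weights under an assumed product-of-marginals null model.

    N[i, j] is the observed weight of edge i -> j.
    Assumption (not stated in the text): E[N_ij] = N_i. * N_.j / N_..
    """
    N = np.asarray(N, dtype=float)
    out_total = N.sum(axis=1, keepdims=True)  # N_{i.}: total outgoing weight of node i
    in_total = N.sum(axis=0, keepdims=True)   # N_{.j}: total incoming weight of node j
    grand_total = N.sum()                     # N_{..}: sum of all weights
    return out_total * in_total / grand_total # broadcasts to an n x n matrix

N = np.array([
    [0, 8, 2],
    [4, 0, 1],
    [1, 4, 0],
])
E = expected_weights(N)
```

With these numbers the outgoing totals are 10, 5, 5, the incoming totals are 5, 12, 3, and the grand total is 20, so the expected weight of edge 0 -> 1 is 10 × 12 / 20 = 6. Unlike a source-only score, this expectation rises when the target node attracts a lot of weight overall, so an edge into a hub has to be heavier to stand out.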
