One evaluation of model-based testing and its automation

Model-based testing relies on behavior models for the generation of model traces: input and expected output—test cases—for an implementation. We use the case study of an automotive network controller to assess different test suites in terms of error detection, model coverage, and implementation coverage. Some of these suites were generated automatically with and without models, purely at random, and with dedicated functional test selection criteria. Other suites were derived manually, with and without the model at hand. Both automatically and manually derived model-based test suites detected significantly more requirements errors than hand-crafted test suites that were directly derived from the requirements. The number of detected programming errors did not depend on the use of models. Automatically generated model-based test suites detected as many errors as hand-crafted model-based suites with the same number of tests. A sixfold increase in the number of model-based tests led to an 11% increase in detected errors.

💡 Research Summary

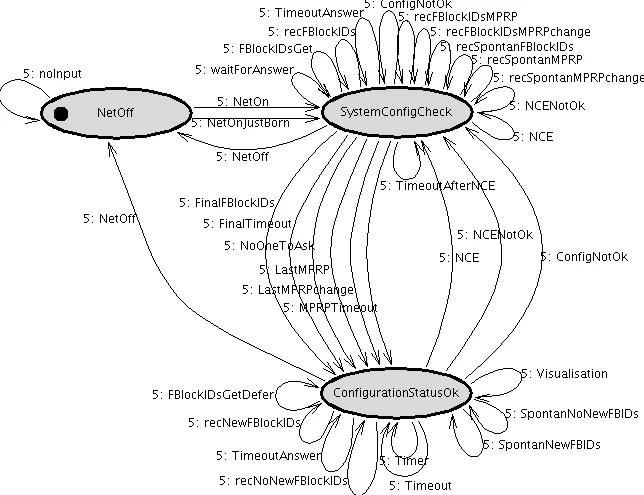

This paper presents an empirical evaluation of model‑based testing (MBT) using a real‑world automotive network controller (the MOST NetworkMaster) as a case study. The authors first constructed an executable behavior model of the controller with the AUTOFOCUS CASE tool, translating informal message sequence charts (MSCs) into extended finite‑state machines (EFSMs). The modeling effort uncovered inconsistencies and omissions in the original requirements, which were corrected, thereby demonstrating that modeling itself can improve requirement quality. The final model comprises 17 components, 100 channels, 138 ports, 12 EFSMs, 16 control states, 104 transitions, and a substantial data‑type library.

Four families of test suites were generated and applied to the implementation (≈12,300 lines of C code): (1) hand‑crafted tests derived directly from the requirements without a model, (2) hand‑crafted tests that used the model as a reference, (3) automatically generated tests derived from the model using constraint‑logic‑programming (CLP) based enumeration guided by test‑case specifications (e.g., coverage criteria, functional constraints), and (4) purely random tests generated without any model. The automatic generation process translates the EFSM model into a CLP representation, adds the test‑case specifications, and enumerates all feasible traces; when the trace space is too large, additional constraints or random sampling are applied.

The evaluation measured three dimensions: (a) number of detected failures, split into requirements errors (issues in the specification) and programming errors (code defects); (b) model coverage (including condition/decision – C/D – coverage on the model); and (c) implementation coverage (C/D coverage on the SUT). The key findings are:

- Model‑based tests (both hand‑crafted and automatically generated) detect significantly more requirements errors than tests that ignore the model. The detection rate for programming errors is essentially independent of model usage.

- For a given test‑suite size, automatically generated MBT tests find as many failures as hand‑crafted MBT tests. Scaling the automatically generated suite six‑fold yields only an 11 % increase in detected errors, indicating diminishing returns from sheer quantity.

- Hand‑crafted MBT suites achieve higher model coverage but lower implementation C/D coverage, whereas automatically generated suites show the opposite pattern.

- Model C/D coverage correlates strongly with failure detection, while implementation C/D coverage shows a moderate positive correlation. Nevertheless, high coverage on either level does not guarantee a higher detection rate.

From these results the authors draw three practical implications: (i) employing explicit behavior models pays off for finding specification‑level defects; (ii) structural criteria that strongly correlate with failure detection on the implementation side do not automatically transfer to model‑driven test generation; and (iii) the benefits of automated test generation remain to be proven when the number of actually executed test cases is a limiting factor. The authors acknowledge that the study’s external validity is limited to this single, medium‑scale industrial system, but they argue that a growing body of publicly available case studies will eventually enable more general conclusions about the cost‑effectiveness and quality impact of model‑based testing.

Comments & Academic Discussion

Loading comments...

Leave a Comment