Not Normal: the uncertainties of scientific measurements

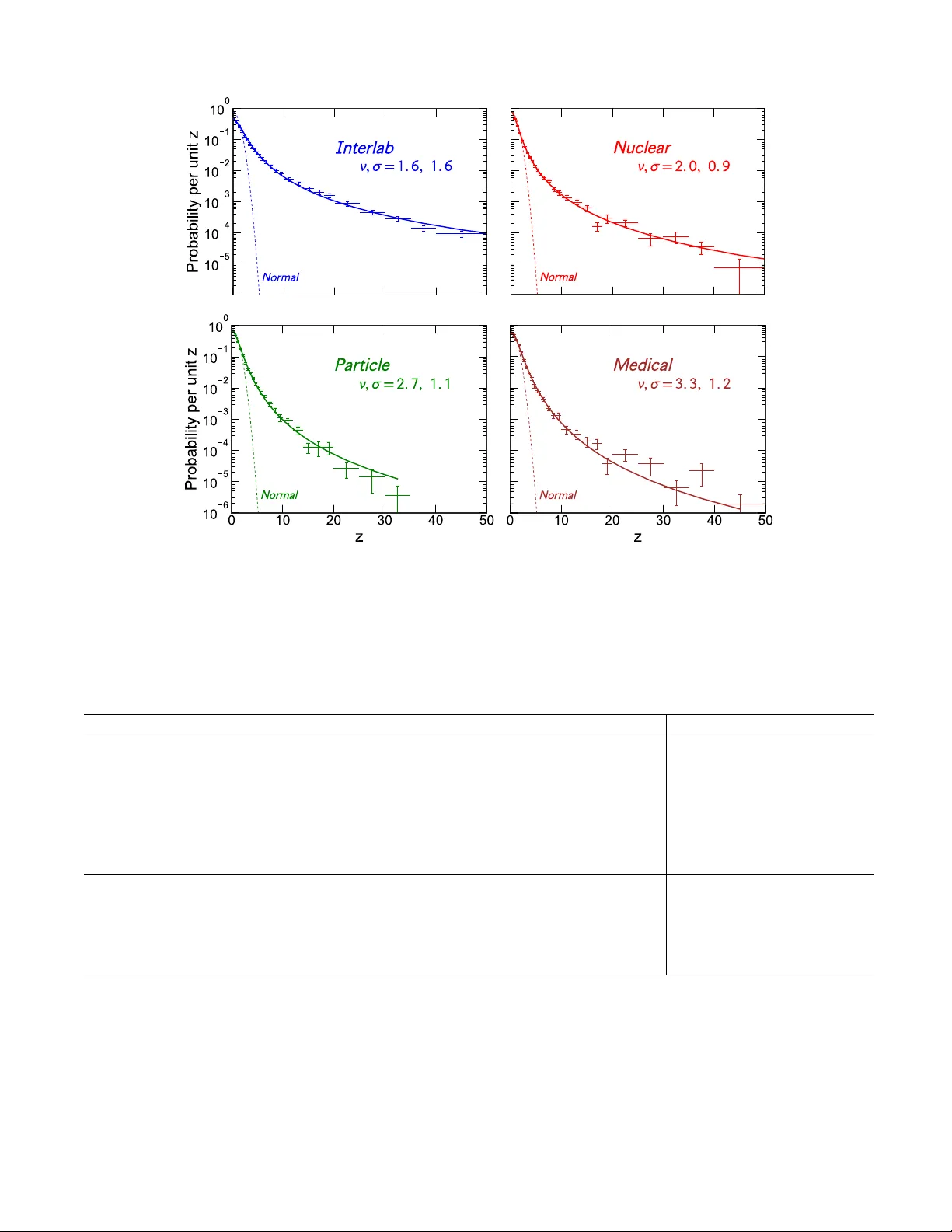

Judging the significance and reproducibility of quantitative research requires a good understanding of relevant uncertainties, but it is often unclear how well these have been evaluated and what they imply. Reported scientific uncertainties were stud…

Author: David C. Bailey