This paper presents the development of several models of a deep convolutional auto-encoder in the Caffe deep learning framework and their experimental evaluation on the MNIST dataset. We have created five models of a convolutional auto-encoder which differ architecturally by the presence or absence of pooling and unpooling layers in the auto-encoder's encoder and decoder parts. Our results show that the developed models perform very well in dimensionality reduction and unsupervised clustering tasks, and yield small classification errors when the learned internal code is used as input to a supervised linear classifier and a multi-layer perceptron. The best results were provided by a model where the encoder part contains convolutional and pooling layers, followed by an analogous decoder part with deconvolution and unpooling layers without the use of switch variables in the decoder part. The paper also discusses practical details of creating a deep convolutional auto-encoder in the very popular Caffe deep learning framework. We believe that the approach and results presented in this paper could help other researchers to build efficient deep neural network architectures in the future.


A Deep Convolutional Auto-Encoder with Pooling - Unpooling Layers in Caffe

Volodymyr Turchenko, Eric Chalmers, Artur Luczak

Canadian Centre for Behavioural Neuroscience

Department of Neuroscience, University of Lethbridge

4401 University Drive, Lethbridge, AB, T1K 3M4, Canada

{vtu, eric.chalmers, luczak}@uleth.ca

Abstract – This paper presents the development of several models of a deep convolutional auto-encoder in the Caffe deep learning framework and their experimental evaluation on the MNIST dataset. We have created five models of a convolutional auto-encoder which differ architecturally by the presence or absence of pooling and unpooling layers in the auto-encoder’s encoder and decoder parts. Our results show that the developed models perform very well in dimensionality reduction and unsupervised clustering tasks, and yield small classification errors when the learned internal code is used as input to a supervised linear classifier and a multi-layer perceptron. The best results were provided by a model where the encoder part contains convolutional and pooling layers, followed by an analogous decoder part with deconvolution and unpooling layers without the use of switch variables in the decoder part. The paper also discusses practical details of creating a deep convolutional auto-encoder in the very popular Caffe deep learning framework. We believe that the approach and results presented in this paper could help other researchers to build efficient deep neural network architectures in the future.

Keywords – Deep convolutional auto-encoder, machine learning, neural networks, dimensionality reduction, unsupervised clustering.

- Introduction

An auto-encoder (AE) model is based on an encoder-decoder paradigm: an encoder first transforms an input into a typically lower-dimensional representation, and a decoder is tuned to reconstruct the initial input from this representation through the minimization of a cost function [1-4]. An AE is trained in an unsupervised fashion, which allows it to extract generally useful features from unlabeled data. AEs and unsupervised learning methods have been widely used in many scientific and industrial applications, mainly for tasks such as network pre-training, feature extraction, dimensionality reduction, and clustering. A classic or shallow AE has only one hidden layer, which is a lower-dimensional representation of the input. In the last decade, the revolutionary success of deep neural network (NN) architectures has shown that deep AEs with many hidden layers in the encoder and decoder parts are the state-of-the-art models in unsupervised learning. Compared with a shallow AE with the same number of trainable parameters, a deep AE can reproduce the input with lower reconstruction error [5]. A deep AE can extract hierarchical features in its hidden layers and, therefore, substantially improve the quality of the solution to a specific task. One variation of a deep AE [5] is a deep convolutional auto-encoder (CAE) which, instead of fully-connected layers, contains convolutional layers in the encoder part and deconvolution layers in the decoder part. Deep CAEs may be better suited to image processing tasks because they fully utilize the properties of convolutional neural networks (CNNs), which have been shown to provide better results on noisy, shifted (translated) and corrupted image data [6].
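The encoder-decoder idea described above can be illustrated with a minimal sketch. It is not one of the models from this paper: it is a toy linear AE in plain Python that maps 2-D inputs to a 1-D code and back, and tunes both parts by gradient descent on the mean squared reconstruction error. The data points, the initial weights, the learning rate and all function names are assumptions chosen only for illustration.

```python
# Toy linear auto-encoder (illustrative assumption, not a model from the paper):
# 2-D input -> 1-D code -> 2-D reconstruction.

# Hypothetical data lying on a line, so a 1-D code can represent it exactly.
data = [(1.0, 2.0), (2.0, 4.0), (-1.0, -2.0), (0.5, 1.0), (-2.0, -4.0)]


def encode(w, x):
    """Encoder: project the 2-D input onto a scalar code."""
    return w[0] * x[0] + w[1] * x[1]


def decode(v, z):
    """Decoder: map the scalar code back to a 2-D reconstruction."""
    return (v[0] * z, v[1] * z)


def reconstruction_loss(w, v, samples):
    """Cost function: mean squared reconstruction error over the samples."""
    total = 0.0
    for x in samples:
        x_hat = decode(v, encode(w, x))
        total += (x_hat[0] - x[0]) ** 2 + (x_hat[1] - x[1]) ** 2
    return total / len(samples)


def train(samples, steps=500, lr=0.01):
    """Tune encoder weights w and decoder weights v by batch gradient descent."""
    w = [0.1, 0.1]
    v = [0.1, 0.1]
    n = len(samples)
    for _ in range(steps):
        grad_w = [0.0, 0.0]
        grad_v = [0.0, 0.0]
        for x in samples:
            z = encode(w, x)
            x_hat = decode(v, z)
            r = (x_hat[0] - x[0], x_hat[1] - x[1])   # reconstruction residual
            back = v[0] * r[0] + v[1] * r[1]          # residual propagated to the code
            for j in range(2):
                grad_v[j] += 2.0 * r[j] * z / n
                grad_w[j] += 2.0 * back * x[j] / n
        for j in range(2):
            w[j] -= lr * grad_w[j]
            v[j] -= lr * grad_v[j]
    return w, v


initial_loss = reconstruction_loss([0.1, 0.1], [0.1, 0.1], data)
w, v = train(data)
final_loss = reconstruction_loss(w, v, data)
print("loss before training:", initial_loss)
print("loss after training: ", final_loss)
```

A deep CAE replaces the two linear maps with stacks of convolutional and deconvolution layers, but the training signal is the same kind of reconstruction cost.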

Modern deep learning frameworks, e.g. ConvNet2 [7], Theano with the lightweight extensions Lasagne and Keras [8-10], Torch7 [11], Caffe [12], TensorFlow [13] and others, have become very popular tools in deep learning research since they provide fast deployment of state-of-the-art deep learning models along with state-of-the-art training algorithms (Stochastic Gradient Descent, AdaDelta, etc.), allowing rapid research progress and emerging commercial applications. Moreover, these frameworks implement many state-of-the-art approaches to network initialization, parametrization and regularization, as well as state-of-the-art example models. Among its many outstanding features, we have chosen the Caffe deep learning framework [12] mainly for two reasons: (i) the description of a deep NN is straightforward, being just a text file describing the layers, and (ii) Caffe has a Matlab wrapper, which is very convenient and allows Caffe results to be loaded directly into a Matlab workspace for further processing (visualization, etc.) [12].
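To give a feel for what such a text description looks like, the sketch below assembles two layer entries in Caffe's .prototxt layout from plain Python. The helper `render_layer` is hypothetical and not part of Caffe; the layer names, filter counts and kernel sizes are illustrative assumptions and are not taken from the models in this paper. Pooling and unpooling layers are omitted for brevity.

```python
# Hypothetical helper (not a Caffe API): renders one layer entry as .prototxt text.
def render_layer(name, layer_type, bottom, top, params=None):
    lines = [
        "layer {",
        f'  name: "{name}"',
        f'  type: "{layer_type}"',
        f'  bottom: "{bottom}"',
        f'  top: "{top}"',
    ]
    if params:
        block_name, fields = params
        body = " ".join(f"{key}: {value}" for key, value in fields.items())
        lines.append(f"  {block_name} {{ {body} }}")
    lines.append("}")
    return "\n".join(lines)


# Assumed sizes: a 9x9 convolution in the encoder, mirrored by a 9x9
# deconvolution in the decoder that maps back to a single output channel.
layers = [
    render_layer("conv1", "Convolution", "data", "conv1",
                 ("convolution_param", {"num_output": 8, "kernel_size": 9})),
    render_layer("deconv1", "Deconvolution", "conv1", "deconv1",
                 ("convolution_param", {"num_output": 1, "kernel_size": 9})),
]
prototxt_text = "\n".join(layers)
print(prototxt_text)
```

Each `layer { ... }` entry names its input (`bottom`) and output (`top`) blobs, which is how the text file wires the layers together.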

The goal of this paper is to present the practical implementation of several CAE models in the Caffe deep learning framework, as well as experimental results on solving an unsupervised clustering task using the MNIST dataset. This study is an extended version of our paper published on arXiv [14]. All developed Caffe .prototxt files needed to reproduce our models, along with Matlab-based visualization scripts, are included in the supplementary materials. The paper is organized as fo

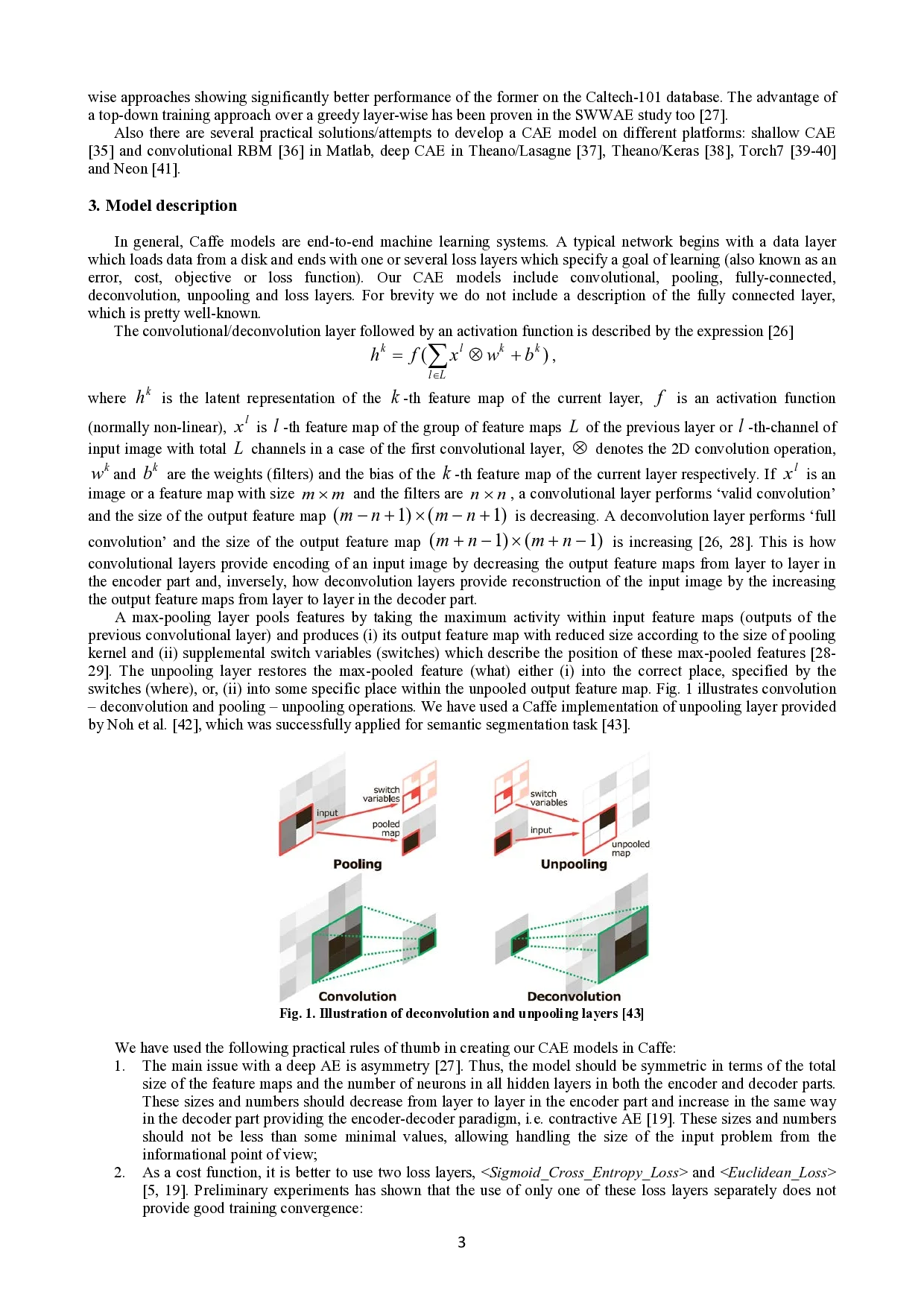

…(Full text truncated)…
