Deep Memory Networks for Attitude Identification

We consider the task of identifying attitudes towards a given set of entities from text. Conventionally, this task is decomposed into two separate subtasks: target detection that identifies whether each entity is mentioned in the text, either explicitly or implicitly, and polarity classification that classifies the exact sentiment towards an identified entity (the target) into positive, negative, or neutral. Instead, we show that attitude identification can be solved with an end-to-end machine learning architecture, in which the two subtasks are interleaved by a deep memory network. In this way, signals produced in target detection provide clues for polarity classification, and reversely, the predicted polarity provides feedback to the identification of targets. Moreover, the treatments for the set of targets also influence each other – the learned representations may share the same semantics for some targets but vary for others. The proposed deep memory network, the AttNet, outperforms methods that do not consider the interactions between the subtasks or those among the targets, including conventional machine learning methods and the state-of-the-art deep learning models.

💡 Research Summary

The paper “Deep Memory Networks for Attitude Identification” introduces AttNet, a novel end‑to‑end neural architecture that jointly solves target detection (TD) and polarity classification (PC) for attitude identification. Traditional pipelines treat these two subtasks separately: first a classifier decides whether a given entity (target) appears in a text (explicitly or implicitly), then a second classifier predicts the sentiment polarity (positive, negative, neutral) toward that target. This separation ignores two important sources of information: (1) signals from TD can guide PC (e.g., the presence of a target narrows the search space for sentiment words), and (2) polarity cues can provide indirect supervision for TD, especially when the target is mentioned implicitly. Moreover, existing methods rarely model interactions among multiple targets that may co‑occur in the same document.

AttNet addresses these gaps by interleaving TD and PC within a deep memory‑network framework. Each target is represented by a one‑hot vector and embedded via a shared matrix B. For a given context, word tokens are also one‑hot encoded and embedded. In the TD stage, a content‑based attention computes match scores between the target embedding and each word embedding, producing a weighted sum that serves as a context representation. This representation is concatenated with the binary TD output and fed into the PC stage, where a second attention layer (conditioned on the TD attention) focuses on sentiment‑bearing words. Crucially, the loss from PC is back‑propagated through the TD component, creating a bidirectional feedback loop.

A key innovation is the introduction of target‑specific projection matrices. These matrices transform word embeddings differently for each target, allowing some words to share a common semantic representation across targets while others retain target‑specific nuances. This mechanism captures the fact that, for example, “fast” may be positive for a service target but neutral for a product target.

The authors extend the single‑layer design to multiple stacked layers, deepening the model’s capacity to refine attention iteratively. Each layer’s output serves as a pre‑condition for the next layer’s attention, enabling progressively more precise alignment between targets and sentiment cues.

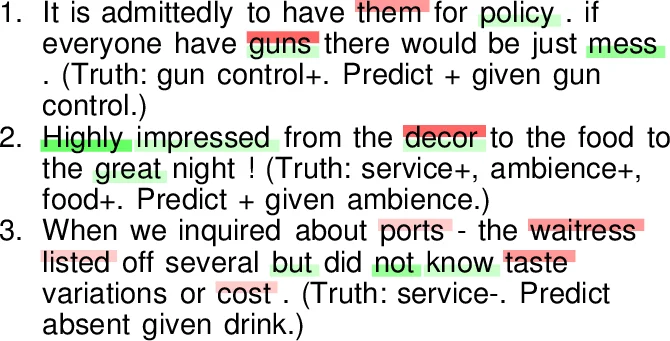

Experiments were conducted on two public datasets: a Twitter‑style opinion dataset and a product‑review corpus. AttNet was compared against strong baselines, including SVMs with hand‑crafted features, single‑layer memory networks, LSTM/GRU models, and recent Transformer‑based classifiers. Results show that AttNet consistently outperforms all baselines, achieving average gains of 4–7 percentage points in both target detection accuracy and polarity F1 score. The improvement is especially pronounced for implicitly mentioned targets, where traditional pipelines suffer large drops. Visualization of attention weights confirms that TD attention focuses on target‑related tokens, while PC attention concentrates on surrounding sentiment words, validating the intended interaction.

The paper’s contributions are threefold: (1) a unified architecture that tightly couples TD and PC through bidirectional feedback, (2) target‑specific projection matrices that enable shared and distinct semantic representations across multiple targets, and (3) a multi‑layer memory network that deepens the interaction modeling. Limitations include the need for a predefined target set (the number of projection matrices grows linearly with target count) and computational cost for very long documents due to the O(N) attention over all tokens.

Future work suggested includes dynamic target generation using external knowledge bases, memory‑efficient attention mechanisms for long texts, and extensions to multimodal data for real‑world opinion monitoring. Overall, AttNet represents a significant step forward in fine‑grained sentiment analysis, offering a more holistic and accurate solution for attitude identification in diverse textual domains.

Comments & Academic Discussion

Loading comments...

Leave a Comment