Attention Allocation Aid for Visual Search

This paper outlines the development and testing of a novel, feedback-enabled attention allocation aid (AAAD), which uses real-time physiological data to improve human performance in a realistic sequential visual search task. By optimizing search duration, the aid improves efficiency while preserving decision accuracy as the operator identifies and classifies targets within simulated aerial imagery. Specifically, using experimental eye-tracking data and measurements of target detectability across the human visual field, we develop functional models of detection accuracy as a function of search time, number of eye movements, scan path, and image clutter. The AAAD then uses these models, in conjunction with real-time eye position data, to make probabilistic estimates of attained search accuracy and to recommend that the observer either move on to the next image or continue exploring the present image. An experimental evaluation in a scenario motivated by human supervisory control in surveillance missions confirms the benefits of the AAAD.

💡 Research Summary

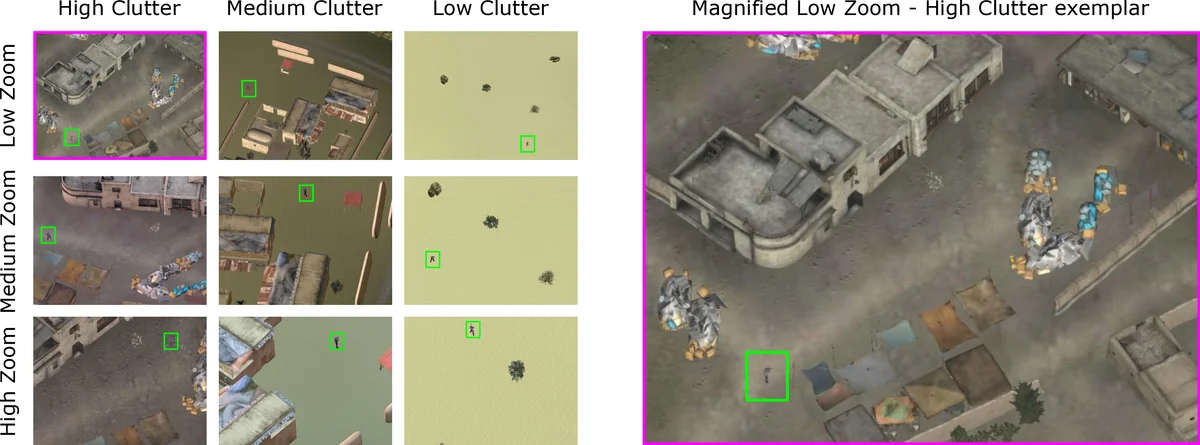

The paper presents the design, implementation, and experimental validation of a real‑time Attention Allocation Aid for Visual Search (AAAD), a decision‑support tool that uses eye‑tracking data to determine when a human operator should terminate or continue a visual search task. The motivation stems from modern surveillance and reconnaissance missions where operators must sift through massive streams of aerial imagery, often leading to information overload and inefficient allocation of visual attention. The AAAD addresses this by continuously monitoring three key metrics: (1) elapsed search time, (2) the number of saccadic eye movements, and (3) a probabilistic estimate of target detectability derived from pre‑measured detectability surfaces that capture how detection performance varies with retinal eccentricity, image clutter, and zoom level.

To build these models, the authors conducted two preliminary psychophysical experiments. In the forced-fixation experiment, participants maintained central fixation while stimuli were presented at varying eccentricities (1°, 4°, 9°, 15°) and exposure durations (100–1600 ms). This allowed the authors to map detection accuracy as a function of eccentricity and time and to generate a detectability surface for each combination of clutter and zoom. In the free-search experiment, participants moved their eyes freely while searching for people and weapons in the same set of videos, providing data to construct performance-perceptual curves (PPCs) that link search time, saccade count, and detectability scores to accuracy. These PPCs identify an asymptotic "search satisfaction time" beyond which additional viewing yields diminishing returns.
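The idea of an asymptotic search satisfaction time can be made concrete with a small sketch. It assumes, purely for illustration, that accuracy follows an exponential-saturation curve in search time; the functional form, the parameter names (`p0`, `p_max`, `tau`, `eps`), and all numeric values are hypothetical and are not the paper's fitted PPCs.

```python
import math


def ppc_accuracy(t, p0=0.5, p_max=0.9, tau=3.0):
    """Hypothetical saturating curve: accuracy after t seconds of search.

    p0 is accuracy at t = 0, p_max is the asymptote, tau is a time constant.
    """
    return p_max - (p_max - p0) * math.exp(-t / tau)


def satisfaction_time(p0=0.5, p_max=0.9, tau=3.0, eps=0.01):
    """Earliest time at which accuracy is within eps of its asymptote."""
    gap = p_max - p0
    if gap <= eps:
        return 0.0  # already within eps of the asymptote at t = 0
    # Solve p_max - gap * exp(-t / tau) = p_max - eps for t.
    return tau * math.log(gap / eps)


t_star = satisfaction_time()
print(f"stop after about {t_star:.1f} s, accuracy {ppc_accuracy(t_star):.3f}")
```

Under this assumed form, searching past `t_star` can raise accuracy by at most `eps`, which is one way to read "diminishing returns" quantitatively.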

The AAAD algorithm ingests real‑time gaze coordinates from an EyeLink 1000 system (1 kHz sampling). At each moment it updates a cumulative evidence map by projecting the current fixation onto the pre‑computed detectability surface, weighting by clutter and zoom. Using a Bayesian estimator, it computes the probability that the accumulated evidence has reached the asymptotic accuracy threshold. When this probability exceeds a preset confidence level, the system displays a simple visual cue indicating that the operator may move on to the next image; if the probability remains low or unexplored regions are detected, the cue encourages continued inspection. Importantly, the aid does not provide saliency or fixation cues; it functions purely as a back‑end optimizer of search duration.
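The accumulate-then-threshold logic described above can be sketched as follows. This is not the paper's estimator: it swaps in a simple independent-glimpse model in which each fixation independently reduces the probability that a target at a given location has been missed, with a uniform prior over target location. The exponential eccentricity falloff, the grid representation, and every name and constant (`detectability`, `update_miss_map`, `recommend`, `peak`, `falloff_deg`, `confidence`) are assumptions made for illustration; clutter and zoom weighting are omitted.

```python
import math


def detectability(ecc_deg, peak=0.9, falloff_deg=4.0):
    """Hypothetical single-fixation detection probability at an eccentricity."""
    return peak * math.exp(-ecc_deg / falloff_deg)


def update_miss_map(miss_map, fixation, deg_per_cell=1.0):
    """Scale each cell's miss probability by (1 - detectability) for one fixation."""
    fx, fy = fixation
    for y, row in enumerate(miss_map):
        for x in range(len(row)):
            ecc = math.hypot(x - fx, y - fy) * deg_per_cell
            row[x] *= 1.0 - detectability(ecc)
    return miss_map


def recommend(miss_map, confidence=0.8):
    """Return (cue, coverage); coverage averages over a uniform location prior."""
    cells = [m for row in miss_map for m in row]
    coverage = 1.0 - sum(cells) / len(cells)
    cue = "move on" if coverage >= confidence else "keep searching"
    return cue, coverage


# Usage: a 10x10 grid of image locations, updated after each fixation.
miss = [[1.0] * 10 for _ in range(10)]
for fix in [(2, 2), (7, 2), (2, 7), (7, 7), (5, 5)]:
    update_miss_map(miss, fix)
    cue, coverage = recommend(miss)
    print(fix, cue, round(coverage, 3))
```

Keeping a per-location miss probability, rather than a single scalar, is what lets unexplored regions hold the estimate down until the gaze has actually visited them.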

The system was evaluated in a simulated surveillance scenario designed to mimic human supervisory control of unmanned aerial vehicles. A corpus of 273 rotating aerial videos was segmented into 1,440 short clips (each 120 s) with balanced presence/absence of people and weapons, and varying levels of zoom (high, medium, low) and clutter (high, medium, low). Eleven participants performed the search task under two conditions: with AAAD feedback and without. Eye movements were recorded, and performance metrics—average search time, number of clips processed per unit time, detection accuracy (hit rate, false‑alarm rate), and subjective confidence—were collected.

Results showed that the AAAD reduced average search time by roughly 33 % and increased the number of clips processed per unit time by a factor of 1.5, without any statistically significant change in detection accuracy. Participants reported that the feedback was intuitive and that it reduced perceived workload. These findings demonstrate that a real‑time, physiology‑driven termination cue can substantially improve throughput in sequential visual search tasks while preserving decision quality.
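The two headline numbers are consistent with each other, as a quick check shows. The sketch assumes that time per clip is dominated by search time, with no fixed overhead between clips; the function name `throughput_gain` is introduced here for illustration only.

```python
def throughput_gain(time_reduction):
    """Throughput multiplier when time per clip shrinks by the given fraction."""
    if not 0.0 <= time_reduction < 1.0:
        raise ValueError("time_reduction must be in [0, 1)")
    # Throughput is clips per unit time, i.e. the reciprocal of time per clip.
    return 1.0 / (1.0 - time_reduction)


print(round(throughput_gain(0.33), 2))
```

A one-third reduction in time per clip corresponds to roughly one and a half times as many clips per unit time, matching the reported factor.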

Key contributions of the work include: (1) a quantitative model that fuses eye-movement dynamics with spatially varying detectability, (2) the creation of clutter- and zoom-aware detectability surfaces, (3) a probabilistic decision framework for estimating when asymptotic accuracy has been reached, and (4) empirical evidence that a "when-to-stop" aid can improve search efficiency without relying on traditional "where-to-look" saliency-based cues.

Limitations are acknowledged. The experiments involved a modest number of participants and synthetic video clips; real‑world deployment would require user‑specific calibration of detectability surfaces and validation across diverse sensor modalities (e.g., infrared, radar). The current system also assumes a single target class and binary decision; extending it to multi‑target, multi‑class, or multi‑modal scenarios remains an open challenge. Future work should explore long‑term effects on cognitive load, adaptation to fatigue, and integration with higher‑level mission planning tools. Nonetheless, the AAAD represents a promising step toward physiologically informed human‑machine collaboration in high‑stakes visual monitoring environments.
