Improving Sampling from Generative Autoencoders with Markov Chains

We focus on generative autoencoders, such as variational or adversarial autoencoders, which jointly learn a generative model alongside an inference model. Generative autoencoders are those which are trained to softly enforce a prior on the latent dis…

Authors: Antonia Creswell, Kai Arulkumaran, Anil Anthony Bharath

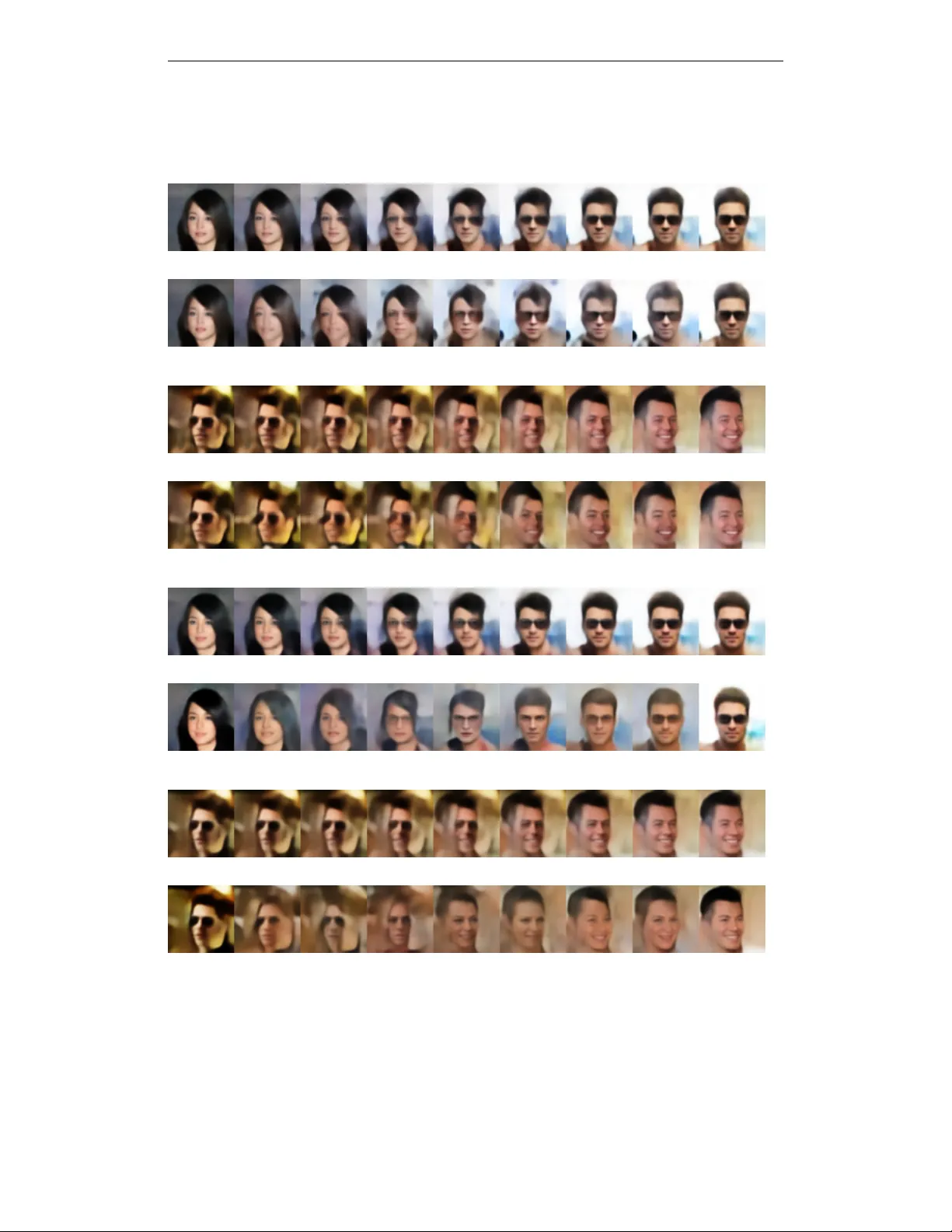

Under review as a conference paper at ICLR 2017

Antonia Creswell, Kai Arulkumaran & Anil A. Bharath
Department of Bioengineering, Imperial College London, London SW7 2BP, UK
{ac2211,ka709,aab01}@ic.ac.uk

ABSTRACT

We focus on generative autoencoders, such as variational or adversarial autoencoders, which jointly learn a generative model alongside an inference model. Generative autoencoders are those which are trained to softly enforce a prior on the latent distribution learned by the inference model. We call the distribution to which the inference model maps observed samples the learned latent distribution, which may not be consistent with the prior. We formulate a Markov chain Monte Carlo (MCMC) sampling process, equivalent to iteratively decoding and encoding, which allows us to sample from the learned latent distribution. Since the generative model learns to map from the learned latent distribution, rather than the prior, we may use MCMC to improve the quality of samples drawn from the generative model, especially when the learned latent distribution is far from the prior. Using MCMC sampling, we are able to reveal previously unseen differences between generative autoencoders trained either with or without a denoising criterion.

1 INTRODUCTION

Unsupervised learning has benefited greatly from the introduction of deep generative models. In particular, the introduction of generative adversarial networks (GANs) (Goodfellow et al., 2014) and variational autoencoders (VAEs) (Kingma & Welling, 2014; Rezende et al., 2014) has led to a plethora of research into learning latent variable models that are capable of generating data from complex distributions, including the space of natural images (Radford et al., 2015).
Both of these models, and their extensions, operate by placing a prior distribution, $P(Z)$, over a latent space $Z \subseteq \mathbb{R}^b$, and learn mappings from the latent space, $Z$, to the space of the observed data, $X \subseteq \mathbb{R}^a$. We are interested in autoencoding generative models, models which learn not just the generative mapping $Z \mapsto X$, but also the inferential mapping $X \mapsto Z$. Specifically, we define generative autoencoders as autoencoders which softly constrain their latent distribution to match a specified prior distribution, $P(Z)$. This is achieved by minimising a loss, $\mathcal{L}_{prior}$, between the latent distribution and the prior. This includes VAEs (Kingma & Welling, 2014; Rezende et al., 2014), extensions of VAEs (Kingma et al., 2016), and also adversarial autoencoders (AAEs) (Makhzani et al., 2015). Whilst other autoencoders also learn an encoding function, $e : \mathbb{R}^a \to Z$, together with a decoding function, $d : \mathbb{R}^b \to X$, the latent space is not necessarily constrained to conform to a specified probability distribution. This is the key distinction for generative autoencoders; both $e$ and $d$ can still be deterministic functions (Makhzani et al., 2015).

The functions $e$ and $d$ are defined for any input from $\mathbb{R}^a$ and $\mathbb{R}^b$ respectively; however, the outputs of the functions may be constrained practically by the type of functions that $e$ and $d$ are, such that $e$ maps to $Z \subseteq \mathbb{R}^b$ and $d$ maps to $X \subseteq \mathbb{R}^a$. During training, however, the encoder, $e$, is only fed with training data samples, $x \in X$, and the decoder, $d$, is only fed with samples from the encoder, $z \in Z$, and so the encoder and decoder learn mappings between $X$ and $Z$.

The process of encoding and decoding may be interpreted as sampling the conditional probabilities $Q_\phi(Z|X)$ and $P_\theta(X|Z)$ respectively.
The conditional distributions may be sampled using the encoding and decoding functions $e(X; \phi)$ and $d(Z; \theta)$, where $\phi$ and $\theta$ are learned parameters of the encoding and decoding functions respectively. The decoder of a generative autoencoder may be used to generate new samples that are consistent with the data. There are two traditional approaches for sampling generative autoencoders:

Approach 1 (Bengio et al., 2014):

$$x_0 \sim P(X), \quad z_0 \sim Q_\phi(Z|X = x_0), \quad x_1 \sim P_\theta(X|Z = z_0)$$

where $P(X)$ is the data generating distribution. However, this approach is likely to generate samples similar to those in the training data, rather than generating novel samples that are consistent with the training data.

Approach 2 (Kingma & Welling, 2014; Makhzani et al., 2015; Rezende et al., 2014):

$$z_0 \sim P(Z), \quad x_0 \sim P_\theta(X|Z = z_0)$$

where $P(Z)$ is the prior distribution enforced during training and $P_\theta(X|Z)$ is the decoder trained to map samples drawn from $Q_\phi(Z|X)$ to samples consistent with $P(X)$. This approach assumes that $\int Q_\phi(Z|X) P(X)\, dX = P(Z)$, suggesting that the encoder maps all data samples from $P(X)$ to a distribution that matches the prior distribution, $P(Z)$. However, it is not always true that $\int Q_\phi(Z|X) P(X)\, dX = P(Z)$. Rather, $Q_\phi(Z|X)$ maps data samples to a distribution which we call $\hat{P}(Z)$:

$$\int Q_\phi(Z|X) P(X)\, dX = \hat{P}(Z)$$

where it is not necessarily true that $\hat{P}(Z) = P(Z)$ because the prior is only softly enforced.

The decoder, on the other hand, is trained to map encoded data samples (i.e. samples from $\int Q_\phi(Z|X) P(X)\, dX$) to samples from $X$ which have the distribution $P(X)$.
If the encoder maps observed samples to latent samples with the distribution $\hat{P}(Z)$, rather than the desired prior distribution, $P(Z)$, then:

$$\int P_\theta(X|Z) P(Z)\, dZ \neq P(X)$$

This suggests that samples drawn from the decoder, $P_\theta(X|Z)$, conditioned on samples drawn from the prior, $P(Z)$, may not be consistent with the data generating distribution, $P(X)$. However, by conditioning on $\hat{P}(Z)$:

$$\int P_\theta(X|Z) \hat{P}(Z)\, dZ = P(X)$$

This suggests that to obtain more realistic generations, latent samples should be drawn via $z \sim \hat{P}(Z)$ rather than $z \sim P(Z)$, followed by $x \sim P_\theta(X|Z)$. A limited number of latent samples may be drawn from $\hat{P}(Z)$ using the first two steps in Approach 1; however, this has the drawbacks discussed in Approach 1. We introduce an alternative method for sampling from $\hat{P}(Z)$ which does not have the same drawbacks.

Our main contribution is the formulation of a Markov chain Monte Carlo (MCMC) sampling process for generative autoencoders, which allows us to sample from $\hat{P}(Z)$. By iteratively sampling the chain, starting from an arbitrary $z_{t=0} \in \mathbb{R}^b$, the chain converges to $z_{t \to \infty} \sim \hat{P}(Z)$, allowing us to draw latent samples from $\hat{P}(Z)$ after several steps of MCMC sampling. From a practical perspective, this is achieved by iteratively decoding and encoding, which may be easily applied to existing generative autoencoders. Because $\hat{P}(Z)$ is optimised to be close to $P(Z)$, the initial sample, $z_{t=0}$, can be drawn from $P(Z)$, improving the quality of the samples within a few iterations.

When interpolating between latent encodings, there is no guarantee that $z$ stays within high density regions of $\hat{P}(Z)$. Previously, this has been addressed by using spherical, rather than linear, interpolation of the high dimensional $Z$ space (White, 2016).
However, this approach attempts to keep $z$ within $P(Z)$, rather than trying to sample from $\hat{P}(Z)$.

Figure 1: $P(X)$ is the data generating distribution. We may access some samples from $P(X)$ by drawing samples from the training data. $Q_\phi(Z|X)$ is the conditional distribution, modeled by an encoder, which maps samples from $\mathbb{R}^a$ to samples in $\mathbb{R}^b$. An ideal encoder maps samples from $P(X)$ to a known, prior distribution $P(Z)$: in reality the encoder maps samples from $P(X)$ to an unknown distribution $\hat{P}(Z)$. $P_\theta(X|Z)$ is a conditional distribution, modeled by a decoder, which maps samples from $\mathbb{R}^b$ to $\mathbb{R}^a$. During training the decoder learns to map samples drawn from $\hat{P}(Z)$ to $P(X)$ rather than samples drawn from $P(Z)$ because the decoder only sees samples from $\hat{P}(Z)$. Regularisation on the latent space only encourages $\hat{P}(Z)$ to be close to $P(Z)$. Note that if $\mathcal{L}_{prior}$ is optimal, then $\hat{P}(Z)$ overlaps fully with $P(Z)$.

Figure 2 panels: (a) VAE (initial), (b) VAE (5 steps), (c) VAE (initial), (d) VAE (5 steps).

Figure 2: Prior work: Spherically interpolating (White, 2016) between two faces using a VAE (a, c). In (a), the attempt to gradually generate sunglasses results in visual artifacts around the eyes. In (c), the model fails to properly capture the desired change in orientation of the face, resulting in three partial faces in the middle of the interpolation. This work: (b) and (d) are the result of 5 steps of MCMC sampling applied to the latent samples that were used to generate the original interpolations, (a) and (c). In (b), the discolouration around the eyes disappears, with the model settling on either generating or not generating glasses. In (d), the model moves away from multiple faces in the interpolation by producing new faces with appropriate orientations.

By instead applying several steps of MCMC sampling to the interpolated $z$ samples before sampling $P_\theta(X|Z)$, unrealistic artifacts can be reduced (see Figure 2). Whilst most methods that aim to generate realistic samples from $X$ rely on adjusting encodings of the observed data (White, 2016), our use of MCMC allows us to walk any latent sample to more probable regions of the learned latent distribution, resulting in more convincing generations. We demonstrate that the use of MCMC sampling improves generations from both VAEs and AAEs with high-dimensional $Z$; this is important as previous studies have shown that the dimensionality of $Z$ should be scaled with the intrinsic latent dimensionality of the observed data.

Our second contribution is the modification of the proposed transition operator for the MCMC sampling process to denoising generative autoencoders. These are generative autoencoders trained using a denoising criterion (Seung, 1997; Vincent et al., 2008). We reformulate our original MCMC sampling process to incorporate the noising and denoising processes, allowing us to use MCMC sampling on denoising generative autoencoders. We apply this sampling technique to two models. The first is the denoising VAE (DVAE) introduced by Im et al. (2015). We found that MCMC sampling revealed benefits of the denoising criterion. The second model is a denoising AAE (DAAE), constructed by applying the denoising criterion to the AAE. There were no modifications to the cost function. For both the DVAE and the DAAE, the effects of the denoising criterion were not immediately obvious from the initial samples. Training generative autoencoders with a denoising criterion reduced visual artefacts found both in generations and in interpolations. The effect of the denoising criterion was revealed when sampling the denoising models using MCMC sampling.
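
To make the central claim of this introduction concrete, the following is a minimal sketch on a one-dimensional linear-Gaussian toy model, not on a trained network. All names and constants (`A`, `S`, `encode`, `decode`, `run_chains`) are assumptions introduced for illustration. The data is taken to be $x \sim \mathcal{N}(0, 1)$, the hypothetical encoder is $z = A x + S\epsilon$, and the decoder is assumed to be the exact Gaussian posterior of $x$ given $z$, an idealisation that a trained decoder would only approximate. Under these assumptions $\hat{P}(Z) = \mathcal{N}(0, A^2 + S^2)$, which is narrower than the prior $\mathcal{N}(0, 1)$, and repeated decoding and encoding should move prior samples towards $\hat{P}(Z)$.

```python
import random
import statistics

# Hypothetical toy model (not the paper's networks):
# x ~ N(0, 1) and z | x ~ N(A * x, S^2).
A, S = 0.6, 0.3
VAR_Z_HAT = A * A + S * S  # variance of the learned latent distribution (0.45)


def encode(x, rng):
    """Sample z ~ Q(Z | X = x) for the toy encoder."""
    return A * x + S * rng.gauss(0.0, 1.0)


def decode(z, rng):
    """Sample x ~ P(X | Z = z), here the exact Gaussian posterior (idealised decoder)."""
    mean = A * z / VAR_Z_HAT
    std = S / VAR_Z_HAT ** 0.5
    return mean + std * rng.gauss(0.0, 1.0)


def run_chains(n_chains=20000, n_steps=10, seed=0):
    """Start every chain at z_0 ~ P(Z) = N(0, 1); return var(z) after each step."""
    rng = random.Random(seed)
    zs = [rng.gauss(0.0, 1.0) for _ in range(n_chains)]
    variances = [statistics.pvariance(zs)]
    for _ in range(n_steps):
        # One MCMC step: decode the latent sample, then re-encode it.
        zs = [encode(decode(z, rng), rng) for z in zs]
        variances.append(statistics.pvariance(zs))
    return variances


if __name__ == "__main__":
    for step, var in enumerate(run_chains()):
        print(f"step {step:2d}: var(z) = {var:.3f} (target {VAR_Z_HAT:.3f})")
```

In this toy setting the empirical variance of the latent samples starts near 1 (the prior) and decays towards $A^2 + S^2$; how quickly a real model behaves this way depends on how well its decoder matches the encoder.
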

2 BACKGROUND

One of the main tasks in machine learning is to learn explanatory factors for observed data, commonly known as inference. That is, given a data sample $x \in X \subseteq \mathbb{R}^a$, we would like to find a corresponding latent encoding $z \in Z \subseteq \mathbb{R}^b$. Another task is to learn the inverse, generative mapping from a given $z$ to a corresponding $x$. In general, coming up with a suitable criterion for learning these mappings is difficult. Autoencoders solve both tasks efficiently by jointly learning an inferential mapping $e(X; \phi)$ and generative mapping $d(Z; \theta)$, using unlabelled data from $X$ in a self-supervised fashion (Kingma & Welling, 2014). The basic objective of all autoencoders is to minimise a reconstruction cost, $\mathcal{L}_{reconstruct}$, between the original data, $X$, and its reconstruction, $d(e(X; \phi); \theta)$. Examples of $\mathcal{L}_{reconstruct}$ include the squared error loss,

$$\frac{1}{2} \sum_{n=1}^{N} \| d(e(x_n; \phi); \theta) - x_n \|^2,$$

and the cross-entropy loss,

$$H[P(X) \,\|\, P(d(e(X; \phi); \theta))] = -\sum_{n=1}^{N} \left[ x_n \log(d(e(x_n; \phi); \theta)) + (1 - x_n) \log(1 - d(e(x_n; \phi); \theta)) \right].$$

Autoencoders may be cast into a probabilistic framework, by considering samples $x \sim P(X)$ and $z \sim P(Z)$, and attempting to learn the conditional distributions $Q_\phi(Z|X)$ and $P_\theta(X|Z)$ as $e(X; \phi)$ and $d(Z; \theta)$ respectively, with $\mathcal{L}_{reconstruct}$ representing the negative log-likelihood of the reconstruction given the encoding (Bengio, 2009). With any autoencoder, it is possible to create novel $x \in X$ by passing a $z \in Z$ through $d(Z; \theta)$, but we have no knowledge of appropriate choices of $z$ beyond those obtained via $e(X; \phi)$. One solution is to constrain the latent space to which the encoding model maps observed samples. This can be achieved by an additional loss, $\mathcal{L}_{prior}$, that penalises encodings far away from a specified prior distribution, $P(Z)$.
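
The two reconstruction costs named above can be sketched in a few lines of plain Python. This is an illustrative sketch only: the function names are hypothetical, the sums run over the elements of a single flattened example rather than over a dataset, and the `eps` clamp is an assumption added to avoid evaluating $\log(0)$.

```python
import math


def squared_error(x, x_hat):
    """0.5 * sum_n (x_hat_n - x_n)^2 over the flattened elements."""
    return 0.5 * sum((r - t) ** 2 for t, r in zip(x, x_hat))


def cross_entropy(x, x_hat, eps=1e-12):
    """-sum_n [x_n * log(x_hat_n) + (1 - x_n) * log(1 - x_hat_n)].

    `eps` is an assumption of this sketch (numerical safety), not part of the formula.
    """
    total = 0.0
    for t, r in zip(x, x_hat):
        r = min(max(r, eps), 1.0 - eps)  # keep the reconstruction inside (0, 1)
        total += t * math.log(r) + (1.0 - t) * math.log(1.0 - r)
    return -total
```

Here `x` plays the role of the original data and `x_hat` the reconstruction $d(e(x; \phi); \theta)$; the cross-entropy form assumes both lie in $[0, 1]$, as with sigmoid outputs.
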
We now review two types of generative autoencoders, VAEs (Kingma & Welling, 2014; Rezende et al., 2014) and AAEs (Makhzani et al., 2015), which each take different approaches to formulating $\mathcal{L}_{prior}$.

Figure 3: Reconstructions of faces from a DVAE trained with additive Gaussian noise: $Q(\tilde{X}|X) = \mathcal{N}(X, 0.25I)$. The model successfully recovers much of the detail from the noise-corrupted images.

2.1 GENERATIVE AUTOENCODERS

Consider the case where $e$ is constructed with stochastic neurons that can produce outputs from a specified probability distribution, and $\mathcal{L}_{prior}$ is used to constrain the distribution of outputs to $P(Z)$. This leaves the problem of estimating the gradient of the autoencoder over the expectation $\mathbb{E}_{Q_\phi(Z|X)}$, which would typically be addressed with a Monte Carlo method. VAEs sidestep this by constructing latent samples using a deterministic function and a source of noise, moving the source of stochasticity to an input, and leaving the network itself deterministic for standard gradient calculations, a technique commonly known as the reparameterisation trick (Kingma & Welling, 2014). $e(X; \phi)$ then consists of a deterministic function, $e_{rep}(X; \phi)$, that outputs parameters for a probability distribution, plus a source of noise. In the case where $P(Z)$ is a diagonal covariance Gaussian, $e_{rep}(X; \phi)$ maps $x$ to a vector of means, $\mu \in \mathbb{R}^b$, and a vector of standard deviations, $\sigma \in \mathbb{R}^b_+$, with the noise $\epsilon \sim \mathcal{N}(0, I)$. Put together, the encoder outputs samples $z = \mu + \sigma \odot \epsilon$, where $\odot$ is the Hadamard product. VAEs attempt to make these samples from the encoder match up with $P(Z)$ by using the KL divergence between the parameters for a probability distribution outputted by $e_{rep}(X; \phi)$, and the parameters for the prior distribution, giving $\mathcal{L}_{prior} = D_{KL}[Q_\phi(Z|X) \,\|\, P(Z)]$.
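
As a small illustration of the reparameterised encoder output and the KL term, the sketch below draws $z = \mu + \sigma \odot \epsilon$ and evaluates the standard closed-form KL divergence between a diagonal Gaussian and a unit Gaussian prior. The function names are hypothetical, and the vectors `mu` and `sigma` stand in for the outputs of $e_{rep}(X; \phi)$, which are simply passed in as lists here.

```python
import math
import random


def reparameterise(mu, sigma, rng):
    """Return z = mu + sigma * eps (elementwise), with eps ~ N(0, I)."""
    return [m + s * rng.gauss(0.0, 1.0) for m, s in zip(mu, sigma)]


def kl_to_unit_gaussian(mu, sigma):
    """KL[N(mu, diag(sigma^2)) || N(0, I)] = 0.5 * sum(mu^2 + sigma^2 - log(sigma^2) - 1).

    Assumes every entry of sigma is strictly positive.
    """
    return 0.5 * sum(m * m + s * s - math.log(s * s) - 1.0 for m, s in zip(mu, sigma))


if __name__ == "__main__":
    rng = random.Random(0)
    mu, sigma = [0.0, 1.0], [1.0, 0.5]
    print("z  =", reparameterise(mu, sigma, rng))
    print("KL =", kl_to_unit_gaussian(mu, sigma))
```

Because the randomness enters only through `eps`, the sample is a deterministic function of `mu` and `sigma`, which is what allows standard gradient calculations in a framework with automatic differentiation.
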
A multivariate Gaussian has an analytical KL divergence that can be further simplified when considering the unit Gaussian, resulting in

$$\mathcal{L}_{prior} = \frac{1}{2} \sum_{n=1}^{N} \left( \mu^2 + \sigma^2 - \log(\sigma^2) - 1 \right).$$

Another approach is to deterministically output the encodings $z$. Rather than minimising a metric between probability distributions using their parameters, we can turn this into a density ratio estimation problem where the goal is to learn a conditional distribution, $Q_\phi(Z|X)$, such that the distribution of the encoded data samples, $\hat{P}(Z) = \int Q_\phi(Z|X) P(X)\, dX$, matches the prior distribution, $P(Z)$. The GAN framework solves this density ratio estimation problem by transforming it into a class estimation problem using two networks (Goodfellow et al., 2014). The first network in GAN training is the discriminator network, $D_\psi$, which is trained to maximise the log probability of samples from the "real" distribution, $z \sim P(Z)$, and minimise the log probability of samples from the "fake" distribution, $z \sim Q_\phi(Z|X)$. In our case $e(X; \phi)$ plays the role of the second network, the generator network, $G_\phi$, which generates the "fake" samples.¹ The two networks compete in a minimax game, where $G_\phi$ receives gradients from $D_\psi$ such that it learns to better fool $D_\psi$. The training objective for both networks is given by

$$\begin{aligned}
\mathcal{L}_{prior} &= \operatorname{argmin}_\phi \operatorname{argmax}_\psi \; \mathbb{E}_{P(Z)}[\log(D_\psi(Z))] + \mathbb{E}_{P(X)}[\log(1 - D_\psi(G_\phi(X)))] \\
&= \operatorname{argmin}_\phi \operatorname{argmax}_\psi \; \mathbb{E}_{P(Z)}[\log(D_\psi(Z))] + \mathbb{E}_{Q_\phi(Z|X)P(X)}[\log(1 - D_\psi(Z))].
\end{aligned}$$

This formulation can create problems during training, so instead $G_\phi$ is trained to minimise $-\log(D_\psi(G_\phi(X)))$, which provides the same fixed point of the dynamics of $G_\phi$ and $D_\psi$. The result of applying the GAN framework to the encoder of an autoencoder is the deterministic AAE (Makhzani et al., 2015).

¹ We adapt the variables to better fit the conventions used in the context of autoencoders.

2.2 DENOISING AUTOENCODERS

In a more general viewpoint, generative autoencoders fulfill the purpose of learning useful representations of the observed data. Another widely used class of autoencoders that achieve this are denoising autoencoders (DAEs), which are motivated by the idea that learned features should be robust to "partial destruction of the input" (Vincent et al., 2008). Not only does this require encoding the inputs, but capturing the statistical dependencies between the inputs so that corrupted data can be recovered (see Figure 3). DAEs are presented with a corrupted version of the input, $\tilde{x} \in \tilde{X}$, but must still reconstruct the original input, $x \in X$, where the noisy inputs are created through sampling $\tilde{x} \sim C(\tilde{X}|X)$, a corruption process. The denoising criterion, $\mathcal{L}_{denoise}$, can be applied to any type of autoencoder by replacing the straightforward reconstruction criterion, $\mathcal{L}_{reconstruct}(X, d(e(X; \phi); \theta))$, with the reconstruction criterion applied to noisy inputs: $\mathcal{L}_{reconstruct}(X, d(e(\tilde{X}; \phi); \theta))$. The encoder is now used to model samples drawn from $Q_\phi(Z|\tilde{X})$. As such, we can construct denoising generative autoencoders by training autoencoders to minimise $\mathcal{L}_{denoise} + \mathcal{L}_{prior}$.

One might expect to see differences in samples drawn from denoising generative autoencoders and their non-denoising counterparts. However, Figures 4 and 6 show that this is not the case. Im et al. (2015) address the case of DVAEs, claiming that the noise mapping requires adjusting the original VAE objective function. Our work is orthogonal to theirs, and others which adjust the training or model (Kingma et al., 2016), as we focus purely on sampling from generative autoencoders after training.
We claim that the existing practice of drawing samples from generative autoencoders conditioned on $z \sim P(Z)$ is suboptimal, and the quality of samples can be improved by instead conditioning on $z \sim \hat{P}(Z)$ via MCMC sampling.

3 MARKOV SAMPLING

We now consider the case of sampling from generative autoencoders, where $d(Z; \theta)$ is used to draw samples from $P_\theta(X|Z)$. In Section 1, we showed that it was important, when sampling $P_\theta(X|Z)$, to condition on $z$'s drawn from $\hat{P}(Z)$, rather than $P(Z)$ as is often done in practice. We now show that for any initial $z_0 \in Z_0 = \mathbb{R}^b$, Markov sampling can be used to produce a chain of samples $z_t$ such that, as $t \to \infty$, the samples $z_t$ are drawn from the distribution $\hat{P}(Z)$; these may be used to draw meaningful samples from $P_\theta(X|Z)$, conditioned on $z \sim \hat{P}(Z)$. To speed up convergence we can initialise $z_0$ from a distribution close to $\hat{P}(Z)$, by drawing $z_0 \sim P(Z)$.

3.1 MARKOV SAMPLING PROCESS

A generative autoencoder can be sampled by the following process:

$$z_0 \in Z_0 = \mathbb{R}^b, \quad x_{t+1} \sim P_\theta(X|Z_t), \quad z_{t+1} \sim Q_\phi(Z|X_{t+1})$$

This allows us to define a Markov chain with the transition operator

$$T(Z_{t+1}|Z_t) = \int Q_\phi(Z_{t+1}|X) P_\theta(X|Z_t)\, dX \tag{1}$$

for $t \geq 0$. Drawing samples according to the transition operator $T(Z_{t+1}|Z_t)$ produces a Markov chain. For the transition operator to be homogeneous, the parameters of the encoding and decoding functions are fixed during sampling.

3.2 CONVERGENCE PROPERTIES

We now show that the stationary distribution of sampling from the Markov chain is $\hat{P}(Z)$.

Theorem 1. If $T(Z_{t+1}|Z_t)$ defines an ergodic Markov chain, $\{Z_1, Z_2, \ldots, Z_t\}$, then the chain will converge to a stationary distribution, $\Pi(Z)$, from any arbitrary initial distribution. The stationary distribution $\Pi(Z) = \hat{P}(Z)$.
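
As a brief sketch of why $\hat{P}(Z)$ is a natural candidate for $\Pi(Z)$, assume that the identity stated in Section 1 holds exactly, i.e. $\int P_\theta(X|Z) \hat{P}(Z)\, dZ = P(X)$. This is an idealisation of a well-trained decoder rather than something guaranteed in practice. Applying the transition operator of Equation (1) to $\hat{P}(Z)$ then gives

$$\begin{aligned}
\int T(Z_{t+1}|Z_t)\, \hat{P}(Z_t)\, dZ_t
&= \int Q_\phi(Z_{t+1}|X) \left[ \int P_\theta(X|Z_t)\, \hat{P}(Z_t)\, dZ_t \right] dX \\
&= \int Q_\phi(Z_{t+1}|X)\, P(X)\, dX \\
&= \hat{P}(Z_{t+1}).
\end{aligned}$$

The first line substitutes Equation (1) and exchanges the order of integration, the second uses the assumed identity, and the third is the definition of $\hat{P}(Z)$. Under this assumption, one step of the chain leaves $\hat{P}(Z)$ unchanged.
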
The proof of Theorem 1 can be found in Rosenthal (2001).

Lemma 1. $T(Z_{t+1}|Z_t)$ defines an ergodic Markov chain.

Proof. For a Markov chain to be ergodic it must be both irreducible (it is possible to get from any state to any other state in a finite number of steps) and aperiodic (it is possible to get from any state to any other state without having to pass through a cycle). To satisfy these requirements, it is more than sufficient to show that $T(Z_{t+1}|Z_t) > 0$, since every $z \in Z$ would be reachable from every other $z \in Z$. We show that $P_\theta(X|Z) > 0$ and $Q_\phi(Z|X) > 0$, giving $T(Z_{t+1}|Z_t) > 0$, providing the proof of this in Section A of the supplementary material.

Lemma 2. The stationary distribution of the chain defined by $T(Z_{t+1}|Z_t)$ is $\Pi(Z) = \hat{P}(Z)$.

Proof. For the transition operator defined in Equation (1), the asymptotic distribution to which $T(Z_{t+1}|Z_t)$ converges is $\hat{P}(Z)$, because $\hat{P}(Z)$ is, by definition, the marginal of the joint distribution $Q_\phi(Z|X) P(X)$, over which the $\mathcal{L}_{prior}$ used to learn the conditional distribution $Q_\phi(Z|X)$ is defined.

Using Lemmas 1 and 2 with Theorem 1, we can say that the transition operator in Equation (1) will produce a Markov chain that converges to the stationary distribution $\Pi(Z) = \hat{P}(Z)$.

3.3 EXTENSION TO DENOISING GENERATIVE AUTOENCODERS

A denoising generative autoencoder can be sampled by the following process:

$$z_0 \in Z_0 = \mathbb{R}^b, \quad x_{t+1} \sim P_\theta(X|Z_t), \quad \tilde{x}_{t+1} \sim C(\tilde{X}|X_{t+1}), \quad z_{t+1} \sim Q_\phi(Z|\tilde{X}_{t+1}).$$

This allows us to define a Markov chain with the transition operator

$$T(Z_{t+1}|Z_t) = \int Q_\phi(Z_{t+1}|\tilde{X})\, C(\tilde{X}|X)\, P_\theta(X|Z_t)\, dX\, d\tilde{X} \tag{2}$$

for $t \geq 0$. The same arguments for the proof of convergence of Equation (1) can be applied to Equation (2).

3.4 RELATED WORK

Our work is inspired by that of Bengio et al. (2013); denoising autoencoders are cast into a probabilistic framework, where $P_\theta(X|\tilde{X})$ is the denoising (decoder) distribution and $C(\tilde{X}|X)$ is the corruption (encoding) distribution. $\tilde{X}$ represents the space of corrupted samples. Bengio et al. (2013) define a transition operator of a Markov chain, using these conditional distributions, whose stationary distribution is $P(X)$ under the assumption that $P_\theta(X|\tilde{X})$ perfectly denoises samples. The chain is initialised with samples from the training data, and used to generate a chain of samples from $P(X)$. This work was generalised to include a corruption process that mapped data samples to latent variables (Bengio et al., 2014), to create a new type of network called Generative Stochastic Networks (GSNs). However, in GSNs (Bengio et al., 2014) the latent space is not regularised with a prior.

Our work is similar to several approaches proposed by Bengio et al. (2013; 2014) and Rezende et al. (2014). Both Bengio et al. and Rezende et al. define a transition operator in terms of $X_t$ and $X_{t-1}$. Bengio et al. generate samples with an initial $X_0$ drawn from the observed data, while Rezende et al. reconstruct samples from an $X_0$ which is a corrupted version of a data sample. In contrast to Bengio et al. and Rezende et al., in this work we define the transition operator in terms of $Z_{t+1}$ and $Z_t$, initialise samples with a $Z_0$ that is drawn from a prior distribution we can directly sample from, and then sample $X_1$ conditioned on $Z_0$. Although the initial samples may be poor, we are likely to generate a novel $X_1$ on the first step of MCMC sampling, which would not be achieved using Bengio et al.'s or Rezende et al.'s approach. We are able to draw initial $Z_0$ from a prior because we constrain $\hat{P}(Z)$ to be close to a prior distribution $P(Z)$; in Bengio et al.,
a latent space is either not explicitly modeled (Bengio et al., 2013) or it is not constrained (Bengio et al., 2014). Further, Rezende et al. (2014) explicitly assume that the distribution of latent samples drawn from $Q_\phi(Z|X)$ matches the prior, $P(Z)$. Instead, we assume that samples drawn from $Q_\phi(Z|X)$ have a distribution $\hat{P}(Z)$ that does not necessarily match the prior, $P(Z)$. We propose an alternative method for sampling $\hat{P}(Z)$ in order to improve the quality of generated image samples. Our motivation is also different to Rezende et al. (2014) since we use sampling to generate improved, novel data samples, while they use sampling to denoise corrupted samples.

3.5 EFFECT OF REGULARISATION METHOD

The choice of $\mathcal{L}_{prior}$ may affect how much improvement can be gained when using MCMC sampling, assuming that the optimisation process converges to a reasonable solution. We first consider the case of VAEs, which minimise $D_{KL}[Q_\phi(Z|X) \,\|\, P(Z)]$. Minimising this KL divergence penalises the model $\hat{P}(Z)$ if it contains samples that are outside the support of the true distribution $P(Z)$, which might mean that $\hat{P}(Z)$ captures only a part of $P(Z)$. This means that when sampling $P(Z)$, we may draw from a region that is not captured by $\hat{P}(Z)$. This suggests that MCMC sampling can improve samples from trained VAEs by walking them towards denser regions in $\hat{P}(Z)$.

Generally speaking, using the reverse KL divergence during training, $D_{KL}[P(Z) \,\|\, Q_\phi(Z|X)]$, penalises the model $Q_\phi(Z|X)$ if $P(Z)$ produces samples that are outside of the support of $\hat{P}(Z)$. By minimising this KL divergence, most samples in $P(Z)$ will likely be in $\hat{P}(Z)$ as well.
AAEs, on the other hand, are regularised using the Jensen-Shannon (JS) divergence, given by

$$\frac{1}{2} D_{KL}\left[ P(Z) \,\Big\|\, \tfrac{1}{2}\left( P(Z) + Q_\phi(Z|X) \right) \right] + \frac{1}{2} D_{KL}\left[ Q_\phi(Z|X) \,\Big\|\, \tfrac{1}{2}\left( P(Z) + Q_\phi(Z|X) \right) \right].$$

Minimising this cost function attempts to find a compromise between the aforementioned extremes. However, this still suggests that some samples from $P(Z)$ may lie outside $\hat{P}(Z)$, and so we expect AAEs to also benefit from MCMC sampling.

4 EXPERIMENTS

4.1 MODELS

We utilise the deep convolutional GAN (DCGAN) (Radford et al., 2015) as a basis for our autoencoder models. Although the recommendations from Radford et al. (2015) are for standard GAN architectures, we adopt them as sensible defaults for an autoencoder, with our encoder mimicking the DCGAN's discriminator, and our decoder mimicking the generator. The encoder uses strided convolutions rather than max-pooling, and the decoder uses fractionally-strided convolutions rather than a fixed upsampling. Each convolutional layer is succeeded by spatial batch normalisation (Ioffe & Szegedy, 2015) and ReLU nonlinearities, except for the top of the decoder which utilises a sigmoid function to constrain the output values between 0 and 1. We minimise the cross-entropy between the original and reconstructed images. Although this results in blurry images in regions which are ambiguous, such as hair detail, we opt not to use extra loss functions that improve the visual quality of generations (Larsen et al., 2015; Dosovitskiy & Brox, 2016; Lamb et al., 2016) to avoid confounding our results.

Although the AAE is capable of approximating complex probabilistic posteriors (Makhzani et al., 2015), we construct ours to output a deterministic $Q_\phi(Z|X)$. As such, the final layer of the encoder part of our AAEs is a convolutional layer that deterministically outputs a latent sample, $z$. The adversary is a fully-connected network with dropout and leaky ReLU nonlinearities.
The $e_{rep}(X; \phi)$ of our VAEs has an output of twice the size, which corresponds to the means, $\mu$, and standard deviations, $\sigma$, of a diagonal covariance Gaussian distribution. For all models our prior, $P(Z)$, is a 200D isotropic Gaussian with zero mean and unit variance: $\mathcal{N}(0, I)$.

4.2 DATASETS

Our primary dataset is the (aligned and cropped) CelebA dataset, which consists of 200,000 images of celebrities (Liu et al., 2015). The DCGAN (Radford et al., 2015) was the first generative neural network model to show convincing novel samples from this dataset, and it has been used ever since as a qualitative benchmark due to the amount and quality of samples. In Figures 7 and 8 of the supplementary material, we also include results on the SVHN dataset, which consists of 100,000 images of house numbers extracted from Google Street View images (Netzer et al., 2011).

4.3 TRAINING & EVALUATION

For all datasets we perform the same preprocessing: cropping the centre to create a square image, then resizing to 64 × 64 px. We train our generative autoencoders for 20 epochs on the training split of the datasets, using Adam (Kingma & Ba, 2014) with $\alpha = 0.0002$, $\beta_1 = 0.5$ and $\beta_2 = 0.999$. The denoising generative autoencoders use the additive Gaussian noise mapping $C(\tilde{X}|X) = \mathcal{N}(X, 0.25I)$. All of our experiments were run using the Torch library (Collobert et al., 2011).²

For evaluation, we generate novel samples from the decoder using $z$ initially sampled from $P(Z)$; we also show spherical interpolations (White, 2016) between four images of the testing split, as depicted in Figure 2. We then perform several steps of MCMC sampling on the novel samples and interpolations. During this process, we use the training mode of batch normalisation (Ioffe & Szegedy, 2015), i.e., we normalise the inputs using minibatch rather than population statistics, as the normalisation can partially compensate for poor initial inputs (see Figure 4) that are far from the training distribution. We compare novel samples between all models below, and leave further interpolation results to Figures 5 and 6 of the supplementary material.

² Example code is available at https://github.com/Kaixhin/Autoencoders.

4.4 SAMPLES

Figure 4 panels: (a) VAE (initial), (b) VAE (1 step), (c) VAE (5 steps), (d) VAE (10 steps), (e) DVAE (initial), (f) DVAE (1 step), (g) DVAE (5 steps), (h) DVAE (10 steps), (i) AAE (initial), (j) AAE (1 step), (k) AAE (5 steps), (l) AAE (10 steps), (m) DAAE (initial), (n) DAAE (1 step), (o) DAAE (5 steps), (p) DAAE (10 steps).

Figure 4: Samples from a VAE (a-d), DVAE (e-h), AAE (i-l) and DAAE (m-p) trained on the CelebA dataset. (a), (e), (i) and (m) show initial samples conditioned on $z \sim P(Z)$, which mainly result in recognisable faces emerging from noisy backgrounds. After 1 step of MCMC sampling, the more unrealistic generations change noticeably, and continue to do so with further steps. On the other hand, realistic generations, i.e. samples from a region with high probability, do not change as much.

The adversarial criterion for deterministic AAEs is difficult to optimise when the dimensionality of $Z$ is high. We observe that during training our AAEs and DAAEs, the empirical standard deviation of $z \sim Q_\phi(Z|X)$ is less than 1, which means that $\hat{P}(Z)$ fails to approximate $P(Z)$ as closely as was achieved with the VAE and DVAE. However, this means that the effect of MCMC sampling is more pronounced, with the quality of all samples noticeably improving after a few steps. As a side-effect of the suboptimal solution learned by the networks, the denoising properties of the DAAE are more noticeable with the novel samples.
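
The evaluation procedure described in Section 4.3 (optionally interpolate in latent space, then apply several decode and encode steps) can be sketched without reference to any particular framework. This is a hedged illustration, not the authors' Torch code: `decode`, `encode` and `corrupt` are hypothetical caller-supplied callables standing in for sampling $P_\theta(X|Z)$, $Q_\phi(Z|X)$ and $C(\tilde{X}|X)$, the spherical interpolation is the usual slerp formula, and reading 0.25 in $\mathcal{N}(X, 0.25I)$ as a variance is an assumption. Framework details such as the batch normalisation mode are outside the scope of the sketch.

```python
import math
import random


def slerp(z_a, z_b, t):
    """Spherical interpolation between non-zero latent vectors at fraction t in [0, 1]."""
    norm_a = math.sqrt(sum(a * a for a in z_a))
    norm_b = math.sqrt(sum(b * b for b in z_b))
    cos_omega = sum(a * b for a, b in zip(z_a, z_b)) / (norm_a * norm_b)
    omega = math.acos(max(-1.0, min(1.0, cos_omega)))
    if omega < 1e-8:  # nearly parallel vectors: fall back to linear interpolation
        return [(1.0 - t) * a + t * b for a, b in zip(z_a, z_b)]
    w_a = math.sin((1.0 - t) * omega) / math.sin(omega)
    w_b = math.sin(t * omega) / math.sin(omega)
    return [w_a * a + w_b * b for a, b in zip(z_a, z_b)]


def mcmc_chain(z0, decode, encode, n_steps, corrupt=None):
    """Return [z_0, z_1, ..., z_n] obtained by repeatedly decoding and encoding.

    With corrupt=None this follows the process behind Equation (1); passing a
    corruption callable inserts the noising step used for denoising models.
    """
    chain = [z0]
    z = z0
    for _ in range(n_steps):
        x = decode(z)
        if corrupt is not None:
            x = corrupt(x)
        z = encode(x)
        chain.append(z)
    return chain


def gaussian_corruption(rng, variance=0.25):
    """Build corrupt(x) adding N(0, variance) noise elementwise (variance is assumed)."""
    std = math.sqrt(variance)
    return lambda x: [v + std * rng.gauss(0.0, 1.0) for v in x]


if __name__ == "__main__":
    # Toy stand-ins for a trained model: each decode/encode round trip halves z.
    toy_decode = lambda z: [2.0 * v for v in z]
    toy_encode = lambda x: [0.25 * v for v in x]
    z_mid = slerp([1.0, 0.0], [0.0, 1.0], 0.5)
    print(mcmc_chain(z_mid, toy_decode, toy_encode, n_steps=3))
```

To compare generations as in Figure 4, one would decode `chain[0]`, `chain[1]`, `chain[5]` and `chain[10]` from a chain started at a prior sample; the toy callables above exist only to make the sketch runnable.
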
5 CONCLUSION

Autoencoders consist of a decoder function, $d(Z; \theta)$, and an encoder function, $e(X; \phi)$, where $\phi$ and $\theta$ are learned parameters. Functions $e(X; \phi)$ and $d(Z; \theta)$ may be used to draw samples from the conditional distributions $P_\theta(X|Z)$ and $Q_\phi(Z|X)$ (Bengio et al., 2014; 2013; Rezende et al., 2014), where $X$ refers to the space of observed samples and $Z$ refers to the space of latent samples. The encoder distribution, $Q_\phi(Z|X)$, maps data samples from the data generating distribution, $P(X)$, to a latent distribution, $\hat{P}(Z)$. The decoder distribution, $P_\theta(X|Z)$, maps samples from $\hat{P}(Z)$ to $P(X)$. We are concerned with generative autoencoders, which we define to be a family of autoencoders where regularisation is used during training to encourage $\hat{P}(Z)$ to be close to a known prior $P(Z)$. Commonly it is assumed that $\hat{P}(Z)$ and $P(Z)$ are similar, such that samples from $P(Z)$ may be used to sample from a decoder $P_\theta(X|Z)$; we do not make the assumption that $\hat{P}(Z)$ and $P(Z)$ are “sufficiently close” (Rezende et al., 2014). Instead, we derive an MCMC process, whose stationary distribution is $\hat{P}(Z)$, allowing us to directly draw samples from $\hat{P}(Z)$. By conditioning on samples from $\hat{P}(Z)$, samples $x \sim P_\theta(X|Z)$ are more consistent with the training data.

In our experiments, we compare samples $x \sim P_\theta(X|Z = z_0)$, $z_0 \sim P(Z)$ to $x \sim P_\theta(X|Z = z_i)$ for $i \in \{1, 5, 10\}$, where the $z_i$'s are obtained through MCMC sampling, to show that MCMC sampling improves initially poor samples (see Figure 4). We also show that artifacts in $x$ samples induced by interpolations across the latent space can also be corrected by MCMC sampling (see Figure 2). We further validate our work by showing that the denoising properties of denoising generative autoencoders are best revealed by the use of MCMC sampling.
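The MCMC process summarised above, iteratively decoding and encoding starting from $z_0 \sim P(Z)$, can be sketched as follows. This is an illustrative toy under stated assumptions, not the authors' implementation: `mcmc_sample`, `decode` and `encode` are hypothetical names, the decoder returns its mean rather than a sample, and the linear-Gaussian model is chosen only so that the stationary latent standard deviation (0.5) is known in closed form.

```python
import numpy as np

def mcmc_sample(z0, decode, encode, n_steps):
    """Alternate x_t = decode(z_t) and z_{t+1} = encode(x_t); return [z_0, ..., z_n]."""
    zs = [z0]
    z = z0
    for _ in range(n_steps):
        x = decode(z)   # stands in for x_t ~ P_theta(X | Z = z_t)
        z = encode(x)   # stands in for z_{t+1} ~ Q_phi(Z | X = x_t)
        zs.append(z)
    return zs

rng = np.random.default_rng(0)
latent_dim, data_dim = 8, 32

# Toy model (assumption): W has orthonormal columns, so W.T @ W = I.
W, _ = np.linalg.qr(rng.standard_normal((data_dim, latent_dim)))

def decode(z):
    # Deterministic decoder mean, shape (N, latent_dim) -> (N, data_dim).
    return z @ W.T

def encode(x, shrink=0.5, target_std=0.5):
    # Stochastic encoder: z' = shrink * z + noise, giving a stationary std of target_std.
    mean = shrink * (x @ W)
    noise_std = target_std * np.sqrt(1.0 - shrink ** 2)
    return mean + noise_std * rng.standard_normal(mean.shape)

z0 = rng.standard_normal((4000, latent_dim))  # initial samples from the prior N(0, I)
zs = mcmc_sample(z0, decode, encode, n_steps=10)
print([round(float(z.std()), 3) for z in (zs[0], zs[1], zs[5], zs[10])])
```

In this toy, the latent standard deviation starts near 1 (the prior) and settles near 0.5 (the toy "learned" latent distribution), mirroring how the chain moves samples away from the prior and towards $\hat{P}(Z)$.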
Our MCMC sampling process is straightforward, and can be applied easily to existing generative autoencoders. This technique is orthogonal to the use of more powerful posteriors in AAEs (Makhzani et al., 2015) and VAEs (Kingma et al., 2016), and the combination of both could result in further improvements in generative modeling. Finally, our basic MCMC process opens the doors to apply a large existing body of research on sampling methods to generative autoencoders.

ACKNOWLEDGEMENTS

We would like to acknowledge the EPSRC for funding through a Doctoral Training studentship and the support of the EPSRC CDT in Neurotechnology.

REFERENCES

Yoshua Bengio. Learning deep architectures for AI. Foundations and Trends® in Machine Learning, 2(1):1–127, 2009.

Yoshua Bengio, Li Yao, Guillaume Alain, and Pascal Vincent. Generalized denoising auto-encoders as generative models. In Advances in Neural Information Processing Systems, pp. 899–907, 2013.

Yoshua Bengio, Eric Thibodeau-Laufer, Guillaume Alain, and Jason Yosinski. Deep generative stochastic networks trainable by backprop. In Journal of Machine Learning Research: Proceedings of the 31st International Conference on Machine Learning, volume 32, 2014.

Ronan Collobert, Koray Kavukcuoglu, and Clément Farabet. Torch7: A matlab-like environment for machine learning. In BigLearn, NIPS Workshop, number EPFL-CONF-192376, 2011.

Alexey Dosovitskiy and Thomas Brox. Generating images with perceptual similarity metrics based on deep networks. arXiv preprint, 2016.

Ian Goodfellow, Jean Pouget-Abadie, Mehdi Mirza, Bing Xu, David Warde-Farley, Sherjil Ozair, Aaron Courville, and Yoshua Bengio. Generative Adversarial Nets. In Advances in Neural Information Processing Systems, pp. 2672–2680, 2014.

Daniel Jiwoong Im, Sungjin Ahn, Roland Memisevic, and Yoshua Bengio. Denoising criterion for variational auto-encoding framework. arXiv preprint, 2015.
Sergey Ioffe and Christian Szegedy. Batch normalization: Accelerating deep network training by reducing internal covariate shift. In Proceedings of the 32nd International Conference on Machine Learning (ICML-15), pp. 448–456, 2015.

Diederik Kingma and Jimmy Ba. Adam: A method for stochastic optimization. In Proceedings of the 2015 International Conference on Learning Representations (ICLR-2015), arXiv preprint arXiv:1412.6980, 2014.

Diederik P Kingma and Max Welling. Auto-encoding variational Bayes. In Proceedings of the 2015 International Conference on Learning Representations (ICLR-2015), arXiv preprint arXiv:1312.6114, 2014. URL https://arxiv.org/abs/1312.6114.

Diederik P Kingma, Tim Salimans, and Max Welling. Improving variational inference with inverse autoregressive flow. arXiv preprint, 2016.

Alex Lamb, Vincent Dumoulin, and Aaron Courville. Discriminative regularization for generative models. arXiv preprint arXiv:1602.03220, 2016.

Anders Boesen Lindbo Larsen, Søren Kaae Sønderby, and Ole Winther. Autoencoding beyond pixels using a learned similarity metric. In Proceedings of The 33rd International Conference on Machine Learning, arXiv preprint arXiv:1512.09300, pp. 1558–1566, 2015. URL http://jmlr.org/proceedings/papers/v48/larsen16.pdf.

Ziwei Liu, Ping Luo, Xiaogang Wang, and Xiaoou Tang. Deep learning face attributes in the wild. In Proceedings of the IEEE International Conference on Computer Vision, pp. 3730–3738, 2015.

Alireza Makhzani, Jonathon Shlens, Navdeep Jaitly, and Ian Goodfellow. Adversarial autoencoders. arXiv preprint arXiv:1511.05644, 2015.

Yuval Netzer, Tao Wang, Adam Coates, Alessandro Bissacco, Bo Wu, and Andrew Y Ng. Reading digits in natural images with unsupervised feature learning. In NIPS Workshop on Deep Learning and Unsupervised Feature Learning, 2011. URL https://static.googleusercontent.com/media/research.google.com/en//pubs/archive/37648.pdf.

Alec Radford, Luke Metz, and Soumith Chintala. Unsupervised representation learning with deep convolutional generative adversarial networks. In International Conference on Learning Representations (ICLR) 2016, arXiv preprint arXiv:1511.06434, 2015.

Danilo Jimenez Rezende, Shakir Mohamed, and Daan Wierstra. Stochastic backpropagation and approximate inference in deep generative models. In Proceedings of the 31st International Conference on Machine Learning, arXiv preprint arXiv:1401.4082, 2014.

Jeffrey S Rosenthal. A review of asymptotic convergence for general state space Markov chains. Far East J. Theor. Stat, 5(1):37–50, 2001.

H Sebastian Seung. Learning continuous attractors in recurrent networks. In NIPS Proceedings, volume 97, pp. 654–660, 1997.

Pascal Vincent, Hugo Larochelle, Yoshua Bengio, and Pierre-Antoine Manzagol. Extracting and composing robust features with denoising autoencoders. In Proceedings of the 25th International Conference on Machine Learning, pp. 1096–1103. ACM, 2008.

Tom White. Sampling generative networks: Notes on a few effective techniques. arXiv preprint arXiv:1609.04468, 2016.

Supplementary Material

A PROOF THAT $T(Z_{t+1}|Z_t) > 0$

For $P_\theta(X|Z) > 0$ we require that all possible $x \in X \subseteq \mathbb{R}^a$ may be generated by the network. Assuming that the model $P_\theta(X|Z)$ is trained using a sufficient number of training samples, $x \in X_{train} = X$, and that the model has infinite capacity to model $X_{train} = X$, then we should be able to draw any sample $x \in X_{train} = X$ from $P_\theta(X|Z)$. In reality $X_{train} \subseteq X$ and it is not possible to have a model with infinite capacity. However, $P_\theta(X|Z)$ is modeled using a deep neural network, which we assume has sufficient capacity to capture the training data well.
Further, deep neural networks are able to interpolate between samples in very high dimensional spaces (Radford et al., 2015); we therefore further assume that if we have a large number of training samples (as well as large model capacity), almost any $x \in X$ can be drawn from $P_\theta(X|Z)$.

Note that if we wish to generate human faces, we define $X_{all}$ to be the space of all possible faces, with distribution $P(X_{all})$, while $X_{train}$ is the space of faces made up by the training data. Then, practically, even a well trained model which learns to interpolate well only captures an $X$, with distribution $\int P_\theta(X|Z) \hat{P}(Z) \, dZ$, where $X_{train} \subseteq X \subseteq X_{all}$, because $X$ additionally contains examples of interpolated versions of $x \sim P(X_{train})$.

For $Q_\phi(Z|X) > 0$ it must be possible to generate all possible $z \in Z \subseteq \mathbb{R}^b$. $Q_\phi(Z|X)$ is described by the function $e(\cdot; \phi) : X \to Z$. To ensure that $Q_\phi(Z|X) > 0$, we want to show that the function $e(X; \phi)$ allows us to represent all samples of $z \in Z$. VAEs and AAEs each construct $e(X; \phi)$ to produce $z \in Z$ in different ways.

The output of the encoder of a VAE, $e_{VAE}(X; \phi)$, is $z = \mu + \sigma \odot \epsilon$, where $\epsilon \sim \mathcal{N}(0, I)$. The output of a VAE is then always Gaussian, and hence there is no limitation on the $z$'s that $e_{VAE}(X; \phi)$ can produce. This ensures that $Q_\phi(Z|X) > 0$, provided that $\sigma \neq 0$.

The encoder of our AAE, $e_{AAE}(X; \phi)$, is a deep neural network consisting of multiple convolutional and batch normalisation layers. The final layer of $e_{AAE}(X; \phi)$ is a fully connected layer without an activation function. The input to each of the $M$ nodes in the fully connected layer is a function $f_{i=1...M}(x)$. This means that $z$ is given by: $z = a_1 f_1(x) + a_2 f_2(x) + ... + a_M f_M(x)$, where $a_{i=1...M}$ are the learned weights of the fully connected layer.
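The final-layer expression above is a linear map $z = A f$, where column $i$ of $A$ is the weight vector $a_i$. Whether the weights alone can reach every $z \in \mathbb{R}^b$ then reduces to whether $A$ has rank $b$. The following is a small sketch of that check; the helper `spans_latent_space` and the tiny dimensions are assumptions for illustration, and the check says nothing about whether the $f_i(x)$ themselves are unrestricted.

```python
import numpy as np

def spans_latent_space(A):
    """Return True if the columns of A (shape (b, M)) span R^b.

    Column i of A plays the role of the weight vector a_i, so that
    z = A @ f with f = (f_1(x), ..., f_M(x)).
    """
    return bool(np.linalg.matrix_rank(A) == A.shape[0])

rng = np.random.default_rng(0)
b = 4  # hypothetical small latent dimensionality, for illustration only

A_over = rng.standard_normal((b, 16))  # M > b: more weight vectors than dimensions
A_under = rng.standard_normal((b, 2))  # M < b: too few weight vectors

over_ok = spans_latent_space(A_over)
under_ok = spans_latent_space(A_under)

# With a spanning A, any target z is reachable by some unrestricted f,
# for example the minimum-norm least-squares solution.
z_target = rng.standard_normal(b)
f, *_ = np.linalg.lstsq(A_over, z_target, rcond=None)
z = A_over @ f
print(over_ok, under_ok, np.allclose(z, z_target))
```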
We now consider three cases:

Case 1: If $a_i$ are a complete set of bases for $Z$ then it is possible to generate any $z \in Z$ from an $x \in X$ with a one-to-one mapping, provided that $f_i(x)$ is not restricted in the values that it can take.

Case 2: If $a_i$ are an overcomplete set of bases for $Z$, then the same holds, provided that $f_i(x)$ is not restricted in the values that it can take.

Case 3: If $a_i$ are an undercomplete set of bases for $Z$ then it is not possible to generate all $z \in Z$ from $x \in X$. Instead there is a many ($X$) to one ($Z$) mapping.

For $Q_\phi(Z|X) > 0$ our network must learn a complete or overcomplete set of bases and $f_i(x)$ must not be restricted in the values that it can take $\forall i$. The network is encouraged to learn an overcomplete set of bases by learning a large number of $a_i$'s (specifically $M = 8192$ when basing our network on the DCGAN architecture (Radford et al., 2015)), more than 40 times the dimensionality of $Z$. By using batch normalisation layers throughout the network, we ensure that values of $f_i(x)$ are spread out, capturing a close-to-Gaussian distribution (Ioffe & Szegedy, 2015), encouraging infinite support.

We have now shown that, under certain reasonable assumptions, $P_\theta(X|Z) > 0$ and $Q_\phi(Z|X) > 0$, which means that $T(Z_{t+1}|Z_t) > 0$, and hence we can get from any $Z$ to any other $Z$ in only one step. Therefore the Markov chain described by the transition operator $T(Z_{t+1}|Z_t)$ defined in Equation (1) is both irreducible and aperiodic, which are the necessary conditions for ergodicity.

B CELEBA

B.1 INTERPOLATIONS

[Figure 5 panels: (a) DVAE (initial), (b) DVAE (5 steps), (c) DVAE (initial), (d) DVAE (5 steps).]

Figure 5: Interpolating between two faces using (a-d) a DVAE.
The top rows (a, c) for each face are the original interpolations, whilst the second rows (b, d) are the result of 5 steps of MCMC sampling applied to the latent samples that were used to generate the original interpolation. The only qualitative difference when compared to VAEs (see Figure 4) is a desaturation of the generated images.

[Figure 6 panels: (a) AAE (initial), (b) AAE (5 steps), (c) AAE (initial), (d) AAE (5 steps), (e) DAAE (initial), (f) DAAE (5 steps), (g) DAAE (initial), (h) DAAE (5 steps).]

Figure 6: Interpolating between two faces using (a-d) an AAE and (e-h) a DAAE. The top rows (a, c, e, g) for each face are the original interpolations, whilst the second rows (b, d, f, h) are the result of 5 steps of MCMC sampling applied to the latent samples that were used to generate the original interpolation. Although the AAE performs poorly (b, d), the regularisation effect of denoising can be clearly seen with the DAAE after applying MCMC sampling (f, h).

C STREET VIEW HOUSE NUMBERS

C.1 SAMPLES

[Figure 7 panels: (a-d) VAE, (e-h) DVAE, (i-l) AAE, (m-p) DAAE; within each group: initial, 1 step, 5 steps, 10 steps.]

Figure 7: Samples from a VAE (a-d), DVAE (e-h), AAE (i-l) and DAAE (m-p) trained on the SVHN dataset. The samples from the models imitate the blurriness present in the dataset. Although very few numbers are visible in the initial samples, the VAE and DVAE produce recognisable numbers from most of the initial samples after a few steps of MCMC sampling. Although the AAE and DAAE fail to produce recognisable numbers, the final samples are still a clear improvement over the initial samples.
C.2 INTERPOLATIONS

[Figure 8 panels: (a) VAE (initial), (b) VAE (5 steps), (c) VAE (initial), (d) VAE (5 steps), (e) DVAE (initial), (f) DVAE (5 steps), (g) DVAE (initial), (h) DVAE (5 steps).]

Figure 8: Interpolating between Google Street View house numbers using (a-d) a VAE and (e-h) a DVAE. The top rows (a, c, e, g) for each house number are the original interpolations, whilst the second rows (b, d, f, h) are the result of 5 steps of MCMC sampling. If the original interpolation produces symbols that do not resemble numbers, as observed in (a) and (e), the models will attempt to move the samples towards more realistic numbers (b, f). Interpolation between 1- and 2-digit numbers in an image (c, g) results in a meaningless blur in the middle of the interpolation. After a few steps of MCMC sampling the models instead produce more recognisable 1- or 2-digit numbers (d, h). We note that when the contrast is poor, denoising models in particular can struggle to recover meaningful images (h).
