EEG in the classroom: Synchronised neural recordings during video presentation

We performed simultaneous recordings of electroencephalography (EEG) from multiple students in a classroom, and measured the inter-subject correlation (ISC) of activity evoked by a common video stimulus. The neural reliability, as quantified by ISC, …

Authors: Andreas Trier Poulsen, Simon Kamronn, Jacek Dmochowski

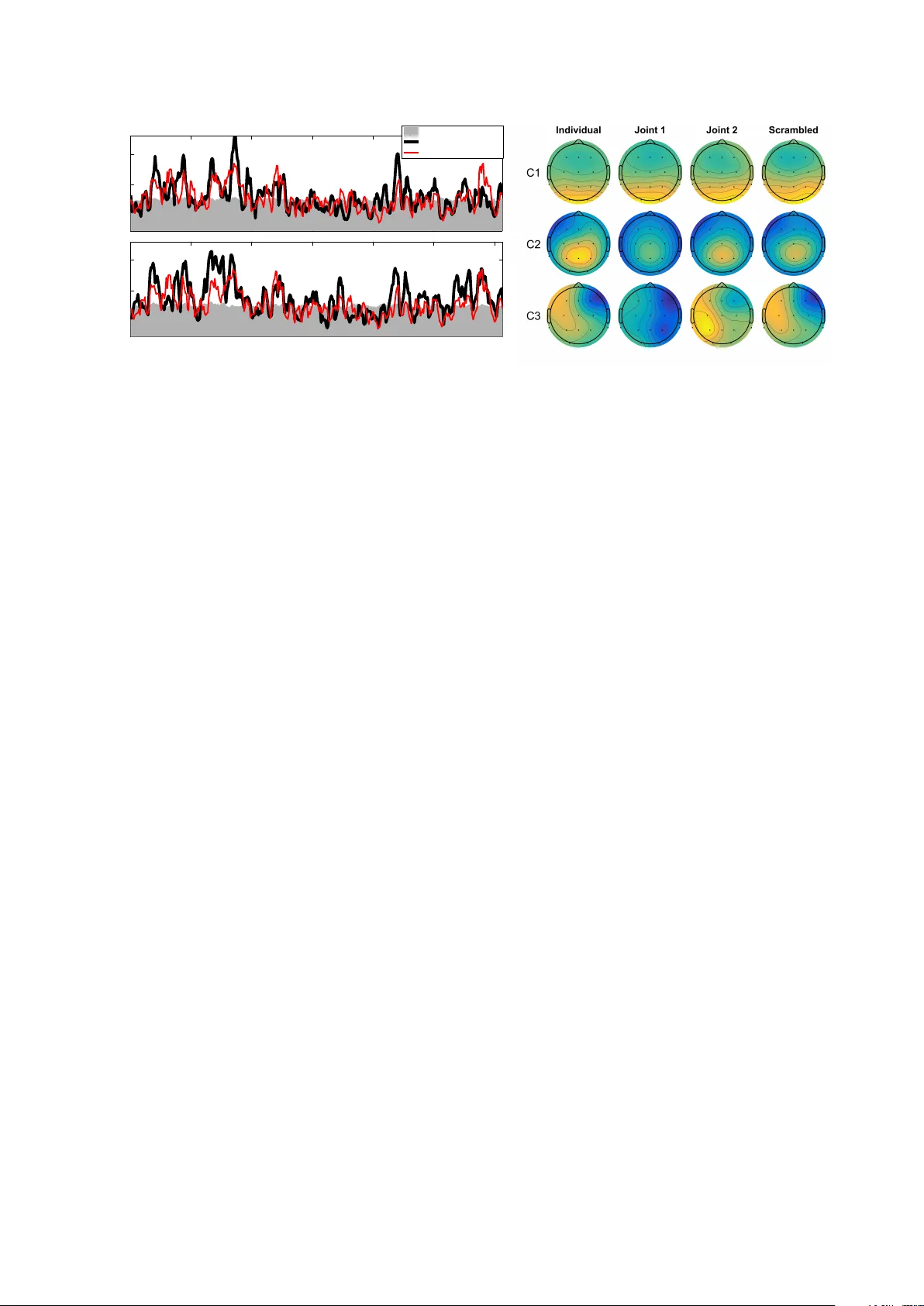

Andreas Trier Poulsen [1,+,*], Simon Kamronn [1,+], Jacek Dmochowski [2,3], Lucas C. Parra [3], and Lars Kai Hansen [1]

[1] Technical University of Denmark, DTU Compute, Kgs. Lyngby, Denmark
[2] Stanford University, Department of Psychology, Palo Alto, USA
[3] City College of New York, Department of Biomedical Engineering, New York, USA
[*] atpo@dtu.dk
[+] these authors contributed equally to this work

December 30, 2016

Abstract

We performed simultaneous recordings of electroencephalography (EEG) from multiple students in a classroom, and measured the inter-subject correlation (ISC) of activity evoked by a common video stimulus. The neural reliability, as quantified by ISC, has been linked to engagement and attentional modulation in earlier studies that used high-grade equipment in laboratory settings. Here we reproduce many of the results from these studies using portable low-cost equipment, focusing on the robustness of using ISC for subjects experiencing naturalistic stimuli. The present data show that stimulus-evoked neural responses, known to be modulated by attention, can be tracked for groups of students with synchronized EEG acquisition. This is a step towards real-time inference of engagement in the classroom.

Introduction

Engagement and attention are important in situations of learning, but most methods for measuring attention or engagement are intrusive and unrealistic in everyday situations (Robinson, 1997; Cohen et al., 1990; Radwan, 2005). Recently, inter-subject correlation (ISC) of electroencephalography (EEG) has been proposed as a marker of attentional engagement (Dmochowski et al., 2012, 2014; Ki et al., 2016), and we ask in this work whether it can be recorded robustly with commercial-grade wireless EEG devices in a classroom setting.
Furthermore, we address two other issues related to the robustness of the signal: the potential neurophysiological origin of the measure, and the robustness of the detection scheme to inter-subject variability in spatial alignment.

User engagement has been defined as '... the emotional, cognitive and behavioural connection that exists, at any point in time and possibly over time, between a user and a resource' (Attfield et al., 2011). Traditional approaches to measuring engagement are based on capturing user behaviour via user interfaces, self-report, or manual annotation (O'Brien and Toms, 2013). However, tools from cognitive neuroscience are increasingly being employed (Szafir and Mutlu, 2013). Recent efforts in neuroscience aim to elucidate perceptual and cognitive processes in a more realistic setting and using naturalistic stimuli (Dmochowski et al., 2012; Ringach et al., 2002; Hasson et al., 2004; Lahnakoski et al., 2014; Lankinen et al., 2014; Chang et al., 2015). From an educational perspective, such quantitative measures may help identify mechanisms that make learning more efficient (Szafir and Mutlu, 2013), align services better with students' needs (Attfield et al., 2011), or monitor critical task performance (Lin et al., 2013). The potential uses of engagement detection in the classroom are numerous, e.g., real-time and summary feedback for the teacher, motivational strategies for increased student engagement, and screening for impact of teaching materials.

Figure 1: Experimental setup for joint viewings. (Left): Nine subjects were placed in a line to induce a cinema-like experience. (Right): Subjects seen from the back, watching films projected onto a screen. Tablets recording EEG are resting on the tables behind the subjects. The signal is transmitted wirelessly from each subject.
Before the findings on tracking attentional responses with neural activity (Dmochowski et al., 2012, 2014; Ki et al., 2016) can be employed in a real-time classroom scenario, several issues must be addressed, including: 1) Is it possible to reproduce the ISCs to naturalistic stimuli under the adverse conditions of a classroom? 2) Are the ISCs robust to inter-student variability of the spatial information processing networks? And 3) can ISCs be recorded with equipment that is both comfortable and affordable enough to make it a realistic technology for schools?

Here we investigate the feasibility of recording such neural responses from students who are viewing videos. We use an approach developed by Dmochowski et al. (2012) that uses inter-subject correlation (ISC) of EEG evoked responses. The basic premise is that subjects who are engaged with the content exhibit reliable neural responses that are correlated across subjects and across repetitions within the same subject. In contrast, a lack of engagement manifests in generally unreliable neural responses (Ki et al., 2016). ISC of neural activity while watching films has been shown to predict the popularity and viewership of TV series and commercials (Dmochowski et al., 2014), and shows clinical promise as a measure of consciousness levels in non-responsive patients (Naci et al., 2015; an fMRI study).

We argue here that the neural reliability of students may indeed be quantified on a second-by-second basis in groups and in a classroom setting, and we seek to investigate the robustness of measuring it with electroencephalography (EEG) responses during exposure to media stimuli. To enable correlations between multi-dimensional EEG, correlated component analysis (CorrCA) was introduced (Dmochowski et al., 2012).
CorrCA finds multiple spatial projections that are shared amongst subjects, such that their components are maximally correlated across time. Here we are interested in the reproducibility of using CorrCA as a measure of inter-subject correlation, and will focus predominantly on the first component, which captures most of the neural responses shared across students.

The main goal of the present work is to determine whether student neural reliability can be quantified in a real-time manner based on recordings of brain activity in a classroom setting using a low-cost, portable EEG system, the Smartphone Brain Scanner (Stopczynski et al., 2014a). With regard to the robustness of the detection scheme, we report on both theoretical and experimental investigations. First, we show that ISC evoked by rich naturalistic stimuli is robust enough to be reproduced with commercial-grade equipment, and to be recorded simultaneously from multiple subjects in a classroom setting. This opens up the possibility of real-time estimation of student attentional engagement. Secondly, we show mathematically that the CorrCA algorithm is surprisingly robust to variations in the spatial patterns of brain activity across subjects. Finally, we demonstrate that the level of ISC is related to a very basic visual response that is modulated by the narrative coherence of the video stimulus.

Figure 2: ISC of neural responses to naturalistic stimuli are robust across different groups of subjects and reproducible in a classroom setting. (a) Comparison between the ISC obtained by Dmochowski et al. (2012) and the present study for the first CorrCA component and the first viewing of Bang! You're Dead (panel annotations: Joint, r = 0.61, p < 0.0002; Individual, r = 0.64, p < 0.0002; axes: time in minutes against ISC). The ISC is calculated with a 1-second resolution (5 s windows, 80 % overlap). The grey area indicates chance levels for ISC (p > 0.01, estimated with time-shuffled surrogate data, uncorrected for multiple comparisons). (b) The corresponding scalp projections of the first three components obtained from the correlated component analysis (CorrCA) of each of the four subject groups watching Bang! You're Dead the first time. For each component, CorrCA finds one shared set of weights for all subjects in the group. Four distinct groups of subjects watched videos in different scenarios: individually on a tablet computer (Individual), individually with the order of scenes scrambled in time (Scrambled), and jointly in a classroom as seen in Fig. 1 (Joint 1 and Joint 2). For each projection, the polarity was normalized so that the value at the Cz electrode is positive.

Results

To monitor neural reliability we used video stimuli, as they provide a balance between realism and reproducibility (Hasson et al., 2004). We recorded EEG activity using the Smartphone Brain Scanner while subjects watched short video clips of approximately 6 minutes duration, either individually or in a group setting (Fig. 1). To measure reliability of EEG responses, we used correlated component analysis (CorrCA, see Methods) to extract maximally correlated time series with shared spatial projection across repeated views within the same subject (inter-viewing correlation, IVC), or between subjects (inter-subject correlation, ISC).

One of our main points of interest is to investigate the robustness of ISC from EEG recorded in a classroom through comparisons with results previously measured in a laboratory setting (Dmochowski et al., 2012). We therefore employed similar methods of analysis and calculated ISCs and IVCs in 5-second windows with 80 % overlap to investigate their temporal development at a 1-second resolution.
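The windowing described here (5 s windows with 80 % overlap, hence one value per second) can be sketched as follows. This is a minimal illustration rather than the authors' code: the function name `windowed_isc`, the input layout (one row per subject, holding a single component time series), and the use of the average pairwise Pearson correlation as a stand-in for the CorrCA-based ISC estimate are all assumptions. The default sampling rate of 128 Hz is the recording rate stated in Methods.

```python
import numpy as np


def windowed_isc(components, fs=128, win_s=5.0, overlap=0.8):
    """Sliding-window ISC for one component (hypothetical helper).

    components: array of shape (n_subjects, n_samples), one projected
    time series per subject. Returns one value per window.
    """
    components = np.asarray(components, dtype=float)
    n_subjects, n_samples = components.shape
    win = int(round(win_s * fs))               # samples per window
    step = int(round(win * (1.0 - overlap)))   # 80 % overlap -> 1 s step
    upper = np.triu_indices(n_subjects, k=1)   # each subject pair once
    isc = []
    for start in range(0, n_samples - win + 1, step):
        segment = components[:, start:start + win]
        r = np.corrcoef(segment)               # subject-by-subject Pearson r
        isc.append(r[upper].mean())            # assumption: average over pairs
    return np.array(isc)
```

With these defaults, a 60 s recording yields 56 windows, and identical time series across subjects give an ISC of 1 in every window.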
We chose to analyse the EEG with CorrCA in a broad frequency band (0.5 to 45 Hz), instead of investigating specific frequency bands, to keep the analysis methods comparable with the prior lab-based study. Moreover, CorrCA is a method for robustly measuring ISC at low computational cost, making it a good candidate for long-term real-time analyses on small devices in a classroom setting.

The subjects watched three video clips, which were presented twice in random order. The first video was a suspenseful excerpt from the short film Bang! You're Dead, directed by Alfred Hitchcock. It was selected because it is known to effectively synchronize brain responses across viewers (Hasson et al., 2008; Dmochowski et al., 2012). The second video was an excerpt from Sophie's Choice, directed by Alan J. Pakula (1982), and the third was an uneventful baseline video of people silently descending an escalator.

Table 1: Correlation coefficients between the ISC time courses obtained in a laboratory setting (Dmochowski et al., 2012) and those obtained in the present study (groups Individual, Joint 1 and Joint 2). Inter-subject correlation (ISC) measures similarity of responses between subjects for the first and second viewings (v1, v2), and the inter-viewing correlation (IVC) measures similarity within subject between the two views. Coefficients are calculated for the first CorrCA component recorded while watching Bang! You're Dead. Two asterisks denote p < 0.01.

| Group | ISC v1 | ISC v2 | IVC |
|---|---|---|---|
| Individual | 0.64** | 0.33** | 0.49** |
| Joint group 1 | 0.51** | 0.15** | 0.44** |
| Joint group 2 | 0.61** | 0.28** | 0.54** |

Table 2: Scenes described by the subjects as having the strongest impression on them, based on the 30 subjects who saw Bang! You're Dead with uninterrupted narrative. In a post-experiment questionnaire, subjects were asked to describe the scenes that made the strongest impression on them. Their answers were collected in the eight groups. The subjects each mentioned 1.77 scenes on average (0.77 std.). 29 subjects (97 %) mentioned either scenes where the boy points the gun at his mother or at other people.

| Scene | Approx. times | No. of times mentioned (%) |
|---|---|---|
| The boy shoots (or points gun at) mother | 2:25 and 3:00 | 16 (53 %) |
| The boy shoots (or points gun at) people | 2:10, 3:30 and 5:30 | 15 (50 %) |
| The boy loads another bullet into gun | 6:10 | 8 (27 %) |
| The uncle discovers his gun is gone | 4:35 | 4 (13 %) |
| The boy finds and loads gun | 0:25 and 1:40 | 4 (13 %) |
| The boy points at mirror or shoots towards camera | 0:40, 1:50 and 5:25 | 4 (13 %) |
| When the father did not run after the boy | 3:00 | 1 (3 %) |
| The abrupt ending | 6:14 | 1 (3 %) |

For both the joint and individual recording scenarios, the time course of the ISC, based on the first CorrCA component from subjects watching the film, closely reproduces results obtained previously in a laboratory setting (Fig. 2a and Table 1).

An indication of the stability of the technique is provided by the spatial patterns of the neural activity that drives these reproducible responses. Similar to other component extraction techniques, such as independent component analysis or common spatial patterns (Parra and Sajda, 2003; Koles et al., 1990), CorrCA reduces the signal of multiple electrodes to a few components. The ISC is then computed for the first few components, which capture most of the correlation between recordings. The strongest three correlated components show a stable pattern of activity across the different groups and recording conditions (Fig. 2b), all three obtaining significant spatial correlations between groups ($r_{\mathrm{comp1}} = 0.97$, $r_{\mathrm{comp2}} = 0.91$, $r_{\mathrm{comp3}} = 0.79$, all with $p < 0.002$ for an uncorrected permutation test) for Bang! You're Dead. The robustness to recording conditions is also apparent for the second film clip, from Sophie's Choice ($r_{\mathrm{comp1}} = 0.51$, $p < 0.002$; $r_{\mathrm{comp2}} = 0.48$, $p = 0.008$; $r_{\mathrm{comp3}} = 0.36$, $p = 0.033$), albeit with a lower average correlation, which for the first two components may be due to noisy scalp maps for the Joint 1 group and the Individual group, respectively (see supplementary Fig. S1). For the baseline video, only the first component achieved a significant average correlation between groups ($r_{\mathrm{comp1}} = 0.46$, $p = 0.014$). The lower stability in the scalp maps obtained for Sophie's Choice and the baseline video could be explained by the lower ALD of these stimuli (see below), since these films obtain lower average IVC compared to Bang! You're Dead for all groups (Fig. 3).

Figure 3: Distribution and mean of IVC calculated from the first CorrCA component for subject groups and films. Violin plots show distributions of IVC estimated using a squared exponential (normal) kernel with a bandwidth of 0.005 (Hoffmann, 2015). Horizontal black bars denote distribution means. For visualisation purposes, the extreme 2.5 % of values at either end of the distributions were left out of the violin plots (but were kept for estimating means and p-values). A block permutation test (block size B = 25 s) was employed to estimate statistically significant differences in the mean IVC between viewing conditions (uncorrected for multiple comparisons). For both films there were significant differences in mean IVC between groups with normal narrative and the Scrambled group (Bang! You're Dead: $p_{\mathrm{Individual}} = 0.006$, $p_{\mathrm{Joint\,1}} = 0.033$, $p_{\mathrm{Joint\,2}} = 0.004$; Sophie's Choice: $p_{\mathrm{Individual}} = 0.059$, $p_{\mathrm{Joint\,1}} = 0.37$, $p_{\mathrm{Joint\,2}} = 0.012$). However, there were no significant differences between groups with the original, unscrambled narrative. Note that the Scrambled group did not watch the baseline video.

Previous research has indicated the potential of ISC as a marker of engagement of conscious processing (Dmochowski et al., 2012, 2014; Naci et al., 2015; Lahnakoski et al., 2014; Ki et al., 2016). To further investigate this, we asked subjects post-experiment to describe the film segments (or "scenes") that made the biggest impact on them. We quantified their answers by assigning each answer to one of eight general scene descriptions. Table 2 shows that the scenes most frequently mentioned are "Boy pointing gun at mother" or "Boy pointing gun at people", and 29 out of 30 subjects mentioned one or both of the scenes as having had high impact on them. The most frequently mentioned scene occurs around 2:25, where a peak in the ISC can be seen (Fig. 2a). The high impact of this particular scene was confirmed by the suspense ratings presented in Naci et al. (2015). See Dmochowski et al. (2012) for additional descriptions and examples of scenes eliciting high ISC in Bang! You're Dead.

To determine if the portable equipment, which uses only 14 channels, can detect varying levels of neural reliability, a second group of subjects watched the same two film clips individually, but now with scenes scrambled in time. This intervention is a widely used tool to create a baseline with similar low-level stimuli, yet reduced engagement (Miller and Selfridge, 1950; Anderson et al., 2006; Hasson et al., 2008; Dmochowski et al., 2012). See Methods for more information on the definition and time scales of the scrambled scenes. Despite using consumer-grade EEG, we find that IVC is significantly above chance for a large fraction of the original engaging clip, but drops dramatically when the scenes are scrambled in time (mean IVC, Fig. 3, p < 0.01, for Bang! You're Dead). Also, the baseline video, which subjects reported did not engage them at all, only obtained significant ISC (p < 0.01, uncorrected) in 2.3 % of the 354 tested time windows, compared to the 54.1 % significant windows obtained for Bang! You're Dead.
For experiments conducted in less controlled, everyday settings as in this study, it is important to assess across-session reproducibility. To test this, we recorded two further groups of subjects in a classroom setting who watched the material together (Joint 1 and Joint 2). These two groups obtained mean IVCs comparable to the individual recordings (Fig. 3, Bang! You're Dead: p > 0.49, Sophie's Choice: p > 0.26), and also showed reproducibility between the groups of simultaneous recordings (Fig. 3, Bang! You're Dead: p > 0.49, Sophie's Choice: p > 0.08).

Figure 4: The ISC of the first CorrCA component is temporally correlated with the average luminance differences (ALD) of the film stimulus (panel annotation: r = 0.71, p < 0.0002; axes: time in minutes against ISC and ALD). ALD is calculated as the frame-to-frame difference in pixel intensity, smoothed to match the 5 s window of ISC, and mainly reflects the frequency of changes in camera position. Data computed from the neural responses of subjects watching Bang! You're Dead.

Table 3: Correlation coefficients between the ALD and the ISC for the two viewings (v1, v2) as well as the IVC for the first correlated component. The correlation is presented for Bang! You're Dead and Sophie's Choice for the Individual and Scrambled (Scr) groups. Two asterisks denote p < 0.01.

| Stimulus | ISC v1 | ISC v2 | IVC |
|---|---|---|---|
| Bang! You're Dead | 0.71** | 0.61** | 0.56** |
| Sophie's Choice | 0.50** | 0.24** | 0.23** |
| Bang! You're Dead (Scr) | 0.54** | 0.45** | 0.35** |
| Sophie's Choice (Scr) | 0.42** | 0.01 | -0.22** |

Robustness to inter-subject variations in the spatial brain structure is a basic question when applying CorrCA to classroom data. CorrCA is derived under the assumption that the spatial networks of subjects are identical. This assumption could be challenged by inter-individual differences; however, the method turns out to be surprisingly robust to such variability (Kamronn et al., 2015).
To demonstrate this, we briefly analyse a 'worst case' scenario in which the true mixing weights of two subjects form a pair of orthogonal vectors. The observations are assumed to consist of a single true signal, $z$, mixed into $D$ dimensions with additive Gaussian noise: $X_1 = a_1 z^\top + \epsilon_1$, $X_2 = a_2 z^\top + \epsilon_2$. Given a large sample, the covariance matrices are given as $R_{11} = P \cdot a_1 a_1^\top + \sigma^2 I$ and $R_{12} = P \cdot a_1 a_2^\top$, where $P$ is the variance of $z$ and $\sigma^2$ signifies the noise variance. For simplicity the weight vectors are assumed to be unit length. The two matrices in Eq. (3) can then be written as

$$
(R_{11} + R_{22})^{-1} = \frac{1}{P}\left( \begin{bmatrix} a_1 & a_2 \end{bmatrix} \begin{bmatrix} a_1^\top \\ a_2^\top \end{bmatrix} + \frac{2\sigma^2}{P} I \right)^{-1}; \qquad
R_{12} + R_{21} = P \cdot \begin{bmatrix} a_1 & a_2 \end{bmatrix} \begin{bmatrix} a_2^\top \\ a_1^\top \end{bmatrix}, \tag{1}
$$

using block matrix notation. With $a_1^\top a_2 = 0$, $\|a_1\|^2 = \|a_2\|^2 = 1$ and the Woodbury identity, the product of the two matrices in Eq. (1) can be expressed as

$$
(R_{11} + R_{22})^{-1} (R_{12} + R_{21}) = \frac{P}{2\sigma^2 + P} \left( a_1 a_2^\top + a_2 a_1^\top \right). \tag{2}
$$

An eigenvector of matrix (2) takes the form $\alpha a_1 + \beta a_2$, with $\alpha = \pm\beta$ and $\pm\frac{P}{2\sigma^2 + P}$ as eigenvalues. By applying this eigenvector to the observations, $X_1$ and $X_2$, we see that CorrCA still identifies the relevant time series, $z$.

For the first CorrCA component, the channels weighted most heavily are the ones positioned over the occipital lobe (see Fig. 2b). To estimate how much of the ISC was driven by basic low-level visual processing, we analysed the relation between ISC and a measure of frame-to-frame luminance fluctuations (average luminance difference, ALD; see Methods). Note that to avoid synchronised eye artefacts and to ensure that only signals of neural origin contributed to the measured correlations, we removed independent components related to eye artefacts from the EEG (see Methods).

Figure 4 and Table 3 show that there is a significant correlation between the ISC and the ALD for both Bang! You're Dead and Sophie's Choice for the first CorrCA component. This suggests that this portion of the correlated activity may indeed be driven by low-level visual evoked responses. However, the degree of engagement, here represented by narrative coherence, appears to modulate the amplitude of the ISC time course: although the ISC for the scrambled stimulus was also driven by the visual stimulus, it was so to a lesser extent. Previous research has shown that visual evoked potentials (VEP) are modulated by spatial attention (Johannes et al., 1995) and that even feature-specific attention enhances steady-state VEPs (Müller et al., 2006).

Figure 5: Relation between the ISC and the ALD for different conditions (panels: Bang! You're Dead, annotated slopes 1.872 and 1.329; Sophie's Choice, annotated slopes 8.183 and 5.589; legend: Narrative, Scrambled; axes: ALD against ISC). Each point indicates a point in the ISC time course as seen in Fig. 2a (5 s windows, 80 % overlap) and the corresponding ALD calculated from the visual stimulus. It is evident that time points with higher luminance fluctuations (high ALD) result in higher correlation of brain activity across subjects (high ISC). The indicated "slope" is a least squares fit of the slope of lines passing through (0,0). The slope indicates the strength of ISC for a given ALD value. For both films there is a significant drop in the slope (p < 0.01; block permutation test with block size B = 25 s); thus the original narrative (blue) elicits higher ISC than the less engaging scrambled version of the films (red). Note that the brightness of the scenes in Sophie's Choice is much lower than in Bang! You're Dead, resulting in an ALD that is lower by almost a factor of 10.

We quantify the effect of scrambling the narrative by comparing the sensitivity (slope) of ISC to ALD in both the normal and scrambled conditions by fitting a simple linear model (Fig. 5).
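The Figure 5 caption describes the slope as a least squares fit of a line through (0,0). A minimal sketch of such a fit is given below; the function name `slope_through_origin` and the example values are hypothetical, and the paper's own fitting code is not shown in the text. For a line constrained to pass through the origin, the least squares slope reduces to the ratio of the sum of products to the sum of squared ALD values.

```python
import numpy as np


def slope_through_origin(ald, isc):
    """Least squares slope of isc = slope * ald, with no intercept term."""
    ald = np.asarray(ald, dtype=float)
    isc = np.asarray(isc, dtype=float)
    # Minimising sum((isc - s * ald)**2) over s gives s = <ald, isc> / <ald, ald>.
    return float(ald @ isc / (ald @ ald))


# Hypothetical values, for illustration only (not data from the study).
example_ald = np.array([0.04, 0.08, 0.12])
example_isc = 1.5 * example_ald
example_slope = slope_through_origin(example_ald, example_isc)
```

Comparing this slope between the narrative and scrambled conditions then gives a single number per condition for the sensitivity of ISC to ALD.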
For both films we found significant reductions of the ISC/ALD slope in the scrambled version (p < 0.01; block permutation test with block size B = 25 s).

Discussion

We have demonstrated that student neural reliability to media stimuli may be quantified using EEG in a classroom setting. For educational technology, cost and robustness are key features; hence, we aimed at establishing a realistic scenario based on low-cost consumer-grade equipment, the Smartphone Brain Scanner, focusing on several potential sources that could degrade robustness.

We have provided evidence that salient aspects of the neural reliability previously detected with laboratory-grade equipment can be reproduced in a realistic setting. We recorded fully synchronized EEG with nine subjects in a real classroom and found that the level of neural response reliability matched prior laboratory results. The robustness of CorrCA and ISC is supported by the reproducibility between recording conditions, both of the ISC time courses throughout the film clips and of the spatial topographies of the first three CorrCA components. For the film clip from Bang! You're Dead we saw that seven subjects were enough to obtain stable topographies for all three components, whereas for Sophie's Choice and the baseline video the results were noisier, suggesting that more subjects are needed to obtain stable results. Previous research shows that ten subjects were sufficient for stable results in a case involving non-narrative baseline videos or films with lower ISC and IVC in a laboratory setting (Dmochowski et al., 2012).

Mathematically, we have shown that our detection scheme, CorrCA, is robust to inter-subject variability in the spatial configurations of brain networks, or variability induced by cap misalignment. In the calculations, we assumed two subjects in a worst-case scenario where the subjects' spatial projections are orthogonal.
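The final step of that worst-case calculation can be written out explicitly. The following is a sketch under the assumptions stated in the Results (orthogonal, unit-length mixing vectors $a_1$ and $a_2$, signal variance $P$, noise variance $\sigma^2$); the noise symbols $\epsilon_1$ and $\epsilon_2$ and the choice $\alpha = \beta = 1$ are notation introduced here for illustration. Applying the eigenvector $w = a_1 + a_2$ to each subject's observations gives

$$
w^\top X_1 = (a_1 + a_2)^\top \left( a_1 z^\top + \epsilon_1 \right) = z^\top + w^\top \epsilon_1, \qquad
w^\top X_2 = (a_1 + a_2)^\top \left( a_2 z^\top + \epsilon_2 \right) = z^\top + w^\top \epsilon_2 ,
$$

since $a_1^\top a_1 = a_2^\top a_2 = 1$ and $a_1^\top a_2 = 0$. Both projections therefore contain $z$ with unit gain. If the two noise terms are independent, each projected noise term has variance $\sigma^2 \|w\|^2 = 2\sigma^2$, so the correlation between the two projections is

$$
\rho = \frac{P}{P + 2\sigma^2},
$$

which agrees with the positive eigenvalue of the matrix product in Eq. (2).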
This result conforms well with simulations showing that, even for multiple subjects with randomly drawn spatial projections, CorrCA was able to find the relevant time series (Kamronn et al., 2015). The simulations also showed that increasing the number of subjects decreased the signal-to-noise ratio, presumably due to the estimated common projection not being able to fit the different projections of each subject.

We have presented results that further indicate a relationship between changes in ISC and viewer engagement. Through a basic analysis of questionnaires on scenes of high impact, we found that high ISC is indeed associated with high impact. We have also shown a relationship between neural responses to luminance fluctuations and the coherence of the stimulus narrative. For both films presented, we saw a significant drop in the average IVC for subjects watching the film sequences in which the narrative had been temporally scrambled. At the same time, no significant difference was found between the groups watching the film sequences that had not been scrambled, which further underlines the robustness of the measure.

It may appear surprising that there exists a significant correlation between the raw EEG signals of various students in the classroom. However, it is well known that eye scan patterns in a film audience follow a specific pattern after a scene change, activating the dorsal pathway (Unema et al., 2005). A reasonable assumption could therefore be that the correlation is due to synchronised artefacts from eye movements, but this has recently been shown not to affect attentional modulation of ISC (Ki et al., 2016). Also, it is known that stimuli in the form of flashing images elicit VEPs, which are modulated in amplitude by the luminance (Armington, 1968).
When recorded with EEG, the spatial distribution of the early VEP at 100 ms (P100) is similar to the scalp maps of the first correlated component (C1 in Fig. 2b) (Johannes et al., 1995; Sandmann et al., 2012). We investigated whether low-level visual processes could be a driving force behind the measured ISCs by correlating the ISC with changes in luminance in the video stimuli, as measured by the ALD. We found that luminance fluctuations drive a significant portion of the ISC. In all four groups of subjects Sophie's Choice obtained lower IVC compared to Bang! You're Dead. This difference could be explained by the fact that the film clip also had a much lower ALD. Also, Fig. 4 indicates that the passage in Bang! You're Dead with the highest and most sustained ISC (around 1:20 to 1:50) coincides with the interval with the most scene changes. This relationship could, however, also be due to more complex processes, as fast-paced cutting is a known cinematographic tool used by Hitchcock to induce suspense and thereby increase the attention of the viewer (Bordwell, 2002).

The strong link between ISC and luminance fluctuations due to scene cuts has also recently been presented in an fMRI study (Herbec et al., 2015). This would be interesting to take into account in future studies investigating the applicability of ISC. Baseline videos could be created so as to achieve ALD features similar to those of the target stimuli. The baseline video created for this study consisted of one continuous scene of people entering and exiting an escalator in a relaxed manner, which did not produce any significant correlation. Future studies might use a baseline video containing scene cuts of faces and body parts, to also take the effect of editing into account.
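To make the ALD measure concrete, the Figure 4 caption describes it as the frame-to-frame difference in pixel intensity, smoothed to match the 5 s ISC window. A minimal sketch under several assumptions is given below: the function name `average_luminance_difference` is hypothetical, the frames are taken to be greyscale arrays, the difference is taken as an absolute value averaged over pixels, the smoothing is a simple moving average, and the default of 25 frames per second is inferred from the Methods statement that 250 frames correspond to about 10 s. The paper's exact ALD definition is given in its Methods and may differ.

```python
import numpy as np


def average_luminance_difference(frames, fps=25.0, smooth_s=5.0):
    """Smoothed frame-to-frame luminance change (hypothetical helper).

    frames: array of shape (n_frames, height, width) with greyscale
    intensities. Returns n_frames - 1 values, one per frame transition.
    """
    frames = np.asarray(frames, dtype=float)
    # Absolute intensity change per pixel between consecutive frames.
    diff = np.abs(np.diff(frames, axis=0))
    # Average over all pixels: one value per frame transition.
    raw = diff.mean(axis=(1, 2))
    # Moving average spanning the ISC window (assumed boxcar smoothing).
    width = max(1, int(round(smooth_s * fps)))
    kernel = np.ones(width) / width
    return np.convolve(raw, kernel, mode="same")
```

In this sketch a single hard cut from a black frame to a white frame contributes one unit of raw difference, which the smoothing spreads evenly over the surrounding 5 s, so passages with frequent cuts accumulate a high ALD.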
To investigate the possibility of higher-level processes also being at play, we analysed the linear relationship between ISC and luminance fluctuations at a given time in the video stimulus. The scrambling operation aimed to test for a change in attentional engagement while controlling for low-level features. The premise was that subjects would be less attentive to the stimulus, i.e. less "engaged", if they did not follow the narrative arc of the story. With that in mind, Figs. 4 and 5 suggest that ISC is driven by stimulus-evoked responses that are modulated by attentional engagement with the stimulus.

We have demonstrated the feasibility of tracking inter-subject correlation in a classroom setting, a measure that has been related to attentional modulation (Ki et al., 2016). We have shown that ISC is robust to recording equipment and conditions, and we have presented evidence that the amplification of ISC in films that have a strong and coherent narrative is due to attentional modulation of visual evoked responses. Thus ISC may be used as an indirect electrophysiological measure of engagement through an attentional top-down modulation of low-level neural processes. Recent research has shown that attentional modulation of neural responses takes place in speech perception (Mesgarani and Chang, 2012; Mirkovic et al., 2015), which lends credibility to a similar process occurring in the visual system. The evidence that such a basic and well-defined mechanism could be at play further adds to the robustness of the approach in real everyday scenarios.

Methods

Protocol. Four groups of subjects watched the video stimuli in different scenarios. The first group (N = 12, Individual) watched videos individually in an office environment on a tablet computer (Google Nexus 7 tablet, with a 7" (17.8 cm) screen) with earphones.
The second group (N = 12) saw the videos in the same manner, but the scenes of the film stimulus were scrambled in time, resulting in the narrative being lost (Scrambled). The objective of this condition was to demonstrate that the similarity of responses across subjects is not simply the result of low-level stimulus features (which are identical in the Individual and Scrambled conditions), but instead is modulated by narrative coherence, which presumably engages viewers. Two additional groups (N = 9, N = 9) watched the original videos on a screen in a classroom (Figure 1, Joint 1 and Joint 2), with sound projected through loudspeakers. An attempt was made to create viewing conditions for the subjects in the joint groups that were similar to the viewing conditions for the individual group, i.e., lights were dimmed and the projected image produced approximately the same field of view (see supplementary materials). The central question was whether the viewing condition (i.e., in a group versus individually) influences the level of ISC across subjects.

Stimuli. The first video clip was a suspenseful excerpt from the short film Bang! You're Dead (1961), directed by Alfred Hitchcock. It was selected because it is known to elicit highly reliable brain activity across subjects in fMRI (Hasson et al., 2004) as well as EEG (Dmochowski et al., 2012). Our second stimulus was a clip from Sophie's Choice, directed by Alan J. Pakula (1982), which has been used earlier to study fMRI activity in the context of emotionally salient naturalistic stimuli (Raz et al., 2012). A third, non-narrative control video was recorded in a Danish metro station and showed several people being transported quietly on an escalator. Each video clip had a length of approximately six minutes and was shown twice to each subject.
For each viewing the order of the clips was randomized, and the same random order was used the second time the clips were shown. A combined video was created for each of the six possible permutations of the order of the clips, starting with a 10 second 43 Hz tone for use in post-processing synchronization, and with 20 seconds of black screen between each film clip. The total length of the video amounted to 39 minutes.

An additional control stimulus (Scrambled) was created by scrambling the order of the scenes in Bang! You're Dead and Sophie's Choice in accordance with previous research (Hasson et al., 2008; Dmochowski et al., 2012). In these studies, scene segments were defined at varying temporal scales (36 s, 12 s, and 4 s) and consisted of multiple camera positions, "shots". For this study we defined a scene as a single shot (i.e. the segment between two scene cuts) with the added rule that a scene must not exceed 250 frames (∼10 s), to reduce subjects' ability to infer the narrative from long scenes. This procedure resulted in 73 scenes lasting between 0.5 and 10 seconds, corresponding to the intermediate to short time-scales employed in previous studies (Hasson et al., 2008).

Subjects. A total of 42 female subjects (mean age: 22.4 y, age range: 18-32 y), who gave written informed consent prior to the experiment, were recruited for this study. Non-invasive experiments on healthy subjects are exempt from ethical committee processing by Danish law (Den Nationale Videnskabsetiske Komité, 2014). Among the 42 recordings, nine were excluded due to unstable wireless communication that precluded proper synchronization of the data across subjects (five from the Individual group, one from the Scrambled group and three from the two Joint groups). The difference in the number of recordings in the different groups could give unfair advantages with respect to noise when using CorrCA or calculating ISC.
We therefore randomly chose four subjects from the Scrambled group and one from the Joint 2 group and excluded these from the analyses. This was to ensure that each group had seven fully synchronized recordings.

Portable EEG – Smartphone Brain Scanner. Research-grade EEG equipment is costly, time-consuming to set up, and immobile. Recently, however, consumer-grade EEG equipment has appeared that is more affordable and more comfortable to wear. Here we use the modified 14-channel system 'Emocap', based on the Emotiv EPOC EEG headset. For details and validation, see Stopczynski et al. (2014a,b). In this study it was implemented on Asus Nexus 7 tablets. An electrical trigger and associated sound were used to synchronize EEG and video signals in the individual viewing condition, while a split audio signal (simultaneously feeding into microphone and EEG amplifiers) was used to synchronize the nine subjects' EEG recordings and the video in the joint viewing condition (see supplementary materials for further information on synchronisation). The resulting timing uncertainty was measured to be less than 16 ms. The EEG was recorded at 128 Hz and subsequently bandpass filtered digitally between 0.5 and 45 Hz using a linear-phase windowed-sinc FIR filter, and shifted to adjust for group delay. Eye artefacts were reduced with a conservative pre-processing procedure using independent component analysis (ICA), removing up to 3 of the 14 available components (Corrmap plug-in for EEGLAB (Delorme and Makeig, 2004; Viola et al., 2009)).

Correlated component analysis to measure ISC and IVC. CorrCA was introduced by Dmochowski et al. (2012) as a constrained version of Canonical Correlation Analysis (CCA). CorrCA seeks sets of weights that maximise the correlation between the neural activity of subjects experiencing the same stimuli.
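As a rough illustration of the shared-weight idea, the following NumPy sketch solves the two-view CorrCA eigenvalue problem that this section states as Eq. (3). It is not the authors' implementation: the function name `corrca_two_views`, the channels-by-samples array layout, the mean-centring step and the Cholesky-based solver are assumptions made for the example, and the synthetic data merely stand in for EEG.

```python
import numpy as np


def corrca_two_views(X1, X2):
    """Two-view CorrCA with shared weights (illustrative sketch).

    X1, X2: arrays of shape (D, N), channels by time samples.
    Returns (rho, W): eigenvalues in descending order and the matching
    weight vectors as the columns of W.
    """
    # Assumption: remove each channel's mean so X @ X.T / N is a covariance.
    X1 = X1 - X1.mean(axis=1, keepdims=True)
    X2 = X2 - X2.mean(axis=1, keepdims=True)
    N = X1.shape[1]

    R11 = X1 @ X1.T / N
    R22 = X2 @ X2.T / N
    R12 = X1 @ X2.T / N
    R21 = R12.T

    A = R11 + R22  # pooled within-view covariance (symmetric, positive definite)
    B = R12 + R21  # pooled cross-view covariance (symmetric)

    # Solve A^{-1} B w = rho w via Cholesky whitening, which keeps the
    # problem symmetric so eigenvalues and eigenvectors are real.
    L = np.linalg.cholesky(A)                        # A = L @ L.T
    C = np.linalg.solve(L, np.linalg.solve(L, B).T)  # L^{-1} B L^{-T}
    C = (C + C.T) / 2                                # remove round-off asymmetry
    rho, V = np.linalg.eigh(C)                       # eigenvalues in ascending order
    W = np.linalg.solve(L.T, V)                      # map back: w = L^{-T} v

    order = np.argsort(rho)[::-1]
    return rho[order], W[:, order]


# Synthetic demo (not EEG): one shared source mixed into 4 channels, plus noise.
rng = np.random.default_rng(0)
n_samples = 2000
source = rng.standard_normal(n_samples)
mixing = np.array([1.0, -0.5, 0.3, 0.0])
view1 = np.outer(mixing, source) + 0.5 * rng.standard_normal((4, n_samples))
view2 = np.outer(mixing, source) + 0.5 * rng.standard_normal((4, n_samples))

rho, W = corrca_two_views(view1, view2)
y1, y2 = W[:, 0] @ view1, W[:, 0] @ view2  # first component in each view
print("rho:", np.round(rho, 3))
print("first-component correlation:", round(float(np.corrcoef(y1, y2)[0, 1]), 3))
```

In this toy setting the first weight vector should align with the shared mixing pattern and the remaining eigenvalues should stay near zero. Extending the sketch to more than two subjects would require summing covariances over all subject pairs, which is not shown here.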
For each neural component, CorrCA finds one shared set of weights for all subjects in the group. Given two multivariate spatio-temporal time series (termed views), $\{X_1, X_2\} \in \mathbb{R}^{D \times N}$, with $D$ being the number of measured features (EEG channels) in the two views and $N$ the number of time samples, CCA estimates weights, $\{w_1, w_2\}$, which maximize the correlation between the components, $y_1 = X_1^\top w_1$ and $y_2 = X_2^\top w_2$. The weights are calculated using two eigenvalue equations, with the constraint that the components belonging to each multivariate time series are uncorrelated (Hardoon et al., 2004). CorrCA is relevant for the case where the views are homogeneous, e.g., using the same EEG channel positions, and imposes the additional constraint of shared weights, $w = w_1 = w_2$. This assumption can potentially increase sensitivity, since it involves fewer parameters. In CorrCA the weights are thus estimated through a single eigenvalue problem:

$$(R_{11} + R_{22})^{-1}(R_{12} + R_{21})\, w = \rho\, w, \tag{3}$$

where $R_{ij} = \frac{1}{N} X_i X_j^\top$ is the sample covariance matrix (Dmochowski et al., 2012). To illustrate the spatial distribution of the underlying physiological activity of the components, we use the estimated forward models ("patterns") as discussed in Parra et al. (2005) and Haufe et al. (2014).

Average luminance difference (ALD). Video clips were converted to grey scale (0-255) by averaging over the three colour channels. We then calculated the squared difference in pixel intensity from one frame to the next and took the average across pixels. These signals were non-linearly re-sampled at 1 Hz by selecting the maximum ALD for each 1 s interval, to emphasise the large differences during changes in camera position (see figure S2 in supplementary materials for a comparison between frame-to-frame and smoothed difference).
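The ALD steps described so far (grey-scale conversion, squared frame-to-frame difference, pixel average, and per-second maximum) can be sketched in NumPy as follows. This is a minimal illustration under stated assumptions, not the authors' code: the function name `ald_per_second`, the `(T, H, W, 3)` frame layout, the integer `fps` parameter and the choice to drop a trailing partial second are all hypothetical, and the 25 fps used in the demo is only inferred from the statement that 250 frames last roughly 10 s.

```python
import numpy as np


def ald_per_second(frames, fps):
    """Average luminance difference, reduced to one value per second.

    frames: array of shape (T, H, W, 3) holding RGB values in 0-255.
    fps:    integer frame rate (an assumption of this sketch).
    """
    frames = np.asarray(frames, dtype=float)
    grey = frames.mean(axis=-1)            # average over the three colour channels
    sq_diff = np.diff(grey, axis=0) ** 2   # squared change from one frame to the next
    ald = sq_diff.mean(axis=(1, 2))        # average across pixels, length T - 1
    n_seconds = len(ald) // fps            # assumption: drop a trailing partial second
    # Non-linear re-sampling to 1 Hz: keep the maximum within each 1 s interval.
    return ald[: n_seconds * fps].reshape(n_seconds, fps).max(axis=1)


# Synthetic clip: 76 frames at 25 fps, uniform grey with one hard cut at frame 30.
fps = 25
clip = np.full((76, 4, 4, 3), 50.0)
clip[30:] = 200.0
ald = ald_per_second(clip, fps)
print(ald)
```

Taking the maximum rather than the mean within each second keeps a single scene cut from being diluted by the many near-identical frames around it, which matches the stated aim of emphasising changes in camera position.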
These values were then smoothed in time by convolving with a Gaussian kernel with a "variance" parameter of 2.5 s². This down-sampling and smoothing was aimed at matching the temporal resolution of the ALD to that of the time-resolved ISC computation (5 s sliding window with 1 s intervals).

Statistical testing. In order to evaluate the statistical relevance of the correlations, we employed a simple permutation test (P = 5000 permutations) (Dmochowski et al., 2012). To test the robustness of the obtained weights for the spatial projections, we calculated the average correlation of all possible pairings of the four condition groups for a given component. Again, we employed a permutation test (P = 5000 permutations) to evaluate statistical relevance, by randomly permuting the channel order for each group and recalculating the average correlation. When testing differences in average IVC between conditions, we used a block permutation test (block size B = 25 s, P = 5000 permutations) to account for temporal dependencies.

References

Anderson, D. R., Fite, K. V., Petrovich, N., and Hirsch, J. (2006). Cortical activation while watching video montage: An fMRI study. Media Psychology, 8(1):7–24.
Armington, J. C. (1968). The electroretinogram, the visual evoked potential, and the area-luminance relation. Vision Research, 8:263–276.
Attfield, S., Kazai, G., Lalmas, M., and Piwowarski, B. (2011). Towards a science of user engagement. In WSDM Workshop on User Modelling for Web Applications. ACM International Conference on Web Search And Data Mining.
Bordwell, D. (2002). Intensified continuity: visual style in contemporary American film. Film Quarterly, 55(3):16–28.
Chang, W.-T., Jääskeläinen, I. P., Belliveau, J. W., Huang, S., Hung, A.-Y., Rossi, S., and Ahveninen, J. (2015). Combined MEG and EEG show reliable patterns of electromagnetic brain activity during natural viewing. NeuroImage, 114:49–56.
Cohen, A., Ivry, R. B., and Keele, S. W. (1990). Attention and structure in sequence learning. J.Exp.Psychol.[Learn.Mem.Cogn.], 16(1):17–30.
Delorme, A. and Makeig, S. (2004). EEGLAB: An open source toolbox for analysis of single-trial EEG dynamics including independent component analysis. Journal of Neuroscience Methods, 134(1):9–21.
Den Nationale Videnskabsetiske Komité (2014). Vejledning om anmeldelse, indberetning mv. (sundhedsvidenskablige forskningsprojekter).
Dmochowski, J. P., Bezdek, M. A., Abelson, B. P., Johnson, J. S., Schumacher, E. H., and Parra, L. C. (2014). Audience preferences are predicted by temporal reliability of neural processing. Nature Communications, 5:1–9.
Dmochowski, J. P., Sajda, P., Dias, J., and Parra, L. C. (2012). Correlated components of ongoing EEG point to emotionally laden attention - a possible marker of engagement? Frontiers in Human Neuroscience, 6(May):112.
Hardoon, D. R., Szedmak, S., and Shawe-Taylor, J. (2004). Canonical correlation analysis; An overview with application to learning methods. Neural Computation, 16(12):2639–2664.
Hasson, U., Landesman, O., Knappmeyer, B., Vallines, I., Rubin, N., and Heeger, D. J. (2008). Neurocinematics: The neuroscience of film. Projections, 2(1):1–26.
Hasson, U., Nir, Y., Levy, I., Fuhrmann, G., and Malach, R. (2004). Intersubject synchronization of cortical activity during natural vision. Science, 303(5664):1634–1640.
Haufe, S., Meinecke, F., Görgen, K., Dähne, S., Haynes, J.-D., Blankertz, B., and Bießmann, F. (2014). On the interpretation of weight vectors of linear models in multivariate neuroimaging. NeuroImage, 87:96–110.
Herbec, A., Kauppi, J. P., Jola, C., Tohka, J., and Pollick, F. E. (2015). Differences in fMRI intersubject correlation while viewing unedited and edited videos of dance performance. Cortex, 71:341–348.
Hoffmann, H. (2015). violin.m - Simple violin plot using matlab default kernel density estimation.
Johannes, S., Münte, T. F., Heinze, H. J., and Mangun, G. R. (1995). Luminance and spatial attention effects on early visual processing. Cognitive Brain Research, 2:189–205.
Kamronn, S., Poulsen, A. T., and Hansen, L. K. (2015). Multiview Bayesian Correlated Component Analysis. Neural Computation, 27(10):2207–2230.
Ki, J. J., Kelly, S. P., and Parra, L. C. (2016). Attention strongly modulates reliability of neural responses to naturalistic narrative stimuli. The Journal of Neuroscience, 36(10):3092–3101.
Koles, Z. J., Lazar, M. S., and Zhou, S. Z. (1990). Spatial patterns underlying population differences in the background EEG. Brain Topography, 2(4):275–284.
Lahnakoski, J. M., Glerean, E., Jääskeläinen, I. P., Hyönä, J., Hari, R., Sams, M., and Nummenmaa, L. (2014). Synchronous brain activity across individuals underlies shared psychological perspectives. NeuroImage, 100:316–24.
Lankinen, K., Saari, J., Hari, R., and Koskinen, M. (2014). Intersubject consistency of cortical MEG signals during movie viewing. NeuroImage, 92:217–224.
Lin, C.-T., Huang, K.-C., Chuang, C.-H., Ko, L.-W., and Jung, T.-P. (2013). Can arousing feedback rectify lapses in driving? Prediction from EEG power spectra. Journal of Neural Engineering, 10(5):056024.
Mesgarani, N. and Chang, E. F. (2012). Selective cortical representation of attended speaker in multi-talker speech perception. Nature, 485(7397):233–236.
Miller, G. A. and Selfridge, J. A. (1950). Verbal context and the recall of meaningful material. The American Journal of Psychology, 63(2):176–185.
Mirkovic, B., Debener, S., Jaeger, M., and De Vos, M. (2015). Decoding the attended speech stream with multi-channel EEG: implications for online, daily-life applications. Journal of Neural Engineering, 12(4):046007.
Müller, M. M., Andersen, S., Trujillo, N. J., Valdés-Sosa, P., Malinowski, P., and Hillyard, S. A. (2006). Feature-selective attention enhances color signals in early visual areas of the human brain. Proceedings of the National Academy of Sciences of the United States of America, 103(38):14250–4.
Naci, L., Sinai, L., and Owen, A. M. (2015). Detecting and interpreting conscious experiences in behaviorally non-responsive patients. NeuroImage.
O'Brien, H. L. and Toms, E. G. (2013). Examining the generalizability of the user engagement scale (UES) in exploratory search. Information Processing & Management, 49(5):1092–1107.
Parra, L. and Sajda, P. (2003). Blind source separation via generalized eigenvalue decomposition. The Journal of Machine Learning Research, 4:1261–1269.
Parra, L. C., Spence, C. D., Gerson, A. D., and Sajda, P. (2005). Recipes for the linear analysis of EEG. NeuroImage, 28(2):326–41.
Radwan, A. A. (2005). The effectiveness of explicit attention to form in language learning. System, 33(1):69–87.
Raz, G., Winetraub, Y., Jacob, Y., Kinreich, S., Maron-Katz, A., Shaham, G., Podlipsky, I., Gilam, G., Soreq, E., and Hendler, T. (2012). Portraying emotions at their unfolding: a multilayered approach for probing dynamics of neural networks. NeuroImage, 60(2):1448–61.
Ringach, D. L., Hawken, M. J., and Shapley, R. (2002). Receptive field structure of neurons in monkey primary visual cortex revealed by stimulation with natural image sequences. Journal of Vision, 2(1):2–2.
Robinson, P. (1997). Individual differences and the fundamental similarity of implicit and explicit adult second language learning. Language Learning, 47(1):45–99.
Sandmann, P., Dillier, N., Eichele, T., Meyer, M., Kegel, A., Pascual-Marqui, R. D., Marcar, V. L., Jäncke, L., and Debener, S. (2012). Visual activation of auditory cortex reflects maladaptive plasticity in cochlear implant users. Brain, 135:555–568.
Stopczynski, A., Stahlhut, C., Larsen, J. E., Petersen, M. K., and Hansen, L. K. (2014a). The Smartphone Brain Scanner: A Portable Real-Time Neuroimaging System. PLoS ONE, 9(2):e86733.
Stopczynski, A., Stahlhut, C., Petersen, M. K., Larsen, J. E., Jensen, C. F., Ivanova, M. G., Andersen, T. S., and Hansen, L. K. (2014b). Smartphones as pocketable labs: visions for mobile brain imaging and neurofeedback. International Journal of Psychophysiology, 91(1):54–66.
Szafir, D. and Mutlu, B. (2013). Artful: adaptive review technology for flipped learning. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, pages 1001–1010. ACM.
Unema, P. J., Pannasch, S., Joos, M., and Velichkovsky, B. M. (2005). Time course of information processing during scene perception: The relationship between saccade amplitude and fixation duration. Visual Cognition, 12(3):473–494.
Viola, F. C., Thorne, J., Edmonds, B., Schneider, T., Eichele, T., and Debener, S. (2009). Semi-automatic identification of independent components representing EEG artifact. Clinical Neurophysiology, 120(5):868–877.

Acknowledgements

We thank Ivana Konvalinka, Arek Stopczynski and the DTU Smartphone Brain Scanner team for their assistance and helpful discussions. This work was supported by the Lundbeck Foundation through the Center for Integrated Molecular Brain Imaging and by Innovation Foundation Denmark through Neurotechnology for 24/7 brain state monitoring.

Author contributions statement

ATP, SK, JD, LP, and LKH designed research; SK, ATP, and LKH performed research; ATP, SK, JD, LP, and LKH contributed analytical tools; ATP, SK, and LKH analysed data; ATP, SK, JD, LP, and LKH wrote the paper.
Additional information

Competing financial interests. The authors declare that the research was conducted in the absence of any commercial or financial relationships that could be construed as a potential conflict of interest.
