Towards better decoding and language model integration in sequence to sequence models

The recently proposed Sequence-to-Sequence (seq2seq) framework advocates replacing complex data processing pipelines, such as an entire automatic speech recognition system, with a single neural network trained in an end-to-end fashion. In this contri…

Authors: Jan Chorowski, Navdeep Jaitly

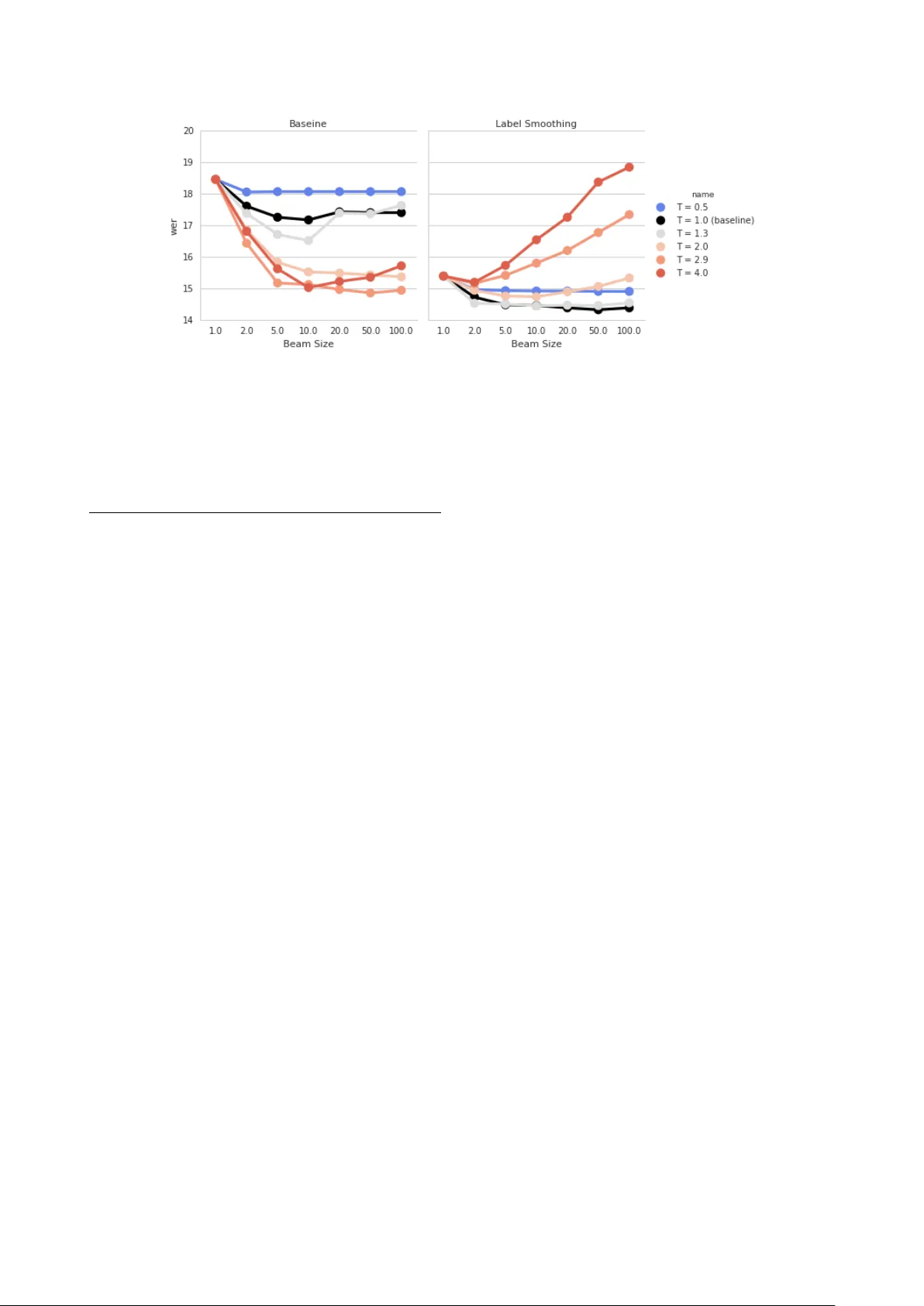

T owards better decoding and language model integration in sequence to sequence models J an Chor owski, Navdeep J aitly Google Brain Google Inc. Mountain V ie w , CA 94043, USA jan.chorowski@cs.uni.wroc.pl,ndjaitly@google.com Abstract The recently proposed Sequence-to-Sequence (seq2seq) frame- work advocates replacing complex data processing pipelines, such as an entire automatic speech recognition system, with a single neural network trained in an end-to-end fashion. In this contribution, we analyse an attention-based seq2seq speech recognition system that directly transcribes recordings into char- acters. W e observe two shortcomings: ov erconfidence in its predictions and a tendency to produce incomplete transcriptions when language models are used. W e propose practical solutions to both problems achieving competiti ve speaker independent word error rates on the W all Street Journal dataset: without sepa- rate language models we reach 10.6% WER, while together with a trigram language model, we reach 6.7% WER. Index T erms : attention mechanism, recurrent neural networks, LSTM 1. Introduction Deep learning [ 1 ] has led to many breakthroughs including speech and image recognition [ 2 , 3 , 4 , 5 , 6 , 7 ]. A subfamily of deep models, the Sequence-to-Sequence (seq2seq) neural net- works ha ve pro ved to be very successful on complex transduction tasks, such as machine translation [ 8 , 9 , 10 ], speech recognition [ 11 , 12 , 13 ], and lip-reading [ 14 ]. Seq2seq networks can typi- cally be decomposed into modules that implement stages of a data processing pipeline: an encoding module that transforms its inputs into a hidden representation, a decoding (spelling) module which emits target sequences and an attention module that com- putes a soft alignment between the hidden representation and the targets. T raining directly maximizes the probability of observing desired outputs conditioned on the inputs. This discriminativ e training mode is fundamentally different from the generative "noisy channel" formulation used to b uild classical state-of-the art speech recognition systems. As such, it has benefits and limitations that are different from classical ASR systems. Understanding and pre venting limitations specific to seq2seq models is crucial for their successful de velopment. Discrimina- tiv e training allows seq2seq models to focus on the most infor- mativ e features. Ho wever , it also increases the risk of overfitting to those few distinguishing characteristics. W e ha ve observed that seq2seq models often yield very sharp predictions, and only a few hypotheses need to be considered to find the most lik ely transcription of a giv en utterance. Ho wev er , high confidence reduces the div ersity of transcripts obtained using beam search. During typical training the models are conditioned on ground truth transcripts and are scored on one-step ahead predictions. By itself, this training criterion does not ensure that all rele vant fragments of the input utterance are transcribed. Subsequently , mistakes that are introduced during decoding may cause the model to skip some words and jump to another place in the recording. The problem of incomplete transcripts is especially apparent when external language models are used. 2. Model Description Our speech recognition system, builds on the recently proposed Listen, Attend and Spell network [ 13 ]. It is an attention-based seq2seq model that is able to directly transcribe an audio record- ing x into a space-delimited sequence of characters y . Similarly to other seq2seq neural networks, it uses an encoder-decoder architecture composed of three parts: a listener module tasked with acoustic modeling, a speller module tasked with emitting characters and an attention module serving as the intermediary between the speller and the listener: h = Listen ( x ) (1) p ( y | x ) = AttendAndSpell ( y , h ) (2) 2.1. The Listener The listener is a multilayer Bi-LSTM network that transforms a sequence of N frames of acoustic features x 1 , x 2 , . . . , x N into a possibly shorter sequence of hidden activ ations h 1 , h 2 , . . . , h N/k , where k is a time reduction constant [ 12 , 13 ]. 2.2. The Speller and the Attention Mechanism The speller computes the probability of a sequence of characters conditioned on the activ ations of the listener . The probability is computed one character at a time, using the chain rule: p ( y | h ) = Y i p ( y i | y τ # . (11) The cov erage criterion prev ents looping ov er the utterance because once the cumulativ e attention bypasses the threshold τ a frame is counted as selected and subsequent selections of this frame do not reduce the decoding cost. In our implementation, the cov erage is recomputed at each beam search iteration using all attention weights produced up to this step. In Figure 2 we compare the effects of the three methods when decoding a network that uses label smoothing and a tri- gram language model. Unlike [ 12 ] we didn’t experience looping when beam search promoted transcript length. W e hypothe- size that label smoothing increases the cost of correct character emissions which helps balancing all terms used by beam search. W e observ e that at large beam widths constraining EOS emis- sions is not sufficient. In contrast, both promoting coverage and transcript length yield improvements with increasing beams. Howe ver , simply maximizing transcript length yields more word insertion errors and achiev es an overall w orse WER. 4. Experiments W e conducted all experiments on the W all Street Journal dataset, training on si284, validating on dev93 and e valuating on e val92 Figure 2: Impact of using techniques that pr event incomplete transcripts when a trigr am langua ge models is used on the dev93 WSJ subset. Results are aver aged acr oss two networks set. The models were trained on 80-dimensional mel-scale filter- banks extracted e very 10ms form 25ms windows, extended with their temporal first and second order differences and per-speaker mean and v ariance normalization. Our character set consisted of lowercase letters, the space, the apostrophe, a noise marker , and start- and end- of sequence tokens. For comparison with previously published results, experiments inv olving language models used an e xtended-vocabulary trigram language model built by the Kaldi WSJ s5 recipe [ 18 ]. W e have use the FST framew ork to compose the language model with a "spelling lexicon" [ 6 , 12 , 19 ]. All models were implemented using the T ensorflow frame work [20]. Our base configuration implemented the Listener using 4 bidirectional LSTM layers of 256 units per direction (512 total), interleav ed with 3 time-pooling layers which resulted in an 8-fold reduction of the input sequence length, approximately equating the length of hidden acti vations to the number of characters in the transcript. The Speller was a single LSTM layer with 256 units. Input characters were embedded into 30 dimensions. The attention MLP used 128 hidden units, previous attention weights were accessed using 3 conv olutional filters spanning 100 frames. LSTM weights were initialized uniformly over the range ± 0 . 075 . Networks were trained using 8 asynchronous replica workers each employing the AD AM algorithm [ 21 ] with default parameters and the learning rate set initially to 10 − 3 , then reduced to 10 − 4 and 10 − 5 after 400k and 500k training steps, respectiv ely . Static Gaussian weight noise with standard deviation 0.075 was applied to all weight matrices after 20000 training steps. W e have also used a small weight decay of 10 − 6 . W e hav e compared two label smoothing methods: unigram smoothing [ 17 ] with the probability of the correct label set to 0 . 95 and neighborhood smoothing with the probability of correct token set to 0 . 9 and the remaining probability mass distrib uted symmetrically ov er neighbors at distance ± 1 and ± 2 with a 5 : 2 ratio. W e have tuned the smoothing parameters with a small grid search and have found that good results can be obtained for a broad range of settings. W e hav e gathered results obtained without language models in T able 2. W e ha ve used a beam size of 10 and no mechanism to promote longer sequences. W e report a verages of two runs taken at the epoch with the lo west validation WER. Label smoothing brings a large error rate reduction, nearly matching the perfor- T able 2: Results without separate language model on WSJ . Model Parameters dev93 eval92 CTC [3] 26.5M - 27.3 seq2seq [12] 5.7M - 18.6 seq2seq [24] 5.9M - 12.9 seq2seq [22] - - 10.5 Baseline 6.6M 17.9 14.2 Unigram LS 6.6M 13.7 10.6 T emporal LS 6.6M 14.1 10.7 T able 3: Results withextended trigram langua ge model on WSJ. Model dev93 eval92 seq2seq [12] - 9.3 CTC [3] - 8.2 CTC [6] - 7.3 Baseline + Cov 12.6 8.9 Unigram LS + Cov . 9.9 7.0 T emporal LS + Cov . 9.7 6.7 mance achiev ed with very deep and sophisticated encoders [ 22 ]. T able 3 gathers results that use the extended trigram lan- guage model. W e report av erages of two runs. For each run we hav e tuned beam search parameters on the validation set and applied them on the test set. A typical setup used beam width 200, language model weight λ = 0 . 5 , cov erage weight γ = 1 . 5 and coverage threshold τ = 0 . 5 . Our best result surpasses CTC- based networks [ 6 ] and matches the results of a DNN-HMM and CTC ensemble [23]. 5. Related W ork Label smoothing was proposed as an efficient regularizer for the Inception architecture [ 16 ]. Several improved smoothing schemes were proposed, including sampling erroneous labels instead of using a fixed distribution [ 25 ], using the marginal label probabilities [ 17 ], or using early errors of the model [ 26 ]. Smoothing techniques increase the entropy of a model’ s pre- dictions, a technique that was used to promote exploration in reinforcement learning [ 27 , 28 , 29 ]. Label smoothing prev ents saturating the SoftMax nonlinearity and results in better gradient flow to lower layers of the network [ 16 ]. A similar concept, in which training tar gets were set slightly belo w the range of the output nonlinearity was proposed in [30]. Our seq2seq networks are locally normalized, i.e. the speller produces a probability distrib ution at every step. Alternatively normalization can be performed globally on whole transcripts. In discriminati ve training of classical ASR systems normaliza- tion is performed over lattices [ 31 ]. In the case of recurrent networks lattices are replaced by beam search results. Global normalization has yielded important benefits on many NLP tasks including parsing and translation [ 32 , 33 ]. Global normalization is expensi ve, because each training step requires running beam search inference. It remains to be established whether globally normalized models can be approximated by cheaper to train lo- cally normalized models with proper regularization such as label smoothing. Using source coverage v ectors has been inv estigated in neu- ral machine translation models. Past attentions vectors were used as auxiliary inputs in the emitting RNN either directly [ 34 ], or as cumulativ e coverage information [ 35 ]. Coverage embeddings vectors associated with source words end modified during train- ing were proposed in [ 36 ]. Our solution that employs a co verage penalty at decode time only is most similar to the one used by the Google T ranslation system [10]. 6. Conclusions W e hav e demonstrated that with ef ficient regularization and care- ful decoding the sequence-to-sequence approach to speech recog- nition can be competiti ve with other non-HMM techniques, such as CTC. 7. Acknowledgements 8. References [1] Y . LeCun, Y . Bengio, and G. Hinton, “Deep learning, ” Nature , vol. 521, no. 7553, pp. 436–444, 2015. [2] G. Hinton, L. Deng, D. Y u, G. E. Dahl, A.-r . Mohamed, N. Jaitly , A. Senior, V . V anhoucke, P . Nguyen, T . N. Sainath et al. , “Deep neural networks for acoustic modeling in speech recognition: The shared views of four research groups, ” IEEE Signal Processing Magazine , vol. 29, no. 6, pp. 82–97, 2012. [3] A. Grav es and N. Jaitly , “T owards end-to-end speech recognition with recurrent neural networks. ” in ICML , vol. 14, 2014, pp. 1764– 1772. [4] A. Hannun, C. Case, J. Casper, B. Catanzaro, G. Diamos, E. Elsen, R. Prenger , S. Satheesh, S. Sengupta, A. Coates et al. , “Deep speech: Scaling up end-to-end speech recognition, ” arXiv preprint arXiv:1412.5567 , 2014. [5] D. Amodei, R. Anubhai, E. Battenberg, C. Case, J. Casper, B. Catanzaro, J. Chen, M. Chrzanowski, A. Coates, G. Diamos et al. , “Deep speech 2: End-to-end speech recognition in english and mandarin, ” arXiv preprint , 2015. [6] Y . Miao, M. Gowayyed, and F . Metze, “Eesen: End-to-end speech recognition using deep rnn models and wfst-based decoding, ” in 2015 IEEE W orkshop on A utomatic Speech Recognition and Un- derstanding (ASR U) . IEEE, 2015, pp. 167–174. [7] A. Krizhevsk y , I. Sutske ver , and G. E. Hinton, “Imagenet classifi- cation with deep con volutional neural networks, ” in Advances in neural information pr ocessing systems , 2012, pp. 1097–1105. [8] I. Sutske ver , O. V inyals, and Q. V . Le, “Sequence to sequence learning with neural networks, ” in Advances in neural information pr ocessing systems , 2014, pp. 3104–3112. [9] D. Bahdanau, K. Cho, and Y . Bengio, “Neural machine trans- lation by jointly learning to align and translate, ” arXiv preprint arXiv:1409.0473 , 2014. [10] Y . W u, M. Schuster, Z. Chen, Q. V . Le, M. Norouzi, W . Machere y , M. Krikun, Y . Cao, Q. Gao, K. Macherey , J. Klingner, A. Shah, M. Johnson, X. Liu, L. Kaiser , S. Gouws, Y . Kato, T . Kudo, H. Kazawa, K. Stev ens, G. Kurian, N. Patil, W . W ang, C. Y oung, J. Smith, J. Riesa, A. Rudnick, O. V inyals, G. Corrado, M. Hughes, and J. Dean, “Google’ s neural machine translation system: Bridging the gap between human and machine translation, ” CoRR , vol. abs/1609.08144, 2016. [Online]. A vailable: http://arxiv .org/abs/1609.08144 [11] J. K. Chorowski, D. Bahdanau, D. Serdyuk, K. Cho, and Y . Bengio, “ Attention-based models for speech recognition, ” in Advances in Neural Information Pr ocessing Systems , 2015, pp. 577–585. [12] D. Bahdanau, J. Chorowski, D. Serdyuk, P . Brakel, and Y . Bengio, “End-to-end attention-based large vocab ulary speech recognition, ” in 2016 IEEE International Confer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP) , March 2016, pp. 4945–4949. [13] W . Chan, N. Jaitly , Q. V . Le, and O. V inyals, “Listen, attend and spell, ” arXiv preprint , 2015. [14] J. S. Chung, A. Senior , O. V inyals, and A. Zisserman, “Lip reading sentences in the wild, ” arXiv preprint , 2016. [15] Ç. Gülçehre, O. Firat, K. Xu, K. Cho, L. Barrault, H. Lin, F . Bougares, H. Schwenk, and Y . Bengio, “On using monolingual corpora in neural machine translation, ” CoRR , vol. abs/1503.03535, 2015. [Online]. A vailable: http://arxiv .org/abs/1503.03535 [16] C. Szegedy , V . V anhoucke, S. Ioffe, J. Shlens, and Z. W ojna, “Re- thinking the inception architecture for computer vision, ” arXiv pr eprint arXiv:1512.00567 , 2015. [17] G. Pereyra, G. T ucker , J. Choro wski, L. Kaiser , and G. Hin- ton, “Regularizing neural networks by penalizing confident output distrib utions, ” in Submitted to ICLR 2017 , 2017, https://openreview.net/forum?id=HkCjNI5ex . [18] D. Pove y , A. Ghoshal, G. Boulianne, L. Burget, O. Glembek, N. Goel, M. Hannemann, P . Motlicek, Y . Qian, P . Schwarz, J. Silovsk y , G. Stemmer , and K. V esely , “The kaldi speech recogni- tion toolkit, ” in IEEE 2011 W orkshop on Automatic Speec h Recog- nition and Understanding . IEEE Signal Processing Society , Dec. 2011, iEEE Catalog No.: CFP11SRW -USB. [19] C. Allauzen, M. Riley , J. Schalkwyk, W . Skut, and M. Mohri, “OpenFst: A general and efficient weighted finite-state transducer library , ” in Pr oceedings of the Ninth International Conference on Implementation and Application of Automata, (CIAA 2007) , ser . Lecture Notes in Computer Science, v ol. 4783. Springer , 2007, pp. 11–23, http://www.openfst.org . [20] M. Abadi, A. Agarwal, P . Barham, E. Bre vdo, Z. Chen, C. Citro, G. S. Corrado, A. Davis, J. Dean, M. Devin et al. , “T ensorflow: Large-scale machine learning on heterogeneous distributed sys- tems, ” arXiv preprint , 2016. [21] D. Kingma and J. Ba, “ Adam: A method for stochastic optimiza- tion, ” arXiv preprint , 2014. [22] Y . Zhang, W . Chan, and N. Jaitly , “V ery deep con volutional networks for end-to-end speech recognition, ” arXiv preprint arXiv:1610.03022 , 2016. [23] A. Grav es and N. Jaitly , “T ow ards End-T o-End Speech Recognition with Recurrent Neural Networks, ” in ICML ’14 , 2014, pp. 1764– 1772. [24] W . Chan, Y . Zhang, Q. Le, and N. Jaitly , “Latent sequence decom- positions, ” arXiv preprint , 2016. [25] L. Xie, J. W ang, Z. W ei, M. W ang, and Q. T ian, “Disturblabel: Reg- ularizing cnn on the loss layer, ” arXiv pr eprint arXiv:1605.00055 , 2016. [26] A. Aghajanyan, “Softtarget regularization: An effecti ve technique to reduce over-fitting in neural networks, ” CoRR , vol. abs/1609.06693, 2016. [Online]. A vailable: http://arxiv .org/abs/ 1609.06693 [27] R. J. W illiams and J. Peng, “Function optimization using connec- tionist reinforcement learning algorithms, ” Connection Science , vol. 3, no. 3, pp. 241–268, 1991. [28] V . Mnih, A. P . Badia, M. Mirza, A. Graves, T . P . Lillicrap, T . Harle y , D. Silver , and K. Kavukcuoglu, “ Asynchronous methods for deep reinforcement learning, ” arXiv preprint , 2016. [29] Y . Luo, C.-C. Chiu, N. Jaitly , and I. Sutskever , “Learning on- line alignments with continuous rewards policy gradient, ” arXiv pr eprint arXiv:1608.01281 , 2016. [30] Y . A. LeCun, L. Bottou, G. B. Orr , and K.-R. Müller , Efficient BackPr op . Berlin, Heidelberg: Springer Berlin Heidelberg, 2012, pp. 9–48. [Online]. A vailable: http: //dx.doi.org/10.1007/978- 3- 642- 35289- 8_3 [31] X. He, L. Deng, and W . Chou, “Discriminativ e learning in se- quential pattern recognition, ” IEEE Signal Pr ocessing Magazine , vol. 25, no. 5, pp. 14–36, 2008. [32] D. Andor , C. Alberti, D. W eiss, A. Se veryn, A. Presta, K. Ganche v , S. Petrov , and M. Collins, “Globally normalized transition-based neural networks, ” CoRR , vol. abs/1603.06042, 2016. [Online]. A vailable: http://arxiv .org/abs/1603.06042 [33] S. W iseman and A. M. Rush, “Sequence-to-sequence learning as beam-search optimization, ” CoRR , vol. abs/1606.02960, 2016. [Online]. A vailable: http://arxiv .org/abs/1606.02960 [34] M. Luong, H. Pham, and C. D. Manning, “Effectiv e approaches to attention-based neural machine translation, ” CoRR , vol. abs/1508.04025, 2015. [Online]. A vailable: http: //arxiv .org/abs/1508.04025 [35] Z. Tu, Z. Lu, Y . Liu, X. Liu, and H. Li, “Modeling coverage for neural machine translation, ” CoRR , vol. abs/1601.04811, 2016. [Online]. A vailable: http://arxiv .org/abs/1601.04811 [36] H. Mi, B. Sankaran, Z. W ang, and A. Ittycheriah, “Cov erage embedding model for neural machine translation, ” CoRR , vol. abs/1605.03148, 2016. [Online]. A vailable: http://arxiv .org/abs/ 1605.03148

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment