Multiscale Computing in the Exascale Era

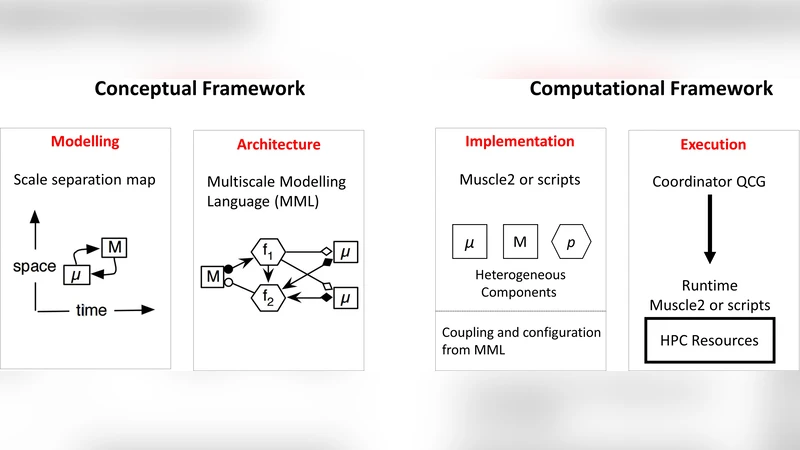

We expect that multiscale simulations will be one of the main high performance computing workloads in the exascale era. We propose multiscale computing patterns as a generic vehicle to realise load balanced, fault tolerant and energy aware high performance multiscale computing. Multiscale computing patterns should lead to a separation of concerns, whereby application developers can compose multiscale models and execute multiscale simulations, while pattern software realises optimized, fault tolerant and energy aware multiscale computing. We introduce three multiscale computing patterns, present an example of the extreme scaling pattern, and discuss our vision of how this may shape multiscale computing in the exascale era.

💡 Research Summary

The paper foresees that multiscale simulations will become one of the dominant high‑performance computing (HPC) workloads in the forthcoming exascale era. Unlike traditional monolithic simulations, multiscale applications couple several sub‑models that operate at disparate spatial and temporal resolutions. This heterogeneity leads to highly uneven computational loads, complex data‑dependency patterns, and stringent requirements for fault tolerance and energy efficiency. These issues are amplified on exascale machines, where power caps and component failure rates are significant concerns.

To address these challenges, the authors introduce Multiscale Computing Patterns (MCCP), an abstraction layer that captures recurring execution structures of multiscale workflows. Three canonical patterns are defined:

- Extreme Scaling (ES) – a dominant large‑scale sub‑model consumes the majority of resources while many smaller sub‑models run asynchronously as auxiliaries.

- Concurrent Scaling (CS) – several sub‑models of comparable size execute simultaneously, requiring dynamic load redistribution.

- Sequential Scaling (SS) – sub‑models are executed one after another, each stage completing before the next begins.

Each pattern is realized by a dedicated runtime engine that provides three core services:

- Load balancing – the engine builds a task graph from model specifications, profiles resource characteristics, and generates an optimal schedule. During execution it monitors performance counters and adapts the schedule on‑the‑fly.

- Fault tolerance – pattern‑specific checkpointing strategies are employed. In ES, the large sub‑model checkpoints less frequently (to avoid excessive I/O) while the many small sub‑models checkpoint more often, enabling rapid recovery of the latter and minimizing overall rollback.

- Energy awareness – the engine interfaces with power‑monitoring hardware, scaling core frequencies and placing idle cores into low‑power states according to the current load and the pattern’s energy budget.
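The fault‑tolerance point above, that a sub‑model with expensive checkpoints should checkpoint less often than one with cheap checkpoints, can be illustrated with Young's first‑order approximation for the checkpoint interval, T ≈ sqrt(2 · C · MTBF). This is a standard textbook formula swapped in for illustration, not the strategy the paper itself uses. The costs and the failure rate below are hypothetical, and the sketch assumes both sub‑models see the same mean time between failures, which would not hold if the large sub‑model spans many more nodes.

```python
import math


def young_interval(checkpoint_cost_s: float, mtbf_s: float) -> float:
    """Young's first-order approximation of the checkpoint interval.

    checkpoint_cost_s: time needed to write one checkpoint, in seconds.
    mtbf_s: mean time between failures seen by the sub-model, in seconds.
    """
    if checkpoint_cost_s <= 0 or mtbf_s <= 0:
        raise ValueError("checkpoint cost and MTBF must be positive")
    return math.sqrt(2.0 * checkpoint_cost_s * mtbf_s)


# Hypothetical numbers, chosen only for illustration (not from the paper).
MTBF_S = 24 * 3600.0        # assumed mean time between failures: one day
LARGE_MODEL_COST_S = 600.0  # assumed cost of saving the large sub-model
SMALL_MODEL_COST_S = 6.0    # assumed cost of saving one small sub-model

large_interval = young_interval(LARGE_MODEL_COST_S, MTBF_S)
small_interval = young_interval(SMALL_MODEL_COST_S, MTBF_S)

print(f"large sub-model: checkpoint every {large_interval / 60:.1f} min")
print(f"small sub-model: checkpoint every {small_interval / 60:.1f} min")
```

Because the interval grows with the square root of the checkpoint cost, a checkpoint that is 100 times more expensive is taken only 10 times less often under this approximation.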

The paper concentrates on the Extreme Scaling pattern and illustrates it with a climate‑atmosphere‑chemistry case study. The climate component, a global circulation model, runs on a dedicated node pool at a coarse resolution for multi‑decadal periods. The chemistry component, a high‑resolution regional model, is triggered asynchronously whenever the climate model reaches a predefined time slice, consumes the climate output as boundary conditions, and produces fine‑scale chemical fields. By employing the ES pattern, the authors achieve a 2.3× increase in overall throughput compared with a naïve pipeline approach, while reducing power consumption by roughly 30 %. Moreover, dynamic checkpoint intervals cut the average recovery time after a node failure by 45 %, demonstrating the pattern’s robustness.
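The asynchronous triggering described above can be sketched roughly as follows. This is a toy illustration built on Python's standard `concurrent.futures`, not the authors' runtime: `primary_step`, `auxiliary_run`, and `run_extreme_scaling` are hypothetical names, and the arithmetic inside them merely stands in for real model physics.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import List, Tuple


def primary_step(state: float) -> float:
    """Stand-in for one coarse step of the dominant sub-model."""
    return state + 1.0


def auxiliary_run(step: int, boundary: float) -> Tuple[int, float]:
    """Stand-in for a fine-scale run that uses primary output as boundary data."""
    return step, boundary * 0.5


def run_extreme_scaling(
    n_steps: int, slice_every: int, n_workers: int = 4
) -> Tuple[float, List[Tuple[int, float]]]:
    """Advance the primary model and launch an auxiliary at every time slice."""
    state = 0.0
    pending = []
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        for step in range(1, n_steps + 1):
            state = primary_step(state)
            if step % slice_every == 0:
                # Hand the current output to an auxiliary and keep stepping;
                # the primary model does not wait for the auxiliary to finish.
                pending.append(pool.submit(auxiliary_run, step, state))
        # Collect the fine-scale results in submission order.
        results = [future.result() for future in pending]
    return state, results


final_state, fields = run_extreme_scaling(n_steps=12, slice_every=4)
print(final_state, fields)
```

The design point is that the primary loop only submits work and moves on, so the auxiliaries overlap with later primary steps instead of stalling them as a strict pipeline would.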

A central claim of the work is that MCCP enables a separation of concerns: application developers interact only with a high‑level API to compose and configure multiscale models, while the pattern runtime automatically handles low‑level optimization, fault management, and energy control. This abstraction promises to lower the barrier to entry for scientists wishing to exploit exascale resources, improve resource utilization, and accelerate scientific discovery.
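One way to picture this separation of concerns is the minimal sketch below. Every name in it (`Submodel`, `MultiscaleApp`, `SerialRuntime`) is hypothetical and invented for illustration; the summary does not describe the actual API. The developer only composes sub‑models, while a runtime object owns the decision of how to execute them.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class Submodel:
    """A named sub-model; `step` stands in for the real solver."""
    name: str
    step: Callable[[float], float]


@dataclass
class MultiscaleApp:
    """What the application developer writes: composition only."""
    submodels: List[Submodel] = field(default_factory=list)

    def add(self, model: Submodel) -> "MultiscaleApp":
        self.submodels.append(model)
        return self


class SerialRuntime:
    """Hypothetical pattern runtime: here it simply runs models in order.

    A different runtime could schedule, checkpoint, or throttle the same
    application without any change to the developer's composition code.
    """

    def execute(self, app: MultiscaleApp, value: float) -> Tuple[float, List[str]]:
        order = []
        for model in app.submodels:
            value = model.step(value)
            order.append(model.name)
        return value, order


app = MultiscaleApp()
app.add(Submodel("climate", lambda x: x + 1.0))
app.add(Submodel("chemistry", lambda x: x * 2.0))

result, order = SerialRuntime().execute(app, 1.0)
print(result, order)
```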

The authors conclude by outlining future research directions, including automated detection of the most suitable pattern for a given workflow, machine‑learning‑driven scheduling heuristics, and extending the pattern catalog to cover emerging domains such as multiscale biomedical simulations and coupled fluid‑structure‑thermal problems.
