A Probabilistic Framework for Deep Learning

We develop a probabilistic framework for deep learning based on the Deep Rendering Mixture Model (DRMM), a new generative probabilistic model that explicitly captures variations in data due to latent task nuisance variables. We demonstrate that max-su…

Authors: Ankit B. Patel, Tan Nguyen, Richard G. Baraniuk

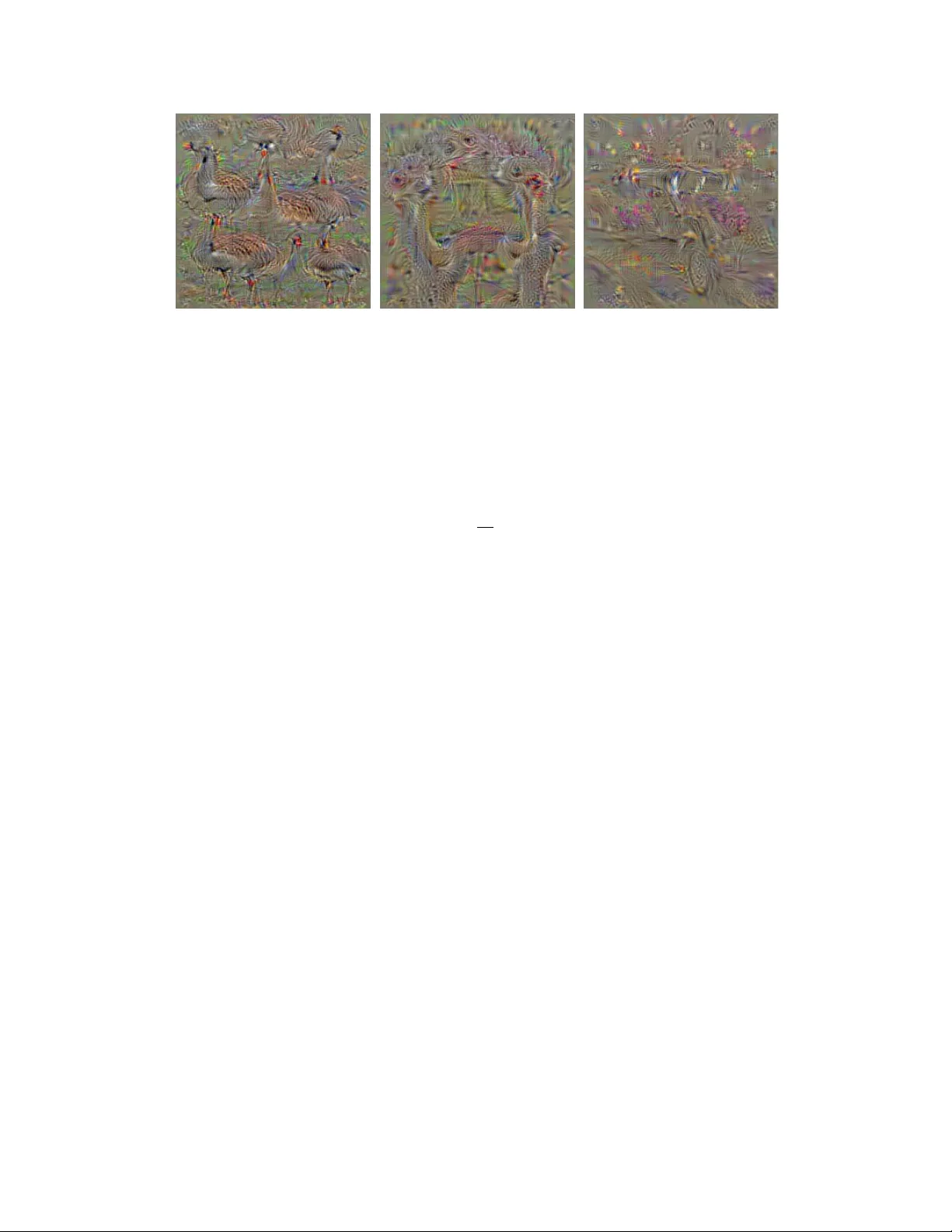

Ankit B. Patel (Baylor College of Medicine, Rice University; ankitp@bcm.edu, abp4@rice.edu)

Tan Nguyen (Rice University; mn15@rice.edu)

Richard G. Baraniuk (Rice University; richb@rice.edu)

Abstract

We develop a probabilistic framework for deep learning based on the Deep Rendering Mixture Model (DRMM), a new generative probabilistic model that explicitly captures variations in data due to latent task nuisance variables. We demonstrate that max-sum inference in the DRMM yields an algorithm that exactly reproduces the operations in deep convolutional neural networks (DCNs), providing a first-principles derivation. Our framework provides new insights into the successes and shortcomings of DCNs as well as a principled route to their improvement. DRMM training via the Expectation-Maximization (EM) algorithm is a powerful alternative to DCN back-propagation, and initial training results are promising. Classification based on the DRMM and other variants outperforms DCNs in supervised digit classification, training 2-3× faster while achieving similar accuracy. Moreover, the DRMM is applicable to semi-supervised and unsupervised learning tasks, achieving results that are state-of-the-art in several categories on the MNIST benchmark and comparable to the state of the art on the CIFAR10 benchmark.

1 Introduction

Humans are adept at a wide array of complicated sensory inference tasks, from recognizing objects in an image to understanding phonemes in a speech signal, despite significant variations such as the position, orientation, and scale of objects and the pronunciation, pitch, and volume of speech. Indeed, the main challenge in many sensory perception tasks in vision, speech, and natural language processing is a high amount of such nuisance variation.
Nuisance variations complicate perception by turning otherwise simple statistical inference problems with a small number of variables (e.g., class label) into much higher-dimensional problems. The key challenge in developing an inference algorithm is then how to factor out all of the nuisance variation in the input. Over the past few decades, a vast literature that approaches this problem from myriad different perspectives has developed, but the most difficult inference problems have remained out of reach.

Recently, a new breed of machine learning algorithms has emerged for high-nuisance inference tasks, achieving super-human performance in many cases. A prime example of such an architecture is the deep convolutional neural network (DCN), which has seen great success in tasks like visual object recognition and localization, speech recognition, and part-of-speech recognition.

The success of deep learning systems is impressive, but a fundamental question remains: Why do they work? Intuitions abound to explain their success. Some explanations focus on properties of feature invariance and selectivity developed over multiple layers, while others credit raw computational power and the amount of available training data. However, beyond these intuitions, a coherent theoretical framework for understanding, analyzing, and synthesizing deep learning architectures has remained elusive.

In this paper, we develop a new theoretical framework that provides insights into both the successes and shortcomings of deep learning systems, as well as a principled route to their design and improvement. Our framework is based on a generative probabilistic model that explicitly captures variation due to latent nuisance variables.
The Rendering Mixture Model (RMM) explicitly models nuisance variation through a rendering function that combines task target variables (e.g., object class in object recognition) with a collection of task nuisance variables (e.g., pose). The Deep Rendering Mixture Model (DRMM) extends the RMM in a hierarchical fashion by rendering via a product of affine nuisance transformations across multiple levels of abstraction. The graphical structures of the RMM and DRMM enable efficient inference via message passing (e.g., using the max-sum/product algorithm) and training via the expectation-maximization (EM) algorithm. A key element of our framework is the relaxation of the RMM/DRMM generative model to a discriminative one in order to optimize the bias-variance tradeoff. Below, we demonstrate that the computations involved in joint MAP inference in the relaxed DRMM coincide exactly with those in a DCN.

The intimate connection between the DRMM and DCNs provides a range of new insights into how and why they work and do not work. While our theory and methods apply to a wide range of inference tasks (for example, classification, estimation, and regression) that feature a number of task-irrelevant nuisance variables (as in, for example, object and speech recognition), for concreteness of exposition we focus below on the classification problem underlying visual object recognition. The proofs of several results appear in the Appendix.

30th Conference on Neural Information Processing Systems (NIPS 2016), Barcelona, Spain.

2 Related Work

Theories of Deep Learning. Our theoretical work shares similar goals with several others such as the i-Theory [1] (one of the early inspirations for this work), Nuisance Management [27], the Scattering Transform [6], and the simple sparse network proposed by Arora et al. [2].

Hierarchical Generative Models.
The DRMM is closely related to several hierarchical models, including the Deep Mixture of Factor Analyzers [30] and the Deep Gaussian Mixture Model [32]. Like the above models, the DRMM attempts to employ parameter sharing, capture the notion of nuisance transformations explicitly, learn selectivity/invariance, and promote sparsity. However, the key features that distinguish the DRMM approach from others are: (i) The DRMM explicitly models nuisance variation across multiple levels of abstraction via a product of affine transformations. This factorized linear structure serves dual purposes: it enables (ii) tractable inference (via the max-sum/product algorithm), and (iii) it serves as a regularizer to prevent overfitting by an exponential reduction in the number of parameters. Critically, (iv) inference is not performed for a single variable of interest but instead for the full global configuration of nuisance variables. This is justified in low-noise settings. And most importantly, (v) we can derive the structure of DCNs precisely, endowing DCN operations such as the convolution, rectified linear unit, and spatial max-pooling with principled probabilistic interpretations.

Independently from our work, Soatto et al. [27] also focus strongly on nuisance management as the key challenge in defining good scene representations. However, their work considers max-pooling and ReLU as approximations to a marginalized likelihood, whereas our work interprets those operations differently, in terms of max-sum inference in a specific probabilistic generative model.

The work on the number of linear regions in DCNs [15] is complementary to our own, in that it sheds light on the complexity of functions that a DCN can compute. Both approaches could be combined to answer questions such as: How many templates are required for accurate discrimination? How many samples are needed for learning? We plan to pursue these questions in future work.
Semi-Supervised Neural Networks. Recent work in neural networks designed for semi-supervised learning (few labeled data, lots of unlabeled data) has seen the resurgence of generative-like approaches, such as Ladder Networks [20], Stacked What-Where Autoencoders (SWWAE) [34], and many others. These network architectures augment the usual task loss with one or more regularization terms, typically including an image reconstruction error, and train jointly. A key difference with our DRMM-based approach is that these networks do not arise from a proper probabilistic density; as such, they must resort to learning the bottom-up recognition and top-down reconstruction weights separately, and they cannot keep track of uncertainty.

3 The Deep Rendering Mixture Model: Capturing Nuisance Variation

Although we focus on the DRMM in this paper, we define and explore several other interesting variants, including the Deep Rendering Factor Model (DRFM) and the Evolutionary DRMM (E-DRMM), both of which are discussed in more detail in [18] and the Appendix. The E-DRMM is particularly important, since its max-sum inference algorithm yields a decision tree of the type employed in a random decision forest classifier [5].

[Figure 1 panels: (A) Rendering Mixture Model and Rendering Factor Model; (B) Deep Rendering Mixture Model; (C) Deep Sparse Path Model.]

Figure 1: Graphical model depiction of (A) the Shallow Rendering Models and (B) the DRMM. All dependence on pixel location $x$ has been suppressed for clarity. (C) The Sparse Sum-over-Paths formulation of the DRMM. A rendering path contributes only if it is active (green arrows).

3.1 The (Shallow) Rendering Mixture Model

The RMM is a generative probabilistic model for images that explicitly models the relationship between images $I$ of the same object $c$ subject to nuisance $g \in \mathcal{G}$, where $\mathcal{G}$ is the set of all nuisances (see Fig. 1A for the graphical model depiction).
$$c \sim \mathrm{Cat}(\{\pi_c\}_{c \in \mathcal{C}}), \quad g \sim \mathrm{Cat}(\{\pi_g\}_{g \in \mathcal{G}}), \quad a \sim \mathrm{Bern}(\{\pi_a\}_{a \in \mathcal{A}}), \quad I = a\,\mu_{cg} + \text{noise}. \tag{1}$$

Here, $\mu_{cg}$ is a template that is a function of the class $c$ and the nuisance $g$. The switching variable $a \in \mathcal{A} = \{\mathrm{ON}, \mathrm{OFF}\}$ determines whether or not to render the template at a particular patch; a sparsity prior on $a$ thus encourages each patch to have a few causes. The noise distribution is from the exponential family, but without loss of generality we illustrate below using Gaussian noise $\mathcal{N}(0, \sigma^2 \mathbf{1})$. We assume that the noise is i.i.d. as a function of pixel location $x$ and that the class and nuisance variables are independently distributed according to categorical distributions. (Independence is merely a convenience for the development; in practice, $g$ can depend on $c$.) Finally, since the world is spatially varying and an image can contain a number of different objects, it is natural to break the image up into a number of patches that are centered on a single pixel $x$. The RMM described in (1) then applies at the patch level, where $c$, $g$, and $a$ depend on pixel/patch location $x$. We will omit the dependence on $x$ when it is clear from context.

Inference in the Shallow RMM Yields One Layer of a DCN. We now connect the RMM with the computations in one layer of a deep convolutional network (DCN). To perform object recognition with the RMM, we must marginalize out the nuisance variables $g$ and $a$. Maximizing the log-posterior over $g \in \mathcal{G}$ and $a \in \mathcal{A}$ and then choosing the most likely class yields the max-sum classifier

$$\hat{c}(I) = \operatorname*{argmax}_{c \in \mathcal{C}} \max_{g \in \mathcal{G}} \max_{a \in \mathcal{A}} \; \ln p(I \mid c, g, a) + \ln p(c, g, a) \tag{2}$$

that computes the most likely global configuration of target and nuisance variables for the image.
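As an illustration of (2), the following is a minimal brute-force sketch for a single patch, assuming Gaussian noise with variance `sigma2` and independent priors over $c$, $g$, and $a$. The function name `rmm_max_sum_classify`, the array layout of `mu`, and the toy one-hot templates are assumptions made for this example and do not come from the paper.

```python
import numpy as np

def rmm_max_sum_classify(I, mu, log_pi_c, log_pi_g, log_pi_a, sigma2=1.0):
    """Brute-force evaluation of the max-sum classifier in Eq. (2).

    I        : (D,) image patch.
    mu       : (C, G, D) array, one template mu_{cg} per (class, nuisance).
    log_pi_a : (2,) log prior of the switch, index 0 = OFF, index 1 = ON.
    Returns the best (c, g, a) configuration and its log-joint score.
    """
    C, G, D = mu.shape
    best_score, best_config = -np.inf, None
    for c in range(C):
        for g in range(G):
            for a in (0, 1):
                resid = I - a * mu[c, g]  # I = a * mu_cg + noise
                log_lik = (-0.5 * (resid @ resid) / sigma2
                           - 0.5 * D * np.log(2.0 * np.pi * sigma2))
                score = log_lik + log_pi_c[c] + log_pi_g[g] + log_pi_a[a]
                if score > best_score:
                    best_score, best_config = score, (c, g, a)
    return best_config, best_score

# Toy example (hypothetical data): six one-hot templates, uniform priors.
mu = np.eye(6).reshape(3, 2, 6)        # mu[c, g] is the unit vector e_{2c+g}
I = mu[2, 1].copy()                    # noiseless rendering of class 2, nuisance 1
uniform = lambda n: np.log(np.full(n, 1.0 / n))
config, score = rmm_max_sum_classify(I, mu, uniform(3), uniform(2), uniform(2))
print(config)                          # (2, 1, 1)
```

The triple loop costs $|\mathcal{C}|\,|\mathcal{G}|\,|\mathcal{A}|$ likelihood evaluations per patch, which is only practical for small nuisance sets.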
Assuming that Gaussian noise is added to the template, the image is normalized so that $\|I\|_2 = 1$, and $c, g$ are uniformly distributed, (2) becomes

$$\hat{c}(I) \equiv \operatorname*{argmax}_{c \in \mathcal{C}} \max_{g \in \mathcal{G}} \max_{a \in \mathcal{A}} \; a\left(\langle w_{cg} | I \rangle + b_{cg}\right) + b_a \tag{3}$$

$$= \operatorname*{argmax}_{c \in \mathcal{C}} \max_{g \in \mathcal{G}} \; \mathrm{ReLU}\left(\langle w_{cg} | I \rangle + b_{cg}\right) + b_0 \tag{4}$$

where $\mathrm{ReLU}(u) \equiv (u)_+ = \max\{u, 0\}$ is the soft-thresholding operation performed by the rectified linear units in modern DCNs. Here we have reparameterized the RMM model from the moment parameters $\theta \equiv \{\sigma^2, \mu_{cg}, \pi_a\}$ to the natural parameters $\eta(\theta) \equiv \{ w_{cg} \equiv \frac{1}{\sigma^2}\mu_{cg},\; b_{cg} \equiv -\frac{1}{2\sigma^2}\|\mu_{cg}\|_2^2,\; b_a \equiv \ln p(a) = \ln \pi_a,\; b_0 \equiv \ln\frac{p(a=1)}{p(a=0)} \}$. The relationships $\eta(\theta)$ are referred to as the generative parameter constraints.

We now demonstrate that the sequence of operations in the max-sum classifier in (3) coincides exactly with the operations involved in one layer of a DCN: image normalization, linear template matching, thresholding, and max pooling. First, the image is normalized (by assumption). Second, the image is filtered with a set of noise-scaled rendered templates $w_{cg}$. If we assume translational invariance in the RMM, then the rendered templates $w_{cg}$ yield a convolutional layer in a DCN [11] (see Appendix Lemma A.2). Third, the resulting activations (log-probabilities of the hypotheses) are passed through a pooling layer; if $g$ is a translational nuisance, then taking the maximum over $g$ corresponds to max pooling in a DCN. Fourth, since the switching variables are latent (unobserved), we max-marginalize over them during classification. This leads to the ReLU operation (see Appendix Proposition A.3).

3.2 The Deep Rendering Mixture Model: Capturing Levels of Abstraction

Marginalizing over the nuisance $g \in \mathcal{G}$ in the RMM is intractable for modern datasets, since $\mathcal{G}$ will contain all configurations of the high-dimensional nuisance variables $g$.
In response, we extend the RMM into a hierarchical Deep Rendering Mixture Model (DRMM) by factorizing $g$ into a number of different nuisance variables $g^{(1)}, g^{(2)}, \ldots, g^{(L)}$ at different levels of abstraction. The DRMM image generation process starts at the highest level of abstraction ($\ell = L$), with the random choice of the object class $c^{(L)}$ and overall nuisance $g^{(L)}$. It is then followed by random choices of the lower-level details $g^{(\ell)}$ (we absorb the switching variable $a$ into $g$ for brevity), progressively rendering more concrete information level-by-level ($\ell \to \ell - 1$), until the process finally culminates in a fully rendered $D$-dimensional image $I$ ($\ell = 0$). Generation in the DRMM takes the form:

$$c^{(L)} \sim \mathrm{Cat}(\{\pi_{c^{(L)}}\}), \quad g^{(\ell)} \sim \mathrm{Cat}(\{\pi_{g^{(\ell)}}\}) \quad \forall \ell \in [L] \tag{5}$$

$$\mu_{c^{(L)} g} \equiv \Lambda_g \, \mu_{c^{(L)}} \equiv \Lambda^{(1)}_{g^{(1)}} \Lambda^{(2)}_{g^{(2)}} \cdots \Lambda^{(L-1)}_{g^{(L-1)}} \Lambda^{(L)}_{g^{(L)}} \, \mu_{c^{(L)}} \tag{6}$$

$$I \sim \mathcal{N}\left(\mu_{c^{(L)} g}, \; \Psi \equiv \sigma^2 \mathbf{1}_D\right), \tag{7}$$

where the latent variables, parameters, and helper variables are defined in full detail in Appendix B.

The DRMM is a deep Gaussian Mixture Model (GMM) with special constraints on the latent variables. Here, $c^{(L)} \in \mathcal{C}^L$ and $g^{(\ell)} \in \mathcal{G}^\ell$, where $\mathcal{C}^L$ is the set of target-relevant nuisance variables, and $\mathcal{G}^\ell$ is the set of all target-irrelevant nuisance variables at level $\ell$. The rendering path is defined as the sequence $(c^{(L)}, g^{(L)}, \ldots, g^{(\ell)}, \ldots, g^{(1)})$ from the root (overall class) down to the individual pixels at $\ell = 0$. $\mu_{c^{(L)} g}$ is the template used to render the image, and $\Lambda_g \equiv \prod_\ell \Lambda_{g^{(\ell)}}$ represents the sequence of local nuisance transformations that partially render finer-scale details as we move from abstract to concrete. Note that each $\Lambda^{(\ell)}_{g^{(\ell)}}$ is an affine transformation with a bias term $\alpha^{(\ell)}_{g^{(\ell)}}$ that we have suppressed for clarity. Fig. 1B illustrates the corresponding graphical model.
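The generative process in (5)-(7) can be sketched in a few lines of NumPy. This is a toy illustration rather than the authors' implementation: it assumes dense matrices, drops the bias terms $\alpha^{(\ell)}_{g^{(\ell)}}$, and uses hypothetical names (`sample_drmm`, `mu_top`, `Lambdas`, `pis_g`) and layer sizes chosen only for the example.

```python
import numpy as np

def sample_drmm(rng, mu_top, Lambdas, pi_c, pis_g, sigma=0.1):
    """Draw one image from a toy DRMM following Eqs. (5)-(7), biases omitted.

    mu_top  : (C, D_L) array of class templates mu_{c^(L)}.
    Lambdas : list of length L; Lambdas[l-1] has shape (|G_l|, D_{l-1}, D_l),
              one linear map per nuisance value at level l.
    pis_g   : list of length L with the categorical prior over g^(l).
    Returns the image I, the class c, and the nuisances [g^(1), ..., g^(L)].
    """
    c = rng.choice(len(pi_c), p=pi_c)             # Eq. (5): sample the class
    z = mu_top[c]
    gs = []
    # Render from abstract (l = L) down to concrete (l = 1), as in Eq. (6).
    for Lam, pi_g in zip(reversed(Lambdas), reversed(pis_g)):
        g = rng.choice(len(pi_g), p=pi_g)         # Eq. (5): sample g^(l)
        z = Lam[g] @ z
        gs.append(g)
    gs.reverse()                                   # gs[l-1] now holds g^(l)
    I = z + sigma * rng.standard_normal(z.shape)   # Eq. (7): isotropic noise
    return I, c, gs

# Toy configuration (hypothetical sizes): L = 2, D_2 = 3, D_1 = 5, D_0 = 8.
rng = np.random.default_rng(0)
mu_top = rng.standard_normal((4, 3))               # 4 classes
Lambdas = [rng.standard_normal((3, 8, 5)),         # level 1: |G_1| = 3
           rng.standard_normal((2, 5, 3))]         # level 2: |G_2| = 2
pi_c = np.full(4, 0.25)
pis_g = [np.full(3, 1.0 / 3.0), np.full(2, 0.5)]
I, c, gs = sample_drmm(rng, mu_top, Lambdas, pi_c, pis_g)
print(I.shape, len(gs))
```

With `sigma=0.0` the sample equals the rendered template $\Lambda^{(1)}_{g^{(1)}} \Lambda^{(2)}_{g^{(2)}} \mu_{c^{(L)}}$ exactly, which is a convenient sanity check on the ordering of the product in (6).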
As before, we have suppressed the dependence of $g^{(\ell)}$ on the pixel location $x^{(\ell)}$ at level $\ell$ of the hierarchy.

Sum-Over-Paths Formulation of the DRMM. We can rewrite the DRMM generation process by expanding out the matrix multiplications into scalar products. This yields an interesting new perspective on the DRMM, as each pixel intensity $I_x = \sum_p \lambda_p^{(L)} a_p^{(L)} \cdots \lambda_p^{(1)} a_p^{(1)}$ is the sum over all active paths to that pixel, of the product of weights along that path. A rendering path $p$ is active iff every switch on the path is active, i.e., $\prod_\ell a_p^{(\ell)} = 1$. While exponentially many possible rendering paths exist, only a very small fraction, controlled by the sparsity of $a$, are active. Fig. 1C depicts the sum-over-paths formulation graphically.

Recursive and Nonnegative Forms. We can rewrite the DRMM into a recursive form as $z^{(\ell)} = \Lambda^{(\ell+1)}_{g^{(\ell+1)}} z^{(\ell+1)}$, where $z^{(L)} \equiv \mu_{c^{(L)}}$ and $z^{(0)} \equiv I$. We refer to the helper latent variables $z^{(\ell)}$ as intermediate rendered templates. We also define the Nonnegative DRMM (NN-DRMM) as a DRMM with an extra nonnegativity constraint on the intermediate rendered templates, $z^{(\ell)} \geq 0 \;\; \forall \ell \in [L]$. The latter is enforced in training via the use of a ReLU operation in the top-down reconstruction phase of inference. Throughout the rest of the paper, we will focus on the NN-DRMM, leaving the unconstrained DRMM for future work. For brevity, we will drop the NN prefix.

Factor Model. We also define and explore a variant of the DRMM in which the top-level latent variable is Gaussian, $z^{(L+1)} \sim \mathcal{N}(0, \mathbf{1}_d) \in \mathbb{R}^d$, and the recursive generation process is otherwise identical to the DRMM: $z^{(\ell)} = \Lambda^{(\ell+1)}_{g^{(\ell+1)}} z^{(\ell+1)}$, where $g^{(L+1)} \equiv c^{(L)}$. We call this the Deep Rendering Factor Model (DRFM). The DRFM is closely related to the Spike-and-Slab Sparse Coding model [25].
Below we explore some training results, but we leave most of the exploration for future work (see Fig. 3 in Appendix C for the architecture of the RFM, the shallow version of the DRFM).

Number of Free Parameters. Compared to the shallow RMM, which has $D \, |\mathcal{C}^L| \prod_\ell |\mathcal{G}^\ell|$ parameters, the DRMM has only $\sum_\ell |\mathcal{G}^{\ell+1}| D_\ell D_{\ell+1}$ parameters, an exponential reduction in the number of free parameters. (Here $\mathcal{G}^{L+1} \equiv \mathcal{C}^L$ and $D_\ell$ is the number of units in the $\ell$-th layer, with $D_0 \equiv D$.) This enables efficient inference, learning, and better generalization. Note that we have assumed dense (fully connected) $\Lambda_g$'s here; if we impose more structure (e.g., translation invariance), the number of parameters will be further reduced.

Bottom-Up Inference. As in the shallow RMM, given an input image $I$ the DRMM classifier infers the most likely global configuration $\{c^{(L)}, g^{(\ell)}\}$, $\ell = 0, 1, \ldots, L$ by executing the max-sum/product message passing algorithm in two stages: (i) bottom-up (from fine-to-coarse) to infer the overall class label $\hat{c}^{(L)}$ and (ii) top-down (from coarse-to-fine) to infer the latent variables $\hat{g}^{(\ell)}$ at all intermediate levels $\ell$. First, we will focus on the fine-to-coarse pass since it leads directly to DCNs.

Using (3), the fine-to-coarse NN-DRMM inference algorithm for inferring the most likely category $\hat{c}^{(L)}$ is given by

$$\begin{aligned}
\operatorname*{argmax}_{c^{(L)} \in \mathcal{C}} \max_{g \in \mathcal{G}} \; \mu_{c^{(L)} g}^T I
&= \operatorname*{argmax}_{c^{(L)} \in \mathcal{C}} \max_{g \in \mathcal{G}} \; \mu_{c^{(L)}}^T \prod_{\ell = L}^{1} \Lambda_{g^{(\ell)}}^T I \\
&= \operatorname*{argmax}_{c^{(L)} \in \mathcal{C}} \; \mu_{c^{(L)}}^T \max_{g^{(L)} \in \mathcal{G}^L} \Lambda_{g^{(L)}}^T \cdots \underbrace{\max_{g^{(1)} \in \mathcal{G}^1} \Lambda_{g^{(1)}}^T I}_{\equiv I^{(1)}} \\
&= \cdots \equiv \operatorname*{argmax}_{c^{(L)} \in \mathcal{C}} \; \mu_{c^{(L)}}^T I^{(L)}.
\end{aligned} \tag{8}$$

Here, we have assumed the bias terms $\alpha_{g^{(\ell)}} = 0$. In the second line, we used the max-product algorithm (distributivity of max over products, i.e., for $a > 0$, $\max\{ab, ac\} = a \max\{b, c\}$). See Appendix B for full details.
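A literal reading of the last line of (8) can be sketched as a layer-by-layer loop. The sketch below rests on several assumptions that are not spelled out in this section: the $\Lambda_g$ are dense, biases are zero, and the max over $g^{(\ell)}$ is taken elementwise over output units (the paper suppresses the per-location dependence of $g$; see its Appendix B for the exact conditions). The names `bottom_up`, `Lambdas`, and `mu_top` are hypothetical.

```python
import numpy as np

def bottom_up(I, Lambdas, mu_top):
    """Fine-to-coarse pass following the last line of Eq. (8), zero biases.

    Lambdas[l-1] has shape (|G_l|, D_{l-1}, D_l); mu_top has shape (C, D_L).
    Returns the predicted class, the feature maps [I^(1), ..., I^(L)], and
    the winning nuisance index for every output unit at every level.
    """
    feats, g_hat = [], []
    x = I
    for Lam in Lambdas:                          # levels l = 1, ..., L
        acts = np.einsum('gij,i->gj', Lam, x)    # Lambda_g^T x for every g
        g_hat.append(acts.argmax(axis=0))        # winning g per output unit
        x = acts.max(axis=0)                     # elementwise max over g
        feats.append(x)
    scores = mu_top @ x                          # mu_c^T I^(L) for every c
    return int(scores.argmax()), feats, g_hat

# Scalar identity used in the derivation: for a > 0, max{ab, ac} = a max{b, c}.
a, b, c = 2.0, -1.0, 3.0
assert max(a * b, a * c) == a * max(b, c)

# Toy example (hypothetical): one level, two nuisances (identity and swap).
Lam1 = np.array([[[1.0, 0.0], [0.0, 1.0]],
                 [[0.0, 1.0], [1.0, 0.0]]])
mu_top = np.array([[1.0, 0.0], [0.0, 2.0]])
c_hat, feats, g_hat = bottom_up(np.array([1.0, 3.0]), [Lam1], mu_top)
print(c_hat, feats[0], g_hat[0])                 # 1 [3. 3.] [1 0]
```

Storing the arg-max indices `g_hat` alongside the feature maps is a design choice for this sketch: the top-down pass needs the inferred $\hat{g}^{(\ell)}$, so it is convenient to record them during the bottom-up sweep.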
The factorization in (8) can be written recursively:

$$I^{(\ell+1)} \equiv \max_{g^{(\ell+1)} \in \mathcal{G}^{\ell+1}} \underbrace{\left(\Lambda_{g^{(\ell+1)}}\right)^T}_{\equiv W^{(\ell+1)}} I^{(\ell)} = \mathrm{MaxPool}\left(\mathrm{ReLU}\left(\mathrm{Conv}\left(I^{(\ell)}\right)\right)\right), \tag{9}$$

where $I^{(\ell)}$ are the output feature maps of layer $\ell$, $I^{(0)} \equiv I$, and $W^{(\ell)}$ are the filters/weights for layer $\ell$. Comparing to (3), we see that the $\ell$-th iteration of (8) and (9) corresponds to feedforward propagation in the $\ell$-th layer of a DCN. Thus a DCN's operation has a probabilistic interpretation as fine-to-coarse inference of the most probable configuration in the DRMM.

Top-Down Inference. A unique contribution of our generative model-based approach is that we have a principled derivation of a top-down inference algorithm for the NN-DRMM (Appendix B). The resulting algorithm amounts to a simple top-down reconstruction term $\hat{I}_n = \Lambda_{\hat{g}_n} \mu_{\hat{c}_n^{(L)}}$.

Discriminative Relaxations: From Generative to Discriminative Classifiers. We have constructed a correspondence between the DRMM and DCNs, but the mapping is not yet complete. In particular, recall the generative constraints on the weights and biases. DCNs do not have such constraints; their weights and biases are free parameters. As a result, when faced with training data that violates the DRMM's underlying assumptions, the DCN will have more freedom to compensate. In order to complete our mapping from the DRMM to DCNs, we relax these parameter constraints, allowing the weights and biases to be free and independent parameters. We refer to this process as a discriminative relaxation of a generative classifier ([16, 4]; see Appendix D for details).

3.3 Learning the Deep Rendering Model via the Expectation-Maximization (EM) Algorithm

We describe how to learn the DRMM parameters from training data via the hard EM algorithm in Algorithm 1. The DRMM E-step consists of bottom-up and top-down (reconstruction) E-steps at each layer $\ell$ in the model.
The $\gamma_{ncg} \equiv p(c, g \mid I_n; \theta)$ are the responsibilities, where for brevity we have absorbed $a$ into $g$. The DRMM M-step consists of M-steps for each layer $\ell$ in the model. The per-layer M-step in turn consists of a responsibility-weighted regression, where $\mathrm{GLS}(y_n \sim x_n)$ denotes the solution to a generalized least squares regression problem that predicts targets $y_n$ from predictors $x_n$ and is closely related to the SVD. The Iverson bracket is defined as $[\![ b ]\!] \equiv 1$ if expression $b$ is true and $0$ otherwise.

Algorithm 1: Hard EM and EG Algorithms for the DRMM

E-step: $\hat{c}_n, \hat{g}_n = \operatorname*{argmax}_{c, g} \gamma_{ncg}$

M-step: $\hat{\Lambda}_{g^{(\ell)}} = \mathrm{GLS}\left(I_n^{(\ell-1)} \sim \hat{z}_n^{(\ell)} \mid g^{(\ell)} = \hat{g}_n^{(\ell)}\right) \;\; \forall g^{(\ell)}$

G-step: $\Delta \hat{\Lambda}_{g^{(\ell)}} \propto \nabla_{\Lambda_{g^{(\ell)}}} \ell_{\mathrm{DRMM}}(\theta)$

There are several interesting and useful features of the EM algorithm. First, we note that it is a derivative-free alternative to the back propagation algorithm for training that is both intuitive and potentially much faster (provided a good implementation for the GLS problem). Second, it is easily parallelized over layers, since the M-step updates each layer separately (model parallelism). Moreover, it can be extended to a batch version so that at each iteration the model is simultaneously updated using separate subsets of the data (data parallelism). This will enable training to be distributed easily across multiple machines. In this vein, our EM algorithm shares several features with the ADMM-based Bregman iteration algorithm in [31]. However, the motivation there is from an optimization perspective and so the resulting training algorithm is not derived from a proper probabilistic density. Third, it is far more interpretable via its connections to (deep) sparse coding and to the hard EM algorithm for GMMs. The sum-over-paths formulation makes it particularly clear that the mixture components are paths (from root to pixels) in the DRMM.

G-step.
For the training results in this paper, we use the Generalized EM algorithm wherein we replace the M-step with a gradient descent based G-step (see Algorithm 1). This is useful for comparison with backpropagation-based training and for ease of implementation. But before we use the G-step, we would like to make a few remarks about the proper M-step of the algorithm, saving the implementation for future work.

Flexibility and Extensibility. Since we can choose different priors/types for the nuisances $g$, the larger DRMM family could be useful for modeling a wider range of inputs, including scenes, speech, and text. The EM algorithm can then be used to train the whole system end-to-end on different sources/modalities of labeled and unlabeled data. Moreover, the capability to sample from the model allows us to probe what is captured by the DRMM, providing us with principled ways to improve the model. And finally, in order to properly account for noise/uncertainty, it is possible in principle to extend this algorithm into a soft EM algorithm. We leave these interesting extensions for future work.

3.4 New Insights into Deep Convnets

DCNs are Message Passing Networks. The DRMM inference algorithm is equivalent to performing max-sum-product message passing of the DRMM. Note that by "max-sum-product" we mean a novel combination of max-sum and max-product as described in more detail in the proofs in the Appendix. The factor graph encodes the same information as the generative model but organizes it in a manner that simplifies the definition and execution of inference algorithms [10]. Such inference algorithms are called message passing algorithms, because they work by passing real-valued functions called messages along the edges between nodes. In the DRMM, the messages sent from finer to coarser levels are in fact the feature maps $I^{(\ell)}$.
The factor graph formulation provides a powerful interpretation: the convolution, max-pooling, and ReLU operations in a DCN correspond to max-sum/product inference in a DRMM. Thus, we see that architectures and layer types commonly used in today's DCNs can be derived from precise probabilistic assumptions that entirely determine their structure. The DRMM therefore unifies two perspectives: neural network and probabilistic inference (see Table 2 in the Appendix for details).

Shortcomings of DCNs. DCNs perform poorly in categorizing transparent objects [23]. This might be explained by the fact that transparent objects generate pixels that have multiple sources, conflicting with the DRMM sparsity prior on $a$, which encourages few sources. DCNs also fail to classify slender and man-made objects [23]. This is because of the locality imposed by the locally-connected/convolutional layers, or equivalently, the small size of the template $\mu_{c^{(L)} g}$ in the DRMM. As a result, DCNs fail to model long-range correlations.

Class Appearance Models and Activity Maximization. The DRMM enables us to understand how trained DCNs distill and store knowledge from past experiences in their parameters. Specifically, the DRMM generates rendered templates $\mu_{c^{(L)} g}$ via a mixture of products of affine transformations, thus implying that class appearance models in DCNs are stored in a similar factorized-mixture form over multiple levels of abstraction. As a result, it is the product of all the filters/weights over all layers that yields meaningful images of objects (Eq. 7). We can also shed new light on another approach to understanding DCN memories that proceeds by searching for input images that maximize the activity of a particular class unit (say, class of cats) [26], a technique we call activity maximization. Results from activity maximization on a high-performance DCN trained on 15 million images are shown in Fig. 1 of [26].
The resulting images reveal much about how DCNs store memories. Using the DRMM, the solution $I^*_{c^{(L)}}$ of the activity maximization for class $c^{(L)}$ can be derived as the sum of individual activity-maximizing patches $I^*_{P_i}$, each of which is a function of the learned DRMM parameters (see Appendix E):

$$I^*_{c^{(L)}} \equiv \sum_{P_i \in \mathcal{P}} I^*_{P_i}\left(c^{(L)}, g^*_{P_i}\right) \propto \sum_{P_i \in \mathcal{P}} \mu\left(c^{(L)}, g^*_{P_i}\right). \tag{10}$$

This implies that $I^*_{c^{(L)}}$ contains multiple appearances of the same object but in various poses. Each activity-maximizing patch has its own pose $g^*_{P_i}$, consistent with Fig. 1 of [26] and our own extensive experiments with AlexNet, VGGNet, and GoogLeNet (data not shown). Such images provide strong confirmational evidence that the underlying model is a mixture over nuisance parameters, as predicted by the DRMM.

Unsupervised Learning of Latent Task Nuisances. A key goal of representation learning is to disentangle the factors of variation that contribute to an image's appearance. Given our formulation of the DRMM, it is clear that DCNs are discriminative classifiers that capture these factors of variation with latent nuisance variables $g$. As such, the theory presented here makes a clear prediction that for a DCN, supervised learning of task targets will lead to unsupervised learning of latent task nuisance variables. From the perspective of manifold learning, this means that the architecture of DCNs is designed to learn and disentangle the intrinsic dimensions of the data manifolds.

In order to test this prediction, we trained a DCN to classify synthetically rendered images of naturalistic objects, such as cars and cats, with variation in factors such as location, pose, and lighting. After training, we probed the layers of the trained DCN to quantify how much linearly decodable information exists about the task target $c^{(L)}$ and latent nuisance variables $g$. Fig.
2 (Left) shows that the trained DCN possesses significant information about latent factors of variation and, furthermore, the more nuisance variables, the more layers are required to disentangle the factors. This is strong evidence that depth is necessary and that the amount of depth required increases with the complexity of the class models and the nuisance variations.

4 Experimental Results

We evaluate the DRMM and DRFM's performance on the MNIST dataset, a standard digit classification benchmark with a training set of 60,000 28 × 28 labeled images and a test set of 10,000 labeled images. We also evaluate the DRMM's performance on CIFAR10, a dataset of natural objects that includes a training set of 50,000 32 × 32 labeled images and a test set of 10,000 labeled images. In all experiments, we use a full E-step that has a bottom-up phase and a principled top-down reconstruction phase. In order to approximate the class posterior in the DRMM, we include a Kullback-Leibler divergence term between the inferred posterior $p(c \mid I)$ and the true prior $p(c)$ as a regularizer [9]. We also replace the M-step in the EM algorithm of Algorithm 1 by a G-step where we update the model parameters via gradient descent. This variant of EM is known as the Generalized EM algorithm [3], and here we refer to it as EG. All DRMM experiments were done with the NN-DRMM. Configurations of our models and the corresponding DCNs are provided in Appendix I.

Supervised Training. Supervised training results are shown in Table 3 in the Appendix. Shallow RFM: The 1-layer RFM (RFM sup) yields similar performance to a Convnet of the same configuration (1.21% vs. 1.30% test error). Also, as predicted by the theory of generative vs. discriminative classifiers, EG training converges 2-3× faster than a DCN (18 vs. 40 epochs to reach 1.5% test error, Fig. 2, Middle).
Deep RFM: Training with an initial implementation of the 2-layer DRFM EG algorithm converges 2-3× faster than a DCN of the same configuration, while achieving a similar asymptotic test error (Fig. 2, Right). Also, for completeness, we compare supervised training for a 5-layer DRMM with a corresponding DCN, and they show comparable accuracy (0.89% vs. 0.81%, Table 3).

[Figure 2 panels: (Left) accuracy rate vs. layer; (Middle) test error vs. epoch, with curves Pretraining + Finetune, End-to-End, Supervised, Semisupervised, and 1-layer Convnet; (Right) test error vs. epoch, with curves Supervised and 2-layer Convnet.]

Figure 2: Information about latent nuisance variables at each layer (Left), training results from EG for RFM (Middle) and DRFM (Right) on MNIST, as compared to DCNs of the same configuration.

Unsupervised Training. We train the RFM and the 5-layer DRMM unsupervised with $N_U$ images, followed by an end-to-end re-training of the whole model (unsup-pretr) using $N_L$ labeled images. The results and comparison to the SWWAE model are shown in Table 1. The DRMM model outperforms the SWWAE model in both scenarios. (Filters and reconstructed images from the RFM are available in the Appendix 4.)

Table 1: Comparison of test error rates (%) between best DRMM variants and other best published results on the MNIST dataset for the semi-supervised setting (taken from [34]) with $N_U = 60\mathrm{K}$ unlabeled images, of which $N_L \in \{100, 600, 1\mathrm{K}, 3\mathrm{K}\}$ are labeled.

| Model | $N_L = 100$ | $N_L = 600$ | $N_L = 1\mathrm{K}$ | $N_L = 3\mathrm{K}$ |
|---|---|---|---|---|
| Convnet [11] | 22.98 | 7.86 | 6.45 | 3.35 |
| MTC [21] | 12.03 | 5.13 | 3.64 | 2.57 |
| PL-DAE [12] | 10.49 | 5.03 | 3.46 | 2.69 |
| WTA-AE [14] | - | 2.37 | 1.92 | - |
| SWWAE dropout [34] | 8.71 ± 0.34 | 3.31 ± 0.40 | 2.83 ± 0.10 | 2.10 ± 0.22 |
| M1+TSVM [8] | 11.82 ± 0.25 | 5.72 | 4.24 | 3.49 |
| M1+M2 [8] | 3.33 ± 0.14 | 2.59 ± 0.05 | 2.40 ± 0.02 | 2.18 ± 0.04 |
| Skip Deep Generative Model [13] | 1.32 | - | - | - |
| LadderNetwork [20] | 1.06 ± 0.37 | - | 0.84 ± 0.08 | - |
| Auxiliary Deep Generative Model [13] | 0.96 | - | - | - |
| catGAN [28] | 1.39 ± 0.28 | - | - | - |
| ImprovedGAN [24] | 0.93 ± 0.065 | - | - | - |
| RFM | 14.47 | 5.61 | 4.67 | 2.96 |
| DRMM 2-layer semi-sup | 11.81 | 3.73 | 2.88 | 1.72 |
| DRMM 5-layer semi-sup | 3.50 | 1.56 | 1.67 | 0.91 |
| DRMM 5-layer semi-sup NN+KL | 0.57 | - | - | - |
| SWWAE unsup-pretr [34] | - | 9.80 | 6.135 | 4.41 |
| RFM unsup-pretr | 16.2 | 5.65 | 4.64 | 2.95 |
| DRMM 5-layer unsup-pretr | 12.03 | 3.61 | 2.73 | 1.68 |

Semi-Supervised Training. For semi-supervised training, we use a randomly chosen subset of $N_L$ = 100, 600, 1K, and 3K labeled images and $N_U = 60\mathrm{K}$ unlabeled images from the training and validation set. Results are shown in Table 1 for an RFM, a 2-layer DRMM, and a 5-layer DRMM with comparisons to related work. The DRMMs perform comparably to state-of-the-art models. Specifically, the 5-layer DRMM yields the best results when $N_L = 3\mathrm{K}$ and $N_L = 600$, and the second-best result when $N_L = 1\mathrm{K}$. We also show the training results of a 9-layer DRMM on CIFAR10 in Table 4 in Appendix H. The DRMM yields results on CIFAR10 comparable to the best semi-supervised methods. For more results and comparisons to other related work, see Appendix H.

5 Conclusions

Understanding successful deep vision architectures is important for improving performance and solving harder tasks. In this paper, we have introduced a new family of hierarchical generative models, whose inference algorithms for two different models reproduce deep convnets and decision trees, respectively. Our initial implementation of the DRMM EG algorithm outperforms DCN back-propagation in both supervised and unsupervised classification tasks and achieves comparable/state-of-the-art performance on several semi-supervised classification tasks, with no architectural hyperparameter tuning [17, 19].

Acknowledgments. Thanks to Xaq Pitkow and Ben Poole for helpful discussions and feedback.
ABP and RGB were supported by IARPA via DoI/IBC contract D16PC00003. RGB was also supported by NSF CCF-1527501, AFOSR FA9550-14-1-0088, ARO W911NF-15-1-0316, and ONR N00014-12-1-0579. TN was supported by an NSF Graduate Research Fellowship and NSF IGERT Training Grant (DGE-1250104).

References

[1] F. Anselmi, J. Z. Leibo, L. Rosasco, J. Mutch, A. Tacchetti, and T. Poggio. Magic materials: a theory of deep hierarchical architectures for learning sensory representations. MIT CBCL Technical Report, 2013.
[2] S. Arora, A. Bhaskara, R. Ge, and T. Ma. Provable bounds for learning some deep representations. arXiv preprint arXiv:1310.6343, 2013.
[3] C. M. Bishop. Pattern Recognition and Machine Learning, volume 4. Springer New York, 2006.
[4] C. M. Bishop, J. Lasserre, et al. Generative or discriminative? getting the best of both worlds. Bayesian Statistics, 8:3–24, 2007.
[5] L. Breiman. Random forests. Machine Learning, 45(1):5–32, 2001.
[6] J. Bruna and S. Mallat. Invariant scattering convolution networks. IEEE Transactions on Pattern Analysis and Machine Intelligence, 35(8):1872–1886, 2013.
[7] I. J. Goodfellow, D. Warde-Farley, M. Mirza, A. Courville, and Y. Bengio. Maxout networks. arXiv preprint arXiv:1302.4389, 2013.
[8] D. P. Kingma, S. Mohamed, D. J. Rezende, and M. Welling. Semi-supervised learning with deep generative models. In Advances in Neural Information Processing Systems, pages 3581–3589, 2014.
[9] D. P. Kingma and M. Welling. Auto-encoding variational bayes. arXiv preprint arXiv:1312.6114, 2013.
[10] F. R. Kschischang, B. J. Frey, and H.-A. Loeliger. Factor graphs and the sum-product algorithm. IEEE Transactions on Information Theory, 47(2):498–519, 2001.
[11] Y. LeCun, L. Bottou, Y. Bengio, and P. Haffner. Gradient-based learning applied to document recognition. Proceedings of the IEEE, 86(11):2278–2324, 1998.
[12] D.-H. Lee. Pseudo-label: The simple and efficient semi-supervised learning method for deep neural networks. In Workshop on Challenges in Representation Learning, ICML, volume 3, 2013.
[13] L. Maaløe, C. K. Sønderby, S. K. Sønderby, and O. Winther. Auxiliary deep generative models. arXiv preprint arXiv:1602.05473, 2016.
[14] A. Makhzani and B. J. Frey. Winner-take-all autoencoders. In Advances in Neural Information Processing Systems, pages 2773–2781, 2015.
[15] G. F. Montufar, R. Pascanu, K. Cho, and Y. Bengio. On the number of linear regions of deep neural networks. In Advances in Neural Information Processing Systems, pages 2924–2932, 2014.
[16] A. Ng and M. Jordan. On discriminative vs. generative classifiers: A comparison of logistic regression and naive bayes. Advances in Neural Information Processing Systems, 14:841, 2002.
[17] T. Nguyen, A. B. Patel, and R. G. Baraniuk. Semi-supervised learning with deep rendering mixture model. CVPR (Submitted), 2017.
[18] A. B. Patel, T. Nguyen, and R. G. Baraniuk. A probabilistic theory of deep learning. arXiv preprint arXiv:1504.00641, 2015.
[19] A. B. Patel, T. Nguyen, and R. G. Baraniuk. A probabilistic framework for deep learning. NIPS, 2016.
[20] A. Rasmus, M. Berglund, M. Honkala, H. Valpola, and T. Raiko. Semi-supervised learning with ladder networks. In Advances in Neural Information Processing Systems, pages 3532–3540, 2015.
[21] S. Rifai, Y. N. Dauphin, P. Vincent, Y. Bengio, and X. Muller. The manifold tangent classifier. In Advances in Neural Information Processing Systems, pages 2294–2302, 2011.
[22] S. Rifai, P. Vincent, X. Muller, X. Glorot, and Y. Bengio. Contractive auto-encoders: Explicit invariance during feature extraction. In Proceedings of the 28th International Conference on Machine Learning (ICML-11), pages 833–840, 2011.
[23] O. Russakovsky, J. Deng, H. Su, J. Krause, S. Satheesh, S. Ma, Z. Huang, A. Karpathy, A. Khosla, M. Bernstein, et al. Imagenet large scale visual recognition challenge. International Journal of Computer Vision, 115(3):211–252, 2015.
[24] T. Salimans, I. Goodfellow, W. Zaremba, V. Cheung, A. Radford, and X. Chen. Improved techniques for training gans. arXiv preprint arXiv:1606.03498, 2016.
[25] A.-S. Sheikh, J. A. Shelton, and J. Lücke. A truncated em approach for spike-and-slab sparse coding. Journal of Machine Learning Research, 15(1):2653–2687, 2014.
[26] K. Simonyan, A. Vedaldi, and A. Zisserman. Deep inside convolutional networks: Visualising image classification models and saliency maps. arXiv preprint arXiv:1312.6034, 2013.
[27] S. Soatto and A. Chiuso. Visual representations: Defining properties and deep approximations. In International Conference on Learning Representations, 2016.
[28] J. T. Springenberg. Unsupervised and semi-supervised learning with categorical generative adversarial networks. arXiv preprint arXiv:1511.06390, 2015.
[29] J. T. Springenberg, A. Dosovitskiy, T. Brox, and M. Riedmiller. Striving for simplicity: The all convolutional net. arXiv preprint, 2014.
[30] Y. Tang, R. Salakhutdinov, and G. Hinton. Deep mixtures of factor analysers. arXiv preprint arXiv:1206.4635, 2012.
[31] G. Taylor, R. Burmeister, Z. Xu, B. Singh, A. Patel, and T. Goldstein. Training neural networks without gradients: A scalable admm approach. arXiv preprint, 2016.
[32] A. van den Oord and B. Schrauwen. Factoring variations in natural images with deep gaussian mixture models. In Advances in Neural Information Processing Systems, pages 3518–3526, 2014.
[33] V. N. Vapnik and V. Vapnik. Statistical learning theory, volume 1. Wiley New York, 1998.
[34] J. Zhao, M. Mathieu, R. Goroshin, and Y. LeCun. Stacked what-where autoencoders. arXiv preprint arXiv:1506.02351, 2016.

A From the Rendering Mixture Model Classifier to a DCN Layer

Proposition A.1 (MaxOut Neural Networks).
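The discriminative relaxation of a noise-free Gaussian Rendering Mixture Model (GRMM) classifier with nuisance variable $g \in \mathcal{G}$ is a single layer neural net consisting of a local template matching operation followed by a piecewise linear activation function (also known as a MaxOut NN [7]).

Proof. For transparency, we prove this claim exhaustively. Later claims will have simpler proofs. We have

$$
\begin{aligned}
\hat{c}(I) &\equiv \operatorname*{argmax}_{c \in \mathcal{C}} p(c|I) = \operatorname*{argmax}_{c \in \mathcal{C}} \{ p(I|c)\, p(c) \} \\
&= \operatorname*{argmax}_{c \in \mathcal{C}} \sum_{g \in \mathcal{G}} p(I|c,g)\, p(c,g) \\
&\overset{(a)}{=} \operatorname*{argmax}_{c \in \mathcal{C}} \max_{g \in \mathcal{G}} p(I|c,g)\, p(c,g) \\
&= \operatorname*{argmax}_{c \in \mathcal{C}} \max_{g \in \mathcal{G}} \exp\left( \ln p(I|c,g) + \ln p(c,g) \right) \\
&\overset{(b)}{=} \operatorname*{argmax}_{c \in \mathcal{C}} \left\{ \max_{g \in \mathcal{G}} \exp\left( \sum_{\omega} \ln p(I^{\omega}|c,g) + \ln p(c,g) \right) \right\} \\
&\overset{(c)}{=} \operatorname*{argmax}_{c \in \mathcal{C}} \left\{ \max_{g \in \mathcal{G}} \exp\left( -\frac{1}{2} \sum_{\omega} \left\langle I^{\omega} - \mu^{\omega}_{cg} \,\middle|\, \Sigma^{-1}_{cg} \,\middle|\, I^{\omega} - \mu^{\omega}_{cg} \right\rangle + \ln p(c,g) - \frac{D}{2} \ln |\Sigma_{cg}| \right) \right\} \\
&= \operatorname*{argmax}_{c \in \mathcal{C}} \left\{ \max_{g \in \mathcal{G}} \exp\left( \sum_{\omega} \left\langle w^{\omega}_{cg} \,\middle|\, I^{\omega} \right\rangle + b^{\omega}_{cg} \right) \right\} \\
&\overset{(d)}{\equiv} \operatorname*{argmax}_{c \in \mathcal{C}} \exp\left( \max_{g \in \mathcal{G}} \{ w_{cg} \star_{LC} I \} \right) \\
&= \operatorname*{argmax}_{c \in \mathcal{C}} \max_{g \in \mathcal{G}} \{ w_{cg} \star_{LC} I \} \\
&= \mathrm{Choose}\{ \mathrm{MaxOutPool}(\mathrm{LocalTemplateMatch}(I)) \} \\
&= \text{MaxOut-NN}(I; \theta).
\end{aligned}
$$

In line (a), we take the noise-free limit of the GRMM, which means that one hypothesis $(c, g)$ dominates all others in likelihood. In line (b), we assume that the image $I$ consists of multiple channels $\omega \in \Omega$ that are conditionally independent given the global configuration $(c, g)$. Typically, for input images these are color channels and $\Omega \equiv \{R, G, B\}$, but in general $\Omega$ can be more abstract (e.g. as in feature maps). In line (c), we assume that the pixel noise covariance is isotropic and conditionally independent given the global configuration $(c, g)$, so that $\Sigma_{cg} = \sigma_x^2 \mathbf{1}_D$ is proportional to the $D \times D$ identity matrix $\mathbf{1}_D$. In line (d), we defined the locally connected template matching operator $\star_{LC}$, which is a location-dependent template matching operation.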
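As an illustration of the chain of equalities in Proposition A.1, the following is a minimal sketch, not the authors' implementation. It assumes a single channel, a uniform prior $p(c, g)$ (so the $\ln p(c,g)$ term is a constant and is dropped), and isotropic noise. Under those assumptions the MaxOut rule with $w_{cg} = \mu_{cg}/\sigma^2$ and $b_{cg} = -\|\mu_{cg}\|^2 / (2\sigma^2)$ should pick the same class as a nearest-rendered-template search. The function names and the random toy data are hypothetical.

```python
import math
import random


def maxout_classify(image, templates, sigma2=1.0):
    """Return argmax_c max_g (<w_cg, I> + b_cg), with w = mu / sigma2 and
    b = -|mu|^2 / (2 * sigma2). A uniform prior over (c, g) is assumed."""
    best_class, best_score = None, -math.inf
    for c, rendered in templates.items():
        for mu in rendered:  # one rendered template per nuisance value g
            w = [m / sigma2 for m in mu]
            b = -0.5 * sum(m * m for m in mu) / sigma2
            score = sum(wi * xi for wi, xi in zip(w, image)) + b
            if score > best_score:
                best_class, best_score = c, score
    return best_class


def nearest_template_classify(image, templates):
    """Return the class owning the rendered template closest to the image."""
    best_class, best_dist = None, math.inf
    for c, rendered in templates.items():
        for mu in rendered:
            dist = sum((xi - m) ** 2 for xi, m in zip(image, mu))
            if dist < best_dist:
                best_class, best_dist = c, dist
    return best_class


# Hypothetical toy data: 3 classes, 4 nuisance values each, 8 "pixels".
rng = random.Random(0)
D, num_classes, num_nuisances = 8, 3, 4
templates = {
    c: [[rng.gauss(0.0, 1.0) for _ in range(D)] for _ in range(num_nuisances)]
    for c in range(num_classes)
}
images = [[rng.gauss(0.0, 1.0) for _ in range(D)] for _ in range(50)]

agree = all(
    maxout_classify(x, templates) == nearest_template_classify(x, templates)
    for x in images
)
print("MaxOut and nearest-template decisions agree:", agree)
```

The two rules differ only by the term $-\|I\|^2/(2\sigma^2)$, which does not depend on $(c, g)$ and therefore cannot change the argmax.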
Note that the nuisance variables $g \in \mathcal{G}$ are (max-)marginalized over, after the application of a local template matching operation against a set of filters/templates $W \equiv \{ w_{cg} \}_{c \in \mathcal{C}, g \in \mathcal{G}}$.

Lemma A.2 (Translational Nuisance $\to_d$ DCN Convolution). The MaxOut template matching and pooling operation (from Proposition A.1) for a set of translational nuisance variables $\mathcal{G} \equiv \mathcal{T}$ reduces to the traditional DCN convolution and max-pooling operation.

Proof. Let the activation for a single output unit be $y_c(I)$. Then we have

$$
\begin{aligned}
y_c(I) &\equiv \max_{g \in \mathcal{G}} \{ w_{cg} \star_{LC} I \} \\
&= \max_{t \in \mathcal{T}} \{ \langle w_{ct} | I \rangle \} \\
&= \max_{t \in \mathcal{T}} \{ \langle T_t w_c | I \rangle \} \\
&= \max_{t \in \mathcal{T}} \{ \langle w_c | T_{-t} I \rangle \} \\
&= \max_{t \in \mathcal{T}} \{ (w_c \star_{DCN} I)_t \} \\
&= \mathrm{MaxPool}(w_c \star_{DCN} I),
\end{aligned}
$$

where $\star_{DCN}$ is the traditional DCN convolution operator. Finally, vectorizing in $c$ gives us the desired result $y(I) = \mathrm{MaxPool}(W \star_{DCN} I)$.

Proposition A.3 (Max Pooling DCNs with ReLu Activations). The discriminative relaxation of a noise-free GRMM with translational nuisances and random missing data is a single convolutional layer of a traditional DCN. The layer consists of a generalized convolution operation, followed by a ReLu activation function and a Max-Pooling operation.

Proof. We will model completely random missing data as a nuisance transformation $a \in \mathcal{A} \equiv \{\text{keep}, \text{drop}\}$, where $a = \text{keep} = 1$ leaves the rendered image data untouched, while $a = \text{drop} = 0$ throws out the entire image after rendering. Thus, the switching variable $a$ models missing data. Critically, whether the data is missing is assumed to be completely random and thus independent of any other task variables, including the measurements (i.e. the image itself). Since the missingness of the evidence is just another nuisance, we can invoke Proposition A.1 to conclude that the discriminative relaxation of a noise-free GRMM with random missing data is also a MaxOut-DCN, but with a specialized structure which we now derive.
Mathematically, we decompose the nuisance variable $g \in \mathcal{G}$ into two parts $g = (t, a) \in \mathcal{G} = \mathcal{T} \times \mathcal{A}$, and then, following a similar line of reasoning as in Proposition A.1, we have

$$
\begin{aligned}
\hat{c}(I) &= \operatorname*{argmax}_{c \in \mathcal{C}} \max_{g \in \mathcal{G}} p(c, g | I) \\
&= \operatorname*{argmax}_{c \in \mathcal{C}} \max_{g \in \mathcal{G}} \{ w_{cg} \star_{LC} I \} \\
&\overset{(a)}{=} \operatorname*{argmax}_{c \in \mathcal{C}} \max_{t \in \mathcal{T}} \max_{a \in \mathcal{A}} \{ a (\langle w_{ct} | I \rangle + b_{ct}) + b'_{ct} + b_a + b'_I \} \\
&\overset{(b)}{=} \operatorname*{argmax}_{c \in \mathcal{C}} \max_{t \in \mathcal{T}} \{ \max\{ (w_c \star_{DCN} I)_t, 0 \} + b'_{ct} + b'_{\text{drop}} + b'_I \} \\
&\overset{(c)}{=} \operatorname*{argmax}_{c \in \mathcal{C}} \max_{t \in \mathcal{T}} \{ \max\{ (w_c \star_{DCN} I)_t, 0 \} + b'_{ct} \} \\
&\overset{(d)}{=} \operatorname*{argmax}_{c \in \mathcal{C}} \max_{t \in \mathcal{T}} \{ \max\{ (w_c \star_{DCN} I)_t, 0 \} \} \\
&= \mathrm{Choose}\{ \mathrm{MaxPool}(\mathrm{ReLu}(\mathrm{DCNConv}(I))) \} \\
&= \mathrm{DCN}(I; \theta).
\end{aligned}
$$

In line (a) we calculated the log-posterior (ignoring $(c, g)$-independent constants)

$$
\begin{aligned}
\ln p(c, g | I) &= \ln p(c, t, a | I) \\
&= \ln p(I | c, t, a) + \ln p(c, t, a) - \ln p(I) \\
&= \frac{1}{\sigma_x^2} \langle a \mu_{ct} | I \rangle - \frac{1}{2 \sigma_x^2} \left( \| a \mu_{ct} \|_2^2 + \| I \|_2^2 \right) + \ln p(c, t, a) \\
&\equiv a (\langle w_{ct} | I \rangle + b_{ct}) + b'_{ct} + b_a + b'_I,
\end{aligned}
$$

where $a \in \{0, 1\}$, $w_{ct} \equiv \frac{1}{\sigma_x^2} \mu_{ct}$, $b_{ct} \equiv -\frac{1}{2 \sigma_x^2} \| \mu_{ct} \|_2^2$, $b_a \equiv \ln p(a)$, $b'_{ct} \equiv \ln p(c, t)$, and $b'_I \equiv -\frac{1}{2 \sigma_x^2} \| I \|_2^2$. The term $\ln p(I)$ does not depend on $(c, g)$ and is dropped in the last two lines. In line (b), we use Lemma A.2 to write the expression in terms of the DCN convolution operator, after which we invoke the identity $\max\{u, v\} = \max\{u - v, 0\} + v \equiv \mathrm{ReLu}(u - v) + v$ for real numbers $u, v \in \mathbb{R}$. Here we have defined $b'_{\text{drop}} \equiv \ln p(a = \text{drop})$ and we have used a slightly modified DCN convolution operator $\star_{DCN}$ defined by

$$
w_{ct} \star_{DCN} I \equiv w_{ct} \star I + b_{ct} + \ln \frac{p(a = \text{keep})}{p(a = \text{drop})}.
$$

Also, we observe that all the primed constants are independent of $a$ and so can be pulled outside of the $\max_a$. In line (c), the two primed constants that are also independent of $c, t$ can be dropped due to the $\operatorname{argmax}_{ct}$. Finally, in line (d), we assume a uniform prior over $c, t$. The resulting sequence of operations corresponds exactly to those applied in a single convolutional layer of a traditional DCN.
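The step from line (a) to line (b) in the proof of Proposition A.3 rests on a scalar identity that is easy to check numerically. The sketch below is an illustration under assumed toy values, not part of the original derivation. It compares the explicit maximum over the switching variable $a \in \{\text{drop}, \text{keep}\}$ with the ReLu form using the modified bias $\ln \frac{p(a = \text{keep})}{p(a = \text{drop})}$. The function names are hypothetical, and the primed constants, which do not depend on $a$, are omitted.

```python
import math


def relu(x):
    return max(x, 0.0)


def max_over_switch(match, bias, p_keep):
    """Explicit max over a in {drop=0, keep=1} of a*(match + bias) + ln p(a)."""
    p_drop = 1.0 - p_keep
    return max(
        0 * (match + bias) + math.log(p_drop),  # a = drop
        1 * (match + bias) + math.log(p_keep),  # a = keep
    )


def relu_form(match, bias, p_keep):
    """Same quantity via max{u, v} = ReLu(u - v) + v, with v = ln p(drop)."""
    p_drop = 1.0 - p_keep
    return relu(match + bias + math.log(p_keep / p_drop)) + math.log(p_drop)


# Hypothetical template-match values, biases and keep probabilities.
checks = [
    (m, b, p)
    for m in (-3.0, -0.5, 0.0, 0.7, 4.0)
    for b in (-1.0, 0.25)
    for p in (0.1, 0.5, 0.9)
]
identity_holds = all(
    math.isclose(max_over_switch(m, b, p), relu_form(m, b, p), abs_tol=1e-12)
    for m, b, p in checks
)
print("switch max equals ReLu form:", identity_holds)
```

The additive constant $\ln p(a = \text{drop})$ is the $b'_{\text{drop}}$ term that is discarded in line (c).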
B From the Deep Rendering Mixture Model to DCNs

Here we define the DRMM in full detail.

Definition B.1 (Deep Rendering Mixture Model (DRMM)). The Deep Rendering Mixture Model (DRMM) is a deep Gaussian Mixture Model (GMM) with special constraints on the latent variables. Generation in the DRMM takes the form:

$$
\begin{aligned}
c^{(L)} &\sim \mathrm{Cat}(\{ \pi_{c^{(L)}} \}) \\
g^{(\ell)} &\sim \mathrm{Cat}(\{ \pi_{g^{(\ell)}} \}) \quad \forall \ell \in [L] \equiv \{1, 2, \dots, L\} \\
\mu_{c^{(L)} g} &\equiv \Lambda_g \mu_{c^{(L)}} \equiv \Lambda^{(1)}_{g^{(1)}} \Lambda^{(2)}_{g^{(2)}} \cdots \Lambda^{(L-1)}_{g^{(L-1)}} \Lambda^{(L)}_{g^{(L)}} \mu_{c^{(L)}} \\
I &\sim \mathcal{N}(\mu_{c^{(L)} g}, \Psi) = \mathcal{N}(\mu_{c^{(L)} g}, \sigma^2 \mathbf{1}_{D^{(0)}})
\end{aligned}
$$

where the latent variables, parameters, and helper variables are defined as

$$
\begin{aligned}
g^{(\ell)} &\equiv \left( g^{(\ell)}_{x^{(\ell)}} \right)_{x^{(\ell)} \in \mathcal{X}^{(\ell)}} \\
t^{(\ell)} &\equiv \left( t^{(\ell)}_{x^{(\ell)}} \right)_{x^{(\ell)} \in \mathcal{X}^{(\ell)}}, \quad a^{(\ell)} \equiv \left( a^{(\ell)}_{x^{(\ell)}} \right)_{x^{(\ell)} \in \mathcal{X}^{(\ell)}} \\
g^{(\ell)}_{x^{(\ell)}} &\equiv \left( t^{(\ell)}_{x^{(\ell)}}, a^{(\ell)}_{x^{(\ell)}} \right) \\
t^{(\ell)}_{x^{(\ell)}} &\in \{ \text{UL}, \text{UR}, \text{LL}, \text{LR} \} \\
a^{(\ell)}_{x^{(\ell)}} &\in \{0, 1\} \equiv \{ \text{OFF}, \text{ON} \} \\
x^{(\ell)} &\in \mathcal{X}^{(\ell)} \equiv \{ \text{pixels in level } \ell \} \in \mathbb{R}^{D^{(\ell)}} \\
\Lambda^{(\ell)}_{g^{(\ell)}} &= \Lambda^{(\ell)}_{t^{(\ell)}, a^{(\ell)}} \in \mathbb{R}^{D^{(\ell-1)} \times D^{(\ell)}} \\
&= T^{(\ell)}_{t^{(\ell)}} Z^{(\ell)} \Gamma^{(\ell)} M^{(\ell)}_{a^{(\ell)}} \\
M^{(\ell)}_{a^{(\ell)}} &\equiv \mathrm{diag}\left( a^{(\ell)} \right) \in \mathbb{R}^{D^{(\ell)} \times D^{(\ell)}} \\
T^{(\ell)}_{t^{(\ell)}} &\equiv \text{translation operator to position } t^{(\ell)} \in \mathbb{R}^{D^{(\ell-1)} \times D^{(\ell-1)}} \\
Z^{(\ell)} &\equiv \text{zero-padding operator} \in \mathbb{R}^{D^{(\ell-1)} \times F^{(\ell)}} \\
\Gamma^{(\ell)} &\equiv \bigotimes_{x^{(\ell)} \in \mathcal{X}^{(\ell)}} \underbrace{\Gamma^{(\ell)}_{x^{(\ell)}}}_{F^{(\ell)} \times 1} \in \mathbb{R}^{F^{(\ell)} \times D^{(\ell)}} \\
\Gamma^{(\ell)}_{x^{(\ell)}} &\equiv \{ \text{filter bank at level } \ell \} \in \mathbb{R}^{F^{(\ell)}} \\
F^{(\ell)} &\equiv W^{(\ell)} H^{(\ell)} C^{(\ell)} = \text{size of the core templates at layer } \ell
\end{aligned}
$$

For simplicity, in the following sections, we will use $c$ and $c^{(L)}$ interchangeably.

Definition B.2 (Nonnegative Deep Rendering Mixture Model (NN-DRMM)). The Nonnegative Deep Rendering Mixture Model is defined as a DRMM (Definition B.1) with additional nonnegativity constraint(s) on the intermediate latent variables (rendered templates):

$$
z^{(\ell)}_n = \Lambda_{g^{(\ell+1)}_n} \cdots \Lambda_{g^{(L)}_n} \mu_{c^{(L)}_n} \geq 0 \quad \forall \ell \in \{1, \dots, L\} \tag{11}
$$

Following the same line of reasoning as in the main text, we will derive the Hard EM algorithm for the DRMM model.

B.1 E-step: Computing the Soft Responsibilities

$$
\gamma_{ncg} \equiv p(c, g | I_n) = \frac{p(I_n | c, g; \theta)\, p(c, g | \theta)}{\sum_{c, g} p(I_n | c, g; \theta)\, p(c, g | \theta)} = \frac{\pi_{cg} |\Psi|^{-1/2} \exp\left( -\frac{1}{2} \| I_n - \mu_{cg} \|^2_{\Psi^{-1}} \right)}{Z},
$$

where the partition function $Z$ is defined as

$$
Z(\theta) \equiv \sum_{c, g} \pi_{cg} |\Psi|^{-1/2} \exp\left( -\frac{1}{2} \| I_n - \mu_{cg} \|^2_{\Psi^{-1}} \right).
$$

Since the numerator and denominator both contain $|\Psi|^{-1/2}$, the responsibilities simplify to

$$
\gamma_{ncg} = \frac{\pi_{cg} \exp\left( -\frac{1}{2} \| I_n - \mu_{cg} \|^2_{\Psi^{-1}} \right)}{Z'}, \tag{12}
$$

where $Z'$ is defined as

$$
Z'(\theta) \equiv \sum_{c, g} \pi_{cg} \exp\left( -\frac{1}{2} \| I_n - \mu_{cg} \|^2_{\Psi^{-1}} \right).
$$

B.2 E-step: Computing the Hard Responsibilities

Assuming isotropic noise $\Psi = \sigma^2 \mathbf{1}_D$ and taking the zero-noise limit $\sigma^2 \to 0$, the term in the denominator $Z'(\theta)$ for which $\| I_n - \mu_{cg} \|_2^2$ is smallest will go to zero most slowly. Hence the responsibilities $\gamma_{ncg}$ will all approach zero, except for one term $(c^*, g^*)$, for which $\gamma_{n c^* g^*}$ will approach one.¹ Thus, the soft responsibilities become hard responsibilities in the zero-noise limit:

$$
\gamma_{ncg} \xrightarrow{\sigma \to 0} r_{ncg} \equiv
\begin{cases}
1, & \text{if } (c, g) = \operatorname*{argmax}_{c' g'} -\frac{1}{2} \| I_n - \mu_{c' g'} \|_2^2 \\
0, & \text{otherwise}
\end{cases}
\tag{13}
$$

¹ Technically, there can be multiple maximizers and the algorithms below can be generalized to handle this case. But we focus on the case with just one unique maximum for simplicity.

B.3 Useful Lemmas

In order to derive the E-step for the DRMM, we will need a few simple theoretical results. We prove them here.

Definition B.3 (Masking Operator). Let $a \in \{0, 1\}^d$ be a binary vector (mask) and let $\Lambda \in \mathbb{R}^{D \times d}$ be a real matrix. Then the masking operator $M_a(\Lambda) \in \mathbb{R}^{D \times d}$ is defined as

$$
M_a(\Lambda) \equiv \Lambda \cdot M_a \equiv \Lambda \cdot \mathrm{diag}(a),
$$

where $M_a \equiv \mathrm{diag}(a) \in \mathbb{R}^{d \times d}$ is the diagonal masking matrix.

Lemma B.4.
The action of a masking operator on a vector $z \in \mathbb{R}^d$ can be written in several equivalent ways:

$$
\begin{aligned}
M_a(\Lambda) z &= \Lambda \cdot \mathrm{diag}(a) \cdot z \\
&= \Lambda \cdot \mathrm{diag}(a) \cdot \mathrm{diag}(a) \cdot z \\
&= \Lambda[:, a] \cdot z[a] \\
&= \Lambda (a \odot z).
\end{aligned}
$$

Here $\odot$ denotes the elementwise (Hadamard) product between two vectors and $\Lambda[:, a]$ is numpy notation for the subset of columns $\{ j \in [d] : a_j = 1 \}$ of $\Lambda$.

Proof. The first equality is by definition. The second equality is a result of $a$ being binary, since $a_i^2 = a_i$ for $a_i \in \{0, 1\}$. The third and fourth equalities result from the associativity of matrix multiplication.

Lemma B.5 (Optimization with Masking Operators). Let $z, u \in \mathbb{R}^{D \times 1}$. Consider the optimization problem

$$
\max_{a \in \{0,1\}^D} M_a(z^T) u = \max_{a \in \{0,1\}^D} z^T M_a u \tag{14}
$$

where $M_a \equiv \mathrm{diag}(a)$. Then the optimization can be solved in closed form as:

(a) $\max_{a \in \{0,1\}^D} M_a(z^T) u = \mathbf{1}_D^T \mathrm{ReLu}(z \odot u)$.

(b) $\hat{a} \equiv \operatorname*{argmax}_{a \in \{0,1\}^D} M_a(z^T) u = [z \odot u > 0] \in \{0, 1\}^D$.

(c) $M_{\hat{a}} u = \mathrm{sgn}(z) \odot \mathrm{ReLu}(\mathrm{sgn}(z) \odot u)$.

(d) If $z \geq 0$, then $\hat{a} \equiv \operatorname*{argmax}_{a \in \{0,1\}^D} M_a(z^T) u = [u > 0] \in \{0, 1\}^D$ is a maximizer, for which $M_{\hat{a}} u = \mathrm{ReLu}(u)$.

Proof. (a) The maximum value can be computed as

$$
\begin{aligned}
v^\star &\equiv \max_{a \in \{0,1\}^D} M_a(z^T) u \\
&= \max_{a \in \{0,1\}^D} z^T \mathrm{diag}(a)\, u \\
&= \max_{a \in \{0,1\}^D} \sum_{i \in [D]} z_i a_i u_i \\
&= \sum_{i \in [D]} \max_{a_i \in \{0,1\}} a_i (z_i u_i) \\
&\equiv \sum_{i \in [D]} \hat{a}_i (z_i u_i) \\
&= \sum_{i \in [D]} [z_i u_i > 0] \cdot z_i u_i \\
&= \sum_{i \in [D]} \mathrm{ReLu}(z_i u_i) \\
&= \mathbf{1}_D^T \mathrm{ReLu}(z \odot u).
\end{aligned}
$$

(b) In the 4th line the vector optimization decouples into a set of independent scalar optimizations $\max_{a_i \in \{0,1\}} a_i (z_i u_i)$, each of which is solvable in closed form: $\hat{a}_i \equiv [z_i u_i > 0]$. Hence, the optimal solution $\hat{a}$ is given by $\hat{a} = [z \odot u > 0]$.
(c) Substituting in $\hat{a}$, we get

$$
\begin{aligned}
M_{\hat{a}} u &= u \odot [z \odot u > 0] \\
&= \underbrace{(\mathrm{sgn}(z) \odot \mathrm{sgn}(z))}_{\mathbf{1}_D} \odot\, u \odot [\mathrm{sgn}(z) \odot u > 0] \\
&= \mathrm{sgn}(z) \odot (\mathrm{sgn}(z) \odot u) \odot [\mathrm{sgn}(z) \odot u > 0] \\
&= \mathrm{sgn}(z) \odot \mathrm{ReLu}(\mathrm{sgn}(z) \odot u),
\end{aligned}
$$

where in the third and fourth equalities we have used the associativity of elementwise multiplication and the definition of ReLu, respectively.

(d) If $z \geq 0$, then when $z_i > 0$, $\hat{a}_i = [u_i > 0]$, and when $z_i = 0$, $\hat{a}_i$ can be either 0 or 1 since then $\max_{a_i \in \{0,1\}} a_i (z_i u_i) = 0 \;\forall a_i \in \{0, 1\}$. Therefore, if $z \geq 0$, $\hat{a} = [u > 0]$ is a solution of the optimization (14). It follows that $M_{\hat{a}} u = [u > 0] \odot u = \mathrm{ReLu}(u)$.

Lemma B.6 (Optimization with "Row" Max-Marginal). Let $z, u \in \mathbb{R}^{D \times 1}$. Consider the optimization problem

$$
\max_{t \in \mathcal{T}^D} z^T u(t) = \max_{t \in \mathcal{T}^D} \sum_x z_x u(t)_x \tag{15}
$$

where $\mathcal{T}$ is the set of possible fine-scale translations at location $x$. Also,

$$
t \equiv [\ldots, t_x, \ldots]^T \quad \text{and} \quad u(t) \equiv [\ldots, u_x(t_x), \ldots]^T \tag{16}
$$

Then the optimization can be solved as:

(a) $\max_{t \in \mathcal{T}^D} z^T u(t) = \sum_x |z_x| \max_{t_x \in \mathcal{T}} \mathrm{sgn}(z_x) u_x(t_x)$

(b) $\hat{t} = \operatorname*{argmax}_{t \in \mathcal{T}^D} z^T u(t) = \operatorname*{argmax}_t \mathrm{sgn}(z) \odot u(t) = [\ldots, \operatorname*{argmax}_{t_x} \mathrm{sgn}(z_x) u_x(t_x), \ldots]^T$

(c) $u(\hat{t}) = \mathrm{sgn}(z) \odot \max_t (\mathrm{sgn}(z) \odot u(t)) = [\ldots, \mathrm{sgn}(z_x) \max_{t_x} \mathrm{sgn}(z_x) u_x(t_x), \ldots]^T$

(d) If $z \geq 0$, then $\hat{t} = \operatorname*{argmax}_t u(t) = [\ldots, \operatorname*{argmax}_{t_x} u_x(t_x), \ldots]^T$ is a maximizer for which $u(\hat{t}) = \max_t (u(t)) = [\ldots, \max_{t_x} u_x(t_x), \ldots]^T$

Proof. (a) The maximum value can be computed as

$$
\begin{aligned}
v^\star &\equiv \max_{\{t_x \in \mathcal{T}\}_{x=1}^D} \sum_x z_x u_x(t_x) \\
&= \sum_x \max_{t_x \in \mathcal{T}} z_x u_x(t_x) \\
&= \sum_x \max_{t_x \in \mathcal{T}} |z_x|\, \mathrm{sgn}(z_x) u_x(t_x) \\
&= \sum_x |z_x| \max_{t_x \in \mathcal{T}} \mathrm{sgn}(z_x) u_x(t_x)
\end{aligned}
$$

(b) In the 2nd line the vector optimization decouples into a set of independent scalar optimizations $\max_{t_x \in \mathcal{T}} z_x u_x(t_x)$, each of which has the solution $\operatorname*{argmax}_{t_x} \mathrm{sgn}(z_x) u_x(t_x)$. Hence, the optimal solution is

$$
\hat{t} = \operatorname*{argmax}_{t \in \mathcal{T}^D} z^T u(t) = [\ldots, \operatorname*{argmax}_{t_x} \mathrm{sgn}(z_x) u_x(t_x), \ldots]^T = \operatorname*{argmax}_t \mathrm{sgn}(z) \odot u(t).
$$

(c) Substituting in $\hat{t}$, we obtain

$$
\begin{aligned}
v^\star &= \sum_x |z_x|\, \mathrm{sgn}(z_x) u_x(\hat{t}_x) \\
&= \sum_x z_x\, \mathrm{sgn}(z_x) (\mathrm{sgn}(z_x) u_x(\hat{t}_x)) \\
&= \sum_x z_x\, \mathrm{sgn}(z_x) \max_{t_x} \mathrm{sgn}(z_x) u_x(t_x) \\
&= z^T [\ldots, \mathrm{sgn}(z_x) \max_{t_x} \mathrm{sgn}(z_x) u_x(t_x), \ldots]^T.
\end{aligned}
$$

Hence,

$$
u(\hat{t}) = [\ldots, \mathrm{sgn}(z_x) \max_{t_x} \mathrm{sgn}(z_x) u_x(t_x), \ldots]^T = \mathrm{sgn}(z) \odot \max_t (\mathrm{sgn}(z) \odot u(t)).
$$

(d) If $z \geq 0$, then when $z_x > 0$, $\hat{t}_x = \operatorname*{argmax}_{t_x} u_x(t_x)$, and when $z_x = 0$, $\hat{t}_x$ can take any value in its domain since then $\max_{t_x \in \mathcal{T}} \mathrm{sgn}(z_x) u_x(t_x) = 0 \;\forall t_x \in \mathcal{T}$. Therefore, if $z \geq 0$, $\hat{t}_x = \operatorname*{argmax}_{t_x} u_x(t_x)$ is a solution of the optimization (15). It follows that $u(\hat{t}) = \max_t (u(t)) \equiv [\ldots, \max_{t_x} u_x(t_x), \ldots]^T$.

Definition B.7 (Deep Masking Operator). Let $a^{(\ell)} \in \{0, 1\}^{D^{(\ell)}}$ be a collection of binary (vector) masks and let $\Lambda^{(\ell)} \in \mathbb{R}^{D^{(\ell-1)} \times D^{(\ell)}}$ be a collection of (matrix) operators. Then the deep masking operator $M_{\{a^{(\ell)}\}}(\{\Lambda^{(\ell)}\}) \in \mathbb{R}^{D^{(0)} \times D^{(L)}}$ is defined as

$$
M_{\{a^{(\ell)}\}}(\{\Lambda^{(\ell)}\}) \equiv \prod_{\ell=1}^{L} M_{a^{(\ell)}}(\Lambda^{(\ell)}) = \prod_{\ell=1}^{L} \Lambda^{(\ell)} \cdot M_{a^{(\ell)}},
$$

where $M_a \equiv \mathrm{diag}(a)$ is the diagonal masking matrix for mask $a$.

B.4 E-Step: Inference of Top-Level Category

Theorem B.8 (Inference in DRMM ⇒ Signed Convnets). Inference in the DRMM, according to the Dynamic Programming-based algorithm below, yields Signed DCNs. The inference algorithm has a bottom-up and top-down pass.

Proof. Given input image $I_n \equiv I^{(0)}_n$, we infer $\hat{c}_n$ as follows:

$$
\begin{aligned}
\hat{c}_n &= \operatorname*{argmax}_c \max_g \left\{ -\frac{1}{2} \| I_n - \mu_{cg} \|_2^2 \right\} \\
&= \operatorname*{argmax}_c \max_g \left\{ \mu_{cg}^T I_n - \frac{1}{2} \| I_n \|_2^2 - \frac{1}{2} \| \mu_{cg} \|_2^2 \right\} \\
&= \operatorname*{argmax}_c \max_g \left\{ \mu_{cg}^T I_n - \frac{1}{2} \| \mu_{cg} \|_2^2 \right\},
\end{aligned}
$$

where the last equality follows since $I_n$ is independent of $c, g$. We further assume that:

$$
\alpha_{g^{(\ell)}} = 0 \;\; \forall \ell, \qquad \| \mu_{cg} \|_2^2 = \text{const} \;\; \forall c, g.
$$
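As a result, $\mu_{cg} = \Lambda_g \mu_c$ and the most probable class $\hat{c}_n$ is inferred as

$$
\begin{aligned}
\hat{c}_n &= \operatorname*{argmax}_c \max_g \mu_{cg}^T I^{(0)}_n && (17) \\
&= \operatorname*{argmax}_c \max_g (\Lambda_g \mu_c)^T I^{(0)}_n && (18) \\
&= \operatorname*{argmax}_c \max_{g^{(L:1)}} \mu_c^T \Lambda^T_{g^{(L)}} \cdots \Lambda^T_{g^{(2)}} \Lambda^T_{g^{(1)}} I^{(0)}_n && (19) \\
&= \operatorname*{argmax}_c \max_{g^{(L:2)}} \max_{t^{(1)}} \max_{a^{(1)}} \mu_c^T \Lambda^T_{g^{(L)}} \cdots \Lambda^T_{g^{(2)}} \left( M_{a^{(1)}} \Lambda^T_{t^{(1)}} \right) I^{(0)}_n && (20) \\
&= \operatorname*{argmax}_c \max_{g^{(L:2)}} \max_{t^{(1)}} \max_{a^{(1)}} \underbrace{\mu_c^T \Lambda^T_{g^{(L)}} \cdots \Lambda^T_{g^{(2)}}}_{\equiv\, z^{(1)\downarrow T}} M_{a^{(1)}} \underbrace{\Lambda^T_{t^{(1)}} I^{(0)}_n}_{\equiv\, u^{(1)\uparrow}_n(t^{(1)})} && (21) \\
&= \operatorname*{argmax}_c \max_{g^{(L:2)}} \max_{t^{(1)}} \max_{a^{(1)}} z^{(1)\downarrow T} M_{a^{(1)}} u^{(1)\uparrow}_n(t^{(1)}) && (22) \\
&\overset{(a)}{=} \operatorname*{argmax}_c \max_{g^{(L:2)}} \max_{t^{(1)}} z^{(1)\downarrow T} M_{\hat{a}^{(1)}_n} u^{(1)\uparrow}_n(t^{(1)}) && (23) \\
&\overset{(b)}{=} \operatorname*{argmax}_c \max_{g^{(L:2)}} z^{(1)\downarrow T} \left( s^{(1)\downarrow} \odot \max_{t^{(1)}} \left( s^{(1)\downarrow} \odot M_{\hat{a}^{(1)}_n} u^{(1)\uparrow}_n(t^{(1)}) \right) \right) && (24) \\
&\overset{(c)}{=} \operatorname*{argmax}_c \max_{g^{(L:2)}} z^{(1)\downarrow T} \left( s^{(1)\downarrow} \odot \max_{t^{(1)}} \left( s^{(1)\downarrow} \odot s^{(1)\downarrow} \odot \mathrm{ReLu}\left( s^{(1)\downarrow} \odot u^{(1)\uparrow}_n(t^{(1)}) \right) \right) \right) && (25) \\
&\overset{(d)}{=} \operatorname*{argmax}_c \max_{g^{(L:2)}} z^{(1)\downarrow T} \underbrace{\left( s^{(1)\downarrow} \odot \mathrm{MaxPool}\left( \mathrm{ReLu}\left( \mathrm{diag}(s^{(1)\downarrow})\, u^{(1)\uparrow}_n(\mathcal{T}) \right) \right) \right)}_{\equiv\, I^{(1)}_n(s^{(1)\downarrow})} && (26) \\
&= \operatorname*{argmax}_c \max_{g^{(L:2)}} \mu_c^T \Lambda^T_{g^{(L)}} \cdots \Lambda^T_{g^{(2)}} I^{(1)}_n && (27)
\end{aligned}
$$

In line (a), we employ Lemma B.5 (b) to infer the optimal $\hat{a}^{(1)}_n$. In lines (b) and (c), we employ Lemma B.6 (c) and Lemma B.5 (c) to calculate the max-product message $I^{(1)}_n$ to be sent to the next layer. Notice that here $s^{(1)\downarrow} = \mathrm{sgn}(z^{(1)\downarrow})$. In line (b), $\hat{t}^{(1)}_n$ is implicitly inferred via Lemma B.6 (b). In line (d), $s^{(1)\downarrow} \odot s^{(1)\downarrow}$ becomes a vector of all 1's. Also, in the same line, $\mathrm{diag}(s^{(1)\downarrow})$ is a diagonal matrix with diagonal $s^{(1)\downarrow}$ and $u^{(1)\uparrow}_n(\mathcal{T})$ is a matrix $[u_{nxt}]$ where rows are indexed by $x \in \mathcal{X}$ and columns by $t \in \mathcal{T}$. It corresponds to the output of the convolutional layer in a DCN, prior to the ReLu and spatial max-pooling operators.

Note that we have succeeded in expressing the optimization (Eq. 19) recursively in terms of a one-level-smaller sub-problem (Eq. 27).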
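Iterating this procedure yields a set of recurrence relations, which define our Dynamic Programming (DP) algorithm for the bottom-up and top-down inference in the DRMM:

Bottom-Up E-Step ($E^{\uparrow}$):

$$
\begin{aligned}
u^{(\ell)\uparrow}_n &= \Lambda^T_{t^{(\ell)}} I^{(\ell-1)}_n && (28) \\
s^{(\ell)\downarrow} &= \mathrm{sgn}\left( z^{(\ell)\downarrow} \right) && (29) \\
\forall s^{(\ell)\downarrow} \in \{\pm 1\}^{D^{(\ell)}} : \quad \hat{a}^{(\ell)\updownarrow}_n(s^{(\ell)\downarrow}) &= [s^{(\ell)\downarrow} \odot u^{(\ell)\uparrow}_n > 0] && (30) \\
\forall s^{(\ell)\downarrow} \in \{\pm 1\}^{D^{(\ell)}} : \quad \hat{t}^{(\ell)\updownarrow}_n(s^{(\ell)\downarrow}) &= \operatorname*{argmax}_{t^{(\ell)}} s^{(\ell)\downarrow} \odot u^{(\ell)\uparrow}_n(t^{(\ell)}) && (31) \\
I^{(\ell)}_n(s^{(\ell)\downarrow}) &= M_{\hat{a}^{(\ell)}_n} \Lambda^T_{\hat{t}^{(\ell)}} I^{(\ell-1)}_n && (32) \\
&= s^{(\ell)\downarrow} \odot \mathrm{MaxPool}\left( \mathrm{ReLu}\left( \mathrm{diag}(s^{(\ell)\downarrow})\, u^{(\ell)\uparrow}_n(\mathcal{T}) \right) \right) && (33) \\
\hat{c}^{(L)}_n &= \operatorname*{argmax}_{c^{(L)}} \mu^T_{c^{(L)}} I^{(L)}_n && (34)
\end{aligned}
$$

Top-Down/Traceback E-Step ($E^{\downarrow}$):

$$
\begin{aligned}
\hat{z}^{(\ell)\downarrow}_n &= \Lambda_{\hat{g}^{(\ell+1)}_n} \cdots \Lambda_{\hat{g}^{(L)}_n} \mu_{\hat{c}^{(L)}_n} && (35) \\
&= \Lambda_{\hat{g}^{(\ell+1)}_n} \hat{z}^{(\ell+1)\downarrow}_n && (36) \\
\hat{s}^{(\ell)\downarrow}_n &= \mathrm{sgn}\left( \hat{z}^{(\ell)\downarrow}_n \right) && (37) \\
\hat{a}^{(\ell)\updownarrow}_n &= \hat{a}^{(\ell)\updownarrow}_n(\hat{s}^{(\ell)\downarrow}_n) = [\hat{s}^{(\ell)\downarrow}_n \odot u^{(\ell)\uparrow}_n > 0] && (38) \\
\hat{t}^{(\ell)\updownarrow}_n &= \hat{t}^{(\ell)\updownarrow}_n(\hat{s}^{(\ell)\downarrow}_n) = \operatorname*{argmax}_{t^{(\ell)}} \hat{s}^{(\ell)\downarrow}_n \odot u^{(\ell)\uparrow}_n(t^{(\ell)}) && (39)
\end{aligned}
$$

where $u^{(\ell)\uparrow}_n$ and $\hat{z}^{(\ell)\downarrow}_n$ are the bottom-up and top-down net inputs into layer $\ell$, respectively.

Corollary B.9 (Inference in the NN-DRMM ⇒ Convnets). Inference in the NN-DRMM according to the Dynamic Programming-based algorithm above yields ReLu DCNs.

Proof. The NN-DRMM assumes that the intermediate rendered latent variables $z^{(\ell)}_n \geq 0$ for all $\ell$, which implies that the signs are also nonnegative, i.e., $s^{(\ell)}_n \geq 0$. This in turn, according to Lemma B.5 (d) and Lemma B.6 (d), reduces Eqs. 33, 34, 38 and 39 to

$$
\begin{aligned}
E^{\uparrow}: \quad I^{(\ell)}_n &= \mathrm{MaxPool}\left( \mathrm{ReLu}\left( u^{(\ell)\uparrow}_n \right) \right) && (40) \\
\hat{c}^{(L)}_n &= \operatorname*{argmax}_{c^{(L)}} \mu^T_{c^{(L)}} I^{(L)}_n && (41) \\
E^{\downarrow}: \quad \hat{a}^{(\ell)}_n &= [u^{(\ell)\uparrow}_n > 0] && (42) \\
\hat{t}^{(\ell)}_n &= \operatorname*{argmax}_{t^{(\ell)}} u^{(\ell)\uparrow}_n(t^{(\ell)}), && (43)
\end{aligned}
$$

which is equivalent to feedforward propagation in a DCN.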
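The recurrences above can be sanity-checked on a tiny example. The sketch below is an illustration only, not the authors' code. It treats one layer in isolation, with a hypothetical top-down vector `z` and a hypothetical matrix `U` whose entry `U[x][t]` plays the role of $u_x(t_x)$. It brute-forces the joint maximization over all masks $a$ and translations $t$ and compares the result with the signed closed form of Eq. 33. It then checks that for nonnegative `z` the signed message reduces to the plain ReLu plus max-pooling of Eq. 40.

```python
import itertools
import math
import random


def relu(v):
    return max(v, 0.0)


def sgn(v):
    return (v > 0) - (v < 0)


def brute_force_max(z, U):
    """Max over all masks a and translations t of sum_x z_x * a_x * u_x(t_x)."""
    D, T = len(z), len(U[0])
    best = -math.inf
    for a in itertools.product((0, 1), repeat=D):
        for t in itertools.product(range(T), repeat=D):
            best = max(best, sum(z[x] * a[x] * U[x][t[x]] for x in range(D)))
    return best


def signed_message(z, U):
    """Eq. 33 for one layer: s * MaxPool(ReLu(diag(s) U)) with s = sgn(z)."""
    s = [sgn(zx) for zx in z]
    return [s[x] * max(relu(s[x] * u) for u in U[x]) for x in range(len(z))]


def relu_maxpool(U):
    """Eq. 40 for one layer: MaxPool(ReLu(U)), the NN-DRMM special case."""
    return [max(relu(u) for u in row) for row in U]


# Hypothetical toy sizes: 3 locations, 4 candidate translations per location.
rng = random.Random(1)
D, T = 3, 4
U = [[rng.uniform(-1.0, 1.0) for _ in range(T)] for _ in range(D)]
z_signed = [rng.uniform(-1.0, 1.0) for _ in range(D)]
z_nonneg = [abs(v) for v in z_signed]

closed_form = sum(zx * m for zx, m in zip(z_signed, signed_message(z_signed, U)))
print("brute force matches Eq. 33:",
      math.isclose(brute_force_max(z_signed, U), closed_form, abs_tol=1e-12))
print("nonnegative z reduces to ReLu + MaxPool:",
      signed_message(z_nonneg, U) == relu_maxpool(U))
```

The brute-force search costs $(2|\mathcal{T}|)^D$ evaluations, whereas the closed form is linear in $D |\mathcal{T}|$, which is the practical content of Lemmas B.5 and B.6.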
Note that the top-down step no longer requires information from the deeper levels, and so it can be computed in the bottom-up step instead.

Remark: Note that the vector max notation $\max_t u(t) = [\ldots, \max_{t_x} u_x(t_x), \ldots]^T$ is the same as the max notation we use in our arXiv post. It refers to the row max-marginals of the matrix $u(t) \equiv [u_{xt}]_{x \in \mathcal{X}, t \in \mathcal{T}}$ with respect to latent variables $t$.

[Figure 3 diagram: a rendering net layer (factor DRM EM) mapping the input image through latent factor, gated factor and summarized factor stages, with responsibilities, reconstruction errors (cluster distances), gating, and a softmax classifier over the digits 0 to 9.]

Figure 3: Neural network implementation of shallow Rendering Model EM algorithm.

C Rendering Factor Model (RFM) Architecture

D Transforming a Generative Classifier into a Discriminative One

Before we formally define the procedure, some preliminary definitions and remarks will be helpful. A generative classifier models the joint distribution $p(c, I)$ of the input features and the class labels. It can then classify inputs by using Bayes Rule to calculate $p(c|I) \propto p(c, I) = p(I|c)\, p(c)$ and picking the most likely label $c$. Training such a classifier is known as generative learning, since one can generate synthetic features $I$ by sampling the joint distribution $p(c, I)$. Therefore, a generative classifier learns an indirect map from input features $I$ to labels $c$ by modeling the joint distribution $p(c, I)$ of the labels and the features. In contrast, a discriminative classifier parametrically models $p(c|I) = p(c|I; \theta_d)$ and then trains on a dataset of input-output pairs $\{(I_n, c_n)\}_{n=1}^{N}$ in order to estimate the parameter $\theta_d$. This is known as discriminative learning, since we directly discriminate between different labels $c$ given an input feature $I$. Therefore, a discriminative classifier learns a direct map from input features $I$ to labels $c$ by directly modeling the conditional distribution $p(c|I)$ of the labels given the features.

Given these definitions, we can now define the discriminative relaxation procedure for converting a generative classifier into a discriminative one. Starting with the standard learning objective for a generative classifier, we will employ a series of transformations and relaxations to obtain the learning objective for a discriminative classifier.

[Figure 4 diagram: panel (A) shows the generative graphical model with nodes $I$, $c$, $g$ and parameters $\pi_{cg}$, $\lambda_{cg}$; panel (B) shows the relaxed model with parameters $\theta$, $\tilde{\theta} \equiv \theta_{\text{world}}$ and $\eta \equiv \eta_{\text{brain}}$, linked by $\rho(\cdot)$, with the relaxed constraints marked by X.]

Figure 4: Graphical depiction of discriminative relaxation procedure. (A) The Rendering Model (RM) is depicted graphically, with mixing probability parameters $\pi_{cg}$ and rendered template parameters $\lambda_{cg}$. Intuitively, we can interpret the discriminative relaxation as a brain-world transformation applied to a generative model. According to this interpretation, instead of the world generating images and class labels (A), we instead imagine the world generating images $I_n$ via the rendering parameters $\tilde{\theta} \equiv \theta_{\text{world}}$ while the brain generates labels $c_n, g_n$ via the classifier parameters $\eta_{\text{dis}} \equiv \eta_{\text{brain}}$ (B). The brain-world transformation converts the RM (A) to an equivalent graphical model (B), where an extra set of parameters $\tilde{\theta}$ and constraints (arrows from $\theta$ to $\tilde{\theta}$ to $\eta$) have been introduced. Discriminatively relaxing these constraints (B, red X's) yields the single-layer DCN as the discriminative counterpart to the original generative RM classifier in (A).
Mathematically, we have

$$
\begin{aligned}
\max_{\theta} \mathcal{L}_{\text{gen}}(\theta) &\equiv \max_{\theta} \sum_n \ln p(c_n, I_n | \theta) \\
&\overset{(a)}{=} \max_{\theta} \sum_n \ln p(c_n | I_n, \theta) + \ln p(I_n | \theta) \\
&\overset{(b)}{=} \max_{\theta, \tilde{\theta} : \theta = \tilde{\theta}} \sum_n \ln p(c_n | I_n, \theta) + \ln p(I_n | \tilde{\theta}) \\
&\overset{(c)}{\leq} \max_{\theta} \underbrace{\sum_n \ln p(c_n | I_n, \theta)}_{\equiv\, \mathcal{L}_{\text{cond}}(\theta)} \\
&\overset{(d)}{=} \max_{\eta : \eta = \rho(\theta)} \sum_n \ln p(c_n | I_n, \eta) \\
&\overset{(e)}{\leq} \max_{\eta} \underbrace{\sum_n \ln p(c_n | I_n, \eta)}_{\equiv\, \mathcal{L}_{\text{dis}}(\eta)},
\end{aligned}
\tag{44}
$$

where the $\mathcal{L}$'s are the generative, conditional and discriminative log-likelihoods, respectively. In line (a), we used the Chain Rule of Probability. In line (b), we introduced an extra set of parameters $\tilde{\theta}$ while also introducing a constraint that enforces equality with the old set of generative parameters $\theta$. In line (c), we relax the equality constraint (first introduced by Bishop, Lasserre and Minka in [4]), allowing the classifier parameters $\theta$ to differ from the image generation parameters $\tilde{\theta}$. In line (d), we pass to the natural parametrization of the exponential family distribution $I|c$, where the natural parameters $\eta = \rho(\theta)$ are a fixed function of the conventional parameters $\theta$. This constraint on the natural parameters ensures that optimization of $\mathcal{L}_{\text{cond}}(\eta)$ yields the same answer as optimization of $\mathcal{L}_{\text{cond}}(\theta)$. And finally, in line (e) we relax the natural parameter constraint to get the learning objective for a discriminative classifier, where the parameters $\eta$ are now free to be optimized. A graphical model depiction of this process is shown in Fig. 4.

In summary, starting with a generative classifier with learning objective $\mathcal{L}_{\text{gen}}(\theta)$, we complete steps (a) through (e) to arrive at a discriminative classifier with learning objective $\mathcal{L}_{\text{dis}}(\eta)$. We refer to this process as a discriminative relaxation of a generative classifier, and the resulting classifier is a discriminative counterpart to the generative classifier.
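Step (d), the passage to natural parameters, can be made concrete for the simplest case. The following is a minimal sketch under stated assumptions rather than the paper's construction: it uses a Gaussian class-conditional model with shared isotropic variance and no nuisance variable, so that $\rho$ maps $\theta = (\mu_c, \pi_c, \sigma^2)$ to weights $w_c = \mu_c / \sigma^2$ and biases $b_c = \ln \pi_c - \|\mu_c\|^2 / (2\sigma^2)$. The function names and numerical values are hypothetical. With $\eta = \rho(\theta)$ held fixed, the Bayes posterior and the softmax of the linear scores should coincide; the discriminative relaxation in step (e) then simply lets the weights and biases vary freely.

```python
import math


def softmax(logits):
    top = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - top) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def generative_posterior(image, means, priors, sigma2):
    """p(c | I) via Bayes rule with p(I | c) = N(mu_c, sigma2 * identity)."""
    log_joint = [
        math.log(pi) - 0.5 * sum((x - m) ** 2 for x, m in zip(image, mu)) / sigma2
        for mu, pi in zip(means, priors)
    ]
    return softmax(log_joint)  # the shared Gaussian normalizer cancels


def rho(means, priors, sigma2):
    """Map conventional parameters theta to natural parameters eta = (w, b)."""
    weights = [[m / sigma2 for m in mu] for mu in means]
    biases = [
        math.log(pi) - 0.5 * sum(m * m for m in mu) / sigma2
        for mu, pi in zip(means, priors)
    ]
    return weights, biases


def discriminative_posterior(image, weights, biases):
    """p(c | I; eta) as a softmax over linear scores <w_c, I> + b_c."""
    logits = [
        sum(w * x for w, x in zip(wc, image)) + bc
        for wc, bc in zip(weights, biases)
    ]
    return softmax(logits)


# Hypothetical three-class model on three "pixels".
means = [[1.0, 0.0, -1.0], [0.5, 2.0, 0.0], [-1.0, -1.0, 1.5]]
priors = [0.2, 0.5, 0.3]
sigma2 = 0.7
image = [0.3, 0.9, -0.4]

p_gen = generative_posterior(image, means, priors, sigma2)
p_dis = discriminative_posterior(image, *rho(means, priors, sigma2))
print("posteriors agree:",
      all(math.isclose(a, b, rel_tol=1e-9) for a, b in zip(p_gen, p_dis)))
```

The two posteriors differ only through the logit offset $-\|I\|^2 / (2\sigma^2)$, which is common to all classes and cancels in the softmax.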
Figure 5: Results of activity maximization on the ImageNet dataset [26]. For a given class $c$, activity-maximizing inputs are superpositions of various poses of the object, with distinct patches $P_i$ containing distinct poses $g^*_{P_i}$, as predicted by Eq. 46. Figure adapted with permission from the authors. (Panels show the classes dumbbell, cup, dalmatian, bell pepper, lemon, husky, washing machine, computer keyboard, kit fox, goose, limousine and ostrich; the embedded source caption reads: "Numerically computed images, illustrating the class appearance models, learnt by a ConvNet, trained on ILSVRC-2013. Note how different aspects of class appearance are captured in a single image.")

E Derivation of Closed-Form Expression for Activity-Maximizing Images

Results of running activity maximization are shown in Fig. 5 for completeness. Mathematically, we seek the image $I$ that maximizes the score $S(c \mid I)$ of a specific object class. Using the DRM, we have

$$
\begin{aligned}
\max_{I} S(c^{(L)} \mid I) &= \max_{I} \max_{g \in \mathcal{G}} \left\langle \tfrac{1}{\sigma^2} \mu(c^{(L)}, g) \,\middle|\, I \right\rangle \\
&\propto \max_{g \in \mathcal{G}} \max_{I} \left\langle \mu(c^{(L)}, g) \,\middle|\, I \right\rangle \\
&= \max_{g \in \mathcal{G}} \max_{I_{P_1}} \cdots \max_{I_{P_p}} \Big\langle \mu(c^{(L)}, g) \,\Big|\, \sum_{P_i \in \mathcal{P}} I_{P_i} \Big\rangle \\
&= \max_{g \in \mathcal{G}} \sum_{P_i \in \mathcal{P}} \max_{I_{P_i}} \left\langle \mu(c^{(L)}, g) \,\middle|\, I_{P_i} \right\rangle \\
&= \max_{g \in \mathcal{G}} \sum_{P_i \in \mathcal{P}} \left\langle \mu(c^{(L)}, g) \,\middle|\, I^*_{P_i}(c^{(L)}, g) \right\rangle \\
&= \sum_{P_i \in \mathcal{P}} \left\langle \mu(c^{(L)}, g^*_{P_i}) \,\middle|\, I^*_{P_i}(c^{(L)}, g^*_{P_i}) \right\rangle,
\end{aligned}
\tag{45}
$$

where $I^*_{P_i}(c^{(\ell)}, g) \equiv \operatorname{argmax}_{I_{P_i}} \langle \mu(c^{(\ell)}, g) \mid I_{P_i} \rangle$ and $g^*_{P_i} = g^*(c^{(\ell)}, P_i) \equiv \operatorname{argmax}_{g \in \mathcal{G}} \langle \mu(c^{(\ell)}, g) \mid I^*_{P_i}(c^{(\ell)}, g) \rangle$. In the third line, the image $I$ is decomposed into $P$ patches $I_{P_i}$ of the same size as $I$, with all pixels outside of the patch $P_i$ set to zero. The $\max_{g \in \mathcal{G}}$ operator finds the most probable $g^*_{P_i}$ within each patch. The solution $I^*$ of the activity maximization is then the sum of the individual activity-maximizing patches

$$
I^* \equiv \sum_{P_i \in \mathcal{P}} I^*_{P_i}(c^{(\ell)}, g^*_{P_i}) \propto \sum_{P_i \in \mathcal{P}} \mu(c^{(\ell)}, g^*_{P_i}).
\tag{46}
$$

F From the DRMM to Decision Trees

In this section we show that, like DCNs, Random Decision Forests (RDFs) can also be derived from the DRMM. Instead of translational and switching nuisances, we will show that an additive mutation nuisance process that generates a hierarchy of categories (e.g., evolution of a taxonomy of living organisms) is at the heart of the RDF.

F.1 The Evolutionary Deep Rendering Mixture Model

We define the Evolutionary DRMM (E-DRMM) as a DRMM with an evolutionary tree of categories. Samples from the model are generated by starting from the root ancestor template and randomly mutating the templates. Each child template is an additive "mutation" of its parent, where the specific mutation does not depend on the parent (see Eq. 47 below). At the leaves of the tree, a sample is generated by adding Gaussian pixel noise. As in the DRMM, given $c^{(L)} \sim \mathrm{Cat}(\pi_{c^{(L)}})$ and $g^{(\ell+1)} \sim \mathrm{Cat}(\pi_{g^{(\ell+1)}})$, with $c^{(L)} \in \mathcal{C}^L$ and $g^{(\ell+1)} \in \mathcal{G}^{\ell+1}$ where $\ell = 1, 2, \cdots, L$, the template $\mu_{c^{(L)} g}$ and the image $I$ are rendered as

$$
\begin{aligned}
\mu_{c^{(L)} g} &= \Lambda_g \mu_{c^{(L)}} \equiv \Lambda_{g^{(1)}} \cdots \Lambda_{g^{(L)}} \cdot \mu_{c^{(L)}} \equiv \mu_{c^{(L)}} + \alpha_{g^{(L)}} + \cdots + \alpha_{g^{(1)}}, \qquad g = \{ g^{(\ell)} \}_{\ell=1}^{L} \\
I &\sim \mathcal{N}(\mu_{c^{(L)} g}, \sigma^2 \mathbf{1}_D) \in \mathbb{R}^D.
\end{aligned}
$$

Here, $\Lambda_{g^{(\ell)}}$ has a special structure due to the additive mutation process: $\Lambda_{g^{(\ell)}} = [\mathbf{1} \mid \alpha_{g^{(\ell)}}]$, where $\mathbf{1}$ is the identity matrix. The rendering path represents template evolution and is defined as the sequence $(c^{(L)}, g^{(L)}, \ldots, g^{(\ell)}, \ldots, g^{(1)})$ from the root ancestor template down to the individual pixels at $\ell = 0$. $\mu_{c^{(L)}}$ is an abstract template for the root ancestor $c^{(L)}$, and $\sum_{\ell} \alpha_{g^{(\ell)}}$ represents the sequence of local nuisance transformations, in this case, the accumulation of many additive mutations.

As with the DRMM, we can cast the E-DRMM into an incremental form by defining an intermediate class $c^{(\ell)} \equiv (c^{(L)}, g^{(L)}, \ldots, g^{(\ell+1)})$ that intuitively represents a partial evolutionary path up to level $\ell$. Then, the mutation from level $\ell+1$ to $\ell$ can be written as

$$
\mu_{c^{(\ell)}} = \Lambda_{g^{(\ell+1)}} \cdot \mu_{c^{(\ell+1)}} = \mu_{c^{(\ell+1)}} + \alpha_{g^{(\ell+1)}}.
\tag{47}
$$

Here, $\alpha_{g^{(\ell)}}$ is the mutation added to the template at level $\ell$ in the evolutionary tree.

F.2 Inference with the E-DRMM Yields a Decision Tree

Since the E-DRMM is an RMM with a hierarchical prior on the rendered templates, we can use Eq. 3 to derive the E-DRMM inference algorithm for $\hat{c}^{(L)}(I)$ as:

$$
\begin{aligned}
\hat{c}^{(L)}(I) &= \operatorname*{argmax}_{c^{(L)} \in \mathcal{C}^L} \max_{g \in \mathcal{G}} \left\langle \mu_{c^{(L)}} + \alpha_{g^{(L)}} + \cdots + \alpha_{g^{(1)}} \,\middle|\, I \right\rangle \\
&= \operatorname*{argmax}_{c^{(L)} \in \mathcal{C}^L} \max_{g^{(1)} \in \mathcal{G}^1} \cdots \max_{g^{(L-1)} \in \mathcal{G}^{L-1}} \Big\langle \underbrace{\mu_{c^{(L)}} + \alpha_{g^{(L)*}}}_{\equiv \mu_{c^{(L-1)}}} + \cdots + \alpha_{g^{(1)}} \,\Big|\, I \Big\rangle \\
&\;\;\vdots \\
&\equiv \operatorname*{argmax}_{c^{(L)} \in \mathcal{C}^L} \left\langle \mu_{c^{(L)} g^*} \,\middle|\, I \right\rangle,
\end{aligned}
\tag{48}
$$

where $\mu_{c^{(\ell)}}$ has been defined in the second line. Here, we assume that the sub-trees are well-separated. In the last lines, we repeatedly use the distributivity of max over sums, resulting in the iteration

$$
g^*_{c^{(\ell+1)}} \equiv \operatorname*{argmax}_{g^{(\ell+1)} \in \mathcal{G}^{\ell+1}} \Big\langle \underbrace{\mu_{c^{(\ell+1)} g^{(\ell+1)}}}_{\equiv W^{(\ell+1)}} \,\Big|\, I \Big\rangle \equiv \mathrm{ChooseChild}(\mathrm{Filter}(I)).
\tag{49}
$$

Eqs. 48 and 49 define a Decision Tree. The leaf label histograms at the end of a decision tree play a role similar to that of the SoftMax regression layer in DCNs. Applying bagging [5] to decision trees yields a Random Decision Forest (RDF).

G Unifying the Probabilistic and Neural Network Perspectives

Table 2: Summary of probabilistic and neural network perspectives for DCNs. The DRMM provides a probabilistic interpretation for all of the common elements of DCNs relating to the underlying model, inference algorithm, and learning rules.

| Aspect | Neural Nets Perspective (Deep Convolutional Neural Networks) | Probabilistic Perspective (Deep Rendering Model) |
|---|---|---|
| Model | Weights and biases of filters at a given layer | Partial Rendering at a given abstraction level/scale |
| | Number of Layers | Number of Abstraction Levels |
| | Number of Filters in a layer | Number of Clusters/Classes at a given abstraction level |
| | Implicit in network weights; can be computed by product of weights over all layers or by activity maximization | Category prototypes are finely detailed versions of coarser-scale super-category prototypes. Fine details are modeled with affine nuisance transformations. |
| Inference | Forward propagation through DCN | Exact bottom-up inference via Max-Sum Message Passing (with Max-Product for Nuisance Factorization) |
| | Input and Output Feature Maps | Probabilistic Max-Sum Messages (real-valued functions of variable nodes) |
| | Template matching at a given layer (convolutional, locally or fully connected) | Local computation at factor node (log-likelihood of measurements) |
| | Max-Pooling over local pooling region | Max-Marginalization over Latent Translational Nuisance transformations |
| | Rectified Linear Unit (ReLU). Sparsifies output activations. | Max-Marginalization over Latent Switching state of Renderer. Low prior probability of being ON. |
| Learning | Stochastic Gradient Descent | Batch Discriminative EM Algorithm with Fine-to-Coarse E-step + Gradient M-step. No coarse-to-fine pass in E-step. |
| | N/A | Full EM Algorithm |
| | Batch-Normalized SGD | Discriminative Approximation to Full EM (assumes Diagonal Pixel Covariance) |

H Additional Experimental Results

H.1 Learned Filters and Image Reconstructions

Filters and reconstructed images are shown in Fig. 6.

Figure 6: (Left) Filters learned from 60,000 unlabeled MNIST samples and (Right) reconstructed images from the Shallow Rendering Mixture Model.

H.2 Additional Training Results

More results and comparisons to other related work are given in Table 3.

I Model Configurations

In our experiments, configurations of the RFM and 2-layer DRFM are similar to LeNet5 [11] and its variants.
Also, configurations of the 5-layer DRMM (for MNIST) and the 9-layer DRMM (for CIFAR10) are similar to Conv-Small and Conv-Large architectures in [29, 20], respectively.

Table 3: Test error (%) for supervised, unsupervised and semi-supervised training on MNIST using $N_U = 60$K unlabeled images and $N_L \in \{100, 600, 1\text{K}, 3\text{K}, 60\text{K}\}$ labeled images.

| Model | $N_L = 100$ | $N_L = 600$ | $N_L = 1$K | $N_L = 3$K | $N_L = 60$K |
|---|---|---|---|---|---|
| RFM sup | - | - | - | - | 1.21 |
| Convnet 1-layer sup | - | - | - | - | 1.30 |
| DRMM 5-layer sup | - | - | - | - | 0.89 |
| Convnet 5-layer sup | - | - | - | - | 0.81 |
| RFM unsup-pretr | 16.2 | 5.65 | 4.64 | 2.95 | 1.17 |
| DRMM 5-layer unsup-pretr | 12.03 | 3.61 | 2.73 | 1.68 | 0.58 |
| SWWAE unsup-pretr [34] | - | 9.80 | 6.135 | 4.41 | - |
| RFM semi-sup | 14.47 | 5.61 | 4.67 | 2.96 | 1.27 |
| DRMM 5-layer semi-sup | 3.50 | 1.56 | 1.67 | 0.91 | 0.51 |
| Convnet [11] | 22.98 | 7.86 | 6.45 | 3.35 | - |
| TSVM [33] | 16.81 | 6.16 | 5.38 | 3.45 | - |
| CAE [22] | 13.47 | 6.3 | 4.77 | 3.22 | - |
| MTC [21] | 12.03 | 5.13 | 3.64 | 2.57 | - |
| PL-DAE [12] | 10.49 | 5.03 | 3.46 | 2.69 | - |
| WTA-AE [14] | - | 2.37 | 1.92 | - | - |
| SWWAE no dropout [34] | 9.17 ± 0.11 | 4.16 ± 0.11 | 3.39 ± 0.01 | 2.50 ± 0.01 | - |
| SWWAE with dropout [34] | 8.71 ± 0.34 | 3.31 ± 0.40 | 2.83 ± 0.10 | 2.10 ± 0.22 | - |
| M1+TSVM [8] | 11.82 ± 0.25 | 5.72 | 4.24 | 3.49 | - |
| M1+M2 [8] | 3.33 ± 0.14 | 2.59 ± 0.05 | 2.40 ± 0.02 | 2.18 ± 0.04 | - |
| Skip Deep Generative Model [13] | 1.32 | - | - | - | - |
| LadderNetwork [20] | 1.06 ± 0.37 | - | 0.84 ± 0.08 | - | - |
| Auxiliary Deep Generative Model [13] | 0.96 | - | - | - | - |
| ImprovedGAN [24] | 0.93 ± 0.065 | - | - | - | - |
| catGAN [28] | 1.39 ± 0.28 | - | - | - | - |

Table 4: Test error rates (%) of the 2-layer DRMM and 9-layer DRMM trained with semi-supervised EG, compared with other best published results on CIFAR10 using $N_U = 50$K unlabeled images and $N_L \in \{4\text{K}, 50\text{K}\}$ labeled images.

| Model | $N_L = 4$K | $N_L = 50$K |
|---|---|---|
| Convnet [11] | 43.90 | 27.17 |
| Conv-Large [29] | - | 9.27 |
| CatGAN [28] | 19.58 ± 0.46 | 9.38 |
| ImprovedGAN [24] | 18.63 ± 2.32 | - |
| LadderNetwork [20] | 20.40 ± 0.47 | - |
| DRMM 2-layer | 39.2 | 24.60 |
| DRMM 9-layer | 23.24 | 11.37 |

Table 5: Comparison of RFM, 2-layer DRMM and 5-layer DRMM against Stacked What-Where Auto-encoders with various regularization approaches on the MNIST dataset. $N$ is the number of labeled images used, and no extra unlabeled images are used.

| Model | $N = 100$ | $N = 600$ | $N = 1$K | $N = 3$K |
|---|---|---|---|---|
| SWWAE (3 layers) [34] | 10.66 ± 0.55 | 4.35 ± 0.30 | 3.17 ± 0.17 | 2.13 ± 0.10 |
| SWWAE (3 layers) + dropout on convolution [34] | 14.23 ± 0.94 | 4.70 ± 0.38 | 3.37 ± 0.11 | 2.08 ± 0.10 |
| SWWAE (3 layers) + L1 [34] | 10.91 ± 0.29 | 4.61 ± 0.28 | 3.55 ± 0.31 | 2.67 ± 0.25 |
| SWWAE (3 layers) + noL2M [34] | 12.41 ± 1.95 | 4.63 ± 0.24 | 3.15 ± 0.22 | 2.08 ± 0.18 |
| Convnet (1 layer) | 18.33 | 10.36 | 8.07 | 4.47 |
| RFM (1 layer) | 22.68 | 6.51 | 4.66 | 3.55 |
| DRMM 2-layer | 12.56 | 6.50 | 4.75 | 2.66 |
| DRMM 5-layer | 11.97 | 3.70 | 2.72 | 1.60 |
