Improving the Performance of Neural Networks in Regression Tasks Using Drawering

The method presented extends a given regression neural network to make its performance improve. The modification affects the learning procedure only, hence the extension may be easily omitted during evaluation without any change in prediction. It mea…

Authors: Konrad Zolna

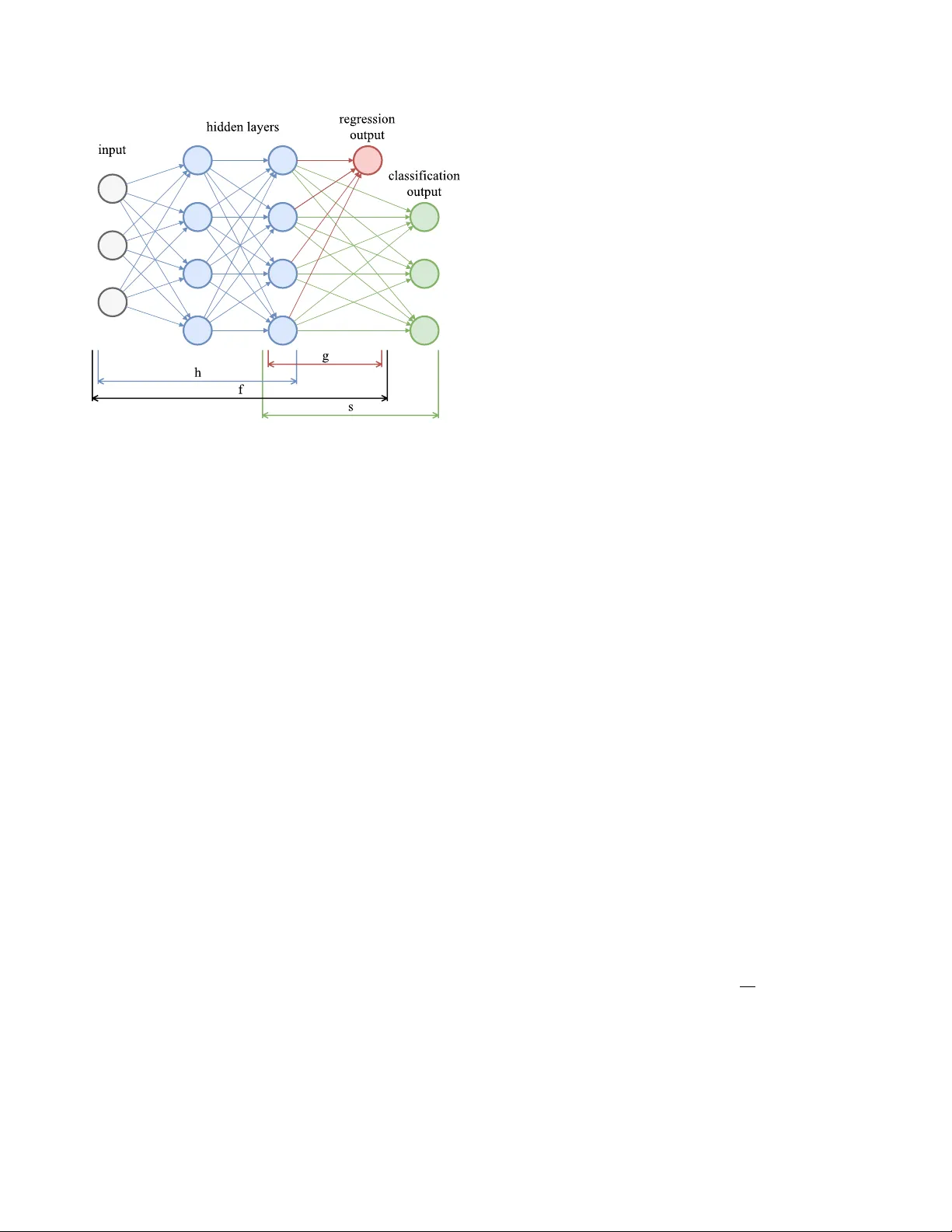

Konrad Żołna
Jagiellonian University, Cracow, Poland; RTB House, Warsaw, Poland
Email: konrad.zolna@im.uj.edu.pl, konrad.zolna@rtbhouse.com

Abstract. The method presented extends a given regression neural network to improve its performance. The modification affects the learning procedure only, hence the extension may be easily omitted during evaluation without any change in prediction. It means that the modified model may be evaluated as quickly as the original one but tends to perform better. This improvement is possible because the modification gives better expressive power, provides better behaved gradients and works as a regularization. The knowledge gained by the temporarily extended neural network is contained in the parameters shared with the original neural network. The only cost is an increase in learning time.

1. Introduction

Neural networks, and especially deep learning architectures, have become more and more popular recently [1]. We believe that deep neural networks are the most powerful tools in a majority of classification problems (as in the case of image classification [2]). Unfortunately, the use of neural networks in regression tasks is limited, and it has recently been shown that a softmax distribution of clustered values tends to work better, even when the target is continuous [3]. In some cases seemingly continuous values may be understood as categorical ones (e.g. image pixel intensities) and the transformation between the types is straightforward [4]. However, sometimes this transformation cannot be simply incorporated (as in the case when targets span a huge set of possible values). Furthermore, forcing a neural network to predict multiple targets instead of just a single one makes the evaluation slower.

We want to present a method which fulfils the following requirements:

• it gains advantage from the categorical distribution, which makes a prediction more accurate,
• it outputs a single value which is a solution to a given regression task,
• it may be evaluated as quickly as the original regression neural network.

The method proposed, called drawering, is based on temporarily extending a given neural network that solves a regression task. The modified neural network has properties which improve learning. Once training is done, the original neural network is used standalone. The knowledge from the extended neural network seems to be transferred, and the original neural network achieves better results on the regression task.

The method presented is general and may be used to enhance any given neural network which is trained to solve any regression task. It also affects only the learning procedure.

2. Main idea

2.1. Assumptions

The method presented may be applied to a regression task, hence we assume:

• the data $D$ consists of pairs $(x_i, y_i)$, where the input $x_i$ is a fixed-size real-valued vector and the target $y_i$ has a continuous value,
• the neural network architecture $f(\cdot)$ is trained to find a relation between input $x$ and target $y$, for $(x, y) \in D$,
• a loss function $L_f$ is used to assess the performance of $f(\cdot)$ by scoring $\sum_{(x,y) \in D} L_f(f(x), y)$, the lower the better.

2.2. Neural network modification

In this setup, any given neural network $f(\cdot)$ may be understood as a composition $f(\cdot) = g(h(\cdot))$, where $g(\cdot)$ is the last part of the neural network $f(\cdot)$, i.e. $g(\cdot)$ applies one matrix multiplication and optionally a non-linearity. In other words, a vector $z = h(x)$ is the value of the last hidden layer for an input $x$, and the value $g(z)$ may be written as $g(z) = \sigma(G h(x))$ for a matrix $G$ and some function $\sigma$ (one can notice that $G$ is just a vector, since the output is a single value).
The job done by $g(\cdot)$ is just to squeeze all information from the last hidden layer into one value. In simple words, the neural network $f(\cdot)$ may be divided into two parts: the core $h(\cdot)$, which performs the majority of calculations, and the tiny part $g(\cdot)$, which calculates a single value, a prediction, based on the output of $h(\cdot)$.

Figure 1. The sample extension of the function $f(\cdot)$. The function $g(\cdot)$ always squeezes the last hidden layer into one value. On the other hand, the function $s(\cdot)$ may have hidden layers, but the simplest architecture is presented.

Our main idea is to extend the neural network $f(\cdot)$. For every input $x$ the value of the last hidden layer $z = h(x)$ is duplicated and processed by two independent, parameterized functions. The first of them is $g(\cdot)$ as before and the second one is called $s(\cdot)$. The original neural network $g(h(\cdot))$ is trained to minimize the given loss function $L_f$, and the neural network $s(h(\cdot))$ is trained with a new loss function $L_s$. An example of the extension described is presented in Figure 1. For the sake of consistency the loss function $L_f$ will be called $L_g$.

Note that the functions $g(h(\cdot))$ and $s(h(\cdot))$ share parameters, because they are compositions with the same inner function $h(\cdot)$. Since the parameters of $h(\cdot)$ are shared, learning $s(h(\cdot))$ influences $g(h(\cdot))$ (and the other way around). We want to train all these functions jointly, which may be hard in general, but the function $s(\cdot)$ and the loss function $L_s$ are constructed in a special way presented below.

All real values are clustered into $n$ consecutive intervals, i.e. disjoint sets $e_1, e_2, \ldots, e_n$ such that

• $\bigcup_{i=1}^{n} e_i$ covers all real numbers,
• $r_j < r_k$ for $r_j \in e_j$, $r_k \in e_k$, when $j < k$.
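
As a rough illustration of the partition just described, the sketch below assigns a target value to one of $n$ consecutive intervals. It assumes half-open intervals that are closed on the right and a hypothetical list of cut points; the name `drawer_index` and the numbers are illustrative and are not taken from the paper.

```python
from bisect import bisect_left


def drawer_index(y, inner_boundaries):
    """Return the 0-based index of the interval that contains y.

    inner_boundaries is a sorted list [b_1, ..., b_{n-1}] that splits the
    real line into n intervals (-inf, b_1], (b_1, b_2], ..., (b_{n-1}, +inf).
    Closing the intervals on the right is an assumption of this sketch.
    """
    # bisect_left counts the boundaries strictly smaller than y, which is
    # exactly the index of the right-closed interval holding y.
    return bisect_left(inner_boundaries, y)


# Hypothetical cut points giving n = 4 intervals.
boundaries = [-1.0, 0.0, 2.5]
labels = [drawer_index(y, boundaries) for y in (-3.0, -1.0, 0.7, 2.5, 9.0)]
print(labels)  # [0, 0, 2, 2, 3]
```

Because the intervals are ordered, the resulting class label grows monotonically with the target, which is what lets the label act as a coarse description of the target's magnitude.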

The function $s(h(\cdot))$ (evaluated for an input $x$) is trained to predict which of the sets $(e_i)_{i=1}^{n}$ contains $y$ for a pair $(x, y) \in D$. The loss function $L_s$ may be defined as a non-binary (multi-class) cross-entropy loss, which is typically used in classification problems. In the simplest form the function $s(\cdot)$ may be just a multiplication by a matrix $S$ (whose first dimension is $n$).

To sum up, drawering in its basic form adds an additional, parallel layer which takes as input the value of the last hidden layer of the original neural network $f(\cdot)$. A modified (drawered) neural network is trained to predict not only the original target, but also an additional one which depicts the order of magnitude of the original target. As a result, the extended neural network simultaneously solves the regression task and a related classification problem.

One possibility to define the sets $e_i$, called drawers, is to take suitable percentiles of target values so that each $e_i$ contains roughly the same number of them.

2.3. Training functions $g(h(\cdot))$ and $s(h(\cdot))$ jointly

Training is done using gradient descent, hence it is sufficient to obtain gradients of all functions defined, i.e. $h(\cdot)$, $g(\cdot)$ and $s(\cdot)$. For a given pair $(x, y) \in D$ the forward pass for $g(h(x))$ and $s(h(x))$ is calculated (note that a majority of calculations is shared). Afterwards two backpropagations are processed.

The backpropagation for the composition $g(h(x))$ using loss function $L_g$ returns a vector which is a concatenation of two vectors $\mathrm{grad}_g$ and $\mathrm{grad}_{h,g}$, such that $\mathrm{grad}_g$ is the gradient of function $g(\cdot)$ at the point $h(x)$ and $\mathrm{grad}_{h,g}$ is the gradient of function $h(\cdot)$ at the point $x$. Similarly, the backpropagation for $s(h(x))$ using loss function $L_s$ gives two gradients $\mathrm{grad}_s$ and $\mathrm{grad}_{h,s}$ for functions $s(\cdot)$ and $h(\cdot)$, respectively.

The computed gradients of the $g(\cdot)$ and $s(\cdot)$ parameters (i.e. $\mathrm{grad}_g$ and $\mathrm{grad}_s$) can be applied as in the normal case, since each of those functions takes part in only one of the backpropagations.

Updating the parameters belonging to the $h(\cdot)$ part is more complex, because we obtain two different gradients $\mathrm{grad}_{h,g}$ and $\mathrm{grad}_{h,s}$. It is worth noting that the $h(\cdot)$ parameters are the only common parameters of the compositions $g(h(x))$ and $s(h(x))$. We want to take an average of the gradients $\mathrm{grad}_{h,g}$ and $\mathrm{grad}_{h,s}$ and apply it (update the $h(\cdot)$ parameters). Unfortunately, their orders of magnitude may be different. Therefore, taking an unweighted average may result in minimizing only one of the loss functions $L_g$ or $L_s$. To address this problem, the averages $a_g$ and $a_s$ of absolute values of both gradients are calculated. Formally, the $L^1$ norm is used to define:

$$a_g = \|\mathrm{grad}_{h,g}\|_1, \qquad a_s = \|\mathrm{grad}_{h,s}\|_1. \tag{1}$$

The values $a_g$ and $a_s$ approximately describe the impacts of the loss functions $L_g$ and $L_s$, respectively. The final vector $\mathrm{grad}_h$, which will be used as the gradient of the $h(\cdot)$ parameters in the gradient descent procedure, equals:

$$\mathrm{grad}_h = \alpha\, \mathrm{grad}_{h,g} + (1 - \alpha)\, \frac{a_g}{a_s}\, \mathrm{grad}_{h,s} \tag{2}$$

for a hyperparameter $\alpha \in (0, 1)$, typically $\alpha = 0.5$. This strategy makes updates of the $h(\cdot)$ parameters be of the same order of magnitude as in the process of learning the original neural network $f(\cdot)$ (without drawering).

One can also normalize the gradient $\mathrm{grad}_{h,g}$ instead of the gradient $\mathrm{grad}_{h,s}$, but it may need more adjustments in the hyperparameters of the learning procedure (e.g. learning rate alteration may be required).

Note that for $\alpha = 1$ the learning procedure will be identical to the original case where the function $f$ is trained using loss function $L_g$ only.

It is useful to bear in mind that both backpropagations also share a lot of calculations.
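
Equations (1) and (2) can be sketched in a few lines of plain Python. This is a minimal illustration and not the author's implementation: gradients are represented as flat lists of floats, and the names `l1_norm` and `combine_gradients`, as well as the small `eps` guard against division by zero, are assumptions introduced here.

```python
def l1_norm(vec):
    """L1 norm of a flat gradient vector, as in Eq. (1)."""
    return sum(abs(v) for v in vec)


def combine_gradients(grad_hg, grad_hs, alpha=0.5, eps=1e-12):
    """Mix the two gradients of the shared part h as in Eq. (2).

    grad_hg: gradient of the h parameters obtained from the regression loss L_g
    grad_hs: gradient of the h parameters obtained from the classification loss L_s
    eps:     hypothetical safeguard for a vanishing classification gradient
    """
    a_g = l1_norm(grad_hg)
    a_s = l1_norm(grad_hs)
    scale = a_g / (a_s + eps)  # rescales grad_hs to the magnitude of grad_hg
    return [alpha * g + (1.0 - alpha) * scale * s
            for g, s in zip(grad_hg, grad_hs)]


# Toy gradients whose magnitudes differ by about two orders of magnitude.
grad_hg = [0.2, -0.4]
grad_hs = [30.0, 10.0]
grad_h = combine_gradients(grad_hg, grad_hs, alpha=0.5)
print(grad_h)
```

In the toy example the classification gradient is first scaled down by the factor $a_g / a_s = 0.6 / 40$, so neither loss dominates the update of the shared parameters.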
In the extreme case when the ratio $\frac{a_g}{a_s}$ is known in advance, one backpropagation may be performed simultaneously for the loss function $L_g$ and the weighted loss function $L_s$. We noticed that the ratio needed is roughly constant between batch iterations, and therefore it may be calculated in the initial phase of learning. Afterwards it may be checked and updated from time to time.

In this section we slightly abused the notation: a value of a gradient at a given point is called just a gradient, since it is obvious what point is considered.

2.4. Defining drawers

2.4.1. Regular and uneven. We mentioned in subsection 2.2 that the simplest way of defining drawers is to take intervals whose endpoints are suitable percentiles that distribute target values uniformly. In this case $n$ regular drawers are defined in the following way:

$$e_i = (q_{i-1,n},\; q_{i,n}] \tag{3}$$

where $q_{i,n}$ is the $\frac{i}{n}$-quantile of targets $y$ from the training set (the values $q_{0,n}$ and $q_{n,n}$ are defined as minus infinity and plus infinity, respectively).

This way of defining drawers makes each interval $e_i$ contain approximately the same number of target values.

However, we noticed that an alternative way of defining the $e_i$'s, which tends to support the classical mean square error (MSE) loss better, may be proposed. The MSE loss penalizes more when the difference between the given target and the prediction is larger. To address this problem, drawers may be defined in a way which encourages the learning procedure to focus on extreme values. Drawers should group the middle values in bigger clusters while placing extreme values in smaller ones. The definition of $2n$ uneven drawers is as follows:

$$e_i = (q_{1,\,2^{n-i+2}},\; q_{2,\,2^{n-i+2}}], \quad \text{for } i \le n,$$
$$e_i = (q_{2^{i-n+1}-2,\,2^{i-n+1}},\; q_{2^{i-n+1}-1,\,2^{i-n+1}}], \quad \text{for } i > n. \tag{4}$$

In this case every drawer $e_{i+1}$ contains approximately two times more target values than drawer $e_i$ for $i < n$.
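
The quantile levels behind Equations (3) and (4) can be tabulated with exact fractions. The sketch below is illustrative only; the function names are hypothetical. Read literally, Equation (4) leaves the two tails of the distribution (below the $\frac{1}{2^{n+1}}$-quantile and above the $\frac{2^{n+1}-1}{2^{n+1}}$-quantile) unassigned, so the sketch assumes that the outermost drawers are stretched to minus and plus infinity in order to cover all real numbers; that choice is an assumption, not something the paper states.

```python
from fractions import Fraction


def regular_levels(n):
    """Inner quantile levels i/n, i = 1..n-1, of n regular drawers (Eq. 3)."""
    return [Fraction(i, n) for i in range(1, n)]


def uneven_levels(n):
    """(lower, upper) quantile levels of the 2n uneven drawers (Eq. 4)."""
    pairs = []
    for i in range(1, 2 * n + 1):
        if i <= n:
            d = 2 ** (n - i + 2)
            pairs.append((Fraction(1, d), Fraction(2, d)))
        else:
            d = 2 ** (i - n + 1)
            pairs.append((Fraction(d - 2, d), Fraction(d - 1, d)))
    # Assumption of this sketch: the first and last drawers absorb the tails
    # (levels 0 and 1 stand for minus and plus infinity).
    pairs[0] = (Fraction(0), pairs[0][1])
    pairs[-1] = (pairs[-1][0], Fraction(1))
    return pairs


pairs = uneven_levels(3)
masses = [hi - lo for lo, hi in pairs]
print([str(m) for m in masses])  # ['1/8', '1/8', '1/4', '1/4', '1/8', '1/8']
```

With $n = 3$ the two middle drawers each hold a quarter of the targets and the drawers shrink towards both extremes. Under the tail assumption the two outermost drawers end up as large as their neighbours, so the doubling relation holds only from the second drawer on.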
Finally, both $e_n$ and $e_{n+1}$ contain the maximum of 25% of all target values. Similarly to the ascending intervals in the first half, the $e_i$ are descending for $i > n$, i.e. they contain fewer and fewer target values.

The number of drawers $n$ is a hyperparameter. The bigger $n$, the more complex a distribution may be modeled. On the other hand, each drawer has to contain enough representatives among targets from the training set. In our experiments each drawer contained at least 500 target values.

2.4.2. Disjoint and nested. We observed that sometimes it may be better to train $s(h(\cdot))$ to predict whether the target is in a set $f_j$, where $f_j = \bigcup_{i=j}^{n} e_i$. In this case $s(h(\cdot))$ has to answer a simpler question: "Is the target higher than a given value?" instead of bounding the target value from both sides. Of course, in this case $s(h(x))$ no longer solves a single-label classification problem, but every value of $s(h(x))$ may be assessed independently by a binary cross-entropy loss.

Therefore, drawers may be:

• regular or uneven,
• nested or disjoint.

These divisions are orthogonal. In all experiments described in this paper (Section 6) uneven drawers were used.

3. Logic behind our idea

We believe that drawering improves learning by providing the following properties.

• The extension $s(\cdot)$ gives additional expressive power to a given neural network. It is used to predict an additional target, but since this target is closely related to the original one, it is believed that the gained knowledge is transferred to the core of the given neural network $h(\cdot)$.
• Since categorical distributions do not assume their shape, they can model an arbitrary distribution; they are more flexible.
• We argue that classification loss functions provide better behaved gradients than regression ones. As a result, the evolution of a classification neural network is smoother during learning.
• An additional target (even a closely related one) works as a regularization, as is typical in multitask learning [5].

4. Model comparison

The effectiveness of the method presented was established by comparison. The original and drawered neural networks were trained on the same dataset, and once training was completed, the performance of the neural networks on a given test set was measured. Since drawering affects just the learning procedure, the comparison is fair.

All learning procedures depend on random initialization, hence to obtain reliable results a few learning procedures in both setups were performed. Adam [6] was chosen for stochastic optimization.

The comparison was done on two datasets described in the following section. The results are described in Section 6.

5. Data

The method presented was tested on two datasets.

5.1. Rossmann Store Sales

The first dataset is public and was used during the Rossmann Store Sales competition on the well-known platform kaggle.com. The official description starts as follows:

"Rossmann operates over 3,000 drug stores in 7 European countries. Currently, Rossmann store managers are tasked with predicting their daily sales for up to six weeks in advance. Store sales are influenced by many factors, including promotions, competition, school and state holidays, seasonality, and locality. With thousands of individual managers predicting sales based on their unique circumstances, the accuracy of results can be quite varied."

The dataset contains mainly categorical features, like information about state holidays, an indicator whether a store is running a promotion on a given day, etc.

Since we needed ground truth labels, only the train part of the dataset was used (in kaggle.com notation). We split this data into a new training set, validation set and test set by time. The training set (648k records) consists of all observations before the year 2015.
The validation set (112k records) contains all observations from January, February, March and April 2015. Finally, the test set (84k records) covers the rest of the observations from the year 2015.

In our version of this task the target $y$ is the normalized logarithm of the turnover for a given day. The logarithm was used since the turnovers are exponentially distributed. An input $x$ consists of all information provided in the original dataset except for Promo2-related information. A day and a month were extracted from a given date (a year was ignored).

The biggest challenge linked with this dataset is not to overfit the trained model, because the dataset size is relatively small and encoding layers have to be used to cope with categorical variables. Differences between scores on the train, validation and test sets were significant and seemed to grow during learning. We believe that drawering prevents overfitting, i.e. it works as a regularization in this case.

5.2. Conversion value task

This private dataset depicts the conversion value task, i.e. a regression problem where one wants to predict the value of the next item bought by a given customer who clicked a displayed ad.

The dataset describes states of customers at the time of impressions. The state (input $x$) is a vector of mainly continuous features, like the price of the last item seen, the value of the previous purchase, the number of items in the basket, etc. The target $y$ is the price of the next item bought by the given user. The price is always positive, since only users who clicked an ad and converted are incorporated into the dataset.

The dataset was split into a training set (2.1 million records) and a validation set (0.9 million observations). Initially there was also a test set extracted from the validation set, but it turned out that scores on the validation and test sets are almost identical.

We believe that the biggest challenge while working on the conversion value task is to tame gradients which vary a lot.
That is to say, for two pairs $(x_1, y_1)$ and $(x_2, y_2)$ from the dataset, the inputs $x_1$ and $x_2$ may be close to each other or even identical, but the targets $y_1$ and $y_2$ may not even have the same order of magnitude. As a result, gradients may remain relatively high even during the last phase of learning, and the model may tend to predict the last encountered target ($y_1$ or $y_2$) instead of predicting an average of them. We argue that drawering helps to find general patterns by providing better behaved gradients.

6. Experiments

In this section the results of the comparisons described in Section 4 are presented.

6.1. Rossmann Store Sales

In this case the original neural network $f(\cdot)$ takes an input which is produced from 14 values: 12 categorical and 2 continuous ones. Each categorical value is encoded into a vector of size $\min(k, 10)$, where $k$ is the number of all possible values of the given categorical variable. The minimum is applied to avoid incorporating redundancy. Both continuous features are normalized. The concatenation of all encoded features and the two continuous variables produces the input vector $x$ of size 75.

The neural network $f(\cdot)$ has a sequential form and is defined as follows:

• an input is processed by $h(\cdot)$, which is as follows:
  - Linear(75, 64),
  - ReLU,
  - Linear(64, 128),
  - ReLU,
• afterwards the output of $h(\cdot)$ is fed to a simple function $g(\cdot)$, which is just a Linear(128, 1).

The drawered neural network with incorporated $s(\cdot)$ is as follows:

• as in the original $f(\cdot)$, the same $h(\cdot)$ processes an input,
• the output of $h(\cdot)$ is duplicated and processed independently by $g(\cdot)$, which is the same as in the original $f(\cdot)$, and by $s(\cdot)$, which is as follows:
  - Linear(128, 1024),
  - ReLU,
  - Dropout(0.5),
  - Linear(1024, 19),
  - Sigmoid.
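
The layer lists above make it possible to count trainable parameters directly. The sketch below assumes that every Linear layer carries a bias vector (the torch default; the paper does not say) and ignores the categorical encoding layers, whose sizes are not given. The helper name `linear_params` is hypothetical.

```python
def linear_params(n_in, n_out):
    """Parameters of a Linear(n_in, n_out) layer: weight matrix plus bias.

    Including the bias is an assumption of this sketch.
    """
    return n_in * n_out + n_out


# Core h: Linear(75, 64) -> ReLU -> Linear(64, 128) -> ReLU
h_params = linear_params(75, 64) + linear_params(64, 128)
# Regression head g: Linear(128, 1)
g_params = linear_params(128, 1)
# Extension s: Linear(128, 1024) -> ReLU -> Dropout -> Linear(1024, 19) -> Sigmoid
s_params = linear_params(128, 1024) + linear_params(1024, 19)

print(h_params, g_params, s_params)  # 13184 129 151571
```

Under these assumptions the extension $s(\cdot)$ alone holds on the order of 150k parameters, more than ten times as many as $h(\cdot)$ and $g(\cdot)$ together, yet none of them is needed once training is finished.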

The torch notation was used here:

• Linear(a, b) is a linear transformation of a vector of size a into a vector of size b,
• ReLU is the rectifier function applied pointwise,
• Sigmoid is the sigmoid function applied pointwise,
• Dropout is a dropout layer [7].

The drawered neural network has roughly 150k more parameters. It is a huge advantage, but these additional parameters are used only to calculate the new target, and the additional calculations may be skipped during evaluation. We believe that patterns found to answer the additional target, which is related to the original one, were transferred to the core part $h(\cdot)$.

We used dropout only in $s(\cdot)$, since incorporating dropout into $h(\cdot)$ causes instability in learning. While working on regression tasks we noticed that it may be a general issue and it should be investigated, but it is not in the scope of this paper.

Fifty learning procedures for both the original and the extended neural network were performed. They were stopped after fifty iterations without any progress on the validation set (and at least one hundred iterations in total). The iteration of the model which performed best on the validation set was chosen and evaluated on the test set. The loss function used was a classic square error loss.

The minimal error on the test set achieved by the drawered neural network is 4.481, which is 7.5% better than the best original neural network. The difference between the averages of the Top5 scores is also around 7.5% in favor of drawering. While analyzing the average of all fifty models per method, the difference seems to be blurred. It is caused by the fact that a few learning procedures overfitted too much and achieved unsatisfying results. But even in this case the average for drawered neural networks is about 3.8% better. All these scores with standard deviations are shown in Table 1.

Table 1. Rossmann Store Sales scores

| Model | Min | Top5 mean | Top5 std | All mean | All std |
|---|---|---|---|---|---|
| Original | 4.847 | 4.930 | 0.113 | 5.437 | 0.259 |
| Extended | 4.481 | 4.558 | 0.095 | 5.232 | 0.331 |

We have to note that extending $h(\cdot)$ by an additional 150k parameters may result in even better performance, but it would drastically slow down evaluation. However, we noticed that simple extensions of the original neural network $f(\cdot)$ tend to overfit and did not achieve better results.

The train errors may also be investigated. In this case the original neural network performs better, which supports our thesis that drawering works as a regularization. Detailed results are presented in Table 2.

Table 2. Rossmann Store Sales scores on training set

| Model | Min | Top5 mean | Top5 std | All mean | All std |
|---|---|---|---|---|---|
| Original | 3.484 | 3.571 | 0.059 | 3.494 | 0.009 |
| Extended | 3.555 | 3.655 | 0.049 | 3.561 | 0.012 |

Figure 2. Sample evolutions of scores on validation set during learning procedures. (Plotted series: Nested, Disjoint and Original, best and worst run of each; vertical axis ticks from 0.835 to 0.865.)

6.2. Conversion value task

This dataset provides detailed user descriptions which consist of 6 categorical features and more than 400 continuous ones. After encoding, the original neural network $f(\cdot)$ takes an input vector of size 700. The core part $h(\cdot)$ is a neural network with 3 layers that outputs a vector of size 200. The function $g(\cdot)$ and the extension $s(\cdot)$ are simple: Linear(200, 1) and Linear(200, 21), respectively.

In the case of the conversion value task we do not provide a detailed model description, since the dataset is private and this experiment cannot be reproduced.
However, we decided to include this comparison in the paper because two versions of drawers were tested on this dataset (disjoint and nested). We also want to point out that we invented the drawering method while working on this dataset and afterwards decided to check the method on public data. We were unable to achieve superior results without drawering. Therefore, we believe that the work done on this dataset (despite its privacy) should be presented.

To obtain more reliable results, ten learning procedures were performed for each setup:

• Original: the original neural network $f(\cdot)$,
• Disjoint: the drawered neural network for disjoint drawers,
• Nested: the drawered neural network for nested drawers.

In Figure 2 six learning curves are shown. For each of the three setups the best and the worst ones were chosen. It means that the minima of the other eight runs lie between the representatives shown. The first 50 iterations were skipped to make the figure more readable. Each learning procedure was finished after 30 iterations without any progress on the validation set.

It may be easily inferred that all twenty drawered neural networks performed significantly better than neural networks trained without the extension. The difference between the Disjoint and Nested versions is also noticeable, and Disjoint drawers tend to perform slightly better. In the Rossmann Store Sales case we experienced the opposite, hence the version of drawers may be understood as a hyperparameter. We suppose that it may be related to the size of a given dataset.

Figure 3. Sample values of $s(h(x))$ for a randomly chosen model solving the Rossmann Store Sales problem (nested drawers). (Horizontal axis ticks 1 to 19; vertical axis from 0.0 to 1.0; legend labels 4, 8, 9, 10, 11, 12.)

7. Analysis of $s(h(x))$ values

Values of $s(h(x))$ may be analyzed.
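
As a reminder of the nested encoding from subsection 2.4.2 on which this analysis relies, the sketch below builds the binary targets for the sets $f_j$ and scores a vector of sigmoid outputs with binary cross-entropy. It is an illustration under stated assumptions: the function names, the averaging over outputs and the `eps` clipping constant are hypothetical choices, not details given in the paper.

```python
import math


def nested_targets(drawer, n_drawers):
    """Binary targets t_j = 1 if the target lies in f_j = e_j ∪ ... ∪ e_n.

    drawer is the 1-based index i of the drawer e_i containing the target.
    """
    return [1 if drawer >= j else 0 for j in range(1, n_drawers + 1)]


def binary_cross_entropy(probs, targets, eps=1e-12):
    """Mean binary cross-entropy; each output is assessed independently."""
    total = 0.0
    for p, t in zip(probs, targets):
        total -= t * math.log(p + eps) + (1 - t) * math.log(1.0 - p + eps)
    return total / len(probs)


def is_non_increasing(values):
    """True if the outputs never grow, as expected for nested drawers."""
    return all(a >= b for a, b in zip(values, values[1:]))


targets = nested_targets(drawer=3, n_drawers=5)
print(targets)  # [1, 1, 1, 0, 0]

outputs = [0.99, 0.95, 0.80, 0.10, 0.02]  # hypothetical sigmoid outputs
print(is_non_increasing(outputs), binary_cross_entropy(outputs, targets))
```

Note that $f_1$ covers all real numbers, so its target is always 1; the informative part of the encoding is the position where the targets switch from one to zero.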
For a pair $(x, y) \in D$ the $i$-th value of the vector $s(h(x))$ is the probability that the target $y$ belongs to the drawer $f_i$. In this section we assume that drawers are nested, hence the values of $s(h(x))$ should be descending. Notice that we do not force this property by the architecture of the drawered neural network, so it is a side effect of the nested structure of drawers.

In Figure 3 a few sample distributions are shown. Each corresponding label is the ground truth ($i$ such that $e_i$ contains the target value). The values of $s(h(x))$ are clearly monotonic, as expected. It seems that $s(h(x))$ performs well: values are close to one in the beginning and to zero in the end. The switch is in the right place, close to the ground truth label, and misses by at most one drawer.

8. Conclusion

The method presented, drawering, extends a given regression neural network, which makes training more effective. The modification affects the learning procedure only, hence once a drawered model is trained, the extension may be easily omitted during evaluation without any change in prediction. It means that the modified model may be evaluated as fast as the original one but tends to perform better.

We believe that this improvement is possible because the drawered neural network has bigger expressive power, is provided with better behaved gradients, can model an arbitrary distribution and is regularized. It turned out that the knowledge gained by the modified neural network is contained in the parameters shared with the given neural network.

Since the only cost is an increase in learning time, we believe that in cases when better performance is more important than training time, drawering should be incorporated into a given regression neural network.

References

[1] Y. LeCun, Y. Bengio, and G. Hinton, "Deep learning," Nature, vol. 521, no. 7553, pp. 436–444, 2015.
[2] K. He, X. Zhang, S. Ren, and J. Sun, "Deep residual learning for image recognition," CoRR, vol. abs/1512.03385, 2015. [Online]. Available: http://arxiv.org/abs/1512.03385
[3] A. van den Oord, S. Dieleman, H. Zen, K. Simonyan, O. Vinyals, A. Graves, N. Kalchbrenner, A. W. Senior, and K. Kavukcuoglu, "Wavenet: A generative model for raw audio," CoRR, vol. abs/1609.03499, 2016. [Online]. Available: http://arxiv.org/abs/1609.03499
[4] A. van den Oord, N. Kalchbrenner, and K. Kavukcuoglu, "Pixel recurrent neural networks," CoRR, vol. abs/1601.06759, 2016. [Online]. Available: http://arxiv.org/abs/1601.06759
[5] S. Thrun, "Is learning the n-th thing any easier than learning the first?" Advances in Neural Information Processing Systems, pp. 640–646, 1996.
[6] D. P. Kingma and J. Ba, "Adam: A method for stochastic optimization," CoRR, vol. abs/1412.6980, 2014. [Online]. Available: http://arxiv.org/abs/1412.6980
[7] N. Srivastava, G. E. Hinton, A. Krizhevsky, I. Sutskever, and R. Salakhutdinov, "Dropout: a simple way to prevent neural networks from overfitting," Journal of Machine Learning Research, vol. 15, no. 1, pp. 1929–1958, 2014.
