Controlling False Discoveries During Interactive Data Exploration

Recent tools for interactive data exploration significantly increase the chance that users make false discoveries. The crux is that these tools implicitly allow the user to test a large number of different hypotheses with just a few clicks, thus incurri…

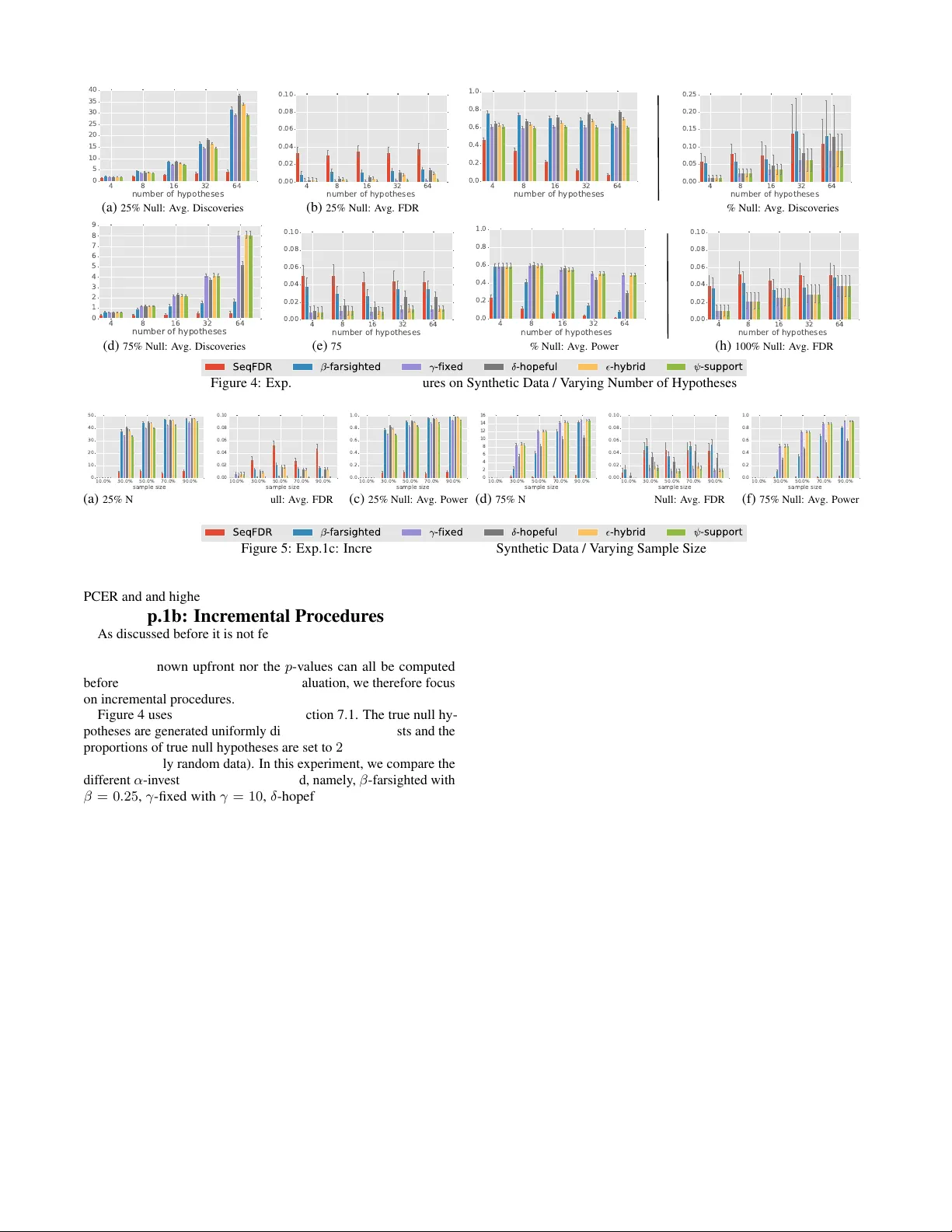

Authors: Zheguang Zhao, Lorenzo De Stefani, Emanuel Zgraggen