Transfer Learning Across Patient Variations with Hidden Parameter Markov Decision Processes

Due to physiological variation, patients diagnosed with the same condition may exhibit divergent, but related, responses to the same treatments. Hidden Parameter Markov Decision Processes (HiP-MDPs) tackle this transfer-learning problem by embedding …

Authors: Taylor Killian, George Konidaris, Finale Doshi-Velez

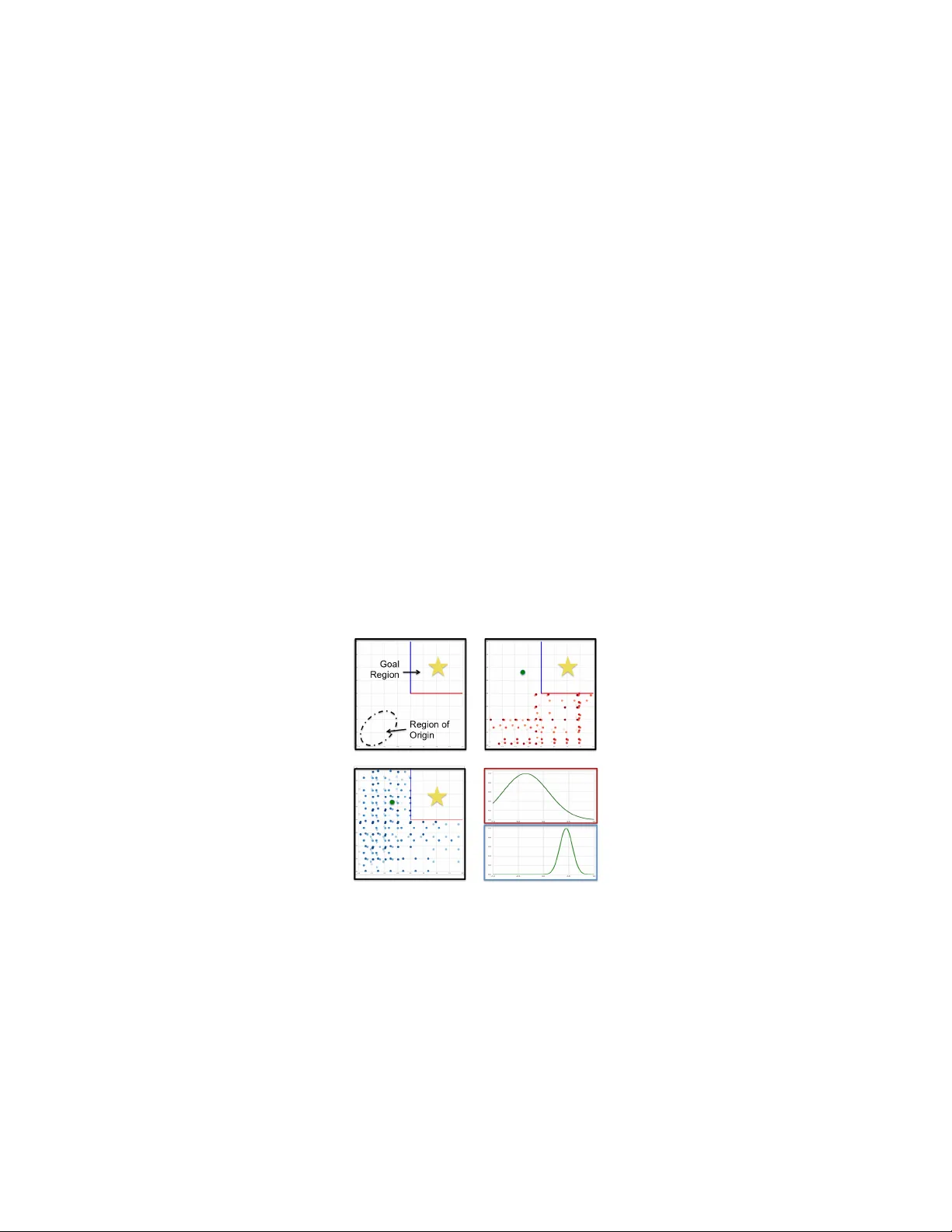

T ransfer Lear ning Acr oss Patient V ariations with Hidden Parameter Mark o v Decision Pr ocesses T aylor W . Killian Harvard Uni v ersity taylorkillian@g.harvard.edu George K onidaris Brown Uni v ersity gdk@cs.brown.edu Finale Doshi-V elez Harvard Uni v ersity finale@seas.harvard.edu Abstract Due to physiological variation, patients diagnosed with the same condition may exhibit di v ergent, b ut related, responses to the same treatments. Hidden Parameter Markov Decision Processes (HiP-MDPs) tackle this transfer -learning problem by embedding these tasks into a low-dimensional space. Ho we v er , the original formu- lation of HiP-MDP had a critical flaw: the embedding uncertainty was modelled independently of the agent’ s state uncertainty , requiring an unnatural training proce- dure in which all tasks visited e v ery part of the state space—possible for robots that can be mov ed to a particular location, impossible for human patients. W e update the HiP-MDP framework and extend it to more robustly dev elop personalized medicine strategies for HIV treatment. 1 Introduction Due to physiological variation, patients diagnosed with the same condition may exhibit di vergent, but related, responses to the same treatments. T o develop optimal treatment or control policies for a patient, it is undesirable and inef fectual to start afresh each time a new individual is cared for . Howe ver , each patient may still require a tailored treatment plan as “one-size-fits-all" treatments can introduce more risk in aggressiv e diagnoses. Ideally , an agent tasked with dev eloping an optimal health management policy would be able to le verage the similarities across separate, b ut related, instances while also customizing treatment for the individual. This paradigm of learning introduces a compelling regime for transfer learning. The Hidden Parameter Marko v Decision Process ( HiP-MDP ) [ 9 ] formalizes the transfer learning task in the following w ay: first it assumes that any task instance can be fully parameterized by a bounded number of latent parameters w . That is, we posit that the dynamics dictating a patient’ s physiological response can be expressed as T ( s 0 | s, a, w b ) for patient b . Second, we assume that the system dynamics will not change during a task and an agent would be capable of determining when a change occurs (e.g. a ne w patient). Doshi-V elez and Konidaris [9] show that the HiP-MDP can identify the dynamics of a ne w task instance and flexibly adapt to the variations present therein. Ho we ver , the original HiP-MDP formulation had a critical flaw: the embedding uncertainty of the latent parameter space was modelled independently from the agent’ s state uncertainty . This assumption required the agent to hav e the ability to visit ev ery part of the state space before identifying the v ariations present in the dynamics of the current instance. While this may be feasible in robotic systems, it is not generally av ailable to domains in healthcare. W e present an alternative HiP-MDP formulation that alle viates this issue via a Gaussian Process latent variable model ( GPL VM ). This approach creates a unified Gaussian Process ( GP ) model for both inferring the transition dynamics within a task instance but also in the transfer between task instances [ 5 ]. Steps are taken to av oid negati ve transfer by selecting the most representati ve e xamples of the prior instances with regards to the latent parameter setting. This change in the model allo ws for better uncertainty quantification and thus more rob ust and direct transfer . W e ground our approach 30th Conference on Neural Information Processing Systems (NIPS 2016), Barcelona, Spain. with recent advances in the use of GPs to approximate dynamical systems and in transfer learning as well as discuss rele v ant Reinforcement Learning (RL) applications to healthcare (Sec. 2). In Sec. 3 we formalize the adjustments to the HiP-MDP framew ork and in Sec. 5 we present the performance of the adjusted HiP-MDP on developing personalized treatment strate gies within HIV simulators. 2 Related W ork The use of RL (and machine learning, in general) in the de velopment of optimal control policies and decision making strategies in healthcare [ 22 ] is gaining significant momentum as methodologies hav e begun to adequately account for uncertainty and v ariations in the problem space. There hav e been notable ef forts made in the administration of anesthesia [ 18 ], in personalizing cancer [ 24 ] and HIV therapies [ 11 ] and in understanding the causality of macro e vents in diabetes managment [ 16 ]. Mari vate et al. [15] formalized a routine to accommodate multiple sources of uncertainty in batch RL methods to better ev aluate the effecti veness of treatments across a subpopulations of patients. W e similarly attempt to address and identify the variations across subpopulations in the dev elopment treatment policies. W e instead, attempt to account for these variations while dev eloping effecti ve treatment policies in an approximate online fashion. GPs have increasingly been used to facilitate methods of RL [ 19 , 20 ]. Recent adv ances in modeling dynamical systems with GPs hav e led to more efficient and robust formulations [ 7 , 8 ], most particularly in the approximation and simulation of dynamical s ystems. The HiP-MDP approximates the underlying dynamical system of the task through the training of a Gaussian Process dynamical model [ 6 , 27 ] where only a small portion of the true system dynamics may be observ ed as is common in partially observable Marko v Decision Processes (POMDP) [ 12 ]. In order to facilitate the transfer between task instances we embed a latent, low-dimensional parametrization of the system dynamics with the states. By virtue of the GP [ 13 , 25 ], this latent embedding allows the HiP-MDP to infer across similar task instances and provide a better prediction of the currently observ ed system. The use of GPs to facilitate the transfer of pre viously learned information to ne w instances of the same or a similar task has a rich history [ 2 , 12 , 19 ]. More recently , there hav e been advances in or ganizing ho w the GP is used to transfer , being constrained to only select previous task instances where positi ve transfer occurs [ 5 , 14 ]. This adapti ve approach to transfer learning helps to a void pre vious instances that would otherwise negati vely affect effecti ve learning in the current instance. By selecting the most rele v ant instances of a current task for transfer , learning in the current instance becomes more efficient. 3 Model The HiP-MDP is described by a tuple: { S, A, Θ , T , R, γ , P Θ } , where S and A are the sets of states s and actions a (eg. patient health state and prescribed treatment, respecti vely), and R ( s, a ) is the re ward function mapping the utility of taking action a from state s . The transition dynamics T ( s 0 | s, a, θ b ) for each task instance b depends on the v alue of the hidden parameters θ b ∈ Θ (eg. patient physiology). Where the set of all possible parameters θ b is denoted by Θ and where P Θ is the prior o ver these parameters. Finally , γ ∈ (0 , 1] is the factor by which R is discounted to e xpress how influential immediate rew ards are when learning a control policy . Thereby , the HiP-MDP describes a class of tasks; where particular instances of that class are obtained by independently sampling a parameter vector θ b ∈ Θ at the initiation of a ne w task instance b . W e assume that θ b is in variant o ver the duration of the instance, signaling distinct learning frontiers between instances when a newly dra wn θ b 0 accompanies observed additions to S and A . The HiP-MDP presented in [9] provided a transition model of the form: ( s 0 d − s d ) ∼ K X k z kad w kb f kad ( s ) + ∼ N (0 , σ 2 nad ) which sought to learn weights w kb based on the k th latent factor corresponding to task instance b , filter parameters z kad ∈ { 0 , 1 } denoting whether the k th latent parameter is rele vant in predicting dimension d when taking action a as well as task specific basis functions f kad drawn from a GP . While this formulation is expressi ve, it presents a problematic flaw when trained. Due to the independence 2 of the weights w kb from the basis functions f kad , training the HiP-MDP requires canv assing the state space S in order to infer the filter parameters z kad and learn the instance specific weights w kb for each latent parameter . W e bypass this flaw by applying a GPL VM [ 13 ] to jointly represent the dynamics and the latent weights w b corresponding to a specific task instance b . This leads to providing as input to the GP , with hyperparameters ψ , the augmented state ˜ s =: [ s | , a, w b ] | . The approximated transition model then takes the form of: s 0 d ∼ f d ( ˜ s ) + f d ∼ GP ( ψ ) w b ∼ N ( µ b , Σ b ) ∼ N (0 , σ bd ) This approach enables the HiP-MDP to flexibly infer the dynamics of a new instance by virtue of the statistical similarities found in the learned cov ariance function between observed states of the new instance and those from prior instances. Another feature of formulating the HiP-MDP after this fashion is that we are able to le verage the mar ginal log likelihood of the GP to optimize the weight distribution and thereby quantify the uncertainty [ 3 , 4 ] of the latent embedding of w b for θ b . These two features of reformulating the HiP-MDP as a GPL VM allows for more rob ust and efficient transfer . Demonstration W e demonstrate a toy e xample (see Fig. 1) of a domain where an agent is able to learn separate policies according to a hidden latent parameter . Instances inhabiting a “blue" latent parametrization can only pass through to the goal region o ver the blue boundary while those with a “red" parametrization can only cross the red boundary . After a fe w training instances, the HiP-MDP is able to separate the two latent classes and dev elops individualized policies for each. Due to the flexibility enabled by embedding the latent parametrization into the system’ s state, the GPL VM identifies which class the current instance belongs to within the first couple of training episodes. In total, this example took approximately 30 minutes to de velop optimal policies for 20 task instances. W e place an unclassified survey point in the top left quadrant to gather information about the polic y uncertainty giv en the two latent classes. Figure 1: T oy Problem: (a) Schematic outlining the domain, (b) learned policy for “red" parametriza- tion, (c) learned policy for “blue" parametrization, (d) uncertainty measure for input point according to separate latent classes. 4 Inference Parameter Learning and Updates W e deploy the HiP-MDP when the agent is provided a large amount of batch observational data from se veral task instances (e.g. patients) and tasked with quickly performing well on new instances. W ith this observational data the GP transition functions f d are learned and the individual weighting distributions for w b are optimized. Howe ver , the training of the f d requires computing in verses of matrices of size N = P b n b where n b is the number of data points collected from instance b . T o streamline the approximation of T we choose a set of support 3 points s ∗ from S b that sparsely approximate the full GP . Optimization procedures exist to select these points accurately [ 20 , 23 ], we ho wever heuristically select these points to minimize the maximum reconstruction error within each batch using simulated annealing. Control Policy A control policy is learned for each task instance b follo wing the procedure outlined in [ 7 ] where a set of tuples ( s, a, s 0 , r ) are observed and the policy is periodically updated (as is the latent embedding w b ) in an online fashion, lev eraging the approximate dynamics of T via the f ∗ d to create a synthetic batch of data from the current instance. This generated batch of data from b is then used to improv e the current policy via the Double Deep Q Network v ariant of fitted-Q using prioritized experience replay [ 17 , 21 , 26 ]. Multiple episodes are run from each instance b to optimize the policy for completing the task under the hidden parameter setting θ b . After doing so, the hyperparameters of the GP defining the f d are updated before learning for another randomly manifest task instance. 5 Experimental Results Baselines W e benchmark the HiP-MDP framew ork in the HIV domain by observing ho w an agent would perform without transfe rring information from prior patients to aid in the ef ficient dev elopment of the treatment policy for a current patient. W e do this by representing two ends of the precision medicine spectrum; a “one-size-fits-all" approach that learns a single treatment policy for all patients by using all previous patient data together and a "personally tailored" treatment plan where a single patient’ s data is all that is used to train the policy . W e represent these baselines in en vironments where a model is present (with the simulators) or absent (utilizing the GP approximation). HIV T reatment Ernst, et.al. [ 11 ] leverage the mathematical representation of how a patient re- sponds to HIV treatments [ 1 ] in dev eloping an RL approach to find effecti ve treatment policies using methods introduced in [ 10 ]. The learned treatment policies cycle on and off tw o dif ferent types of anti-retroviral medication in a sequence that maximizes long-term health. By perturbing the underlying system parameters one can simulate v aried patient physiologies. W e le verage these variations via the GPL VM augmentation to the HiP-MDP to efficiently learn treatment policies that match the nai ve “personally tailored" baseline but with reliance on much less data. The HiP-MDP also outperforms the “one-size-fits-all" baseline, as e xpected. (see Fig. 2 for representativ e results) . W e see that the GPL VM driv en HiP-MDP is capable of immediately taking advantage of the prior information from previously learned data e ven in the face of unique physiological characteristics. The rob ust and efficient manner in which the HiP-MDP achiev es such results in the HIV domain is promising and, in turn, moti vates further inquiry into a more generalized learning agent for the de velopment of other individualized medical treatment plans. Figure 2: Representati ve results of applying the GPL VM aided HiP-MDP model to the HIV treatment simulator as provided from [ 11 ]. The HiP-MDP learned treatment policy (blue) matches or improves on the naiv e baseline policy dev elopment strategies. 4 References [1] BM Adams, HT Banks, H Kwon, and HT T ran. Dynamic multidrug therapies for hiv: optimal and sti control approaches. Mathematical Biosciences and Engineering , pages 223–241, 2004. [2] EV Bonilla, KM Chai, and CK Williams. Multi-task Gaussian Process Prediction. In Advances in Neural Information Pr ocessing Systems , volume 20, pages 153–160, 2008. [3] J Quinonero Candela. Learning with uncertainty - gaussian pr ocesses and r elevance vector machines . PhD thesis, T echnical Univ ersity of Denmark, 5 2004. [4] J Quinonero Candela, A Girard, R Murray-Smith, and CE Rasmussen. Propagation of uncer - tainty in bayesian kernel models - application to multiple-step ahead time series forecasting. In Pr oceedings of the ICASSP , pages 701–704, 2003. [5] B Cao, SJ Pan, Y Zhang, D Y Y eung, and Q Y ang. Adaptive transfer learning. In AAAI , v olume 2, page 7, 2010. [6] MP Deisenroth and S Mohamed. Expectation Propagation in Gaussian Process Dynamical Systems. In Advances in Neur al Information Pr ocessing Systems , volume 25, pages 2618–2626, 2012. [7] MP Deisenroth and CE Rasmussen. Pilco: A model-based and data-efficient approach to policy search. In In Pr oceedings of the International Conference on Mac hine Learning , 2011. [8] MP Deisenroth and CE Rasmussen. Gaussian processes for data-efficient learning in robotics and control. IEEE T ransactions on P attern Analysis and Machine Intelligence , 37, February 2015. [9] F Doshi-V elez and G K onidaris. Hidden parameter markov decision processes: A semipara- metric regression approach for discov ering latent task parametrizations. CoRR , abs/1308.3513, 2013. URL . [10] D Ernst, P Geurts, and L W ehenkel. Tree-based batch mode reinforcement learning. Journal of Machine Learning Resear ch , 6(Apr):503–556, 2005. [11] D Ernst, G Stan, J Goncalv es, and L W ehenkel. Clinical data based optimal sti strategies for hi v; a reinforcement learning approach. In Pr oceedings of the 45th IEEE Confer ence on Decision and Contr ol , 2006. [12] LP Kaelbling, ML Littman, and AR Cassandra. Planning and acting in partially observable stochastic domains. Artificial intelligence , 101(1):99–134, 1998. [13] N Lawrence. Gaussian process latent v ariable models for visualisation of high dimensional data. In Advances in Neural Information Pr ocessing Systems , volume 16, pages 329–336, 2004. [14] G Leen, J Peltonen, and S Kaski. F ocused multi-task learning using g aussian processes. In J oint Eur opean Confer ence on Machine Learning and Knowledge Discovery in Databases , pages 310–325. Springer , 2011. [15] VN Mariv ate, J Chemali, E Brunskill, and M Littman. Quantifying uncertainty in batch personalized sequential decision making. In W orkshops at the T wenty-Eighth AAAI Confer ence on Artificial Intelligence , 2014. [16] CA Merck and S Kleinber g. Causal explanation under indeterminism: A sampling approach. 2015. [17] V Mnih, K Kavukcuoglu, D Silver , A Grav es, I Antonoglou, D W ierstra, and M Riedmiller . Playing atari with deep reinforcement learning. arXiv pr eprint arXiv:1312.5602 , 2013. [18] BL Moore, LD Pyeatt, V Kulkarni, P Panousis, K P adrez, and A G Doufas. Reinforcement learning for closed-loop propofol anesthesia: a study in human volunteers. J ournal of Machine Learning Resear ch , 15(1):655–696, 2014. 5 [19] CE Rasmussen and M Kuss. Gaussian processes in reinforcement learning. In Advances in Neural Information Pr ocessing Systems , volume 15, 2003. [20] CE Rasmussen and CKI Williams. Gaussian Processes for Machine Learning . MIT Press, Cambridge, 2006. [21] T om Schaul, John Quan, Ioannis Antonoglou, and David Silv er . Prioritized experience replay . arXiv pr eprint arXiv:1511.05952 , 2015. [22] SM Shortreed, E Laber , DJ Lizotte, TS Stroup, J Pineau, and SA Murphy . Informing sequential clinical decision-making through reinforcement learning: an empirical study . Machine learning , 84(1-2):109–136, 2011. [23] E Snelson and Z Ghahramani. Sparse gaussian processes using pseudo-inputs. In Advances in Neural Information Pr ocessing Systems , volume 17, pages 1257–1264, 2005. [24] M T enenbaum, A Fern, L Getoor , M Littman, V Manasinghka, S Natarajan, D Page, J Shrager , Y Singer , and P T adepalli. Personalizing cancer therapy via machine learning. [25] R Urtasun and T Darrell. Discriminative gaussian process latent v ariable model for classification. In Pr oceedings of the 24th International Confer ence on Mac hine learning , pages 927–934. A CM, 2007. [26] H V an Hasselt, A Guez, and D Silver . Deep reinforcement learning with double q-learning. [27] JM W ang, DJ Fleet, and A Hertzmann. Gaussian Process Dynamical Models. In Advances in Neural Information Pr ocessing Systems , volume 17, pages 1441–1448, 2005. 6

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment