Exploration for Multi-task Reinforcement Learning with Deep Generative Models

Exploration in multi-task reinforcement learning is critical in training agents to deduce the underlying MDP. Many of the existing exploration frameworks such as $E^3$, $R_{max}$, Thompson sampling assume a single stationary MDP and are not suitable …

Authors: Sai Praveen Bangaru, JS Suhas, Balaraman Ravindran

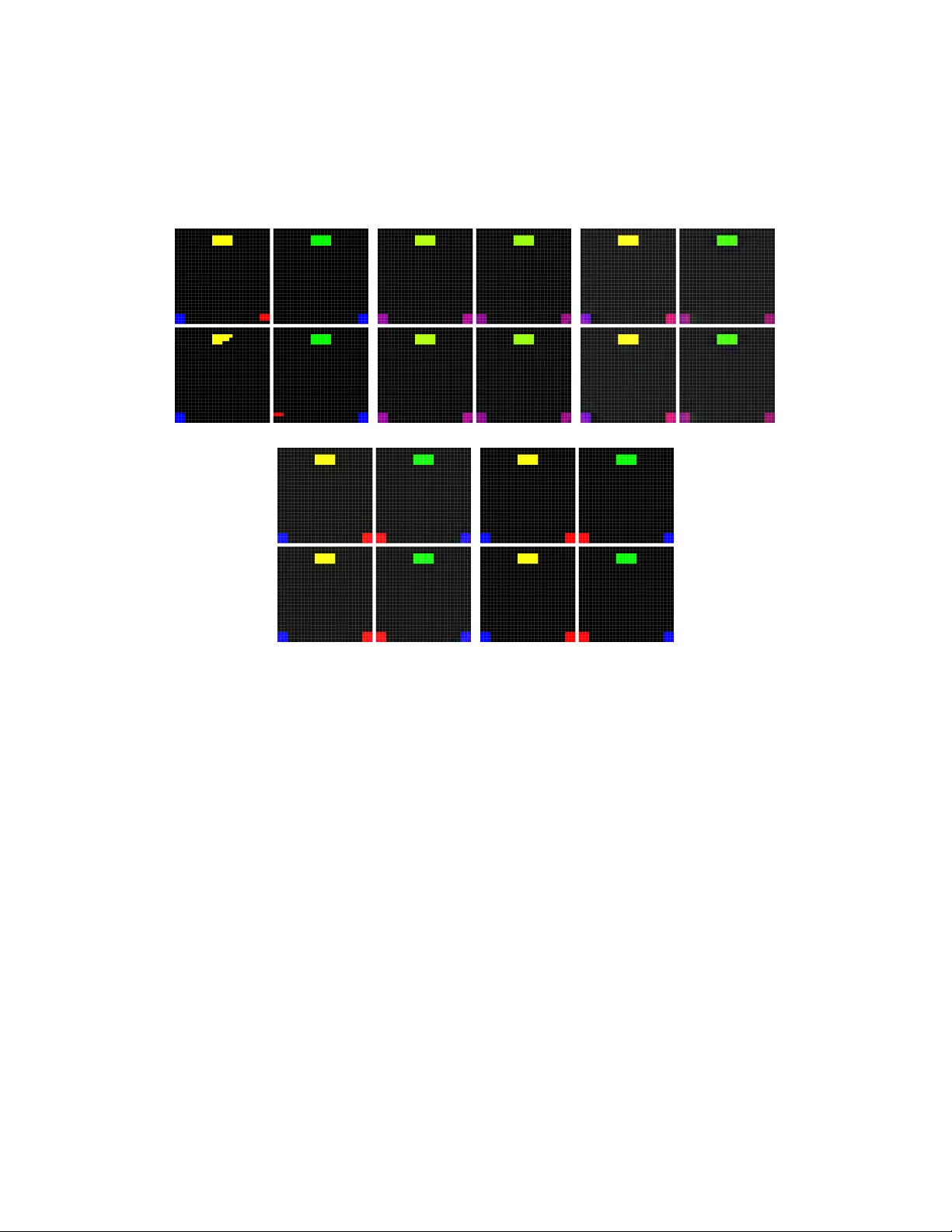

Sai Praveen B, Department of Computer Science & Engineering, Indian Institute of Technology Madras, bpraveen@cse.iitm.ac.in

JS Suhas, Department of Computer Science & Engineering, Indian Institute of Technology Madras, sauce@cse.iitm.ac.in

Balaraman Ravindran, Department of Computer Science & Engineering, Indian Institute of Technology Madras, ravi@cse.iitm.ac.in

Abstract

Exploration in multi-task reinforcement learning is critical in training agents to deduce the underlying MDP. Many of the existing exploration frameworks such as $E^3$, $R_{max}$, and Thompson sampling assume a single stationary MDP and are not suitable for system identification in the multi-task setting. We present a novel method to facilitate exploration in multi-task reinforcement learning using deep generative models. We supplement our method with a low dimensional energy model to learn the underlying MDP distribution and provide a resilient and adaptive exploration signal to the agent. We evaluate our method on a new set of environments and provide intuitive interpretation of our results.

1 Introduction

Learning to solve multiple tasks simultaneously is the multi-task reinforcement learning (MTRL) problem. MTRL can be solved either by planning after deducing the current MDP, or by ignoring MDP deduction and learning a policy over all the MDPs combined. For example, suppose a marker in the environment determines the obstacle structure and the goal locations. Any agent will have to visit the marker to learn the environment structure, and hence deducing the task to be solved becomes an important part of the agent's policy. MTRL is different from transfer learning, which aims to generalize (or transfer) knowledge from a set of tasks to solve a new, but similar, task. MTRL also involves learning a common representation for a set of tasks, but makes no attempt to generalize to new tasks.
Conventional reinforcement learning algorithms like Q-learning and SARSA fail to identify this decision-making sub-problem. They also learn sub-optimal policies because of the non-stationary reward structure, since the goal location varies from episode to episode. To address these issues, current research in MTRL is driven towards using a model over all possible MDPs to deduce the current MDP, or using some form of memory to store past observations and then reason based on this history. These methods will be able to deduce the MDP if they happen to see the markers, but make no effort to actively search and deduce the MDP.

29th Conference on Neural Information Processing Systems (NIPS 2016), Barcelona, Spain.

Our methods incentivize the agent to actively seek markers and deduce the MDP by providing a smooth, adaptive exploration signal, as a pseudo-reward, obtained from a generative model using deep neural networks. Since our method relies on computing the Jacobian of some state representation with respect to the input, we use deep neural networks to learn this state representation. To the best of our knowledge, we are the first to propose using the Jacobian as an exploration bonus. Our exploration bonus is added to the intrinsic reward, as in Bayesian Exploration Bonus.

We focus on grid-worlds with colors for markers. For clarity, we redefine a state $s$ to be a single grid location and its pixel value, $x_s$, as the observation. Our methods can, however, generalize to arbitrarily complex distributions on the observations $X$. We also assume that the agent can deduce rewards and transition probabilities from the observation.

2 Related Work

There is extensive research in the field of exploration strategies for reducing uncertainty in the MDP. $R_{max}$ and $E^3$ are examples of widely used exploration strategies. Bayesian Exploration Bonus assigns a pseudo-reward to states, calculated using the frequency of state visitation.
Thompson sampling samples an MDP from the posterior distribution computed using evidence (rewards and transition probabilities) that it obtains from trajectories. We follow a similar approach to sample MDPs. Recent advances in this domain involve sampling using Dropout Neural Networks [1]. However, these algorithms assume there exists a single stationary MDP for each episode (which we refer to as Single-Task RL, or STRL). Our algorithm addresses the multi-task RL problem, where each episode uses an MDP sampled from an arbitrary distribution on MDPs. Contrary to the STRL exploration strategies, our exploration bonus is designed to mark states potentially useful for the agent to improve its certainty about the current MDP.

Recent advances in MTRL and transfer learning include algorithms like Value Iteration Networks [7] and Actor-Mimic Networks [6], which attempt to identify common structure among tasks and generalize learning to new tasks with similar structure. In the context of MTRL and transfer learning on environments that give image-like observations, Value Iteration Networks employ recurrent neural networks for value iteration and learn kernel functions $f_R(x)$ and $f_P(x)$ to estimate the reward and transition probabilities for a state from its immediate surroundings. This has the effect of easily generalizing to new tasks which share the same MDP structure ($R$ and $P$ for a state can be determined using locality assumptions). Our work does not attempt to learn common structure across MDPs for the purpose of transfer learning. Instead, we attempt to learn the input MDP distribution to deduce the current MDP given the observations.

[5] proposes a novel method using Deep Recurrent Memory Networks to learn policies on Minecraft multi-task environments. They used a fixed memory of past observations. At each step, a context vector is generated and the memory network is queried for relevant information.
This model successfully learns policies on I-shaped environments where the color of a marker cell determines the goal location. In their experiments with the I-world and Pattern-Recognition world, the identifier states are very close to the agent's starting position.

Another class of MTRL algorithms focuses on deducing the current MDP using Bayesian reasoning. Multi-class models proposed by [4] and [9] attempt to assign class labels to the current MDP given a sequence of observations made from it. [9] use a Hierarchical Bayesian Model (HBM) to learn a conditional distribution over class labels given the observations. The agent samples an MDP from the posterior distribution in a manner similar to Thompson sampling, and then chooses the action. We follow the same procedure for action selection, but incorporate exploration bonuses into it as well. [4] proposes Multi-class Multi-task Learning (MCMTL), a non-parametric Hierarchical Bayesian Model to learn inherent structure in the value functions of each class. MCMTL clusters MDPs into classes and learns a posterior distribution over the MDPs given observed evidence. This is similar to our work, but it does not explicitly incentivize the agent to visit marker states.

Our contributions are twofold. First, we propose a deep generative model to allow sampling from the posterior distribution. Second, we propose a novel exploration bonus using the model's posterior distribution.

3 Background

3.1 Variational Auto Encoders

Variational Auto Encoders (VAE) [3] attempt to learn the distribution that generated the data $X$, $p(X)$. VAEs, like standard autoencoders, have an encoder component, $z = f_e(x)$, and a decoder component, $y = f_d(z)$. Generative models that attempt to estimate $p(X)$ use a likelihood objective function, $p_\theta(X)$ or $\log p_\theta(X)$.
More formally, the objective function can be written as

$$p(x) = \int_Z p(x \mid z; \theta)\, p(z)\, dz \approx \sum_{z \sim Z} p(x \mid z; \theta)\, p(z)$$

$$\hat{p}(x) \approx \sum_{z \in D} p(x \mid z; \theta)\, p(z)$$

where $D = \{z_1, z_2, \ldots, z_m\}$ and $z_k \sim Z\ \forall k$, and where $p(x \mid z; \theta)$ is defined to be $\mathcal{N}(f(z; \theta), \sigma^2 I)$. Gradient-based learning requires approximation of the integral with samples. In high-dimensional $z$-space, this could lead to large estimation errors, as $p(x \mid z)$ is likely to be concentrated around a few select $z$s and it would take an infeasible number of samples to get a proper estimate. VAEs circumvent this problem by introducing a new distribution $Z \sim \mathcal{N}(\mu_\phi(z), \sigma_\phi(z))$ to sample $D$ from. To reduce parameters, we use $\sigma_\phi(z) = c \cdot I$. These two functions are approximated with a deep network and form the encoder component of the VAE. $p_\theta(X)$ is represented using the sampling function $f(z; \theta)$ where $z \sim \mathcal{N}(0, 1)$, and $f(z)$ forms the decoder component of the VAE. After some mathematical sleight of hand to account for $Z$ in the learning equations ([8] provides an intuitive understanding of these equations), we obtain the following formulation of the loss function

$$E = D_{KL}\big(\mathcal{N}(\mu(X), \sigma(X)),\ \mathcal{N}(0, 1)\big) + \sum_{i=1}^{N} \frac{(y_i - x_i)}{\sigma^2}$$

where the KL-divergence term exists to adjust for importance sampling $z$ from $Z$ instead of $\mathcal{N}(0, 1)$.

3.2 Gaussian-Binary Restricted Boltzmann Machines

RBMs have been used widely to learn energy models over an input distribution $p(X)$. An RBM is an undirected, complete bipartite, probabilistic graphical model with $N_h$ hidden units, $H$, and $N_v$ visible units, $V$. In Gaussian-Binary RBMs, hidden units are binary units (Bernoulli distribution) capable of representing a total of $2^{N_h}$ combinations, while the visible units use the Gaussian distribution. The network is parametrized by the edge weight matrix $W$ between each node of $V$ and $H$, and bias vectors $a$ and $b$ for $V$ and $H$ respectively.
Given a visible state $v$, the hidden state $h$ is obtained by sampling the posterior given by

$$p(h \mid v) = \frac{1}{1 + \exp(W^T v + b)}$$

Given a hidden state $h$, the visible state $v$ is obtained by sampling the posterior given by

$$p(v \mid h) = \mathcal{N}(W^T h + a,\ \Sigma)$$

Since RBMs model conditional distributions, the conditional distributions ($p(v \mid h)$ and $p(h \mid v)$) have a closed form, while the marginal and joint distributions ($p(v)$, $p(h)$ and $p(h, v)$) are impossible to compute without explicit summation over all combinations.

Parameters are learnt using contrastive divergence ([2]). Learning $\Sigma$, however, proved to be unstable ([2]) and hence we treat $\sigma$ as a hyperparameter and use $\Sigma = \sigma \cdot I_{N_v}$.

4 Deep Generative Model

4.1 Encoding

Let us consider the nature of our inputs. We have assumed that the agent's observed surroundings are embedded on a map as an image $X$. A mask $M$ is a binary image of the same dimensions, with $m_i = 1$ if its corresponding state has been observed by the agent. We denote the $i$-th pixel and its mask value by $x_i$ and $m_i$ respectively. In most episodes, the agent will not visit the entire grid-world, hence $m_i = 0$ for some $x_i \in X$.

Since there can be several views of the same ground-truth MDP, we need to be able to reconstruct the ground-truth MDP from multiple observations of the MDP over several episodes. For Single-Task RL, this can be done in a tabular fashion. In MTRL, however, we have potentially infinitely many possible MDPs and it becomes hard to build an association between different views of the same MDP. We use deep convolutional VAEs to infer the association between different views of the same MDP and use it with a low dimensional energy model to sample MDPs given observational evidence. Here, the locality of the world features warrants the use of convolution layers in the VAE. Figure 1 shows our setup to learn the associations and to infer the ground-truth MDP given observations.
We use one setup to train the model and another to allow back sampling of MDPs. We call these the train and query models. Our method can be scaled to large state spaces because of the VAE.

Figure 1: Deep Generative Model, with panels (a) Train Model and (b) Query Model - The train model requires mask inputs to account for missing observations. The query model involves value iteration to determine the best action over sampled MDPs.

Given this setup, for the learning phase, we modify the VAE loss function to account for unobserved states, $x_i \in X$ with $m_i = 0$. The new loss function is given by

$$E = D_{KL}\big(\mathcal{N}(\mu(X), \sigma(X)),\ \mathcal{N}(0, 1)\big) + \sum_{i=1}^{N} m_i \cdot \frac{(y_i - x_i)}{\sigma^2}$$

Inclusion of $m_i$ in the loss function is quite intuitive and works well on the sets that we tested on, since it removes any penalty for unseen $x_i$ and allows the VAE to project its knowledge onto the unseen states.

4.2 Sampling

Given a partial observation, $X$, we sample from the posterior to obtain $K$ MDP samples. If $X$ doesn't have enough evidence to skew the posterior in favour of one single MDP, then the encoding produced by the VAE, $z$, is far from the encodings of ground-truth MDPs, $z_{in}$, in $z$-space. If we sample from this posterior, we obtain an MDP that is a mixture of MDPs. Solving this MDP could result in the agent following a policy unsuitable for any of the component MDPs in isolation. One way to circumvent this problem is to train a probability distribution over the MDP embeddings, $z_{in}$. For our 2-MDP environments, we use a Gaussian-Binary RBM to cluster inputs with fixed-variance Gaussians. We then use Algorithm 1 to sample from these Gaussians.

4.3 Value function

Given $K$ samples from the model posterior, $p(y \mid x)$, we perform action selection using an aggregate value function over the $K$ samples.
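As a rough illustration of aggregating over sampled MDPs, the sketch below runs value iteration on each sample and averages the results per state. The discount of 0.95 and the 40 sweeps are the values quoted in this section; everything else (reward grids as nested lists, deterministic four-way moves, staying in place at the boundary, the function names) is an assumption made for the example and not the authors' implementation.

```python
# Sketch only: the grid layout, deterministic moves and reward grids are
# illustrative assumptions, not the environments used in the paper.
GAMMA = 0.95      # discount quoted in the text
ITERATIONS = 40   # number of value-iteration sweeps quoted in the text
MOVES = [(-1, 0), (1, 0), (0, -1), (0, 1)]  # up, down, left, right


def value_iteration(reward, gamma=GAMMA, iterations=ITERATIONS):
    """Run synchronous value iteration on one sampled MDP.

    reward[r][c] is the reward collected when the agent ends a step in
    cell (r, c). Moves are deterministic; a move that would leave the grid
    keeps the agent in its current cell (and collects that cell's reward).
    """
    rows, cols = len(reward), len(reward[0])
    values = [[0.0] * cols for _ in range(rows)]
    for _ in range(iterations):
        new_values = [[0.0] * cols for _ in range(rows)]
        for r in range(rows):
            for c in range(cols):
                best = float("-inf")
                for dr, dc in MOVES:
                    nr, nc = r + dr, c + dc
                    if not (0 <= nr < rows and 0 <= nc < cols):
                        nr, nc = r, c  # blocked by the boundary
                    best = max(best, reward[nr][nc] + gamma * values[nr][nc])
                new_values[r][c] = best
        values = new_values
    return values


def aggregate_value(sampled_rewards):
    """Average the per-sample value functions over the K sampled MDPs."""
    per_sample = [value_iteration(reward) for reward in sampled_rewards]
    k = len(per_sample)
    rows, cols = len(per_sample[0]), len(per_sample[0][0])
    return [[sum(v[r][c] for v in per_sample) / k for c in range(cols)]
            for r in range(rows)]
```

With two mirrored samples, for instance `aggregate_value([[[1.0, 0.0, 0.0]], [[0.0, 0.0, 1.0]]])`, the averaged values are symmetric, which is the kind of ambiguity an exploration signal is meant to resolve.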
We define, for each state $s$, an aggregate value function $\bar{V}(s)$ as

$$\bar{V}(s) = \mathbb{E}_{m \sim p(y \mid x)}\left[V_m(s)\right] \approx \frac{\sum_{k=1}^{K} V_{m_k}(s)}{K}$$

where $m$ is an MDP and $V_m(s)$ denotes the value function for state $s$ under MDP $m$. $V_m(s)$ can be obtained using any standard planning algorithm, and we use value iteration (with $\gamma = 0.95$, 40 iterations). Action selection is done using an $\epsilon$-greedy mechanism with $\epsilon = 0.1$. Since recomputing value functions at each step is computationally infeasible, each selected action persists for $\tau = 3$ steps. We note that the value functions used need not be exact, but can be approximate, as they are only used for $\tau$ steps. A quicker estimate can be obtained using Monte-Carlo methods when the state space is large.

Algorithm 1: Sample MDPs given $x$

Result: $K$ MDPs sampled from model posterior

1. Compute $z = f_e(x)$
2. Sample $K$ hidden RBM states $h^{(i)} \in H$, $i \in \{1 \ldots K\}$, from the posterior $p(h \mid z)$
3. Calculate the MAP estimate $z^{(i)} = \arg\max_z p(z \mid h^{(i)})$
4. Decode the MAP estimates $z^{(i)}$ to get MDP samples $y^{(i)}$

5 Jacobian Exploration Bonus

To incentivize the agent to visit decisive pixels/locations, we introduce a bonus based on the change in the embedding $Z$. Intuitively, the embedding $Z$ has the highest change when the VAE detects changes that are relevant to the distribution $p(X)$ that it is modelling. The bonus can be summarised as follows:

$$B_\alpha(s) = \alpha \cdot \tanh\left(\frac{\partial z}{\partial x_s}\right)$$

where $x_s$ denotes the list of observations made at state $s$. We use a $\tanh(\cdot)$ transfer function to bound the activations produced by the Jacobian, thus maintaining numerical stability. This bonus can be used in two ways: as a pseudo-reward,

$$R_s = R_s(x) + B_\alpha(s)$$

or to replace the actual reward,

$$R_s = \max(R_s(x), B_\alpha(s))$$

where $R_s(x)$ is the actual reward deduced by the agent. While both methods showed improvement, the latter worked better, since the total reward for states which already gave a high reward was not further increased.
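The bonus and the two ways of combining it with the reward can be sketched as follows. This is a minimal illustration under stated assumptions: the Jacobian column for a pixel is approximated by central finite differences rather than by backpropagation through a network, it is reduced to a scalar with an L2 norm (the text does not specify the reduction), and `toy_encoder` is a hypothetical stand-in for the VAE encoder.

```python
import math


def finite_difference_column(encoder, x, s, step=1e-4):
    """Approximate dz/dx_s (the Jacobian column for pixel s) by central differences."""
    up, down = list(x), list(x)
    up[s] += step
    down[s] -= step
    z_up, z_down = encoder(up), encoder(down)
    return [(a - b) / (2.0 * step) for a, b in zip(z_up, z_down)]


def jacobian_bonus(encoder, x, s, alpha=1.0):
    """Bounded bonus alpha * tanh(||dz/dx_s||).

    Reducing the column with an L2 norm is an assumption of this sketch.
    """
    column = finite_difference_column(encoder, x, s)
    norm = math.sqrt(sum(g * g for g in column))
    return alpha * math.tanh(norm)


def combined_reward(reward, bonus, mode="max"):
    """Combine the deduced reward with the bonus.

    mode="add" treats the bonus as a pseudo-reward added to the reward;
    mode="max" replaces the reward with the bonus when the bonus is larger.
    """
    if mode == "add":
        return reward + bonus
    if mode == "max":
        return max(reward, bonus)
    raise ValueError("mode must be 'add' or 'max'")


def toy_encoder(x):
    """Hypothetical encoder: only pixel 0 influences the embedding."""
    return [3.0 * x[0], 4.0 * x[0]]
```

In this toy setting pixel 0 plays the role of a marker: `jacobian_bonus(toy_encoder, [0.5, 0.2], 0)` is close to `tanh(5)`, while pixel 1 receives a bonus of zero because the embedding ignores it.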
Since $B_\alpha$ changes drastically with new observations, $B_\alpha$ is recomputed every time $\hat{V}_t(s)$ is to be recomputed. $B_\alpha$ is also memory-less, i.e. it doesn't carry over any information from one episode to the next.

Figure 2: Final Jacobian Bonus for BW-E and BW-H - Locations in yellow-green are identified by the agent as being most helpful in deducing the MDP being solved.

6 Experiments

6.1 Testbench

We have implemented the following algorithms.

- Value Iteration, referred to as STRL
- Multi-task RL with VAE, RBM without exploration bonus, referred to as MTRL-0
- Multi-task RL with VAE, RBM, and Jacobian Bonus, referred to as MTRL-$\alpha$

We have tested the above algorithms on 2 environments.

- Back World (Easy) [BW-E] - Goal location alternates depending on the marker location color; the marker location is fixed and is on most paths from start to goal. This domain demonstrates the advantage gained using a probabilistic model over the MDPs.
- Back World (Hard) [BW-H] - Same setting as BW-E, but the marker location is not on most paths from start to goal. This domain demonstrates the advantage provided by the Jacobian exploration bonus and our generative model.

For STRL, using only visible portions of the environment was very unstable and hence we had to add a pseudo-reward. For each unseen location, we provide a pseudo-reward, $\varepsilon_n$ for the $n$-th step (with $\varepsilon_0 = 0.3$), that is annealed by a factor of $\kappa = 0.9$. Each episode was terminated at 200 steps if the agent hadn't reached the goal. Using this pseudo-reward, the agent was forcefully terminated fewer times.

These worlds become challenging due to partial visibility. We use a 5x5 kernel with clipped corners, and the agent is always assumed to be at the center. At each step, the environment tracks the locations that the agent has seen and presents them to the agent before an action is taken.
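The partial-visibility bookkeeping described above can be sketched as follows. The sketch assumes that "clipped corners" means the four corner cells of the 5x5 window are hidden, and that the pseudo-reward for unseen cells is multiplied by $\kappa$ once per step; both readings, and all function names, are assumptions made for the example.

```python
def clipped_kernel_offsets(size=5):
    """Offsets of a size x size window around the agent with the four corners removed."""
    half = size // 2
    return [(dr, dc)
            for dr in range(-half, half + 1)
            for dc in range(-half, half + 1)
            if not (abs(dr) == half and abs(dc) == half)]


def update_seen_mask(mask, position, offsets):
    """Mark every in-bounds cell visible from `position` as seen (1) in `mask`."""
    rows, cols = len(mask), len(mask[0])
    r, c = position
    for dr, dc in offsets:
        nr, nc = r + dr, c + dc
        if 0 <= nr < rows and 0 <= nc < cols:
            mask[nr][nc] = 1
    return mask


def pseudo_reward(step, eps0=0.3, kappa=0.9):
    """Pseudo-reward for unseen cells at a given step, assuming per-step annealing."""
    return eps0 * kappa ** step
```

On a 28x28 world the mask starts as all zeros and grows as the agent moves; the accumulated mask is the kind of binary image $M$ that the train model takes as input.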
For our experiments, we consider the average number of steps to goal as a measure of loss and the average reward as a measure of performance.

Figure 3: 28x28 worlds used in our experiments, with panels (a) BW-E A, (b) BW-E B, (c) BW-H A, (d) BW-H B, (e) Suboptimal path [BW-H], (f) Optimal path [BW-H], (g) Visibility Kernel - White indicates the start position of the agent. Green and yellow are marker locations. Red locations are failures. Blue locations are all successes. Gray areas in the kernel are visible to the agent. The white cell in the kernel is the agent's position. The optimal path shown considers MDP deduction as a sub-problem.

7 Results

7.1 Navigation

Table 1 gives the average reward for each agent. Table 2 gives the average episode length. We also impose forceful termination at 200 steps if the episode has not yet completed.

Table 1: Average Reward

| World | STRL | MTRL-0 | MTRL-$\alpha$ |
|-------|------|--------|---------------|
| BW-E  | 0.21 | 0.99   | 0.99          |
| BW-H  | 0.23 | 0.92   | 0.99          |

Table 2: Average Episode Length

| World | STRL   | MTRL-0 | MTRL-$\alpha$ |
|-------|--------|--------|---------------|
| BW-E  | 184.19 | 46.20  | 46.29         |
| BW-H  | 183.64 | 54.0   | 45.8          |

From the results, we infer the following.

- STRL using value iteration does poorly, as it has no way of deducing MDPs.
- MTRL-0 solves both BW-E and BW-H environments and does almost as well as MTRL-$\alpha$. This improvement can be attributed to the use of our deep generative model.
- MTRL-$\alpha$ shows better results on BW-H. This was expected, as MTRL-0 makes no attempt to visit marker locations. MTRL-$\alpha$ is motivated by the Jacobian Bonus to visit marker locations, thereby deducing the MDP.
- MTRL-0 performs as well as MTRL-$\alpha$ in BW-E, as marker locations lie on most paths to the goal. However, since it fails to understand the significance of the marker locations, and markers in BW-H are not on most paths to the goal, it has longer episodes and lower reward in BW-H.

7.2 Visualizations

7.2.1 RBM Training

To visualize RBM training, we used the BW-E environment.
We used a random agent ($\epsilon$-greedy policy with $\epsilon = 1$ and restricted actions at boundaries so it doesn't bump into walls) on this environment to navigate and collect a sample of the seen environment at the end of each episode. We then encoded the samples using the VAE and used them to train an RBM. Figure 4 shows the clusters and their means for each Gaussian fit by the RBM. Since there are only two possible BW-E environments, the RBM only fits two Gaussians and our encoded samples are clustered around them. Training was done in minibatches of 64 samples with 1 hidden unit in the RBM.

Figure 4: Visualization of RBM training on encoded BW-E world samples, with panels (a) Epoch 0, (b) Epoch 3, (c) Epoch 6, (d) Epoch 9, (e) Epoch 12, (f) Epoch 15, (g) Epoch 26, (h) Epoch 79 - Red points are means of the fitted Gaussians. Blue points are data points in the minibatch. Black points are samples from the Gaussians fitted by the RBM. The spread of the black points is a measure of the variance of the fitted Gaussians. We see two distinct clusters in each snapshot. The RBM iteratively refines its parameters to fit the means close to the encoded sample clusters. Due to perfect reconstruction from the VAE, there is no spread of encoded samples.

7.2.2 VAE Training

To visualize the training of the VAE, we use the same setup as for visualizing RBM training. We used 400 samples from BW-E and training was done in mini-batches of 128 samples. 4 test samples were randomly chosen and the reconstruction from the VAE was recorded after 30, 60, 90 and 120 epochs of training. Figure 5 shows the training progress of the VAE on BW-E samples.

Figure 5: Visualization of VAE training on BW-E, with panels (a) Input, (b) Epoch 30, (c) Epoch 60, (d) Epoch 90, (e) Epoch 120 - After 30 epochs, the VAE has learnt the general structure of the grid-world, but has yet to learn colors. After 60 epochs, it has learnt colors for marker pixels that were in most training examples, but has yet to learn goal state colors.
After 90 epochs, it has learnt colors for the goal state, but is yet to learn colors at corners as they are present in very few samples. After 120 epochs, learning is complete.

8 Conclusions and Future Work

We have presented a new method using a deep generative model to provide an exploration bonus for solving the multi-task reinforcement learning problem. Our modification to the VAE loss function allows it to learn from partial inputs and also the associations between different views of the same environment. The use of RBMs to learn a distribution over $z$ allows us to sample actual MDPs instead of a mixture of MDPs. We introduced an intuitive exploration bonus and have shown improvements over existing baselines.

One drawback of the Jacobian Bonus is that it doesn't use the reward structure of the MDPs. This bonus could yield sub-optimal policies in environments with multiple markers and associated rewards. We would like to incorporate the reward structure into the Jacobian Bonus to have some form of utility interpretation. Our deep generative model is scalable and we would like to explore learning in larger worlds and extend our method to work with Minecraft-like 3D environments.

References

[1] Yarin Gal and Zoubin Ghahramani. “Dropout as a Bayesian approximation: Representing model uncertainty in deep learning”. In: arXiv preprint arXiv:1506.02142 (2015).

[2] Geoffrey Hinton. “A practical guide to training restricted Boltzmann machines”. In: Momentum 9.1 (2010), p. 926.

[3] Diederik P Kingma and Max Welling. “Auto-encoding variational bayes”. In: arXiv preprint arXiv:1312.6114 (2013).

[4] Alessandro Lazaric and Mohammad Ghavamzadeh. “Bayesian multi-task reinforcement learning”. In: ICML-27th International Conference on Machine Learning. Omnipress. 2010, pp. 599–606.

[5] Junhyuk Oh et al. “Control of Memory, Active Perception, and Action in Minecraft”. In: arXiv preprint arXiv:1605.09128 (2016).

[6] Emilio Parisotto, Jimmy Lei Ba, and Ruslan Salakhutdinov. “Actor-mimic: Deep multitask and transfer reinforcement learning”. In: arXiv preprint arXiv:1511.06342 (2015).

[7] Aviv Tamar, Sergey Levine, and Pieter Abbeel. “Value Iteration Networks”. In: arXiv preprint arXiv:1602.02867 (2016).

[8] Jacob Walker et al. “An uncertain future: Forecasting from static images using variational autoencoders”. In: European Conference on Computer Vision. Springer. 2016, pp. 835–851.

[9] Aaron Wilson et al. “Multi-task reinforcement learning: a hierarchical Bayesian approach”. In: Proceedings of the 24th international conference on Machine learning. ACM. 2007, pp. 1015–1022.
