Identity-sensitive Word Embedding through Heterogeneous Networks


Authors: Jian Tang, Meng Qu, Qiaozhu Mei

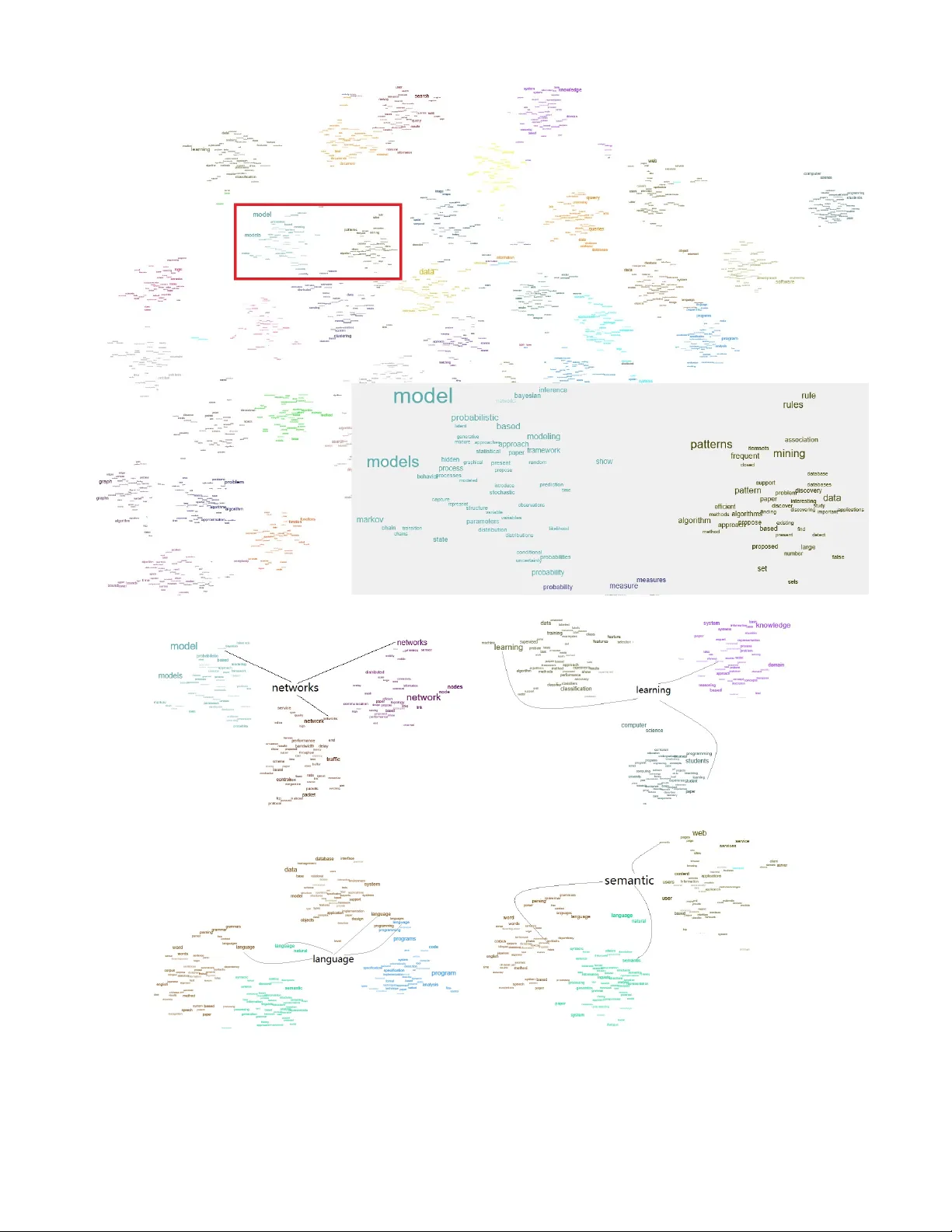

Jian Tang (Microsoft Research, jiatang@microsoft.com), Meng Qu (Peking University, mnqu@pku.edu.cn), Qiaozhu Mei (University of Michigan, qmei@umich.edu)

ABSTRACT

Most existing word embedding approaches do not distinguish the same words in different contexts, therefore ignoring their contextual meanings. As a result, the learned embeddings of these words are usually a mixture of multiple meanings. In this paper, we acknowledge multiple identities of the same word in different contexts and learn identity-sensitive word embeddings. Based on an identity-labeled text corpus, a heterogeneous network of words and word identities is constructed to model different levels of word co-occurrences. The heterogeneous network is further embedded into a low-dimensional space via a principled network embedding approach, from which we obtain both the embeddings of words and the embeddings of word identities. We study three different types of word identities: topics, sentiments and categories. Experimental results on real-world data sets show that the identity-sensitive word embeddings learned by our approach indeed capture different meanings of words and outperform competitive methods on tasks including text classification and word similarity computation.

Categories and Subject Descriptors
I.2.6 [Artificial Intelligence]: Learning

General Terms
Algorithms, Experimentation

Keywords
Identity-sensitive word embedding, heterogeneous networks, scalability

1. INTRODUCTION

Learning a meaningful representation of words is critical in many text mining tasks.
Traditional vector space models represent a word as a “one-hot” vector in a high-dimensional space, which suffers from serious data sparseness. Recent approaches represent words as real-valued vectors in a low-dimensional space (i.e., word embeddings), which capture the syntactic and semantic relationships between words much better. Word embeddings have proven very effective in many tasks such as word analogy [13], named entity recognition [5], POS tagging [5], and text classification [12, 23, 9].

The basic intuition behind learning word embeddings comes from the distributional hypothesis: “you shall know a word by the company it keeps” (Firth, J.R. 1957:11) [6]. This intuition has motivated many models, including the first word embedding approach based on neural networks [1], SENNA [5], GloVe [19], Skipgram [13], etc. Among these models, Skipgram has been widely adopted due to its simplicity, effectiveness and efficiency. The basic idea of Skipgram is to use the embedding of the target word to predict the surrounding context words in local context windows.
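To make the notion of a local context window concrete, the sketch below enumerates the (target, context) pairs that a Skipgram-style model is trained on. It is a minimal illustration under stated assumptions: the window is fixed and symmetric (the reference word2vec implementation additionally shrinks the window at random), and the function name `skipgram_pairs` is hypothetical.

```python
def skipgram_pairs(tokens, window=5):
    """Return (target, context) pairs drawn from fixed-size local context windows.

    Sketch only: a fixed symmetric window is assumed; this is not the
    original Skipgram implementation.
    """
    pairs = []
    for i, target in enumerate(tokens):
        lo = max(0, i - window)
        hi = min(len(tokens), i + window + 1)
        for j in range(lo, hi):
            if j != i:  # a token is not its own context
                pairs.append((target, tokens[j]))
    return pairs


print(skipgram_pairs(["you", "shall", "know", "a", "word"], window=1))
# [('you', 'shall'), ('shall', 'you'), ('shall', 'know'), ('know', 'shall'),
#  ('know', 'a'), ('a', 'know'), ('a', 'word'), ('word', 'a')]
```

Every such pair is one local context-level co-occurrence; aggregating the pairs into counts yields the word co-occurrence network used later in the paper.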
Although these existing approaches have proven quite effective in some tasks, they share a common problem: they do not distinguish the meanings of the same word in different contexts. In other words, every word is represented with a single embedding vector, even if it has multiple, clearly different meanings (e.g., “java,” “bank”). Some words have multiple senses, each corresponding to a different meaning (e.g., “tear” as a noun or as a verb). Other words have a single sense, but their meanings vary subtly across contexts (e.g., “network” in the data mining community versus the computer networks community). The representations of such words learned by existing word embedding approaches are a mixture of their multiple meanings, which compromises the accuracy of the semantic relations they encode. To address this problem, the contextual meanings of words must be taken into consideration.

In this paper, we acknowledge that the same word may take on different identities when it is used in different contexts, and that every such identity carries its own meaning and should be represented with its own embedding. In other words, we learn identity-sensitive word embeddings. Our intuition is inspired by the theories of social identity and self-categorization in social psychology and economics. These theories suggest that every person can sense multiple identities of herself, which are usually perceived from her membership in different social groups, and that some identity may dominate the others in particular situations [22, 25]. For example, the first author may perceive himself as a Microsoft employee, a data mining researcher, and a University of Michigan alumnus, and the third identity becomes dominant in a conversation about Ohio State. By a simple analogy, we define the identity of a word as its membership in a group of words, which may be derived from a taxonomy, a topic modeling process [2], or any other meaningful partition of words. Besides topic identity, there may be many other types of word identities, such as categories, sentiments, POS tags and ideologies. The meanings of a word with different identities are usually different. Therefore, a sound word embedding should allow each word to have a different embedding for every identity it belongs to, i.e., to have identity-sensitive embeddings.

To learn the identity-sensitive word embeddings, we propose an approach based on heterogeneous network embedding. Given a text collection, we first recognize the identity of every word token in the corpus, so the same word in different contexts may be labeled with different identities. This identity recognition process may use any reasonable existing word labeling approach and may vary across types of identities. For example, the topic identities of word tokens can be recognized through the Gibbs sampling algorithm for latent Dirichlet allocation [2]; the part-of-speech tags of words can be obtained through a sequence labeling method such as conditional random fields [10]; and the sentiment polarity of word tokens can be obtained through a sentiment dictionary and a word-sense disambiguation process.

Figure 1: Illustration of converting an identity-labeled text corpus to a heterogeneous network. Different colors represent different word identities. The heterogeneous network encodes the local context-level word co-occurrences through the word-context bipartite network. When constructing the word-context network, the identities of words are ignored if the words are treated as contexts. The heterogeneous network also encodes identity-level word co-occurrences through the word-identity bipartite network.
Based on the identity-labeled corpus, we build a heterogeneous network consisting of words and word identities, which encodes different levels of essential word co-occurrence information for the embedding process. The heterogeneous network contains a word-context network that encodes the local context-level word co-occurrences, which are mainly utilized by existing word embedding approaches such as Skipgram; it also contains a word-identity bipartite network that encodes identity-level word co-occurrences. The intuition is that words with the same identity are likely to take on similar meanings. The network construction process is illustrated in Fig. 1. To learn the embedding of the heterogeneous network, we extend our previous work on homogeneous network embedding [24], which is very efficient and scalable.

We present comprehensive experiments on three different types of word identities: topics, sentiments and categories. The effectiveness of identity-sensitive word embeddings is carefully evaluated on the tasks of text classification and word similarity computation. Experimental results show that the identity-sensitive word embeddings are indeed able to capture different meanings of the same words, outperforming competitive approaches including some state-of-the-art word embedding approaches and other methods that consider the contextual meanings of words.

To summarize, we make the following contributions:

• We study identity-sensitive word embeddings, which are inspired by the social identity and self-categorization theories. We acknowledge that the same words may take on different identities and present different meanings in different contexts.
• We use a heterogeneous network to encode different levels of co-occurrence information between words and word identities.
  A principled heterogeneous network embedding approach is proposed to learn the embeddings of words and word identities.
• We study three different types of word identities: topics, sentiments and categories. Experimental results show that our proposed approach outperforms existing methods on real tasks and real data sets.

The rest of this paper is organized as follows. Section 2 discusses the related work. Section 3 formally defines the problem of identity-sensitive word embedding via heterogeneous networks. Section 4 describes our approach of heterogeneous network embedding. Section 5 presents the experimental results, and we conclude in Section 6.

2. RELATED WORK

Word embedding has been attracting increasing attention recently [1, 14, 15, 5, 8, 16, 13, 19, 24]. Each word is represented with a low-dimensional vector, which can be used as features for a variety of tasks. The first word embedding approach was proposed by Bengio et al. [1] for language modeling, in which a neural network is built to predict the next word given the previous words in a sentence. Mnih et al. [14] further simplified the neural language model with a log-bilinear model. However, both of these approaches suffer from high computational complexity due to the large model output space (the vocabulary). Mnih et al. [15] addressed this problem through the hierarchical softmax technique. Mikolov et al. [13] proposed a very simple model, Skipgram, which uses the embedding of the target word to predict the surrounding context words in local context windows, essentially exploiting the local context-level word co-occurrences. Compared to Skipgram, our previous work [24] showed that better results can be obtained by first constructing the word co-occurrence network and then embedding it.
Most existing embedding approaches represent each word with a single embedding vector, ignoring the variation of word meanings across contexts. A sounder solution in practice is to represent each word with multiple embeddings, each of which captures a different meaning of the word. Several recent works indeed learn multiple embeddings for each word [8, 3, 17, 11]. Huang et al. [8] used a post-processing approach to cluster the context vectors of each word, and then treated each context cluster as an embedding of the word. Neelakantan et al. [17] proposed a multi-sense Skipgram (MSSG) model to estimate multiple embedding vectors for each word during the learning process. Each sense of the word is associated with a context cluster. During learning, the average context vector is compared with the context cluster centers to determine the sense of the target word. In the experiments, we compare with the MSSG model. Chen et al. [3] used the external knowledge base WordNet to estimate an embedding for each sense of a word. Compared with their work, our approach does not require external knowledge. The work most similar to ours is [11], in which topic identity is used to differentiate the meanings of words in different contexts. However, that work studies only topic identity, while we study multiple types of word identities besides topic identity. In addition, all previous approaches learn the embeddings from free text, while our approach learns them by embedding heterogeneous networks, which are able to capture different levels of word co-occurrences.

3. PROBLEM DEFINITION

The meanings of words are usually ambiguous, depending on the contexts. Most existing word embedding approaches ignore the variations of word meanings in different contexts. As a result, each word is represented with a single embedding vector.
In this paper, we take the initiative to study context-aware word embedding. We acknowledge that the same word may have different identities in different contexts. We define the identity of a word as follows:

Definition 1. (Word Identity) The identity of a word is its membership in a group of words. For example, the word “cat” has the identity “animal” as it belongs to the group “animal”; the word “flower” has the identity “plant” as it belongs to the group “plant”.

There are many types of word identities, e.g., topics, sentiments, ideologies, categories and POS tags. For each type of identity, each word may take on multiple identities. Taking topic identity as an example, each word may belong to multiple topics; as another example, for sentiment identity, some words can have both “positive” and “negative” sentiment orientations. When a word takes on different identities, its meanings usually differ. Therefore, each word with a different identity should be represented with a different embedding that captures a different meaning of the word, i.e., an identity-sensitive word embedding. Specifically, we define identity-sensitive word embedding as follows:

Definition 2. (Identity-sensitive Word Embedding) Identity-sensitive word embedding means that each word $w$ has a different embedding $\vec{w}_i$ for each identity $i \in I$, where $I$ is the set of word identities.

Note that although we assume that each word has a different embedding for each identity, in practice each word usually has only a few identities. For example, each word usually belongs to a few topics, and many words take on only a positive or only a negative sentiment orientation. Therefore, compared to word embeddings that do not consider word identities, the number of parameters for identity-sensitive word embeddings does not increase significantly.
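The parameter-count argument above can be checked directly on a labeled corpus: only the (word, identity) pairs that actually occur need an embedding. The sketch below assumes the corpus is given as (word, identity) tuples; the function name `embedding_counts` and the toy tokens are hypothetical.

```python
def embedding_counts(labeled_tokens, dim=100):
    """Compare the number of embedding vectors with and without identities.

    labeled_tokens: iterable of (word, identity) pairs from an identity-labeled corpus.
    Returns (#plain word vectors, #identity-sensitive vectors, extra parameters).
    """
    tokens = list(labeled_tokens)  # accept any iterable, including generators
    vocab = {word for word, _ in tokens}
    word_identity_pairs = set(tokens)  # one embedding per observed (word, identity)
    extra_params = dim * (len(word_identity_pairs) - len(vocab))
    return len(vocab), len(word_identity_pairs), extra_params


toy = [("bank", "finance"), ("bank", "river"), ("loan", "finance"),
       ("loan", "finance"), ("river", "nature")]
print(embedding_counts(toy))  # (3, 4, 100)
```

Here only "bank" needs a second vector, so the overhead is one extra 100-dimensional embedding rather than one per identity for every word.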
To learn the identity-sensitive word embeddings, the identities of the tokens in the corpus must first be recognized. The recognition process depends on the type of identity. For example, the topic identities can be recognized through Gibbs sampling for the latent Dirichlet allocation (LDA) model, and the sentiment identities can be determined from the sentiment of the sentences containing the tokens. Once the identities of the tokens in the corpus are recognized, we can leverage different levels of word co-occurrences, which are the essential information for word embedding. One intuition is based on the distributional hypothesis, which assumes that words that co-occur with similar words are likely to have similar meanings. Therefore, the local context-level word co-occurrences, which are also used by the Skipgram model, can be leveraged. To encode the local context-level word co-occurrences, we introduce the word-context bipartite network:

Definition 3. (Word-context Network $G_{wc}$) The word-context network, denoted as $G_{wc} = (V_w \cup V_c, E_{wc})$, is a bipartite network. $V_w$ is the set of words with identities, and $V_c$ is the vocabulary of context words without identities. $E_{wc}$ is the set of edges between words with identities and context words. The weight of the edge between word $w$ and context word $c$ is the number of times they co-occur in all the local context windows.

Note that when constructing the word-context network, the identities of words are ignored when the words are treated as contexts. This reduces the number of unique context words and hence the data sparsity. Besides the local context-level word co-occurrences, another intuition is that words with the same identity are likely to take on similar meanings. For example, words assigned to the same topic are likely to have similar meanings.
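Definition 3 can be made concrete with a short sketch that counts edge weights from an identity-labeled corpus; it also counts the word-identity edge weights of Definition 4, which the text introduces next. Assumptions: each document is a list of (word, identity) tuples, the window is symmetric (size 5 matches Section 5.1.5), and the function names are hypothetical.

```python
from collections import Counter


def build_word_context_network(labeled_docs, window=5):
    """Edge weights of the word-context bipartite network G_wc (Definition 3).

    Target vertices keep their identity; context vertices are plain words,
    because identities are ignored when a word is treated as a context.
    Returns a Counter mapping ((word, identity), context_word) -> co-occurrence count.
    """
    edges = Counter()
    for doc in labeled_docs:
        for i, target in enumerate(doc):
            lo = max(0, i - window)
            hi = min(len(doc), i + window + 1)
            for j in range(lo, hi):
                if j != i:
                    context_word = doc[j][0]  # drop the context token's identity
                    edges[(target, context_word)] += 1
    return edges


def build_word_identity_network(labeled_docs):
    """Edge weights of G_wi: how often each word takes on each identity in the corpus."""
    return Counter((token, token[1]) for doc in labeled_docs for token in doc)


doc = [("java", "prog"), ("code", "prog"), ("java", "coffee"), ("bean", "coffee")]
print(build_word_context_network([doc], window=1))
print(build_word_identity_network([doc]))
```

Note that "java" yields two distinct target vertices, ("java", "prog") and ("java", "coffee"), while on the context side both occurrences collapse into the single vertex "java".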
Identity-level word co-occurrences can therefore be leveraged as well. To encode them, we introduce the word-identity network, defined below:

Definition 4. (Word-Identity Network $G_{wi}$) The word-identity network, denoted as $G_{wi} = (V_w \cup V_I, E_{wi})$, is also a bipartite network, where $V_I$ is the set of identities. $E_{wi}$ is the set of edges between words with identities and word identities. The weight between word $w$ and identity $i$ is the number of times word $w$ takes on identity $i$ in the entire corpus.

The word-context network and the word-identity network encode different levels of word co-occurrences. To leverage both levels, we further combine the two networks into a heterogeneous network. The word embeddings can then be learned by embedding the heterogeneous network constructed from the identity-labeled corpus. Formally, we define our problem as follows:

Definition 5. (Problem Definition) Given an identity-labeled text corpus, the problem of identity-sensitive word embedding aims to learn multiple low-dimensional embedding vectors for each word by embedding a heterogeneous network constructed from the identity-labeled text corpus.

In the next section, we introduce our approach of heterogeneous network embedding for identity-sensitive word embedding.

4. IDENTITY-SENSITIVE WORD EMBEDDING

In this section, we introduce our approach to embedding heterogeneous networks, through which we obtain both the identity-sensitive word embeddings and the embeddings of word identities. We first review our previous model LINE for large-scale information network embedding [24]. The LINE model is applicable to arbitrary types of networks, including directed, undirected and weighted ones. It is mainly designed for homogeneous information network embedding, in which there is only one type of node in the network.
To learn a low-dimensional representation of the vertices in the network, the intuition is to preserve the relationship, or proximity, between the vertices in the low-dimensional space. The essential idea of LINE is to preserve the first-order or second-order proximities between the vertices, which assume that linked vertices, or vertices with similar neighbors, are similar to each other. Based on the distributional hypothesis in linguistics, words with similar company, or neighbors, are likely to be similar to each other. This corresponds to the assumption of the second-order proximity in the word-context network. Therefore, we only utilize the second-order proximity here.

Next, we introduce how to utilize the LINE model for bipartite network embedding. Consider a bipartite network $G = (V_1 \cup V_2, E_{12})$, where $V_1$ and $V_2$ are two disjoint sets of vertices and $E_{12}$ is the set of edges between the two sets. For each edge $e = (i, j)$ with $i \in V_1$ and $j \in V_2$, the probability of vertex $v_j$ conditioned on vertex $v_i$ is defined as follows:

$$p(v_j \mid v_i) = \frac{\exp(\vec{v}_j^{\,T} \cdot \vec{v}_i)}{\sum_{k \in V_2} \exp(\vec{v}_k^{\,T} \cdot \vec{v}_i)}, \quad (1)$$

where $\vec{v}_i$ is the low-dimensional representation of vertex $v_i$. Given a vertex $v_i \in V_1$, Eqn. (1) defines a conditional distribution over the vertices in the set $V_2$. The second-order proximity between a pair of vertices $(v_i, v_{i'})$ is determined by the corresponding empirical conditional distributions $\hat{p}(\cdot \mid v_i)$ and $\hat{p}(\cdot \mid v_{i'})$. Therefore, to preserve the second-order proximity, we make the conditional distribution $p(\cdot \mid v_i)$ close to the empirical distribution $\hat{p}(\cdot \mid v_i)$.
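A small numerical sketch may help here. It evaluates Eqn. (1) as a row-wise softmax, computes the empirical distribution $\hat{p}(v_j \mid v_i) = w_{ij}/d_i$ given in the next paragraph, and checks that the degree-weighted KL objective (2) differs from the simplified objective (3) only by the embedding-independent constant $\sum_{(i,j)} w_{ij} \log \hat{p}(v_j \mid v_i)$. The toy weights and function names are hypothetical, and every $v_i \in V_1$ is assumed to have at least one edge.

```python
import numpy as np


def conditional_probs(src_emb, dst_emb):
    """Eq. (1): row i holds p(v_j | v_i) over all v_j in V_2 (softmax of dot products)."""
    scores = src_emb @ dst_emb.T
    scores -= scores.max(axis=1, keepdims=True)  # subtract row max for numerical stability
    exp_scores = np.exp(scores)
    return exp_scores / exp_scores.sum(axis=1, keepdims=True)


def empirical_probs(weights):
    """Empirical p_hat(v_j | v_i) = w_ij / d_i; assumes every v_i has at least one edge."""
    return weights / weights.sum(axis=1, keepdims=True)


def degree_weighted_kl(weights, probs):
    """Objective (2) with d = KL divergence and lambda_i = d_i (the degree)."""
    p_hat = empirical_probs(weights)
    degree = weights.sum(axis=1)
    mask = p_hat > 0  # terms with p_hat = 0 contribute nothing to the KL divergence
    terms = np.zeros_like(p_hat)
    terms[mask] = p_hat[mask] * np.log(p_hat[mask] / probs[mask])
    return float(degree @ terms.sum(axis=1))


def objective_eq3(weights, probs):
    """Objective (3): -sum over edges of w_ij * log p(v_j | v_i)."""
    mask = weights > 0
    return float(-(weights[mask] * np.log(probs[mask])).sum())


rng = np.random.default_rng(0)
W = np.array([[3.0, 1.0, 0.0], [0.0, 2.0, 2.0]])  # toy edge weights, |V_1| = 2, |V_2| = 3
for _ in range(2):  # two different random embeddings
    P = conditional_probs(rng.normal(size=(2, 4)), rng.normal(size=(3, 4)))
    print(degree_weighted_kl(W, P) - objective_eq3(W, P))  # about -5.0219 both times
```

Because the difference never depends on the embeddings, minimizing (3) is equivalent to minimizing (2), which is why the constants can be dropped.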
The final objective function can be formulated as follows:

$$O = \sum_{i \in V_1} \lambda_i \, d\big(\hat{p}(\cdot \mid v_i), p(\cdot \mid v_i)\big), \quad (2)$$

where $d(\cdot, \cdot)$ is a distance function between two probability distributions; here we adopt the widely used KL-divergence. The empirical probability is defined as $\hat{p}(v_j \mid v_i) = w_{ij} / d_i$, where $w_{ij}$ is the weight of edge $(i, j)$ and $d_i$ is the degree of vertex $v_i$. $\lambda_i$ is the prestige of vertex $v_i$ in the network; we use a very simple approach and set $\lambda_i = d_i = \sum_k w_{ik}$, i.e., the degree of vertex $v_i$. Removing some constants, the objective function (2) can be simplified as below:

$$O = -\sum_{(i,j) \in E_{12}} w_{ij} \log p(v_j \mid v_i). \quad (3)$$

As mentioned above, the identity-sensitive word embeddings can be obtained by embedding the heterogeneous network constructed from the identity-labeled text corpus. The heterogeneous network includes two bipartite networks: the word-context network and the word-identity network. Therefore, an intuitive approach is to minimize the following objective function:

$$O_{HN} = O_{wc} + O_{wi}, \quad (4)$$

where $O_{wc}$ is the objective function for the word-context network, defined as

$$O_{wc} = -\sum_{(j,k) \in E_{wc}} w_{jk} \log p(w_j \mid c_k), \quad (5)$$

and $O_{wi}$ is the objective function for the word-identity network, defined as

$$O_{wi} = -\sum_{(j,k) \in E_{wi}} w_{jk} \log p(w_j \mid i_k). \quad (6)$$

Note that for the objective function (4), a better approach may be to weight the two networks differently. However, we found that weighting the two networks equally consistently performs very well across data sets, which saves users the effort of choosing an optimal parameter for controlling the relative importance of the two networks.

Optimization. Next, we describe how to optimize the objective function (4). The optimization can be approached through the techniques of negative sampling [13] and edge sampling [24].
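The optimization described in this part (negative sampling for objective (7), edge sampling, and alternately drawing edges from $E_{wc}$ and $E_{wi}$ as in Alg. 1) can be sketched in NumPy as below. This is a minimal single-threaded sketch under stated assumptions, not the authors' implementation: vertices are integer ids, the word frequency $t(w)$ is approximated by each identity-sensitive word's total edge weight in $G_{wc}$, sampled negatives that coincide with the positive word are skipped, the learning rate decays linearly as in Section 5.1.5 with an assumed floor of $10^{-4}\rho_0$, and all function and argument names are hypothetical.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def sgd_update(W, other, j, k, negatives, lr):
    """One gradient-ascent step on log s(w_j . o_k) + sum_n log s(-w_n . o_k)."""
    o = other[k].copy()  # context (or identity) vector being conditioned on
    grad_o = np.zeros_like(o)
    samples = [(j, 1.0)] + [(n, 0.0) for n in negatives if n != j]
    for n, label in samples:
        g = lr * (label - sigmoid(W[n] @ o))
        grad_o += g * W[n]  # uses W[n] before its own update
        W[n] += g * o
    other[k] += grad_o


def train_ise(wc_edges, wi_edges, n_words, n_contexts, n_identities,
              dim=100, K=5, T=100_000, rho0=0.025, seed=0):
    """wc_edges / wi_edges: sequences of (word_id, context_or_identity_id, weight)."""
    rng = np.random.default_rng(seed)
    W = (rng.random((n_words, dim)) - 0.5) / dim  # identity-sensitive word embeddings
    C = np.zeros((n_contexts, dim))                # context embeddings
    I = np.zeros((n_identities, dim))              # embeddings of word identities
    wc, wi = np.asarray(wc_edges, float), np.asarray(wi_edges, float)
    # Edge sampling: draw edges with probability proportional to weight, treat them as binary.
    wc_draws = rng.choice(len(wc), size=T, p=wc[:, 2] / wc[:, 2].sum())
    wi_draws = rng.choice(len(wi), size=T, p=wi[:, 2] / wi[:, 2].sum())
    # Noise distribution P_n(w) proportional to t(w)^0.75.
    freq = np.bincount(wc[:, 0].astype(int), weights=wc[:, 2], minlength=n_words)
    noise = freq ** 0.75
    noise /= noise.sum()
    negs = rng.choice(n_words, size=(T, 2, K), p=noise)
    for t in range(T):
        lr = max(rho0 * (1.0 - t / T), rho0 * 1e-4)  # linear decay with a small floor
        j, k, _ = wc[wc_draws[t]]
        sgd_update(W, C, int(j), int(k), negs[t, 0], lr)  # word-context edge
        j, k, _ = wi[wi_draws[t]]
        sgd_update(W, I, int(j), int(k), negs[t, 1], lr)  # word-identity edge
    return W, C, I
```

Pre-drawing all edge and negative indices keeps the loop simple, and because sampled edges are treated as binary, the step size no longer depends on the raw co-occurrence counts.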
For each vertex $v_i \in V_1$, the conditional probability (1) requires calculating the similarities between vertex $v_i$ and all the vertices in the set $V_2$, which is very computationally expensive. The negative sampling technique addresses this by drawing several negative edges for each positive edge. Specifically, it minimizes the following objective function:

$$O_{ns} = -\sum_{(j,k) \in E_{wc}} w_{jk} \Big( \log \sigma(\vec{w}_j^{\,T} \cdot \vec{c}_k) + \sum_{n=1}^{K} \mathbb{E}_{w_n \sim P_n(w)} \log \sigma(-\vec{w}_n^{\,T} \cdot \vec{c}_k) \Big) - \sum_{(j,k) \in E_{wi}} w_{jk} \Big( \log \sigma(\vec{w}_j^{\,T} \cdot \vec{i}_k) + \sum_{n=1}^{K} \mathbb{E}_{w_n \sim P_n(w)} \log \sigma(-\vec{w}_n^{\,T} \cdot \vec{i}_k) \Big), \quad (7)$$

where $K$ is the number of negative samples for each positive edge, $\sigma(x) = 1/(1 + \exp(-x))$ is the sigmoid function, and $P_n(w)$ is the noise distribution of words used to generate negative edges. Following [13, 24], $P_n(w)$ is usually set as $P_n(w) \propto t(w)^{0.75}$, where $t(w)$ is the frequency of word $w$. The objective function (7) can be optimized through stochastic gradient descent. When an edge $(i, j)$ is sampled, its weight $w_{ij}$ is multiplied into the gradients. This is problematic when the weights diverge, i.e., some weights are very large while others are very small: the gradient magnitude then varies widely with the sampled edge, which makes it very hard to choose an appropriate learning rate. The edge sampling approach [24] effectively addresses this by sampling edges with probabilities proportional to their weights and treating the sampled edges as binary edges.

When using edge sampling to optimize objective function (7), one concern is that the edge weights in the two networks $G_{wc}$ and $G_{wi}$ may not be comparable. This can be resolved by alternately sampling edges from the two networks. The overall learning process is summarized in Alg. 1.

Algorithm 1: Training process of identity-sensitive word embedding.
Data: $G_{wc}$, $G_{wi}$, number of samples $T$, number of negative samples $K$.
Result: identity-sensitive word embeddings, embeddings of word identities.
while iter ≤ T do
  • draw a positive edge from $E_{wc}$ and $K$ negative edges according to the noise distribution $P_n(w)$, and update the identity-sensitive word embeddings and the context embeddings;
  • draw a positive edge from $E_{wi}$ and $K$ negative edges according to the noise distribution $P_n(w)$, and update the identity-sensitive word embeddings and the embeddings of word identities;
end

5. EXPERIMENTS

In this section, we evaluate the effectiveness of our proposed approach on real-world data sets. Three types of word identities are studied: topics, sentiments and categories. The identity-sensitive word embeddings are evaluated through the tasks of text classification and contextual word similarity measurement. We first introduce our experiment setup.

5.1 Experiment Setup

5.1.1 Data Sets

• 20NG, the widely used 20newsgroup data set for text mining (available at http://qwone.com/~jason/20Newsgroups/). On this data set, two types of word identities, topic and category, are recognized.
• DBLP, the abstracts of papers from the computer science bibliography (available at https://aminer.org/billboard/citation). In this data set, 8 diverse research fields are used: “data mining”, “database”, “artificial intelligence”, “computational linguistics”, “hardware”, “system”, “programming language” and “theory”. The topic and category identities are also recognized on this data set.
• WikiSample, a collection of sampled English Wikipedia articles used in [23]. Seven diverse categories from the DBpedia ontology are selected: “Arts”, “History”, “Human”, “Mathematics”, “Nature”, “Technology” and “Sports”. For each category, 9,000 articles are randomly selected and split into the training and test sets.
  On this data set, the topic and category identities are recognized.
• WikiFull, all English Wikipedia articles³. On this data set, the topic identity is recognized. This data set is used for the task of contextual word similarity measurement.
• Twitter, a large collection of tweets used for sentiment analysis⁴. 1,200,000 tweets are sampled and split into training and test data sets. On this data set, the sentiment identity is recognized.
• MR, a movie review data set from [18].
• Treebank, the Stanford Sentiment Treebank data set⁵. The data set for binary sentiment classification is used.

In all the above data sets, stop words are removed. We summarize the statistics of these data sets in Table 1.

5.1.2 Recognizing the Identities of Words

To learn the identity-sensitive word embeddings, the identities of the word tokens in different contexts must be recognized in advance. In this part, we introduce the identity recognition approaches for the different types of identities.

Topic. The topic identities of the tokens in the corpus are estimated through the Gibbs sampling algorithm [7] for latent Dirichlet allocation.

Sentiment. The sentiment identities of the tokens in each training document are determined by the identity of the document. Specifically, if a document has positive sentiment, then all the tokens in the document are assigned the positive identity. However, not all words have a sentiment orientation. Therefore, a feature selection method is first used to select a set of words that are likely to have a sentiment orientation. When recognizing the identities of the tokens in a document, only the words selected by the feature selection method are assigned the identity of the document. For feature selection, a very simple approach is used.
For each word $w$, we calculate its probability of appearing in positive sentiment, $p(w \mid pos)$, and in negative sentiment, $p(w \mid neg)$. Words with $p(w \mid pos) / p(w \mid neg)$ or $p(w \mid neg) / p(w \mid pos)$ larger than a threshold are selected. We empirically set the threshold to 10.

The sentiment identities of the tokens in each test document are determined through the word embeddings learned on the training documents. Note that each word has multiple word embeddings associated with different identities and, in addition, a context embedding used when the word is treated as a context. Given a test document, we recognize the identity of token $w$ in the document as follows:

$$k^* = \arg\max_k \vec{w}_k^{\,T} \vec{c}_{\bar{w}}, \quad (8)$$

where $\vec{w}_k$ is the embedding of word $w$ with identity $k$, and $\vec{c}_{\bar{w}}$ is the average context embedding of all the other words in the document except $w$.

³ Available at https://en.wikipedia.org/wiki/Wikipedia:Database_download
⁴ Available at http://thinknook.com/twitter-sentiment-analysis-training-corpus-dataset-2012-09-22/
⁵ Available at http://nlp.stanford.edu/sentiment/index.html

Table 1: Statistics of the data sets.

| | 20NG | DBLP | WikiSample | WikiFull | Twitter | MR | Treebank |
|---|---|---|---|---|---|---|---|
| # Train | 11,314 | 25,026 | 42,000 | 3,405,189 | 800,000 | 7,108 | 7,792 |
| # Test | 7,532 | 12,520 | 21,000 | - | 400,000 | 3,554 | 1,821 |
| Avg. Doc. Length | 305.77 | 77.04 | 672.56 | 224.20 | 14.36 | 22.02 | 20.28 |
| # Category | 20 | 8 | 7 | - | 2 | 2 | 2 |
| Identity | topic, category | topic, category | topic, category | topic | sentiment | sentiment | sentiment |

Category. For the category identity, the tokens in each training document are assigned the identity of the document. The identities of the tokens in the test documents are recognized in the same way as the sentiment identity, according to Eqn. (8).

5.1.3 Compared Algorithms

• BOW: the classical “bag-of-words” representation.
  Each document is represented with a $|V|$-dimensional vector, with each dimension corresponding to a word. The weight of each word/dimension is calculated according to TFIDF weighting [20].
• Skipgram: the widely used word embedding algorithm Skipgram [13]. In the Skipgram model, words in different contexts are treated as the same words, and hence each word is represented with only a single embedding vector.
• LINE: our previous work on large-scale information network embedding [24]. The LINE model can be applied to learn word embeddings by first building the word co-occurrence network and then embedding it. Words in different contexts are also not distinguished.
• LDA: the latent Dirichlet allocation model [2].
• MSSG: the multi-sense Skipgram model proposed in [17]. The MSSG model dynamically learns multiple embeddings for each word during training, each of which captures a different sense of the word. In the MSSG model, we set the number of senses to 3 by default, following [17].
• TWE: the topical word embedding model proposed in [11], in which topics are used as the identities of words. The TWE model can also be used when other types of identities are recognized. We use the second variant of TWE [11], as it allows each word to have different embeddings for different identities.
• ISE: our solution for identity-sensitive word embedding. A heterogeneous network is constructed from the identity-labeled text corpus according to Section 3, and then the heterogeneous network is embedded according to Section 4.

5.1.4 Evaluation

We evaluate the different word embeddings through the tasks of text classification and contextual word similarity measurement.

Text classification. All the models are trained on the training data sets to learn the word embeddings and evaluated on the test data sets.
To embed the documents in both the training and test data sets, a very simple approach is used: averaging the word vectors of each document. Note that our goal is not to obtain optimal document embeddings but to compare the word embeddings learned by different approaches; for more advanced document embedding techniques, see recursive neural networks [21] or convolutional neural networks [9]. Once the embeddings of the training and test documents are obtained, the one-vs-rest logistic regression model in the LibLinear⁶ package is used for classification. We evaluate the classification performance with the Micro-F1 and Macro-F1 metrics.

Contextual Word Similarity. We also evaluate the quality of the different word embeddings through the task of contextual word similarity measurement, introduced in [8]. Given two sentences, the task is to measure the similarity between a pair of words, one from each sentence. For evaluation, we use the data set in [8], which includes 2,003 word pairs and their sentential contexts collected from Wikipedia; the contextual similarity scores of these word pairs were collected from Amazon Mechanical Turk. We train on the WikiFull data set, in which the topic identities of the tokens are recognized through the Gibbs sampling algorithm, to obtain the identity-sensitive word embeddings. Given a pair of words (w, w′) with their sentential contexts, we first recognize the identities of the two words in their contexts according to Eqn. (8), which yields the corresponding embeddings of the two words. The similarity between the two words is then calculated as the cosine similarity of these embeddings.
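The token-level identity recognition of Eqn. (8), followed by the cosine similarity of the selected identity-specific embeddings, can be sketched as below. This is a minimal pure-Python illustration, not the authors' implementation: the helper names (`recognize_identity`, `contextual_similarity`, etc.) and the toy 2-dimensional embeddings are hypothetical, and "all the other words except w" is assumed to mean every other token position in the document (other occurrences of the same word are kept).

```python
import math
from typing import Dict, List, Sequence

Vector = Sequence[float]


def dot(u: Vector, v: Vector) -> float:
    return sum(a * b for a, b in zip(u, v))


def cosine(u: Vector, v: Vector) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero norm."""
    norm_u = math.sqrt(dot(u, u))
    norm_v = math.sqrt(dot(v, v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot(u, v) / (norm_u * norm_v)


def average_context(context_emb: Dict[str, Vector], tokens: List[str], position: int) -> List[float]:
    """Mean context embedding of every token except the one at `position`.

    Assumption: tokens without a context embedding are skipped.
    """
    others = [context_emb[t] for i, t in enumerate(tokens) if i != position and t in context_emb]
    if not others:
        raise ValueError("no context tokens with embeddings")
    dim = len(others[0])
    return [sum(vec[d] for vec in others) / len(others) for d in range(dim)]


def recognize_identity(identity_emb: Dict[str, List[Vector]], context_emb: Dict[str, Vector],
                       tokens: List[str], position: int) -> int:
    """Eqn. (8): k* = argmax_k w_k^T c_bar, with c_bar the averaged context embedding."""
    c_bar = average_context(context_emb, tokens, position)
    candidates = identity_emb[tokens[position]]
    return max(range(len(candidates)), key=lambda k: dot(candidates[k], c_bar))


def contextual_similarity(identity_emb, context_emb, tokens_a, pos_a, tokens_b, pos_b) -> float:
    """Cosine similarity of the identity-specific embeddings selected by Eqn. (8)."""
    k_a = recognize_identity(identity_emb, context_emb, tokens_a, pos_a)
    k_b = recognize_identity(identity_emb, context_emb, tokens_b, pos_b)
    return cosine(identity_emb[tokens_a[pos_a]][k_a], identity_emb[tokens_b[pos_b]][k_b])


# Toy example with hypothetical 2-d embeddings: "bank" has two identities.
identity_emb = {"bank": [[1.0, 0.0], [0.0, 1.0]]}
context_emb = {"money": [1.0, 0.0], "deposit": [0.9, 0.1], "river": [0.0, 1.0], "water": [0.1, 0.9]}
finance = ["deposit", "money", "bank"]
nature = ["river", "water", "bank"]
print(recognize_identity(identity_emb, context_emb, finance, 2))  # 0
print(recognize_identity(identity_emb, context_emb, nature, 2))  # 1
print(contextual_similarity(identity_emb, context_emb, finance, 2, nature, 2))  # 0.0
```

In the toy data, the two occurrences of "bank" are assigned different identities by their contexts, so their contextual similarity is low even though the surface word is the same; a single-embedding model such as Skipgram would score them as identical.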
Once the similarities between all word pairs are calculated, we compute the Spearman correlation between the similarities derived from the embeddings and the similarity scores given by humans.

5.1.5 Parameter Settings

Unless explicitly specified otherwise, the dimension of the word embeddings is set to 100 for all models. The window size is set to 5 for Skipgram, MSSG and TWE, and when constructing the word-context network for both LINE and ISE. The learning rate of LINE and ISE is set as $\rho_t = \rho_0 (1 - t/T)$, where $T$ is the total number of edge samples and $\rho_0 = 0.025$ is the initial learning rate. The number of negative samples is set to 5 in all the embedding models. The number of samples $T$ can be safely set to be very large, e.g., 500 million.

⁶ http://www.csie.ntu.edu.tw/~cjlin/liblinear/

5.2 Quantitative Results

Let us start with the results of text classification. Table 2 reports the results on the 20NG, DBLP and WikiSample data sets, on which the topic and category identities are recognized. We first compare the approaches that do not consider word meanings in different contexts, namely Skipgram and LINE. LINE consistently outperforms Skipgram on all three data sets, which is consistent with the results reported in [24, 23]. The reason is that LINE learns word embeddings by first constructing the word co-occurrence network and then embedding the network, which captures more global structure of word co-occurrences than learning directly from free text does. The LDA, MSSG, TWE and ISE models, by contrast, all consider the variation of word meanings across contexts.
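The network-based pipeline described above for LINE (and for the word-context network of ISE, with window size 5) and the linearly decaying learning rate from Section 5.1.5 can be sketched as follows. This is a simplified illustration under stated assumptions, not the authors' code: edge weights are assumed to be raw co-occurrence counts within a symmetric window, self-loops are dropped, edges are drawn with probability proportional to their weights, and all helper names are hypothetical. The exact network definitions are those of Section 3.

```python
import random
from collections import Counter
from typing import List, Tuple

Edge = Tuple[str, str]


def build_cooccurrence_network(docs: List[List[str]], window: int = 5) -> Counter:
    """Undirected word-word network. Assumption: the edge weight is the number of
    times two words co-occur within `window` tokens of each other."""
    edges: Counter = Counter()
    for tokens in docs:
        for i, u in enumerate(tokens):
            for j in range(i + 1, min(i + window + 1, len(tokens))):
                v = tokens[j]
                if u == v:
                    continue  # assumption: self-loops are dropped
                edges[(u, v) if u < v else (v, u)] += 1
    return edges


def sample_edges(edges: Counter, num_samples: int, seed: int = 0) -> List[Edge]:
    """Draw edges with probability proportional to their weights."""
    rng = random.Random(seed)
    population = list(edges.keys())
    weights = list(edges.values())
    return rng.choices(population, weights=weights, k=num_samples)


def learning_rate(t: int, total: int, rho0: float = 0.025) -> float:
    """Linearly decaying schedule rho_t = rho0 * (1 - t / T)."""
    return rho0 * (1.0 - t / total)


docs = [["deep", "learning", "for", "text"], ["text", "mining", "and", "learning"]]
network = build_cooccurrence_network(docs, window=5)
T = 10  # total number of edge samples; Section 5.1.5 suggests e.g. 500 million
samples = sample_edges(network, T)
for t, (u, v) in enumerate(samples):
    rho = learning_rate(t, T)
    # Placeholder: an SGD step on the embeddings of u and v with step size rho
    # (with negative sampling) would go here.
```

In the toy corpus, the pair ("learning", "text") co-occurs in both documents and therefore receives weight 2, making it twice as likely to be sampled as any other edge.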
In LDA, the same word may be assigned to different topics in different documents; in MSSG, each word can take different senses in different contexts; in TWE and ISE, the meanings of words are determined by their identities, which differ depending on the context. Although LDA is able to differentiate the meanings of words in different contexts by inferring their topic identities, its performance is not good. This is because the meanings of words assigned to the same topic are assumed to be exactly the same, which may not be reasonable. Our approach effectively complements this through identity-sensitive word embedding, which differentiates the meanings of words within the same topic/identity. The performance of MSSG is even inferior to that of Skipgram. The reason may be that MSSG dynamically infers multiple senses of words, and it may be very hard to accurately estimate the senses of words in different contexts during the learning process. With the topic identity, both TWE and ISE outperform Skipgram; ISE also outperforms LINE on all three data sets, whereas TWE does so only on 20NG. Our ISE model also consistently outperforms TWE. The reasons are twofold. First, our ISE approach leverages the identity-level word co-occurrences, which TWE does not utilize; second, our ISE model learns word embeddings by embedding the heterogeneous network, which captures more global structure of word co-occurrences than free text does. We also notice that the performance of TWE becomes very poor when using the category identity, even inferior to that of Skipgram. The reason is that the category identities of the tokens in the test documents are very hard for the TWE model to recognize.
However, our ISE model can effectively recognize the identities of the tokens in the test documents through the context embeddings of words, which are not available in the TWE model. We also notice that the results of ISE with the category identity are better than those with the topic identity, because the category identity is more relevant to the text classification task than the topic identity. With the category identity, our ISE model also outperforms the BOW method, whose dimensionality is much larger.

Table 3 reports the results of text classification on the Twitter, MR and Treebank data sets, on which the sentiment identity is recognized. The results are similar to those on the 20NG, DBLP and WikiSample data sets. The performance of LDA is still not good. LINE consistently outperforms Skipgram, and MSSG is still inferior to Skipgram. TWE with the sentiment identity also performs poorly, even worse than LINE on Twitter and MR. By considering the sentiment identity, our ISE model performs the best on Twitter and MR; on Treebank it is slightly inferior to BOW, which uses a much larger feature dimension.

[Figure 2: Performance of ISE w.r.t. the number of identities (topics). Panels: (a) 20NG, (b) DBLP. x-axis: Number of Topics (20 to 100); y-axis: Micro-F1; curves: ISE(topic) and LDA.]

We are also interested in how the number of identities affects performance. Fig. 2 reports the text classification performance w.r.t. the number of identities (topics) on the 20NG and DBLP data sets. Compared to LDA, the performance of the ISE model is quite stable and not sensitive to the number of topics, which saves the effort of choosing an appropriate number of topics, as is required when using LDA.
The performance gain over LDA is especially significant when the specified number of topics is smaller than the optimal one. The reason is that LDA does not differentiate the meanings of words within the same topic/identity, which is a serious problem when the number of topics is small, while our ISE model differentiates the meanings of words with the same topic/identity through embedding.

5.2.1 Contextual Word Similarity

Table 4: Results of contextual word similarity with word embeddings trained on the WikiFull data set. Topic identity is utilized.

| Algorithm | Spearman correlation (d=50) | Spearman correlation (d=300) |
|---|---|---|
| MSSG | 49.17 | 57.26 |
| TWE(topic) | 57.60 | 61.23 |
| ISE(topic) | 58.54 | 62.27 |

Next, let us look at the results on the task of contextual word similarity. Table 4 reports the results on the WikiFull data set. We only compare the word embedding approaches that consider contextual word meanings, namely MSSG, TWE and ISE. For TWE and ISE, we use the topic identity because it can be estimated in an unsupervised way through LDA; 200 topics are estimated. TWE and ISE significantly outperform MSSG, which is consistent with the previous results on text classification. ISE still outperforms TWE, as it leverages identity-level word co-occurrences and represents different levels of word co-occurrences through heterogeneous networks.

Table 2: Results of text classification on the 20NG, DBLP and WikiSample data sets. Two types of identities, topics and categories, are considered. For the topic identity, the numbers of topics are set to 80, 80 and 100 on the three data sets, respectively.

| Algorithm | 20NG Micro-F1 | 20NG Macro-F1 | DBLP Micro-F1 | DBLP Macro-F1 | WikiSample Micro-F1 | WikiSample Macro-F1 |
|---|---|---|---|---|---|---|
| BOW | 80.88 | 79.30 | 82.37 | 82.50 | 79.95 | 80.03 |
| LDA | 70.17 | 68.10 | 79.00 | 79.19 | 74.61 | 74.61 |
| Skipgram | 76.82 | 75.75 | 80.72 | 80.89 | 77.92 | 77.98 |
| LINE | 78.76 | 77.75 | 81.41 | 81.56 | 78.79 | 78.85 |
| MSSG | 75.66 | 74.59 | 80.06 | 80.22 | 77.61 | 77.69 |
| TWE(topic) | 79.95 | 78.94 | 80.83 | 81.05 | 78.17 | 78.18 |
| ISE(topic) | 81.29 | 82.00 | 82.20 | 82.44 | 79.29 | 79.30 |
| TWE(category) | 71.63 | 71.67 | 79.75 | 79.71 | 76.47 | 76.47 |
| ISE(category) | 84.64 | 83.89 | 83.65 | 83.71 | 80.46 | 80.46 |

Table 3: Results of text classification on the Twitter, MR and Treebank data sets. The sentiment identity is recognized on these three data sets.

| Algorithm | Twitter Micro-F1 | Twitter Macro-F1 | MR Micro-F1 | MR Macro-F1 | Treebank Micro-F1 | Treebank Macro-F1 |
|---|---|---|---|---|---|---|
| BOW | 75.27 | 75.27 | 71.90 | 71.90 | 79.51 | 79.47 |
| LDA | 65.33 | 65.32 | 56.74 | 56.74 | 63.28 | 63.28 |
| Skipgram | 73.00 | 73.02 | 67.05 | 67.05 | 72.76 | 72.76 |
| LINE | 73.18 | 73.19 | 71.06 | 71.06 | 74.79 | 74.78 |
| MSSG | 72.98 | 72.97 | 65.00 | 64.09 | 71.39 | 71.39 |
| TWE(sentiment) | 72.67 | 72.67 | 70.81 | 70.82 | 74.95 | 74.94 |
| ISE(sentiment) | 75.50 | 75.50 | 73.52 | 73.51 | 78.47 | 78.43 |

5.3 Nearest Neighbors

To intuitively compare the embeddings learned by different approaches, we look at the nearest neighbors of words based on the embeddings. The similarity between words is calculated as the cosine similarity of their word embeddings. Table 5 presents some examples of nearest neighbors based on the embeddings learned by different approaches on the WikiFull data set. Overall, the word embeddings learned by our ISE approach are indeed able to capture different meanings of the same word. Take the word "language" as an example. The three different meanings of "language" recognized by our ISE model are "linguistic", "programming language" and "human language". The MSSG model only discovers two different meanings.
The result of Skipgram is even worse: its nearest neighbors are a mixture of multiple meanings.

5.4 Visualization of Word Embeddings

Fig. 3 shows a visualization of our identity-sensitive word embeddings on the DBLP data set with the topic identity. The words are mapped to a 2-dimensional space with t-SNE [26]. Fig. 3(a) gives an overview of the visualization: different colors represent different topics/identities, and the top 50 ranked words in each topic are visualized. At the bottom right, we zoom in on part of the visualization and present two topics. Overall, the identity-sensitive word embeddings have a very clear semantic structure: words belonging to the same identities/topics are clustered together, and different identities/topics are well separated. At the bottom of the two topic examples in Fig. 3(a), we find that the instances of the word "probability" taking on different identities/topics are very close to each other. This may be explained by the linguistic theory that the different senses of a word are still very close in meaning [4]. Figs. 3(b), 3(c), 3(d) and 3(e) show some examples of words that appear in multiple topics (i.e., have multiple identities), namely "networks", "learning", "language" and "semantic". For example, the word "networks" can refer to "Bayesian networks" or "wireless networks"; "language" can refer to "programming language" or "natural language"; and "learning" can refer to "machine learning" or "human learning".

6. CONCLUSION

In this paper, we studied the problem of contextual word embeddings. We acknowledged that the same word can take on different identities in different contexts, and proposed to learn identity-sensitive word embeddings. We proposed to represent different levels of word co-occurrences with a heterogeneous network.
A principled heterogeneous network embedding approach based on our previous work is further proposed, which is able to obtain both the identity-sensitive word embeddings and the embeddings of word identities. Experiments show that the identity-sensitive word embeddings learned by our approach are indeed able to capture different meanings of the same word, and experiments on text classification and contextual word similarity demonstrate the effectiveness of the proposed approach.

In the future, we plan to study more types of word identities, such as POS tags and ideologies. Another interesting problem to investigate is whether identity-sensitive word embeddings can improve the recognition of the identities of word tokens in different contexts; for example, whether they can be useful in the topic modeling process.

Table 5: Comparison of nearest neighbors based on embeddings learned by different approaches on the WikiFull data set.

| Word | Algorithm | Most similar words |
|---|---|---|
| language | ISE(topic) | multilingual, speaking, vocabulary, write, linguistic |
| | | programming, java, compiler, implementation, oriented |
| | | Hindi, Malayalam, Bengali, Marathi, Oriya |
| | MSSG | programming, procedural, lisp, java, object |
| | | vernacular, English, speakers, siswati, berber |
| | Skipgram | multilingual, englishmandarin, spoken, linguistic, english |
| bank | ISE(topic) | branch, trust, commercial, savings, deposit |
| | | river, opposite, mouth, crossing, bridge |
| | MSSG | repurchases, securitizes, money, credit, stock |
| | | thouet, swale, north, east, side |
| | Skipgram | citibank, HSBC, vanquish, vanquish, postbank |
| star | ISE(topic) | sky, phoenix, globe, golden, blue |
| | | series, movies, comics, prequel, film |
| | MSSG | nebula, orion, giant, moon, planet |
| | | episode, batman, prequel, series, movie |
| | Skipgram | sunlike, gazers, hypergiant, supergiant, galaxy |
| windows | ISE(topic) | server, operating, version, Vista, XP |
| | | rear, floor, large, roof, entrance |
| | MSSG | vista, os, XP, MS, dos |
| | | doors, openings, arcade, freestanding, sockets |
| | Skipgram | microsoft, desktop, console, linux, cygwin |
| left | ISE(topic) | leaving, moving, position, coming, return |
| | | upper, middle, facing, side, top |
| | MSSG | leaving, returned, stayed, joined, leave |
| | | right, radishes, centerline, backcourts, kingside |
| | Skipgram | right, leaving, coming, arrive, leave |
| fox | ISE(topic) | nba, abc, cbs, espn, news |
| | | hawk, eagle, buck, bird, duck |
| | MSSG | wolf, bear, ringneck, bullfrogs, antelope |
| | | upn, fxm, nbc, wfft, kmov |
| | Skipgram | cbs, nbc, abc, ktvi, wtxf |
| apple | ISE(topic) | strawberry, apples, plum, blueberry, cherry |
| | | iphone, ipod, ipad, store, ios |
| | | macintosh, mac, computers, server, products |
| | MSSG | pc, macintosh, IBM, Microsoft, Intel |
| | | pear, honey, pumpkin, potato, nut |
| | | Microsoft, activision, sony, retail, gamestop |
| | Skipgram | blackberry, macintosh, appleworks, ibook, iwork |
| network | ISE(topic) | areas, exchange, distributed, services, link |
| | | abc, programming, broadcast, stations, nationwide |
| | | rail, lines, stations, traffic, routes |
| | MSSG | connection, wan, routing, noncentralized, protocol, ip |
| | | channel, cable, tv, broadcaster, newsasia |
| | Skipgram | citynet, cablecast, cable, csnet, channel |

7. REFERENCES

[1] Y. Bengio, R. Ducharme, P. Vincent, and C. Janvin. A neural probabilistic language model. The Journal of Machine Learning Research, 3:1137–1155, 2003.
[2] D. M. Blei, A. Y. Ng, and M. I. Jordan. Latent Dirichlet allocation. The Journal of Machine Learning Research, 3:993–1022, 2003.
[3] X. Chen, Z. Liu, and M. Sun. A unified model for word sense representation and disambiguation. In EMNLP, pages 1025–1035, 2014.
[4] N. Chomsky. Current Issues in Linguistic Theory, volume 38. Walter de Gruyter, 1988.
[5] R. Collobert, J. Weston, L. Bottou, M. Karlen, K. Kavukcuoglu, and P. Kuksa. Natural language processing (almost) from scratch. JMLR, 12:2493–2537, 2011.
[6] J. R. Firth. A synopsis of linguistic theory, 1930–1955. In J. R. Firth (Ed.), Studies in Linguistic Analysis, pages 1–32.
[7] T. L. Griffiths and M. Steyvers. Finding scientific topics. Proceedings of the National Academy of Sciences, 101(suppl 1):5228–5235, 2004.
[8] E. H. Huang, R. Socher, C. D. Manning, and A. Y. Ng. Improving word representations via global context and multiple word prototypes. In ACL, pages 873–882. Association for Computational Linguistics, 2012.
[9] Y. Kim. Convolutional neural networks for sentence classification. arXiv preprint arXiv:1408.5882, 2014.
[10] J. Lafferty, A. McCallum, and F. C. Pereira. Conditional random fields: Probabilistic models for segmenting and labeling sequence data. 2001.
[11] Y. Liu, Z. Liu, T.-S. Chua, and M. Sun. Topical word embeddings. In AAAI, 2015.
[12] A. L. Maas, R. E. Daly, P. T. Pham, D. Huang, A. Y. Ng, and C. Potts. Learning word vectors for sentiment analysis. In Proceedings of the 49th Annual Meeting of the Association for Computational Linguistics: Human Language Technologies - Volume 1, pages 142–150. Association for Computational Linguistics, 2011.
[13] T. Mikolov, I. Sutskever, K. Chen, G. S. Corrado, and J. Dean. Distributed representations of words and phrases and their compositionality. In NIPS, pages 3111–3119, 2013.
[14] A. Mnih and G. Hinton. Three new graphical models for statistical language modelling. In ICML, pages 641–648. ACM, 2007.
[15] A. Mnih and G. E. Hinton. A scalable hierarchical distributed language model. In NIPS, pages 1081–1088, 2009.
[16] A. Mnih and K. Kavukcuoglu. Learning word embeddings efficiently with noise-contrastive estimation. In Advances in Neural Information Processing Systems, pages 2265–2273, 2013.
[17] A. Neelakantan, J. Shankar, A. Passos, and A. McCallum. Efficient non-parametric estimation of multiple embeddings per word in vector space. arXiv preprint arXiv:1504.06654, 2015.
[18] B. Pang and L. Lee. Seeing stars: Exploiting class relationships for sentiment categorization with respect to rating scales. In Proceedings of the 43rd Annual Meeting on Association for Computational Linguistics, pages 115–124. Association for Computational Linguistics, 2005.
[19] J. Pennington, R. Socher, and C. D. Manning. GloVe: Global vectors for word representation. EMNLP, 12:1532–1543, 2014.
[20] G. Salton and C. Buckley. Term-weighting approaches in automatic text retrieval. Information Processing & Management, 24(5):513–523, 1988.
[21] R. Socher, A. Perelygin, J. Y. Wu, J. Chuang, C. D. Manning, A. Y. Ng, and C. Potts. Recursive deep models for semantic compositionality over a sentiment treebank. In Proceedings of the Conference on Empirical Methods in Natural Language Processing (EMNLP), volume 1631, page 1642. Citeseer, 2013.
[22] H. Tajfel. Social identity and intergroup behaviour. Social Science Information/sur les sciences sociales, 1974.
[23] J. Tang, M. Qu, and Q. Mei. PTE: Predictive text embedding through large-scale heterogeneous text networks. In KDD, 2015.
[24] J. Tang, M. Qu, M. Wang, M. Zhang, J. Yan, and Q. Mei. LINE: Large-scale information network embedding. In WWW, pages 1067–1077. International World Wide Web Conferences Steering Committee, 2015.
[25] J. C. Turner, M. A. Hogg, P. J. Oakes, S. D. Reicher, and M. S. Wetherell. Rediscovering the Social Group: A Self-Categorization Theory. Basil Blackwell, 1987.
[26] L. Van der Maaten and G. Hinton. Visualizing data using t-SNE. Journal of Machine Learning Research, 9(2579-2605):85, 2008.

[Figure 3: Visualization of the identity-sensitive word embeddings on the DBLP data set using t-SNE [26]. Topic identity is used. Different colors represent different identities (topics). Panels: (a) overview of the identity-sensitive word embeddings; (b) the meanings of the word "networks"; (c) the meanings of the word "learning"; (d) the meanings of the word "language"; (e) the meanings of the word "semantic".]
