Gaussian Attention Model and Its Application to Knowledge Base Embedding and Question Answering

We propose the Gaussian attention model for content-based neural memory access. With the proposed attention model, a neural network has the additional degree of freedom to control the focus of its attention from a laser sharp attention to a broad att…

Authors: Liwen Zhang, John Winn, Ryota Tomioka

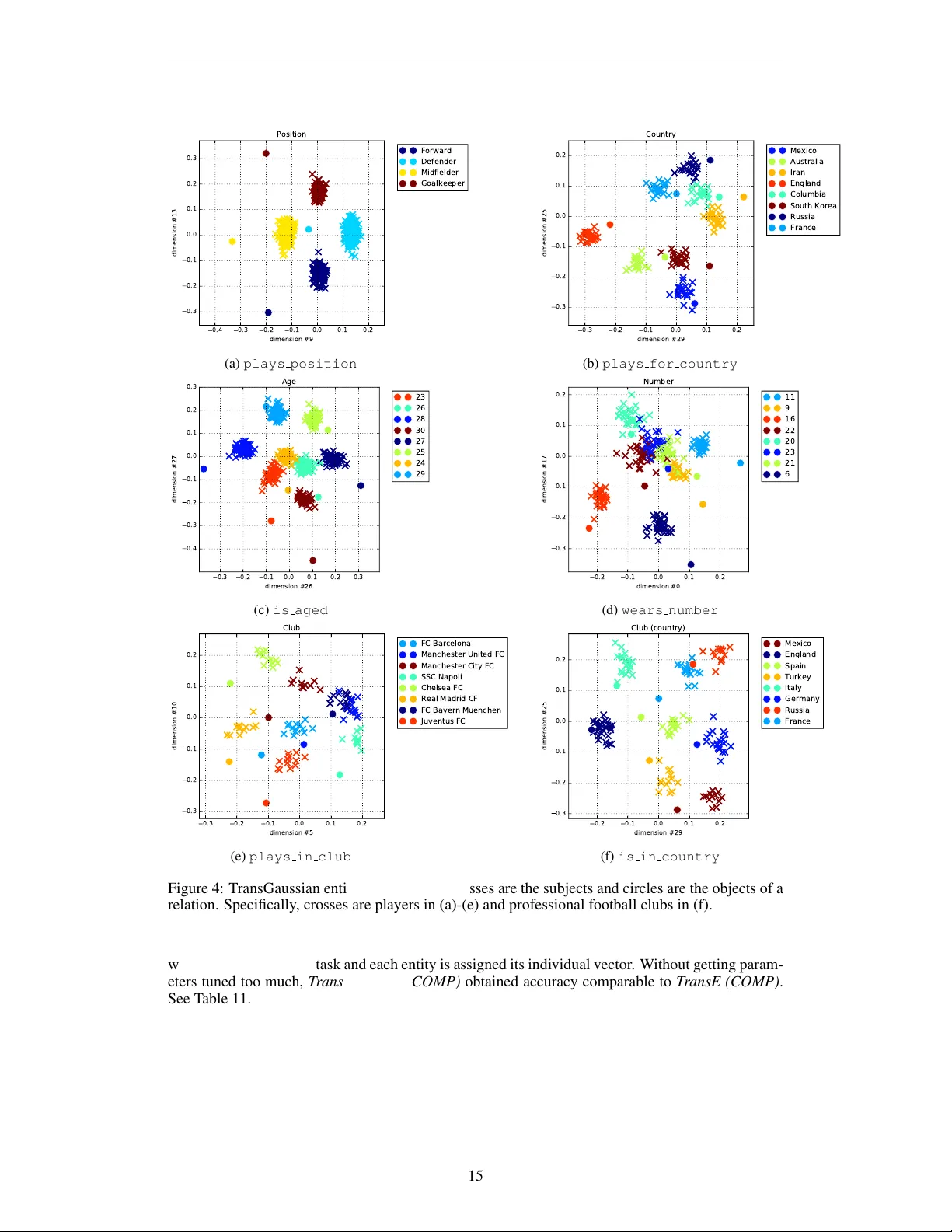

Under review as a conference paper at ICLR 2017

Liwen Zhang, Department of Computer Science, University of Chicago, Chicago, IL 60637, USA, liwenz@cs.uchicago.edu

John Winn & Ryota Tomioka, Microsoft Research Cambridge, Cambridge, CB1 2FB, UK, {jwinn, ryoto}@microsoft.com

ABSTRACT

We propose the Gaussian attention model for content-based neural memory access. With the proposed attention model, a neural network has the additional degree of freedom to control the focus of its attention from a laser sharp attention to a broad attention. It is applicable whenever we can assume that the distance in the latent space reflects some notion of semantics. We use the proposed attention model as a scoring function for the embedding of a knowledge base into a continuous vector space and then train a model that performs question answering about the entities in the knowledge base. The proposed attention model can handle both the propagation of uncertainty when following a series of relations and also the conjunction of conditions in a natural way. On a dataset of soccer players who participated in the FIFA World Cup 2014, we demonstrate that our model can handle both path queries and conjunctive queries well.

1 INTRODUCTION

There is a growing interest in incorporating external memory into neural networks. For example, memory networks (Weston et al., 2014; Sukhbaatar et al., 2015) are equipped with static memory slots that are content or location addressable. Neural Turing machines (Graves et al., 2014) implement memory slots that can be read and written as in Turing machines (Turing, 1938) but through a differentiable attention mechanism. Each memory slot in these models stores a vector corresponding to a continuous representation of the memory content.

In order to recall a piece of information stored in memory, attention is typically employed. The attention mechanism introduced by Bahdanau et al. (2014) uses a network that outputs a discrete probability mass over memory items. A memory read can be implemented as a weighted sum of the memory vectors in which the weights are given by the attention network. Reading out a single item can be realized as a special case in which the output of the attention network is peaked at the desired item. The attention network may depend on the current context as well as the memory item itself. The attention model is called location-based if it depends on the location in the memory, and content-based if it depends on the stored memory vector.

Knowledge bases, such as WordNet and Freebase, can also be stored in memory either through an explicit knowledge base embedding (Bordes et al., 2011; Nickel et al., 2011; Socher et al., 2013) or through a feedforward network (Bordes et al., 2015).

When we embed entities from a knowledge base in a continuous vector space, if the capacity of the embedding model is appropriately controlled, we expect semantically similar entities to be close to each other, which will allow the model to generalize to unseen facts. However, the notion of proximity may strongly depend on the type of a relation. For example, Benjamin Franklin was an engineer but also a politician. We would need different metrics to capture his proximity to other engineers and politicians of his time.

Figure 1 (panels: Inner-product, Gaussian, Gaussian): Comparison of the conventional content-based attention model using inner product and the proposed Gaussian attention model with the same mean but two different covariances.

In this paper, we propose a new attention model for content-based addressing.
Our model scores each item $v_{\text{item}}$ in the memory by the (logarithm of the) multivariate Gaussian likelihood as follows:

$$\mathrm{score}(v_{\text{item}}) = \log \phi(v_{\text{item}} \mid \mu_{\text{context}}, \Sigma_{\text{context}}) = -\frac{1}{2} (v_{\text{item}} - \mu_{\text{context}})^\top \Sigma_{\text{context}}^{-1} (v_{\text{item}} - \mu_{\text{context}}) + \text{const.} \tag{1}$$

where context denotes all the variables that the attention depends on. For example, “American engineers in the 18th century” or “American politicians in the 18th century” would be two contexts that include Benjamin Franklin, but the two attentions would have very different shapes.

Compared to the (normalized) inner product used in previous work (Sukhbaatar et al., 2015; Graves et al., 2014) for content-based addressing, the Gaussian model has the additional control of the spread of the attention over items in the memory. As we show in Figure 1, we can view the conventional inner-product-based attention and the proposed Gaussian attention as addressing by an affine energy function and a quadratic energy function, respectively. By making the addressing mechanism more complex, we may represent many entities in a relatively low dimensional embedding space. Since knowledge bases are typically extremely sparse, it is more likely that we can afford to have a more complex attention model than a large embedding dimension.

We apply the proposed Gaussian attention model to question answering based on knowledge bases. At a high level, the goal of the task is to learn the mapping from a natural-language question about objects in the knowledge base to a probability distribution over the entities. We use the scoring function (1) both for embedding the entities as vectors and for extracting the conditions mentioned in the question and taking a conjunction of them to score each candidate answer to the question.
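
To make the contrast between the affine and the quadratic energy concrete, here is a minimal sketch in plain Python. It assumes a diagonal covariance (the restriction adopted later in Section 2.1) and drops the additive constant of Eq. (1); the three-item memory, the query vector, and the function names are hypothetical and chosen only for illustration.

```python
import math

def gaussian_score(v_item, mu, var):
    """Quadratic term of Eq. (1) for a diagonal covariance; the constant is dropped."""
    return -0.5 * sum((x - m) ** 2 / s for x, m, s in zip(v_item, mu, var))

def inner_product_score(v_item, key):
    """Conventional content-based score: inner product with a key vector."""
    return sum(x * k for x, k in zip(v_item, key))

def softmax(scores):
    """Turn scores into attention weights (shifted by the max for stability)."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical 2-d memory: two items near the query and one far away.
memory = [[1.0, 0.0], [1.2, 0.1], [3.0, 0.0]]
mu = [1.0, 0.0]

sharp = softmax([gaussian_score(v, mu, [0.01, 0.01]) for v in memory])
broad = softmax([gaussian_score(v, mu, [4.0, 4.0]) for v in memory])
linear = softmax([inner_product_score(v, mu) for v in memory])

for name, weights in [("sharp", sharp), ("broad", broad), ("inner product", linear)]:
    print(name, [round(w, 3) for w in weights])
```

With a small variance almost all of the weight falls on the item at the mean, with a large variance the weight spreads over all three items, and the inner-product score favors the far item simply because it has the largest projection on the query.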

The ability to compactly represent a set of objects makes the Gaussian attention model well suited for representing the uncertainty in a multiple-answer question (e.g., “who are the children of Abraham Lincoln?”). Moreover, traversal over the knowledge graph (see Guu et al., 2015) can be naturally handled by a series of Gaussian convolutions, which generalizes the addition of vectors. In fact, we model each relation as a Gaussian with mean and variance parameters. Thus a traversal on a relation corresponds to a translation in the mean and addition of the variances.

The proposed question answering model is able to handle not only the case where the answer to a question is associated with an atomic fact, which is called simple Q&A (Bordes et al., 2015), but also questions that require composition of relations (path queries in Guu et al. (2015)) and conjunction of queries. An example of how our model deals with the question “Who plays forward for Borussia Dortmund?” is shown in Figure 2 in Section 3.

This paper is structured as follows. In Section 2, we describe how the Gaussian scoring function (1) can be used to embed the entities in a knowledge base into a continuous vector space. We call our model TransGaussian because of its similarity to the TransE model proposed by Bordes et al. (2013). Then in Section 3, we describe our question answering model. In Section 4, we carry out experiments on the WorldCup2014 dataset we collected. The dataset is relatively small but it allows us to evaluate not only simple questions but also path queries and conjunction of queries. The proposed TransGaussian embedding with the question answering model achieves significantly higher accuracy than the vanilla TransE embedding or TransE trained with compositional relations (Guu et al., 2015) combined with the same question answering model.

2 KNOWLEDGE BASE EMBEDDING

In this section, we describe the proposed TransGaussian model based on the Gaussian attention model (1). While it is possible to train a network that computes the embedding in a single pass (Bordes et al., 2015) or over multiple passes (Li et al., 2015), it is more efficient to offload the embedding as a separate step for question answering based on a large static knowledge base.

2.1 THE TRANSGAUSSIAN MODEL

Let $\mathcal{E}$ be the set of entities and $\mathcal{R}$ be the set of relations. A knowledge base is a collection of triplets $(s, r, o)$, where we call $s \in \mathcal{E}$, $r \in \mathcal{R}$, and $o \in \mathcal{E}$ the subject, the relation, and the object of the triplet, respectively. Each triplet encodes a fact, for example, (Albert_Einstein, has_profession, theoretical_physicist). All the triplets given in a knowledge base are assumed to be true. However, generally speaking, a triplet may be true or false. Thus knowledge base embedding aims at training a model that predicts whether a triplet is true or not given some parameterization of the entities and relations (Bordes et al., 2011; 2013; Nickel et al., 2011; Socher et al., 2013; Wang et al., 2014).

In this paper, we associate a vector $v_s \in \mathbb{R}^d$ with each entity $s \in \mathcal{E}$, and we associate each relation $r \in \mathcal{R}$ with two parameters, $\delta_r \in \mathbb{R}^d$ and a positive definite symmetric matrix $\Sigma_r \in \mathbb{R}^{d \times d}_{++}$.

Given subject $s$ and relation $r$, we can compute the score of an object $o$ to be in triplet $(s, r, o)$ using the Gaussian attention model (1) as

$$\mathrm{score}(s, r, o) = \log \phi(v_o \mid \mu_{\text{context}}, \Sigma_{\text{context}}), \tag{2}$$

where $\mu_{\text{context}} = v_s + \delta_r$ and $\Sigma_{\text{context}} = \Sigma_r$. Note that if $\Sigma_r$ is fixed to the identity matrix, we are modeling the relation of subject $v_s$ and object $v_o$ as a translation $\delta_r$, which is equivalent to the TransE model (Bordes et al., 2013).
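
As a small illustration of Eq. (2), the sketch below scores three candidate objects under two choices of a diagonal $\Sigma_r$. All vectors are hypothetical, the additive constant is dropped, and the function names are assumptions made for this example rather than part of the original work.

```python
def transgaussian_score(v_s, delta_r, var_r, v_o):
    """Eq. (2) up to a constant, with diagonal Sigma_r given as a list of variances."""
    mu = [s + d for s, d in zip(v_s, delta_r)]
    return -0.5 * sum((o - m) ** 2 / v for o, m, v in zip(v_o, mu, var_r))

# Hypothetical subject, relation translation, and candidate objects in 2-d.
v_s = [0.0, 0.0]
delta_r = [1.0, 0.0]
candidates = {"a": [1.0, 0.0], "b": [1.0, 2.0], "c": [2.5, 0.0]}

def ranking(var_r):
    """Candidate names sorted from highest to lowest score."""
    scores = {name: transgaussian_score(v_s, delta_r, var_r, v)
              for name, v in candidates.items()}
    return sorted(scores, key=scores.get, reverse=True)

identity_order = ranking([1.0, 1.0])    # identity covariance: rank by Euclidean distance
relation_order = ranking([0.25, 16.0])  # relation that is tolerant along the second axis

print(identity_order, relation_order)
```

With the identity covariance the score is minus half the squared distance to $v_s + \delta_r$, so "c" outranks "b"; with a relation-specific covariance that is broad along the second axis the order of "b" and "c" flips, which is the extra freedom a learned $\Sigma_r$ provides.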

We allow the covariance $\Sigma_r$ to depend on the relation to handle one-to-many relations (e.g., the profession_has_person relation) and capture the shape of the distribution of the set of objects that can be in the triplet. We call our model TransGaussian because of its similarity to TransE (Bordes et al., 2013).

Parameterization. For computational efficiency, we will restrict the covariance matrix $\Sigma_r$ to be diagonal in this paper. Furthermore, in order to ensure that $\Sigma_r$ is strictly positive definite, we employ the exponential linear unit (ELU, Clevert et al., 2015) and parameterize $\Sigma_r$ as follows:

$$\Sigma_r = \mathrm{diag}\big(\mathrm{ELU}(m_{r,1}) + 1 + \epsilon,\ \ldots,\ \mathrm{ELU}(m_{r,d}) + 1 + \epsilon\big),$$

where $m_{r,j}$ ($j = 1, \ldots, d$) are the unconstrained parameters that are optimized during training and $\epsilon$ is a small positive value that ensures the positivity of the variance during numerical computation. The ELU is defined as

$$\mathrm{ELU}(x) = \begin{cases} x, & x \geq 0, \\ \exp(x) - 1, & x < 0. \end{cases}$$

Ranking loss. Suppose we have a set of triplets $\mathcal{T} = \{(s_i, r_i, o_i)\}_{i=1}^{N}$ from the knowledge base. Let $\mathcal{N}(s, r)$ be the set of incorrect objects to be in the triplet $(s, r, \cdot)$. Our objective function uses the ranking loss to measure the margin between the scores of true answers and those of false answers, and it can be written as follows:

$$\min_{\{v_e : e \in \mathcal{E}\},\, \{\delta_r, M_r : r \in \bar{\mathcal{R}}\}} \ \frac{1}{N} \sum_{(s,r,o) \in \mathcal{T}} \mathbb{E}_{t' \sim \mathcal{N}(s,r)} \big[\mu - \mathrm{score}(s, r, o) + \mathrm{score}(s, r, t')\big]_+ + \lambda \Big( \sum_{e \in \mathcal{E}} \|v_e\|_2^2 + \sum_{r \in \bar{\mathcal{R}}} \big( \|\delta_r\|_2^2 + \|M_r\|_F^2 \big) \Big), \tag{3}$$

where $N = |\mathcal{T}|$, $\mu$ is the margin parameter, and $M_r$ denotes the diagonal matrix with $m_{r,j}$, $j = 1, \ldots, d$, on the diagonal; the function $[\cdot]_+$ is defined as $[x]_+ = \max(0, x)$.
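
The parameterization and the data term of Eq. (3) can be sketched as follows. This is a plain-Python illustration under stated assumptions, not the training code used in the experiments: the value of `EPS`, the default margin, the helper names, and all toy vectors are hypothetical, and the $\ell_2$ regularization terms are omitted.

```python
import math
import random

EPS = 1e-6  # assumed value; the text only requires a small positive epsilon

def elu(x):
    """Exponential linear unit as defined above."""
    return x if x >= 0 else math.exp(x) - 1.0

def variances(m_r, eps=EPS):
    """Diagonal of Sigma_r = diag(ELU(m_{r,j}) + 1 + eps)."""
    return [elu(m) + 1.0 + eps for m in m_r]

def score(v_s, delta_r, var_r, v_o):
    """Eq. (2) up to a constant for a diagonal covariance."""
    return -0.5 * sum((o - s - d) ** 2 / v
                      for s, d, v, o in zip(v_s, delta_r, var_r, v_o))

def sample_negatives(entities, correct, k=10, rng=random):
    """Draw k incorrect objects uniformly at random (with replacement)."""
    pool = [e for e in entities if e not in correct]
    return [rng.choice(pool) for _ in range(k)]

def ranking_loss(v_s, delta_r, m_r, v_o, negative_vectors, margin=1.0):
    """Data term of Eq. (3) for one triplet: mean hinge loss over sampled negatives."""
    var_r = variances(m_r)
    positive = score(v_s, delta_r, var_r, v_o)
    hinges = [max(0.0, margin - positive + score(v_s, delta_r, var_r, v_t))
              for v_t in negative_vectors]
    return sum(hinges) / len(hinges)

# A far-away negative incurs no loss; a nearby negative violates the margin.
far_loss = ranking_loss([0.0, 0.0], [1.0, 0.0], [0.0, 0.0], [1.0, 0.0], [[5.0, 5.0]])
near_loss = ranking_loss([0.0, 0.0], [1.0, 0.0], [0.0, 0.0], [1.0, 0.0], [[1.5, 0.0]])
print(far_loss, near_loss)
```

Note that for a very negative parameter such as `m = -100.0`, `elu(m) + 1.0` evaluates to exactly `0.0` in floating point, so the variance stays positive only because of the added epsilon.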

In Eq. (3), we treat an inverse relation as a separate relation and denote by $\bar{\mathcal{R}} = \mathcal{R} \cup \mathcal{R}^{-1}$ the set of all the relations including both relations in $\mathcal{R}$ and their inverse relations; a relation $\tilde{r}$ is the inverse relation of $r$ if $(s, \tilde{r}, o)$ implies $(o, r, s)$ and vice versa. Moreover, $\mathbb{E}_{t' \sim \mathcal{N}(s,r)}$ denotes the expectation with respect to the uniform distribution over the set of incorrect objects, which we approximate with 10 random samples in the experiments. Finally, the last terms are $\ell_2$ regularization terms for the embedding parameters.

2.2 COMPOSITIONAL RELATIONS

Guu et al. (2015) have recently shown that training TransE with compositional relations can make it competitive with more complex models, although TransE is much simpler than, for example, neural tensor networks (NTN, Socher et al. (2013)) and TransH (Wang et al., 2014). Here, a compositional relation is a relation that is composed as a series of relations in $\mathcal{R}$. For example, grand_father_of can be composed as first applying the parent_of relation and then the father_of relation, which can be seen as a traversal over a path on the knowledge graph.

The TransGaussian model can naturally handle and propagate the uncertainty over such a chain of relations by convolving the Gaussian distributions along the path. That is, the score of an entity $o$ to be in the $\tau$-step relation $r_1 / r_2 / \cdots / r_\tau$ with subject $s$, which we denote by the triplet $(s, r_1 / r_2 / \cdots / r_\tau, o)$, is given as

$$\mathrm{score}(s, r_1 / r_2 / \cdots / r_\tau, o) = \log \phi(v_o \mid \mu_{\text{context}}, \Sigma_{\text{context}}), \tag{4}$$

with $\mu_{\text{context}} = v_s + \sum_{t=1}^{\tau} \delta_{r_t}$ and $\Sigma_{\text{context}} = \sum_{t=1}^{\tau} \Sigma_{r_t}$, where the covariance associated with each relation is parameterized in the same way as in the previous subsection.

Training with compositional relations. Let $\mathcal{P} = \big\{ (s_i, r_{i_1} / r_{i_2} / \cdots / r_{i_{l_i}}, o_i) \big\}_{i=1}^{N'}$ be a set of randomly sampled paths from the knowledge graph.
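
As an illustration of the path score in Eq. (4), the following sketch composes relations by translating the mean and adding the (diagonal) variances. The relation parameters for parent_of and father_of are made-up numbers, and the helper names are hypothetical.

```python
def compose_path(v_s, relations):
    """Mean and diagonal covariance of Eq. (4).

    relations is a list of (delta_r, var_r) pairs applied along the path.
    """
    mu = list(v_s)
    var = [0.0] * len(v_s)
    for delta, sigma in relations:
        mu = [m + d for m, d in zip(mu, delta)]      # translate the mean
        var = [v + s for v, s in zip(var, sigma)]    # add the variances
    return mu, var

def path_score(v_s, relations, v_o):
    """Eq. (4) up to an additive constant."""
    mu, var = compose_path(v_s, relations)
    return -0.5 * sum((o - m) ** 2 / v for o, m, v in zip(v_o, mu, var))

# Hypothetical parameters (delta_r, diagonal of Sigma_r) for two relations.
parent_of = ([1.0, 0.0], [0.5, 0.5])
father_of = ([0.0, 1.0], [0.25, 1.0])

mu, var = compose_path([0.0, 0.0], [parent_of, father_of])
print(mu, var)
```

Because sums commute, this composition gives the same Gaussian for parent_of/father_of and father_of/parent_of, and the uncertainty can only grow as the path gets longer.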
Each relation $r_{i_k}$ in a path can be a relation in $\mathcal{R}$ or an inverse relation in $\mathcal{R}^{-1}$. With the scoring function (4), the generalized training objective for compositional relations can be written identically to (3) except for replacing $\mathcal{T}$ with $\mathcal{T} \cup \mathcal{P}$ and replacing $N$ with $N' = |\mathcal{T} \cup \mathcal{P}|$.

3 QUESTION ANSWERING

Given a set of question-answer pairs, in which the question is phrased in natural language and the answer is an entity in the knowledge base, our goal is to train a model that learns the mapping from the question to the correct entity. Our question answering model consists of three steps: entity recognition, relation composition, and conjunction. We first identify a list of entities mentioned in the question (which is assumed to be provided by an oracle in this paper). If the question is “Who plays Forward for Borussia Dortmund?” then the list would be [Forward, Borussia_Dortmund]. The next step is to predict the path of relations on the knowledge graph starting from each entity in the list extracted in the first step. In the above example, this will be (smooth versions of) /Forward/position_played_by/ and /Borussia_Dortmund/has_player/, predicted as a series of Gaussian convolutions. In general, we can have multiple relations appearing in each path. Finally, we take a product of all the Gaussian attentions and renormalize it, which is equivalent to Bayes' rule with independent observations (paths) and a noninformative prior.

3.1 ENTITY RECOGNITION

We assume that there is an oracle that provides a list containing all the entities mentioned in the question, because (1) a domain-specific entity recognizer can be developed efficiently (Williams et al., 2015) and (2) entity recognition is in general a challenging task and it is beyond the scope of this paper to show whether there is any benefit in training our question answering model jointly with an entity recognizer.
We assume that the number of extracted entities can be different for each question.

Figure 2 (diagram: question “Who plays forward for Borussia Dortmund?” → LSTM outputs $h_1, h_2, \ldots, h_T$ → attention weights $p_{t,\text{Forward}}$, weighted sum → $o_{\text{Forward}}$ → relation weights $\alpha_{r,\text{Forward}}$, weighted convolution starting from $v_{\text{Forward}}$ and $v_{\text{Borussia\_Dortmund}}$ → multiply & normalize → score $v_{\text{Marco\_Reus}}$ using Eq. (7) → answer: Marco Reus): The input to the system is a question in natural language. Two entities, Forward and Borussia_Dortmund, are identified in the question and associated with point mass distributions centered at the corresponding entity vectors. An LSTM encodes the input into a sequence of output vectors of the same length. Then we take the average of the output vectors weighted by attention $p_{t,e}$ for each recognized entity $e$ to predict the weight $\alpha_{r,e}$ for relation $r$ associated with entity $e$. We form a Gaussian attention over the entities for each entity $e$ by convolving the corresponding point mass with the (pre-trained) Gaussian embeddings of the relations weighted by $\alpha_{r,e}$ according to Eq. (6). The final prediction is produced by taking the product and normalizing the Gaussian attentions.

3.2 RELATION COMPOSITION

We train a long short-term memory (LSTM, Hochreiter & Schmidhuber, 1997) network that emits an output $h_t$ for each token in the input sequence. Then we compute the attention over the hidden states for each recognized entity $e$ as

$$p_{t,e} = \mathrm{softmax}\big(f(v_e, h_t)\big) \quad (t = 1, \ldots, T),$$

where $v_e$ is the vector associated with the entity $e$. We use a two-layer perceptron for $f$ in our experiments, which can be written as follows:

$$f(v_e, h_t) = u_f^\top \mathrm{ReLU}\big(W_{f,v} v_e + W_{f,h} h_t + b_1\big) + b_2,$$

where $W_{f,v} \in \mathbb{R}^{L \times d}$, $W_{f,h} \in \mathbb{R}^{L \times H}$, $b_1 \in \mathbb{R}^{L}$, $u_f \in \mathbb{R}^{L}$, and $b_2 \in \mathbb{R}$ are parameters. Here $\mathrm{ReLU}(x) = \max(0, x)$ is the rectified linear unit.
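
The token attention $p_{t,e}$ can be sketched in a few lines of plain Python. The weights below are hand-picked toy values with $d = H = L = 2$, standing in for trained parameters and real LSTM outputs; the function names and the packing of the parameters into a tuple are assumptions made for this example.

```python
import math

def matvec(W, x):
    """Matrix-vector product for a matrix stored as a list of rows."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def f(v_e, h_t, params):
    """Two-layer perceptron f(v_e, h_t) = u^T ReLU(W_v v_e + W_h h_t + b1) + b2."""
    W_v, W_h, b1, u, b2 = params
    pre = [a + b + c for a, b, c in zip(matvec(W_v, v_e), matvec(W_h, h_t), b1)]
    hidden = [max(0.0, z) for z in pre]
    return sum(ui * hi for ui, hi in zip(u, hidden)) + b2

def token_attention(v_e, hidden_states, params):
    """p_{t,e}: softmax of f(v_e, h_t) taken over the T tokens."""
    logits = [f(v_e, h, params) for h in hidden_states]
    top = max(logits)
    exps = [math.exp(z - top) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

# Toy parameters: identity weight matrices, zero biases, u = (1, 1).
params = ([[1.0, 0.0], [0.0, 1.0]], [[1.0, 0.0], [0.0, 1.0]], [0.0, 0.0], [1.0, 1.0], 0.0)
v_e = [1.0, 0.0]
hidden_states = [[0.0, 0.0], [2.0, 0.0], [-5.0, 0.0]]  # stand-ins for LSTM outputs

p = token_attention(v_e, hidden_states, params)
print([round(w, 3) for w in p])
```

Each recognized entity gets its own distribution over tokens, since $v_e$ enters the perceptron together with every hidden state.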
The softmax is taken over the $T$ tokens.

Next, we use the weights $p_{t,e}$ to compute the weighted sum over the hidden states $h_t$ as

$$o_e = \sum_{t=1}^{T} p_{t,e} h_t. \tag{5}$$

Then we compute the weights $\alpha_{r,e}$ over all the relations as $\alpha_{r,e} = \mathrm{ReLU}(w_r^\top o_e)$ ($\forall r \in \mathcal{R} \cup \mathcal{R}^{-1}$). Here the rectified linear unit is used to ensure the positivity of the weights. Note however that the weights should not be normalized, because we may want to use the same relation more than once in the same path. Making the weights positive also has the effect of making the attention sparse and interpretable because there is no cancellation.

For each extracted entity $e$, we view the extracted entity and the answer of the question to be the subject and the object in some triplet $(e, p, o)$, respectively, where the path $p$ is inferred from the question as the weights $\alpha_{r,e}$ as we described above. Accordingly, the score for each candidate answer $o$ can be expressed using (1) as:

$$\mathrm{score}_e(v_o) = \log \phi(v_o \mid \mu_{e,\alpha,\mathrm{KB}}, \Sigma_{e,\alpha,\mathrm{KB}}) \tag{6}$$

with $\mu_{e,\alpha,\mathrm{KB}} = v_e + \sum_{r \in \bar{\mathcal{R}}} \alpha_{r,e} \delta_r$ and $\Sigma_{e,\alpha,\mathrm{KB}} = \sum_{r \in \bar{\mathcal{R}}} \alpha_{r,e}^2 \Sigma_r$, where $v_e$ is the vector associated with entity $e$ and $\bar{\mathcal{R}} = \mathcal{R} \cup \mathcal{R}^{-1}$ denotes the set of relations including the inverse relations.

3.3 CONJUNCTION

Let $\mathcal{E}(q)$ be the set of entities recognized in the question $q$. The final step of our model is to take the conjunction of the Gaussian attentions derived in the previous step. This step is simply carried out by multiplying the Gaussian attentions as follows:

$$\mathrm{score}(v_o \mid \mathcal{E}(q), \Theta) = \log \prod_{e \in \mathcal{E}(q)} \phi(v_o \mid \mu_{e,\alpha,\mathrm{KB}}, \Sigma_{e,\alpha,\mathrm{KB}}) = -\frac{1}{2} \sum_{e \in \mathcal{E}(q)} (v_o - \mu_{e,\alpha,\mathrm{KB}})^\top \Sigma_{e,\alpha,\mathrm{KB}}^{-1} (v_o - \mu_{e,\alpha,\mathrm{KB}}) + \text{const.}, \tag{7}$$

which is again a (logarithm of a) Gaussian scoring function, where $\mu_{e,\alpha,\mathrm{KB}}$ and $\Sigma_{e,\alpha,\mathrm{KB}}$ are the mean and the covariance of the Gaussian attention given in (6). Here $\Theta$ denotes all the parameters of the question-answering model.

3.4 TRAINING THE QUESTION ANSWERING MODEL

Suppose we have a knowledge base $(\mathcal{E}, \mathcal{R}, \mathcal{T})$ and a trained TransGaussian model $\big(\{v_e\}_{e \in \mathcal{E}}, \{(\delta_r, \Sigma_r)\}_{r \in \bar{\mathcal{R}}}\big)$, where $\bar{\mathcal{R}}$ is the set of all relations including the inverse relations. During training time, we assume the training set consists of supervised question-answer pairs $\{(q_i, \mathcal{E}(q_i), a_i) : i = 1, 2, \ldots, m\}$. Here, $q_i$ is a question formulated in natural language, $\mathcal{E}(q_i) \subset \mathcal{E}$ is the set of knowledge base entities that appear in the question, and $a_i \in \mathcal{E}$ is the answer to the question. For example, on a knowledge base of soccer players, a valid training sample could be

(“Who plays forward for Borussia Dortmund?”, [Forward, Borussia_Dortmund], Marco_Reus).

Note that the answer to a question is not necessarily unique and we allow $a_i$ to be any of the true answers in the knowledge base. During test time, our model is shown $(q_i, \mathcal{E}(q_i))$ and the task is to find $a_i$. We denote the set of answers to $q_i$ by $A(q_i)$.

To train our question-answering model, we minimize the objective function

$$\frac{1}{m} \sum_{i=1}^{m} \Big( \mathbb{E}_{t' \sim \mathcal{N}(q_i)} \big[\mu - \mathrm{score}(v_{a_i} \mid \mathcal{E}(q_i), \Theta) + \mathrm{score}(v_{t'} \mid \mathcal{E}(q_i), \Theta)\big]_+ + \nu \sum_{e \in \mathcal{E}(q_i)} \sum_{r \in \bar{\mathcal{R}}} |\alpha_{r,e}| \Big) + \lambda \|\Theta\|_2^2,$$

where $\mathbb{E}_{t' \sim \mathcal{N}(q_i)}$ is the expectation with respect to a uniform distribution over all incorrect answers to $q_i$, which we approximate with 10 random samples. We assume that the number of relations implied in a question is small compared to the total number of relations in the knowledge base. Hence the coefficients $\alpha_{r,e}$ computed for each question $q_i$ are regularized by their $\ell_1$ norms.

4 EXPERIMENTS

As a demonstration of the proposed framework, we perform question answering on a dataset of soccer players. In this work, we consider two types of questions.
A path query is a question that contains only one named entity from the knowledge base and whose answer can be found from the knowledge graph by walking down a path consisting of a few relations. A conjunctive query is a question that contains more than one entity and whose answer is given as the conjunction of all path queries starting from each entity. Furthermore, we experimented on a knowledge base completion task with TransGaussian embeddings to test their capability of generalizing to unseen facts. Since knowledge base completion is not the main focus of this work, we include the results in the Appendix.

4.1 WORLDCUP2014 DATASET

We build a knowledge base of football players that participated in FIFA World Cup 2014¹. The original dataset consists of players' information such as nationality, positions on the field, and ages. We picked a few attributes and constructed 1127 entities and 6 atomic relations. The entities include 736 players, 297 professional soccer clubs, 51 countries, 39 numbers and 4 positions. The six atomic relations are

- plays_in_club: PLAYER → CLUB,
- plays_position: PLAYER → POSITION,
- is_aged: PLAYER → NUMBER,
- wears_number: PLAYER → NUMBER,
- plays_for_country: PLAYER → COUNTRY,
- is_in_country: CLUB → COUNTRY,

where PLAYER, CLUB, NUMBER, etc., denote the type of entities that can appear as the left or right argument for each relation. Some relations share the same type as the right argument, e.g., plays_for_country and is_in_country.

¹ The original dataset can be found at https://datahub.io/dataset/fifa-world-cup-2014-all-players.

Given the entities and relations, we transformed the dataset into a set of 3977 triplets. A list of sample triplets can be found in the Appendix. Based on these triplets, we created two sets of question answering tasks, which we call path query and conjunctive query, respectively.
The answer to every question is always an entity in the knowledge base and a question can involve one or two triplets. The questions are generated as follows.

Path queries. Among the paths on the knowledge graph, there are some natural compositions of relations, e.g., plays_in_country (PLAYER → COUNTRY) can be decomposed as the composition of plays_in_club (PLAYER → CLUB) and is_in_country (CLUB → COUNTRY). In addition to the atomic relations, we manually picked a few meaningful compositions of relations and formed query templates, which take the form “find $e \in \mathcal{E}$ such that $(s, p, e)$ is true”, where $s$ is the subject and $p$ can be an atomic relation or a path of relations. To formulate a set of path-based question-answer pairs, we manually created one or more question templates for every query template (see Table 5). Then, for a particular instantiation of a query template with subject and object entities, we randomly select a question template to generate a question given the subject; the object entity becomes the answer to the question. See Table 6 for the list of composed relations, sample questions, and answers. Note that all atomic relations in this dataset are many-to-one, while these composed relations can be one-to-many or many-to-many as well.

Conjunctive queries. To generate question-and-answer pairs of conjunctive queries, we first picked three pairs of relations and used them to create query templates of the form “Find $e \in \mathcal{E}$ such that both $(s_1, r_1, e)$ and $(s_2, r_2, e)$ are true” (see Table 5). For a pair of relations $r_1$ and $r_2$, we enumerated all pairs of entities $s_1$, $s_2$ that can be their subjects and formulated the corresponding query in natural language using question templates in the same way as for path queries. See Table 7 for a list of sample questions and answers.
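
The two query types can be illustrated on a toy knowledge graph. The sketch below is an assumption-laden illustration rather than the generation script used for the dataset: the four triplets are illustrative rows (not copied from the dataset), the `^-1` suffix is a hypothetical way of naming inverse relations, and the question template is modeled on a sample question from Table 2.

```python
# Illustrative triplets in the style of the WorldCup2014 knowledge base.
TRIPLETS = [
    ("Marco_Reus", "plays_in_club", "Borussia_Dortmund"),
    ("Marco_Reus", "plays_position", "Forward"),
    ("player_B", "plays_in_club", "Borussia_Dortmund"),  # hypothetical player
    ("player_B", "plays_position", "Defender"),
    ("Borussia_Dortmund", "is_in_country", "Germany"),
]

def step(triplets, sources, relation):
    """Follow one relation from a set of entities; 'r^-1' follows r backwards."""
    if relation.endswith("^-1"):
        base = relation[:-3]
        return {s for s, r, o in triplets if r == base and o in sources}
    return {o for s, r, o in triplets if r == relation and s in sources}

def path_query(triplets, subject, path):
    """All entities e such that (subject, path, e) is true."""
    current = {subject}
    for relation in path:
        current = step(triplets, current, relation)
    return current

def conjunctive_query(triplets, conditions):
    """Entities that satisfy every (subject, path) condition."""
    answer_sets = [path_query(triplets, s, p) for s, p in conditions]
    return set.intersection(*answer_sets)

# Path query: composition plays_in_club / is_in_country.
template = "where is the club that {subject} plays for?"
question = template.format(subject="marco reus")
country = path_query(TRIPLETS, "Marco_Reus", ["plays_in_club", "is_in_country"])

# Conjunctive query: "Who plays forward for Borussia Dortmund?"
forwards_at_club = conjunctive_query(TRIPLETS, [
    ("Forward", ["plays_position^-1"]),
    ("Borussia_Dortmund", ["plays_in_club^-1"]),
])
print(question, country, forwards_at_club)
```

The exact set operations here give the ground-truth answers; the learned model replaces each hard step with a Gaussian attention so that the same structure can be inferred from the question text.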

As a result, we created 8003 question-and-answer pairs of path queries and 2208 pairs of conjunctive queries, which are partitioned into train / validation / test subsets. We refer to Table 1 for more statistics about the dataset. Templates for generating the questions are listed in Table 5.

4.2 EXPERIMENTAL SETUP

To perform question answering under our proposed framework, we first train the TransGaussian model on the WorldCup2014 dataset. In addition to the atomic triplets, we randomly sampled 50000 paths with length 1 or 2 from the knowledge graph and trained a TransGaussian model compositionally as described in Section 2.2. An inverse relation is treated as a separate relation. Following the naming convention from Guu et al. (2015), we denote this trained embedding by TransGaussian (COMP). We found that the learned embedding possesses some interesting properties. Some dimensions of the embedding space are dedicated to representing a particular relation. Players are clustered by their attributes when the entities' embeddings are projected to the corresponding lower dimensional subspaces. We elaborate on and illustrate such properties in the Appendix.

Baseline methods. We also trained a TransGaussian model only on the atomic triplets and denote such a model by TransGaussian (SINGLE). Since no inverse relation was involved when TransGaussian (SINGLE) was trained, to use this embedding in question answering tasks, we represent the inverse relations as follows: for each relation $r$ with mean $\delta_r$ and variance $\Sigma_r$, we model its inverse $r^{-1}$ as a Gaussian attention with mean $-\delta_r$ and variance equal to $\Sigma_r$.

We also trained TransE models on the WorldCup2014 dataset by using the code released by the authors of Guu et al. (2015). Likewise, we use TransE (SINGLE) to denote the model trained with atomic triplets only and use TransE (COMP) to denote the model trained with the union of triplets and paths.
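
To show how inverse relations of this form enter Eqs. (6) and (7), here is a minimal sketch of a conjunctive query scored with diagonal Gaussians. Every number is hypothetical: the 2-d embeddings are hand-crafted so that the first axis encodes position and the second encodes club, the relation weights $\alpha_{r,e}$ are set to 1 by hand instead of being predicted from the question, and "player_B" and "player_C" are made-up entities.

```python
def inverse(relation):
    """Inverse of a relation (delta, var): mean -delta, same variance."""
    delta, var = relation
    return [-d for d in delta], list(var)

def attention(v_e, weighted_relations):
    """Eq. (6): mu = v_e + sum(alpha * delta), var = sum(alpha^2 * Sigma), diagonal case."""
    mu = list(v_e)
    var = [0.0] * len(v_e)
    for alpha, (delta, sigma) in weighted_relations:
        mu = [m + alpha * d for m, d in zip(mu, delta)]
        var = [v + alpha ** 2 * s for v, s in zip(var, sigma)]
    return mu, var

def conjunction_score(v_o, attentions):
    """Eq. (7) up to a constant: sum of the Gaussian log-scores of all conditions."""
    return sum(-0.5 * sum((o - m) ** 2 / s for o, m, s in zip(v_o, mu, var))
               for mu, var in attentions)

# Hypothetical relation parameters (delta, diagonal variance).
plays_position = ([0.5, 0.0], [0.1, 10.0])  # precise on axis 0, vague on axis 1
plays_in_club = ([0.0, 0.5], [10.0, 0.1])   # vague on axis 0, precise on axis 1

entities = {
    "Forward": [1.5, 0.0],
    "Borussia_Dortmund": [0.0, 2.5],
    "Marco_Reus": [1.0, 2.0],   # forward at the queried club
    "player_B": [-1.0, 2.0],    # defender at the queried club
    "player_C": [1.0, -2.0],    # forward at another club
}

# "Who plays forward for Borussia Dortmund?" as two inverse-relation attentions.
attentions = [
    attention(entities["Forward"], [(1.0, inverse(plays_position))]),
    attention(entities["Borussia_Dortmund"], [(1.0, inverse(plays_in_club))]),
]
players = ["Marco_Reus", "player_B", "player_C"]
scores = {p: conjunction_score(entities[p], attentions) for p in players}
best = max(scores, key=scores.get)
print(best, scores)
```

Each condition alone is satisfied by two of the three players; only the candidate that lies inside both Gaussians keeps a high score after the log-scores are added, which is the conjunction behavior described in Section 3.3.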
Note that TransE can be considered a special case of TransGaussian where the variance matrix is the identity, and hence the scoring formula Eq. (7) is applicable to TransE as well.

Training configurations. For all models, the dimension of the entity embeddings was set to 30. The hidden size of the LSTM was set to 80. Word embeddings were trained jointly with the question answering model and the dimension of the word embeddings was set to 40. We employed Adam (Kingma & Ba, 2014) as the optimizer. All parameters were tuned on the validation set. Under the same setting, we experimented with two cases: first, we trained models for path queries and conjunctive queries separately; second, we trained a single model that addresses both types of queries. We present the results of the latter case in the next subsection, while the results of the former are included in the Appendix.

Evaluation metrics. During test time, our model receives a question in natural language and a list of knowledge base entities contained in the question. Then it predicts the mean and variance of a Gaussian attention formulated in Eq. (7), which is expected to capture the distribution of all positive answers. We rank all entities in the knowledge base by their scores under this Gaussian attention. Next, for each entity that is a correct answer, we check its rank relative to all incorrect answers and call this rank the filtered rank. For example, if a correct entity is ranked above all negative answers except for one, it has filtered rank two. We compute this rank for all true answers and report the mean filtered rank and H@1, which is the percentage of true answers that have filtered rank 1.

4.3 EXPERIMENTAL RESULTS

We present the results of joint learning in Table 2. These results show that TransGaussian works better than TransE in general. In fact, TransGaussian (COMP) achieved the best performance in almost all aspects.
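
For readers who want to reproduce the two numbers reported in Table 2, the filtered rank and H@1 defined in Section 4.2 can be computed as in the sketch below. The function names and the toy scores are hypothetical, and ties are broken in favor of the correct answer, which is an assumption since the text does not specify tie handling.

```python
def filtered_ranks(scores, correct):
    """Filtered rank of each correct entity.

    scores maps every entity to its score; correct is the set of true answers.
    A correct entity is compared against incorrect entities only, so other
    correct answers never push its rank down.
    """
    wrong = [s for e, s in scores.items() if e not in correct]
    return {e: 1 + sum(1 for s in wrong if s > scores[e]) for e in correct}

def summarize(all_ranks):
    """Mean filtered rank and H@1 (in percent) over a flat list of ranks."""
    mean_rank = sum(all_ranks) / len(all_ranks)
    hits_at_1 = 100.0 * sum(1 for r in all_ranks if r == 1) / len(all_ranks)
    return mean_rank, hits_at_1

# Toy question with two true answers ("a", "b") and two incorrect entities.
scores = {"a": 0.9, "b": 0.8, "x": 0.85, "y": 0.1}
ranks = filtered_ranks(scores, {"a", "b"})
mean_rank, hits_at_1 = summarize(list(ranks.values()))
print(ranks, mean_rank, hits_at_1)
```

Here "b" is outscored by one incorrect entity and therefore has filtered rank two, even though the other correct answer "a" also scores higher.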
Most notably, TransGaussian (COMP) achieved the highest H@1 rates on challenging questions such as “where is the club that edin dzeko plays for?” (#11, composition of two relations) and “who are the defenders on german national team?” (#14, conjunction of two queries).

The same table shows that TransGaussian benefits remarkably from compositional training. For example, compositional training improved TransGaussian's H@1 rate by roughly 60 percentage points on queries about players from a given country (#8) and queries about players who play a particular position (#9). It also boosted TransGaussian's performance on all conjunctive queries (#13–#15) significantly.

To understand TransGaussian (COMP)'s weak performance on queries about the professional football club located in a given country (#10) and queries about a professional football club that has players from a particular country (#12), we tested its capability of modeling the composed relation by feeding the correct relations and subjects during test time. It turns out that these two relations were not modeled well by the TransGaussian (COMP) embedding, which limits its performance in question answering. (See Table 8 in the Appendix for quantitative evaluations.) The same limit was found in the other three embeddings as well.

Note that all the models compared in Table 2 use the proposed Gaussian attention model because TransE is the special case of TransGaussian where the variance is fixed to one. Thus the main differences are whether the variance is learned and whether the embedding was trained compositionally. Finally, we refer to Tables 9 and 10 in the Appendix for experimental results of models trained on path and conjunctive queries separately.

Table 1: Some statistics of the WorldCup2014 dataset.

| # entity | # atomic relations | # atomic triplets | # path query Q&A (train / validation / test) | # conjunctive query Q&A (train / validation / test) |
|---|---|---|---|---|
| 1127 | 6 | 3977 | 5620 / 804 / 1579 | 1564 / 224 / 420 |

Table 2: Results of joint learning with path queries and conjunction queries on WorldCup2014.

| # | Sample question | TransE (SINGLE) H@1 (%) | TransE (SINGLE) Mean Filtered Rank | TransE (COMP) H@1 (%) | TransE (COMP) Mean Filtered Rank | TransGaussian (SINGLE) H@1 (%) | TransGaussian (SINGLE) Mean Filtered Rank | TransGaussian (COMP) H@1 (%) | TransGaussian (COMP) Mean Filtered Rank |
|---|---|---|---|---|---|---|---|---|---|
| 1 | which club does alan pulido play for? | 88.59 | 1.18 | 91.95 | 1.11 | 96.64 | 1.04 | **98.66** | **1.01** |
| 2 | what position does gonzalo higuain play? | **100.00** | **1.00** | 98.11 | 1.03 | 98.74 | 1.01 | **100.00** | **1.00** |
| 3 | how old is samuel etoo? | 67.11 | 1.44 | 90.79 | 1.13 | 94.74 | 1.08 | **97.37** | **1.04** |
| 4 | what is the jersey number of mario balotelli? | 45.00 | 1.89 | 83.57 | 1.22 | 97.14 | 1.03 | **99.29** | **1.01** |
| 5 | which country is thomas mueller from? | 94.40 | 1.06 | 94.40 | 1.06 | 96.80 | 1.04 | **98.40** | **1.02** |
| 6 | which country is the soccer team fc porto based in? | **98.48** | **1.02** | **98.48** | **1.02** | 93.94 | 1.06 | 95.45 | 1.05 |
| 7 | who plays professionally at liverpool fc? | 95.12 | 1.10 | 90.24 | 1.20 | **98.37** | **1.04** | 96.75 | **1.04** |
| 8 | which player is from iran? | 89.86 | 1.51 | 76.81 | 2.07 | 38.65 | 2.96 | **99.52** | **1.00** |
| 9 | name a player who plays goalkeeper? | 98.96 | 1.01 | 69.79 | 1.82 | 42.71 | 5.52 | **100.00** | **1.00** |
| 10 | which soccer club is based in mexico? | 22.03 | 13.94 | **30.51** | **8.84** | 6.78 | 10.66 | 16.95 | 21.14 |
| 11 | where is the club that edin dzeko plays for? | 52.63 | 3.88 | 57.24 | 2.10 | 47.37 | 2.27 | **78.29** | **1.41** |
| 12 | name a soccer club that has a player from australia? | 30.43 | 12.08 | **33.70** | **11.47** | 13.04 | 11.64 | 19.57 | 17.57 |
| | Overall (Path Query) | 74.16 | 3.11 | 77.39 | **2.56** | 69.54 | 3.02 | **85.94** | 3.52 |
| 13 | who plays forward for fc barcelona? | 97.55 | 1.06 | 76.07 | 1.66 | 93.25 | 1.24 | **98.77** | **1.02** |
| 14 | who are the defenders on german national team? | 95.93 | 1.06 | 69.92 | 2.33 | 65.04 | 2.04 | **100.00** | **1.00** |
| 15 | which player in ssc napoli is from argentina? | 88.81 | 1.17 | 76.12 | 1.76 | 88.81 | 1.35 | **97.76** | **1.03** |
| | Overall (Conj. Query) | 94.29 | 1.09 | 74.29 | 1.89 | 83.57 | 1.51 | **98.81** | **1.02** |

5 RELATED WORK

The work of Vilnis & McCallum (2014) is similar to our Gaussian attention model. They discuss many advantages of the Gaussian embedding; for example, it is arguably a better way of handling asymmetric relations and entailment. However, the work was presented in the word2vec (Mikolov et al., 2013)-style word embedding setting and the Gaussian embedding was used to capture the diversity in the meaning of a word. Our Gaussian attention model extends their work to a more general setting in which any memory item can be addressed through a concept represented as a Gaussian distribution over the memory items.

Bordes et al. (2014; 2015) proposed a question-answering model that embeds both questions and their answers into a common continuous vector space. Their method in Bordes et al. (2015) can combine multiple knowledge bases and even generalize to a knowledge base that was not used during training. However, their method is limited to the simple question answering setting in which the answer to each question is associated with a triplet in the knowledge base. In contrast, our method can handle both composition of relations and conjunction of conditions, which are both naturally enabled by the proposed Gaussian attention model.

Neelakantan et al. (2015a) proposed a method that combines relations to deal with compositional relations for knowledge base completion. Their key technical contribution is to use recurrent neural networks (RNNs) to encode a chain of relations. When we restrict ourselves to path queries, question answering can be seen as a sequence transduction task (Graves, 2012; Sutskever et al., 2014) in which the input is text and the output is a series of relations.
If we used RNNs as a decoder, our model would be able to handle non-commutative composition of relations, which the current weighted convolution cannot handle well. Another interesting connection to our work is that they take the maximum of the inner-product scores (see also Weston et al., 2013; Neelakantan et al., 2015b), which are computed along multiple paths connecting a pair of entities. Representing a set as a collection of vectors and taking the maximum over the inner-product scores is a natural way to represent a set of memory items. The Gaussian attention model we propose in this paper, however, has the advantage of differentiability and composability.

6 CONCLUSION

In this paper, we have proposed the Gaussian attention model, which can be used in a variety of contexts where we can assume that the distance between the memory items in the latent space is compatible with some notion of semantics. We have shown that the proposed Gaussian scoring function can be used for knowledge base embedding, achieving competitive accuracy. We have also shown that our embedding model can naturally propagate uncertainty when we compose relations together. Our embedding model also benefits from the compositional training proposed by Guu et al. (2015). Furthermore, we have demonstrated the power of the Gaussian attention model in a challenging question answering problem which involves both composition of relations and conjunction of queries.

Future work includes experiments on natural question answering datasets and end-to-end training including the entity extractor.

ACKNOWLEDGMENTS

The authors would like to thank Daniel Tarlow, Nate Kushman, and Kevin Gimpel for valuable discussions.

REFERENCES

Dzmitry Bahdanau, Kyunghyun Cho, and Yoshua Bengio. Neural machine translation by jointly learning to align and translate. arXiv preprint, 2014.

Antoine Bordes, Jason Weston, Ronan Collobert, and Yoshua Bengio. Learning structured embeddings of knowledge bases. In Conference on Artificial Intelligence, number EPFL-CONF-192344, 2011.

Antoine Bordes, Nicolas Usunier, Alberto Garcia-Duran, Jason Weston, and Oksana Yakhnenko. Translating embeddings for modeling multi-relational data. In Advances in Neural Information Processing Systems, pp. 2787–2795, 2013.

Antoine Bordes, Jason Weston, and Nicolas Usunier. Open question answering with weakly supervised embedding models. In Joint European Conference on Machine Learning and Knowledge Discovery in Databases, pp. 165–180. Springer, 2014.

Antoine Bordes, Nicolas Usunier, Sumit Chopra, and Jason Weston. Large-scale simple question answering with memory networks. arXiv preprint arXiv:1506.02075, 2015.

Djork-Arné Clevert, Thomas Unterthiner, and Sepp Hochreiter. Fast and accurate deep network learning by exponential linear units (elus). arXiv preprint arXiv:1511.07289, 2015.

Alex Graves. Sequence transduction with recurrent neural networks. arXiv preprint arXiv:1211.3711, 2012.

Alex Graves, Greg Wayne, and Ivo Danihelka. Neural turing machines. arXiv preprint arXiv:1410.5401, 2014.

Kelvin Guu, John Miller, and Percy Liang. Traversing knowledge graphs in vector space. In EMNLP 2015, 2015.

Sepp Hochreiter and Jürgen Schmidhuber. Long short-term memory. Neural computation, 9(8):1735–1780, 1997.

Diederik Kingma and Jimmy Ba. Adam: A method for stochastic optimization. arXiv preprint arXiv:1412.6980, 2014.

Yujia Li, Daniel Tarlow, Marc Brockschmidt, and Richard Zemel. Gated graph sequence neural networks. arXiv preprint arXiv:1511.05493, 2015.

Tomas Mikolov, Kai Chen, Greg Corrado, and Jeffrey Dean. Efficient estimation of word representations in vector space. arXiv preprint arXiv:1301.3781, 2013.

Arvind Neelakantan, Benjamin Roth, and Andrew McCallum. Compositional vector space models for knowledge base completion. arXiv preprint, 2015a.

Arvind Neelakantan, Jeevan Shankar, Alexandre Passos, and Andrew McCallum. Efficient non-parametric estimation of multiple embeddings per word in vector space. arXiv preprint arXiv:1504.06654, 2015b.

Maximilian Nickel, Volker Tresp, and Hans-Peter Kriegel. A three-way model for collective learning on multi-relational data. In Proceedings of the 28th international conference on machine learning (ICML-11), pp. 809–816, 2011.

Richard Socher, Danqi Chen, Christopher D Manning, and Andrew Ng. Reasoning with neural tensor networks for knowledge base completion. In Advances in Neural Information Processing Systems, pp. 926–934, 2013.

Sainbayar Sukhbaatar, Jason Weston, Rob Fergus, et al. End-to-end memory networks. In Advances in neural information processing systems, pp. 2440–2448, 2015.

Ilya Sutskever, Oriol Vinyals, and Quoc V Le. Sequence to sequence learning with neural networks. In Advances in neural information processing systems, pp. 3104–3112, 2014.

Alan Mathison Turing. On computable numbers, with an application to the entscheidungsproblem: A correction. Proceedings of the London Mathematical Society, 2(1):544, 1938.

Luke Vilnis and Andrew McCallum. Word representations via gaussian embedding. arXiv preprint arXiv:1412.6623, 2014.

Zhen Wang, Jianwen Zhang, Jianlin Feng, and Zheng Chen. Knowledge graph embedding by translating on hyperplanes. In AAAI, pp. 1112–1119. Citeseer, 2014.

Jason Weston, Ron J Weiss, and Hector Yee. Nonlinear latent factorization by embedding multiple user interests. In Proceedings of the 7th ACM conference on Recommender systems, pp. 65–68. ACM, 2013.

Jason Weston, Sumit Chopra, and Antoine Bordes. Memory networks. arXiv preprint arXiv:1410.3916, 2014.

Jason D Williams, Eslam Kamal, Hani Amr Mokhtar Ashour, Jessica Miller, and Geoff Zweig.
Fast and easy language understanding for dialog systems with microsoft language understanding intelligent service (LUIS). In 16th Annual Meeting of the Special Interest Group on Discourse and Dialogue, pp. 159, 2015.

A WORLDCUP2014 DATASET

Table 3: Sample atomic triplets.

| Subject | Relation | Object |
|---|---|---|
| david villa | plays_for_country | spain |
| lionel messi | plays_in_club | fc barcelona |
| antoine griezmann | plays_position | forward |
| cristiano ronaldo | wears_number | 7 |
| fulham fc | is_in_country | england |
| lukas podolski | is_aged | 29 |

Table 4: Statistics of the WorldCup2014 dataset.

| Statistic | Value |
|---|---|
| # entity | 1127 |
| # atomic relations | 6 |
| # atomic triplets | 3977 |
| # relations (atomic and compositional) in path queries | 12 |
| # question and answer pairs in path queries (train / validation / test) | 5620 / 804 / 1579 |
| # types of questions in conjunctive queries | 3 |
| # question and answer pairs in conjunctive queries (train / validation / test) | 1564 / 224 / 420 |
| size of vocabulary | 1781 |

Table 5: Templates of questions. In the table, (player), (club), (position) are placeholders of named entities with associated type. (country 1) is a placeholder for a country name while (country 2) is a placeholder for the adjectival form of a country.

| # | Query template | Question template |
|---|---|---|
| 1 | Find e ∈ E : ((player), plays_in_club, e) is true | which club does (player) play for?<br>which professional football team does (player) play for?<br>which football club does (player) play for? |
| 2 | Find e ∈ E : ((player), plays_position, e) is true | what position does (player) play? |
| 3 | Find e ∈ E : ((player), is_aged, e) is true | how old is (player)?<br>what is the age of (player)? |
| 4 | Find e ∈ E : ((player), wears_number, e) is true | what is the jersey number of (player)?<br>what number does (player) wear? |
| 5 | Find e ∈ E : ((player), plays_for_country, e) is true | what is the nationality of (player)?<br>which national team does (player) play for?<br>which country is (player) from? |
| 6 | Find e ∈ E : ((club), is_in_country, e) is true | which country is the soccer team (club) based in? |
| 7 | Find e ∈ E : ((club), plays_in_club⁻¹, e) is true | name a player from (club)?<br>who plays at the soccer club (club)?<br>who is from the professional football team (club)?<br>who plays professionally at (club)? |
| 8 | Find e ∈ E : ((country 1), plays_for_country⁻¹, e) is true | which player is from (country 1)?<br>name a player from (country 1)?<br>who is from (country 1)?<br>who plays for the (country 1) national football team? |
| 9 | Find e ∈ E : ((position), plays_position⁻¹, e) is true | name a player who plays (position)?<br>who plays (position)? |
| 10 | Find e ∈ E : ((country 1), is_in_country⁻¹, e) is true | which soccer club is based in (country 1)?<br>name a soccer club in (country 1)? |
| 11 | Find e ∈ E : ((player), plays_in_club / is_in_country, e) is true | which country does (player) play professionally in?<br>where is the football club that (player) plays for? |
| 12 | Find e ∈ E : ((country 1), plays_for_country⁻¹ / plays_in_club, e) is true | which professional football team do players from (country 1) play for?<br>name a soccer club that has a player from (country 1)?<br>which professional football team has a player from (country 1)? |
| 13 | Find e ∈ E : ((position), plays_position⁻¹, e) is true and ((club), plays_in_club⁻¹, e) is true | who plays (position) for (club)?<br>who are the (position) at (club)?<br>name a (position) that plays for (club)? |
| 14 | Find e ∈ E : ((position), plays_position⁻¹, e) is true and ((country 1), plays_for_country⁻¹, e) is true | who plays (position) for (country 1)?<br>who are the (position) on (country 1) national team?<br>name a (position) from (country 1)?<br>which (country 2) footballer plays (position)?<br>name a (country 2) (position)? |
| 15 | Find e ∈ E : ((club), plays_in_club⁻¹, e) is true and ((country 1), plays_for_country⁻¹, e) is true | who are the (country 2) players at (club)?<br>which (country 2) footballer plays for (club)?<br>name a (country 2) player at (club)?<br>which player in (club) is from (country 1)? |

Table 6: (Composed) relations and sample questions in path queries.

| # | Relation | Type | Sample question | Sample answer |
|---|---|---|---|---|
| 1 | plays_in_club | many-to-one | which club does alan pulido play for?<br>which professional football team does klaas jan huntelaar play for? | tigres uanl<br>fc schalke 04 |
| 2 | plays_position | many-to-one | what position does gonzalo higuain play? | ssc napoli |
| 3 | is_aged | many-to-one | how old is samuel etoo?<br>what is the age of luis suarez? | 33<br>27 |
| 4 | wears_number | many-to-one | what is the jersey number of mario balotelli?<br>what number does shinji okazaki wear? | 9<br>9 |
| 5 | plays_for_country | many-to-one | which country is thomas mueller from?<br>what is the nationality of helder postiga? | germany<br>portugal |
| 6 | is_in_country | many-to-one | which country is the soccer team fc porto based in? | portugal |
| 7 | plays_in_club⁻¹ | one-to-many | who plays professionally at liverpool fc?<br>name a player from as roma? | steven gerrard<br>miralem pjanic |
| 8 | plays_for_country⁻¹ | one-to-many | which player is from iran?<br>name a player from italy? | masoud shojaei<br>daniele de rossi |
| 9 | plays_position⁻¹ | one-to-many | name a player who plays goalkeeper?<br>who plays forward? | gianluiqi buffon<br>raul jimenez |
| 10 | is_in_country⁻¹ | one-to-many | which soccer club is based in mexico?<br>name a soccer club in australia? | cruz azul fc<br>melbourne victory fc |
| 11 | plays_in_club / is_in_country | many-to-one | where is the club that edin dzeko plays for?<br>which country does sime vrsaljko play professionally in? | england<br>italy |
| 12 | plays_for_country⁻¹ / plays_in_club | many-to-many | name a soccer club that has a player from australia?<br>name a soccer club that has a player from spain? | crystal palace fc<br>fc barcelona |

Table 7: Conjunctive queries and sample questions.

| # | Relations | Sample questions | Entities in questions | Sample answer |
|---|---|---|---|---|
| 13 | plays_position⁻¹ and plays_in_club⁻¹ | who plays forward for fc barcelona?<br>who are the midfielders at fc bayern muenchen? | forward, fc barcelona<br>midfielder, fc bayern muenchen | lionel messi<br>toni kroos |
| 14 | plays_position⁻¹ and plays_for_country⁻¹ | who are the defenders on german national team?<br>which mexican footballer plays forward? | defender, germany<br>defender, mexico | per mertesacker<br>raul jimenez |
| 15 | plays_in_club⁻¹ and plays_for_country⁻¹ | which player in paris saint-germain fc is from argentina?<br>who are the korean players at beijing guoan? | paris saint-germain fc, argentina<br>beijing guoan, korea | ezequiel lavezzi<br>ha daesung |

B TRANSGAUSSIAN EMBEDDING OF WORLDCUP2014

We trained our TransGaussian model on triplets and paths from the WorldCup2014 dataset and illustrated the embeddings in Figs. 3 and 4. Recall that we modeled every relation as a Gaussian with a diagonal covariance matrix. Fig. 3 shows the learned variance parameters of different relations. Each row corresponds to the variances of one relation. Columns are permuted to reveal the block structure. From this figure, we can see that every relation has a small variance in two or more dimensions. This implies that the coordinates of the embedding space are partitioned into semantically coherent clusters, each of which represents a particular attribute of a player (or a football club).

To verify this further, we picked the two coordinates in which a relation (e.g., plays_position) has the least variance and projected the embeddings of all valid subjects and objects (e.g., players and positions) of the relation onto this two-dimensional subspace. See Fig. 4. The relation between the subjects and the objects is simply a translation in the projection when the corresponding subspace is two-dimensional (e.g., the plays_position relation in Fig. 4 (a)). The same is true for other relations that require more dimensions, but these are more challenging to visualize in two dimensions.
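
The projection step described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the authors' code: the function names `least_variance_dims` and `project`, the entity names, and all numeric values are hypothetical, and a five-dimensional toy embedding stands in for the learned one.

```python
def least_variance_dims(variance, k=2):
    """Return the indices of the k coordinates with the smallest variance."""
    order = sorted(range(len(variance)), key=lambda i: variance[i])
    return order[:k]


def project(embedding, dims):
    """Keep only the selected coordinates of one embedding vector."""
    return [embedding[i] for i in dims]


# Hypothetical toy values: the diagonal variance of one relation
# (5 dimensions) and the embeddings of two entities.
relation_variance = [1.2, 0.05, 0.9, 0.02, 1.5]
entity_embeddings = {
    "player_a": [0.1, 0.3, -0.2, 0.4, 0.0],
    "position_x": [0.5, -0.1, 0.2, 0.6, 0.7],
}

# Select the two lowest-variance coordinates and project every entity onto them.
dims = least_variance_dims(relation_variance)
projected = {name: project(vec, dims) for name, vec in entity_embeddings.items()}
print(dims, projected)
```

Sorting the variance vector once per relation is enough here because the covariance is diagonal, so each coordinate can be judged independently of the others.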

For relations that have a large number of unique objects, we plotted only the eight objects with the most subjects for clarity of illustration.

Furthermore, in order to elucidate whether we are limited by the capacity of the TransGaussian embedding or by the ability to decode questions expressed in natural language, we evaluated the test question-answer pairs using the TransGaussian embedding composed according to the ground-truth relations and entities. The results were evaluated with the same metrics as in Sec. 4.3. This evaluation was conducted for the TransE embeddings as well. See Table 8 for the results. Compared to Table 2, the accuracy of TransGaussian (COMP) is higher on the atomic relations and path queries but lower on conjunctive queries. This is natural because when the query is simple there is not much room for the question-answering network to improve upon just combining the relations according to the ground-truth relations, whereas when the query is complex the network could combine the embeddings in a more creative way to overcome its limitation. In fact, the two queries (#10 and #12) on which TransGaussian (COMP) did not perform well in Table 2 pertain to a single relation is_in_country⁻¹ (#10) and a composition of two relations plays_for_country⁻¹ / plays_in_club (#12). The performance on these two queries was low even when the ground-truth relations were given, which indicates that the TransGaussian embedding rather than the question-answering network is the limiting factor.

[Figure 3 image: plot titled "Variance of relations in every dimension"; horizontal axis: dimension; vertical axis: relation (the six atomic relations and their inverses); color scale from 0.2 to 1.6.]

Figure 3: Variance of each relation. Each row shows the diagonal values in the variance matrix associated with a relation. Columns are permuted to reveal the block structure.

[Figure 4 image: six scatter plots, each over two embedding dimensions: (a) plays_position (dimensions #9 and #13), (b) plays_for_country (dimensions #29 and #25), (c) is_aged (dimensions #26 and #27), (d) wears_number (dimensions #0 and #17), (e) plays_in_club (dimensions #5 and #10), (f) is_in_country (dimensions #29 and #25).]

Figure 4: TransGaussian entity embeddings. Crosses are the subjects and circles are the objects of a relation. Specifically, crosses are players in (a)-(e) and professional football clubs in (f).

Table 8: Evaluation of embeddings. We evaluate the embeddings by feeding the correct entities and relations from a path or conjunctive query to an embedding model and using its scoring function to retrieve the answers from the embedded knowledge base.

| # | Relation | TransE (SINGLE) H@1 (%) | TransE (SINGLE) Mean Filtered Rank | TransE (COMP) H@1 (%) | TransE (COMP) Mean Filtered Rank | TransGaussian (SINGLE) H@1 (%) | TransGaussian (SINGLE) Mean Filtered Rank | TransGaussian (COMP) H@1 (%) | TransGaussian (COMP) Mean Filtered Rank |
|---|---|---|---|---|---|---|---|---|---|
| 1 | plays_in_club | 75.54 | 1.38 | 93.48 | 1.09 | **99.86** | **1.00** | 98.51 | 1.02 |
| 2 | plays_position | 96.33 | 1.04 | 94.02 | 1.09 | 98.37 | 1.02 | **100.00** | **1.00** |
| 3 | is_aged | 55.03 | 1.69 | 91.44 | 1.12 | 96.88 | 1.03 | **100.00** | **1.00** |
| 4 | wears_number | 38.86 | 2.09 | 78.67 | 1.32 | 95.92 | 1.04 | **100.00** | **1.00** |
| 5 | plays_for_country | 71.60 | 1.39 | 94.84 | 1.10 | 99.32 | 1.01 | **100.00** | **1.00** |
| 6 | is_in_country | 98.32 | 1.03 | 99.66 | 1.00 | 99.33 | 1.01 | **100.00** | **1.00** |
| 7 | plays_in_club⁻¹ | 87.50 | 1.46 | 83.42 | 1.45 | 94.70 | 1.07 | **97.42** | **1.03** |
| 8 | plays_for_country⁻¹ | 82.47 | 1.68 | 68.21 | 3.37 | 25.27 | 5.66 | **98.78** | **1.02** |
| 9 | plays_position⁻¹ | **100.00** | **1.00** | 75.54 | 1.60 | 13.59 | 24.35 | 98.78 | 1.02 |
| 10 | is_in_country⁻¹ | 23.11 | 26.92 | **23.48** | **23.27** | 8.32 | 130.59 | 19.41 | 83.61 |
| 11 | plays_in_club / is_in_country | 20.24 | 7.05 | 58.29 | 1.98 | 46.88 | 2.99 | **80.16** | **1.38** |
| 12 | plays_for_country⁻¹ / plays_in_club | 25.32 | 22.27 | **27.73** | **10.04** | 19.04 | 35.59 | 20.15 | 33.01 |
| | Overall (Path relations) | 64.64 | 5.09 | 75.02 | **3.59** | 67.22 | 14.87 | **86.73** | 8.79 |
| 13 | plays_position⁻¹ and plays_in_club⁻¹ | 91.85 | 1.20 | 69.97 | 1.82 | 77.45 | 1.83 | **95.38** | **1.06** |
| 14 | plays_position⁻¹ and plays_for_country⁻¹ | 91.71 | 1.23 | 66.71 | 2.85 | 51.49 | 4.88 | **97.83** | **1.05** |
| 15 | plays_in_club⁻¹ and is_in_country⁻¹ | 88.59 | 1.20 | 73.37 | 1.80 | 83.42 | 1.34 | **94.70** | **1.08** |
| | Overall (Conj. relations) | 90.72 | 1.21 | 70.02 | 2.16 | 70.79 | 2.68 | **95.97** | **1.06** |

C KNOWLEDGE BASE COMPLETION

Knowledge base completion is a common task for testing the ability of knowledge base models to generalize to unseen facts. Here, we apply our TransGaussian model to a knowledge base completion task and show that it has competitive performance.

We tested on the subset of WordNet released by Guu et al. (2015). The atomic triplets in this dataset were originally created by Socher et al. (2013), and Guu et al. (2015) added path queries that were randomly sampled from the knowledge graph. We built our TransGaussian model by training on these triplets and paths and tested it on the same link prediction task as Socher et al. (2013) and Guu et al. (2015).

As done by Guu et al. (2015), we trained TransGaussian (SINGLE) with atomic triplets only and trained TransGaussian (COMP) with the union of atomic triplets and paths. We did not incorporate word embeddings in this task, and each entity was assigned its own vector. Without extensive parameter tuning, TransGaussian (COMP) obtained accuracy comparable to TransE (COMP). See Table 11.

Table 9: Experimental results of path queries on WorldCup2014.

| # | Relation and sample question | TransE (SINGLE) H@1 (%) | TransE (SINGLE) Mean Filtered Rank | TransE (COMP) H@1 (%) | TransE (COMP) Mean Filtered Rank | TransGaussian (SINGLE) H@1 (%) | TransGaussian (SINGLE) Mean Filtered Rank | TransGaussian (COMP) H@1 (%) | TransGaussian (COMP) Mean Filtered Rank |
|---|---|---|---|---|---|---|---|---|---|
| 1 | plays_in_club (which club does alan pulido play for?) | 90.60 | 1.12 | 92.62 | 1.11 | 96.64 | **1.03** | **97.99** | **1.03** |
| 2 | plays_position (what position does gonzalo higuain play?) | **100.00** | **1.00** | 98.11 | 1.02 | 98.74 | 1.01 | **100.00** | **1.00** |
| 3 | is_aged (how old is samuel etoo?) | 81.58 | 1.30 | 92.11 | 1.10 | 96.05 | 1.04 | **100.00** | **1.00** |
| 4 | wears_number (what is the jersey number of mario balotelli?) | 44.29 | 1.88 | 85.71 | 1.19 | 96.43 | 1.04 | **100.00** | **1.00** |
| 5 | plays_for_country (which country is thomas mueller from?) | 97.60 | 1.02 | 94.40 | 1.11 | 98.40 | 1.02 | **99.20** | **1.01** |
| 6 | is_in_country (which country is the soccer team fc porto based in?) | **98.48** | **1.02** | **98.48** | **1.02** | 93.94 | 1.08 | **98.48** | **1.02** |
| 7 | plays_in_club⁻¹ (who plays professionally at liverpool fc?) | 95.12 | 1.08 | 86.99 | 1.38 | **96.75** | **1.03** | **96.75** | **1.03** |
| 8 | plays_for_country⁻¹ (which player is from iran?) | 81.16 | 1.61 | 72.46 | 2.36 | 40.58 | 3.19 | **93.24** | **1.48** |
| 9 | plays_position⁻¹ (name a player who plays goalkeeper?) | **100.00** | **1.00** | 30.21 | 2.30 | 55.21 | 5.09 | 85.42 | 1.15 |
| 10 | is_in_country⁻¹ (which soccer club is based in mexico?) | **24.58** | 11.47 | 23.73 | 10.07 | 5.08 | **9.18** | 17.80 | 20.10 |
| 11 | plays_in_club / is_in_country (where is the club that edin dzeko plays for?) | 48.68 | 4.24 | 62.50 | 2.07 | 48.03 | 2.41 | **76.97** | **1.50** |
| 12 | plays_for_country⁻¹ / plays_in_club (name a soccer club that has a player from australia?) | **34.78** | **9.49** | 30.43 | 11.26 | 6.52 | 9.88 | 16.30 | 20.27 |
| | Overall | 74.92 | 2.80 | 74.35 | **2.71** | 70.17 | 2.82 | **84.42** | 3.68 |

Table 10: Experimental results of conjunctive queries on WorldCup2014.

| # | Relation and sample question | TransE (SINGLE) H@1 (%) | TransE (SINGLE) Mean Filtered Rank | TransE (COMP) H@1 (%) | TransE (COMP) Mean Filtered Rank | TransGaussian (SINGLE) H@1 (%) | TransGaussian (SINGLE) Mean Filtered Rank | TransGaussian (COMP) H@1 (%) | TransGaussian (COMP) Mean Filtered Rank |
|---|---|---|---|---|---|---|---|---|---|
| 13 | plays_position⁻¹ and plays_in_club⁻¹ (who plays forward for fc barcelona?) | 94.48 | 1.10 | 71.17 | 1.77 | 87.12 | 1.37 | **98.77** | **1.02** |
| 14 | plays_position⁻¹ and plays_for_country⁻¹ (who are the defenders on german national team?) | 95.93 | 1.08 | 76.42 | 2.50 | 64.23 | 2.02 | **100.00** | **1.00** |
| 15 | plays_in_club⁻¹ and is_in_country⁻¹ (which player in ssc napoli is from argentina?) | 91.79 | 1.13 | 75.37 | 1.75 | 88.06 | 1.37 | **94.03** | **1.07** |
| | Overall | 94.05 | 1.11 | 74.05 | 1.97 | 80.71 | 1.56 | **97.62** | **1.03** |

Table 11: Accuracy of knowledge base completion on WordNet.

| Model | Accuracy (%) |
|---|---|
| TransE (SINGLE) | 68.5 |
| TransE (COMP) | 80.3 |
| TransGaussian (SINGLE) | 58.4 |
| TransGaussian (COMP) | 76.4 |
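
The H@1 and mean filtered rank columns reported in the tables above can be computed along the following lines. This is a sketch under assumptions, not the authors' evaluation code: it assumes the common filtered protocol in which the other correct answers to the same query are removed before ranking, and the names `filtered_rank` and `evaluate` as well as the toy scores are hypothetical.

```python
def filtered_rank(scores, answer, correct):
    """Rank of `answer` (1 = best) after removing the other correct answers.

    scores:  dict mapping each candidate entity to its score (higher is better)
    answer:  the correct answer being evaluated
    correct: set of all correct answers to the query (assumed to contain `answer`)
    """
    target = scores[answer]
    better = sum(
        1
        for entity, score in scores.items()
        if score > target and entity not in correct
    )
    return better + 1


def evaluate(examples):
    """Return (H@1 in percent, mean filtered rank) over (scores, answer, correct) triples."""
    ranks = [filtered_rank(s, a, c) for s, a, c in examples]
    hits_at_1 = 100.0 * sum(r == 1 for r in ranks) / len(ranks)
    mean_rank = sum(ranks) / len(ranks)
    return hits_at_1, mean_rank


# Hypothetical scores for four candidate entities.
scores = {"a": 0.9, "b": 0.8, "c": 0.5, "d": 0.1}
examples = [
    (scores, "b", {"a", "b"}),  # "a" outranks "b" but is also correct, so it is filtered out
    (scores, "c", {"c"}),       # "a" and "b" both outrank "c" and neither is correct
]
print(evaluate(examples))
```

Filtering matters most for one-to-many relations such as plays_position⁻¹, where many entities are valid answers and an unfiltered rank would penalize a model for ranking another correct player first.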
