Column Networks for Collective Classification

Relational learning deals with data that are characterized by relational structures. An important task is collective classification, which is to jointly classify networked objects. While it holds a great promise to produce a better accuracy than non-…

Authors: Trang Pham, Truyen Tran, Dinh Phung, Svetha Venkatesh

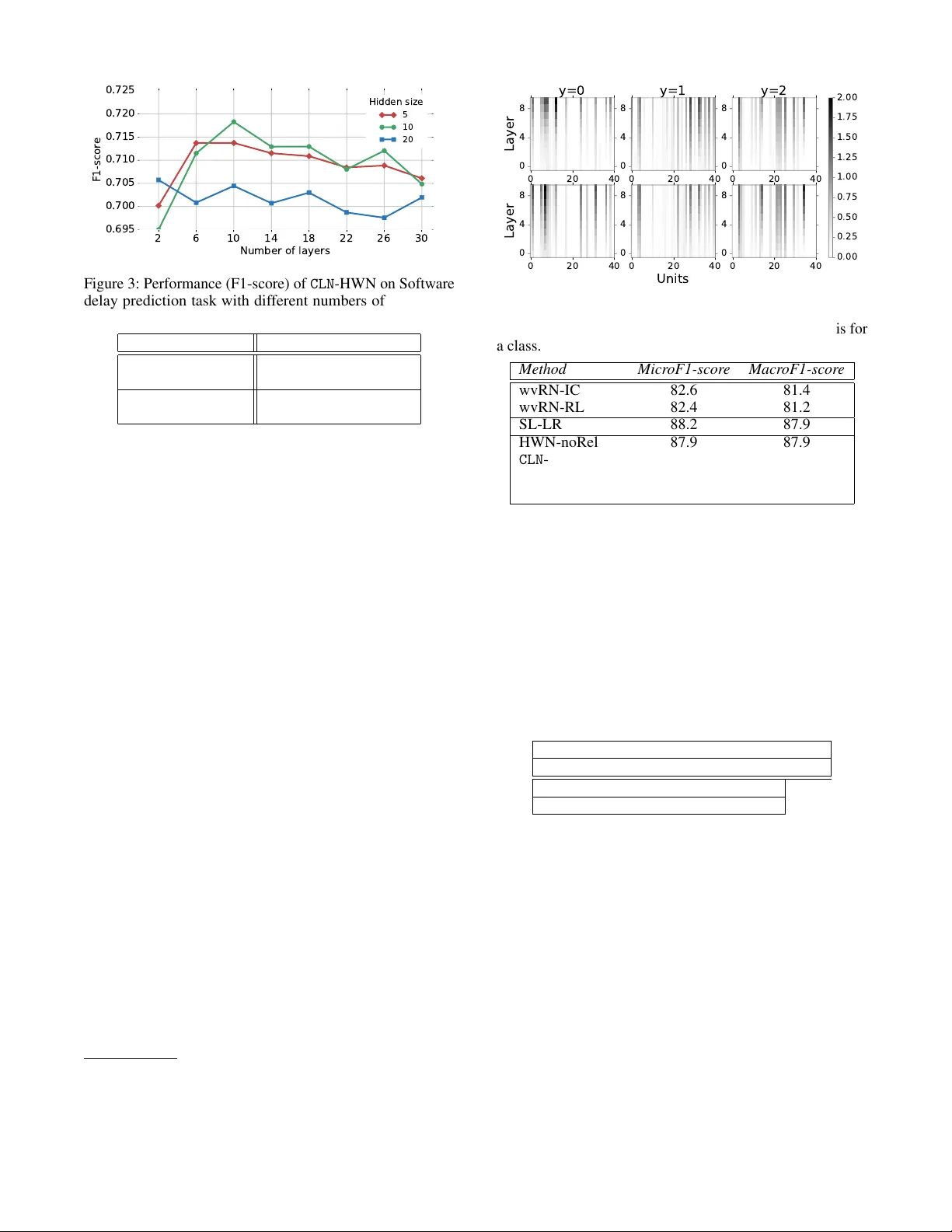

Trang Pham, Truyen Tran, Dinh Phung and Svetha Venkatesh
Deakin University, Australia
{phtra, truyen.tran, dinh.phung, svetha.venkatesh}@deakin.edu.au

Abstract

Relational learning deals with data that are characterized by relational structures. An important task is collective classification, which is to jointly classify networked objects. While it holds great promise to produce better accuracy than non-collective classifiers, collective classification is computationally challenging and has not leveraged the recent breakthroughs of deep learning. We present Column Network (CLN), a novel deep learning model for collective classification in multi-relational domains. CLN has many desirable theoretical properties: (i) it encodes multi-relations between any two instances; (ii) it is deep and compact, allowing complex functions to be approximated at the network level with a small set of free parameters; (iii) local and relational features are learned simultaneously; (iv) long-range, higher-order dependencies between instances are supported naturally; and (v) crucially, learning and inference are efficient, with complexity linear in the size of the network and the number of relations. We evaluate CLN on multiple real-world applications: (a) delay prediction in software projects, (b) PubMed Diabetes publication classification and (c) film genre classification. In all of these applications, CLN demonstrates higher accuracy than state-of-the-art rivals.

1 Introduction

Relational data are characterized by relational structures between objects or data instances. For example, research publications are linked by citations, web pages are connected by hyperlinks, and movies are related through shared directors or actors. Using relations may improve classification performance, as relations between entities may be indicative of relations between classes.
A canonical task in learning from this data type is collective classification, in which networked data instances are classified simultaneously rather than independently to exploit the dependencies in the data (Macskassy and Provost 2007; Neville and Jensen 2007; Richardson and Domingos 2006; Sen et al. 2008). Collective classification is, however, highly challenging. Exact collective inference under general dependencies is intractable. For tractable learning, we often resort to surrogate loss functions such as (structured) pseudo-likelihood (Sutton and McCallum 2007), approximate gradient (Hinton 2002), or iterative schemes such as stacked learning (Choetkiertikul et al. 2015; Kou and Cohen 2007; Macskassy and Provost 2007; Neville and Jensen 2000).

Existing models designed for collective classification are mostly shallow and do not emphasize learning of local and relational features. Deep neural networks, on the other hand, offer automatic feature learning, which is arguably the key behind recent record-breaking successes in vision, speech, games and NLP (LeCun, Bengio, and Hinton 2015; Mnih et al. 2015). Given the known challenges in relational learning, can we design a deep neural network that is efficient and accurate for collective classification? There has been recent work that combines deep learning with structured prediction, but the main learning and inference problems for general multi-relational settings remain open (Belanger and McCallum 2016; Do, Arti, and others 2010; Tompson et al. 2014; Yu, Wang, and Deng 2010; Zheng et al. 2015).

In this paper, we present Column Network (CLN), an efficient deep learning model for multi-relational data, with emphasis on collective classification. The design of CLN is partly inspired by the columnar organization of the neocortex (Mountcastle 1997), in which cortical neurons are organized in vertical, layered mini-columns, each of which is responsible for a small receptive field.
Communications between mini-columns are enabled through short-range horizontal connections. In CLN, each mini-column is a feedforward net that takes an input vector, which plays the role of a receptive field, and produces an output class. Each mini-column net not only learns from its own data but also exchanges features with neighbor mini-columns along the pathway from the input to the output. Despite the short-range exchanges, the interaction range between mini-columns increases with depth, thus enabling long-range dependencies between data objects.

To be able to learn with hundreds of layers, we leverage the recently introduced highway nets (Srivastava, Greff, and Schmidhuber 2015) as models for mini-columns. With this design choice, CLN becomes a network of interacting highway nets. But unlike the original highway nets, CLN's hidden layers share the same set of parameters, allowing the depth to grow without introducing new parameters (Liao and Poggio 2016; Pham et al. 2016). Functionally, if feedforward nets and highway nets are function approximators for an input vector, CLN can be thought of as an approximator of a grand function that takes a complex network of vectors as input and returns multiple outputs. CLN has many desirable theoretical properties: (i) it encodes multi-relations between any two instances; (ii) it is deep and compact, allowing complex functions to be approximated at the network level with a small set of free parameters; (iii) local and relational features are learned simultaneously; (iv) long-range, higher-order dependencies between instances are supported naturally; and (v) crucially, learning and inference are efficient, linear in the size of the network and the number of relations.

We evaluate CLN on real-world applications: (a) delay prediction in software projects, (b) PubMed Diabetes publication classification and (c) film genre classification.
In all applications, CLN demonstrates higher accuracy than state-of-the-art rivals.

2 Preliminaries

Notation convention: We use capital letters for matrices and bold lowercase letters for vectors. The sigmoid function of a scalar $x$ is defined as $\sigma(x) = [1 + \exp(-x)]^{-1}$, $x \in \mathbb{R}$. A function $g$ of a vector $\mathbf{x}$ is applied element-wise: $g(\mathbf{x}) = (g(x_1), ..., g(x_n))$. The operator $*$ denotes element-wise multiplication. We use a superscript $t$ (e.g., $\mathbf{h}^t$) to denote layers or computational steps in neural networks, and a subscript $i$ for the $i$-th element in a set (e.g., $\mathbf{h}^t_i$ is the hidden activation at layer $t$ of entity $i$ in a graph).

2.1 Collective Classification in Multi-relational Setting

We describe the collective classification setting under multiple relations. We are given a graph of entities $\mathcal{G} = \{\mathcal{E}, \mathcal{R}, \mathcal{X}, \mathcal{Y}\}$, where $\mathcal{E} = \{e_1, ..., e_N\}$ are $N$ entities that connect through relations in $\mathcal{R}$. Each tuple $\{e_j, e_i, r\} \in \mathcal{R}$ describes a relation of type $r$ ($r = 1, ..., R$, where $R$ is the number of relation types in $\mathcal{G}$) from entity $e_j$ to entity $e_i$. Two entities can connect through multiple relations. A relation can be unidirectional or bidirectional. For example, movie A and movie B may be linked by a unidirectional relation sequel(A,B) and two bidirectional relations: same-actor(A,B) and same-director(A,B).

Entities and relations can be represented in an entity graph, where a node represents an entity and an edge exists between two nodes if they have at least one relation. Furthermore, $e_j$ is a neighbor of $e_i$ if there is a link from $e_j$ to $e_i$. Let $\mathcal{N}(i)$ be the set of all neighbors of $e_i$ and $\mathcal{N}_r(i)$ be the set of neighbors related to $e_i$ through relation $r$. This immediately implies $\mathcal{N}(i) = \bigcup_{r=1}^{R} \mathcal{N}_r(i)$.

$\mathcal{X} = \{\mathbf{x}_1, ..., \mathbf{x}_N\}$ is the set of local features, where $\mathbf{x}_i$ is the feature vector of entity $e_i$; and $\mathcal{Y} = \{y_1, ..., y_N\}$, where each $y_i \in \{1, ..., L\}$ is the label of $e_i$. $y_i$ can either be observed or latent.
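As an illustration of the setting above, the following minimal Python sketch builds the per-relation neighbor sets $\mathcal{N}_r(i)$ from relation tuples $(e_j, e_i, r)$ and recovers $\mathcal{N}(i)$ as their union. The function names, the integer entity ids and the `bidirectional` argument are assumptions introduced for this example only; they do not come from the paper or its released code.

```python
from collections import defaultdict

def build_neighbor_sets(relations, bidirectional=frozenset()):
    """Map (i, r) -> set of j such that a type-r link goes from e_j to e_i.

    `relations` holds (j, i, r) tuples; relation types listed in
    `bidirectional` are stored in both directions (illustrative convention).
    """
    n_r = defaultdict(set)
    for j, i, r in relations:
        n_r[(i, r)].add(j)
        if r in bidirectional:
            n_r[(j, r)].add(i)
    return dict(n_r)

def all_neighbors(n_r, i):
    """N(i): union of N_r(i) over all relation types r."""
    result = set()
    for (target, _rel), sources in n_r.items():
        if target == i:
            result |= sources
    return result

# Movie A has id 0 and movie B has id 1, mirroring the example in the text.
relations = [(0, 1, "sequel"), (0, 1, "same-actor"), (0, 1, "same-director")]
n_r = build_neighbor_sets(relations, bidirectional={"same-actor", "same-director"})
print(all_neighbors(n_r, 0), all_neighbors(n_r, 1))
```

Storing a bidirectional relation as two directed links matches the convention used for Fig. 1 in Sec. 2.2.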
Given a set of known-label entities $\mathcal{E}_{obs}$, a collective classification algorithm simultaneously infers the unknown labels of entities in the set $\mathcal{E}_{hid} = \mathcal{E} \setminus \mathcal{E}_{obs}$. In our probabilistic setting, we assume the classifier produces an estimate of the joint conditional distribution $P(\mathcal{Y} \mid \mathcal{G})$.

It is challenging to learn and infer about $P(\mathcal{Y} \mid \mathcal{G})$. A popular strategy is to employ approximate but efficient iterative methods (Macskassy and Provost 2007). In the next subsection, we describe a highly effective strategy known as stacked learning, which partly inspires our work.

2.2 Stacked Learning

Figure 1: Collective classification with Stacked Learning (SL). (Left): A graph with 4 entities connected by unidirectional and bidirectional links. (Right): SL model for the graph with three steps, where $\mathbf{x}_i$ is the feature vector of entity $e_i$. The bidirectional link between $e_1$ and $e_2$ is modeled as two unidirectional links, from $e_1$ to $e_2$ and vice versa.

Stacked learning (Fig. 1) is a multi-step learning procedure for collective classification (Choetkiertikul et al. 2015; Kou and Cohen 2007; Yu, Wang, and Deng 2010). At step $t-1$, a classifier is used to predict class probabilities for entity $e_j$, i.e., $\mathbf{p}^{t-1}_j = [P^{t-1}(y_j = 1), ..., P^{t-1}(y_j = L)]$. These intermediate outputs are then used as relational features for neighbor classifiers in the next step. In (Choetkiertikul et al. 2015), each relation produces one set of contextual features, where all features of the same relation are averaged:

$$\mathbf{c}^t_{ir} = \frac{1}{|\mathcal{N}_r(i)|} \sum_{j \in \mathcal{N}_r(i)} \mathbf{p}^{t-1}_j \tag{1}$$

where $\mathbf{c}^t_{ir}$ is the relational feature vector for relation $r$ at step $t$. The output at step $t$ is predicted as follows:

$$P^t(y_i) = f^t\left(\mathbf{x}_i, \mathbf{p}^{t-1}_i, \mathbf{c}^t_{i1}, \mathbf{c}^t_{i2}, ..., \mathbf{c}^t_{iR}\right) \tag{2}$$

where $f^t$ is the classifier at step $t$. When $t = 1$, the model uses only the local features of entities for classification, i.e., $\mathbf{c}^1_{ir} = \mathbf{0}$ and $\mathbf{p}^0_i = \mathbf{0}$.
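The contextual features of Eq. (1) and the input assembled for the classifier $f^t$ in Eq. (2) can be sketched in a few lines of Python. This is a hedged illustration, not the authors' implementation: the helper names are hypothetical, the classifier $f^t$ itself is left out, and returning a zero vector for an entity with no neighbors under a relation is an assumption made here to keep the feature length fixed.

```python
def mean_context(prev_probs, neighbor_ids, num_classes):
    """Eq. (1): average the neighbours' class probabilities from step t-1.

    Returns a zero vector when there are no neighbours (assumption).
    """
    if not neighbor_ids:
        return [0.0] * num_classes
    totals = [0.0] * num_classes
    for j in neighbor_ids:
        for l in range(num_classes):
            totals[l] += prev_probs[j][l]
    return [v / len(neighbor_ids) for v in totals]

def stacked_input(x_i, p_prev_i, contexts):
    """Arguments of f^t in Eq. (2), concatenated into one feature vector."""
    features = list(x_i) + list(p_prev_i)
    for c in contexts:
        features.extend(c)
    return features

# Two classes; entity 1 has neighbours 0 and 2 under a single relation.
prev_probs = {0: [0.75, 0.25], 1: [0.5, 0.5], 2: [0.25, 0.75]}
ctx = mean_context(prev_probs, [0, 2], num_classes=2)
feats = stacked_input([1.0], prev_probs[1], [ctx])
print(ctx, feats)
```

With $R$ relations and $L$ classes, the vector passed to $f^t$ has length $M + L + RL$, where $M$ is the number of local features.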
At each step of stacked learning, the classifiers are trained sequentially on the known-label entities.

3 Column Networks

In this section we present our main contribution, the Column Network (CLN).

3.1 Architecture

Inspired by the columnar organization in the neocortex (Mountcastle 1997), the CLN has one mini-column per entity (or data instance), which is akin to a sensory receptive field. Each column is a feedforward net that passes information from a lower layer to a higher layer of its own, and to higher layers of its neighbors (see Fig. 2 for a CLN that models the graph in Fig. 1 (Left)). The nature of the inter-column communication is dictated by the relations between the two entities. Through multiple layers, long-range dependencies are established (see Sec. 3.4 for a more in-depth discussion). This somewhat resembles the strategy used in stacked learning, as described in Sec. 2.2. The main difference is that in CLN the intermediate steps do not output class probabilities but learn higher abstractions of instance features and relational features. As such, our model is end-to-end in the sense that receptive signals are passed from the bottom to the top, and abstract features are inferred along the way. Likewise, the training signals are passed from the top to the bottom.

Figure 2: CLN for the graph in Fig. 1 (Left) with 2 hidden layers ($\mathbf{h}^1$ and $\mathbf{h}^2$).

Denote by $\mathbf{x}_i \in \mathbb{R}^M$ and $\mathbf{h}^t_i \in \mathbb{R}^{K^t}$ the input feature vector and the hidden activation at layer $t$ of entity $e_i$, respectively. If there is a connection from entity $e_j$ to $e_i$, $\mathbf{h}^{t-1}_j$ serves as an input for $\mathbf{h}^t_i$. Generally, $\mathbf{h}^t_i$ is a non-linear function of $\mathbf{h}^{t-1}_i$ and the previous hidden states of its neighbors:

$$\mathbf{h}^t_i = g\left(\mathbf{h}^{t-1}_i, \mathbf{h}^{t-1}_{j_1}, ..., \mathbf{h}^{t-1}_{j_{|\mathcal{N}(i)|}}\right)$$

where $j \in \mathcal{N}(i)$ and $\mathbf{h}^0_i$ is the input vector $\mathbf{x}_i$.

We borrow the idea of stacked learning (Sec. 2.2) to handle multiple relations in CLN. The context of relation $r$ ($r = 1, ..., R$) at layer $t$ in Eq. (1) is replaced by

$$\mathbf{c}^t_{ir} = \frac{1}{|\mathcal{N}_r(i)|} \sum_{j \in \mathcal{N}_r(i)} \mathbf{h}^{t-1}_j \tag{3}$$

Furthermore, different from stacked learning, the contexts in CLN are abstracted features, i.e., we replace Eq. (2) by

$$\mathbf{h}^t_i = g\left(\mathbf{b}^t + W^t \mathbf{h}^{t-1}_i + \frac{1}{z} \sum_{r=1}^{R} V^t_r \mathbf{c}^t_{ir}\right) \tag{4}$$

where $W^t \in \mathbb{R}^{K^t \times K^{t-1}}$ and $V^t_r \in \mathbb{R}^{K^t \times K^{t-1}}$ are weight matrices and $\mathbf{b}^t$ is a bias vector, for some activation function $g$; $z$ is a pre-defined constant which is used to prevent the sum of parameterized contexts from growing too large for complex relations. At the top layer $T$, for example, the label probability for entity $i$ is given as:

$$P(y_i = l) = \mathrm{softmax}\left(\mathbf{b}_l + W_l \mathbf{h}^T_i\right)$$

Remark: There are several similarities between CLN and existing neural network operations. Eq. (3) implements mean-pooling, an operation often seen in CNNs. The main difference from the standard CNN is that the mean pooling does not reduce the graph size. This suggests other forms of pooling, such as max-pooling or sum-pooling. Asymmetric pooling can also be implemented based on the concept of attention, that is, Eq. (3) can be replaced by:

$$\mathbf{c}^t_{ir} = \sum_{j \in \mathcal{N}_r(i)} \alpha_j \mathbf{h}^{t-1}_j$$

subject to $\sum_{j \in \mathcal{N}_r(i)} \alpha_j = 1$ and $\alpha_j \ge 0$.

Eq. (4) implements a convolution. For example, standard 3x3 convolutional kernels in images implement 8 relations: left, right, above, below, above-left, above-right, below-left, below-right. Provided that the relations are shared between nodes, the CLN achieves translation invariance, similar to that in CNNs.

3.2 Highway Network as Mini-Column

We now specify the details of a mini-column, which we implement by extending a recently introduced feedforward net called the Highway Network (Srivastava, Greff, and Schmidhuber 2015). Recall that traditional feedforward nets have major difficulty learning with a large number of layers. This is due to the nested non-linear structure, which hinders the passing of information and gradients along the computational path.
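Looking back at Sec. 3.1, one CLN layer (the mean-pooled contexts of Eq. (3) followed by the update of Eq. (4)) can be sketched in plain Python. This is a minimal illustration under stated assumptions rather than the released implementation: the function names are hypothetical, $g$ is taken to be ReLU (the activation reported in Sec. 4.2), matrices are lists of rows, and an entity with no neighbors under a relation receives a zero context.

```python
def matvec(W, v):
    """Matrix-vector product for a matrix stored as a list of rows."""
    return [sum(w * x for w, x in zip(row, v)) for row in W]

def relu(v):
    return [max(0.0, x) for x in v]

def mean_pool(h_prev, nbrs, dim):
    """Eq. (3): mean of neighbour activations; zero vector if none (assumption)."""
    if not nbrs:
        return [0.0] * dim
    return [sum(h_prev[j][k] for j in nbrs) / len(nbrs) for k in range(dim)]

def cln_layer(h_prev, neighbors_by_rel, W, V, b, z):
    """Eq. (4) for every entity.

    h_prev: dict entity -> activation at layer t-1
    neighbors_by_rel: one dict per relation r, mapping i -> list of j in N_r(i)
    W, V[r]: weight matrices; b: bias vector; z: normalising constant
    """
    h_new = {}
    for i, h_i in h_prev.items():
        pre = [b_k + w_k for b_k, w_k in zip(b, matvec(W, h_i))]
        for r, nbrs in enumerate(neighbors_by_rel):
            c_ir = mean_pool(h_prev, nbrs.get(i, []), len(h_i))
            pre = [p + vc / z for p, vc in zip(pre, matvec(V[r], c_ir))]
        h_new[i] = relu(pre)
    return h_new

# Two entities, one relation with a single link from entity 0 to entity 1.
I2 = [[1.0, 0.0], [0.0, 1.0]]
h0 = {0: [1.0, 2.0], 1: [3.0, -4.0]}
h1 = cln_layer(h0, [{1: [0]}], W=I2, V=[I2], b=[0.0, 0.0], z=1.0)
print(h1)
```

Each entity touches each of its links once per layer, which is consistent with the linear cost in network size and number of relations claimed in the introduction.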
Highway nets address this difficulty by partially opening the gate that lets previous states propagate through layers, as follows:

$$\mathbf{h}^t = \boldsymbol{\alpha}_1 * \tilde{\mathbf{h}}^t + \boldsymbol{\alpha}_2 * \mathbf{h}^{t-1} \tag{5}$$

where $\tilde{\mathbf{h}}^t$ is a nonlinear candidate function of $\mathbf{h}^{t-1}$ and $\boldsymbol{\alpha}_1, \boldsymbol{\alpha}_2 \in (\mathbf{0}, \mathbf{1})$ are learnable gates. Since the gates are never shut down completely, data signals and error gradients can propagate very far in a deep net.

For modeling relations, the candidate function $\tilde{\mathbf{h}}^t$ in Eq. (5) is computed using Eq. (4). Likewise, the gates are modeled as:

$$\boldsymbol{\alpha}_1 = \sigma\left(\mathbf{b}^t_\alpha + W^t_\alpha \mathbf{h}^{t-1}_i + \frac{1}{z} \sum_{r=1}^{R} V^t_{\alpha r} \mathbf{c}^t_{ir}\right) \tag{6}$$

and $\boldsymbol{\alpha}_2 = \mathbf{1} - \boldsymbol{\alpha}_1$ for compactness (Srivastava, Greff, and Schmidhuber 2015). Other gating options exist, for example, the $p$-norm gates where $\boldsymbol{\alpha}_1^p + \boldsymbol{\alpha}_2^p = \mathbf{1}$ for $p > 0$ (Pham et al. 2016).

3.3 Parameter Sharing for Compactness

For feedforward nets, the number of parameters grows with the number of hidden layers. In CLN, the number is multiplied by the number of relations (see Eq. (4)). In the highway network implementation of mini-columns, an additional set of parameters is used for the gates, thus doubling the number of parameters (see Eq. (6)). For a deep CLN with many relations, the number of parameters may grow faster than the size of the training data, leading to overfitting and a high demand for memory. To address this challenge, we borrow the idea of parameter sharing from Recurrent Neural Networks (RNNs), that is, all layers have identical parameters. There has been empirical evidence supporting this strategy on non-relational data (Liao and Poggio 2016; Pham et al. 2016).

With parameter sharing, the depth of the CLN can grow without increasing the model size. This may lead to good performance on small and medium datasets. Sec. 4 provides empirical evidence.

3.4 Capturing Long-range Dependencies

An important property of our proposed deep CLN is the ability to capture long-range dependencies despite only local state exchange, as shown in Eqs. (4) and (6).
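Before the worked example, the gated update of Eq. (5) with $\boldsymbol{\alpha}_2 = \mathbf{1} - \boldsymbol{\alpha}_1$ and the parameter sharing of Sec. 3.3 can be made concrete with a minimal sketch. The names `highway_step`, `run_shared`, `candidate_fn` and `gate_fn` are hypothetical; in a full CLN the two callables would compute Eq. (4) and the pre-activation of Eq. (6) from the entity's own state and its relational contexts, which are stubbed out here with toy functions.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def highway_step(h_prev, candidate, gate_pre):
    """Eq. (5) with alpha_2 = 1 - alpha_1, applied element-wise.

    gate_pre holds the pre-activations that Eq. (6) would pass to sigma.
    """
    out = []
    for h, c, a in zip(h_prev, candidate, gate_pre):
        alpha1 = sigmoid(a)
        out.append(alpha1 * c + (1.0 - alpha1) * h)
    return out

def run_shared(h0, candidate_fn, gate_fn, depth):
    """Apply the same candidate and gate functions at every layer,
    so depth grows without adding parameters (Sec. 3.3)."""
    h = list(h0)
    for _ in range(depth):
        h = highway_step(h, candidate_fn(h), gate_fn(h))
    return h

# A zero gate pre-activation gives alpha_1 = 0.5: an even mix of both paths.
out = highway_step([0.0, 2.0], [2.0, 4.0], [0.0, 0.0])

# Toy shared functions (stand-ins for Eqs. (4) and (6)), reused for 3 layers.
deep = run_shared([0.0], lambda h: [x + 2.0 for x in h], lambda h: [0.0] * len(h), depth=3)
print(out, deep)
```

Because the sigmoid never reaches exactly 0 or 1, part of the previous state always passes through, which is the property the text relies on for training deep columns.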
To see how long-range dependencies arise, consider the example in Fig. 2, where $\mathbf{x}_1$ is modeled in $\mathbf{h}^1_3$ and $\mathbf{h}^1_3$ is modeled in $\mathbf{h}^2_4$. Therefore, although $e_1$ does not directly connect to $e_4$, information about $e_1$ is still embedded in $\mathbf{h}^2_4$ through $\mathbf{h}^1_3$. More generally, after $k$ hidden layers, a hidden activation of an entity can contain information from its expanded neighborhood of radius $k$. When the number of layers is large, the representation of an entity at the top layer contains not only its local features and those of its direct neighbors, but also information about the entire graph. With highway networks, all of these levels of representation are accumulated through the layers and used to predict output labels.

3.5 Training with mini-batch

As described in Sec. 3.1, $\mathbf{h}^t_i$ is a function of $\mathbf{h}^{t-1}_i$ and the previous layer of its neighbors. $\mathbf{h}^t_i$ can therefore contain information about the entire graph if the network is deep enough. This requires full-batch training, which is expensive and not scalable. We propose a very simple yet efficient approximation method that allows mini-batch training. For each mini-batch, the neighbor activations are temporarily frozen, i.e., treated as constants, so that gradients are not propagated through this "blanket". After the parameter update, the activations are recomputed as usual. Experiments showed that the procedure did converge and that its performance is comparable to that of the full-batch training method.

4 Experiments and Results

In this section, we report three real-world applications of CLN on networked data: software delay estimation, PubMed paper classification and film genre classification.

4.1 Baselines

For comparison, we employed a comprehensive suite of baseline methods, which include: (a) those designed for collective classification, and (b) deep neural nets for non-collective classification. For the former, we used NetKit¹, an open source toolkit for classification in networked data (Macskassy and Provost 2007).
NetKit offers a classification framework consisting of 3 components: a local classifier, a relational classifier and a collective inference method. In our experiments, the local classifier is Logistic Regression (LR) for all settings; relational classifiers are (i) weighted-vote Relational Neighbor (wvRN), (ii) logistic regression link-based classifier with normalized values (nbD), and (iii) logistic regression link-based classifier with absolute count values (nbC). Collective inference methods include Relaxation Labeling (RL) and Iterative Classification (IC). In total, there are 6 pairs of relational classifier and collective inference method: wvRN-RL, wvRN-IC, nbD-RL, nbD-IC, nbC-RL and nbC-IC. For each dataset, results of the two best settings will be reported.

We also implemented the state-of-the-art collective classifiers following (Choetkiertikul et al. 2015; Kou and Cohen 2007; Yu, Wang, and Deng 2010): stacked learning with logistic regression (SL-LR) and with random forests (SL-RF).

For deep neural nets, following the latest results in (Liao and Poggio 2016; Pham et al. 2016), we implemented a highway network with shared parameters among layers (HWN-noRel). This is essentially a special case of CLN without relational connections.

¹ http://netkit-srl.sourceforge.net/

4.2 Experiment Settings

We report three variants of the CLN: a basic version that uses a standard Feedforward Neural Network as mini-column (CLN-FNN) and two versions of CLN-HWN that use highway nets with shared parameters (CLN-HWN-full for full-batch mode and CLN-HWN-mini for mini-batch mode, as described in Sections 3.2, 3.3 and 3.5).

All neural nets use ReLU in the hidden layers. Dropout is applied before and after the recurrent layers of CLN-HWNs and at every hidden layer of CLN-FNN. Each dataset is divided into 3 separate sets: training, validation and test sets.
For hyper-parameter tuning, we search over (i) the number of hidden layers: 2, 6, 10, ..., 30, (ii) hidden dimensions, and (iii) optimizers: Adam or RMSprop. CLN-FNN has 2 hidden layers and the same hidden dimension as CLN-HWN, so that the two models have an equal number of parameters. The best training setting is chosen on the validation set, and the results on the test set are reported. The result of each setting is the mean over 5 runs. Code for our model can be found on GitHub².

4.3 Software Delay Prediction

This task is to predict potential delay for an issue, which is a unit of work in an iterative software development lifecycle (Choetkiertikul et al. 2015). The prediction point is when issue planning has completed. Due to the dependencies between issues, the prediction of delay for an issue must take into account all related issues. We use the largest dataset reported in (Choetkiertikul et al. 2015), JBoss, which contains 8,206 issues. Each issue is a vector of 15 features and connects to other issues through 12 relations (unidirectional, such as blocked-by, or bidirectional, such as same-developer). The task is to predict whether a software issue is at risk of being delayed (i.e., binary classification).

Fig. 3 visualizes CLN-HWN-full performance with different numbers of layers, ranging from 2 to 30, and hidden dimensions of 5, 10 and 20. The F1-score peaks at 10 hidden layers and a dimension size of 10.

Table 1 reports the F1-scores of all methods. The two best classifiers in NetKit are wvRN-IC and wvRN-RL. The non-collective HWN-noRel works surprisingly well, almost reaching the performance of the best collective method, SL-RF, falling 2 points short. This demonstrates that deep neural nets are highly competitive in this domain, and to the best of our knowledge, this fact has not been established before. CLN-HWN-full beats the best collective method, SL-RF, by 3.1 points.
We lost 0.7 points in mini-batch training mode, but the gain in training speed was substantial: roughly 6x.

² https://github.com/trangptm/Column_networks

Figure 3: Performance (F1-score) of CLN-HWN on the software delay prediction task with different numbers of layers (2 to 30) and hidden sizes (5, 10, 20).

| Non-neural | F1 | Neural net | F1 |
|---|---|---|---|
| wvRN-IC | 54.7 | HWN-noRel | 66.8 |
| wvRN-RL | 55.8 | CLN-FNN | 70.5 |
| SL-LR | 65.3 | CLN-HWN-full | 71.9 |
| SL-RF(*) | 68.8 | CLN-HWN-mini | 71.2 |

Table 1: Software delay prediction performance. (*) Result reported in (Choetkiertikul et al. 2015).

4.4 PubMed Publication Classification

We used the PubMed Diabetes dataset, consisting of 19,717 scientific publications and 44,338 citation links among them³. Each publication is described by a TF/IDF weighted word vector from a dictionary which consists of 500 unique words. We conducted experiments on classifying each publication into one of three classes: Diabetes Mellitus - Experimental, Diabetes Mellitus type 1, and Diabetes Mellitus type 2.

Visualization of hidden layers. We randomly picked 2 samples of each class and visualized the activations of their ReLU units through 10 layers of the CLN-HWN (Fig. 4). Interestingly, the activation strength seems to grow with higher layers, suggesting that learned features are more discriminative as they get closer to the outcomes. For each class, a number of hidden units are turned off in every layer. Figures of samples in the same class have similar patterns, while figures of samples from different classes are very different.

Classification accuracy. The best setting for CLN-HWN is with 40 hidden dimensions and 10 recurrent layers. Results are measured in MicroF1-score and MacroF1-score (see Table 2). The non-relational highway net (HWN-noRel) outperforms the two best baselines from NetKit. The two versions of CLN-HWN perform best in both F1-score measures.
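Since the results here and in Sec. 4.5 are reported as MicroF1 and MacroF1, a short sketch of how the two measures differ may help. It assumes the single-label setting (one true and one predicted label per instance) and uses the standard definitions: MacroF1 averages the per-class F1-scores, while MicroF1 pools the true-positive, false-positive and false-negative counts over all classes first. The function name and the toy labels are illustrative, not taken from the paper's evaluation code.

```python
def micro_macro_f1(y_true, y_pred, labels):
    """Return (micro_f1, macro_f1) for single-label predictions."""
    tp = {l: 0 for l in labels}
    fp = {l: 0 for l in labels}
    fn = {l: 0 for l in labels}
    for t, p in zip(y_true, y_pred):
        if t == p:
            tp[t] += 1
        else:
            fp[p] += 1   # predicted class gains a false positive
            fn[t] += 1   # true class gains a false negative

    def f1(tp_, fp_, fn_):
        denom = 2 * tp_ + fp_ + fn_
        return 2 * tp_ / denom if denom else 0.0

    macro = sum(f1(tp[l], fp[l], fn[l]) for l in labels) / len(labels)
    micro = f1(sum(tp.values()), sum(fp.values()), sum(fn.values()))
    return micro, macro

# A classifier that always predicts the majority class 0.
y_true = [0, 0, 0, 0, 1, 2]
y_pred = [0, 0, 0, 0, 0, 0]
micro, macro = micro_macro_f1(y_true, y_pred, labels=[0, 1, 2])
print(round(micro, 3), round(macro, 3))
```

In this toy case the micro score stays fairly high while the macro score collapses, because the two rare classes contribute an F1 of zero each; this is the kind of gap that imbalanced label frequencies can produce.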
4.5 Film Genre Prediction

We used the MovieLens Latest Dataset (Harper and Konstan 2016), which consists of 33,000 movies. The task is to predict genres for each movie given its plot summary. Local features were extracted from movie plot summaries downloaded from the IMDB database⁴. After removing all movies without a plot summary, 18,352 movies remain. Each movie is described by a Bag-of-Words vector of the 1,000 most frequent words. Relations between movies are (same-actor, same-director). To create a rather balanced dataset, 20 genres are collapsed into 9 labels: (1) Drama, (2) Comedy, (3) Horror + Thriller, (4) Adventure + Action, (5) Mystery + Crime + Film-Noir, (6) Romance, (7) Western + War + Documentary, (8) Musical + Animation + Children, and (9) Fantasy + Sci-Fi. The frequencies of the 9 labels are reported in Table 3.

³ Download: http://linqs.umiacs.umd.edu/projects//projects/lbc/
⁴ http://www.imdb.com

Figure 4: The dynamics of activations of 40 ReLU units through 10 hidden layers of 2x3 samples; each column is for a class (y=0, y=1, y=2).

| Method | MicroF1-score | MacroF1-score |
|---|---|---|
| wvRN-IC | 82.6 | 81.4 |
| wvRN-RL | 82.4 | 81.2 |
| SL-LR | 88.2 | 87.9 |
| HWN-noRel | 87.9 | 87.9 |
| CLN-FNN | 89.4 | 89.2 |
| CLN-HWN-full | 89.8 | 89.6 |
| CLN-HWN-mini | 89.8 | 89.6 |

Table 2: PubMed Diabetes classification results measured by MicroF1-score and MacroF1-score.

| Label | 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 |
|---|---|---|---|---|---|---|---|---|---|
| Freq (%) | 46.3 | 32.5 | 24.0 | 19.1 | 15.9 | 16.5 | 14.8 | 10.4 | 11.5 |

Table 3: The frequencies of the 9 collapsed labels on MovieLens.

On this dataset, CLN-HWNs work best with 30 hidden dimensions and 10 recurrent layers. Table 4 reports the F-scores. The two best settings with NetKit are nbC-IC and nbC-RL. CLN-FNN performs well on Micro-F1 but fails to improve the Macro-F1 score. CLN-HWN-mini outperforms CLN-HWN-full by 1.4 points on Macro-F1 (54.1 vs. 52.7 in Table 4).

Fig. 5 shows why CLN-FNN performs badly on Macro-F1 (Macro-F1 is the average of all classes' F1-scores). While CLN-FNN works well with balanced classes (on the first three classes, its performance is nearly as good as that of CLN-HWN), it fails to handle imbalanced classes (see Table 3 for label frequencies). For example, its F1-score is only 5.4% for label 7 and 13.3% for label 8. In contrast, CLN-HWN performs well on all classes.

| Method | Micro-F1 | Macro-F1 |
|---|---|---|
| nbC-IC | 46.6 | 38.0 |
| nbC-RL | 43.5 | 40.4 |
| SL-LR | 53.4 | 48.9 |
| HWN-noRel | 50.8 | 45.2 |
| CLN-FNN | 54.3 | 41.8 |
| CLN-HWN-full | 57.4 | 52.7 |
| CLN-HWN-mini | 57.5 | 54.1 |

Table 4: Movie genre classification performance reported in Micro-F1 and Macro-F1 scores.

Figure 5: Genre prediction F1-score of SL-LR, CLN-FNN and CLN-HWN on each label (0 to 8). Best viewed in color.

5 Related Work

This paper sits at the intersection of two recent, independently developed areas: Statistical Relational Learning (SRL) and Deep Learning (DL). Started in the late 1990s, SRL has advanced significantly with notable works such as Probabilistic Relational Models (Getoor and Sahami 1999), Conditional Random Fields (Lafferty, McCallum, and Pereira 2001), Relational Markov Networks (Taskar, Pieter, and Koller 2002) and Markov Logic Networks (Richardson and Domingos 2006). Collective classification is a canonical task in SRL, also known in various forms as structured prediction (Dietterich et al. 2008) and classification on networked data (Macskassy and Provost 2007).

Two key components of collective classifiers are the relational classifier and collective inference (Macskassy and Provost 2007). A relational classifier makes use of the predicted classes (or class probabilities) of neighboring entities as features. Examples are wvRN (Macskassy and Provost 2007), logistic-based classifiers (Domke 2013) and stacked graphical learning (Choetkiertikul et al. 2015; Kou and Cohen 2007).
Collective inference is the task of jointly inferring labels for entities. This is a subject of AI with an abundance of solutions, including message passing algorithms (Pearl 1988), variational mean-field (Opper and Saad 2001) and discrete optimization (Tran and Dinh Phung 2014). Among existing collective classifiers, the closest to ours is stacked graphical learning, where collective inference is bypassed through stacking (Kou and Cohen 2007; Yu, Wang, and Deng 2010). The idea is based on learning a stack of models that take the intermediate predictions of the neighborhood into account.

The other area is Deep Learning (DL), where the current wave has offered compact and efficient ways to build multilayered networks for function approximation (via feedforward networks) and program construction (via recurrent networks) (LeCun, Bengio, and Hinton 2015; Schmidhuber 2015). However, much less attention has been paid to general networked data (Monner, Reggia, and others 2013), although there has been work on pairing structured outputs with deep networks (Belanger and McCallum 2016; Do, Arti, and others 2010; Tompson et al. 2014; Yu, Wang, and Deng 2010; Zheng et al. 2015). Parameter sharing in feedforward networks was recently analyzed in (Liao and Poggio 2016; Pham et al. 2016). The sharing effectively transforms the networks into recurrent neural networks (RNNs) with only one input at the first layer. The empirical findings were that the performance is good despite the compactness of the model.

Among deep neural nets, the closest to our work is the RNCC model (Monner, Reggia, and others 2013), which also aims at collective classification using RNNs. There are substantial differences, however. RNCC shuffles the neighbors of an entity into a random sequence and uses a horizontal RNN to integrate the sequence of neighbors. Ours emphasizes vertical depth, where parameter sharing gives rise to vertical RNNs.
Ours is also conceptually simpler: all nodes are trained simultaneously, not separately as in RNCC.

6 Discussion

This paper has proposed Column Network (CLN), a deep neural network with an emphasis on fast and accurate collective classification. CLN has linear complexity in data size and number of relations in both training and inference. Empirically, CLN demonstrates competitive performance against rival collective classifiers on three real-world applications: (a) delay prediction in software projects, (b) PubMed Diabetes publication classification and (c) film genre classification.

As the name suggests, CLN is a network of narrow deep networks, where each layer is extended to incorporate as input the preceding layers of its neighbors. It somewhat resembles the columnar structure in the neocortex (Mountcastle 1997), where each narrow deep network plays the role of a mini-column. We wish to emphasize that although we use highway networks in the actual implementation due to their excellent performance (Pham et al. 2016; Srivastava, Greff, and Schmidhuber 2015; Tran, Phung, and Venkatesh 2016), any feedforward network can potentially be used in our architecture. When parameter sharing is used, the feedforward networks become recurrent networks, and CLN becomes a network of interacting RNNs. Indeed, the entire network can be collapsed into a giant feedforward network with n-to-n input/output mappings. When relations are shared among all nodes across the network, CLN enables translation invariance across the network, similar to that in CNNs. However, the CLN is not limited to a single network with shared relations. Alternatively, networks can be IID according to some distribution, which allows relations to be specific to nodes.

There is room for future work. One extension is to learn the pooling operation using attention mechanisms. We have considered only homogeneous prediction tasks here, assuming instances are of the same type.
However, the same framework can be easily extended to multiple instance types.

Acknowledgement

Dinh Phung is partially supported by the Australian Research Council under the Discovery Project DP150100031.

References

Belanger, D., and McCallum, A. 2016. Structured prediction energy networks. ICML.
Choetkiertikul, M.; Dam, H. K.; Tran, T.; and Ghose, A. 2015. Predicting delays in software projects using networked classification. In 30th IEEE/ACM International Conference on Automated Software Engineering.
Dietterich, T. G.; Domingos, P.; Getoor, L.; Muggleton, S.; and Tadepalli, P. 2008. Structured machine learning: the next ten years. Machine Learning 73(1):3–23.
Do, T.; Arti, T.; et al. 2010. Neural conditional random fields. In International Conference on Artificial Intelligence and Statistics, 177–184.
Domke, J. 2013. Structured learning via logistic regression. In Advances in Neural Information Processing Systems, 647–655.
Getoor, L., and Sahami, M. 1999. Using probabilistic relational models for collaborative filtering. In Workshop on Web Usage Analysis and User Profiling (WEBKDD'99).
Harper, F. M., and Konstan, J. A. 2016. The MovieLens datasets: History and context. ACM Transactions on Interactive Intelligent Systems (TiiS) 5(4):19.
Hinton, G. 2002. Training products of experts by minimizing contrastive divergence. Neural Computation 14:1771–1800.
Kou, Z., and Cohen, W. W. 2007. Stacked Graphical Models for Efficient Inference in Markov Random Fields. In SDM, 533–538. SIAM.
Lafferty, J.; McCallum, A.; and Pereira, F. 2001. Conditional random fields: Probabilistic models for segmenting and labeling sequence data. In Proceedings of the International Conference on Machine Learning (ICML), 282–289.
LeCun, Y.; Bengio, Y.; and Hinton, G. 2015. Deep learning. Nature 521(7553):436–444.
Liao, Q., and Poggio, T. 2016. Bridging the gaps between residual learning, recurrent neural networks and visual cortex. arXiv preprint arXiv:1604.03640.
Macskassy, S., and Provost, F. 2007. Classification in networked data: A toolkit and a univariate case study. The Journal of Machine Learning Research 8:935–983.
Mnih, V.; Kavukcuoglu, K.; Silver, D.; Rusu, A. A.; Veness, J.; Bellemare, M. G.; Graves, A.; Riedmiller, M.; Fidjeland, A. K.; Ostrovski, G.; et al. 2015. Human-level control through deep reinforcement learning. Nature 518(7540):529–533.
Monner, D. D.; Reggia, J.; et al. 2013. Recurrent neural collective classification. Neural Networks and Learning Systems, IEEE Transactions on 24(12):1932–1943.
Mountcastle, V. B. 1997. The columnar organization of the neocortex. Brain 120(4):701–722.
Neville, J., and Jensen, D. 2000. Iterative classification in relational data. In Proc. AAAI-2000 Workshop on Learning Statistical Models from Relational Data, 13–20.
Neville, J., and Jensen, D. 2007. Relational dependency networks. Journal of Machine Learning Research 8(Mar):653–692.
Opper, M., and Saad, D. 2001. Advanced mean field methods: Theory and practice. Massachusetts Institute of Technology Press (MIT Press).
Pearl, J. 1988. Probabilistic Reasoning in Intelligent Systems: Networks of Plausible Inference. San Francisco, CA: Morgan Kaufmann.
Pham, T.; Tran, T.; Phung, D.; and Venkatesh, S. 2016. Faster training of very deep networks via p-norm gates. ICPR'16.
Richardson, M., and Domingos, P. 2006. Markov logic networks. Machine Learning 62:107–136.
Schmidhuber, J. 2015. Deep learning in neural networks: An overview. Neural Networks 61:85–117.
Sen, P.; Namata, G.; Bilgic, M.; Getoor, L.; Galligher, B.; and Eliassi-Rad, T. 2008. Collective classification in network data. AI Magazine 29(3):93.
Srivastava, R. K.; Greff, K.; and Schmidhuber, J. 2015. Training very deep networks. In Advances in Neural Information Processing Systems, 2377–2385.
Sutton, C., and McCallum, A. 2007. Piecewise pseudolikelihood for efficient CRF training. In Proceedings of the International Conference on Machine Learning (ICML), 863–870.
Taskar, B.; Pieter, A.; and Koller, D. 2002. Discriminative probabilistic models for relational data. In Proceedings of the 18th Conference on Uncertainty in Artificial Intelligence (UAI), 485–49. Morgan Kaufmann.
Tompson, J. J.; Jain, A.; LeCun, Y.; and Bregler, C. 2014. Joint training of a convolutional network and a graphical model for human pose estimation. In Advances in Neural Information Processing Systems, 1799–1807.
Tran, T., and Dinh Phung, S. V. 2014. Tree-based Iterated Local Search for Markov Random Fields with Applications in Image Analysis. Journal of Heuristics DOI:10.1007/s10732-014-9270-1.
Tran, T.; Phung, D.; and Venkatesh, S. 2016. Neural choice by elimination via highway networks. Trends and Applications in Knowledge Discovery and Data Mining, Lecture Notes in Computer Science 9794:15–25.
Yu, D.; Wang, S.; and Deng, L. 2010. Sequential labeling using deep-structured conditional random fields. Selected Topics in Signal Processing, IEEE Journal of 4(6):965–973.
Zheng, S.; Jayasumana, S.; Romera-Paredes, B.; Vineet, V.; Su, Z.; Du, D.; Huang, C.; and Torr, P. H. 2015. Conditional random fields as recurrent neural networks. In Proceedings of the IEEE International Conference on Computer Vision, 1529–1537.
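
The Discussion describes CLN as a network of narrow deep networks in which each layer also takes as input the preceding layers of neighboring nodes, combined through a pooling operation. The following is a minimal sketch of one such layer step, not the implementation used in the paper. It assumes mean pooling over neighbors and a plain ReLU layer rather than a highway layer, and it omits any normalization across relations. All names (`column_layer`, `mean_pool`, `w_self`, `w_rel`) and the toy graph are hypothetical.

```python
from typing import Dict, List

Vector = List[float]
Matrix = List[List[float]]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Multiply a matrix (given as a list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def mean_pool(vectors: List[Vector], dim: int) -> Vector:
    """Average a list of vectors; return zeros when there are no neighbors."""
    if not vectors:
        return [0.0] * dim
    return [sum(col) / len(vectors) for col in zip(*vectors)]


def column_layer(
    hidden: Dict[str, Vector],
    neighbors: Dict[str, Dict[str, List[str]]],
    w_self: Matrix,
    w_rel: Dict[str, Matrix],
    bias: Vector,
) -> Dict[str, Vector]:
    """One layer step (assumed form): each node combines its own previous
    state with the mean-pooled previous states of its neighbors, using a
    separate weight matrix per relation, followed by a ReLU."""
    updated = {}
    for node, h_prev in hidden.items():
        pre = [b + s for b, s in zip(bias, matvec(w_self, h_prev))]
        for rel, w in w_rel.items():
            nbr_states = [hidden[j] for j in neighbors.get(node, {}).get(rel, [])]
            context = mean_pool(nbr_states, len(h_prev))
            pre = [p + c for p, c in zip(pre, matvec(w, context))]
        updated[node] = [max(0.0, p) for p in pre]  # ReLU
    return updated


# Toy graph with a single hypothetical relation "cites".
hidden = {"a": [1.0, 0.0], "b": [0.0, 1.0], "c": [1.0, 1.0]}
neighbors = {"a": {"cites": ["b", "c"]}, "b": {"cites": ["a"]}}
identity = [[1.0, 0.0], [0.0, 1.0]]
half = [[0.5, 0.0], [0.0, 0.5]]

# Reusing the same weights at every step mirrors the parameter-sharing case,
# in which each column behaves like a recurrent network.
h1 = column_layer(hidden, neighbors, identity, {"cites": half}, [0.0, 0.0])
h2 = column_layer(h1, neighbors, identity, {"cites": half}, [0.0, 0.0])
print(h1["a"])  # [1.25, 0.5]
```

Each step touches every node once and every (node, relation, neighbor) link once, which is in line with the linear complexity stated in the Discussion. Replacing `mean_pool` with a learned weighting over neighbors would be one way to explore the attention-based pooling mentioned as future work.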
