Efficient Convolutional Auto-Encoding via Random Convexification and Frequency-Domain Minimization

The omnipresence of deep learning architectures such as deep convolutional neural networks (CNN)s is fueled by the synergistic combination of ever-increasing labeled datasets and specialized hardware. Despite the indisputable success, the reliance on…

Authors: Meshia Cedric Oveneke, Mitchel Aliosha-Perez, Yong Zhao

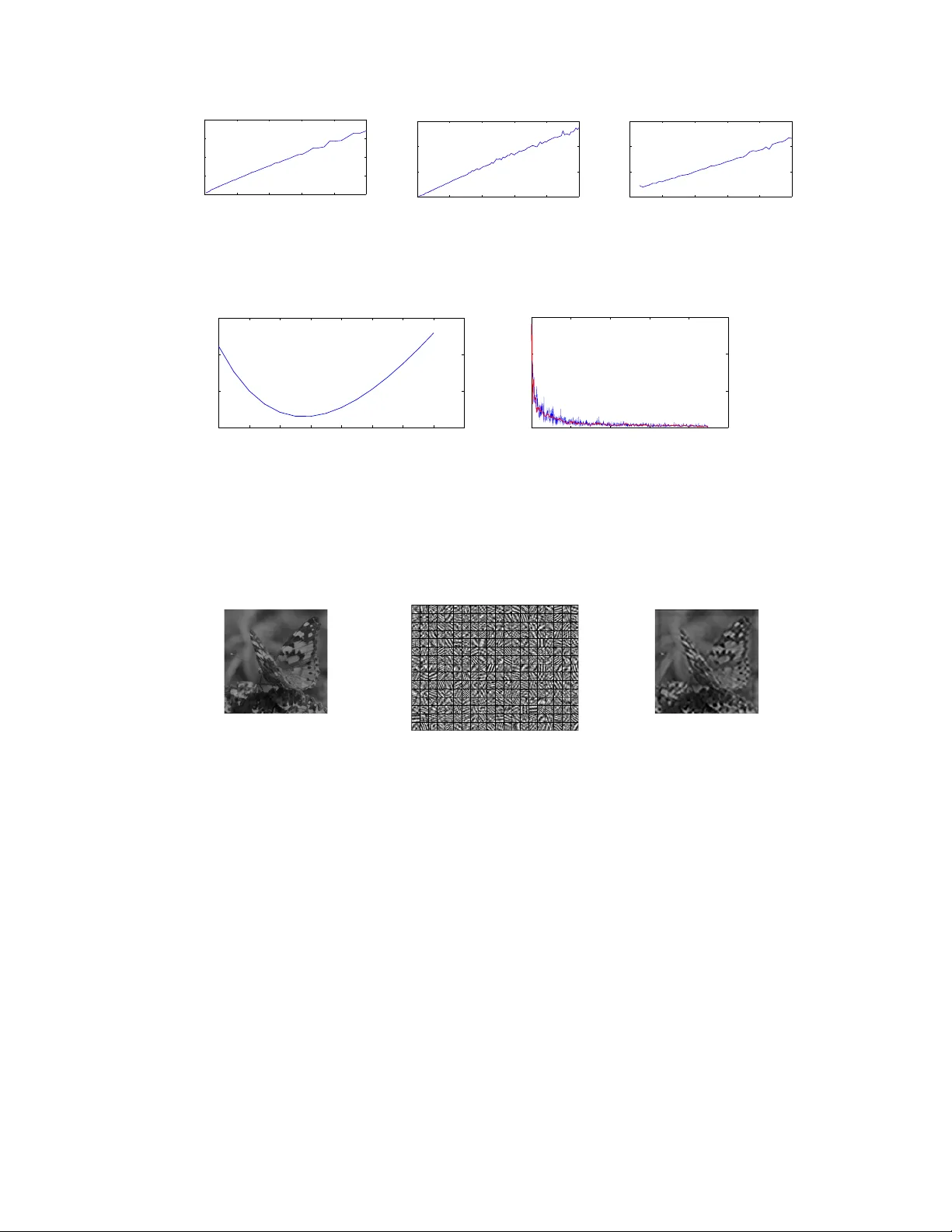

Efficient Con volutional A uto-Encoding via Random Con vexification and Fr equency-Domain Minimization Meshia C. Oveneke 1 , Mitchel Aliosha-Per ez 1 , Y ong Zhao 1 2 , Dongmei Jiang 2 , Hichem Sahli 1 3 1 VUB-NPU Joint A VSP Research Lab Vrije Univ ersiteit Brussel (VUB) Deptartment of Electronics & Informatics (ETR O) Pleinlaan 2, Brussels, Belgium {mcovenek,maperezg,yzhao,hsahli}@etrovub.be 2 VUB-NPU Joint A VSP Research Lab Northwestern Polytechnical Univ ersity (NPU) Shaanxi Ke y Lab on Speech and Image Information Processing Y ouyo Xilu 127, Xi’an 710072, China jiangdm@nwpu.edu.cn 3 Interuniv ersity Microelectronics Centre (IMEC) Kapeldreef 75, 3001 Hev erlee, Belgium Abstract The omnipresence of deep learning architectures such as deep con volutional neu- ral networks (CNN)s is fueled by the syner gistic combination of e ver -increasing labeled datasets and specialized hardware. Despite the indisputable success, the reliance on huge amounts of labeled data and specialized hardware can be a limiting factor when approaching ne w applications. T o help alle viating these limitations, we propose an efficient learning strate gy for layer -wise unsupervised training of deep CNNs on con ventional hardware in acceptable time. Our proposed strategy consists of randomly con v exifying the r econstruction contractive auto-encoding (RCAE) learning objectiv e and solving the resulting large-scale con vex minimization prob- lem in the frequency domain via coor dinate descent (CD). The main advantages of our proposed learning strategy are: (1) single tunable optimization parameter; (2) fast and guaranteed con vergence; (3) possibilities for full parallelization. Numerical experiments show that our proposed learning strategy scales (in the worst case) linearly with image size, number of filters and filter size. 1 Introduction At the heart of the recent success of deep con volutional neural networks (CNN)s in se veral application domains such as computer vision, speech recognition and natural language processing, is the synergis- tic combination of e ver -increasing labeled datasets and specialized har dwar e in the form of graphical processing units (GPU)s [ 16 ], field programmable gate arrays (FPGA)s [ 10 ] or computer clusters [ 18 ]. Despite this indisputable success, relying on huge amounts of labeled data and specialized hardware can be a limiting factor when approaching ne w applications. Furthermore, for applying deep learning techniques locally on platforms with limited resources, one cannot rely on huge amounts of labeled data and specialized hardware. W e therefore argue that there is a growing need for resource-ef ficient unsupervised learning strate gies capable of training deep CNNs on con ventional hardw are in accept- able time. The main purpose of unsupervised learning is to leverage the wealth of unlabeled data for disentangling the causal (generativ e) factors [ 2 , 7 ]. In the context of supervised pattern classification, empirical e vidence sho ws that: unsupervised pre-training (generati ve) helps in disentangling the class 30th Conference on Neural Information Processing Systems (NIPS 2016), Barcelona, Spain. manifolds in the lower layers of a deep CNNs, the upper layers are better disentangled when they are subject to supervised training (discriminati ve) and a combination of the tw o boosts the ov erall performance when the ratio of unlabeled to labeled samples is high [3, 4, 14]. In this work, we propose to train deep CNNs in a greedy layer-wise manner , using the r econstruction contractive auto-encoding (RCAE) learning objecti ve [ 1 ]. The RCAE learning objectiv e has been prov en (theoretically and empirically) to capture the local shape of the data-generating distrib ution [ 2 , 1 ], hence capturing the manifold structure of the data. Meanwhile, minimizing the RCAE learning objectiv e in v olves solving a non-conv ex minimization problem, often addressed using stochastic gradient descent (SGD) methods [13]. Despite their empirical success when applied to deep CNNs, their ke y disadvantage is the computationally expensi ve manual tuning of optimization parameters such as learning rates and con vergence criteria. Moreover , their inherently sequential nature makes them very difficult to parallelize using GPUs or distribute them using computer clusters [ 13 ]. T o ov ercome the abov e-mentioned dif ficulties, we propose to con vexify the RCAE learning objecti ve. T o this end, inspired by recent work such as [ 15 , 8 , 11 ], we propose to adopt a random con vexification strategy by fixing the (non-linear) encoding parameters and only learning the (linear) decoding parameters. By further transforming the randomly con ve xified RCAE objecti ve into the frequency domain using the discr ete F ourier transform (DFT), we obtain a learning objecti ve which we propose to minimize using coor dinate descent (CD) [ 12 , 17 ]. The main advantages of our proposed learning strategy are: (1) single tunable optimization parameter; (2) fast and guaranteed conv ergence; (3) possibilities for full parallelization. 2 Efficient Con volutional A uto-Encoding The main moti v ation of this work is ef ficient unsupervised training of deep CNNs. T o this end, we adopt a greedy layer-wise learning strategy and consider deep CNNs as a stack of single-layer CNNs with K filters and input space X ⊂ R d × d × C , i.e. the space of C -channel d × d images 1 x = x (1) , . . . , x ( C ) . W e further propose to train each single-layer CNN using the r econstruc- tion contractive auto-encoding (RCAE) learning objectiv e [ 1 ]. The RCAE learning objecti ve has been prov en to capture the high-density regions of the (layer-wise) data-generating distrib ution for any twice-differential reconstruction (encoding-decoding) function and regularization parameter approaching 0 asymptotically . T o this end, we consider the following parametric reconstruction function: r ( x ; θ ) , K X k =1 w ( k ) | {z } decoding ∗ g ( C X c =1 a ( k ) ∗ x ( c ) + b ( k ) ) | {z } encoding = K X k =1 w ( k ) ∗ h ( k ) (1) with g ( · ) denoting the entry-wise application of a twice-dif ferentiable activ ation function g : R → R . The model parameters θ consist of w × w encoding filters { a ( k ) } K k =1 , h × h encoding biases { b ( k ) } K k =1 and w × w decoding filters { w ( k ) } K k =1 . W e consider a valid con volution for the encoding function, yielding a dimension h = d − w + 1 , and a full con v olution for the decoding function. The core idea behind our proposed layer -wise learning strategy is to sample each entry of the (non- linear) encoding parameters { a ( k ) } K k =1 and { b ( k ) } K k =1 i.i.d. from pre-determined density functions p ( a ) and p ( b ) respectiv ely , and keeping them fix ed while learning the (linear) decoding parameters { w ( k ) } K k =1 in the frequency domain. T o this end, we define the complex-v alued decoding parameters associated to { w ( k ) } K k =1 as { W ( k ) } K k =1 , with W ( k ) = F { w ( k ) } ∈ C d × d being the discr ete F ourier transform (DFT). Gi ven a set of N training images D N = { x n } N n =1 sampled i.i.d. from the data- 1 Although we consider d × d images, the remaining calculations also hold for rectangular images. 2 generating distribution p ( x ) , we define the follo wing frequency-domain RCAE objectiv e: L RCAE = Z X p ( x ) " k r ( x ; θ ) − x k 2 F + λ ∂ r ( x ; θ ) ∂ x 2 F # d x ≈ 1 N N X n =1 k r ( x n ; θ ) − x n k 2 F + λ ∂ r ( x ; θ ) ∂ x x = x n 2 F ∝ 1 N N X n =1 kF { r ( x n ; θ ) − x n }k 2 F + λ F ( ∂ r ( x ; θ ) ∂ x x = x n ) 2 F = 1 N N X n =1 K X k =1 W ( k ) H ( k ) n − X n 2 F + λ K X k =1 W ( k ) D ( k ) n 2 F (2) where k • k F is the Frobenius norm, denotes the Hadamard (entry-wise) product [ 5 ], H ( k ) n = F { h ( k ) n } , X n = P C c =1 F { x ( c ) n } , D ( k ) n = G ( k ) n A ( k ) with G ( k ) n = F ∂ g ( v ) ∂ v v = v ( k ) n and v ( k ) n = P C c =1 a ( k ) ∗ x ( c ) n + b ( k ) . W e used Parsev al’ s theorem in conjunction with the con v olution theorem [ 9 ] for transforming the randomly con vexified RCAE objecti ve from spatial to frequency domain. As a direct consequence, minimizing the transformed RCAE objectiv e (2) reduces to solving d 2 independent K -dimensional regularized linear least-squares problems, which of fers full parallelization possibilities. W e propose to solve each of the independent K -dimensional problems using coor dinate descent (CD) [ 17 ], which has recently witnessed a resur gence of interest in large- scale optimization problems due to its simplicity and fast conv er gence [ 12 ]. In the context of RCAE, the CD method consists of iteratively minimizing (2) along the k -th coordinate (filter), while keeping the remaining K − 1 coordinates (filters) fixed, yielding the follo wing strategy for updating the filters in the frequency domain: ˆ W ( k ) = ¯ H ( k ) N X N − P i 6 = k ˆ W ( i ) H ( i ) N ¯ H ( k ) N + λ D ( i ) N ¯ D ( k ) N H ( k ) N ¯ H ( k ) N + λ D ( k ) N ¯ D ( k ) N (3) where the bold fraction sign ( · · ) denotes the Hadamard (entry-wise) division and H ( k ) N = P N n =1 H ( k ) n , X N = P N n =1 X n and D ( k ) N = P N n =1 D ( k ) n . The main advantages of the deriv ed filter update strategy (3) are: single tunable optimization parameter λ , fast and guaranteed con ver gence of ˆ W ( k ) ; possibilities for parallelization offered by the frequency-domain transformation of L RCAE . It is important to note that the incremental data acquisition inv olv ed in H ( k ) N , X N and D ( k ) N can also be computed in parallel. Once the decoding filters are learned in the frequency domain, they are transformed back to the spatial domain using the in verse DFT : ˆ w ( k ) = F − 1 { ˆ W ( k ) } (4) At inference stage, we use the transpose of the learned decoding filters ˆ w ( k ) for computing k feature maps as follows: ˆ h ( k ) , g ( P C c =1 ˆ w ( k ) T ∗ x ( c ) ) . 3 Numerical Experiments As a sanity check for the o verall computational efficienc y of our proposed learning strate gy , we’ ve implemented the CD-based frequency-domain RCAE minimization in MA TLAB R2014a. W e’ ve used the built-in fast F ourier transform (FFT) for transforming the RCAE objecti ve into the frequenc y domain. The hyperbolic tangent was used as activ ation function. The encoding filters and biases were randomly fixed by sampling their entries independently from a zero-mean normal distrib ution with standard deviations 0 . 1 and 0 . 01 respectiv ely . For learning the decoding filters, we’ v e implemented a fairly naiv e version of frequency-domain CD (3) by initializing each complex-valued filter as ˆ W ( k ) = 0 + i 0 and only performing a single CD cycle trough the filter coordinates k ∈ [1 , K ] . For all the experiments, we used of the Caltech-256 Object Category dataset [ 6 ] and whitened the images. 3 0 0.5 1 1.5 2 2.5 x 10 5 0 20 40 60 80 Image size (pixels) CPU−time (ms) 0 20 40 60 80 100 0 50 100 150 #Filters CPU−time (ms) 0 10 20 30 40 50 5 10 15 20 Filter size (pixels) CPU−time (ms) Figure 1: Time-comple xity of CD-based RCAE minimization on Caltech-256 dataset. Left : CPU-time (in milliseconds) in function of image size (in pixels). Middle : CPU-time (in milliseconds) in function of number of filters. Right : CPU-time (in milliseconds) in function of filter size (in pixels). 14 15 16 17 18 19 20 21 22 5 6 7 8 x 10 −3 regularization parameter reconstruction error 0 200 400 600 800 1000 0 1 2 3 x 10 −3 #Training images convergence rate Figure 2: Reconstruction error and conv er gence rate of CD-based RCAE minimization on Caltech- 256 dataset. Left : Reconstruction error in function of re gularization parameter λ . Right : Con vergence rate of the learned decoding filters in function of number of training images N . The con ver gence rate is computed using the a verage squared dif ference between consecuti ve filter updates. The original curve (blue) is noisy due to the average inv olv ed in the computation of the conv ergence rate. A smoothed curve (red) is therefore plotted on top, in order to highlight the general trend. Figure 3: V isualization of learned decoding filters and reconstruction result on Caltech-256 dataset (after training with 400 images). Left : Previously unseen 244 × 244 testing image. Middle : 300 learned decoding filters. Right : Reconstruction result on previously unseen 244 × 244 testing image (left) using 300 learned decoding filters (middle). As first experiment, we’ ve measured the computational time-complexity in terms of CPU-time on an Intel ® Core ™ i7-2600 CPU @ 3.40 GHz machine. Figure (1) shows that our proposed method has a (worst-case) linear time-complexity w .r .t. image size, number of filters and filter size. Knowing that our naive implementation does not in volv e any particular form of parallelism, linear time is the best possible complexity we can achiev e in situations where the algorithm has to read its entire input sequentially . In a second experiment, we study the influence of the regularization parameter λ on the reconstruction error and analyze the conv ergence rate of the learned decoding filters in function of the number training samples N . For robust estimation, the reconstruction error was av eraged ov er a batch of 500 images that were not used during training. Figure (2), left, illustrates that the reconstruction error reaches a (global) minimum when the regularization parameter approaches λ = 16 . 5 . Figure (2), right, illustrates that after roughly 400 training images, the learned decoding filters { ˆ w ( k ) } K k =1 already settle. This clearly highlights the advantages of random con vexification and frequency-domain minimization using CD. Figure (3) depicts the decoding filters and reconstruction result on a pre viously unseen testing image, obtained after CD-based minimization of the RCAE objectiv e (2) using only 400 training images. 4 4 Conclusions W e’ ve proposed an efficient learning strategy for layer-wise unsupervised training of deep CNNs. The main contributions of our proposed learning strategy are random con vexification and frequenc y- domain transformation of the r econstruction contractive auto-encoding (RCAE) objectiv e, which yields a relativ ely easy-to-solve large-scale re gularized linear least-squares problem. W e’ ve proposed to solve this problem using coor dinate descent (CD), with as main advantages: (1) single tunable optimization parameter; (2) fast and guaranteed con ver gence; (3) possibilities for full paralleliza- tion. Numerical experiments sho w that, with a fairly naiv e implementation, our proposed learning strategy scales (in the w orst case) linearly with image size, number of filters and filter size. W e also observe that, using relati vely few training images, the learned filters already settle and yield decent reconstruction results on unseen testing images. W e believ e that the inherently parallel nature of our proposed learning strategy offers very interesting possibilities for further increasing the computational efficienc y using more sophisticated implementation strategies. Acknowledgments This work is supported by the Agency for Inno v ation by Science and T echnology in Flanders (IWT) – PhD grant nr . 131814, the VUB Interdisciplinary Research Program through the EMO-App project and the National Natural Science Foundation of China (grant 61273265). References [1] Guillaume Alain and Y oshua Bengio. What regularized auto-encoders learn from the data- generating distribution. The J ournal of Machine Learning Resear ch , 15(1):3563–3593, 2014. [2] Y oshua Bengio, Aaron Courville, and Pierre V incent. Representation learning: A revie w and new perspectiv es. P attern Analysis and Machine Intelligence, IEEE T r ansactions on , 35(8):1798–1828, 2013. [3] P . P . Brahma, D. W u, and Y . She. Why deep learning works: A manifold disentanglement perspectiv e. IEEE T r ansactions on Neural Networks and Learning Systems , 27(10):1997–2008, Oct 2016. [4] Dumitru Erhan, Y oshua Bengio, Aaron Courville, Pierre-Antoine Manzagol, Pascal V incent, and Samy Bengio. Why does unsupervised pre-training help deep learning? J ournal of Machine Learning Resear ch , 11(Feb):625–660, 2010. [5] Gene H Golub and Charles F V an Loan. Matrix computations , volume 3. JHU Press, 2012. [6] Gregory Grif fin, Alex Holub, and Pietro Perona. Caltech-256 object category dataset. 2007. [7] Irina Higgins, Loic Matthey , Xavier Glorot, Arka Pal, Benigno Uria, Charles Blundell, Shakir Mohamed, and Alexander Lerchner . Early visual concept learning with unsupervised deep learning. arXiv preprint , 2016. [8] Guang-Bin Huang. What are extreme learning machines? filling the gap between frank rosenblatts dream and john von neumanns puzzle. Cognitive Computation , 7(3):263–278, 2015. [9] David W Kammler . A first course in F ourier analysis . Cambridge Univ ersity Press, 2007. [10] Griffin Lace y , Graham W T aylor , and Shawki Areibi. Deep learning on fpgas: Past, present, and future. arXiv preprint , 2016. [11] Xia Liu, Shaobo Lin, Jian Fang, and Zongben Xu. Is extreme learning machine feasible? a theoretical assessment (part i). Neural Networks and Learning Systems, IEEE T ransactions on , 26(1):7–20, 2015. [12] Y u Nesterov . Efficiency of coordinate descent methods on huge-scale optimization problems. SIAM Journal on Optimization , 22(2):341–362, 2012. [13] Jiquan Ngiam, Adam Coates, Ahbik Lahiri, Bobby Prochnow , Quoc V Le, and Andrew Y Ng. On optimization methods for deep learning. In Pr oceedings of the 28th International Confer ence on Machine Learning (ICML-11) , pages 265–272, 2011. [14] T om Le Paine, Poo ya Khorrami, W ei Han, and Thomas S Huang. An analysis of unsupervised pre-training in light of recent advances. arXiv pr eprint arXiv:1412.6597 , 2014. 5 [15] Ali Rahimi and Benjamin Recht. W eighted sums of random kitchen sinks: Replacing minimiza- tion with randomization in learning. In Advances in neural information pr ocessing systems , pages 1313–1320, 2009. [16] Rajat Raina, Anand Madhav an, and Andrew Y Ng. Lar ge-scale deep unsupervised learning using graphics processors. In Pr oceedings of the 26th annual international confer ence on machine learning , pages 873–880. A CM, 2009. [17] Stephen J Wright. Coordinate descent algorithms. Mathematical Pro gramming , 151(1):3–34, 2015. [18] Ren W u, Shengen Y an, Y i Shan, Qingqing Dang, and Gang Sun. Deep image: Scaling up image recognition. 6

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment