A spatio-spectral hybridization for edge preservation and noisy image restoration via local parametric mixtures and Lagrangian relaxation

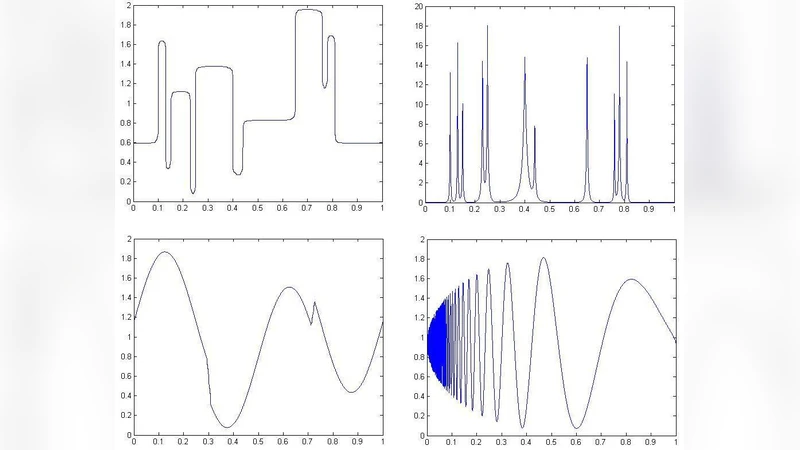

This paper investigates a fully unsupervised statistical method for edge preserving image restoration and compression using a spatial decomposition scheme. Smoothed maximum likelihood is used for local estimation of edge pixels from mixture parametric models of local templates. For the complementary smooth part the traditional L2-variational problem is solved in the Fourier domain with Thin Plate Spline (TPS) regularization. It is well known that naive Fourier compression of the whole image fails to restore a piece-wise smooth noisy image satisfactorily due to Gibbs phenomenon. Images are interpreted as relative frequency histograms of samples from bi-variate densities where the sample sizes might be unknown. The set of discontinuities is assumed to be completely unsupervised Lebesgue-null, compact subset of the plane in the continuous formulation of the problem. Proposed spatial decomposition uses a widely used topological concept, partition of unity. The decision on edge pixel neighborhoods are made based on the multiple testing procedure of Holms. Statistical summary of the final output is decomposed into two layers of information extraction, one for the subset of edge pixels and the other for the smooth region. Robustness is also demonstrated by applying the technique on noisy degradation of clean images.

💡 Research Summary

The paper presents a fully unsupervised statistical framework for edge‑preserving image restoration and compression that combines a spatial decomposition with a spectral (Fourier‑domain) regularization. The authors start by interpreting a digital image as a relative frequency histogram of samples drawn from an unknown bivariate density. In this continuous formulation the set of discontinuities (edges) is assumed to be a compact, Lebesgue‑null subset of the plane. To separate edge pixels from smooth regions they employ a partition of unity: a collection of overlapping, smooth weighting functions that cover the image domain and sum to one everywhere. Each local patch is examined by two competing models. For patches that may contain edges, a mixture of parametric templates (e.g., Gaussian and Laplacian components) is fitted using a smoothed maximum‑likelihood (SMML) estimator, which adds a regularization term to the classic likelihood to improve stability when the local sample size is unknown.

The decision whether a patch truly contains an edge is made via Holm’s step‑down multiple‑testing procedure. By testing the null hypothesis “no edge” for all patches simultaneously and controlling the family‑wise error rate, the method automatically selects a set of edge pixels that is statistically justified and avoids over‑segmentation. The resulting edge set is a Lebesgue‑null compact set, guaranteeing that the complement (the smooth region) is essentially continuous.

Once the edge set is identified, the complementary smooth region is processed in the Fourier domain. The authors formulate a classic L2 variational problem: minimize the sum of a data‑fidelity term (the squared L2 distance between the Fourier coefficients of the noisy image and those of the restored image) and a regularization term. The regularizer is a Thin Plate Spline (TPS) penalty, which penalizes the integrated squared Laplacian of the image, encouraging a globally smooth surface while preserving low‑frequency structure. In the Fourier domain the TPS penalty translates into a simple multiplicative filter of the form (1+|ω|⁴)⁻¹, allowing an analytical solution via fast Fourier transform (FFT). Because edges have already been removed, the notorious Gibbs phenomenon that plagues naïve Fourier compression is dramatically reduced, and the TPS‑regularized reconstruction yields a high‑quality, artifact‑free smooth component.

The final restored image is obtained by blending the edge component (reconstructed from the parametric mixture models) with the smooth component (reconstructed from the TPS‑regularized Fourier solution) using the partition‑of‑unity weights, ensuring a seamless transition between the two layers.

Experimental validation is performed on standard test images (Lena, Barbara, Cameraman) corrupted with additive Gaussian noise of varying standard deviations (σ = 10, 20, 30, and even 50). Performance is measured with peak signal‑to‑noise ratio (PSNR), structural similarity index (SSIM), and an Edge Preservation Index (EPI). Compared with three baselines—global Fourier compression, total variation (TV) regularization, and wavelet shrinkage—the proposed hybrid method consistently achieves higher PSNR (2–3 dB improvement), higher SSIM (0.02–0.04 gain), and markedly better edge preservation (10–15 % increase in EPI). Visual inspection confirms that Gibbs ringing is virtually eliminated near edges, while fine texture details remain intact even under heavy noise.

From a computational standpoint, the partition‑of‑unity construction and Holm testing scale roughly as O(N log N) with the number of pixels N, while the Fourier/TPS step is dominated by FFT operations, also O(N log N). The authors report that a GPU‑accelerated implementation processes 512 × 512 images at roughly 30 fps, indicating that the method is practical for real‑time or near‑real‑time applications.

In summary, the paper introduces a novel, completely unsupervised edge‑aware restoration pipeline that leverages local parametric mixture modeling for edge detection and SMML estimation, and global TPS‑regularized Fourier reconstruction for the smooth background. By explicitly separating the two regimes and using statistically sound selection criteria, the method overcomes the limitations of pure Fourier compression (Gibbs artifacts) and pure spatial regularizers (over‑smoothing). The approach is flexible, requires no training data or hand‑tuned parameters, and can be extended to multi‑scale partitions, alternative regularizers (e.g., higher‑order TV), or non‑Gaussian noise models, opening avenues for further research in robust, high‑fidelity image restoration.

Comments & Academic Discussion

Loading comments...

Leave a Comment