Feature Importance Measure for Non-linear Learning Algorithms

Complex problems may require sophisticated, non-linear learning methods such as kernel machines or deep neural networks to achieve state of the art prediction accuracies. However, high prediction accuracies are not the only objective to consider when…

Authors: Marina M.-C. Vidovic, Nico Görnitz, Klaus-Robert Müller, Marius Kloft

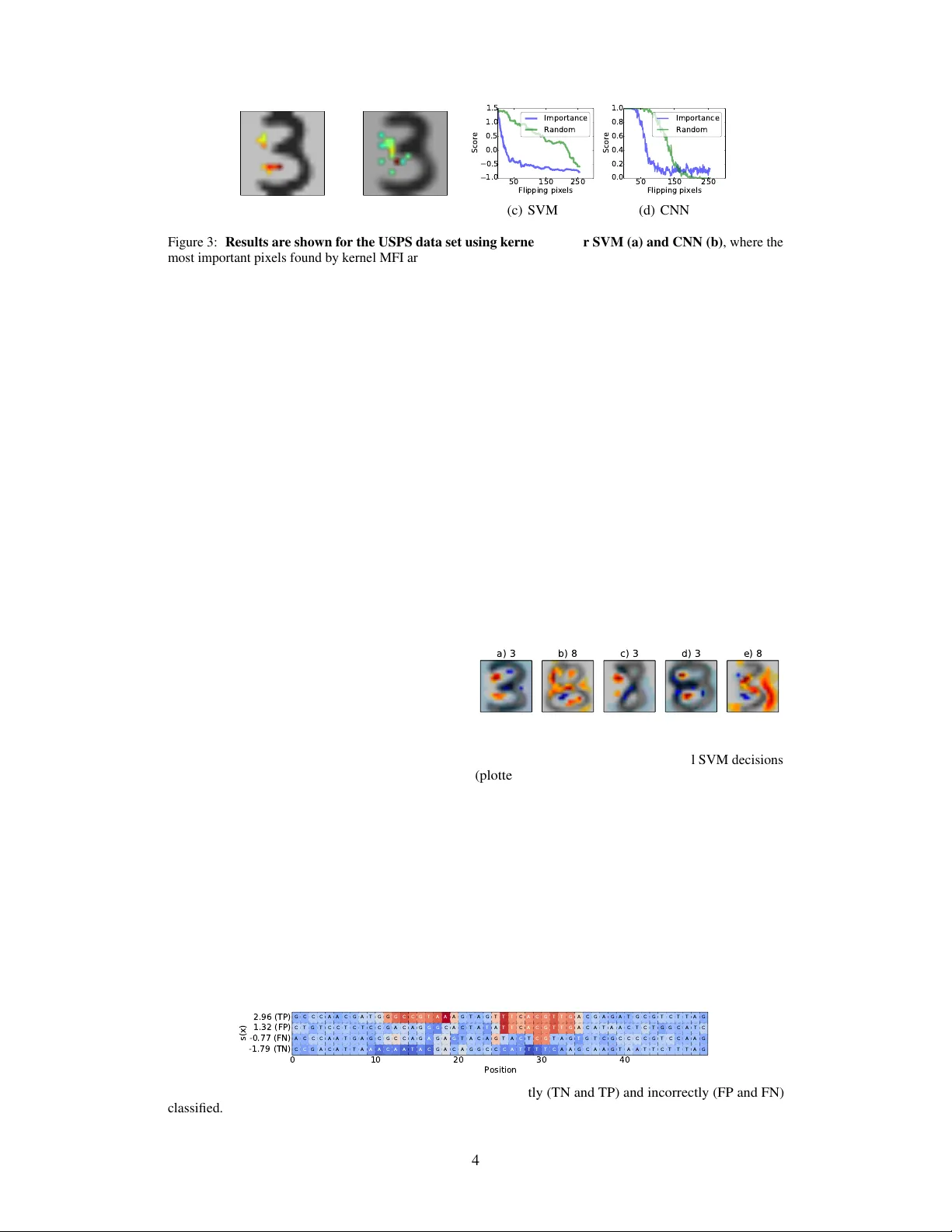

Marina M.-C. Vidovic, Machine Learning Group, Technical University of Berlin, Berlin, Germany, marina.vidovic@tu-berlin.de
Nico Görnitz, Machine Learning Group, Technical University of Berlin, Berlin, Germany, nico.goernitz@tu-berlin.de
Klaus-Robert Müller, Machine Learning Group, Technical University of Berlin, Berlin, Germany, klaus-robert.mueller@tu-berlin.de
Marius Kloft, Department of Computer Science, Humboldt University of Berlin, Berlin, Germany, kloft@hu-berlin.de

1 Introduction

Complex problems may require sophisticated, non-linear learning methods such as kernel machines or deep neural networks to achieve state-of-the-art prediction accuracies. However, high prediction accuracy is not the only objective to consider when solving problems using machine learning. Instead, particular scientific applications require some explanation of the learned prediction function. Unfortunately, most methods do not come with a straightforward, out-of-the-box interpretation. Even linear prediction functions $s(x) = \sum_j \beta_j x_j$ are not straightforward to explain if the features $x_j$ exhibit a complex correlation structure.

In computational biology, positional oligomer importance matrices (POIMs) [7] address the need for interpretation of sophisticated learning machines. POIMs specifically explain the output of kernel-based learning methods acting on DNA sequences using a weighted degree (WD) string kernel [1, 6, 5, 3]. A WD kernel breaks two discrete DNA sequences $x$ and $x'$ of length $L$ apart into all subsequences up to some length and then counts the number of matching subsequences, the so-called positional oligomers (POs). For the following considerations, let $\Sigma = \{A, C, G, T\}$ be the DNA alphabet and $X \in \Sigma^L$ a random variable over the DNA alphabet of length $L$. POIMs assign each PO $y \in \Sigma^k$ of length $k$, starting at position $j$ in $X$, an importance score

$$\mathrm{POIM}_{y,j} \propto \mathbb{E}[\, s(X) \mid X_{j:j+k} = y \,].$$
POIMs allow visualization of each PO's significance to the prediction function $s$. A seminal property of POIMs is that they take the overlaps of the POs at different positions and lengths into account. As visually inspecting POIMs can be tedious, [9, 8] proposed motifPOIMs, a probabilistic non-convex method to automatically extract the biological factors underlying the SVM's prediction, such as promoter elements or transcription factor binding sites, often called motifs. Unfortunately, POIMs are restricted to specific DNA applications.

As a generalization of POIMs, the feature importance ranking measure (FIRM) [10] assigns each feature $f$ an importance score $Q_f := \sqrt{\mathrm{Var}_Y\big[\mathbb{E}_X[\, s(X) \mid f(X) = Y \,]\big]}$. FIRM measures the variation of the prediction function when varying a feature. If the expected value of the prediction function does not change when a feature $f$ is varied, the feature is considered unimportant. Unfortunately, FIRM is in general intractable [10].

In this paper, we propose the Measure of Feature Importance (MFI). MFI is general and can be applied to any arbitrary learning machine (including kernel machines and deep learning). MFI is intrinsically non-linear and can detect features that by themselves are inconspicuous and only impact the prediction function through their interaction with other features. Lastly, MFI can be used for both model-based feature importance (as POIMs and FIRM) and instance-based feature importance (i.e., measuring the importance of a feature for a particular data point).

30th Conference on Neural Information Processing Systems (NIPS 2016), Barcelona, Spain.

2 Methodology

In this section, we describe our proposed method, the Measure of Feature Importance (MFI). MFI extends the concepts of POIM and FIRM (which are contained as special cases) to non-linear feature interactions and instance-based feature importance attribution, and it is particularly simple to apply.
To distinguish between model-based and instance-based MFI, we introduce a function called the "explanation mode", which maps the samples into their respective feature space. For example, for instance-based explanation a DNA sequence would be mapped to itself, whereas the same sequence would be mapped to a POIM in the case of model-based explanation.

Definition 1 (MFI and kernel MFI). Let $X$ be a random variable on a space $\mathcal{X}$. Furthermore, let $s: \mathcal{X} \to \mathcal{Y}$ be a prediction function (output by an arbitrary learning machine), and let $f: \mathcal{X} \to \mathbb{R}$ be a real-valued feature. Let $\phi: \mathcal{X} \to \mathcal{F}$ be a function ("explanation mode"), where $\mathcal{F}$ is an arbitrary space. Lastly, let $k: \mathcal{Y} \times \mathcal{Y} \to \mathbb{R}$ and $l: \mathbb{R} \times \mathbb{R} \to \mathbb{R}$ be kernel functions. Then we define:

MFI: $$S_{\phi,f}(t) := \mathbb{E}[\, s(X)\,\phi(X) \mid f(X) = t \,] \qquad (1)$$

kernel MFI: $$S^{+}_{\phi,f}(t) = \mathrm{Cov}[\, k(s(X), s(\cdot)),\; l(\phi(X), \phi(\cdot)) \mid f(X) = t \,]. \qquad (2)$$

Table 1: Specific instantiation for MFI in terms of instance-based (ib) and model-based (mb) application, with $B \in A^{k} \times \{1, \ldots, L-k+1\}$. Illustrations are given in Figure 1.

| Panel | Objects | Mode | Method | $\phi$ | $f$ | $t$ |
|---|---|---|---|---|---|---|
| a | Image $z$ | ib | MFI | $\phi(X) = 1$ | $f_{i,j}(X) = X_{i,j}$ | $t = z_{i,j}$ |
| b | Sequence $z$ | ib | MFI | $\phi(X) = 1$ | $f_{i,k}(X) = X_{i:i+k}$ | $t = z_{i:i+k}$ |
| c | Images | mb | kernel MFI | $\phi(X) = X$ | $f(X) = t$ | const |
| d | Sequences | mb | kernel MFI | $\phi(X) = B$ | $f(X) = t$ | const |

Figure 1: MFI examples. A learning machine is passed to MFI, which produces instance-based explanations (panels a and b) and model-based explanations (panels c and d); the sequence panel plots the 2-mers AA to TT against position. We consider two possible flavors of feature importance: (left) instance-based importance measures (e.g., why is this specific example of '3' classified as '3' using my trained RBF-SVM classifier?); (right) model-based importance measures (e.g., which regions are generally important for the classifier decision?).
In the following, we explain the "explanation mode" of the above definition for model-based and instance-based explanation, using both sequence and image data as examples.

2.1 Model-based MFI:

Here, the task is to globally assess which features a given (trained) learning machine regards as most significant, independent of the examples given. In the case of sequence data, where we have sequences of length $L$ over the alphabet $\Sigma = \{A, C, G, T\}$, an importance map for all $k$-mers over all positions is obtained by using the explanation mode $\phi: \Sigma^L \to \Sigma^{k \times (L-k+1)}$, where each sequence is mapped to a sparse PWM in which entries only indicate presence or absence of positional $k$-mers. In the case of two-dimensional image data, $X \in \mathbb{R}^{d_1 \times d_2}$, where we already are in a decent visual explanation mode, $\phi(X) = X$ keeps the surroundings by mapping the data to itself. In both cases, we set $f(X) = t$ with $t = \mathrm{const}$, which is why we can neglect it. The various case studies are summarized in Table 1, with corresponding examples shown in Figure 1 on the right.

2.2 Instance-based MFI:

Given a specific example, the task at hand is to assess why this example has been assigned this specific classifier score (or class prediction). In the case of sequence data, we compute the feature importance of any positional $k$-mer in a given sequence $g \in \Sigma^L$ by $f(X) = X_{i:i+k}$, with $t = g_{i:i+k}$. In the case of images, where $g \in \mathbb{R}^{d_1 \times d_2}$ is the image of interest and $g_{i,j}$ denotes one pixel, $f(X) = X_{i,j}$ maps the random samples $X \in \mathbb{R}^{d_1 \times d_2}$ to one pixel, with $t = g_{i,j}$. In both cases, we set $\phi(X) = 1$, which is why we can neglect it. For examples and specific instructions, see Table 1 and Figure 1 on the left.
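The instance-based case for sequences can be illustrated with a small Monte Carlo sketch. Everything named here is an assumption made for illustration and is not taken from the paper: `toy_score` is a hypothetical stand-in for a trained classifier, `instance_mfi` is a hypothetical helper, and $X$ is assumed to be uniform over $\Sigma^L$ with independent positions. Under that assumption, conditioning on $X_{i:i+k} = g_{i:i+k}$ amounts to drawing a random sequence and overwriting the $k$-mer at position $i$ with the one from $g$.

```python
import random

ALPHABET = "ACGT"

def toy_score(seq):
    """Hypothetical stand-in for a trained classifier score s(x)."""
    # Rewards the 3-mer "GAT" at position 2 (0-based); purely illustrative.
    return 1.0 if seq[2:5] == "GAT" else 0.0

def instance_mfi(score, g, k, n_samples=2000, seed=0):
    """Estimate E[s(X) | X[i:i+k] == g[i:i+k]] for every start position i.

    Assumes X is uniform over ALPHABET**len(g) with independent positions,
    so conditioning reduces to overwriting the k-mer at position i.
    """
    rng = random.Random(seed)
    L = len(g)
    importances = []
    for i in range(L - k + 1):
        total = 0.0
        for _ in range(n_samples):
            x = [rng.choice(ALPHABET) for _ in range(L)]
            x[i:i + k] = g[i:i + k]      # enforce f(X) = t
            total += score("".join(x))
        importances.append(total / n_samples)
    return importances

g = "ACGATTCA"                           # sequence of interest (contains GAT)
imp = instance_mfi(toy_score, g, k=1)
```

In this toy setting, the three positions covered by the motif receive a clearly higher estimate than the remaining positions, because fixing one motif nucleotide raises the chance that a random sequence contains the full motif.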
2.3 Relation to Hilbert-Schmidt Independence Criterion

In 2005, the Hilbert-Schmidt independence criterion (HSIC) [2] was proposed as a kernel-based methodology to measure the independence of two distinct variables $X$ and $Y$:

$$\mathrm{HSIC}(X, Y) = \|C_{XY}\|^2 = \mathbb{E}[k(X, X')\, l(Y, Y')] - 2\,\mathbb{E}\big[\mathbb{E}_X[k(X, X')]\, \mathbb{E}_Y[l(Y, Y')]\big] + \mathbb{E}[k(X, X')]\, \mathbb{E}[l(Y, Y')],$$

where $k$ and $l$ are reproducing kernels and $C_{XY}$ is the cross-covariance operator. We have the following interesting relation of MFI to HSIC.

Lemma 1 (Relation of kernel MFI to HSIC). Given the kernel MFI $S^{+}_{\phi,f}$ of Definition 1, then

$$S^{+}_{\phi,f} = \mathrm{Cov}[\, k(s(X), s(\cdot)),\; l(\phi(X), \phi(\cdot)) \mid f(X) = t \,]$$

and the corresponding Hilbert-Schmidt independence criterion becomes

$$\mathrm{HSIC}(S_{\phi}, \mathcal{Y}, \mathbb{R}) = \|S^{+}_{\phi}\|^2 = \mathrm{tr}(KL).$$

The relation to HSIC provides us with a practical tool to assess non-linear feature importances as defined by kernel MFI in Definition 1. To make this approach practical, we resort to sampling as an inference method. To this end, let $Z \subset \mathcal{X}$ be a subset of $\mathcal{X}$ containing $n = |Z|$ samples. Then Eq. (1) can be approximated by

$$\hat{S}_{\phi,f}(t) := \frac{1}{|Z_{\{f(z)=t\}}|} \sum_{z \in Z} s(z)\, \phi(z)\, \mathbb{1}_{\{f(z)=t\}} - \mu_s \mu_\phi$$

with

$$\mu_\phi = \frac{1}{|Z_{\{f(z)=t\}}|} \sum_{z \in Z_{\{f(z)=t\}}} \phi(z) \quad \text{and} \quad \mu_s = \frac{1}{|Z_{\{f(z)=t\}}|} \sum_{z \in Z_{\{f(z)=t\}}} s(z).$$

Hence, as the number of samples $|Z| \to \infty$, $\hat{S}_{\phi,f} \to S_{\phi,f}$. A corresponding sampling scheme is also available for kernel MFI.

3 Empirical Evaluation

Figure 2: Illustration of the runtime measured in seconds for various sample sizes (plotted in blue) and of the Frobenius distance between two consecutive results (green curve). The horizontal axis shows the number of samples (10 to 4641); the vertical axes show the runtime in seconds on a logarithmic scale and the Frobenius distance (0.000 to 0.025).
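The sampling approximation of Eq. (1), whose behaviour for growing sample sizes Figure 2 reports, can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the function name `mfi_estimate`, the boolean `mask` encoding of the event $f(z) = t$, and the toy linear score with Gaussian samples are all hypothetical choices made for this example.

```python
import numpy as np

def mfi_estimate(scores, phi, mask=None):
    """Centered sample estimate of S_{phi,f}(t).

    scores: shape (n,), values s(z) for the samples z in Z.
    phi:    shape (n, d), rows phi(z) (flattened explanation mode).
    mask:   boolean shape (n,), True where f(z) == t; None means f is
            constant, so every sample is kept (model-based case).
    """
    scores = np.asarray(scores, dtype=float)
    phi = np.asarray(phi, dtype=float)
    if mask is None:
        mask = np.ones(scores.shape[0], dtype=bool)
    s_sel, phi_sel = scores[mask], phi[mask]
    if s_sel.size == 0:
        raise ValueError("no sample satisfies f(z) == t")
    mu_s = s_sel.mean()                  # mean score over selected samples
    mu_phi = phi_sel.mean(axis=0)        # mean explanation-mode vector
    return (s_sel[:, None] * phi_sel).mean(axis=0) - mu_s * mu_phi

# Toy model-based example: phi(X) = X, f constant, linear score s(x) = w.x.
rng = np.random.default_rng(0)
Z = rng.normal(size=(5000, 4))           # hypothetical samples
w = np.array([2.0, 0.0, -1.0, 0.0])      # hypothetical model weights
S_hat = mfi_estimate(Z @ w, Z)
```

For this toy linear score with independent unit-variance inputs, the estimate approaches the weight vector `w` as the number of samples grows, which gives a simple sanity check of the estimator.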
In this section, we evaluate the proposed method empirically regarding its ability to explain the relevance of features for model- and instance-based explanation models. Although our method can be applied to any learning machine, we focus in the experiments on support vector machines (SVMs) using a Gaussian kernel function and convolutional neural networks (CNNs).

3.1 Experimental setup

For validation, we follow the Most Relevant First (MoRF) strategy [4] and successively calculate the classifier performance while blurring pixels a) in order of descending relevance (i.e., as computed by our proposed method) and b) randomly. The idea is that blurring pixels with high relevance will influence the classifier decision and thus drop its performance faster than blurring randomly chosen pixels would. In the following, we evaluate our proposed method on the USPS data set, using an SVM with an RBF kernel and a CNN with the following architecture: a 2D convolution layer with 10 tanh filters of size 8x4, a max-pool layer of size 2, a dense layer with 100 ReLUs, and a dense layer with 2 softmax units. For all experiments, we used a sample size of 1000, which was considered a suitable trade-off between runtime and accuracy.

Figure 3: Results are shown for the USPS data set using kernel MFI for SVM (a) and CNN (b), where the most important pixels found by kernel MFI are embedded in the mean picture of digit three. Panels (c) (SVM) and (d) (CNN) show the classifier performance loss when successively blurring the pixels according to their relevance found by kernel MFI, compared to random pixel blurring; both plot the score against the number of flipped pixels (50 to 250) for the "Importance" and "Random" orderings.
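The MoRF validation loop described in Section 3.1 can be sketched as follows. This is an illustrative sketch only: `morf_curve` is a hypothetical helper, the perturbation replaces a pixel by a constant `fill_value` as a simple stand-in for the blurring used in the experiments, and the linear `toy_score` stands in for a trained SVM or CNN.

```python
import numpy as np

def morf_curve(score, image, relevance, steps, fill_value, order=None):
    """Classifier score after perturbing 0, 1, ..., `steps` pixels.

    By default pixels are perturbed in order of descending relevance
    (most relevant first); pass `order` to use another ordering.
    """
    flat = np.array(image, dtype=float).ravel()        # working copy
    if order is None:
        order = np.argsort(relevance.ravel())[::-1]    # most relevant first
    curve = [score(flat.reshape(image.shape))]
    for idx in order[:steps]:
        flat[idx] = fill_value                         # stand-in for blurring
        curve.append(score(flat.reshape(image.shape)))
    return np.array(curve)

rng = np.random.default_rng(0)
w = rng.random((16, 16))                               # hypothetical weights
toy_score = lambda img: float((w * img).sum())         # hypothetical classifier
img = np.ones((16, 16))

morf = morf_curve(toy_score, img, relevance=w, steps=50, fill_value=0.0)
rand = morf_curve(toy_score, img, relevance=w, steps=50, fill_value=0.0,
                  order=rng.permutation(img.size))
```

In this toy setup the relevance map equals the true weights, so the `morf` curve can never lie above the `rand` curve; with a real classifier and an estimated relevance map, the gap between the two curves is what the evaluation measures.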
3.2 Results

To find a suitable trade-off between runtime and accuracy, we evaluate runtime and convergence behavior (in terms of the Frobenius distance between two consecutive results) for increasing numbers of samples. From the results shown in Figure 2, we observe that the Frobenius distance (green curve) converges to zero already for small sample sizes (215 samples). Unfortunately, the runtime grows very fast (almost exponentially), showing the limits of our method. Hence, a good trade-off between runtime and accuracy would be any sample size between 500 and 2000 in this experiment. For the following experiments we used a sample size of 1000.

Model-Based Feature Importance

The results are shown in Figure 3. We observe that for both SVM and CNN, the pixel bridge that changes the digit three into the digit eight is of high importance. Figure 3 (c) and (d) show the classifier performance for an increasing number of blurred pixels under the MoRF strategy explained above. Compared to random pixel blurring, we can clearly observe that the performance drops significantly faster when blurring the most important pixels (as found by our proposed kernel MFI method).

Figure 4: Instance-based explanation of the SVM decision for five USPS test data images (panels a) 3, b) 8, c) 3, d) 3, e) 8). The highlighted pixels are informative for the individual SVM decisions (plotted at the image top); only the first two images were correctly classified.

Instance-Based Feature Importances

For the pixel-wise explanation experiment, an SVM with an RBF kernel was trained on the USPS training data set. From Figure 4 we observe that the pixels building the vertical connection from a three to an eight have strong discriminative evidence. If these positions are left blank, the image is classified as three, which in the case of the last three images leads to misclassifications.
For the nucleotide-wise explanation experiment, an SVM with a WD kernel was trained on a synthetic training data set. We inserted two motifs in the positive class (GGCCGTAAA at position 11 and TTTCACGTTGA at position 24). From Figure 5 we observe that the nucleotides building the two patterns that we inserted in the positive sequences have strong discriminative evidence. If the discriminative patterns are too noisy, the sequences are assumed to stem from the negative class, which in the case of the false negative (FN) example leads to a misclassification. If only one of the two patterns was inserted, the classifier gives high evidence to the single pattern and assigns the wrong label.

Figure 5: Instance-based feature importances experiment. The highlighted nucleotides are informative for the SVM decision for four test sequences that have been correctly (TN and TP) and incorrectly (FP and FN) classified. The four sequences are plotted against position (0 to 40), with scores $s(x)$ of -1.79 (TN), -0.77 (FN), 1.32 (FP), and 2.96 (TP).

4 Conclusion & Outlook

With this work, we contributed to opening the black box of learning machines. Building upon POIMs and FIRM, we proposed MFI, a general measure of feature importance that is applicable to arbitrary learning machines. MFI can be used both for a general explanation of the prediction model and for a data-instance-specific explanation. As a non-linear measure, MFI can detect features that exhibit their importance only through interactions with other features.
Experiments on artificially generated splice-site sequence data as well as real-world image data demonstrate the properties and benefits of our approach. While in the present work we have focused on images and sequences, the framework allows us to explain arbitrary data sources. In future research, we would like to study further applications (e.g., involving trees, graphs, etc.), including wind turbine anomaly detection, and we want to investigate advanced sampling techniques from probabilistic machine learning that may lead to faster convergence.

Acknowledgments

MMCV and NG were supported by BMBF ALICE II grant 01IB15001B. We also acknowledge the support by the German Research Foundation through the grants DFG KL2698/2-1, MU 987/6-1, and RA 1894/1-1. KRM acknowledges partial funding by the National Research Foundation of Korea funded by the Ministry of Education, Science, and Technology in the BK21 program. MK and KRM were supported by the German Ministry for Education and Research through the awards 031L0023A and 031B0187B and the Berlin Big Data Center BBDC (01IS14013A).

References

[1] A. Ben-Hur, C. S. Ong, S. Sonnenburg, B. Schoelkopf, and G. Raetsch. Support vector machines and kernels for computational biology. PLoS Computational Biology, 4(10), 2008.
[2] A. Gretton, O. Bousquet, A. Smola, and B. Schölkopf. Measuring Statistical Dependence with Hilbert-Schmidt Norms. In International Conference on Algorithmic Learning Theory, 2005.
[3] G. Rätsch, S. Sonnenburg, J. Srinivasan, H. Witte, K. R. Müller, R. J. Sommer, and B. Schoelkopf. Improving the Caenorhabditis elegans genome annotation using machine learning. PLoS Computational Biology, 3(2):0313–0322, 2007.
[4] W. Samek, A. Binder, G. Montavon, S. Bach, and K.-R. Müller. Evaluating the visualization of what a deep neural network has learned. arXiv preprint arXiv:1509.06321, 2015.
[5] B. Schölkopf and A. J. Smola. Learning with Kernels: Support Vector Machines, Regularization, Optimization, and Beyond. MIT Press, 2002.
[6] S. Sonnenburg, G. Schweikert, P. Philips, J. Behr, and G. Rätsch. Accurate splice site prediction using support vector machines. BMC Bioinformatics, 8(Suppl 10):S7, 2007.
[7] S. Sonnenburg, A. Zien, P. Philips, and G. Rätsch. POIMs: Positional oligomer importance matrices - Understanding support vector machine-based signal detectors. Bioinformatics, 24(13):6–14, 2008.
[8] M. M.-C. Vidovic, N. Görnitz, K.-R. Müller, G. Rätsch, and M. Kloft. Opening the Black Box: Revealing Interpretable Sequence Motifs in Kernel-Based Learning Algorithms. In ECML PKDD, volume 6913, pages 175–190, 2015.
[9] M. M.-C. Vidovic, N. Görnitz, K.-R. Müller, G. Rätsch, and M. Kloft. SVM2Motif - Reconstructing Overlapping DNA Sequence Motifs by Mimicking an SVM Predictor. PLoS ONE, pages 1–23, 2015.
[10] A. Zien, N. Kraemer, S. Sonnenburg, and G. Raetsch. The Feature Importance Ranking Measure. In ECML PKDD, number 1, pages 1–15, 6 2009.
