Spikes as regularizers

We present a confidence-based single-layer feed-forward learning algorithm SPIRAL (Spike Regularized Adaptive Learning) relying on an encoding of activation spikes. We adaptively update a weight vector relying on confidence estimates and activation o…

Authors: Anders Søgaard

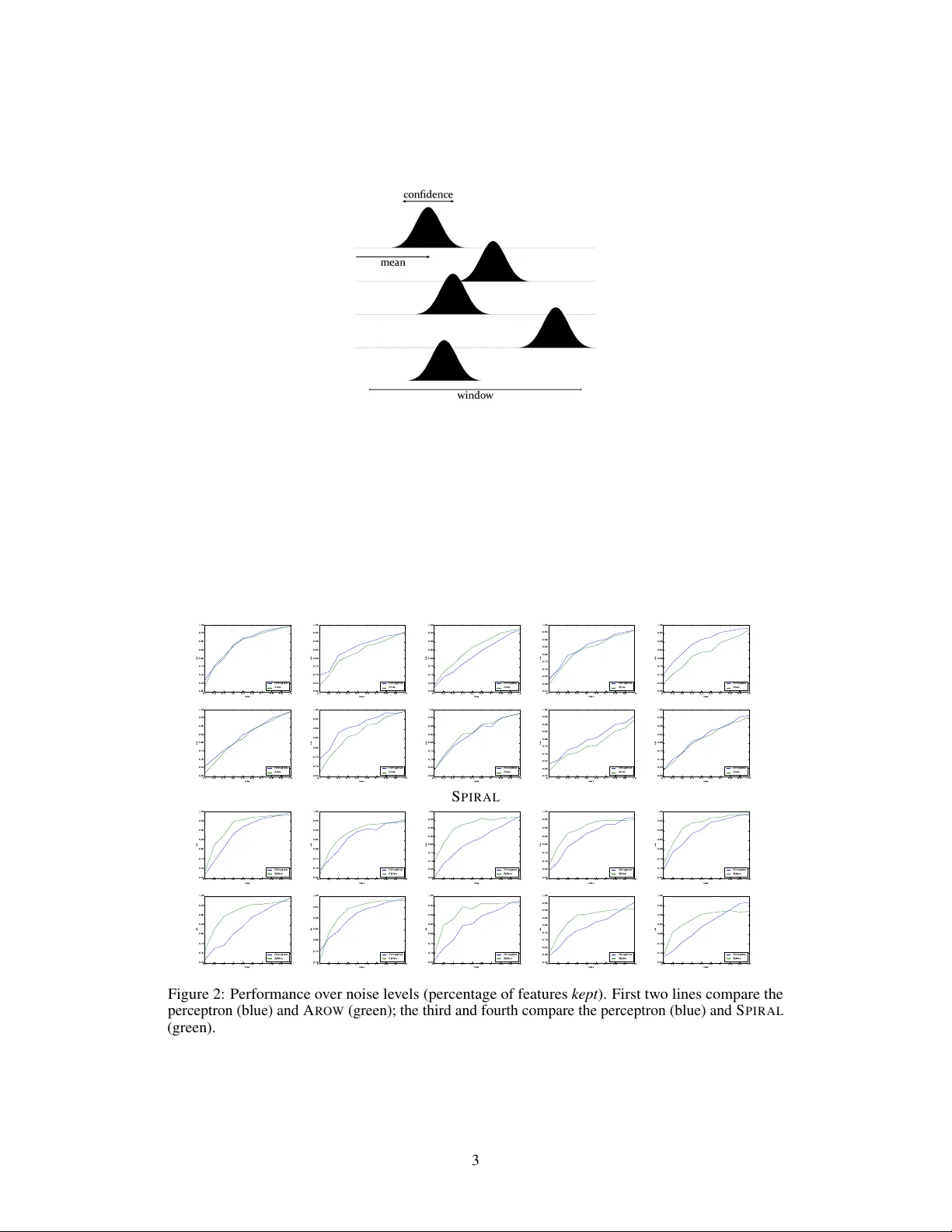

Anders Søgaard*
Department of Computer Science, University of Copenhagen, Copenhagen, DK-2200
soegaard@di.ku.dk

Abstract

We present a confidence-based single-layer feed-forward learning algorithm SPIRAL (Spike Regularized Adaptive Learning) relying on an encoding of activation spikes. We adaptively update a weight vector relying on confidence estimates and activation offsets relative to previous activity. We regularize updates proportionally to item-level confidence and weight-specific support, loosely inspired by the observation from neurophysiology that high spike rates are sometimes accompanied by low temporal precision. Our experiments suggest that the new learning algorithm SPIRAL is more robust and less prone to overfitting than both the averaged perceptron and AROW.

1 Confidence-weighted Learning of Linear Classifiers

The perceptron [Rosenblatt, 1958] is a conceptually simple and widely used discriminative and linear classification algorithm. It was originally motivated by observations of how signals are passed between neurons in the brain. We will return to the perceptron as a model of neural computation, but from a more technical point of view, the main weakness of the perceptron as a linear classifier is that it is prone to overfitting. One particular type of overfitting that is likely to happen in perceptron learning is feature swamping [Sutton et al., 2006], i.e., that very frequent features may prevent co-variant features from being updated, leading to catastrophic performance if the frequent features are absent or less frequent at test time. In other words, in the perceptron, as well as in passive-aggressive learning [Crammer et al., 2006], parameters are only updated when features occur, and rare features therefore often receive inaccurate values.
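To make the feature swamping argument concrete, the following is a minimal sketch of a mistake-driven perceptron on a toy dataset. The function name `perceptron_epoch` and the toy data are illustrative assumptions, not taken from the paper. Feature 0 fires on every item, while feature 1 is rare and co-varies with it.

```python
import numpy as np

def perceptron_epoch(w, X, y):
    """One pass of a plain perceptron; labels y are in {-1, +1} (illustrative helper)."""
    w = w.copy()
    for x_t, y_t in zip(X, y):
        if y_t * (w @ x_t) <= 0:   # update only on a mistake
            w += y_t * x_t         # only features that occur in x_t change
    return w

# Hypothetical toy data: feature 0 is frequent, feature 1 is rare and co-variant.
X = np.array([[1.0, 0.0],
              [1.0, 0.0],
              [1.0, 1.0],
              [1.0, 0.0]])
y = np.array([1.0, 1.0, 1.0, 1.0])

w = perceptron_epoch(np.zeros(2), X, y)
print(w)                          # [1. 0.]: the rare feature was never updated
print(w @ np.array([0.0, 1.0]))   # 0.0: no signal once the frequent feature is absent
```

After the first update the frequent feature alone classifies every remaining item correctly, so the rare feature keeps its initial weight of zero and carries no information at test time if the frequent feature is deleted.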
There are several ways to approach such overfitting, e.g., capping the model's supremum norm, but here we focus on a specific line of research: confidence-weighted learning of linear classifiers. Confidence-weighted learning explicitly estimates confidence during induction, often by maintaining Gaussian distributions over parameter vectors. In other words, each model parameter is interpreted as a mean, and augmented with a covariance estimate. Confidence-Weighted Learning (CWL) [Dredze et al., 2008] was the first learning algorithm to do this, but Crammer et al. [2009] later introduced Adaptive Regularization of Weight Vectors (AROW), which is a simpler and more effective alternative: AROW passes over the data, item by item, computing a margin, i.e., a dot product of a weight vector $\mu$ and the item, and updating $\mu$ and a covariance matrix $\Sigma$ in a standard additive fashion. As in CWL, the weights, which are interpreted as means, and the covariance matrix form a Gaussian distribution over the weight vectors. Specifically, the confidence is $x^\top \Sigma x$. We add a smoothing constant $r$ ($= 0.1$) and compute the learning rate $\alpha$ adaptively:

$$\alpha = \frac{\max(0,\ 1 - y\, x^\top \mu)}{x^\top \Sigma x + r} \tag{1}$$

We then update $\mu$ proportionally to $\alpha$, and update the covariance matrix as follows:

$$\Sigma \leftarrow \Sigma - \frac{\Sigma x x^\top \Sigma}{x^\top \Sigma x + r} \tag{2}$$

CWL and AROW have been shown to be more robust than the (averaged) perceptron in several studies [Crammer et al., 2012, Søgaard and Johannsen, 2012], but below we show that replacing binary activations with samples from spikes can lead to better regularized and more robust models.

* This research is funded by the ERC Starting Grant LOWLANDS No. 313695, as well as by the Danish Research Council.

30th Conference on Neural Information Processing Systems (NIPS 2016), Barcelona, Spain.

2 Spikes as Regularizers

2.1 Neurophysiological motivation

Neurons do not fire synchronously at a constant rate.
Neural signals are spike-shaped with an onset, an increase in signal, followed by a spike and a decrease in signal, and with an inhibition of the neuron before returning to its equilibrium. Below we simplify the picture a bit by assuming that spikes are bell-shaped (Gaussians). The learning algorithm (SPIRAL), which we will propose below, is motivated by the observation that spike rate (the speed at which a neuron fires) increases the more a neuron fires [Kawai and Sterling, 2002, Keller and Takahashi, 2015]. Furthermore, Keller and Takahashi [2015] show that increased activity may lead to spiking at higher rates with lower temporal precision. This means that the more active neurons are less successful in passing on signals, leading the neuron to return to a more stable firing rate. In other words, the brain performs implicit regularization by exhibiting low temporal precision at high spike rates. This prevents highly active neurons from swamping other co-variant, but less active neurons. We hypothesise that implementing a similar mechanism in our learning algorithms will prevent feature swamping in a similar fashion. Finally, Blanco et al. [2015] show that periods of increased spike rate lead to a smaller standard deviation in the synaptic weights. This loosely inspired us to implement the temporal imprecision at high spike rates by decreasing the weight's standard deviation.

2.2 The algorithm

In a single-layer feed-forward model, such as the perceptron, sampling from Gaussian spikes only affects the input, and we can therefore implement our regularizer as noise injection [Bishop, 1995]. The variance is the relative confidence of the model on the input item (same for all parameters), and the means are the parameter values. We multiply the input by the inverse of the sample, reflecting the intuition that highly active neurons are less precise and more likely to drop out, before we clip the sample from 0 to 1.
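As a rough illustration, the sampling step described above can be combined with the AROW update of Equations (1) and (2) as in the sketch below. This is one possible reading, not the author's implementation. It assumes that the relative confidence is the item confidence $x^\top \Sigma x$ divided by the largest confidence seen so far, that this ratio is used as the variance of the Gaussian, and that the sample is clipped to [0, 1] before the input is rescaled. The function name `spiral_step` and its signature are hypothetical.

```python
import numpy as np

def spiral_step(mu, sigma, x, y, v_max, rng, r=0.1):
    """One SPIRAL-style update on a single item (x, y), with y in {-1, +1}.

    Hypothetical helper: the handling of v_max and of the variance is an
    assumption about how the relative confidence is meant to be computed.
    """
    # Item-level confidence x^T Sigma x and its running maximum.
    v_t = float(x @ sigma @ x)
    v_max = max(v_max, v_t)
    # Relative confidence in [0, 1], shared by all parameters (assumed variance).
    rel = float(np.clip(v_t / v_max, 0.0, 1.0)) if v_max > 0 else 0.0
    # One sample per weight from a Gaussian centred on that weight, clipped to [0, 1].
    nu = np.clip(rng.normal(loc=mu, scale=np.sqrt(rel)), 0.0, 1.0)
    # Noise injection: large samples push the corresponding input towards zero.
    x = x * (1.0 - nu)
    # Standard AROW update on the perturbed input (Equations 1 and 2).
    margin = float(mu @ x)
    if margin * y < 1.0:
        sx = sigma @ x
        denom = float(x @ sx) + r
        alpha = max(0.0, 1.0 - y * margin) / denom
        mu = mu + alpha * y * sx
        sigma = sigma - np.outer(sx, sx) / denom  # sigma stays symmetric
    return mu, sigma, v_max

# Usage on two made-up items.
rng = np.random.default_rng(0)
mu, sigma, v = np.zeros(3), np.eye(3), 0.0
for x, y in [(np.array([1.0, 0.0, 1.0]), 1.0), (np.array([0.0, 1.0, 1.0]), -1.0)]:
    mu, sigma, v = spiral_step(mu, sigma, x, y, v, rng)
```

Because the noise only rescales the input, the rest of the step is an unchanged AROW update, which matches the description of the regularizer as noise injection.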
We give the pseudocode in Algorithm 1, following the conventions in Crammer et al. [2009].

Algorithm 1 SPIRAL. Except for lines 8–10, this is identical to AROW.

1: $r = 0.1$, $\mu_0 = 0$, $\Sigma_0 = I$, $\{x_t \mid x_t \in \mathbb{R}^d\}$, $v = 0$
2: for $t < T$ do
3:   $x_t = x_t \cdot$
4:   if $v_t > v$ then
5:     $v = v_t$
6:   end if
7:   $v_t = x_t^\top \Sigma_{t-1} x_t$ (sampling activations from Gaussian spikes)
8:   $v_t = 0 < v_t < 1$ (clipping values outside the [0, 1] window)
9:   $\nu_t \sim \mathcal{N}(\mu_{t-1},\ v_t / v)$
10:  $x_t = x_t \cdot (1 - \nu_t)$
11:  $m_t = \mu_{t-1} \cdot x_t$
12:  if $m_t y_t < 1$ then
13:    $\alpha_t = \frac{\max(0,\ 1 - y_t x_t^\top \mu_{t-1})}{x_t^\top \Sigma_{t-1} x_t + r}$
14:    $\mu_t = \mu_{t-1} + \alpha_t \Sigma_{t-1} y_t x_t$
15:    $\Sigma_t = \Sigma_{t-1} - \frac{\Sigma_{t-1} x_t x_t^\top \Sigma_{t-1}}{x_t^\top \Sigma_{t-1} x_t + r}$
16:  end if
17: end for
18: return $\mu_T$, $\Sigma_T$

3 Experiments

3.1 Main experiments

We extract 10 binary classification problems from MNIST, training on odd data points, testing on even ones. Since our algorithm is parameter-free, we did not do explicit parameter tuning, but during the implementation of SPIRAL, we only experimented with the first of these ten problems (left, upper corner). To test the robustness of SPIRAL relative to the perceptron and AROW, we randomly corrupt the input at test time by removing features. Our set-up is inspired by Globerson and Roweis [2006]. In the plots in Figure 2, the x-axis presents the number of features kept (not deleted).

Figure 1: Sampling activations from Gaussian spikes.

[Figure 2 plots: ten panels under the title AROW (legend: Perceptron, Arow) and ten panels under the title SPIRAL (legend: Perceptron, Spikes); x-axis: noise, from 0.1 to 1.0; y-axis: acc.]

Figure 2: Performance over noise levels (percentage of features kept). First two lines compare the perceptron (blue) and AROW (green); the third and fourth compare the perceptron (blue) and SPIRAL (green).

We observe two tendencies in the results: (i) SPIRAL outperforms the perceptron consistently with up to 80% of the features, and sometimes by a very large margin; except that in 2/10 cases, the perceptron is better with only 10% of the features. (ii) In contrast, AROW is less stable, and only improves significantly over the perceptron under mid-range noise levels in a few cases. The perceptron is almost always superior on the full set of features, since this is a relatively simple learning problem, where overfitting is unlikely, unless noise is injected at test time.

3.2 Practical Rademacher complexity

We compute SPIRAL's practical Rademacher complexity as the ability of SPIRAL to fit random re-labelings of data.
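A minimal sketch of such a measurement is given below, using a plain perceptron as a stand-in learner. The helper names, the number of epochs, the choice to measure error on the same randomly labelled data the model was fit to, and the 50% error assumed for the random baseline are all assumptions made for illustration. The paper does not spell out these details.

```python
import numpy as np

def train_perceptron(X, y, epochs=5):
    """Plain perceptron used as a stand-in learner (illustrative helper)."""
    w = np.zeros(X.shape[1])
    for _ in range(epochs):
        for x_t, y_t in zip(X, y):
            if y_t * (w @ x_t) <= 0:
                w += y_t * x_t
    return w

def error_reduction_on_random_labels(X, train_fn, n_trials=10, seed=0):
    """Average relative error reduction over random guessing on random labels."""
    rng = np.random.default_rng(seed)
    baseline = 0.5  # assumed error of random guessing on balanced random labels
    reductions = []
    for _ in range(n_trials):
        y = rng.choice([-1.0, 1.0], size=X.shape[0])   # random re-labeling
        w = train_fn(X, y)
        err = float(np.mean(np.sign(X @ w) != y))      # error on the fitted data
        reductions.append((baseline - err) / baseline)
    return float(np.mean(reductions))

# Usage on made-up data; a larger value means more fitting of pure noise.
X_demo = np.random.default_rng(1).normal(size=(40, 5))
score = error_reduction_on_random_labels(X_demo, train_perceptron)
```

Under this reading, a learner that cannot fit noise scores close to zero, and a learner that memorises the random labels scores close to one.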
We randomly label the above dataset ten times and compute the average error reduction over a random baseline. The perceptron achieves a 5% error reduction over a random baseline, on average, overfitting quite a bit to the random labelling of the data. In contrast, SPIRAL only reduces 0.6% of the errors of a random baseline on average, suggesting that it is almost resilient to overfitting on this dataset.

4 Conclusion

We have presented a simple, confidence-based single-layer feed-forward learning algorithm SPIRAL that uses sampling from Gaussian spikes as a regularizer, loosely inspired by recent findings in neurophysiology. SPIRAL outperforms the perceptron and AROW by a large margin, when noise is injected at test time, and has lower Rademacher complexity than both of these algorithms.

References

Christopher Bishop. Training with noise is equivalent to Tikhonov regularization. Neural Computation, 7(1):108–116, 1995.

Wilfredo Blanco, Catia Pereira, Vinicius Cota, Annie Souza, Cesar Renno-Costa, Sharlene Santos, Gabriella Dias, Ana Guerreiro, Adriano Tort, Adriao Neto, and Sidarta Ribeiro. Synaptic homeostasis and restructuring across the sleep-wake cycle. PLoS Computational Biology, 11:1–29, 2015.

Koby Crammer, Ofer Dekel, Joseph Keshet, Shai Shalev-Shwartz, and Yoram Singer. Online passive-aggressive algorithms. Journal of Machine Learning Research, 7:551–585, 2006.

Koby Crammer, A. Kulesza, and Mark Dredze. Adaptive regularization of weight vectors. In NIPS, 2009.

Koby Crammer, Mark Dredze, and Fernando Pereira. Confidence-weighted linear classification for text categorization. Journal of Machine Learning Research, 13:1891–1926, 2012.

Mark Dredze, Koby Crammer, and Fernando Pereira. Confidence-weighted linear classification. In ICML, 2008.

Amir Globerson and Sam Roweis. Nightmare at test time: robust learning by feature deletion. In ICML, 2006.

Fusao Kawai and Peter Sterling. cGMP modulates spike responses of retinal ganglion cells via a cGMP-gated current. Visual Neuroscience, 19:373–380, 2002.

Clifford Keller and Terry Takahashi. Spike timing precision changes with spike rate adaptation in the owl's auditory space map. Journal of Neurophysiology, 114:2204–2219, 2015.

Frank Rosenblatt. The perceptron: a probabilistic model for information storage and organization in the brain. Psychological Review, 65(6):386–408, 1958.

Anders Søgaard and Anders Johannsen. Robust learning in random subspaces: equipping NLP against OOV effects. In COLING, 2012.

Charles Sutton, Michael Sindelar, and Andrew McCallum. Reducing weight undertraining in structured discriminative learning. In NAACL, 2006.
