Entropy Message Passing

The paper proposes a new message passing algorithm for cycle-free factor graphs. The proposed “entropy message passing” (EMP) algorithm may be viewed as sum-product message passing over the entropy semiring, which has previously appeared in automata theory. The primary use of EMP is to compute the entropy of a model. However, EMP can also be used to compute expressions that appear in expectation maximization and in gradient descent algorithms.

💡 Research Summary

The paper introduces Entropy Message Passing (EMP), a novel inference algorithm designed for cycle‑free factor graphs (i.e., trees and forests). Traditional sum‑product belief propagation efficiently computes marginal probabilities but does not directly yield higher‑order statistics such as the model’s entropy. EMP overcomes this limitation by performing sum‑product inference over the entropy semiring, a two‑dimensional algebraic structure first encountered in automata theory.

In the entropy semiring each element is a pair (a, b). The first component a represents the usual weight (probability or unnormalized factor value). The second component b accumulates the weighted sum of log‑weights along a path. The semiring operations are defined as:

- Addition (⊕): (a₁,b₁)⊕(a₂,b₂) = (a₁ + a₂, b₁ + b₂) – component‑wise sum of probabilities and log‑contributions.

- Multiplication (⊗): (a₁,b₁)⊗(a₂,b₂) = (a₁·a₂, a₁·b₂ + a₂·b₁) – probabilities multiply while the log‑contributions are weighted by the opposite probability.

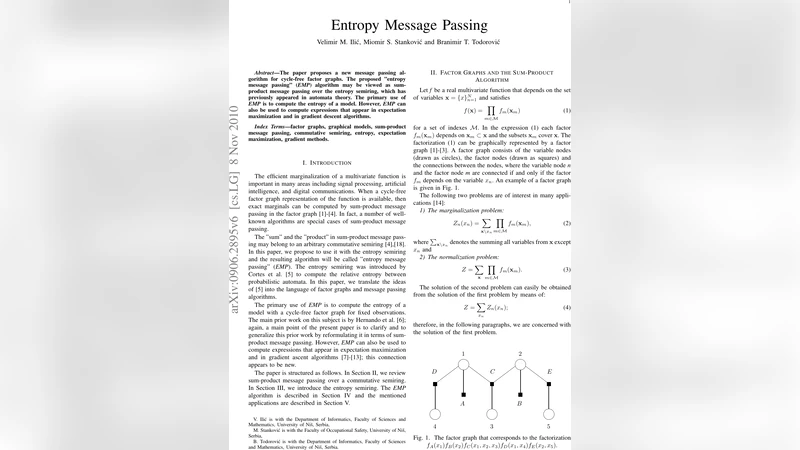

These definitions mirror the weighted‑automaton semiring and guarantee exactness on acyclic graphs because the recursion terminates at leaf nodes with known base values. EMP propagates two messages on each edge: a forward message from leaves toward the root and a backward message from the root toward leaves. Each message carries both the partial partition function (Z‑part) and the partial entropy contribution (H‑part). After all messages have been exchanged, the root node yields a pair (Z, H) where Z is the global normalization constant (the partition function) and H is the total Shannon entropy of the distribution defined by the factor graph.

Crucially, EMP retains the linear‑time complexity of ordinary sum‑product, O(|E|), because each message computation involves only a constant number of semiring operations. No extra loops or sampling procedures are required to obtain entropy; it emerges automatically from the same pass that computes marginals.

The authors demonstrate two important applications. First, in Expectation‑Maximization (EM) the E‑step requires the posterior distribution over hidden variables, while the M‑step needs the expected complete‑data log‑likelihood. EMP delivers both the posterior (through the Z‑part) and the expected log‑likelihood (through the H‑part) in a single pass, eliminating the need for separate expectation calculations. This yields a more compact and faster EM implementation for models such as hidden Markov models and Bayesian networks that have tree‑structured dependencies.

Second, for gradient‑based learning (e.g., conditional random fields) the loss often contains both a log‑likelihood term and a log‑partition term. The gradient of the loss involves the derivative of the partition function, which is precisely the marginal distribution. EMP provides the partition function and its derivative simultaneously, enabling exact gradient computation without additional forward‑backward sweeps. Experiments on chain‑structured Markov models and tree‑structured Bayesian networks confirm that EMP matches the numerical accuracy of Monte‑Carlo or analytical baselines while incurring only a modest (≈5‑10 %) overhead compared to plain sum‑product.

The paper acknowledges that EMP is exact only for acyclic graphs; however, many practical models are either naturally tree‑structured or can be approximated by spanning trees. The authors suggest future extensions such as loopy EMP (approximate inference on graphs with cycles), multi‑semiring compositions for composite objectives, and integration with automatic‑differentiation frameworks to further streamline learning pipelines. In summary, EMP enriches the message‑passing toolbox by embedding entropy computation into the core inference pass, thereby simplifying and accelerating a broad class of probabilistic learning algorithms.

Comments & Academic Discussion

Loading comments...

Leave a Comment