Bounds on the Sum Capacity of Synchronous Binary CDMA Channels

In this paper, we obtain a family of lower bounds for the sum capacity of Code Division Multiple Access (CDMA) channels assuming binary inputs and binary signature codes in the presence of additive noise with an arbitrary distribution. The envelope o…

Authors: K. Alishahi, F. Marvasti, V. Aref, P. Pad

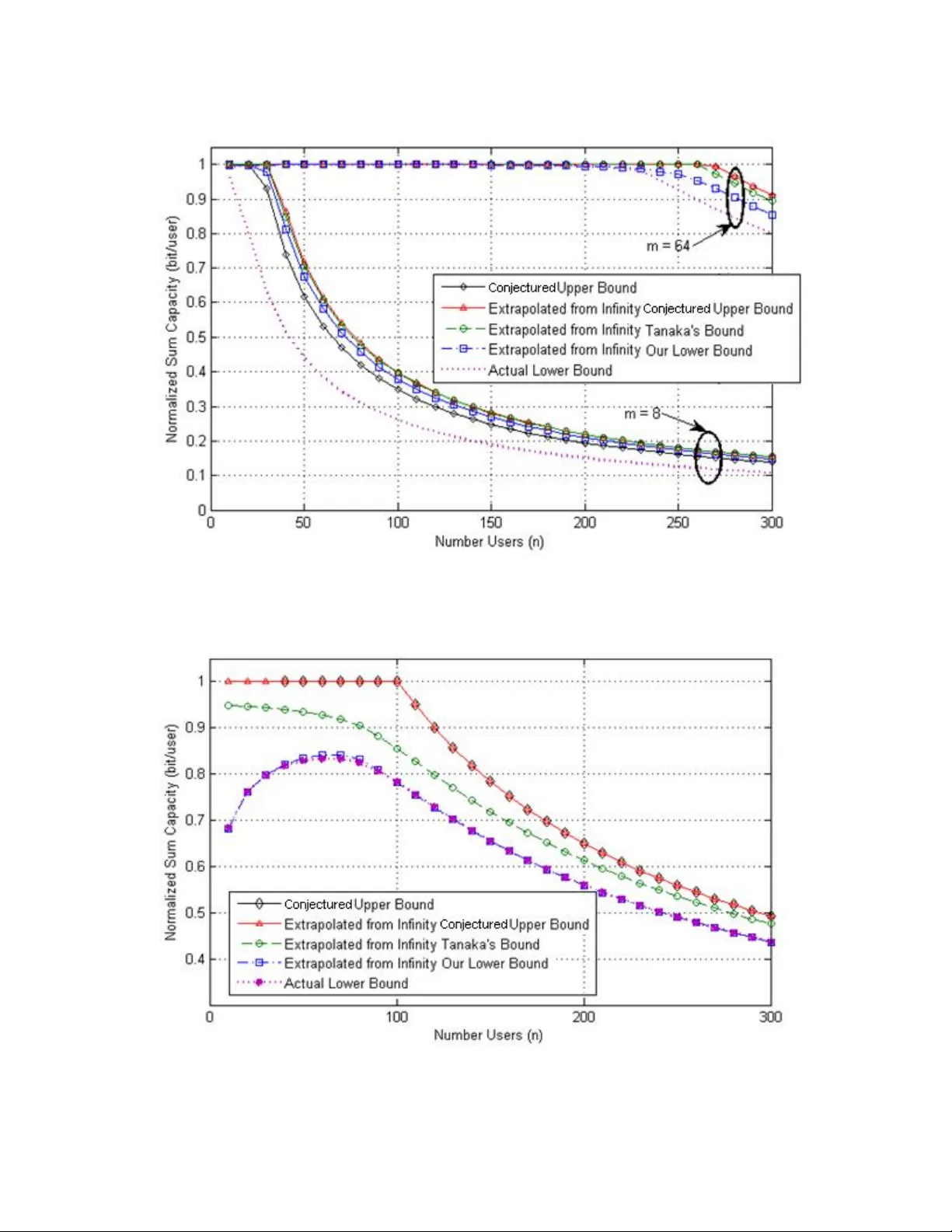

K. Alishahi¹, F. Marvasti², V. Aref², P. Pad²

Index Terms: Sum capacity, tight bounds, binary CDMA, synchronous CDMA, multi-user detection, multi-access channels

Abstract

In this paper, we obtain a family of lower bounds for the sum capacity of Code Division Multiple Access (CDMA) channels assuming binary inputs and binary signature codes in the presence of additive noise with an arbitrary distribution. The envelope of this family gives a relatively tight lower bound in terms of the number of users, the spreading gain and the noise distribution. The derivation methods for the noiseless and the noisy channels are different, but when the noise variance goes to zero, the noisy channel bound approaches the noiseless case. The behavior of the lower bound shows that for small noise power, the number of users can be much larger than the spreading gain without any significant loss of information (overloaded CDMA). A conjectured upper bound is also derived under the usual assumption that the users send out equally likely binary bits in the presence of additive noise with an arbitrary distribution. As the noise level increases and/or the ratio of the number of users to the spreading gain increases, the conjectured upper bound approaches the lower bound. We have also derived asymptotic limits of our bounds that can be compared to a formula that Tanaka obtained using techniques from statistical physics; his bound is close to our conjectured upper bound for large scale systems.

¹ Department of Mathematical Sciences, Sharif University of Technology, Tehran, Iran. kasraalishahi@math.sharif.edu.
² Advanced Communications Research Institute (ACRI), Sharif University of Technology, Tehran, Iran. marvasti@sharif.edu.

1. Introduction

Multiple Access Channels (MAC) with many users and Multi-User Detection (MUD) at the receiver give rise to information theoretic problems and concepts much more complicated than the classical situation of single user channels. Although comprehensive theorems for capacity regions have been developed for MAC, an explicit computation of the capacity region is not known in terms of specific model parameters. In this paper, we derive a relatively tight family of lower bounds and a conjectured upper bound for a binary multi-user CDMA with binary signatures; we assume synchronous CDMA and additive Independent and Identically Distributed (i.i.d.) noise with any distribution. Below we give a brief historical development of this area.

The capacity region consists of a set of information rates such that simultaneous reliable communication is possible for each user. This problem was developed by Ahlswede [1]-[2] and Liao [3] for the two-user discrete memoryless channel. An explicit expression for the capacity region of the Gaussian discrete memoryless MAC was given by Cover [4] and Wyner [5] and discussed in [6]. Verdu in [7] found the capacity region of the CDMA channel as a function of the cross-correlations between the assigned signature waveforms and their signal-to-noise ratios for the symbol synchronous case and for inputs with power constraints. The same author [8] found the capacity region for the symbol asynchronous case for Gaussian distributed inputs with power constraints; in these two papers, Verdu showed that the achievable rates depend only on the correlation matrix of the spreading coefficients. These issues and the complexity of MUD receivers were discussed by the same author in his book [9]. In [10], the authors considered random spreading and analyzed the spectral efficiency (defined as the number of bits per chip that can be transmitted reliably) for linear detectors.
In the limit, when the number of users and the signature length go to infinity (with their ratio kept constant), they obtained analytical formulas for the spectral efficiency and showed from concentration theorems that the spectral efficiency of a typical random selection of signature matrices and its average are the same with high probability. These formulas follow from the known spectrum of large covariance matrices. For a finite number of users with real-valued inputs and real-valued signatures, an upper bound for the sum capacity has been given in [18]. The extension of the sum capacity bounds to asymmetric user power constraints is given in [19]; the authors have also identified the sequences that achieve the sum capacity. The authors of reference [20] have found upper and lower bounds for the spectral efficiency (defined as the sum capacity by the authors) under quasi-static fading environments, channel estimation, and training sequences; the bound evaluations are based on the works of [21]-[22].

For binary input values, not much is known except the asymptotic behavior of the spectral efficiency in the limiting case where the number of users ($n$) and the spreading gain ($m$) go to infinity while the ratio $n/m$ is kept constant and SNR values are large [11]. The random matrix techniques used for Gaussian inputs do not apply here because the spectral efficiency cannot be written in terms of just the covariance matrix of the spreading sequences. Tanaka [12] applied a technique from statistical mechanics to this problem and derived a formula for the normalized sum capacity. Tanaka evaluated the performance of a class of CDMA multiuser detectors in the large-system limit analytically. These results were later extended in [13] to include unequal powers and fading channels. This method is non-rigorous, but Montanari and Tse [14] have since made progress towards a rigorous derivation of Tanaka's capacity formula.
The authors of reference [15] have shown that, for large systems, the capacity concentrates around its mean, i.e., a random signature matrix results in a capacity very close to the "mean capacity"³ with high probability. The same authors in [16] claimed that Tanaka's formula is an upper bound to the capacity for all values of the parameters and derived various concentration theorems for the large-system limit.

³ The mean is with respect to the randomness of the signature matrix.

For binary inputs and random binary CDMA channels, we have derived a relatively tight lower bound for the sum capacity of the noiseless case [17] and [30]. In these references, we have shown that interference-free overloaded CDMA is possible. In what follows, we derive bounds on the sum capacity for the noiseless and noisy scenarios. Our closed-form derivations, unlike the previous works, do not depend on limiting cases but rather on the number of users, the spreading gain (signature length), and the noise distribution. The derivation of the noisy case is for a general i.i.d. distribution, and special cases such as Gaussian and uniform distributions are also derived in closed form.

In the next section, the preliminaries and some propositions are discussed. Section 3 is the main part of the paper, where the supremum of a family of lower bounds for noisy channels is derived. The sum capacity lower bounds for Gaussian and uniformly distributed additive noise are derived as special cases. Section 4 covers a derivation of a conjectured upper bound for the sum capacity that is close to the lower bounds, hence the adjective "relatively tight". Section 5 gives the asymptotic derivation of the normalized sum capacity when the number of users ($n$) and the spreading gain ($m$) go to infinity while the ratio $n/m$ is kept constant; a comparison of our results with that of Tanaka is also included.
Simulation results are discussed in Section 6, and finally the concluding remarks are in Section 7.

2. Preliminaries

2.1. Capacity region

In a MAC, there are several users sending information to a common receiver, and the users should overcome not only the noise but also their mutual interference. The "capacity region" of a MAC is defined as the closure of all achievable rate vectors⁴. Ahlswede and Liao [1-3] characterized the structure of the capacity region of an $n$-user discrete memoryless MAC as the closure of the convex hull of the rate vectors $(R_1, R_2, \dots, R_n)$ satisfying

$$\sum_{i \in S} R_i \le I\left(x_S; Y \mid x_{S^c}\right) \quad \text{for all } S \subseteq \{1, 2, \dots, n\} \qquad (1)$$

for some input product distribution $p_1(x_1) \cdots p_n(x_n)$, where $x_i$ refers to the symbol sent by user $i$, $x_S = (x_i)_{i \in S}$, and $Y$ is the output of the channel.

⁴ A vector $(R_1, R_2, \dots, R_n)$ is called an achievable rate vector if it is possible for the senders, using appropriate codes, to send information at these rates with arbitrarily small probabilities of error.

If we are to assign a single value to a MAC as a measure of the capacity of the channel, the sum capacity is the most appropriate candidate. The sum capacity measures the maximum of the total information rate (i.e., the sum of all the user rates) that can be achieved, and is equal to $\max I(x_1, x_2, \dots, x_n; Y)$, where the maximum is taken over all product distributions $p_1(x_1) \cdots p_n(x_n)$ [23].

2.2. Code Division Multiple Access (CDMA) channels

We consider a synchronous CDMA channel as a special case of MACs, in which there are $n$ users sending binary symbols to a common receiver. The $i$-th user has a signature sequence $\mathbf{a}_i = (a_{1i}, \dots, a_{mi})^T$ assumed to be known to the receiver. If $X = (x_1, \dots, x_n)^T$ is the vector of user symbols, the $i$-th user sends $x_i \mathbf{a}_i$, where $x_i \in \{\pm 1\}$. Moreover, there exists a background noise $N = (N_1, \dots, N_m)^T$ such that the $N_i$'s are i.i.d. random variables with an arbitrary distribution. We assume that the channel attenuation is normalized to $1/\sqrt{m}$ (perfect near/far attenuation compensation). Under these assumptions, if the received signal is $Y = (y_1, \dots, y_m)^T$, then

$$Y = \frac{1}{\sqrt{m}} \sum_{i=1}^{n} x_i \mathbf{a}_i + N,$$

or equivalently

$$Y = \frac{1}{\sqrt{m}} A X + N,$$

where $A = [\mathbf{a}_1, \dots, \mathbf{a}_n]$ is the $m \times n$ signature matrix.
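As an illustration of the channel model of Section 2.2, the following Python sketch generates one received vector $Y = \frac{1}{\sqrt{m}} A X + N$. The function names, the random $\pm 1$ signature matrix, the sizes, and the Gaussian noise are assumptions made for this example only; the model itself allows any i.i.d. noise distribution.

```python
import math
import random

def random_signature_matrix(m, n, rng):
    """m x n matrix with i.i.d. +/-1 entries (one column per user)."""
    return [[rng.choice((-1, 1)) for _ in range(n)] for _ in range(m)]

def channel_output(A, x, noise):
    """Received vector Y = A x / sqrt(m) + N for user symbols x in {-1, +1}^n."""
    m = len(A)
    scale = 1.0 / math.sqrt(m)
    return [scale * sum(a * s for a, s in zip(row, x)) + z
            for row, z in zip(A, noise)]

rng = random.Random(0)
m, n, sigma = 4, 6, 0.1                  # illustrative sizes and noise level
A = random_signature_matrix(m, n, rng)
x = [rng.choice((-1, 1)) for _ in range(n)]
noise = [rng.gauss(0.0, sigma) for _ in range(m)]   # Gaussian is only one choice
y = channel_output(A, x, noise)
print(y)
```

Setting every noise sample to zero gives the noiseless channel, in which the output is a deterministic function of the input vector.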
In this paper, we are interested in binary CDMA signature matrices, i.e., $a_{ij} \in \{\pm 1\}$, or alternatively, the signature matrix $A$ is chosen from $\mathcal{M}_{m \times n}(\pm 1)$, the set of all $m \times n$ matrices with $\pm 1$ entries.

3. Lower Bounds for the Sum Channel Capacity

In this section we obtain a family of lower bounds for the sum channel capacity of binary CDMA signature matrices in terms of $m$, $n$ and the noise model. The point is that for given values of $m$ and $n$, we still have the choice of designing the signature matrix in order to optimize the capacity. Therefore, the relevant quantity is the maximum taken over all potential signature matrices in $\mathcal{M}_{m \times n}(\pm 1)$. In Section 3.1, we consider the noiseless channel and derive a lower bound, the behavior of which shows that for a given spreading gain $m$, there is a threshold $n_{\mathrm{th}}$ much larger than $m$, such that while the number of users $n$ is less than $n_{\mathrm{th}}$, there exist signature matrices with sum capacities close to $n$. In Section 3.2, we extend our techniques to cover binary CDMA channels with additive i.i.d. noise. As special cases, we obtain lower bounds for channels with Gaussian white noise and for i.i.d. noise with uniform distribution.

3.1. Noiseless channels

Even in the absence of noise, multi-user interference can affect the channel capacity [17] and [30]. In order to evaluate a lower bound, let $m$ and $n$ be natural numbers. For a matrix $A \in \mathcal{M}_{m \times n}(\pm 1)$, denote the sum channel capacity by $C(A)$, i.e.,

$$C(A) = \max_{p_1(\cdot) \cdots p_n(\cdot)} I(x_1, \dots, x_n; Y \mid A).$$

Now define

$$C(m, n) = \max_{A \in \mathcal{M}_{m \times n}(\pm 1)} C(A).$$

Theorem 1: For any $m$ and $n$,

$$C(m, n) \ge n - \log \sum_{j=0}^{\lfloor n/2 \rfloor} \binom{n}{2j} \left( \frac{\binom{2j}{j}}{2^{2j}} \right)^{m} \qquad (2)$$

(Footnote 5: Throughout this paper, the unit of capacity is bits and the base of the logarithm is 2.)

Proof: $C(A)$ is the maximum mutual information over all product distributions on $X$. Specifically, $C(A) \ge I(X; Y)$, where $Y = \frac{1}{\sqrt{m}} A X$ and $X$ has the uniform distribution on $\{\pm 1\}^n$. But since the channel is noiseless, the mutual information is simply equal to $H(Y)$ (deterministic channel). In the remainder of the proof, we find a lower bound for $H(Y)$.
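For a given $A$, let $\varphi: X \mapsto \frac{1}{\sqrt{m}} A X$ be the map induced by $A$, and suppose that $|\varphi(\{\pm 1\}^n)| = r$ and that the preimages of the values of $\varphi(\{\pm 1\}^n)$ have cardinalities $t_1, t_2, \dots, t_r$. It can be easily seen that the mutual information is equal to

$$H(Y) = -\sum_{k=1}^{r} \frac{t_k}{2^n} \log \frac{t_k}{2^n}.$$

The key point is that the above expression can be rewritten in terms of the input values rather than the output distribution:

$$H(Y) = -\frac{1}{2^n} \sum_{X \in \{\pm 1\}^n} \log \frac{T(X)}{2^n},$$

where $T(X) = |\varphi^{-1}(\varphi(X))|$ is the number of points mapped by $\varphi$ to the same value as $X$. Now if we let $A$ be random with independent entries that are uniformly chosen to be $\pm 1$ and take the expectation of $H(Y)$, we obtain

$$E_A\big(H(Y)\big) = -\frac{1}{2^n} \sum_{X \in \{\pm 1\}^n} E\left( \log \frac{T(X)}{2^n} \right).$$

But since there is a symmetry between the elements of $\{\pm 1\}^n$, all terms in the above summation are equal, and hence for any $\bar{X} \in \{\pm 1\}^n$,

$$E_A\big(H(Y)\big) = -E\left( \log \frac{T(\bar{X})}{2^n} \right) = n - E\big( \log T(\bar{X}) \big).$$

Since $\log$ is a concave function, Jensen's inequality gives $E(\log T(\bar{X})) \le \log E(T(\bar{X}))$. Therefore

$$E_A\big(H(Y)\big) \ge n - \log E\big(T(\bar{X})\big).$$

But $E(T(\bar{X}))$ can be computed explicitly as follows:

$$E\big(T(\bar{X})\big) = E\left( \sum_{X \in \{\pm 1\}^n} \mathbf{1}_{\{\varphi(X) = \varphi(\bar{X})\}} \right) = \sum_{X \in \{\pm 1\}^n} P\big(A X = A \bar{X}\big).$$

In the last expression, $A$ and hence $\varphi$ are random, and the probability is computed according to this randomness. Now note that $A X = A \bar{X}$ if and only if $\mathbf{b}_i X = \mathbf{b}_i \bar{X}$ for $i = 1, 2, \dots, m$, where $\mathbf{b}_i$ denotes the $i$-th row of $A$. Since the rows of $A$ are independent, these are independent events, and hence

$$P\big(A X = A \bar{X}\big) = \prod_{i=1}^{m} P\big(\mathbf{b}_i X = \mathbf{b}_i \bar{X}\big) = P\big(\mathbf{b}_1 X = \mathbf{b}_1 \bar{X}\big)^{m}.$$

But $P(\mathbf{b}_1 X = \mathbf{b}_1 \bar{X}) = 0$ if $X$ and $\bar{X}$ differ in an odd number of elements, and it equals $\binom{2j}{j} / 2^{2j}$ if they differ in $2j$ positions. Thus, since there are $\binom{n}{2j}$ different $X$'s that have $2j$ positions not equal to $\bar{X}$, we get

$$E\big(T(\bar{X})\big) = \sum_{j=0}^{\lfloor n/2 \rfloor} \binom{n}{2j} \left( \frac{\binom{2j}{j}}{2^{2j}} \right)^{m}.$$

Substituting in the previous inequality, we obtain

$$E_A\big(H(Y)\big) \ge n - \log \sum_{j=0}^{\lfloor n/2 \rfloor} \binom{n}{2j} \left( \frac{\binom{2j}{j}}{2^{2j}} \right)^{m}.$$

And since there are always values not less than the expected value, we have

$$C(m, n) = \max_{A} C(A) \ge \max_{A} H(Y) \ge E_A\big(H(Y)\big),$$

and thus equation (2) is derived.

3.2. Noisy channels

In this section, we investigate the sum capacity for binary CDMA in the presence of noise. The setting is as before, but we assume that there is an additive noise vector $N = (N_1, \dots, N_m)^T$ such that the $N_i$'s are i.i.d. random variables with a given pdf $f$. The sum capacity function in this case is defined as

$$C(m, n, f) = \max_{A \in \mathcal{M}_{m \times n}(\pm 1)} C(A, f),$$

where $C(A, f)$ is the sum channel capacity for the CDMA with signature matrix $A$, which is equal to $\max I(X; Y)$ according to Proposition (1), where the maximum is taken over all product distributions on $X$.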
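The noiseless bound of Theorem 1 can be evaluated directly. The sketch below assumes that (2) reads $C(m,n) \ge n - \log_2 \sum_{j} \binom{n}{2j} \big(\binom{2j}{j}/2^{2j}\big)^m$, which is what the counting argument in the proof yields. It also computes $H(Y)$ by brute-force enumeration for a small signature matrix, so that a specific matrix can be compared with the bound. The function names are illustrative and not taken from the paper.

```python
import math
from collections import Counter
from itertools import product

def noiseless_lower_bound(m, n):
    """Right-hand side of (2), in bits, for spreading gain m and n users."""
    total = sum(math.comb(n, 2 * j) * (math.comb(2 * j, j) / 4 ** j) ** m
                for j in range(n // 2 + 1))
    return n - math.log2(total)

def output_entropy(A):
    """H(Y) in bits for Y = A X with X uniform on {-1, +1}^n (noiseless case).

    The 1/sqrt(m) scaling is omitted because it does not change the entropy.
    Enumerates all 2^n inputs, so it is only usable for small n.
    """
    n = len(A[0])
    counts = Counter(
        tuple(sum(a * x for a, x in zip(row, X)) for row in A)
        for X in product((-1, 1), repeat=n)
    )
    return -sum(c / 2 ** n * math.log2(c / 2 ** n) for c in counts.values())

# Normalized bound (bits per user) for m = 64 and a few user counts.
for n_users in (64, 128, 200, 239, 300):
    print(n_users, noiseless_lower_bound(64, n_users) / n_users)

# A single 1 x 2 matrix compared with the bound for m = 1, n = 2.
print(output_entropy([[1, 1]]), noiseless_lower_bound(1, 2))
```

Because the bound is obtained from an average over random matrices, the best matrix for given $m$ and $n$ must reach at least this value, while an individual matrix may fall below it.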
Theorem 2: A general Lower Bound for a Noisy channel: For any function , , , l o g 2 √ 3 where is the sum of independent random variables taking 1 with equal probability (also independent of ) . Proof: To prove this theorem, our approach is again to pick a matrix at random and then try to estimate the expected value of the mutual information of th e channel corr esponding to this matrix. The result is clearly a lower bound since the maximum is always greater than the expected value. 10 Let us fix a signature matrix and let √ where is uniformly distributed in 1 (that is s are independent and equal to 1 or 1 with pr obability ). We start by the following formula for the mutual information: ; , , l o g , , , log , , But 2 , 2 1 √ , , , 2 1 √ Since √ , we obtain the following relation ; , log 2 .2 ∑ 1 √ 2 , log ∑ 1 √ Now assume that the signature matrix is also chosen at random and take the expectation with respect to this source of randomness: ; ,, log ∑ 1 √ According to the symmetry of the above expression in vertices of the hypercube 1 as the values of , we can remove the expectation with respect to and set where 1 is arbitrary: 11 ; , log ∑ 1 √ Now let be an arbitrary function and rewrite the above formula as ; , log 2 ∑ ∑ 1 √ 2 ∑ , log ∑ 1 √ 2 ∑ Using the concavity of the logarithm functi on and applying Jensen’s inequality, we have ; l o g , ∑ 1 √ 2 ∑ Recall that Now since ’s are i.i.d., we have and hence 12 1 √ 2 ∑ 1 √ 2 But ’s and ’s are independent for different ’s. Thus we have a product of independent terms and consequently , 1 √ 2 ∑ , 1 √ 2 , 1 √ 2 But 1 √ 2 1 √ 2 1 √ 2 2 √ 2 √ Now note that if and have different entries, then √ has the same distribution as √ , where is the sum of independent random variables taking 1 with equal probability. 
Thus the last expression can be written as 13 2 √ And hence we obtain , ; l o g √ Since the uniform distribution is just one choice of pro duct distributions for the input vector, on e has , ; And thus , ; But then , , m a x , , This completes the proof. Corollary 1: If we let l o g where is the pdf of the additiv e noise, then from (3) we arrive at the following family of lower bounds , , l o g 2 4 2 √ 4 where log is the differential entropy for the pdf and the function is defined by . 14 Remark 1: In both noiseless and noisy cases, our approach is based on a probabilistic argument; we choose a signature matrix at random and then tr y to est im ate the expected value of the sum capacity of the channel corresponding to this random matrix. Conseque ntly, our lower bounds are in fact bounds for the average sum channel capacity of a typical signature ma trix. This point combined with the concentration theorems mentioned by Korada and Macris [15] -[16 ] imply that the sum capacity of a channel with a random signature matrix (for large and ) is greater than the bounds obtained in theorems (1) and (2) with high probability. Example 1: Gaussian noise An important special case is Additive Wh ite Gaussian Noise (AWGN) with variance . If we denote the capacity in this case by , , ), then we have the following family of lower bounds: Proposition 2: For any positive real number , , , log √ l o g 2 √ 1 5 The proof is rather straightforward from (4). This family of the lower bounds along with its envelope (supremum) is simulated in Figs. 2-4 and will be discussed in Section 5. Example 2: Uniform distribution Assume that the noise is of the form ,…, where ’s are i.i.d. random variables with uniform distribution on – , . Denote the capacity in this case by , , . Proposition 3: , , l o g 2 4 2 √ 6 15 where the function is defined as 1 | | | | 1 0 | | 1 The proof is again straightforward from (4). 
Notice th at for the uniformly distributed noise the parameter disappears due to cancellation. Corollary 2: The noiseless lower bound ((2) from Theorem 1) can be derived from (4) of Corollary 1 when noise goes to zero. Proof: Let be an arbitrary pdf and 0 . Define . It can be easily checked that l o g and where and as before. Now, from (4), we know that , , l o g l o g 2 4 2 √ l o g 2 4 2 √ . But for fixed values of , as 0 , √ 0 if 2 and √ 0 if 2 . Hence, we obtain lim , , l o g 2 0 . 16 It can be seen that the right-hand side takes its maximum at 0 since 0 1 ; thus the noiseless bound shown in (2) is derived. 4. A Conjectured Upper Bound for the Sum Channel Capacity As stated in Proposition 1 in Section 2, the sum capacity of a multiple access channel is the maximum mutual information over all product distributions on the input v ector. But the symmetry between user s and between 1 ’s and 1 ’s in CDMA chan nels makes it plausibl e to think that th e best probability distribution is the uniform distribution (the symmetric distribution with respect to users) on the input vector. Although this statement seems obvious, it is an open problem (please refer to footnote 6 for m ore details). In [16], Korada and Macris conjecture that th is is the case for the Gaussi an white noise. Here, we state the conjecture in an extended form, where the noise can have any general symmetric pdf: Conjecture: Let 1 and √ , where ,…, constitutes i.i.d. random variables with a given symmetric pdf (i.e. , ). Then max ∏ ; is attained for the uniform distribution when ’s are independent and equal to 1 with probabilit y . It is not difficult, as shown by Korada and Macris , to see that if we consider the average mutual information over all signature matrices, then the m aximum is attained for the uniform distribution [ 1 6], but the problem for a specific channel comes to be much more difficult. 
In this section, assuming this conjecture is true, we will derive a conjectured upper bound for the sum capacity, which is very close to the lower bound obtained in the previous section. This implies that the bounds are relatively tight. Proposition 4 Conjectured Upper Bound: Let be a sy mmetric probability distribution function, that is . Defining the function by ∑ √ , we have 17 , , m i n , 7 where is the differential entropy as defined in (4). For the noiseless case, we should use the usual entropy instead of the differential entropy as will be shown in Example 5. Proof: It is clear that for binary inputs , , . Assume that is the signature matrix that results in the maximum mutual information between and √ . Based on our conjecture, for any signature matrix including , the mutual information is maximized at the uniform distribution for the input vector. Thus by assuming that ’s are independent a nd uniform , we have , , , ; | But | and ,…, Now note that √ ∑ , where ∑ is the sum of independent symmetric Bernoulli random variables taking 1 . Hence has the distribution of a convolved binom ial random variable and a random variable with pdf which is exactly . By Substituting in the above relations, the proof is complete. Example 3 (The Gaussian noise): For a Gaussian distribution, the function becomes 1 √ 2 2 √ 18 Then, we have , , m i n , l o g √ 2 Example 4 (The noise with uniform distribution): For a uniform distribution, the function becomes 1 2 2 1 , 2 √ Then, we have , , m i n , l o g 2 Reference [29] also discusses the maximum entropy of the sum of independent random variables. Example 5 (The noiseless channel): For the noiseless case, we can assume that th e noise pdf is an impulse. Consequently, becomes 2 2 √ This is a discrete probability distribution. 
Hence we should use the usua l entropy instead of the differential entropy and a true upper bound as opposed to the conjectured 6 one shown in (7) becomes 6 Based on the book by Marshall and Olkin [27], for binary inpu ts with equal probability, the upp er bound conjecture is a tru e bound for the no iseless case. Appa rently, Chang & Weldon [24] has assumed this wit hout a proper refere nce in their derivation. Tanaka, Korada & Ma cris ([15-16]) ha ve conjecture d that mutual informat ion is maximized for symmetric binary inputs with additiv e Gaussian noise. Our conjecture for th e noisy case with symmetric pdfs is still an open problem. Thus, we have re ferred everywhere to our upper bound as "conjectured upper bound" for the symmetric noisy cases; in the noisy cas es such as Examples 3 a n d 4, an obvio us true upper bound is the noiseless case in Example 5. 19 , m i n , This bound is identical to that of Chang and Weldon f or T-user MAC’s [24]. 5. Asymptotic Analysis In order to compare our results to Tanaka’s sum cap acity bound as shown below [12]-[ 16 ], and [26], we first try to find the normalized lower bound for the s um capacity in the limit when and go to infin ity while keeping the ratio / constant. Subsequently, the sam e limit is found for the conjectured upper bound. Simulation results in Section 6 show a co mparison of our bounds in the lim it with that of Tanaka’s bound. 5.1. Lower Bounds for the Sum Channel Capacity in the Limit It can be seen from [17] and [30] that if 0 , for any , lim ⁄ , , , 1 . This is because (the maximum number of users without any interference defined in [30]) grows faster than linear with respect to . In the following theorem, we represent a limiting procedure which we claim is the appropriate regime for the noiseless case and derive th e exact capacity in the limit in Theorems 3 and 5. Theorem 3 Noiseless case : If , denotes the maximum capacity per user, i.e. 
, , , , then for any lim ⁄ , , m i n 1 , 1 2 8 The proof is given in Appendix A. Therem 4 : ( Noisy channel): For any arbitrary function , lim ⁄ , , , 1 s u p , 1 l o g 2 20 Considering the family l o g , we obtain the following bound for the Gaussian case: Special Case (Gaussian Noise): lim ⁄ , , , 1 i n f sup , 1 2 log log 1 4 9 The proof is given in Appendix B. Although (9) has been derived b y just considering a fam ily of special functions for , we will prove in Appendix D that the best bound obtainable b y any cannot be much better than (9). Moreover, our simulation results show that the formula (D1) in Appendix D can be used as a good approximation for (9), which is computationally much simpler. 5.2. Conjectured Upper Bounds for the Sum Channel Capacity in the Limit Theorem 5: Noiseless Channel: In the limit, we have lim ⁄ , , m i n 1 , 1 2 10 Proof: From Example 5 and [24] , we have lim ⁄ , , m i n 1 , log n m i n 1 , log 2πen 2 log n m i n 1 , 1 2 Theorem 6 : Noisy Channel : In the limit, we have lim ⁄ , , , m i n 1 , 1 11 where is a standard Gaussian ra ndom variable independent of . Proof: The obtained from (7) of Proposition 4 is equal to the pdf of the r.v. 21 √ √ . Due to the central limit theorem, the term on the right hand side approaches a Gaussian r.v. in the limit and thus we have (11). Example : for the Gaussian noise, when is a Gaussian random variable of variance , one has log 2 and log2 . Hence lim ⁄ , , , m i n 1 , 1 2 log 1 12 The above upper bound is rem iniscent of the Shannon capacity for an AWGN channel ( 1/ / is the normalized bandwidth and SNR ). Theorems 3 and 5 for the noiseless cases show that the ratio of the upper and lower bounds approach 1 . However, there is alway s a gap between the bounds for finite and . Wh en approaches 0 , the above bound approaches which is not a good bound for low SNR values since only for / 1.593 , the above bound is less than 1 bit/user (see Fig. 8). 
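To make the low-SNR remark concrete, the sketch below evaluates the asymptotic conjectured upper bound for Gaussian noise. It assumes that (12) reads $\min\big(1, \frac{1}{2\beta}\log_2(1 + \beta/\sigma^2)\big)$ with $\beta = n/m$, and that $E_b/N_0 = 1/(2\sigma^2)$ as stated in footnote 7; both are our reading of the text, and the function name is hypothetical. Under these assumptions, as $\beta \to 0$ the unclipped expression tends to $(E_b/N_0)\log_2 e$, which falls below 1 bit/user only when $E_b/N_0 < \ln 2$, i.e., about $-1.59$ dB.

```python
import math

def asymptotic_upper_bound_awgn(beta, ebn0_db):
    """Assumed form of (12): min(1, log2(1 + beta / sigma^2) / (2 * beta))."""
    ebn0 = 10.0 ** (ebn0_db / 10.0)      # linear E_b/N_0
    sigma2 = 1.0 / (2.0 * ebn0)          # assumes E_b/N_0 = 1 / (2 sigma^2)
    # log1p keeps the expression accurate when beta / sigma^2 is tiny.
    unclipped = math.log1p(beta / sigma2) / math.log(2.0) / (2.0 * beta)
    return min(1.0, unclipped)

# Bits per user for the loads used in the simulations, at a few SNR values.
for beta in (0.5, 1.0, 2.0, 4.0, 8.0):
    print(beta, [round(asymptotic_upper_bound_awgn(beta, db), 3)
                 for db in (0, 4, 8, 16)])
```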
However, for $\beta \le 1$, we have the actual channel capacity, which is equal to the single user capacity.

6. Simulation Results

The lower and conjectured upper bounds have been simulated with respect to $n$, $m$ and $E_b/N_0$. Also, the asymptotic bounds are simulated and compared to the results of Tanaka. For the noiseless case, simulations of the normalized lower and upper bounds derived from Theorem 1 and Example 5 of Proposition 4 have been extensively studied in [30] and are given in Fig. 1 as a reference. This figure shows that there exist CDMA matrices such that the number of users, $n$, can be 3-4 times the spreading gain, $m$. For example, for $m = 64$, the maximum number of users with almost no interference is close to 239. For $n \le 64$, orthogonal Walsh matrices can be used, and for $64 < n \le 239$, there are matrices [30] that are interference free.

For the AWGN case, according to (5) from Proposition 2, a family of lower bounds for the normalized sum channel capacity can be obtained for any number γ. Fig. 2 shows some of the bounds of this family for various values of γ with respect to $n$. The envelope (supremum) of the whole family is also plotted in the same figure when $E_b/N_0$ is fixed⁷ and equal to 16 dB. This envelope behaves similarly to the noiseless case of Fig. 1, and as expected, the sum capacity is reduced. Fig. 3 shows the normalized lower and conjectured upper bounds for two values of $E_b/N_0$. This figure shows that the noisier the channel, the tighter are our lower and conjectured upper bounds.

The variation of the normalized sum capacity bounds with respect to $E_b/N_0$ for various numbers of users is shown in Fig. 4. This figure shows the logarithmic relationship between the capacity and the signal-to-noise ratio. The curves level off because the normalized sum capacity cannot be greater than one. This figure shows that for larger $n$ given a fixed $m$ (i.e., larger $\beta$) the bound is tighter when $E_b/N_0$ is low.
However, for higher $E_b/N_0$ and smaller $n$ given a fixed $m$, the upper and lower bounds approach 1 bit/user faster and hence are tighter in that region.

In summary, the sum capacity bounds behave differently for different $E_b/N_0$ values and spreading gains. In general, for a given $E_b/N_0$ and $m$, the sum capacity bounds are very tight and increase almost linearly with $n$ (1 bit/user) up to a point (this point increases with increasing $m$ and $E_b/N_0$), and then, suddenly, the interference of users dominates as $n$ is increased. Beyond this point, the conjectured upper and lower bounds are no longer as tight. However, the higher $m$ and $E_b/N_0$, the tighter are the bounds in this region.

⁷ $E_b/N_0$ for CDMA is defined as $1/(2\sigma^2)$, assuming that each user is normalized to 1. The signature matrix is assumed to be normalized by $1/\sqrt{m}$. The chip period is also normalized to one unit of time.

6.1. Comparison with Tanaka's bound in the limiting case

Although Tanaka's definition of the sum capacity is slightly different from ours⁸, we would like to compare our bounds, derived for the limiting case in Section 5, with that of Tanaka. The reader should bear in mind that our results (even for the asymptotic cases) are valid for any additive noise with a general pdf, while Tanaka's asymptotic results are only valid for additive Gaussian noise. Also, Tanaka's results may not be valid for finite dimensional systems in general. We have also managed to simulate the complicated formulae of Tanaka⁹ from a combination of references [12]-[16] for $\beta = 0.5, 1, 2, 4,$ and $8$. For $\beta \le 1$, fortunately, we do have the exact capacity since we can use Walsh signature matrices, and due to their orthogonality, the performance is equivalent to binary PSK. The actual capacity is $1 - H(p_e)$, where $p_e$ is the probability of error and is related to $E_b/N_0$ through the normal distribution. Figs. 5 and 6 show a comparison of the actual normalized sum capacity with Tanaka's bound for $\beta = 0.5$ and $\beta = 1$, respectively.
Clearly, Tanaka's bound is an upper bound. In these two figures, we have also included our lower and upper bounds. Tanaka's bound becomes tighter when $\beta$ increases from 0.5 to 1; our upper bound also becomes tighter with increasing $\beta$ but is not as good as Tanaka's bound for $E_b/N_0$ values less than 8 dB. As $\beta$ becomes greater than 1, our bounds become tighter, but we have no way to evaluate Tanaka's bound since the actual sum capacity is not known. However, as shown in Fig. 7, Tanaka's bound approaches our upper bound with increasing $\beta$; we can show this analytically from Appendix C. An important observation from Figs. 5-7 is that for any $\beta$, the normalized sum capacity can approach 1 bit/user for large values of SNR, but this is not true for finite dimensions (due to overloaded interference), and therefore it cannot be extrapolated from infinite dimensions to finite dimensions.

⁸ The definition of the sum capacity by Tanaka and references [15-16] is not exactly the same as ours. They maximize, over the input probabilities, the average mutual information with respect to the random signature matrices. We define the capacity as the maximum over all matrices and input probabilities. The average over all matrices is a lower bound for our definition of the sum capacity.

⁹ For the Matlab and Mathcad codes of Tanaka's formulae as well as ours, please check http://acri.sharif.edu/en/g7/kotob/default.asp?page=1

An interesting point about our asymptotic (large scale systems) bounds is that they are very good approximations for finite dimensional CDMA systems. Fig. 8 shows a comparison between the lower and conjectured upper bounds for the finite dimensional and the asymptotic cases when $\beta = 2$. For $m = 4$, the asymptotic upper bound and the finite dimensional one coincide.
Also, for 3 2 , there is very little difference between the asymptotic an d the finite lower bounds; our computer sim ulations show that this conclusion is valid for all values of provided that is adjusted according to the vlaues of . Since simulations of the bounds in the lim it are m u ch simpler, we can use the lower and upper bounds derived in Section 5- (9) 10 and (12)- instead of the bounds derived from the combinatorics in (5) and (7). Fig. 9 shows another interesting comparison betw een the actual bounds deve loped from combinatorics and the asymptotic bounds extrapolated to finite scales ( 8 , 6 4 and / 16 dB ). For 6 4 , the two conjectured upper bounds coincide while Tanaka ’s extrapolated bound is slightl y lower than our conjectured upper bound. The exrapolated lower bound is h igher than the actual bound derived from combinatorics. Since / 6 4 , as increases, the gap between our upper bound and Tanaka’s bound diminishes. For 8 , Fig. 9 shows that our asympotic bou nds as well as Tanaka’s bound are above our combinatorics upper bound for large number of users ( 3 0 , . ., 4 and therefore not accurate. This senario changes with higher noise variances. For a high noisy environment and with the previous parameters, Fig. 10 shows that the extrapolated a nd actual bounds coincide; Tana ka’s bound, as usual, is in between closer to the conjectured upper bound. The reason that the bounds coincide can be explained from the bahvior of (5) an d (9) for the lower bounds and Example 3 and (12) for the u pper bounds when the noise variance goes to infinity. We can easily show that the ratio of (5) and (9) goes to 1 in the limit, and for the conjectured upper bounds, we can show that for large variances, the pdf in Example 3 becomes Gaussian like with a variance of (convolution of binomial and Gaussian pdf’s). Thus, the upper bound in Example 3 degnerates into (12) an d we can show that the ratio goes to 1 in the limit. 10 Eq. 
(D1) in Appendix D is an even simpler approximation of (5) and (9).

Fig. 1. The lower and upper bounds for the normalized channel capacity vs. the number of users for different spreading gains in a noiseless system according to Theorem 1.

Fig. 2. A family of lower bounds for the sum capacity of an AWGN channel using different γ's and their envelope vs. the number of users when $E_b/N_0 = 8$ dB and the spreading gain is 64.

Fig. 3. The normalized sum capacity lower (envelopes) and conjectured upper bounds for AWGN channels vs. $n$ for different $E_b/N_0$ values when $m = 64$.

Fig. 4. The normalized sum capacity lower (envelopes) and conjectured upper bounds vs. $E_b/N_0$ for different values of $n$ with $m = 64$.

Fig. 5. The normalized sum capacity bounds vs. $E_b/N_0$ in the limit when $n$ and $m$ go to infinity for $\beta = 0.5$. In this case the orthogonal Walsh signature matrix is equivalent to the single-user PSK. Tanaka’s result is an upper bound to the actual capacity in this case.

Fig. 6. The normalized sum capacity bounds vs. $E_b/N_0$ in the limit when $n$ and $m$ go to infinity for $\beta = 1$. In this case the orthogonal Walsh signature matrix is equivalent to the single-user PSK. Tanaka’s result is a very tight upper bound to the actual capacity in this case.

Fig. 7. The normalized sum capacity bounds vs. $E_b/N_0$ in the limit when $n$ and $m$ go to infinity for $\beta = 2$, 4 and 8. Depending on the value of $\beta$, Tanaka’s bound is somewhere between our bounds but closer to our conjectured upper bound as $\beta$ increases. As $\beta$ increases, our lower and conjectured upper bounds and Tanaka’s bound become very tight.

Fig. 8. The normalized sum capacity vs. $E_b/N_0$. As the system size increases with $\beta = 2$, the sum capacity converges to the theoretical limit. For a system size of 32, there is very little difference between the limiting case and the simulations of finite CDMA.

Fig. 9. Comparison of our asymptotic and Tanaka’s capacity bounds vs. the number of users, extrapolated to finite size scale, $m = 8$, 64 and $E_b/N_0 = 16$ dB.

Fig. 10. Comparison of our asymptotic and Tanaka’s capacity bounds vs. the number of users,
extrapolated to finite size scale for a highly noisy environment, $m = 64$ and $E_b/N_0 = 4$ dB.

7. Conclusion

In this paper, for binary inputs and binary CDMA signatures, we have derived a family of lower bounds for additive i.i.d. noise of any distribution with respect to the number of users, $n$, the spreading gain, $m$, and the pdf of the noise. The envelope of this family gives a lower bound. The special case of AWGN has also been derived and simulated. The bound and simulations show that when the noise variance goes to zero, the noisy and the noiseless lower bounds become identical. We have also derived a conjectured upper bound for a general i.i.d. symmetric noise distribution; the special cases of Gaussian and uniform distributions are also obtained. Simulations also show that for a given noise level ($E_b/N_0$) and spreading gain, $m$, the number of users, $n$, can be greater than $m$ with almost no interference, i.e., overloaded CDMA is possible, and thus we can improve the throughput of a CDMA system by designing structured CDMA codes.

In general, for a given $E_b/N_0$ and $m$, Figs. 1, 3, and 4 show that the sum capacity bounds are very tight and increase almost linearly with $n$ (1 bit/user) up to a point (this point increases with increasing $m$ and $E_b/N_0$); then, abruptly, the interference of users dominates as $n$ is increased. Beyond this point the upper and lower bounds are no longer as tight. However, the higher $m$ and $E_b/N_0$, the tighter the bounds are in this region. We have also simulated Tanaka’s bound and showed that it is a tight upper bound in the limiting case for $\beta = 1$. As $\beta$ increases, we have shown analytically as well as by simulations that this bound approaches our conjectured upper bound for a wide range of $E_b/N_0$.

For future work, we intend to generalize our bounds to nonbinary user signals and nonbinary CDMA signature matrices. The proof of the conjecture is also another interesting problem to solve.
Also, we would like to extend our results to the asynchronous case with near/far effects and imperfect channel estimation and training. Extension to fading channels is another issue to be investigated. The case when the additive noise is not i.i.d. is another interesting topic to be investigated.

Appendix A

Proof of Theorem 3 (Section 5.1): One can easily check that ~ . Hence from (2), we have 2 2 ~ 2 1

By using a change of variable , the last integral becomes √ 2 √ 2

Stirling’s estimation, $n! \sim \sqrt{2\pi n}\,(n/e)^n$, implies that ~2 2

And so we obtain lim ⁄ , , 1 lim ⁄ , 1 log 2 √ 2 √ 1 lim ⁄ , 1 log 2 2 √ 2 √ 1 lim 1 log 2

where is a sequence of finite measures on 0,1 given by √ √ √ √ for and 0 for 0 . But according to Varadhan’s lemma [25], lim 1 log 2 max , 1 (A1)

where is the rate function, which is defined as the unique lower semi-continuous function with the following properties:
(i) limsup log inf for any open .
(ii) liminf log inf for any closed .

Now let , . We will distinguish two cases, 0 and 0 . In the first case, we have liminf 1 log liminf 1 log 2 √ 2 √ liminf 1 1 2 log log log 1 2 log 1 √ 1 2 liminf 1 log 1 2 log 1 1 2 liminf 1 liminf 1 log 1 2

If 0, then liminf 1 log liminf 1 log 1 √ liminf 1 1 2 log log log 1 √ 1 2 liminf 1 log 1 2 log 1 1 2 liminf 1 log 1 1 2 1 2 0

Thus we should have 0 0 and for 0 . Hence from (A1), we have lim 1 log 2 max 0 , 1 1 2

Appendix B

Proof of Theorem 4 (noisy lower bound in the limit): According to (3), , , 1 , , 1 1 log 2 √ lim ⁄ , , , 1 1 1 log 2 √ 2 √ ~ 2 √ 2 √

Again we have ~2

And the central limit theorem implies that 2 √ 2 √ 2

where ~ 0, 1 is a standard Gaussian random variable, independent of . lim ⁄ , , , 1 1 1 log 2 √ 1 1 1 log 2 2 1 1 1 log 2 1 1 sup , 1 log 2 1 sup , 1 log 2 (B1)

Special Case of Gaussian Noise: Let √ be the pdf of a centered Gaussian random variable of variance and set . In this case clearly we have .
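The Gaussian special case rests on a closed-form expectation of an exponentiated quadratic in a standard Gaussian variable. As a sanity check, the sketch below assumes the identity takes the standard form $E\left[e^{-(aZ+b)^2/2}\right] = (1+a^2)^{-1/2}\, e^{-b^2/(2(1+a^2))}$ for $Z \sim \mathcal{N}(0,1)$ and real $a$, $b$, and compares it with a direct numerical integration. The symbols $Z$, $a$, $b$, the factor $1/2$ in the exponent, and the function names are assumptions of this sketch rather than notation taken from the paper; a different scaling of the exponent changes the constants but not the method.

```python
import math


def closed_form(a: float, b: float) -> float:
    """Assumed identity: E[exp(-(a*Z + b)**2 / 2)] for Z ~ N(0, 1)."""
    s = 1.0 + a * a
    return math.exp(-b * b / (2.0 * s)) / math.sqrt(s)


def numeric(a: float, b: float, half_width: float = 12.0, steps: int = 100_000) -> float:
    """Trapezoidal approximation of the same expectation on [-half_width, half_width]."""
    h = 2.0 * half_width / steps
    total = 0.0
    for k in range(steps + 1):
        z = -half_width + k * h
        weight = 0.5 if k in (0, steps) else 1.0
        # Integrand: standard normal density (up to 1/sqrt(2*pi)) times exp(-(a*z+b)^2/2).
        total += weight * math.exp(-0.5 * z * z - 0.5 * (a * z + b) ** 2)
    return total * h / math.sqrt(2.0 * math.pi)


if __name__ == "__main__":
    for a, b in [(0.0, 0.7), (0.5, 1.0), (2.0, -0.3)]:
        print(a, b, closed_form(a, b), numeric(a, b))
```

The two columns printed for each pair $(a, b)$ should agree to many digits, since the integrand is smooth and decays rapidly, which makes the trapezoidal rule very accurate here.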
To compute 2 in this case we need to do some more calculations for the Gaussian random variable: If is a standard Gaussian random variable and , are arbitrary real numbers, we have 1 √ 1

Thus, we have log log log log 1 1 4 1 2 log 1 4

Hence the right-hand side of (B1) in this case becomes 1 sup , 1 2 log log 1 4

Thus, we get (9).

Appendix C

Tanaka’s Bound Approaches Our Asymptotic Upper Bound as $\beta$ Increases

In this appendix, we show that for large $\beta$ and the noise variance 0 , Tanaka’s formula is close to our bound log 1 . Tanaka’s formula can be rewritten as [12]: 1 2 log 1 1 , log

in which , 2 1 ln cosh √ where is the standard normal measure and 1 1 tanh √

Notice that and hence tanh ∞ √ √ 1. ∞ √ 1. ∞ Q 1 1

And therefore lim ∞ 0 . For 0 , tanh , thus we can rewrite the above equation as: tanh ∞ √ √ √ ∞ √ √ √ 2 √ √ 2 √ 0

Using the Taylor expansion about 0 , it can be easily seen that ln cosh , thus , 2 1 √ 2 2 √ 2 2 √

And since ~ , consequently , 0 and hence ~ 1 2 log 1

Appendix D

Donsker-Varadhan inequality [25]: If and are two probability measures on a given space , then inf : ln ln

where is the Radon-Nikodym derivative of with respect to . The infimum is attained at ln .

We proved that for any arbitrary function , lim ⁄ , ∞ , , 1 sup , 1 log 2 1

And hence lim ⁄ , ∞ , , 1 inf sup , 1 log 2

But inf sup , 1 log 2 sup , inf 1 log 2 sup , 1 inf log 2

Now let and be probability measures induced on from the random variables 2 and respectively, and let . Then the Donsker-Varadhan inequality implies that inf log 2 log ln log ln

where . For the Gaussian noise, 1 , and thus ln 1 2 ln 1 4 1 2 4 4 1 2 ln 1 4 4 4

Therefore we have inf sup , 1 log 2 sup , log 2 4 4 ln 1 4

Hence the best lower bound obtainable from (B1) is not better than 1 sup , log 2 4 4 ln 1 4 (D1)

Acknowledgement

We would like to sincerely thank A. Amini, G.H. Mohimani, B. Akhbari, and M.H. Yasaee for their helpful comments.
We are specifically indebted to the two anonymous reviewers and the associate editor for their helpful comments; in fact, Dr. Gerhard Kramer has brought to our attention the similarity of our upper bound for the noiseless case with that of the T-codes developed by Chang and Weldon [24], and the proof that our conjectured upper bound is a true upper bound for the noiseless case (Section 4), based on the works of Shepp and Olkin [27] and the two corresponding papers [28]-[29].

References

[1] R. Ahlswede, “Multi-way communication channels,” in Proc. 2nd Int. Symp. Information Theory, Tsahkadsor, Armenian SSR, pp. 103-135, 1971.
[2] R. Ahlswede, “The capacity region of a channel with two senders and two receivers,” Ann. Prob., vol. 2, pp. 805-814, Oct. 1974.
[3] H. Liao, “Multiple access channels,” Ph.D. thesis, Department of Electrical Engineering, University of Hawaii, Honolulu, 1972.
[4] T. Cover, “Some advances in broadcast channels,” Advances in Communication Systems, vol. 4. New York: Academic, pp. 229-260, 1975.
[5] A. D. Wyner, “Recent results in the Shannon theory,” IEEE Trans. Inform. Theory, vol. IT-20, Jan. 1974.
[6] T. Cover and J. Thomas, Elements of Information Theory, second ed., John Wiley & Sons, 2006.
[7] S. Verdu, “Capacity region of Gaussian CDMA channels: The symbol synchronous case,” in Proc. of the Allerton Conf. on Commun., Control, and Computing, Monticello, IL, USA, Oct. 1986.
[8] S. Verdu, “The capacity region of the symbol-asynchronous Gaussian multiple-access channel,” IEEE Trans. Inform. Theory, vol. 35, pp. 733–751, July 1989.
[9] S. Verdu, Multiuser Detection, Cambridge University Press, 1998.
[10] S. Verdu and S. Shamai (Shitz), “Spectral efficiency of CDMA with random spreading,” IEEE Trans. Inform. Theory, vol. 45, no. 2, pp. 622–640, 1999.
[11] D. N. C. Tse and S. Verdu, “Optimum asymptotic multiuser efficiency of randomly spread CDMA,” IEEE Trans. Inform. Theory, vol. 46, no. 7, pp. 2718–2722, 2000.
[12] T.
Tanaka, “A statistical-mechanics approach to large-system analysis of CDMA multiuser detectors,” IEEE Trans. Inform. Theory, vol. 48, no. 11, pp. 2888–2910, Nov. 2002.
[13] D. Guo and S. Verdu, “Randomly spread CDMA: Asymptotics via statistical physics,” IEEE Trans. Inform. Theory, vol. 51, no. 6, pp. 1983–2010, 2005.
[14] A. Montanari and D. Tse, “Analysis of belief propagation for non-linear problems: The example of CDMA (or: How to prove Tanaka’s formula),” in Proc. of the IEEE Inform. Theory Workshop, Punta del Este, Uruguay, Mar. 13–17, 2006.
[15] S. B. Korada and N. Macris, “On the concentration of the capacity for a code division multiple access system,” in Proc. of the IEEE Int. Symposium on Inform. Theory, Nice, France, pp. 2801–2805, June 2007.
[16] S. B. Korada and N. Macris, “Tight bounds on the capacity of binary input random CDMA systems,” arXiv:0803.1454v1 [cs.IT], March 2008.
[17] P. Pad, F. Marvasti, K. Alishahi and S. Akbari, “Errorless codes for over-loaded synchronous CDMA systems and evaluation of channel capacity bounds,” in Proc. of Int. Symp. Information Theory (ISIT’2008), Toronto, June 2008.
[18] M. Rupf and J. L. Massey, “Optimum sequence multisets for synchronous code-division multiple-access channels,” IEEE Trans. Inf. Theory, vol. 40, pp. 1261–1266, July 1994.
[19] P. Viswanath and V. Anantharam, “Optimal sequences and sum capacity of synchronous CDMA systems,” IEEE Trans. Inf. Theory, vol. 45, pp. 1984–1991, Sept. 1999.
[20] S.P. Ponnaluri and T. Guess, “Effects of spreading and training on capacity in overloaded CDMA,” IEEE Trans. Comm., vol. 54, pp. 523-526, Apr. 2008.
[21] M. Medard, “The effect upon channel capacity in wireless communications of perfect and imperfect knowledge of the channel,” IEEE Trans. Inf. Theory, vol. 46, pp. 933–946, May 2000.
[22] B. Hassibi and B. Hochwald, “How much training is needed in multiple antenna wireless links?,” IEEE Trans. Inf. Theory, vol. 49, pp. 951–963, Apr.
2003.
[23] J. K. Wolf, “Coding techniques for multiple access communication channels,” in New Concepts in Multi-User Communication, J. K. Skwirzynski, Ed. Alphen aan den Rijn: Sijthoff & Noordhoff, pp. 83-103, 1981.
[24] S.-C. Chang and E. J. Weldon, Jr., “Coding for T-user multiple-access channels,” IEEE Trans. Inf. Theory, vol. 25, no. 6, Nov. 1979.
[25] S.R.S. Varadhan, “Asymptotic probabilities and differential equations,” Comm. Pure Appl. Math., vol. 19, pp. 261-286, 1966.
[26] T. Tanaka, “Replica analysis of performance loss due to separation of detection and decoding in CDMA channels,” in Proc. of Int. Symp. Information Theory (ISIT), June 2006.
[27] A.W. Marshall and I. Olkin, Inequalities: Theory of Majorization and Its Applications, Mathematics in Science and Engineering, vol. 143, Academic Press (Theorem E.2, p. 406).
[28] P. Harremoës, “Binomial and Poisson distributions as maximum entropy distributions,” IEEE Trans. on Information Theory, vol. 47, no. 5, pp. 2039-2041, July 2001.
[29] E. Ordentlich, “Maximizing the entropy of the sum of bounded independent random variables,” IEEE Trans. on Information Theory, vol. 52, no. 5, pp. 2176-2181, May 2006.
[30] P. Pad, F. Marvasti, K. Alishahi and S. Akbari, “A Class of Errorless Codes for Overloaded Synchronous Wireless and Optical CDMA Systems,” accepted for publication, IEE Trans. IEEE.

Biographies

Dr. Kasra Alishahi received the B.S., M.S., and Ph.D. degrees, all from the Department of Mathematical Sciences, Sharif University of Technology, Tehran, Iran, in 2000, 2002, and 2008, respectively. He is currently an Assistant Professor with Sharif University of Technology. His research interests are stochastic processes and stochastic geometry. Dr. Alishahi was a recipient of a gold medal in the International Mathematical Olympiad in 1998.

Dr. Farokh Marvasti (S’72–M’74–SM’83) received the B.S., M.S., and Ph.D.
degrees, all from Rensselaer Polytechnic Institute, Troy, NY, in 1970, 1971, and 1973, respectively. He has worked, consulted, and taught in various industries and academic institutions since 1972, among them Bell Labs, University of California Davis, Illinois Institute of Technology, University of London, and King’s College. He is currently a professor with Sharif University of Technology, Tehran, Iran, and the Director of the Advanced Communications Research Institute (ACRI). He has approximately 60 journal publications and has written several reference books. His latest book is Nonuniform Sampling: Theory and Practice (New York: Kluwer, 2001). Dr. Marvasti was one of the Editors and Associate Editors of the IEEE TRANSACTIONS ON COMMUNICATIONS AND SIGNAL PROCESSING from 1990 to 1997. He is also a Guest Editor of the Special Issue on Nonuniform Sampling for the Sampling Theory and Signal and Image Processing Journal.

Mr. Vahid Aref (S’07) was born in 1984. He received the B.S. and M.S. degrees, both from the Department of Electrical Engineering, Sharif University of Technology, Tehran, Iran, in 2006 and 2008, respectively. He is planning to continue his Ph.D. at EPFL in Lausanne, Switzerland, in September 2009. Mr. Aref received a silver medal in the National Physics Olympiad competition in 2001.

Mr. Pedram Pad (S’03) was born in Iran in 1986. He is a double-major B.S. degree student of electrical engineering and pure mathematics at Sharif University of Technology, Tehran, Iran. He is also a member of the Advanced Communications Research Institute (ACRI). Mr. Pad received a gold medal in the National Mathematical Olympiad competition in 2003.
