SAFIUS - A secure and accountable filesystem over untrusted storage

We describe SAFIUS, a secure accountable file system that resides over an untrusted storage. SAFIUS provides strong security guarantees like confidentiality, integrity, prevention from rollback attacks, and accountability. SAFIUS also enables read/wr…

Authors: V Sriram, Ganesh Narayan, K Gopinath

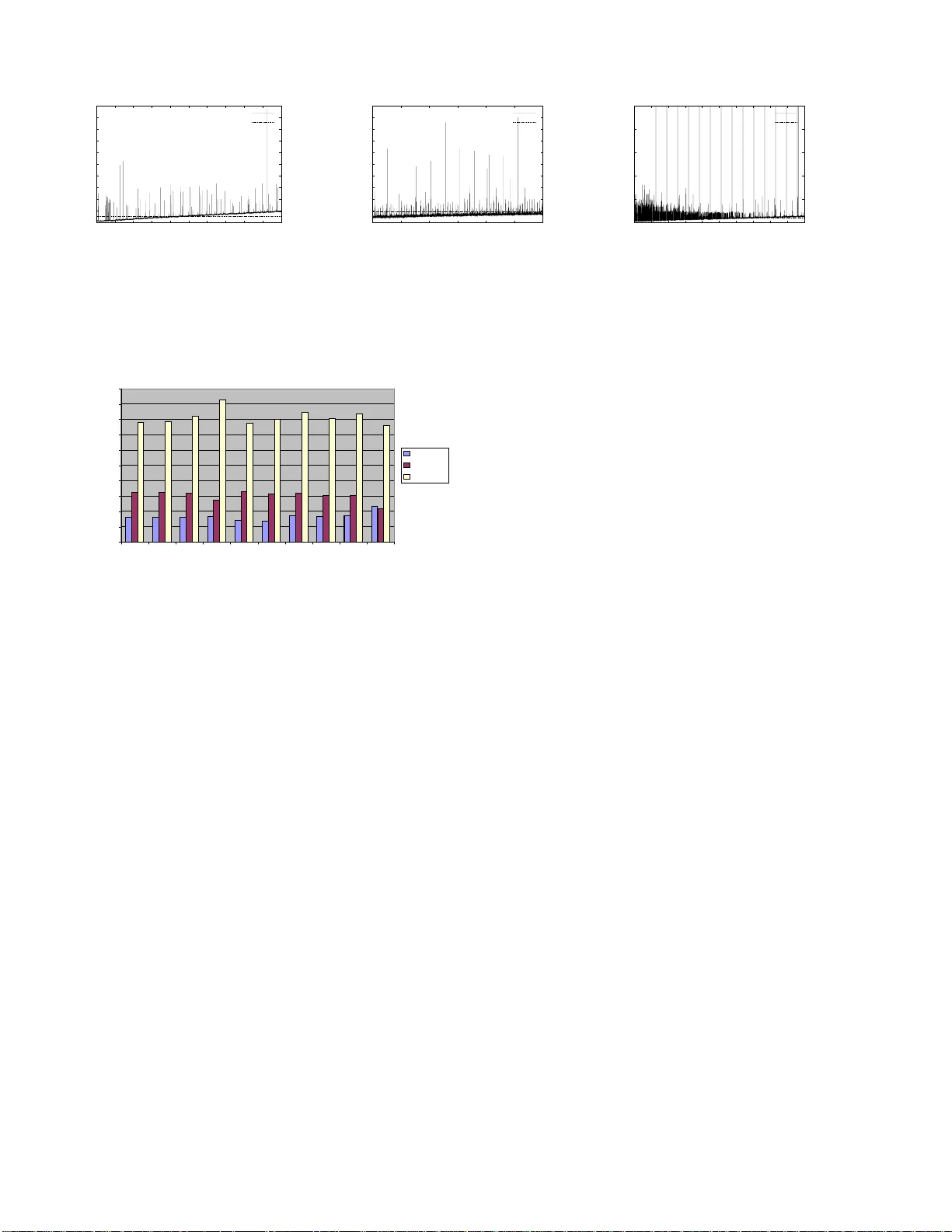

Computer Science and Automation, Indian Institute of Science, Bangalore
{vsriram, nganesh, gopi}@csa.iisc.ernet.in

Abstract: We describe SAFIUS, a secure accountable file system that resides over an untrusted storage. SAFIUS provides strong security guarantees like confidentiality, integrity, prevention from rollback attacks, and accountability. SAFIUS also enables read/write sharing of data and provides the standard UNIX-like interface for applications. To achieve accountability with good performance, it uses asynchronous signatures; to reduce the space required for storing these signatures, a novel signature pruning mechanism is used. SAFIUS has been implemented on a GNU/Linux based system modifying OpenGFS. Preliminary performance studies show that SAFIUS has a tolerable overhead for providing secure storage: while it has an overhead of about 50% of OpenGFS in data intensive workloads (due to the overhead of performing encryption/decryption in software), it is comparable (or better in some cases) to OpenGFS in metadata intensive workloads.

I. INTRODUCTION

With storage requirements growing at around 40% every year, deploying and managing enterprise storage is becoming increasingly problematic. The need for ubiquitous storage accessibility also requires a re-look at traditional storage architectures. Organizations respond to such needs by centralizing the storage management: either inside the organization, or by outsourcing the storage. Though both the options are together feasible and can co-exist, they both pose serious security hazards: the user can no longer afford to implicitly trust the storage or the storage provider/personnel with critical data.
Most systems respond to such a threat by protecting data cryptographically, ensuring confidentiality and integrity. However, conventional security measures like confidentiality and update integrity alone are not sufficient in managing long lived storage: the storage usage needs to be accounted, both in quality and quantity; also, inappropriate accesses, as specified by the user, should be disallowed, and individual accesses should ensure non-repudiation. In order for such storage to be useful, the storage accesses should also provide freshness guarantees for updates.

In this work we show that it is possible to architect such a secure and accountable file system over an untrusted storage which is administrated in situ or outsourced. We call this architecture SAFIUS: SAFIUS is designed to leverage trust onto easily manageable entities, providing secure access to data residing on untrusted storage. The critical aspect of SAFIUS that differentiates it from the rest of the solutions is that storage clients themselves are independently managed and need not mutually trust each other.

A. Data is mine, control is not!

In many enterprise setups, users of data are different from the ones who control the data: data is managed by storage administrators, who are neither producers nor consumers of the data. This requires the users to trust storage administrators without an option. Increased storage requirements could result in an increase in the number of storage administrators, and users would be forced to trust a larger number of administrators for their data. A survey (footnote 1) by Storagetek revealed that storage administration was a major cause of difficulty in storage management as data storage requirements increased.
Although outsourcing of storage requirements is currently small, with continued explosion in the data storage requirements and sophistication of technologies needed to make the storage efficient and secure, enterprises may soon outsource their storage (management) for cost and efficiency reasons. Storage service providers (SSPs) provide storage and its management as a service. Using outsourced storage or storage services would mean that entities outside an enterprise have access to (and in fact control) the enterprise's data.

Footnote 1: http://www.storagetek.com.au/company/press/survey.html

B. Need to treat storage as an untrusted entity

Hence, there is a strong need to treat storage as an untrusted entity. Systems like PFS [7], Ivy [5], SUNDR [3], Plutus [4] and TDB [9] provide a secure filesystem over an untrusted storage. Such a secure filesystem needs to provide integrity and confidentiality guarantees. But that alone is not sufficient, as the server can still disseminate old, but valid, data to the users in place of the most recent data (rollback attack [4]). Further, the server, if malicious, cannot be trusted to enforce any protection mechanisms (access control) to prevent one user from dabbling with another user's data which he is not authorized to access. Hence a malicious user in collusion with the server can mount a number of attacks on the system unless prevented.

All systems mentioned above protect the clients from the servers. But we argue that we also need to protect the server from malicious clients. If we do not do this, we may end up in a situation where the untrusted storage server gets penalized even when it is not malicious. If the system allows arbitrary clients to access the storage, then it would be difficult to control each of these clients to obey the protocol.
The clients themselves could be compromised or the users who use the clients could be malicious. Either way the untrusted storage server could be wrongly penalized. To our knowledge, most systems implicitly trust the clients and may not be useful in certain situations.

C. SAFIUS - Secure Accountable filesystem over untrusted storage

We propose SAFIUS, an architecture that provides accountability guarantees apart from providing secure access to data residing on untrusted storage. By leveraging an easily manageable trusted entity in the system, we provide secure access to a scalable amount of data (that resides on an untrusted storage) for a number of independently managed clients. The trusted entity is needed only for maintaining some global state to be shared by many clients; the bulk data path does not involve the trusted entity. SAFIUS guarantees that a party that violates the security protocol can always be identified precisely, preventing entities which obey the protocol from getting penalized. The party can be one of the clients which exports the filesystem interface to users, or the untrusted storage.

The following are the high level features of SAFIUS:
• It provides confidentiality, integrity and freshness guarantees on the data stored.
• It can identify the entities that violate the protocol.
• It provides sharing of data for reading and writing among users.
• Clients can recover independently from failures without affecting global filesystem consistency.
• It provides close to UNIX-like semantics.

The architecture is implemented in GNU/Linux. Our studies show that SAFIUS has a tolerable overhead for providing secure storage: while it has an overhead of about 50% of OpenGFS for data intensive workloads, it is comparable (or better in some cases) to OpenGFS in metadata intensive workloads.

II. DESIGN

SAFIUS provides secure access to data stored on an untrusted storage with perfect accountability guarantees by maintaining some global state in a trusted entity. In the SAFIUS system, there are fileservers (footnote 2) that provide filesystem access to clients, with the back-end storage residing on untrusted storage, henceforth referred to as the storage server. The fileservers can reach the storage servers directly. The system also has lock servers, known as l-hash servers (for lock-hash server), trusted entities that provide locking service and also hold and serve some critical metadata.

A. Security requirements

Since the filesystem is built over an untrusted data store, it is mandatory to have confidentiality, integrity and freshness guarantees for the data stored. These guarantees prevent the exposure or update of data stored on the storage server, either by unauthorized users or by collusion between unauthorized users and the storage server. Wherever there is mutual distrust between entities, protocols employed by the system should be able to identify the misbehaving entity (the entity which violates the protocol) precisely. This feature is referred to as accountability.

B. Sharing and Scalability

The system should enable easy and seamless sharing of data between users in a safe way. Users should be able to modify sharing semantics of a file on their own, without the involvement of a trusted entity. The system should also be scalable to a reasonably large number of users.

C. Failures and recoverability

The system should continue to function, tolerating failures of the fileservers, and it should be able to recover from failures of l-hash servers or storage servers. Fileservers and storage servers can fail in a byzantine manner as they are not trusted and hence can be malicious. The fileservers should recover independently from failures.

D. Threat Model

SAFIUS is based on a relaxed threat model:
• Users need not trust all the fileservers uniformly. They need to trust only those fileservers through which they access the filesystem. Even this trust is temporal and can be revoked. It is quite impractical to build a system without the user to fileserver trust (footnote 3).
• No entity trusts the storage server and vice versa. The storage server is not even trusted to store data correctly.
• The users, and hence the fileservers, need not trust each other and we assume that they do not. This assumption is important for ease of management of the fileservers. The fileservers can be independently managed and the users have the choice and responsibility to choose which fileservers to trust.
• The l-hash servers are trusted by the fileservers, but not vice versa.

Footnote 2: They are termed fileservers as these machines can potentially serve as NFS servers with looser consistency semantics to end clients.
Footnote 3: The applications which access the data would not have any assurance on the data read or written, as it passes through an untrusted operating system.

Fig. 1. Threat model (fileservers FS1, FS2, FS3; users A1, A2, B1, B2, C1, C2; storage server; l-hash server; edges marked trust or distrust).

Figure 1 illustrates an instance of this threat model. Users A1 and A2 trust the fileserver FS1, users B1 and B2 trust the fileserver FS2, and users C1 and C2 trust the fileserver FS3. User B1, apart from trusting FS2, also trusts FS1. If we consider trust domains (footnote 4) to be made of entities that trust each other either directly or transitively, then SAFIUS guarantees protection across trust domains. The trust relationship could be limited in some cases (sharing of a few files) or it could be complete (a user trusting a fileserver). This threat model provides complete freedom of administering the fileservers independently and hence eases manageability.
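The notion of a trust domain can be made concrete with a small sketch. The Python fragment below is illustrative only and is not part of SAFIUS: it treats each trust relation as an undirected edge (an assumption of this sketch), leaves out the l-hash server and the storage server, and groups the users and fileservers of Figure 1 into connected components. The names `trust_domains` and `FIGURE_1_TRUST` are hypothetical.

```python
from collections import defaultdict


def trust_domains(trust_edges):
    """Return the connected components of the trust graph, each as a sorted list."""
    graph = defaultdict(set)
    for truster, trusted in trust_edges:
        # Assumption of this sketch: a trust edge joins both endpoints
        # into the same domain, so it is stored in both directions.
        graph[truster].add(trusted)
        graph[trusted].add(truster)

    seen, domains = set(), []
    for start in sorted(graph):
        if start in seen:
            continue
        component, stack = set(), [start]
        while stack:  # iterative depth-first search
            node = stack.pop()
            if node in component:
                continue
            component.add(node)
            stack.extend(graph[node] - component)
        seen |= component
        domains.append(sorted(component))
    return domains


# Trust relations described for Figure 1 (user -> fileserver).
FIGURE_1_TRUST = [
    ("A1", "FS1"), ("A2", "FS1"),
    ("B1", "FS2"), ("B2", "FS2"),
    ("C1", "FS3"), ("C2", "FS3"),
    ("B1", "FS1"),  # B1 trusts FS1 in addition to FS2
]

for domain in trust_domains(FIGURE_1_TRUST):
    print(domain)
```

Under these assumptions, the extra trust that B1 places in FS1 merges the users and fileservers around FS1 and FS2 into one domain, while C1, C2 and FS3 form a separate one.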
Footnote 4: If we treat the entities in the system as nodes of a graph, with an edge between i and j if i trusts j, then each connected component of the graph forms a trust domain.

III. ARCHITECTURE

The block diagram of the SAFIUS architecture is shown in figure 2. Every fileserver in the system has a filesystem module that provides the VFS interface to the applications, a volume manager through which the filesystem talks to the storage server, and a lock client module that interacts with the l-hash server for obtaining, releasing, upgrading, or downgrading locks. The l-hash server, apart from serving lock requests, also distributes the hash of inodes. The l-hash server also has the filesystem module, the volume manager module and a specialized version of the lock client module, and can be used like any other fileserver in the system. The lock client modules do not interact directly with each other, as they do not have mutual trust. The lock clients interact transitively through the l-hash server, which validates the requests. The fileservers can fetch the hash of an inode from the trusted l-hash server and hence fetch any file block with integrity guarantees. In figure 2, the thick lines represent the bulk data path and the thin lines the metadata path. This model honours the trust assumptions stated earlier and can scale well because each fileserver talks to the block storage server directly.

Fig. 2. SAFIUS architecture (Fileserver 1 and Fileserver 2, each with FS, volume manager and lock client modules; storage server; l-hash server).

A. Block addresses, filegroups and inode-table in SAFIUS

The blocks are addressed by their content hashes, similar to systems like SFS-RO [1]. This gives a write-once property: blocks cannot be overwritten without changing the pointer to the block. SAFIUS currently uses SHA-1 as the content hash and assumes that SHA-1 collisions do not happen.
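Content-hash addressing can be sketched in a few lines. The fragment below is a minimal illustration, not the SAFIUS storage server: an in-memory dictionary stands in for the untrusted store, and the class name `ContentAddressedStore` and its methods are hypothetical. It shows why a block cannot be changed without changing its pointer, and how a reader detects a tampered block.

```python
import hashlib


class ContentAddressedStore:
    """Toy block store in which a block's address is the SHA-1 of its content."""

    def __init__(self):
        self._blocks = {}  # address (20-byte SHA-1 digest) -> block content

    def store(self, data: bytes) -> bytes:
        address = hashlib.sha1(data).digest()
        self._blocks[address] = data
        return address

    def load(self, address: bytes) -> bytes:
        data = self._blocks[address]
        # The reader re-hashes what it received; the store is not trusted.
        if hashlib.sha1(data).digest() != address:
            raise ValueError("integrity check failed: block does not match its address")
        return data


store = ContentAddressedStore()
old_pointer = store.store(b"version 1 of the block")
new_pointer = store.store(b"version 2 of the block")

# Updating the content yields a different address, so the parent pointer must change.
assert old_pointer != new_pointer
assert store.load(old_pointer) == b"version 1 of the block"
```

Because the parent holds the hash of its child, verifying the top-level pointer is enough to verify everything reachable from it, which is the property the inode hash relies on.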
SAFIUS uses the concept of filegroups [4] to reduce the number of cryptographic keys that need to be maintained. Since "block numbers" are content hashes, fetching the correct inode block would ensure that the file data is correct. SAFIUS guarantees the integrity of the inode block by storing the hash of the inode block in an inode hash table, i-tbl. Each tuple of i-tbl is called an idata, and consists of the inode's hash and an incarnation number. i-tbl is stored in the untrusted storage server; its integrity is guaranteed by storing the hash of the i-tbl's inode block in local stable storage in the l-hash server.

B. On-Disk structures: Inode and directory entry

Inode: The inode of a file contains pointers to data blocks either directly or through multiple levels of indirection, apart from other meta information found in standard UNIX filesystems. The block pointers are SHA-1 hashes of the blocks. These apart, it also contains a 4 byte filegroup id that points to the relevant key information to encrypt/decrypt the blocks of this file. The hash of an inode corresponds to the current version of the file. If a file is updated, then one of its leaf data blocks changes, and hence its intermediate metablocks (as they hold a pointer to this leaf block) and ultimately the inode block change (this is similar to what happens in some log structured filesystems, where writes are not done in place, like WAFL [2]). Thus, updating a file can be seen as moving from one version of the file to another, with the version switch happening at the point in time when the file's idata is updated in the i-tbl.

Directory entry: Directory entries in SAFIUS are similar to the directory entries in traditional filesystems. They contain a name and the inode number corresponding to the name.

C. Storage Server

The granularity at which the storage server serves data is variable sized blocks.
The storage server supports three basic operations, namely load, store and free of blocks. A block can be stored multiple times, i.e. clients can issue any number of store requests to the same block, and the block has to be freed that many times before the physical block can be reused at the server. To prevent one user from freeing a block belonging to another user, the storage server maintains a per inode number reference count on each of the stored blocks. Each block contains a list of inode numbers and their reference counts. Architectures like SUNDR [3] maintain a per user reference count for the blocks. Having a per user reference count defeats the possibility of seamless sharing, which is one of our design goals. For write sharing a file between two users A and B in SUNDR, the users A and B must belong to a group G and the file must be write shareable in the group G. This group G has to be created and its public key needs to be distributed by a trusted entity. This restriction is due to the per user reference count on the blocks and a per user table mapping inode numbers to their hashes. Let users A and B write share a file f in SUNDR. If B modifies a block k, stored earlier by A, to l, then B cannot free k, as it had not stored it. SAFIUS has a per inode reference count on blocks, and the storage server does the necessary access control on the reference count updates by looking up the filegroup information for sharing information. The trusted l-hash server ratifies the access control enforced by the storage server.

The storage server authenticates the user (through public key mechanisms) who performs the store or free operation. If the uid of the user performing the store or free operation is the same as the owner of the inode, then the operation is valid.
If this is not the case, then the storage server has to verify whether the current uid has enough permissions to write to the file. If this check is not enforced, then an arbitrary user can free the blocks belonging to files for which he has no write access. The storage server achieves this by maintaining a cache of inode numbers and their corresponding filegroup ids. This cache is populated and maintained with the help of storage server. This enables seamless write sharing in SAFIUS.

D. l-hash server: i-tbl, filegroup tree

The l-hash server provides the basic locking service, and stores and distributes the idata of inodes to/from the i-tbl (footnote 5). The l-hash server also maintains a map of inode number to filegroup id information in a fgrp (footnote 6) (filegroup) tree that contains the filegroup data in the leaf blocks of the tree. In addition, there is a persistent 64 bit monotonically increasing fgrp incarnation number, a global count that indicates the number of changes made to file sharing attributes. The root of the filegroup Merkle tree and the fgrp incarnation number are stored locally in the l-hash server. The root of the fgrp tree is hashed with the fgrp incarnation number to get fgrphash.

E. Volume Manager

The volume manager does the job of translating the read, write, and block free requests from the fileserver to load, store or free operations that can be issued to the storage server. The volume manager exports the standard block interface to the filesystem module, but expects the filesystem module to pass some additional information, like the hash of an existing block (for reading and freeing) and the filegroup id of the block (for encryption or decryption).

F. Encryption and hashing

The blocks are decrypted and encrypted by the volume manager as they enter and leave the fileserver machine, respectively. On a write request the block is encrypted, hashed and stored.
On a read request the blocks are fetched from the storage server, checked for integrity (by comparing the block's hash with that of its pointer), decrypted and handed over to the upper layer. The choice of doing the encryption and decryption at the volume manager layer was made to simplify the filesystem implementation and for performance reasons: to delay encryption and avoid repeated decryptions of the same block.

Footnote 5: i-tbl is the persistent table indexed by inode number; it contains the idata corresponding to an inode.
Footnote 6: It can be realized as a file in SAFIUS.

G. Need for non-repudiation

Since the volume manager and the storage server are mutually distrustful, we need to protect each of them from the other party's malicious actions:
1. Load misses: The volume manager requests a block to be loaded but the storage server replies saying that the block is not found. It could be that the fileserver is lying (it did not store the block at all) or that the storage server is lying.
2. Unsolicited stores: A block would be accounted in a particular user's quota, but the user can claim that he never stored the block.

Fig. 3. RSA signing over various block sizes (x-axis: size of buffer; y-axis: time consumed in milliseconds; series: sha1, rsa-sign, rsa-verify).

The first case is more serious as there is potential data loss. Load operations do not alter the state of stored data and the fileserver would require the necessary key to decrypt it. However, for obvious reasons, both store and free operations have to be non-repudiating. We achieve this by tagging each store and free operation with a signature. Let $D_u = \{blknum, ino, uid, op, nonce, count\}$. For a store or free operation, the volume manager sends $\{D_u, \{D_u\}_{K_u^{-1}}\}$ as the signature.
Here blknum refers to the hash of the block that is to be stored or freed, ino refers to the inode number to which the block belongs, uid refers to the user id of the user who is performing the operation, nonce is a random number that is unique across sessions and is established with the storage server, count is the count of the current operation in this session, and $K_u^{-1}$ is the private key of the user. $\{D_u\}_{K_u^{-1}}$ is $D_u$ signed by the private key of the user. The count is incremented on every store or free operation. The nonce distinguishes two stores or frees to the same block which happen in two different sessions, while count distinguishes two stores or frees to the same block in the same session, hence allowing any number of retransmissions. This signature captures the current state of the operation in the volume manager. The signature is referred to as the request signature.

Fig. 4. Sequence of events on a write (volume manager and storage server: 1. write local log; 2. log op/data; 3. Store(B, X, ino, uid)).

The storage server receives the signature, validates the count, uid and nonce, and verifies the signature. It follows the protocol described earlier to store or free the block. On a successful operation, it prepares and sends a reply signature. Let $D_{ss} = \{D_u, fgrphash\}$. The storage server sends $\{D_{ss}, \{D_{ss}\}_{K_{ss}^{-1}}\}$ to the volume manager. $D_u$ is the same as what the storage server received from the volume manager. $K_{ss}^{-1}$ refers to the private key of the storage server. The volume manager verifies that the $D_u$ it receives from the storage server is the same as the one it had sent and verifies the signature. The signature returned to the volume manager is referred to as the grant signature.
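The exchange of request and grant signatures can be sketched as follows. This is a toy illustration under loud assumptions, not the SAFIUS implementation: it uses unpadded textbook RSA over small Mersenne primes (insecure, chosen only so that the example needs nothing beyond the Python 3.8+ standard library), serializes records as JSON, and skips the checks on count, uid and nonce that the storage server performs. All function names, field values and the placeholder `fgrphash` are hypothetical.

```python
import hashlib
import json


def make_toy_keypair(p, q, e=65537):
    """Textbook RSA key pair from two primes. Toy only: no padding, tiny keys."""
    n, phi = p * q, (p - 1) * (q - 1)
    return (n, e), (n, pow(e, -1, phi))  # (public key, private key)


def digest(record):
    """SHA-1 of a canonical JSON encoding of the record, as an integer."""
    payload = json.dumps(record, sort_keys=True).encode()
    return int.from_bytes(hashlib.sha1(payload).digest(), "big")


def sign(record, private_key):
    n, d = private_key
    return pow(digest(record), d, n)


def verify(record, signature, public_key):
    n, e = public_key
    return pow(signature, e, n) == digest(record)


# Mersenne primes keep the example short; real keys would be far larger.
USER_PUB, USER_PRIV = make_toy_keypair(2**127 - 1, 2**89 - 1)
SS_PUB, SS_PRIV = make_toy_keypair(2**107 - 1, 2**61 - 1)


def storage_server_grant(d_u, request_sig, fgrphash):
    """Storage server side: check the request signature, then sign D_ss."""
    if not verify(d_u, request_sig, USER_PUB):
        raise ValueError("bad request signature")
    d_ss = {"d_u": d_u, "fgrphash": fgrphash}
    return d_ss, sign(d_ss, SS_PRIV)


def volume_manager_accepts(d_u, d_ss, grant_sig):
    """Volume manager side: D_u must be echoed unchanged and the grant must verify."""
    return d_ss["d_u"] == d_u and verify(d_ss, grant_sig, SS_PUB)


# D_u = {blknum, ino, uid, op, nonce, count}, with made-up values.
d_u = {"blknum": hashlib.sha1(b"encrypted block").hexdigest(),
       "ino": 42, "uid": 1000, "op": "store", "nonce": 918273, "count": 1}
request_sig = sign(d_u, USER_PRIV)
d_ss, grant_sig = storage_server_grant(d_u, request_sig, fgrphash="0" * 40)
assert volume_manager_accepts(d_u, d_ss, grant_sig)
```

After the exchange each side holds a signature made with the other side's private key, which is what lets either party later prove what the other agreed to.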
The grant signature prevents the storage server from denying the stores made to a block, and in case there were free operations on the block that resulted in the block being removed, the request signature of the free operation would defend the storage server. Unsolicited stores are eliminated as the storage server will not have request signatures for those blocks. Hence, assuming that the RSA signatures are not forgeable, the protocol achieves non-repudiation and hence provides perfect accountability in SAFIUS.

H. Asynchronous signing

The protocol described above has a huge performance overhead: two signature generations and two verifications in the path of a store or a free operation. Since the signature is generated on the hash of a block rather than the block itself, the time taken for actual signature generation, up to a certain block size, masks the time taken for generating the SHA-1 hash of the block. Figure 3 illustrates this. The amount of time taken for signing a 32 byte block and the amount of time taken for signing a 16KB block are comparable. However, with larger block sizes, the SHA-1 cost shows up and the signature generation cost increases linearly, as can be seen for block sizes bigger than 16KB.

Fig. 5. Signature exchange (volume manager, storage server and l-hash server: 1. sig bunch, request signature; 2. grant signature; 3. store grant signature).

Instead of signing every operation, the protocol signs groups of operations. The store and the free operations do not have signing or verification in their code path, and the cost of the signing is amortized among a number of store and free operations. Let $B_u = \{blknum, ino, op, nonce, count\}$. The fields in this structure are the same as those in $D_u$. The uid field is not included in $B_u$ as we group only operations belonging to a particular user together, and it is specified in a header for the signature block.
After a threshold number of operations, or after a timeout by default, the volume manager packs blocks of $B_u$s (footnote 7) into a block $BD_u$. The block $BD_u$ has a header $H_u = \{uid, count\}$, with uid referring to the uid of the user whose operations are being currently bunched and signed, and count the number of operations in the current set. Let $BD_u$ be defined as $\{H_u, B_u, B_u', B_u'', B_u''', \ldots\}$. $BD_u$ is signed with the user's private key and $\{BD_u, \{BD_u\}_{K_u^{-1}}\}$ is sent to the storage server as the request signature. The storage server verifies that the operations specified by the $B_u$s are all valid (they did happen) and verifies the signature on $BD_u$. If the operations are valid, then the storage server generates a block $BD_{ss} = \{BD_u, fgrphash\}$ and signs it using its private key. It sends $\{BD_{ss}, \{BD_{ss}\}_{K_{ss}^{-1}}\}$ to the volume manager, which verifies that $BD_u$ is the same as the one it had sent in the request signature and then verifies the signature. It can be easily seen that signing a bunch of operations is equivalent to signing each of these operations, provided there are no SHA-1 collisions, and hence achieves non-repudiation.

Footnote 7: It contains blocks $B_u$, $B_u'$, $B_u''$.

I. Need for logging

When the operations are synchronously signed, once the store or free operation completes, the operation cannot be repudiated by the storage server. But with asynchronous signing, a write or free operation could return before the grant signature is received. If the storage server refuses to send the grant signature or if it fails, the fileserver may have to retry the operations and may also have to repeat the process of exchanging the request signature for the grant signature. We need a local log in the fileserver, where details of the current operation are logged. We log the data for a store request so that the store operation can be retried under error conditions. So, before a store or free request is sent to the server, we log $B_u$ and the uid. If the operation is a store operation, we additionally log the data too. Once the grant signature is obtained for the bunch, the log entries can be freed. Figure 4 illustrates the sequence of events that happen on a write.

Fig. 6. Signature pruning protocol (volume manager, l-hash server and storage server: 1. send signature bunch; 2. send sig bunch to local log; 3. reply OK; 4. send sig bunch; 5. send root node signature; 6. send root node signature).

J. Persistence of signatures

The request and the grant signatures should be persistent. If they were not persistent, we could not identify which entity violated the protocol. The volume manager sends the grant signature and $BD_{ss}$ to the l-hash server. It is the responsibility of the l-hash server to preserve the signature. Figure 5 illustrates this.

K. Signature pruning

The signatures that need to be preserved at the l-hash server are on a per operation basis. However, the number of signatures generated is proportional to the number of store/free requests processed. A malicious fileserver can repeatedly do a store and free to the same block to increase the number of signatures generated. It is not possible to store all these signatures as is. SAFIUS has a pruning protocol, executed between the l-hash server and the storage server, to ensure that the amount of space required to provide accountability is a function of the number of blocks used, rather than the number of operations.

In this pruning protocol, the storage server and the l-hash server agree upon per uid reference counts (footnote 8) on every stored block in the system. A store would increase the reference count and a free would decrement it. Each of these references must have an associated request and grant signature pair.
If the l-hash server and the storage server agree on these reference counts, then we can safely discard all the request and grant signatures corresponding to this block. To achieve this, we maintain a refcnt tree, a Merkle tree, in both the l-hash server and the storage server. The leaf blocks of this refcnt tree store the block numbers and the per uid reference counts associated with the block (only if at least one reference count is non-zero). If the root block of the refcnt tree is the same in both the l-hash server and the storage server, then both parties must have the same reference counts on the leaf blocks. Figure 6 illustrates the signature pruning protocol. Since the storage server has signed the root of the tree that it had generated, there cannot be a load miss for a valid block from the storage server side. The refcnt tree in the l-hash server helps provide accountability. To save some space, the refcnt tree does not store the reference count map for all blocks. It has a table of unique reference count entries (mostly blocks owned by one user only) and the refcnt tree's leaf blocks merely have a pointer to this table.

Footnote 8: This is different from the per inode reference count maintained by the storage server.

L. The filesystem module

The filesystem module provides the standard UNIX-like interface for the applications, so that applications need not be re-written. However, owing to its relaxed threat model, the filesystem has the following restrictions:
• Distributed filesystems like Frangipani [8] and GFS [6] have a notion of a per node log, which is in a universally accessible location. Any node in the cluster can replay the log. In the threat model that we have chosen, the fileservers do not trust each other; so it is not possible for one fileserver to replay the log of another fileserver to restore filesystem consistency.
• Traditional filesystems have a notion of consistency in which each block in the system is either in use or free. In case of SAFIUS, this notion of consistency is tough to achieve.
• Given our relaxed threat model, it is the responsibility of the fileservers to honour the filesystem structures. If they do not, there is a possibility of filesystem inconsistency. However, the SAFIUS architecture guarantees complete isolation of the effects of the misbehaving entity to its own trust domain.

M. Read/Write control flow

The fileservers get the root directory inode's idata during mount time. Subsequent file or directory lookups are done in the same way as in standard UNIX filesystems.

Reads: The inode's idata fetched from the l-hash server is the only piece of metadata that the fileserver needs to obtain from the l-hash server. The filesystem module can fetch the blocks it wants from the storage server directly by issuing a read to the volume manager. During the read call, when the fileserver requests a shared lock on the file's inode, the l-hash server, apart from granting the lock, also sends the idata of the inode. Using this idata the fileserver can fetch the inode block and hence the appropriate intermediate blocks and finally the leaf data block, which contains the offset requested.

Writes: Write operations from the fileserver usually proceed by first obtaining an exclusive lock on the inode. While granting the exclusive lock, the l-hash server also sends the latest hash of the inode as a part of idata. After the update of the necessary blocks including the inode block, the hash of the new inode block corresponds to the new version of the file. As long as the idata in the l-hash server is not updated with this, the file is still in the old version. When the new idata corresponding to this file (hence inode) is updated in the i-tbl, the file moves to a new version.

The l-hash server makes sure that the current user has enough permissions to update the inode's hash. Now the old data blocks and metablocks that have been replaced by new ones in the new version of the file have to be freed. The write is not visible to other fileservers until the inode's idata is updated in the l-hash server. Since this is done before releasing the exclusive lock, any intermediate reads to the file would have to wait.

N. Logging

Journaling is used by filesystems to speed up the task of restoring the consistency of the filesystem after a crash. Many filesystems use a redo log for logging their metadata changes. During recovery after a crash, the log data is replayed to restore consistency. In SAFIUS, pending updates to the filesystem at the time of a crash do not affect the consistency of the filesystem as long as the fileservers do not free any block belonging to the previous version of the file and the inode's idata is not updated. When the inode's idata is updated in the l-hash server, the file moves to the next version and all subsequent accesses will see the new version of the file. The overwritten blocks have to be freed when the idata update in the l-hash server is successful, and the new blocks that were written should be freed if the idata update failed for some reason. SAFIUS uses an undo-only operation log to achieve this.

O. Store inode data protocol

To ensure that the system is consistent, the idata of an inode in the i-tbl of the l-hash server has to be updated atomically, i.e. the inode's idata has to be either in the old state or in the new state, and the fileserver that is performing the update should be able to know whether the update sent to the l-hash server has succeeded or not. Fileservers take an exclusive lock on the file when it is opened for writing.
After flushing the modified blocks of the file and before dropping the lock it holds, the fileserver executes the store inode data protocol with the l-hash server to ensure consistency. The store inode data protocol is begun by the fileserver sending the count and the list of inodes and their idata to the l-hash server. The l-hash server stores the inodes' new idata in the i-tbl atomically (either all of these inodes' hashes are updated or none of them are updated), employing a local log. It also remembers the last txid received as a part of the store inode data protocol from each fileserver. After receiving a reply from the l-hash server, the fileserver writes a commit record to the log and commits the transaction, after which the blocks that are to be freed are queued for freeing and log space is reclaimed.

[Fig. 7. Store inode data protocol. Message sequence between the fileserver (filesystem module, lock client module, local log) and the l-hash server (local log): 1. count, txid, inodes, idata; 2. log data; 3. replies OK; 4. commit Tx; 5. write itbl; 6. itbl write committed.]

The l-hash server remembers the latest txid from the fileservers to help the fileservers know if their last execution of store inode data had succeeded. If the fileserver had crashed immediately after sending the inode's hash, it has no way of knowing whether the l-hash server received the data and had updated the i-tbl. If it had updated the i-tbl, the transaction has to be committed and the blocks meant for freeing need to be freed. If it is not the case, then the transaction has to be aborted and the blocks written as a part of that transaction have to be freed. On recovery, the fileserver contacts the l-hash server to get the last txid that had updated the i-tbl. If that txid does not have a commit record in the log, then the commit record is added now and the recovery procedure is started.
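The commit and recovery rules above can be sketched as follows. This is a minimal in-memory model under stated assumptions, not the SAFIUS implementation: the class and method names (`LHashServer`, `FileServer`, `log_tx`, `recover`) are hypothetical, logs are plain dictionaries rather than on-disk structures, and atomicity of the i-tbl update is modelled by swapping in a staged copy.

```python
class LHashServer:
    """Holds the i-tbl and the last txid applied per fileserver (hypothetical)."""

    def __init__(self):
        self.itbl = {}       # inode number -> idata
        self.last_txid = {}  # fileserver id -> txid of last applied update

    def store_inode_data(self, fs_id, txid, updates):
        # All-or-nothing: build the new table on the side, then swap it in.
        staged = dict(self.itbl)
        staged.update(updates)
        self.itbl = staged
        self.last_txid[fs_id] = txid
        return "ok"


class FileServer:
    """Keeps an undo-only log of transactions that are not yet committed."""

    def __init__(self, fs_id, lhash):
        self.fs_id = fs_id
        self.lhash = lhash
        self.log = {}    # txid -> pending undo record
        self.freed = []  # blocks queued for freeing

    def log_tx(self, txid, updates, new_blocks, old_blocks):
        self.log[txid] = {"updates": updates, "new": new_blocks, "old": old_blocks}

    def send(self, txid):
        return self.lhash.store_inode_data(self.fs_id, txid, self.log[txid]["updates"])

    def commit(self, txid):
        # Update reached the i-tbl: the overwritten (old) blocks can go.
        self.freed.extend(self.log.pop(txid)["old"])

    def abort(self, txid):
        # Update never reached the i-tbl: undo by freeing the new blocks.
        self.freed.extend(self.log.pop(txid)["new"])

    def recover(self):
        # Ask the l-hash server which txid last updated the i-tbl.
        last = self.lhash.last_txid.get(self.fs_id)
        for txid in sorted(self.log):
            if txid == last:
                self.commit(txid)
            else:
                self.abort(txid)


lhash = LHashServer()
fs = FileServer("fs1", lhash)

# Normal case: log, send, commit.
fs.log_tx(1, {10: "h1"}, new_blocks=[100], old_blocks=[])
fs.send(1)
fs.commit(1)

# Crash after the update was sent but before the commit record was written.
fs.log_tx(2, {10: "h2"}, new_blocks=[101], old_blocks=[100])
fs.send(2)
fs.recover()

# Crash before the update was sent at all.
fs.log_tx(3, {10: "h3"}, new_blocks=[102], old_blocks=[101])
fs.recover()
```

The sketch relies on the same property the text does: calls are serialized within a fileserver, so at most one transaction is pending at recovery time and comparing its txid with the one remembered by the l-hash server is enough to decide between commit and abort.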

Since all the calls to the store inode data protocol are serialized within a fileserver and the global changes are visible only on updates to the i-tbl, this protocol will ensure consistency of the filesystem. The store inode data protocol takes a list of ⟨inode number, idata⟩ pairs instead of a single ⟨inode number, idata⟩ pair. This is to ensure that dependent inodes are flushed atomically. For instance, this is useful during file creation and deletion, when the file inode is dependent on the directory inode.

P. File Creation

File creation involves obtaining a free inode number, creating a new disk inode and updating the directory entry of the parent directory with the new name-to-inode mapping. Inode numbers are generated on the fileservers autonomously without consulting any external entity. Each fileserver stores a persistent bitmap of free local inode numbers locally. This map is updated after an inode number is allocated for a new file or directory.

Q. File Deletion

Traditional UNIX systems provide a delete-on-close scheme for unlinks. To provide similar semantics in a distributed filesystem, one has to keep track of open references to a file from all the nodes, and the file is deleted by the last process, among all the nodes, that closes the file. This requires maintaining some global information regarding the open references to files. In an NFS-like environment, where the server is stateless, reading and writing to a file that is unlinked from some other node results in a stale file handle error. SAFIUS' threat model does not permit similar unlink semantics. So we define a simplified unlink semantics for file deletes in SAFIUS.
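For the inode-number allocation described under File Creation, a small sketch may help. It is illustrative only: the bit layout (machine id in the high bits, a local index in the low 32 bits), the `InodeAllocator` name and the in-memory bitmap are assumptions; the text only says that each fileserver keeps a persistent bitmap of free local inode numbers and allocates without consulting any other entity. Persistence and the user component of the inode number are omitted.

```python
LOCAL_BITS = 32  # assumed width of the per-fileserver local index
LOCAL_MASK = (1 << LOCAL_BITS) - 1


class InodeAllocator:
    """Per-fileserver allocator backed by a free/used bitmap (hypothetical)."""

    def __init__(self, machine_id, capacity=64):
        self.machine_id = machine_id
        self.used = [False] * capacity  # would be persisted in a real system

    def allocate(self):
        # Pick the lowest free local index; no other node is contacted.
        for index, in_use in enumerate(self.used):
            if not in_use:
                self.used[index] = True
                return (self.machine_id << LOCAL_BITS) | index
        raise RuntimeError("no free inode numbers on this fileserver")

    def free(self, inode_number):
        # Only the fileserver that issued a number may mark it free.
        if inode_number >> LOCAL_BITS != self.machine_id:
            raise ValueError("inode number belongs to another fileserver")
        self.used[inode_number & LOCAL_MASK] = False


a = InodeAllocator(machine_id=1)
b = InodeAllocator(machine_id=2)
x, y, z = a.allocate(), a.allocate(), b.allocate()
```

Because the machine id is part of every number in this sketch, two fileservers can never hand out the same inode number even though they never coordinate.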

Unlink in SAFIUS removes the directory entry and decrements the inode reference count, but it defers deletion of the file as long as any process on the node from which unlink was called has an open reference to the file. The last such process on that node to close the file deletes it. Subsequent reads and writes from other nodes to the file do not succeed and return a stale file handle error. There would not be any new reads and writes to the file from the node that called unlink, as the directory entry is removed and the last process that had an open reference has closed the file. These semantics honour the standard read-after-write consistency. As long as the file is not deleted, a read call following a write returns the latest contents of the file. After a file is deleted, subsequent reads and writes to the file do not succeed, and hence read-after-write consistency is preserved.

The inode numbers have to be freed for re-allocation. As mentioned earlier, inode numbers identify the user and the machine id that owns the file. If the node which unlinks the file is the same as the one which created it, then the inode number can be marked as free in the allocation bitmap. But if unlink happens on another machine, then the fact that the inode number is free has to be communicated to that machine. Since our threat model does not assume that two fileservers trust each other, the information has to be routed through the l-hash server. The l-hash server sends the freed inode numbers list to the appropriate fileserver during mount time (when the fileserver fetches the root directory inode's idata).

[Fig. 8. Implementation overview. Fileserver: application in user land; filesystem, lock client module and volume manager in kernel land. Storage server: user-land process over a filesystem/block device. l-hash server: user-land process over a filesystem.]

R. Locking in SAFIUS

SAFIUS uses the Memexp protocol of OpenGFS [6] with some minor modifications. The l-hash server ensures that the current uid has enough permissions to acquire the lock in the particular mode requested. The lock numbers and the inode numbers have a one-to-one correspondence and hence we can derive the inode number from the lock number. Using the lock number, the l-hash server obtains the filegroup id and hence the permissions. OpenGFS has a mechanism of callbacks wherein a node that needs a lock currently held by another node sends a message to that node's callback port. The node which holds the lock downgrades the lock if the lock is not in use. In SAFIUS, since the callback cannot be directly sent (the two fileservers would be mutually distrusting), the callbacks are routed through the l-hash server.

S. Lock client module

The lock module in the fileserver handles all the client-side activities of the lock protocol that was briefly described in the previous section. Apart from this, it also does the job of fetching the idata corresponding to an inode number from the l-hash server. It also executes the store inode data protocol with the l-hash server to ensure atomic updates of a list of inodes and their idata. Figure 7 illustrates the protocol. The protocol guarantees atomicity of updates to a set of inodes and their idata.

IV. IMPLEMENTATION

SAFIUS is implemented in the GNU/Linux environment. Figure 8 depicts the various modules in SAFIUS and their interaction. The base code used for the filesystem and lock server is OpenGFS-0.2 (footnote 9) and the base code used for the volume manager is GNBD-0.0.91. The Memexp lock server in OpenGFS was modified to be the l-hash server to manage locks and to store and distribute idata of the inodes. The volume manager, the filesystem module and the lock client module reside inside the kernel space, while the storage server and l-hash server are implemented as user space processes. The current implementation of SAFIUS does not have any key management scheme and keys are manually distributed. The itbl has to reside in the untrusted storage as it has to hold the idata for all the inodes in the system. Consequently, the itbl's integrity and freshness have to be guaranteed. We achieve this by storing the itbl information in a special file in the root directory of the filesystem (.itbl). The l-hash server stores the idata of this file in its local stable storage. The idata of the itbl serves as the bootstrap point for validating any file in this filesystem.

Footnote 9: http://opengfs.sourceforge.net/

Fig. 9. Store latencies (GNBD store latencies, time in microseconds)

              Async signing   Sync signing   No signing   Unmodified   FC SCSI disk
Avg (us)      4310            169683         2954         803          240
Median (us)   3952            169602         2979         820          240
Min (us)      1276            166032         705          800          210
Max (us)      194198          254672         49774        2060         11080

V. EVALUATION

The performance of SAFIUS has been evaluated with the following hardware setup. The fileserver is a Pentium III 1266 MHz machine with 896MB of physical memory. A machine with a similar configuration serves as the l-hash server, when the l-hash server and fileserver are different. A Pentium IV 1.8 GHz machine with 896MB of physical memory functions as the storage server. The storage server is on Gigabit Ethernet and the fileserver and l-hash servers are on 100Mbps Ethernet. The fileserver and l-hash servers have a log space of 700MB on a fibre channel SCSI disk. The storage server uses a file on the ext3 filesystem (over a partition on an IDE hard disk) as its store. The fileserver, storage server and the l-hash server all run the Linux kernel.

The performance numbers of SAFIUS are reported in comparison with an OpenGFS setup. A GNBD device served as the shared block device. For the OpenGFS experiments, the storage server machine hosts the GNBD server and the fileserver machine hosts the OpenGFS FS client. The l-hash server machine runs the memexp lock server of OpenGFS. Two sets of configurations are studied: one in which the l-hash server and the fileserver are the same machine and another in which the l-hash server and the fileserver are two different machines. In the OpenGFS setup the two configurations are: one in which the lock server and the FS client are on the same machine and another in which they are on two physically different machines. A thread, gfs_glockd in SAFIUS, wakes up periodically to drop the unused locks. The interval at which this thread is kicked in is used as a parameter of study. The performance of the SAFIUS configurations is reported with and without encryption/decryption. Hence, the performance numbers reported are for eight different combinations for SAFIUS and two different combinations for OpenGFS.

1. SAFIUS-I30: It is a SAFIUS setup in which the l-hash server and the fileserver are the same machine. The gfs_glockd interval is 30 seconds.
2. SAFIUS-I10: It is a SAFIUS setup in which the l-hash server and the fileserver are the same machine. The gfs_glockd interval is 10 seconds.
3. SAFIUS-D30: It is a SAFIUS setup in which the l-hash server and the fileserver are different machines. The gfs_glockd interval is 30 seconds.
4. SAFIUS-D10: It is a SAFIUS setup in which the l-hash server and the fileserver are different machines. The gfs_glockd interval is 10 seconds.
5. OpenGFS-I: It is an OpenGFS setup in which the memexp lock server and the filesystem run on the same machine.
6. OpenGFS-D: It is an OpenGFS setup in which the memexp lock server and the filesystem run on different machines.

SAFIUS-I30E, SAFIUS-I10E, SAFIUS-D30E and SAFIUS-D10E are the corresponding SAFIUS configurations with encryption/decryption.

A. Performance of Volume manager - Microbenchmark

As described in Section II, the SAFIUS volume manager uses asynchronous signing to avoid signing and verification in the common store and free path. Experiments have been conducted to measure the latencies of load and store operations. An ioctl interface in the volume manager code is used for performing loads and stores from userland, bypassing the buffer cache of the kernel. A sequence of 20000 store operations is performed with data from /dev/urandom. The fileserver machine is used for issuing the stores. Figure 12 shows the plot of the latency on the Y-axis and the store operation sequence number along the X-axis. Approximately once every 1000 operations, there is a huge vertical line, signifying a latency of more than 100ms (footnote 10). This is when the signer thread kicks in to perform the signing. As observed in Section II, the latency of a signing operation is about 80ms on a Pentium IV machine. As can be observed from Figure 3, the cost of signing is constant till the block size is 32KB and grows linearly after that. This increase corresponds to the cost of SHA-1, which for smaller block sizes is dominated by the RSA exponentiation cost. The plot in Figure 12 also shows the mean and median of the latencies measured. We have also studied GNBD store latencies for various combinations. The results are reported in Fig. 9. As is to be expected, synchronous signing incurs the highest overhead, while asynchronous signing, on average, appears to be only 30% costlier compared to no-signing. The next subsection describes the performance studies conducted for the filesystem module.

Footnote 10: The Y-axis scale is trimmed; the latencies are about 170ms.

[Fig. 10. No-signing. Volume manager store latency (without signing): time consumed in milliseconds vs. iteration number.]
[Fig. 11. Sync-signing. Volume manager store latency (sync signing): time consumed in microseconds vs. iteration number.]
[Fig. 12. Async-signing. Volume manager store latency (async signing): time consumed in microseconds vs. iteration number.]

B. Filesystem Performance - Microbenchmark and Popular benchmarks

The performance of the filesystem module has been analyzed by running the Postmark (footnote 11) microbenchmark suite and compilation of OpenSSH, OpenGFS and Apache web server sources. Numbers are reported for the six configurations listed at the beginning of this section, along with the encrypted SAFIUS variants. Figure 16 shows the numbers obtained by running the Postmark suite. The Postmark benchmark has been run with the following configuration:

    set size 512 10000
    set number 1500
    set seed 2121
    set transactions 500
    set subdirectories 10
    set read 512
    set write 512
    set buffering false

Footnote 11: Benchmark from Network Appliance.

Currently, owing to the simple implementation of the storage server, logical-to-physical lookups take a lot of time and hence Postmark with bigger configurations takes a long time to complete. Hence we report Postmark results only for the smaller configurations. SAFIUS beats OpenGFS in metadata-intensive operations like create and delete. This is because creations and deletions in SAFIUS are not immediately committed (even to the log) and are committed only when the lock is dropped. With the current input configuration, reads and appends for SAFIUS-D30, SAFIUS-I30, OpenGFS-I and OpenGFS-D seem to be the same. Bigger configurations may show some difference. SAFIUS-I10 and SAFIUS-D10 seem to perform poorly for appends and reads due to flushing of data belonging to files that would anyway get deleted. For the Postmark suite, it makes no difference whether the l-hash server is on the same machine as the fileserver or on a different one, and there is not much difference between the configurations that have encryption/decryption and the ones that do not.

[Fig. 13. Apache compilation. Time in seconds for untar, configure and make across the ten configurations.]

The next three performance tests involved compilation of OpenSSH, OpenGFS and Apache web server. Three activities were performed on the source tree: untar (tar zxvf) of the source, configure and make. The time taken for each of these operations for all the ten configurations described before is reported. Figure 14 is the result of OpenSSH compilation. All SAFIUS configurations take twice the amount of time for untar compared to OpenGFS-I, while OpenGFS-D takes four times the time taken by OpenGFS-I for untar. OpenSSH compilation did not complete in SAFIUS-I10E. The time taken for make in SAFIUS-D30 and SAFIUS-D30E seems to be almost the same, while SAFIUS-I30E takes 10% more time than SAFIUS-I30. The best SAFIUS configurations (SAFIUS-D30 and SAFIUS-D30E) are within 120% of the best OpenGFS configuration (OpenGFS-D). The worst SAFIUS configurations (SAFIUS-I10E and SAFIUS-D10E) are within 200% of the worst OpenGFS configuration (OpenGFS-I). The next performance test was compilation of Apache source.
Figure 13 shows the time taken for untar, configure and make operations of Apache source compilation. SAFIUS-D30 gives the best performance for untar and OpenGFS-D gives the worst. This is probably because of reduced interference for syncing the idata writes. OpenGFS-D does the best for make and configure. Among SAFIUS configurations, SAFIUS-D10 does the best for configure and SAFIUS-I30 does the best for make. The last performance test is OpenGFS compilation. make was run from the src/fs subtree instead of the top-level source tree. Figure 15 shows the time taken for untar, configure and make operations for compiling OpenGFS. SAFIUS-I30E and SAFIUS-I10E take about 120% of the time taken by SAFIUS-I30 and SAFIUS-I10 respectively for running configure and make. The best SAFIUS configuration for running make (SAFIUS-D30) takes around 115% of the time taken by the best OpenGFS configuration (OpenGFS-I). The worst SAFIUS configuration for running make (SAFIUS-I30E) takes about 115% of the time taken by the worst OpenGFS configuration (OpenGFS-D). We can conclude, from the experiments run, that SAFIUS seems to be comparable (or sometimes better) to OpenGFS for metadata-intensive operations; for other operations it takes around 125% of the time of the best OpenGFS configuration without encryption/decryption, and around 150% with encryption/decryption.

[Fig. 14. OpenSSH compilation. Time in seconds for untar, configure and make across the ten configurations.]

VI. CONCLUSIONS & FUTURE WORK

In this work the design and implementation of a secure distributed filesystem over untrusted storage was discussed.
SAFIUS provides confidentiality, integrity, freshness and accountability guarantees, protecting the clients from malicious storage and the storage from malicious clients. SAFIUS requires that trust be placed on the lock server (l-hash server) to provide all the security guarantees; a not so unrealistic threat model. For the applications, SAFIUS is like any other filesystem; it does not require any change of interfaces and hence has no compatibility issues. SAFIUS uses the l-hash server to store and retrieve the hash codes of the inode blocks. The hash codes reside on the untrusted storage and the integrity of the system is provided with the help of a secure local storage in the l-hash server. SAFIUS is flexible; users choose which client fileservers to trust and for how long. SAFIUS provides ease of administration; the fileservers can fail and recover without affecting the consistency of the filesystem and without the involvement of another entity. With some minor modifications, SAFIUS can easily provide consistent snapshots of the filesystem (by not deleting the overwritten blocks). The performance of SAFIUS is promising given the security guarantees it provides. A detailed performance study (under heavier loads) has to be done in order to establish the consistency in performance. Possible avenues for future work are:

• Fault-tolerant distributed l-hash server: The l-hash server in SAFIUS can become a bottleneck and prevent scalability of fileservers. It would be interesting to see how the system performs when we have a fault-tolerant distributed l-hash server in place of the existing l-hash server. The distributed lock protocol should work without assuming any trust between fileservers.
• Optimizations in storage server: The current implementation of the storage server is a simple request-response protocol that serializes all the requests. It would be a performance boost to do multiple operations in parallel. This may affect the write ordering assumptions that exist in the current system.
• Utilities: Userland filesystem debug utilities and failure recovery utilities have to be written.
• Key management: SAFIUS does not have a key management scheme and has no interfaces by which the users can communicate their keys to the fileservers. This would be an essential element for the system.

The source code for SAFIUS is available on request.

[Fig. 15. OpenGFS compilation. Time in seconds for untar, configure and make across the ten configurations.]
[Fig. 16. Postmark. Number of operations per second for create, create with Tx, read, append, delete and delete with Tx across the ten configurations.]

REFERENCES

[1] Kevin Fu, M. Frans Kaashoek, and David Mazieres. Fast and secure distributed read-only file system. Computer Systems, 20(1):1–24, 2002.
[2] D. Hitz, J. Lau, and M. Malcolm. File system design for an NFS file server appliance. In Proceedings of the USENIX Winter 1994 Technical Conference, pages 235–246, San Francisco, CA, USA, 1994.
[3] Jinyuan Li, Maxwell Krohn, David Mazieres, and Dennis Shasha. Secure untrusted data repository (SUNDR). Technical report, NYU Department of Computer Science, 2003.
[4] M. Kallahalla, E. Riedel, R. Swaminathan, Q. Wang, and K. Fu. Plutus - scalable secure file sharing on untrusted storage. In Proceedings of the Second USENIX Conference on File and Storage Technologies (FAST). USENIX, March 2003.
[5] Athicha Muthitacharoen, Benjie Chen, and David Mazieres. A low-bandwidth network file system. In Symposium on Operating Systems Principles, pages 174–187, 2001.
[6] Steven R. Soltis, Thomas M. Ruwart, and Matthew T. O'Keefe. The Global File System. In Proceedings of the Fifth NASA Goddard Conference on Mass Storage Systems, pages 319–342, 1996.
[7] Christopher A. Stein, John H. Howard, and Margo I. Seltzer. Unifying file system protection. In Proc. of the USENIX Technical Conference, pages 79–90, 2001.
[8] Chandramohan A. Thekkath, Timothy Mann, and Edward K. Lee. Frangipani: A scalable distributed file system. In Symposium on Operating Systems Principles, pages 224–237, 1997.
[9] U. Maheshwari, R. Vingralek, and B. Shapiro. How to build a trusted database system on untrusted storage. In OSDI: 4th Symposium on Operating Systems Design and Implementation, 2002.
