Correcting biased observation model error in data assimilation

While the formulation of most data assimilation schemes assumes an unbiased observation model error, in real applications, model error with nontrivial biases is unavoidable. A practical example is the error in the radiative transfer model (which is u…

Authors: John Harlim, Tyrus Berry

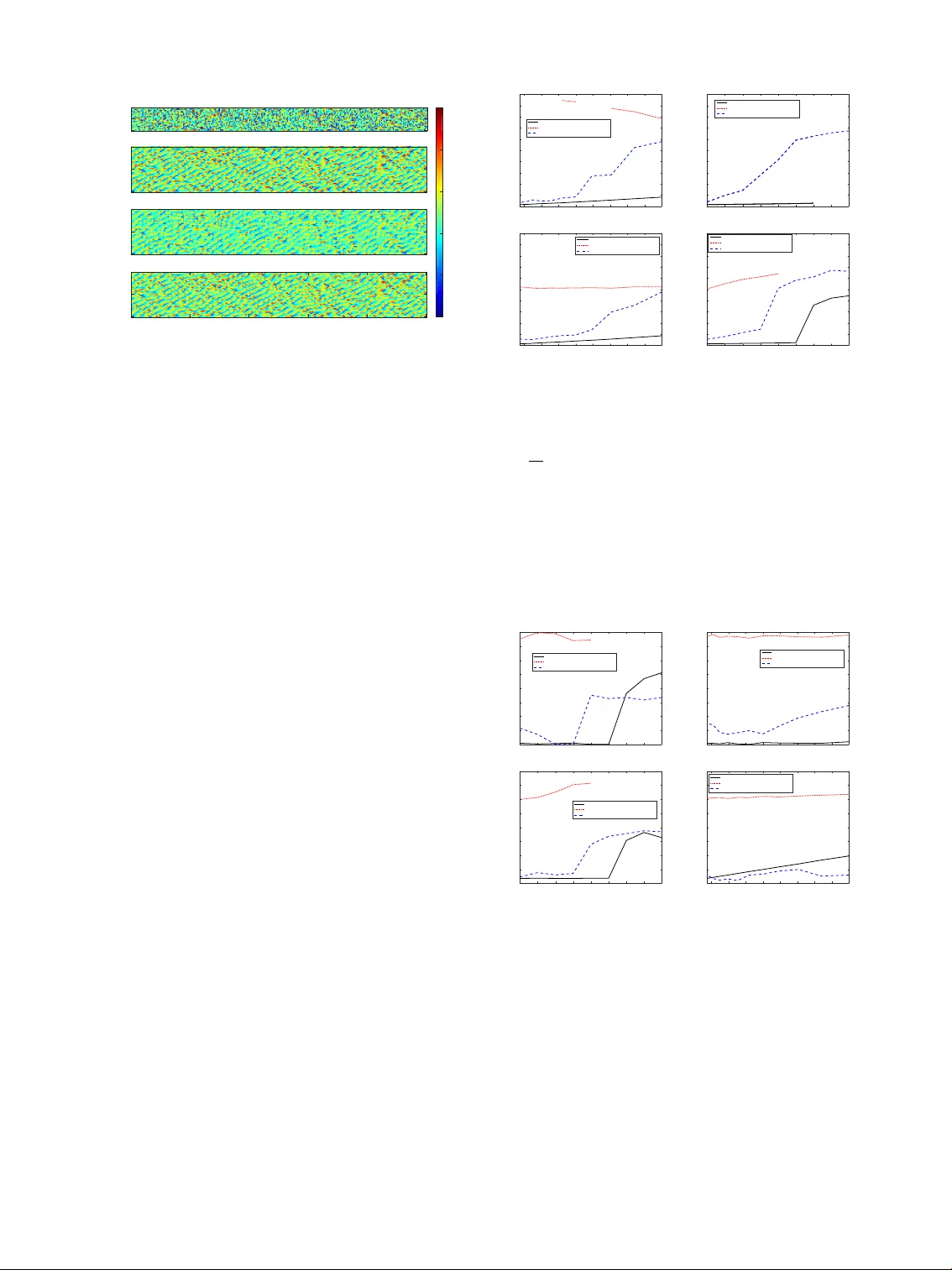

Generated using the official AMS L A T E X template—two-column layout. FOR A UTHOR USE ONL Y , NO T FOR SUBMISSION! M O N T H L Y W E A T H E R R E V I E W Correcting biased obser vation model error in data assimilation T Y RU S B E R RY Department of Mathematical Sciences, Geor ge Mason University , USA. J O H N H A R L I M ∗ Department of Mathematics and Department of Meteor ology and Atmospheric Science, the P ennsylvania State University , USA A B S TR AC T While the formulation of most data assimilation schemes assumes an unbiased observation model error , in real applications, model error with nontrivial biases is unav oidable. A practical example is the error in the radiativ e transfer model (which is used to assimilate satellite measurements) in the presence of clouds. As a consequence, man y (in fact 99%) of the cloudy observed measurements are not being used although they may contain useful information. This paper presents a novel nonparametric Bayesian scheme which is able to learn the observation model error distribution and correct the bias in incoming observations. This scheme can be used in tandem with any data assimilation forecasting system. The proposed model error estimator uses nonparametric likelihood functions constructed with data-driven basis functions based on the theory of kernel embeddings of conditional distributions developed in the machine learning community . Numerically , we show positiv e results with two examples. The first example is designed to produce a bimodality in the observation model error (typical of “cloudy” observations) by introducing obstructions to the observations which occur randomly in space and time. The second example, which is physically more realistic, is to assimilate cloudy satellite brightness temperature-like quantities, generated from a stochastic cloud model for tropical con vection and a simple radiativ e transfer model. 1. Introduction Data assimilation (see e.g., Kalnay 2003; Majda and Harlim 2012) is a sequential method to estimate the condi- tional distribution of hidden state variables x i giv en noisy observations y i through Bayes’ formula, p ( x i | y i ) ∝ p ( x i ) p ( y i | x i ) , (1) where p ( x i ) is the prior distribution of the state variables of interest and p ( y i | x i ) is the lik elihood function correspond- ing to the observation model, y i = h ( x i ) + η i at time- i . Here, h denotes an observation function and η i represents the measurement noise. In most data assimilation imple- mentations in Numerical W eather Prediction (NWP), one typically assumes that the observation model h is explicitly known. Moreov er, the tacit assumption is that the noise variables η i are independent Gaussian random variables with mean zero and a specified covariance matrix, R o . W ith these assumptions, the likelihood function is para- metrically defined as, p ( y i | x i ) = p ( y i − h ( x i )) = p ( η i ) = N ( 0 , R o ) . In NWP applications, the prior density p ( x i ) ∗ Corr esponding author addr ess: Department of Mathematics, the Pennsylvania State University , 109 McAllister Building, University Park, P A 16802-6400, USA E-mail: jharlim@psu.edu at time- i in (1) is usually represented by an ensemble of forecast solutions of a Global Circulation Model. For satellite data assimilation the observations, y i can be radiances or brightness temperatures measured by satel- lite instruments. In this particular example, the typical observation model h is the radiati ve transfer model (Liou 2002). While it is believ ed that high resolution infrared spectral radiances contain detailed information about the temperature and humidity profile, less than 1% of the AIRS satellite measurements are being used in operational data assimilation problems due to data processing/thinning for quality control purposes and the presence of clouds (Reale et al. 2008). Assimilating satellite measurement under cloudy conditions is challenging since the presence of clouds in the atmosphere induces significantly cooler radiances (which can be vie wed as large biases) relati ve to those measured under the clear-sky condition. The main challenge in this problem is to detect observation model error that can occur intermittently due to misspecification of the cloud top height, the number of clouds in a column of atmosphere, and/or the cloud fraction in the radiative transfer model of cloudy measurements (McNally 2009). Mathematically , this suggests that when an incorrect ob- servation model, ˜ h , is used in placed of the true observa- Generated using v4.3.2 of the AMS L A T E X template 1 2 M O N T H L Y W E A T H E R R E V I E W tion function, h , the observations can be written as, y i = h ( x i ) + η i ≈ ˜ h ( x i ) + b i + η i , (2) where we introduce a biased model error, b i , in addition to the measurement error η i . In this paper, we introduce a model error estimator to approximate the distribution of the error b at time- i , as- suming that the underlying observation function h is un- known. Formally , the model error estimator is a Bayesian nonparametric filter which estimates the time dependent posterior density p ( b | y i ) ∝ p ( b ) p ( y i | b ) . (3) Here, the prior density , p ( b ) , will be constructed based on the predicted observation error . The likelihood function p ( y i | b ) will be constructed nonparametrically using a his- torical time series of y and b , and no kno wledge of the true observation function is assumed. Our construction is based on a machine learning tool known as the kernel embedding of conditional distributions formulation intro- duced in Song et al. (2009, 2013). An additional nov elty of our approach is that we generalize the formula in Song et al. (2009, 2013) to data-dri ven Hilbert spaces with ba- sis functions obtained from the diffusion maps algorithm (Coifman and Lafon 2006; Berry and Harlim 2016b). This is in contrast to their original approach where the y specify the Hilbert space using a specific choice of basis functions, such as the radial basis type (Song et al. 2013). Once the prior and likelihood are specified, they are combined with (3) to form the posterior density p ( b | y i ) of the model er - ror . Finally , we use the statistics of the posterior , such as the mean and v ariance, to compensate for the error in the observation function and thereby improve the estimation of the state x i . It is important to stress that this model error estimator can be used as a subroutine in tandem with any data assimilation forecasting system based on (1). W e will demonstrate this idea with two synthetic nu- merical examples. The first example is with the Lorenz- 96 model (Lorenz 1996), where the observ ations are cor- rupted by sev ere biases at random instances and locations to mimic the bimodality of the observation error distribu- tion in real applications. The second example is with a stochastic cloud model (Khouider et al. 2010) for tropical con vection, where the observed brightness temperature- like quantities are constructed with a simple radiativ e transfer model (Liou 2002) with sev ere biases in the pres- ence of clouds. The remainder of this paper is organized as follows. In Section 2, we describe our framew ork for estimating the model error estimator , using the ensemble Kalman filter (EnKF) as an archetypal example. In Section 3, we discuss the construction of the nonparametric filter in (3) which can be combined with any primary filter (1). In partic- ular , we describe how to specify the likelihood function Prior Primary Filter − − − − − − − − − − − − → Posterior p ( x i ) p ( x i | y i ) y x Error Prior Secondary Filter − − − − − − − − − − − − → Error Posterior p ( b ) p ( b | y i ) x x Observation RKHS+T raining Data − − − − − − − − − − − − − → Lik elihood y i p ( y i | b ) F I G . 1. Diagram representing the integration of the secondary filter into an arbitrary primary filtering procedure. and the prior density in (3) by combining historical train- ing data with the current observations and primary filter estimates. In Section 4 we demonstrate our method on the two numerical examples described above and then we briefly conclude in Section 5. 2. An observation model err or estimator The k ey issue, as stated in (2), is to overcome the obser- vation model error b when we hav e no access to the true observation function h . Let us outline a general frame- work to mitigate this issue with Fig. 1. In this diagram, we refer to the data assimilation system which is used to esti- mate x at time- i in (1) as the primary filter . Since different operational NWP centers hav e their preferential methods (4D V AR, EnKF , hybrid, etc), we will design our approach in such a way that it is applicable to an y primary filtering scheme. Our strategy is to apply a secondary filter in (3) and use the resulting posterior conditional statistics to correct the observation model error in the primary filter . In particular , we will use the posterior mean statistics to correct the bi- ases and the variances to correct the additional uncertain- ties beyond the measurement error . W e should stress that the implementation of the secondary filter in this frame- work offers no additional changes to the infrastructure in the primary filter except for a simple two-way communi- cation at each assimilation step. Namely , one needs to feed the predicted observ ation error from the primary filter into the secondary filter and then feed the model error statistics from the secondary filter back into the primary filter (to correct the likelihood functions in the primary filter). The third row in the diagram in Fig. 1 clarifies that we use the current observations to construct the prior density and the likelihood function used in the secondary filter . Now let us illustrate this heuristic discussion with a concrete algo- rithm in the case where the primary filter is the ensemble Kalman filter (EnKF). M O N T H L Y W E A T H E R R E V I E W 3 W ith the EnKF , both the prior and posterior distribu- tions of the primary filter are assumed to be Gaussian and only the first two empirical moments are corrected using the Kalman filter formulas. The Kalman filter im- plicitly assumes that the observation error is unbiased, E [ y i − h ( x i )] = E [ η i ] = 0. In the i -th analysis step, an en- semble of K prior estimates x k , f i ∼ N ( ¯ x f i , P f xx , i ) , where k ∈ { 1 , ..., K } denotes the ensemble member , is transformed into an ensemble of analysis estimates x k , a i ∼ N ( ¯ x a i , P a xx , i ) . Here the superscripts f and a are to denote estimates be- fore (prior/forecast) and after (analysis) the observation y i has been assimilated, respecti vely . In each analysis step, the EnKF procedure can be summarized in three steps. First, it approximates the prior mean and cov ariance statis- tics with empirically estimated statistics defined as, ¯ x f i = 1 K K ∑ k = 1 x k , f i , P f xx , i = 1 K − 1 X i X > i + Q , (4) where X i = [ x 1 , f i − ¯ x f 1 , . . . , x K , f i − ¯ x f i ] . Second, it applies the Kalman filter formula to obtain the posterior mean and cov ariance, ¯ x a i = ¯ x f i + K i ( y i − ¯ y f i ) , P a xx , i = P f xx , i − K i P > xy , i , (5) K i = P xy , i ( P yy , i + R ) − 1 . Third, it draws an ensemble of analysis estimates x k , a i ∼ N ( ¯ x a i , P a xx , i ) . In (5), ¯ y f i = K − 1 ∑ K k = 1 y k , f i , P xy , i = ( K − 1 ) − 1 X i Y > i and P yy , i = ( K − 1 ) − 1 Y i Y > i are the empirically estimated statistics, where Y i = [ y 1 , f i − ¯ y f 1 , . . . , y K , f i − ¯ y f i ] , and y k , f i = h ( x k , f i ) are the ensemble of the predicted ob- servations. Several methods to execute (5) and to draw the analysis ensembles hav e been introduced, for exam- ple, with perturbed observations (Evensen 1994), or with square root filters (Bishop et al. 2001; Anderson 2001). Notice that this method depends on tw o parameters Q and R which represent the covariance matrices of the dynami- cal and observ ation noise. In particular , when the observa- tion model is perfect we set R = R o meaning that the only observation noise is the measurement error . W e assume that the true observation model h is un- known and we are given the imperfect observ ation model ˜ h . Define b i : = h ( x i ) − ˜ h ( x i ) as the observ ation model er - ror at the i -th time step and assume that the observation model error is biased, that is, E [ b i ] = µ b i 6 = 0 and indepen- dent to the measurement noise, η i . With this configuration, it is clear that if we consider filtering with the imperfect observation model in (2), the observ ation error is biased, E [ y i − ˜ h ( x i )] = µ b i 6 = 0. One w ay to mitigate this issue is by adjusting the obser- vation model as follo ws, y i − µ b i = ˜ h ( x i ) + ˜ b i + η i , η i ∼ N ( 0 , R ) , (6) where we define ˜ b i : = b i − µ b i as the unbiased observation model error with covariance matrix R b i : = E [ ˜ b i ˜ b > i ] . As- suming that ˜ b i and η i are independent, we can implement the EnKF with the unbiased observation model in (6) as follows, ¯ x a i = ¯ x f i + ˜ K i ( y i − µ b i − ˜ y f i ) , ˜ P a xx , i = ˜ P f xx , i − ˜ K i ˜ P > xy , i , (7) ˜ K i = ˜ P xy , i ( ˜ P yy , i + R + R b i ) − 1 . Here, ˜ y f i = K − 1 ∑ K k = 1 ˜ y k , f i , ˜ P xy , i = ( K − 1 ) − 1 X i ˜ Y > i , ˜ P yy , i = ( K − 1 ) − 1 ˜ Y i ˜ Y > i , where ˜ Y i = [ ˜ y 1 , f i − ˜ y f 1 , . . . , ˜ y K , f i − ˜ y f i ] and ˜ y k , f i = ˜ h x k , f i . In order to implement the unbiased fil- ter in (7), we need to estimate µ b i and R b i . W e propose to use the conditional statistics of the secondary filter in (3), ˆ µ b i = E [ b | y i ] and R b i = Var [ b | y i ] , as the estimators for µ b i and R b i , respectiv ely . With this goal in mind, we now explain the secondary filter . 3. The secondary filter The goal of this section is to e xplain the construction of the nonparametric filtering in (3). Since this requires vari- ous technical tools from the machine learning community , we accompan y the discussion with se veral Appendices for detailed discussions. W e assume that we are giv en only pairs of data points { ( x ` , y ` ) } N ` = 1 for training (so no knowledge of the true ob- servation function h is assumed). From these data points we compute the implied model error b ` = y ` − ˜ h ( x ` ) , where ˜ h is the giv en imperfect observation model. First, we will discuss ho w to train the likelihood function us- ing this dataset. Second, we will discuss ho w to ex- tend the likelihood function on ne w observ ations to obtain the conditional probability of b giv en new observations y i / ∈ { y ` } N ` = 1 that are not part the training dataset. Third, we will discuss how to construct a prior . Subsequently , we discuss ho w to extract the estimators µ b i and R b i that are needed in (7). a. T raining the nonpar ametric likelihood function Our goal here is to learn the likelihood function p ( y | b ) by using a training data set consisting of pairs { ( b ` , y ` ) } N ` = 1 . The outcome of this training is a matrix of size N × N , where the ( i , j ) -entry is an estimate of the con- ditional density , p ( y i | b j ) . This matrix is a discrete repre- sentation of the likelihood function e valuated at each point of the training dataset y i ∈ { y ` } N ` = 1 and b j ∈ { b ` } N ` = 1 . This matrix is a nonparametric representation of p ( y | b ) . How- ev er , since the data set could be quite large, we will nev er explicitly construct this matrix, and instead it will be rep- resented by its projection into a lower -dimensional set of basis elements. 4 M O N T H L Y W E A T H E R R E V I E W For clarity of presentation, we assume in this section that the observation is one dimensional. In order to cor- rect a multidimensional observation, the following bias correction steps are applied independently to each coor- dinate of the observation. W ith this scalar formulation, the computational cost in constructing the likelihood func- tion becomes manageable and the secondary filter is easily parallelize-able for high-dimensional observations. Our main idea is to represent the conditional density p ( y | b ) with a set of basis functions which can be learned from the training data set using the diffusion maps al- gorithm (Coifman and Lafon 2006; Berry and Harlim 2016b). First let us discuss how to construct the basis functions. Subsequently , we discuss a nonparametric rep- resentation of conditional density functions. 1 ) L E A R N I N G T H E DA TA - D R I V E N B A S I S F U N C T I O N S In a nutshell, the diffusion maps algorithm can be de- scribed as follows. Gi ven a dataset x ` ∈ M ⊂ R n with sampling density q ( x ) , defined with respect to the volume form inherited by the manifold M from the ambient space R n , the dif fusion maps algorithm is a k ernel-based method which constructs an N × N matrix L that approximates a weighted Laplacian operator , L = ∇ log ( q ) · ∇ + ∆ . The eigen vectors ~ ϕ k of the matrix L are discrete estimates of the eigenfunctions ϕ k ( x ) of the operator L which form an orthonormal basis of a weighted Hilbert space L 2 ( M , q ) . For example, if the data is uniformly distributed, q ( x ) = 1, on the unit circle M = S 1 , then the matrix L approxi- mates the Laplacian ∆ on this periodic domain. In this case, eigen vectors of L approximates eigenfunctions of the Laplacian operator on the unit circle, which are the Fourier functions that form an orthonormal basis of L 2 ( S 1 ) . Thus one can think of the dif fusion maps algorithm as a method to specify a generalized Fourier basis adapted from the data. The basis functions ϕ k ( x ) are represented nonpara- metrically by the vectors ~ ϕ k ∈ R N whose ` -th component is a discrete estimate of the eigenfunction φ k ( x ` ) , e valu- ated at training data point x ` . In our application, we apply the diffusion maps sepa- rately on the dataset b ` ∈ R and y ` ∈ R . Let q ( b ) and ˜ q ( y ) be the sampling densities of b ` and y ` , respectiv ely . Imple- menting the diffusion maps algorithm, we obtain vectors ~ ϕ k which approximate ϕ k ( b ) ∈ L 2 ( R , q ) and ~ φ k which ap- proximate φ k ( y ) ∈ L 2 ( R , ˜ q ) . In our implementation, we use the variable bandwidth kernels introduced by Berry and Harlim (2016b). W e refer to the Appendix in Berry and Harlim (2016a) for the pseudo-code of the algorithm. 2 ) A N O N P A R A M E T R I C R E P R E S E N TA T I O N O F C O N D I - T I O NA L D E N S I T Y F U N C T I O N S Let ϕ j ( b ) ∈ L 2 ( R , q ) and φ k ( y ) ∈ L 2 ( R , ˜ q ) be the basis functions approximated by the diffusion maps algorithm. For finite modes, j = 1 , . . . , M 2 , k = 1 , . . . , M 1 , we consider a nonparametric representation of the conditional density as follows, p ( y | b ) = M 1 ∑ k = 1 µ Y | b , k φ k ( y ) ˜ q ( y ) . (8) where the expansion coef ficients are defined as follows, µ Y | b , k = M 2 ∑ j = 1 ϕ j ( b )[ C Y B C − 1 BB ] k j . (9) Here matrices C Y B is M 2 × M 1 and C BB is M 1 × M 1 whose components can be approximated by Monte-Carlo av er- ages as follows, C Y B jk ≈ 1 N N ∑ ` = 1 φ j ( y ` ) ϕ k ( b ` ) (10) C BB jk ≈ 1 N N ∑ ` = 1 ϕ j ( b ` ) ϕ k ( b ` ) . (11) The equation for the expansion coef ficients in (9) is based on the theory of kernel embedding of conditional distri- bution introduced in Song et al. (2009, 2013) which we revie wed in Appendix A below . See Appendix B for the detailed proof of equations (9)-(11). From the e xpression in (9), one can see that the condi- tional density in (8) is represented as a regression in infi- nite dimensional spaces with basis functions of ϕ j ( b ) and φ k ( y ) . This representation is nonparametric in the sense that we do not assume that the density function is of par- ticular distribution. Numerically , the nonparametric rep- resentation of p ( y | b ) is giv en by an N × N matrix whose ( i , j ) th component is p ( y i | b j ) = M 1 ∑ k = 1 µ Y | b j , k φ k ( y i ) ˜ q ( y i ) , (12) where y i ∈ { y ` } N ` = 1 and the coefficients are given by (9) ev aluated at the training data b j ∈ { b ` } N ` = 1 . From (12), notice that all we need are the function values φ k ( y ` ) , ϕ j ( b ` ) , ˜ q ( y ` ) , which are obtained via the dif fusion maps algorithm. W e should note that the sampling density ˜ q is estimated using a kernel density estimation method in our implementation of the diffusion maps algorithm (see Appendix of Berry and Harlim (2016a) for the algorith- mic detail). In our numerical implementation, we will set M 1 = M 2 = M . b . Extension of the likelihood function to new observations T o construct p ( y i | b ` ) for y i 6 = { y ` } N ` = 1 that is not in the training data set, Eq. (8) suggests that we need to extend the eigenfunctions to this new data point, φ k ( y i ) . One ap- proach would be to use the Nystr ¨ om extension (Nystr ¨ om M O N T H L Y W E A T H E R R E V I E W 5 1930), howe ver , since the observation y i is noisy , a more robust method is to compute weights p ( y ` | y i ) = 1 √ 2 π R exp − ( y ` − y i ) 2 2 R which are the probabilities of the training data points given the observation y i . W e then estimate E η i [ φ k ( y i ) ˜ q ( y i )] = E p ( · | y i ) [ φ k ( · ) ˜ q ( · )] ≈ 1 N N ∑ ` = 1 p ( y ` | y i ) φ k ( y ` ) ˜ q ( y ` ) and use this expectation on (8) to define the likelihood function, p ( y i | b ` ) = E η i [ p ( y ` | b ` )] = M ∑ k = 1 µ Y | b ` , k E η i [ ˜ ϕ k ( y i ) ˜ q ( y i )] , (13) where the coefficients µ Y | b ` , k are defined in (9) and com- puted in the training phase. T o conclude, we represent the likelihood function p ( y i | b ) nonparametrically by an N - dimensional vector whose ` th component is p ( y i | b ` ) as prescribed in (13). In the remainder of this paper , we de- note this nonparametric likelihood function as the RKHS likelihood function . c. Prior density functions An appropriate construction of the prior distribution p ( b ) at each data assimilation time i is essential for accu- rate filter estimates. This is especially true when the likeli- hood function p ( y i | b ) is bimodal as a function of b , as we will see in the numerical applications belo w . In this arti- cle, we consider a Gaussian prior distribution with mean and variance gi ven by , ˆ b i = y i − ¯ y f i , σ 2 b i = P yy , i + R . (14) Giv en a training data set { ( x ` , y ` ) } N ` = 1 , we can compute the training biases b ` = y ` − ˜ h ( x ` ) such that the prior density ev aluated at the training data is giv en by , p ( b ` ) ∝ exp − ( b ` − ˆ b i ) 2 2 σ 2 b i ! . (15) So, p ( b ) is represented by an N -dimensional vector whose ` th component is p ( b ` ) as defined in (15). As an alterna- tiv e to the time dependent v ariance defined in (14), one can also use the climatological variance obtained from the historical training b ` (this is analogous to a 3DV AR prior). While more complicated priors are always possible, we will apply our numerical experiments below using these Gaussian prior densities. d. Statistics of the posterior distribution Now that we hav e defined the likelihood p ( y i | b ` ) in (13) and the prior p ( b ` ) in (15), we can multiply these terms to form the posterior density , p ( b ` | y i ) = 1 Z p ( b ` ) p ( y i | b ` ) , where Z = Z p ( b ) p ( y i | b ) d b ≈ 1 N N ∑ ` = 1 p ( b ` ) p ( y i | b ` ) q ( b ` ) − 1 is the normalization constant estimated via Monte-Carlo summation. With this posterior density estimate, we can compute the posterior mean and variance, ˆ µ b i = 1 N N ∑ ` = 1 b ` p ( b ` | y i ) q ( b ` ) − 1 ˆ R b i = 1 N N ∑ ` = 1 ( b ` − ˜ b i ) 2 p ( b ` | y i ) q ( b ` ) − 1 . (16) as estimators for µ b i and R b i that can be used in the pri- mary filtering step in (7). This completes our description of the secondary filter . In the remainder of this paper , we denote the EnKF in (7) with observ ation error corrected using the statistics in (16) as the RKHS filter . 4. Example 1: Assimilating random “cloudy” observa- tions In this example we will introduce a se vere error into the observations of the 40-dimensional chaotic Lorenz (1996) system, ˙ x j = x j − 1 ( x j + 1 − x j − 2 ) − x j + 8 , (17) where the indices j are taken modulo 40. The underly- ing truth is generated by the RK4 inte gration scheme with integration time step δ t = 0 . 05 and a randomly chosen ini- tial condition. Direct observ ations are taken at ev ery dis- crete time step ∆ t = t i + 1 − t i , which we will vary between 0.1-0.5. W e will show results for observing x j at e very other grid points (20 observations). At each observation time step t i , we randomly choose up to c = 7 from the 20 observed locations and obstruct the corresponding obser - vations with a “cloud” as follows. W e draw ξ i ∼ U ( 0 , 1 ) and let the observations at these c locations be, h ( x k ) = x k ξ i > 0 . 8 β k x k − 8 else (18) β k ∼ N ( 0 . 5 , 1 / 50 ) . Effecti vely , up-to se ven random locations out of 20 di- rect measurement hav e an 80% chance of being randomly 6 M O N T H L Y W E A T H E R R E V I E W -14 -12 -10 -8 -6 -4 -2 0 Obs Model Error 0 0.1 0.2 0.3 0.4 0.5 0.6 Probability Prior, p ( b ℓ ) Likelihood, p ( y i | b ℓ ) Posterior, p ( b ℓ | y i ) Bias P rior, ˆ b i Bias Posterior, ˆ µ b i T rue Model Error, b i -14 -12 -10 -8 -6 -4 -2 0 Obs Model Error 0 0.05 0.1 0.15 0.2 0.25 0.3 Probability Prior, p ( b ℓ ) Likelihood, p ( y i | b ℓ ) Posterior, p ( b ℓ | y i ) Bias P rior, ˆ b i Bias Posterior, ˆ µ b i T rue Model Error, b i F I G . 2. T op, Left: V isualization of the observ ations using (18) plot- ted against the true state, the top ellipse corresponds to the clear-sk y observations and the bottom ellipse corresponds to the cloudy obser- vations. T op, Right: V isualization of the observation model error as a function of the observation. Bottom, Left: Example of a bimodal RKHS likelihood function (red, dashed) indicating the current observa- tions maybe obstructed or not obstructed, the prior (15) derived from the primary filter (gray , solid) reveals it to be unobstructed as shown in the posterior (black, solid). Bottom, Right: Similar bimodal likelihood but the prior indicates obstructed observ ations. scaled by β k and shifted down by eight units. In our ex- periment, the observed data is generated with this hid- den observation model and the filter observation model is ˜ h ( x k ) = x k . Therefore, when ξ i > 0 . 8, we directly observe the state and the observ ation model is correct and we re- fer to this case as an unobstructed (“clear-sky”) observa- tion. On the other hand, when ξ i ≤ 0 . 8, the filter observa- tion model is incorrect, ˜ h 6 = h , and we refer to this case as an obstructed (“cloudy”) observation. Notice that at each time there are up to 7 randomly chosen “cloudy” locations. T o represent the measurement error, we also include ad- ditiv e Gaussian noise η k ∼ N ( 0 , R o ) in the observ ations y k = h ( x k ) + η k . Since the obstructions in the observation h appear randomly , they are impossible to predict, and we assume that the modeler only has the incorrect observation model ˜ h ( x k ) = x k . For comparison, we also generate a set of ‘clear sky’ observations ˜ y k = ˜ h ( x k ) + η k = x k + η k so that we can test the standard EnKF in the case when there is no model error . In Fig. 2 we show a scatter plot which clearly illus- trates the bimodal distribution of the observations, y k . No- tice that the clear-sky and cloudy observations are both permitted in the range [ − 9 , − 2 ] making it impossible to tell from the observ ation alone whether the observation is obstructed or not. The conditional density , p ( y i | b ` ) is trained from a short training data set { ( b ` , y ` ) } N ` = 1 , where N = 10000. In this example since model error is spatially homogeneous, we fit a single model and use it for each of the 20 observations. This assumption also allows us to use only 500 time steps of training data (since each time step contains 20 observations, this short time series giv es us N = 10000 observations). In this numerical experi- ment, the likelihood function is constructed using M = 250 eigenfunctions. In the bottom panels of Fig. 2, we show examples of the RKHS lik elihood functions (red, bottom left and right) both of which result from observations near y i ≈ 4, but in one case the observ ation is obstructed (bottom left) and in the other the observation is unobstructed (bottom right). In these panels, we also show the Gaussian prior distrib ution as proposed in (15) with a time-independent σ 2 b obtained by taking the v ariance of the training data b ` . Notice that the prior helps the secondary filter to identify whether the observation error is small (bottom, left panel) or large (bot- tom,right). In this case, the climatological prior variance is suf ficient to give a relati vely informativ e prior . In the next e xample, we will need a time-dependent prior since the variance is too broad. In Fig. 3, we show the spatiotemporal structure of the observations, the true state, and the EnKF without and with the observ ation model error corrections. Notice that the observations are severely corrupted (see the deep blue dots) and these obstructions occur completely randomly in space and time. Note also that the “cloudy” observa- tions cause observation model error because we assume no kno wledge of the true observation function h , and we filter using ˜ h ( x k ) = x k on each observed location. Here, the primary filter is implemented with 80 ensemble mem- bers and an adaptive cov ariance estimation of the system and observ ation noise cov ariances, Q and R (see Berry and Sauer (2013) for details). Below , we will also include re- sults without using the adaptiv e covariance estimation for comparison. W e use the abbreviation EnKF to refer to the primary filter without correcting the observ ation model er - ror , that is, µ b i = 0 and R b i = 0 in (7) and we use the ab- breviation RKHS to refer to the EnKF with the observation model error corrected by (16). In Fig. 4, we show the Root-Mean-Square Error (RMSE) between the truth and the filtered estimates as functions of measurement error, √ R o , and observation time, ∆ t . Each RMS error is averaged ov er 5000 filter steps after removing an initial transient to allo w each fil- ter to con verge. In each panel, we include the results of applying the EnKF to unobstructed (clear sky) observa- tions ˜ y k (black, solid line) which should be considered the best possible filtering since the observation model is correct when the observations are all unobstructed. On the other hand, when the observations are obstructed, y k (cloudy sky) the observation model is incorrect and the results (red, dotted line) degrade significantly (notice that gaps in the curve indicate catastrophic filter di ver gence). The results sho wn in the top row of Fig. 4 correspond to implementing the filter using the true value R = R o and a fixed small additive inflation Q = 10 − 3 × I 20 × 20 . In this M O N T H L Y W E A T H E R R E V I E W 7 20 40 True State 10 20 Obs -10 -5 0 5 10 15 10 20 30 40 50 Time 20 40 RKHS 20 40 EnKF F I G . 3. The spatiotemporal patterns of the sparse observations (top) simulated using (18) with c = 7 randomly located obstructed observa- tion, compared with the true state (second row) and the filtered state estimates of the EnKF with adaptive tuning of Q and R (third row) and the RKHS corrected EnKF (bottom). case, the classical EnKF filter estimates diver ge in the presence of this se vere observation model error . This re- sult suggests that the empirical additi ve inflation (which is commonly used to compensate for model error in appli- cations) is not able to overcome the ill-posedness induced by the bimodality of the model error distrib ution in this example. In the bottom row , we include results which use Q and R parameters determined by the adaptiv e cov ari- ance estimation method (Berry and Sauer 2013). Notice that the EnKF applied to the cloudy observations with the adaptiv e estimation of Q and R is able to prevent catas- trophic filter di vergence except when the observation time ∆ t > 0 . 3. For both the fix ed parameter and adapti ve filters, it is clear that the RKHS filter giv en the cloudy observa- tions approaches the performance of the EnKF given the clear sky observ ations in the limit of small measurement error and small observation time. In Fig. 5 we plot the average of the diagonal entries of the Q and R parameters estimated by the adaptiv e co vari- ance estimation as functions of both the measurement er- ror cov ariance and the observation time. First, the EnKF with clear-sk y observations reco vers the true values of R with high accuracy when the observation time is relativ ely small ( ∆ t < 0 . 35). Notice that when the EnKF is giv en the cloudy observ ations, the estimates for Q and R are much larger than the estimates than when giv en the clear-sk y ob- servations. Moreov er , the estimates from the RKHS filter are closer to those of the EnKF gi ven the clear-sky ob- servations. Intuitively , this means that the RKHS model error correction is effecti ve and that the adapti ve method only needs to compensate for the remaining measurement error which is present ev en in the clear sky observ ations. In other words, the RKHS correction is ef fectively remov- ing the clouds from the cloudy observations and achieving results close to the EnKF gi ven the clear sky observations. 0.2 0.3 0.4 0.5 0.6 0.7 0.8 0.9 1 Observation Noise Standard Devation 0 0.5 1 1.5 2 2.5 3 3.5 4 4.5 5 RMSE EnKF, Clear Sky Obs EnKF, Cloudy Obs RKHS Correction, Cloudy Obs 0.1 0.15 0.2 0.25 0.3 0.35 0.4 0.45 0.5 Observation Time 0 0.5 1 1.5 2 2.5 3 3.5 4 4.5 5 RMSE EnKF, Clear Sky Obs EnKF, Cloudy Obs RKHS Correction, Cloudy Obs 0.2 0.3 0.4 0.5 0.6 0.7 0.8 0.9 1 Observation Noise Standard Devation 0 0.5 1 1.5 2 2.5 3 3.5 4 4.5 5 RMSE EnKF, Unobstructed Obs EnKF, Obstructed Obs RKHS, Obstructed Obs 0.1 0.15 0.2 0.25 0.3 0.35 0.4 0.45 0.5 Observation Time 0 0.5 1 1.5 2 2.5 3 3.5 4 4.5 5 RMSE EnKF, Unobstructed Obs EnKF, Obstructed Obs RKHS, Obstructed Obs F I G . 4. Filtering improv ement for cloudy observation using the RKHS bias correction (blue, dashed) compared to the standard EnKF with no model error correction (red, dotted) and compared to the EnKF which uses the perfect “clear-sky” observations (black, solid). T op, Left: RMSE as a function of the measurement error standard devia- tion, √ R o with a fixed observ ation time ∆ t = 0 . 1. T op, Right: RMSE as a function of observation time when R o = 2 − 5 . All filters in the top row used an R parameter equal to the true observation noise covariance R o and fixing Q = 10 − 3 I (a simple additiv e inflation). Bottom: Same as top row except that Q and R are estimated adaptiv ely . Notice that without the adaptive estimation scheme (top) the standard EnKF is often catas- trophically di vergent when filtering the obstructed observations. The adaptiv e estimation scheme (bottom) improves the stability and perfor- mance of all the filters, but cannot overcome the obstructed observations in the EnKF (red, dotted) without the RKHS correction (blue, dashed). 0.1 0.15 0.2 0.25 0.3 0.35 0.4 0.45 0.5 Observation Time 0 0.5 1 1.5 2 2.5 3 3.5 4 Estimated System Noise Std. Dev. EnKF, Clear Sky Obs EnKF, Cloudy Obs RKHS Correction, Cloudy Obs 0.2 0.3 0.4 0.5 0.6 0.7 0.8 0.9 1 Observation Noise Standard Devation 0 0.5 1 1.5 2 2.5 3 3.5 4 Estimated System Noise Std. Dev. EnKF, Clear Sky Obs EnKF, Cloudy Obs RKHS Correction, Cloudy Obs 0.1 0.15 0.2 0.25 0.3 0.35 0.4 0.45 0.5 Observation Time 0 0.5 1 1.5 2 2.5 3 3.5 4 Estimated Obs Noise Std. Dev. EnKF, Clear Sky Obs EnKF, Cloudy Obs RKHS Correction, Cloudy Obs 0.2 0.3 0.4 0.5 0.6 0.7 0.8 0.9 1 Observation Noise Standard Devation 0 0.5 1 1.5 2 2.5 3 3.5 4 Estimated Obs Noise Std. Dev. EnKF, Clear Sky Obs EnKF, Cloudy Obs RKHS Correction, Cloudy Obs F I G . 5. Estimated Q and R parameters for the various filters in Fig. 4 using the adaptiv e estimation scheme Berry and Sauer (2013). 5. Example 2: Assimilating cloudy “satellite-like” measurements Here, we consider assimilating satellite measurements of an idealized stochastic cloud model for a single-column of org anized tropical con vection (Khouider et al. 2010). The model is a system of four-dimensional ODEs that rep- resents the time ev olution of the first and second baroclinic anomaly potential temperatures, respectiv ely , θ 1 and θ 2 , an equi valent boundary layer anomaly potential tempera- 8 M O N T H L Y W E A T H E R R E V I E W ture, θ eb , and a v ertically av eraged water v apor content, q , of a single-column tropical atmosphere. These ODEs are coupled to a stochastic birth-death process which gov erns the area fractions of three cloud types; congestus f c , deep f d , and stratiform clouds, f s , over an unresolved horizontal domain corresponding to the column model. From θ 1 and θ 2 , we can extrapolate the anomaly potential temperature at height z (in km unit) using the following interpolation function (Khouider et al. 2010), T ( z ) = θ 1 sin ( z π Z T ) + 2 θ 2 sin ( 2 z π Z T ) , z ∈ [ 0 , 16 ] , (19) where we model the height of the troposphere at the T ropic as Z T = 16 km. In our experiment, we denote the state variables as x = ( θ 1 , θ 2 , θ eb , q ) and the cloud fractions as f = ( f c , f d , f s ) . W e will assume that the cloud top heights for both the deep and stratiform are the same, z d = 12 km, whereas that for the cumulus cloud is z c = 3 km. Based on these heights, we consider modeling the brightness temperature- like quantity at wav enumber- ν with the following two- cloud type radiativ e transfer model (Liou 2002), h ν ( x , f ) = ( 1 − f d − f s ) h ( 1 − f c ) θ eb T ν ( 0 ) + Z z c 0 T ( z ) ∂ T ν ∂ z ( z ) d z + f c T ( z c ) T ν ( z c ) + Z z d z c T ( z ) ∂ T ν ∂ z ( z ) d z i (20) + ( f d + f s ) T ( z d ) T ν ( z d ) + Z ∞ z d T ( z ) ∂ T ν ∂ z ( z ) d z , where T ν ( z ) denotes the transmission from height z to the satellite, which is assumed to be a decaying function of height and also depends on q . W e specify the wa venum- bers ν such that the weighting functions, ∂ T ν ∂ z ( z ) , are max- imized at heights z max = 1 , 2 , . . . , 16 km. In Fig. 6, we show the weighting functions (black solid) with the mini- mum humidity associated with z max = 2 , 5 , 8 , 10 km (black dashes). W e also include the weighting functions corre- sponding to the maximum humidity value (red solid). This is to show that depending on the value of q , the shape of weighting functions will vary between these two weights. (see Appendix C for the detailed construction of these weighting functions). Essentially , the terms inside the square bracket in (20) denote the contribution below the deep and stratiform clouds, the first term on the third row denotes the con- tribution from the deep cloud top height and the last term denotes the contrib ution of the free atmosphere above the deep cloud. By setting f = 0, we obtain the clear-sky ob- servations. Consider implementing the primary filter with an incorrect observation model ˜ h ν ( x ) = h ν ( x , 0 ) , which means that we assume the observed brightness temper- atures are from clear-sky measurements. In Fig. 7, we 0 0.05 0.1 0.15 0 2 4 6 8 10 12 14 16 height (z) w e ig hti ng fun ct i on ( ∂ T ν ∂ z ) F I G . 6. The weighting functions for the minimum value of q (black solid) which is used as a reference to define the absorption rate corre- sponding to z max = 2 , 5 , 8 , 10 km. W e also show the weighting functions for the maximum value of q corresponding to these maximum heights (red). F I G . 7. Scatter plots of the cloudy observations, y ` , versus the observation model errors, b ` , for the observation at 16 frequencies- ν corresponding to weighting functions with maximum heights at z max = 1 , 2 , ..., 16, respectively . show the scatter plot of the observ ations which are de- fined as y ν = h ν ( x , f ) + η ν , as functions of the model error , b ν = h ν ( x , f ) − ˜ h ν ( x ) . In this example the mea- surement errors are specified as a percentage of the vari- ance of the measured variables, so η ν ∼ N ( 0 , R o ) with R o = 10 − 3 × var ( h ν ( x , f )) where var ( h ν ( x , f )) is the vari- ance of the observation. W e will also consider the robust- ness to the measurement error R o below . W e observe at each observation time, ∆ t = 0 . 0035 and use an ensemble size of K = 14. In Fig. 8, we sho w exam- ples of the secondary filter at two instances for observa- tions at wavenumber - ν corresponding to weighting func- tion with z max = 6 km. The two observations are relativ ely close to each other , y i = 0 . 184 and y i = 0 . 195, but the former has very small observation error while the latter has a relativ ely large observ ation error (see the blue dots in these two figures). Here, the nonparametric likelihood functions are trained with N = 5000 and M = 400. T o correct the observation model error, we ran the secondary M O N T H L Y W E A T H E R R E V I E W 9 -0.2 -0.15 -0.1 -0.05 0 Obs Model Error 0 20 40 60 80 100 120 Probability Prior, p ( b ℓ ) Likelihood, p ( y i = 0 . 184 | b ℓ ) Posterior, p ( b ℓ | y i = 0 . 184) T rue Model Error, b i -0.2 -0.15 -0.1 -0.05 0 Obs Model Error 0 10 20 30 40 50 60 70 80 90 100 Probability Prior, p ( b ℓ ) Likelihood, p ( y i = 0 . 195 | b ℓ ) Posterior, p ( b ℓ | y i = 0 . 195) T rue Model Error, b i F I G . 8. Bimodal likelihood and Bayesian update from the secondary filter at two random instances, one with small observation model error (left) and the other with large error (right). These two plots are for the observ ations at wa venumber- ν corresponding to weighting function with z max = 6 km. filter in (3) with the Gaussian prior with time-dependent variances σ 2 b i as defined in (14) (grey curves). In this ex- ample, we could not use the time-independent v ariance, σ 2 b , from the training data b ` since the resulting prior is too broad and was uninformative. Ho wever , since σ 2 b i is very small (see e.g. Fig. 7), it is possible that the support of the prior will be almost entirely be between the two modes of a bimodal lik elihood function, which will cause the poste- rior normalization factor Z to be very small. Thus, when Z is found to be less than a threshold Z ∗ , we do not ap- ply the secondary filter , since in these cases the likelihood function does not giv e enough information to inform the secondary filter . When the prior is on the tail of the likeli- hood function, we do not apply this thresholding step. In our numerical implementation, we found our results to be robust to a lar ge range of thresholds Z ∗ ∈ [ 10 − 30 , 10 − 2 ] . In Fig. 9, we show the filter estimates as timeseries of all sev en model variables θ 1 , θ 2 , θ eb , q , f c , f d , f s when the measurement error is R o = 10 − 3 × var ( h ν ( x , f )) or 0.1% of the observation variance. For diagnostic, we also include EnKF assimilating the same cloudy observations with the true observation function. Notice that the RKHS is as ac- curate as the EnKF implemented with the true observ ation function. On the other hand, the EnKF based on the in- correct observ ation model does not produce accurate es- timates, despite using an inflated observation cov ariance matrix in the filter (a standard method for overcoming model error). In this example we do not use any adaptiv e cov ariance estimation, all filters use an additive cov ariance inflation Q = 10 − 3 × I 7 × 7 , and an inflated R = 5 R o . In Fig. 10 we compare the filter Relative Mean Square Error (RMSE) as a function of the measurement error vari- ance, R o , ranging from 0.1% to 2% of the observation vari- ance. Here, the Relative MSE is defined as the ratio be- tween the MSE and the v ariance of the corresponding state variable, where MSE is a veraged over 2500 filter steps. As we sa w in the L96 example above, when the measurement error is small the RKHS performance approaches that of the EnKF gi ven the true observation function. For large measurement error, the performance of all of the filters degrades significantly , ev en the EnKF gi ven the true ob- 70 71 72 73 74 75 76 77 78 Time -0.1 -0.05 0 0.05 0.1 0.15 0.2 0.25 0.3 θ 1 Truth EnKF, True Obs EnKF, Wrong Obs RKHS 70 71 72 73 74 75 76 77 78 Time -0.1 0 0.1 0.2 0.3 0.4 0.5 0.6 0.7 θ 2 Truth EnKF, True Obs EnKF, Wrong Obs RKHS 70 71 72 73 74 75 76 77 78 Time -1.5 -1 -0.5 0 0.5 1 1.5 2 2.5 θ eb Truth EnKF, True Obs EnKF, Wrong Obs RKHS 70 71 72 73 74 75 76 77 78 Time 0.95 1 1.05 1.1 1.15 1.2 1.25 1.3 1.35 1.4 1.45 q Truth EnKF, True Obs EnKF, Wrong Obs RKHS 70 71 72 73 74 75 76 77 78 Time 0 0.05 0.1 0.15 0.2 0.25 0.3 0.35 0.4 0.45 fc Truth EnKF, True Obs EnKF, Wrong Obs RKHS 70 71 72 73 74 75 76 77 78 Time 0 0.05 0.1 0.15 0.2 0.25 0.3 0.35 fd Truth EnKF, True Obs EnKF, Wrong Obs RKHS 70 71 72 73 74 75 76 77 78 Time 0 0.02 0.04 0.06 0.08 0.1 0.12 0.14 0.16 0.18 0.2 fs Truth EnKF, True Obs EnKF, Wrong Obs RKHS F I G . 9. T ime series of filter estimates compared to the true state variables for all 7 model variables in the presence of small measure- ment error R o = 10 − 3 × var ( h ν ( x , f )) which is 0.1% of the observation variance. servation function. In Fig. 11 we show the filter estimates as timeseries for all sev en model variables at the highest measurement error which is 2% of the observation vari- ance. In this case, notice that even the EnKF given the true observation function produces inaccurate filter esti- mates for some v ariables (with large errors in q , f s , f d , f c ). This illustrates the difficulty of filtering these highly non- linear observations giv en by the R TM in the presence of large measurement error . For small measurement errors, the RKHS correction is again effecti ve at overcoming the sev ere bias introduced by the observation model error . 6. Summary W e introduced a data-dri ven observation model error estimator . The estimator is a recursive Bayesian method which uses a nonparametric likelihood function, con- structed by representing the kernel embedding of condi- tional distributions with data-driv en basis functions ob- 10 M O N T H L Y W E A T H E R R E V I E W 10 -1 10 0 Observation Noise (percent of obs variance) 0 10 20 30 40 50 60 70 80 90 100 θ 1 , RMSE (percent of variance) EnKF, True Obs EnKF, Wrong Obs RKHS 10 -1 10 0 Observation Noise (percent of obs variance) 0 10 20 30 40 50 60 70 80 90 100 θ 2 , RMSE (percent of variance) EnKF, True Obs EnKF, Wrong Obs RKHS 10 -1 10 0 Observation Noise (percent of obs variance) 0 10 20 30 40 50 60 70 80 90 100 θ eb , RMSE (percent of variance) EnKF, True Obs EnKF, Wrong Obs RKHS 10 -1 10 0 Observation Noise (percent of obs variance) 0 10 20 30 40 50 60 70 80 90 100 q, RMSE (percent of variance) EnKF, True Obs EnKF, Wrong Obs RKHS 10 -1 10 0 Observation Noise (percent of obs variance) 0 10 20 30 40 50 60 70 80 90 100 fc, RMSE (percent of variance) EnKF, True Obs EnKF, Wrong Obs RKHS 10 -1 10 0 Observation Noise (percent of obs variance) 0 10 20 30 40 50 60 70 80 90 100 fd, RMSE (percent of variance) EnKF, True Obs EnKF, Wrong Obs RKHS 10 -1 10 0 Observation Noise (percent of obs variance) 0 10 20 30 40 50 60 70 80 90 100 fs, RMSE (percent of variance) EnKF, True Obs EnKF, Wrong Obs RKHS F I G . 10. Rob ustness of the various filters to increasing measure- ment error R o from 0.1% up to 2% of of the observation variance. Per- formance is measured by Relativ e Mean Squared Error to the variance of the corresponding variable. Notice that for large measurement error the performance of all the filters decreases signficantly . tained via the diffusion maps algorithm. The method is scalable to high-dimensional problems since it can be used in parallel to correct error in each component of a high di- mensional observ ation. While our main motiv ation is to solve the cloudy satellite inference problem, the proposed framew ork can be used in general applications so long as a training data of the states and corresponding observ a- tions is av ailable. Also, while the proposed observation model error correction technique is shown in tandem with an ensemble Kalman filter , the fact that we estimate the conditional distribution (and not just the statistics) of the error , p ( b | y ) , implies that this framew ork can also be used in any filtering method. In the e xamples above we showed that the RKHS cor- rection is able to overcome complex multimodal observa- tion model error . When there is suf ficient observ ability (eg. short observation time) and small measurement er- ror , the RKHS correction approaches the filtering skill of the EnKF giv en the perfect observation function. In the presence of large measurement error or long observation time, the RKHS correction degrades relativ e to the EnKF with the perfect observation function, ho wever it is still a significant improv ement in both skill and stability over simply inflating the EnKF noise parameters, Q and R . The key to the RKHS is using historical data to learn the con- ditional distribution of the model error . The RKHS then combines the current filter predicted observation with the actual observation to determine the appropriate correction to the current observ ation model. This process depends on choosing an appropriate prior for the current model er- ror , and in particular we presented two natural choices for the covariance of this prior . Future improvements may be possible by designing better or adaptiv e priors. 70 71 72 73 74 75 76 77 78 Time -0.1 0 0.1 0.2 0.3 0.4 0.5 θ 1 Truth EnKF, True Obs EnKF, Wrong Obs RKHS 70 71 72 73 74 75 76 77 78 Time -0.1 0 0.1 0.2 0.3 0.4 0.5 0.6 0.7 0.8 θ 2 Truth EnKF, True Obs EnKF, Wrong Obs RKHS 70 71 72 73 74 75 76 77 78 Time -1.5 -1 -0.5 0 0.5 1 1.5 2 2.5 θ eb Truth EnKF, True Obs EnKF, Wrong Obs RKHS 70 71 72 73 74 75 76 77 78 Time 0.95 1 1.05 1.1 1.15 1.2 1.25 1.3 1.35 1.4 1.45 q Truth EnKF, True Obs EnKF, Wrong Obs RKHS 70 71 72 73 74 75 76 77 78 Time 0 0.05 0.1 0.15 0.2 0.25 0.3 0.35 0.4 0.45 fc Truth EnKF, True Obs EnKF, Wrong Obs RKHS 70 71 72 73 74 75 76 77 78 Time 0 0.05 0.1 0.15 0.2 0.25 0.3 0.35 fd Truth EnKF, True Obs EnKF, Wrong Obs RKHS 70 71 72 73 74 75 76 77 78 Time 0 0.02 0.04 0.06 0.08 0.1 0.12 0.14 0.16 0.18 0.2 fs Truth EnKF, True Obs EnKF, Wrong Obs RKHS F I G . 11. Time series of filter estimates compared to the true state variables for all 7 model v ariables in the presence of lar ge measurement error R o = 0 . 02 × var ( h ν ( x , f )) which is 2% of the observation variance. Notice that many of the cloud fraction estimates e ven for the perfect ob- servation model are far from the true state, which indicates the dif ficulty of filtering these observations when the measurement errors are lar ge. Acknowledgments. The research of J.H. is partially supported by the Of fice of Naval Research Grants N00014-16-1-2888, MURI N00014-12-1-0912 and the National Science Foundation grant DMS-1317919. References Anderson, J., 2001: An ensemble adjustment Kalman filter for data as- similation. Monthly W eather Review , 129 , 2884–2903. Berry , T ., and J. Harlim, 2016a: Forecasting turbulent modes with non- parametric diffusion models: Learning from noisy data. Physica D , 320 (57-76) . Berry , T ., and J. Harlim, 2016b: V ariable bandwidth diffusion kernels. Appl. Comput. Harmon. Anal. , 40 , 68–96. Berry , T ., and T . Sauer , 2013: Adaptive ensemble Kalman filtering of nonlinear systems. T ellus A , 65 , 20 331. M O N T H L Y W E A T H E R R E V I E W 1 1 Bishop, C., B. Etherton, and S. Majumdar, 2001: Adaptive sampling with the ensemble transform Kalman filter part I: the theoretical as- pects. Monthly W eather Review , 129 , 420–436. Coifman, R., and S. Lafon, 2006: Dif fusion maps. Appl. Comput. Har- mon. Anal. , 21 , 5–30. Evensen, G., 1994: Sequential data assimilation with a nonlinear quasi- geostrophic model using Monte Carlo methods to forecast error statistics. Journal of Geophysical Resear ch , 99 , 10 143–10 162. Kalnay , E., 2003: Atmospheric modeling, data assimilation, and pre- dictability . Cambridge Univ ersity Press. Khouider , B., J. Biello, and A. J. Majda, 2010: A stochastic multicloud model for tropical con vection. Commun. Math. Sci. , 8 (1) , 187–216. Liou, K.-N., 2002: An intr oduction to atmospheric radiation , V ol. 84. Academic press. Lorenz, E., 1996: Predictability - a problem partly solved. Proceedings on pr edictability, held at ECMWF on 4-8 September 1995 , 1–18. Majda, A. J., and J. Harlim, 2012: F iltering complex turbulent systems . Cambridge Univ ersity Press. McNally , A. P ., 2009: The direct assimilation of cloud-affected satel- lite infrared radiances in the ecmwf 4d-var . Quarterly J ournal of the Royal Meteor ological Society , 135 (642) , 1214–1229. Nystr ¨ om, E. J., 1930: ¨ Uber die praktische aufl ¨ osung von integralgle- ichungen mit anwendungen auf randwertaufgaben. Acta Mathemat- ica , 54 (1) , 185–204. Reale, O., J. Susskind, R. Rosenberg, E. Brin, E. Liu, L. P . Riisho- jgaard, J. T erry , and J. C. Jusem, 2008: Improving forecast skill by assimilation of quality-controlled airs temperature retriev als un- der partially cloudy conditions. Geophys. Res. Lett. , 35 (8) , URL http://dx.doi.org/10.1029/2007GL033002. Song, L., K. Fukumizu, and A. Gretton, 2013: Kernel embeddings of conditional distributions: A unified kernel framew ork for nonpara- metric inference in graphical models. IEEE Signal Processing Mag- azine , 30 (4) , 98–111. Song, L., J. Huang, A. Smola, and K. Fukumizu, 2009: Hilbert space embeddings of conditional distributions with applications to dynam- ical systems. Pr oceedings of the 26th Annual International Confer- ence on Machine Learning , A CM, 961–968. APPENDIX A Ker nel embedding of distributions In this Appendix we discuss the theory of kernel em- beddings of distrib utions for constructing likelihood func- tions. W e should point out that the revie w in this section follows the deriv ation in Song et al. (2009, 2013) except that we adapt their deri vation to weighted Hilbert spaces, which will be required in the next section. Giv en a symmetric positiv e definite kernel, K : M × M → R , the Moore-Aronszajn theorem states that there exists a Reproducing K ernel Hilbert Space (RKHS) H = L 2 ( M , q ) . This is a space of functions f : M → R with a repr oducing pr operty , that is, for each x ∈ M , there exists K ( x , · ) such that f ( x ) = h f , K ( x , · ) i q , ∀ f ∈ H . Moreov er, K ( x , · ) ∈ H , which implies that K ( x , y ) = h K ( x , · ) , K ( y , · ) i q . The map from M to H giv en by x 7→ K ( x , · ) is called the feature map. Let X be a random variable with distrib ution P ( X ) de- fined on a domain M . The kernel embedding of a distri- bution P ∈ H maps P to a function µ X ∈ H giv en by , µ X : = E X [ K ( X , · )] = Z M K ( x , · ) d P ( x ) (A1) which is the e xpectation of the feature map. For any f ∈ H , E X [ f ( X )] = Z M f ( x ) d P ( x ) = Z M h f , K ( x , · ) i q d P ( x ) = f , Z M K ( x , · ) d P ( x ) q = h f , µ X i q . So µ X is the Riesz representative of expectation over P ( X ) . Let Y be another random variable defined on another space N and another kernel ˜ K ( y , · ) , which maps N to a feature space ˜ H = L 2 ( N , ˜ q ) . W e assume the following equality , h K ( x , · ) ⊗ ˜ K ( y , · ) , K ( x 0 , · ) ⊗ ˜ K ( y 0 , · ) i q ⊗ ˜ q (A2) = K ( x , x 0 ) ⊗ ˜ K ( y , y 0 ) . Then the joint distribution P ( X , Y ) can be mapped into the product feature space H ⊗ ˜ H with the following defini- tion, C X Y : = Z M × N K ( x , · ) ⊗ ˜ K ( y , · ) d P ( x , y ) . (A3) Then, for any functions f ∈ H , g ∈ ˜ H , we have E X Y [ f ( X ) g ( Y )] = Z M × N f ( x ) g ( y ) d P ( x , y ) = Z M × N h f , K ( x , · ) i q h g , ˜ K ( y , · ) i ˜ q d P ( x , y ) = Z M × N h f ⊗ g , K ( x , · ) ⊗ ˜ K ( y , · ) i q × ˜ q d P ( x , y ) = h f ⊗ g , C X Y i q × ˜ q : = h f , C X Y g ˜ q i q , (A4) using the equality in (A3) and the definition of C X Y in (A3). Again, the cross-co variance operator C X Y is a Riesz representativ e of expectation ov er P ( X , Y ) . The kernel embedding of conditional distribution P ( Y | X ) is defined as, µ Y | x = E Y | x [ ˜ K ( Y , · )] = Z N ˜ K ( y , · ) d P ( y | x ) , (A5) and one can use the same argument to sho w that the em- bedding operator µ Y | x is the Riesz representer of the con- ditional expectation, E Y | x [ g ( Y )] = h g , µ Y | x i ˜ q . (A6) 12 M O N T H L Y W E A T H E R R E V I E W The main result from Song et al. (2013) that we will exploit is that Theorem 1. The kernel embedding of P ( Y | X ) satisfies, µ Y | x = q C Y X C − 1 X X K ( x , · ) , (A7) wher e the operators C X Y is defined as in (A3) and C X X = R M K ( x , · ) K ( x , · ) d P ( x ) . Pr oof. This result depends on the identity deri ved in Song et al. (2009), which states that for g ∈ ˜ H , C X X E Y | X [ g ( Y )] = C X Y g ˜ q . (A8) T o see this, let f ∈ H and notice that h f , C X X E Y | X [ g ( Y )] i q = E X [ f ( X ) E Y | X [ g ( Y )]] = E X Y [ f ( X ) g ( Y )] = h f , C X Y g ˜ q i q , where we hav e used the fact that C X X is the Riesz rep- resenter of expectation with respect to P ( X ) and also the equality in (A4). For each E Y | X [ g ( Y )] ∈ H , then by the reproducing property of H , one can write, E Y | x [ g ( Y )] = h E Y | X [ g ( Y )] , K ( x , · ) i q = h C − 1 X X C X Y g ˜ qq , K ( x , · ) i = h g , q C Y X C − 1 X X K ( x , · ) i ˜ q (A9) where we used the identity in (A8) and using the fact that C X X is symmetric and C Y X = C > X Y . Comparing (A9) with the (A6), we obtain (A7). APPENDIX B Proof of equations (9) - (11) . In this Appendix, we will prove equations (9)-(11). For our discussion below , we define two random variables. The first one, Y ∈ R with distribution P ( Y ) and kernel ˜ K such that L 2 ( R , ˜ q ) is the RKHS with orthonormal ba- sis functions φ k ( y ) that can be estimated from the data y i with sampling density ˜ q ( y ) . The second one, B ∈ R with distribution P ( N ) and kernel K such that L 2 ( R , q ) is the RKHS with orthonormal basis functions ϕ j ( x ) that can be estimated from the data b i with sampling density q ( b ) . Let assume the representation in (8). T aking inner- product of (8) with φ k and imposing the orthogonality con- dition, we obtain, µ Y | b , k = h p ( ·| b ) , φ k i = E Y | b [ φ k ] . Applying (A6) and the equality in (A5) from Theorem 1 with the random variable X replaced by B , we hav e µ Y | b , k = h µ Y | b , φ k i ˜ q = h q C Y B C − 1 BB K ( b , · ) , φ k i ˜ q = h q C Y B C − 1 BB ∑ j ϕ j ( b ) ϕ j , φ k i ˜ q . = ∑ j ϕ j ( b ) h q C Y B C − 1 BB ϕ j , φ k i ˜ q = ∑ j ϕ j ( b ) h C Y B C − 1 BB , ϕ j ⊗ φ k i q ⊗ ˜ q . (B1) In the second equality above, we used the reproduc- ing property , ϕ j ( b ) = h K ( b , · ) , ϕ j i q , such that K ( b , · ) = ∑ j ϕ j ( b ) ϕ j ( · ) . Define the projection of the operators C Y B and C BB on the space spanned by the basis functions φ k ( y ) , ϕ j ( b ) , re- spectiv ely , with matrices C Y B and C BB whose components are, C Y B jk : = h C Y B , φ j ⊗ ϕ k i ˜ q ⊗ q = E Y B [ φ j ϕ k ] , C BB jk : = h C BB , ϕ k ⊗ ϕ k i q = E B [ ϕ j ϕ k ] , where the second equality in these two equations follows from (A4). Numerically , we can estimate these quantities using Monte-Carlo averages as shown in (10) and (11), respectiv ely . T o complete the proof of equality (9), we just show that, [ C Y B C − 1 BB ] k j : = ∑ l [ C Y B ] kl [ C − 1 BB ] l j = ∑ l h C Y B , φ k ⊗ ϕ l i ˜ q ⊗ q h C − 1 BB , ϕ l ϕ j i q = * C Y B , φ k ⊗ ∑ l h C − 1 BB , ϕ l ϕ j i q ϕ l !+ ˜ q ⊗ q = C Y B , φ k ⊗ C − 1 BB ϕ j ˜ q ⊗ q = C Y B C − 1 BB , φ k ⊗ ϕ j ˜ q ⊗ q . (B2) T ogether with (B1) and the Monte-Carlo approximations on C Y B and C BB , we obtain (9)-(11). In the deriv ation abov e, we used infinite number of basis functions. Numer- ically , we use finite number of basis functions as sho wn in the main text. APPENDIX C A simplified radiative transfer model In this Appendix, we discuss the simplified radiativ e transfer model used in Section 5. Given the physical vari- ables in the stochastic cloud model in Khouider et al. (2010), the first and second baroclinic potential temper- atures, θ 1 and θ 2 , respectiv ely , the equi valent boundary M O N T H L Y W E A T H E R R E V I E W 1 3 layer potential temperature, θ eb , and the vertically av erage water vapor content, q , we consider an idealistic radiative transfer model for the brightness temperature at wav enum- ber ν at clear-sk y condition, following the standard formu- lation in Liou (2002): h ν ( x ) = θ eb T ν ( 0 ) + Z ∞ 0 T ( z ) ∂ T ν ∂ z ( z ) d z , (C1) where x = { θ 1 , θ 2 , θ eb , q } and the temperature at height z is defined by as in (19). The first term on the right hand side of (C1) denotes the contrib ution from the surface and the second term denotes the contribution from the whole column of the atmosphere which is a weighted average of the temperature with weighting function, ∂ T ν ∂ z . In (C1), we define the transmission between heights z to ∞ , assuming the height of the satellite instrument is much larger than the troposphere, as follo ws, T ν ( z ) = exp ( − Z ∞ z α ν ( s ) d s ) . Here α ν denotes the absorption rate which we assumed to decrease exponentially as a function of height, α ν ( z ) = α ∗ ν q exp ( − z H ) , where α ∗ ν denotes a reference absorption rate that is to be determined. In our simulation, we set H = 3 km. W ith this assumption, we hav e, T ν ( z ) = exp ( − α ν ( z ) H ) and T ν ( 0 ) = exp ( − α ∗ ν qH ) . Also, the weighting functions become, ∂ T ν ∂ z ( z ) = α ν ( z ) T ν ( z ) , W e determine the wav enumber ν by specifying α ∗ ν cor- responding to the height of which the weighting function is maximum. That is, setting ∂ T 2 ν ∂ z 2 ( z ) = 0, we have α ∗ ν = exp ( z max H ) qH , where q needs to be fixed to a specific reference value. T o increase the sensitivity of the weighting function to q ∈ [ a , b ] where a , b denote the lower and upper bounds of q which can be obtained by the climatological data, we rescale q to fluctuate in between [ 1 , 2 ] by defining ˜ q = q − a b − a + 1 . and define the absorption rate to be, α ν ( z ) = α ∗ ν ˜ q exp ( − z H ) , α ∗ ν = exp ( z max H ) H . This means that when q = a , the corresponding α ν ( 0 ) will produce a weighting function, ∂ T ν ∂ z ( z ) that has a maximum weight at height z max . In Fig. 6, we show the weighting functions (black solid) with the reference humidity q = a associated with z max = 2 , 5 , 8 , 10 km (black dashes). W e also include the weighting functions corresponding to hu- midity v alue q = b (red solid), which sho w that depending on the value of q , the shape of weighting functions will vary between these tw o weights. For a single type of cloud, the basic equation for the R TM of the cloudy sky measurement with cloud fraction c and cloud top height z t is giv en as follows, h ν ( x , c ) = ( 1 − c ) θ eb T ν ( 0 ) + Z z t 0 T ( z ) ∂ T ν ∂ z ( z ) d z + cT ( z t ) T ν ( z t ) + Z ∞ z t T ( z ) ∂ T ν ∂ z ( z ) d z (C2) So, the first term denotes the contribution belo w the cloud, the second term denotes the contribution from the cloud top height, and the last term denotes the contrib ution from the clear sky abo ve the cloud top height. The stochastic component of the cloud model in Khouider et al. (2010) is a birth-death process that ac- counts for the ev olution of the cloud fractions, f = { f c , f d , f s } of three cloud types, cumulus, deep, and strat- iform clouds, respectively . Assuming that the deep and stratiform cloud top heights are similar , namely z d = 12km, and the cumulus cloud top height is z c = 3km, we consider a two-cloud type formulation as follo ws, h ν ( x , f ) = ( 1 − f d − f s ) h θ eb T ν ( 0 ) + Z z d 0 T ( z ) ∂ T ν ∂ z ( z ) d z i +( f d + f s ) T ( z d ) T ν ( z d ) + Z ∞ z d T ( z ) ∂ T ν ∂ z ( z ) d z = ( 1 − f d − f s ) h ( 1 − f c ) θ eb T ν ( 0 ) + Z z c 0 T ( z ) ∂ T ν ∂ z ( z ) d z + f c T ( z c ) T ν ( z c ) + Z z d z c T ( z ) ∂ T ν ∂ z ( z ) d z i +( f d + f s ) T ( z d ) T ν ( z d ) + Z ∞ z d T ( z ) ∂ T ν ∂ z ( z ) d z Here, the first tw o ro ws denotes the contrib ution belo w the deep and stratiform clouds, the first term in the last row denotes the contribution from the deep cloud top height, and the last term denotes the contribution from the atmo- spheric column abov e the cloud. One can check that if f c = 0 and/or f d + f s = 0, then this formulation is consis- tent with (C1) and (C2). In our numerical simulations, we simulate the observa- tions as follows (ignoring time inde x- i ) , y ν = h ν ( x , f ) + η ν , η ν ∼ N ( 0 , R o ) , 14 M O N T H L Y W E A T H E R R E V I E W for 16 wa venumbers ν chosen such that the weighting functions, ∂ T ν ∂ z , are maximum at heights 1 , 2 , . . . , 16 km. But assuming that we don’t know the cloud top heights, z c , z d , as well as the cloud fractions, f = { f c , f d , f s } , we will apply the filtering with the clear-sky observation model, ˜ h ν ( x ) = h ν ( x , 0 ) , y ν ≈ ˜ h ν ( x ) + b ν + η ν , η ν ∼ N ( 0 , R ) , where b ν denotes error for at frequency- ν . In our imple- mentation, we will either fix the parameter R or estimate it adaptiv ely using the method from Berry and Sauer (2013).

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment