Cross-Layer Multi-Cloud Real-Time Application QoS Monitoring and Benchmarking As-a-Service Framework

Cloud computing provides on-demand access to affordable hardware (multi-core CPUs, GPUs, disks, and networking equipment) and software (databases, application servers and data processing frameworks) platforms with features such as elasticity, pay-per-use, low upfront investment and low time to market. This has led to the proliferation of business-critical applications that leverage various cloud platforms. Such applications, hosted on single or multiple cloud provider platforms, have diverse characteristics and require extensive monitoring and benchmarking mechanisms to ensure run-time Quality of Service (QoS) (e.g., latency and throughput). This paper proposes, develops and validates CLAMBS: Cross-Layer Multi-Cloud Application Monitoring and Benchmarking as-a-Service, for efficient QoS monitoring and benchmarking of cloud applications hosted in multi-cloud environments. The major highlight of CLAMBS is its capability of monitoring and benchmarking individual application components, such as databases and web servers, distributed across cloud layers and spread among multiple cloud providers. We validate CLAMBS using a prototype implementation and extensive experimentation, and show that CLAMBS efficiently monitors and benchmarks application components on multi-cloud platforms including Amazon EC2 and Microsoft Azure.

💡 Research Summary

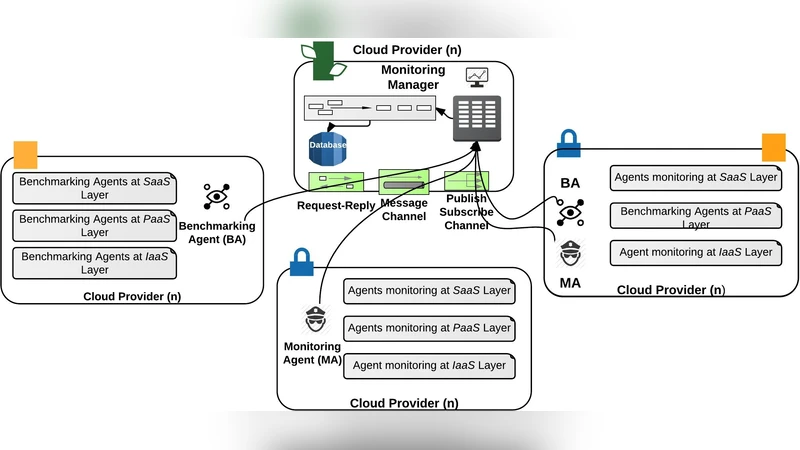

The paper addresses the growing need for fine‑grained quality‑of‑service (QoS) assurance in applications that are deployed across multiple cloud providers. Traditional monitoring tools focus either on low‑level infrastructure metrics or on a single provider, making them inadequate for modern, distributed workloads that span heterogeneous environments. To fill this gap, the authors propose CLAMBS (Cross‑Layer Multi‑Cloud Application Monitoring and Benchmarking as‑a‑Service), a framework that simultaneously monitors runtime performance and conducts systematic benchmarking of individual application components (e.g., databases, web servers) across cloud layers and providers.

Architecture. CLAMBS is built around four logical layers. The agent layer runs lightweight collectors on each virtual machine, gathering OS‑level statistics (CPU, memory, disk I/O) as well as application‑level indicators such as HTTP response times, database query latencies, and queue depths. A plugin‑based abstraction sits above the agents, normalising the disparate management APIs of major clouds (Amazon EC2 metadata, Azure Resource Manager, etc.) so that the same calls retrieve resource topology and pricing information regardless of provider. The benchmark engine deploys predefined workloads—read‑write mixes for databases, concurrent request bursts for web servers, file transfer tests for storage—and records throughput, latency, error rates, and resource utilisation in a reproducible manner. Finally, a central management and analytics server stores all time‑series data, exposes a RESTful API, and provides a web dashboard for real‑time visualisation, historical analysis, alerting, and automated scaling recommendations.
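The plugin-based abstraction described above can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the `CloudPlugin` interface, the `Instance` schema, the two stand-in plugins, and the price values are hypothetical and are not taken from the CLAMBS prototype. The stand-ins return canned data rather than calling any real provider API.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Instance:
    """Provider-neutral description of one virtual machine (hypothetical schema)."""
    provider: str
    instance_id: str
    region: str
    hourly_price_usd: float  # placeholder value, not a real price


class CloudPlugin(ABC):
    """Hypothetical plugin interface: one subclass per cloud provider."""

    @abstractmethod
    def list_instances(self) -> List[Instance]:
        """Return the provider's instances in the neutral Instance format."""


class StaticEC2Plugin(CloudPlugin):
    """Stand-in for an EC2 plugin; returns canned data instead of calling AWS."""

    def list_instances(self) -> List[Instance]:
        return [Instance("ec2", "i-demo-1", "us-east-1", 0.10)]


class StaticAzurePlugin(CloudPlugin):
    """Stand-in for an Azure plugin; returns canned data instead of calling Azure."""

    def list_instances(self) -> List[Instance]:
        return [Instance("azure", "vm-demo-1", "westeurope", 0.12)]


def discover_topology(plugins: List[CloudPlugin]) -> List[Instance]:
    """Merge the instance lists of all plugins into one provider-neutral view."""
    topology: List[Instance] = []
    for plugin in plugins:
        topology.extend(plugin.list_instances())
    return topology


topology = discover_topology([StaticEC2Plugin(), StaticAzurePlugin()])
for instance in topology:
    print(instance.provider, instance.instance_id, instance.region)
```

In a design like this, the caller of `discover_topology` never branches on the provider name, so supporting another cloud would amount to supplying one more `CloudPlugin` subclass.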

Implementation and Experiments. A prototype was built using Java agents and Python benchmark scripts. The authors deployed four virtual machines on Amazon EC2 and four on Microsoft Azure, creating three test scenarios: (1) a single‑cloud deployment, (2) a multi‑cloud distribution of the same application, and (3) a stress test that triggers auto‑scaling based on QoS thresholds. The agent overhead was measured at an average of 1.8 % CPU and 30 MB RAM per instance, with network traffic below 5 KB/s, confirming the solution’s lightweight nature. In the multi‑cloud case, CLAMBS captured inter‑instance latency differences within 5 ms, and the benchmark engine produced consistent performance metrics that enabled the system to automatically provision additional instances when latency or error‑rate thresholds were breached. The experiments demonstrated that CLAMBS can maintain QoS targets while optimising cost by revealing provider‑specific performance characteristics.
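The third scenario, in which breached latency or error-rate thresholds lead to provisioning of additional instances, could be approximated by a check like the one below. This is a sketch under stated assumptions: the `QoSThresholds` type, the `needs_scale_out` function, the use of mean latency as the trigger, and the threshold values are all hypothetical, since the summary does not specify how the prototype evaluates its thresholds.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Sequence


@dataclass(frozen=True)
class QoSThresholds:
    """Hypothetical QoS limits; the values used below are illustrative only."""
    max_latency_ms: float
    max_error_rate: float


def needs_scale_out(latencies_ms: Sequence[float], errors: int,
                    requests: int, thresholds: QoSThresholds) -> bool:
    """Return True when mean latency or error rate exceeds its threshold."""
    if requests == 0 or not latencies_ms:
        return False  # no traffic observed, so nothing to react to
    avg_latency = mean(latencies_ms)
    error_rate = errors / requests
    return (avg_latency > thresholds.max_latency_ms
            or error_rate > thresholds.max_error_rate)


limits = QoSThresholds(max_latency_ms=150.0, max_error_rate=0.05)
slow_window = needs_scale_out([100.0, 300.0], errors=0, requests=2, thresholds=limits)
healthy_window = needs_scale_out([100.0, 120.0], errors=0, requests=2, thresholds=limits)
print(slow_window, healthy_window)
```

A production rule would probably use percentiles and a cool-down period rather than a single mean over one window; the mean is used here only to keep the example short.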

Key Insights and Contributions.

- Cross‑layer visibility – By extending monitoring from the hypervisor up to the application logic, CLAMBS uncovers performance bottlenecks that would be invisible to infrastructure‑only tools.

- Provider‑agnostic design – The plugin architecture abstracts away API heterogeneity, so support for new clouds or on‑premises data centres can be added as plugins without changes to the core framework.

- Benchmark‑as‑a‑service – Standardised, repeatable workloads provide an objective baseline for comparing component performance across providers, supporting data‑driven migration and scaling decisions.

- Low overhead and scalability – The lightweight agents and efficient data aggregation enable monitoring of dozens of instances with negligible impact on the workloads themselves.
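To make the benchmark-as-a-service idea in the list above concrete, the following minimal harness runs a workload a fixed number of times and reports throughput, mean latency, and error rate. It is a sketch, not the CLAMBS benchmark engine: `run_benchmark`, the result keys, and the `flaky_operation` workload (which simulates a failure on every fourth call) are hypothetical names introduced here.

```python
import time
from statistics import mean
from typing import Callable, Dict


def run_benchmark(operation: Callable[[], None], iterations: int) -> Dict[str, float]:
    """Run `operation` repeatedly and summarise throughput, latency and errors."""
    latencies_ms = []
    errors = 0
    start = time.perf_counter()
    for _ in range(iterations):
        t0 = time.perf_counter()
        try:
            operation()
        except Exception:
            errors += 1  # count the failure but keep the run going
        latencies_ms.append((time.perf_counter() - t0) * 1000.0)
    elapsed = time.perf_counter() - start
    return {
        "iterations": iterations,
        "throughput_ops_per_s": iterations / elapsed if elapsed > 0 else float("inf"),
        "mean_latency_ms": mean(latencies_ms) if latencies_ms else 0.0,
        "error_rate": errors / iterations if iterations else 0.0,
    }


call_counter = {"n": 0}


def flaky_operation() -> None:
    """Simulated workload: every fourth call raises to mimic a failed request."""
    call_counter["n"] += 1
    if call_counter["n"] % 4 == 0:
        raise RuntimeError("simulated failure")


result = run_benchmark(flaky_operation, iterations=20)
print(result)
```

Running the same harness with an identical workload against components on different providers gives results in the same format, which is what makes a cross-provider comparison possible.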

Limitations and Future Work. The current benchmark suite focuses on static workloads; it does not yet model complex micro‑service transaction graphs or serverless functions. The authors propose extending CLAMBS with AI‑driven predictive models that forecast QoS violations before they occur, and integrating support for GPU utilisation and Kubernetes metrics to cover emerging cloud-native workloads.

Conclusion. CLAMBS offers a practical, extensible solution for real‑time QoS monitoring and performance benchmarking in multi‑cloud environments. Its ability to observe individual application components across layers and providers, combined with an automated benchmarking service, equips operators with the data needed to enforce SLAs, optimise resource allocation, and make informed decisions about workload placement and scaling. The prototype’s successful validation on Amazon EC2 and Microsoft Azure demonstrates the framework’s feasibility and sets the stage for broader adoption in heterogeneous cloud ecosystems.
