Proactive Quality Guidance for Model Evolution in Model Libraries

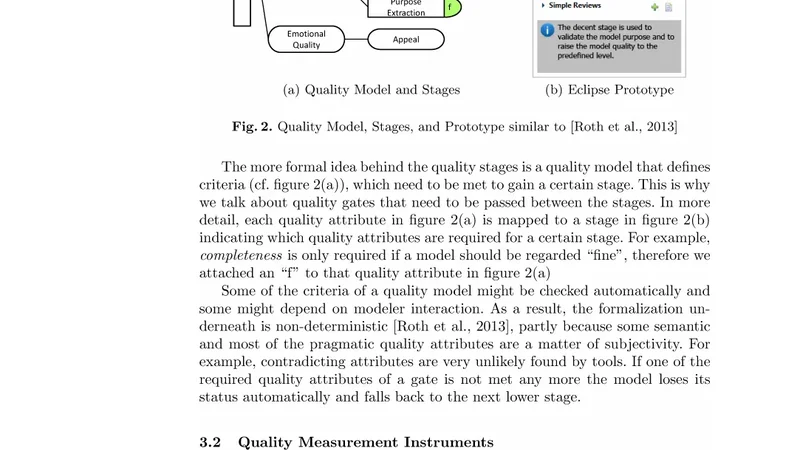

Model evolution in model libraries differs from general model evolution: it limits the scope to a manageable size and makes it possible to develop clear concepts, approaches, solutions, and methodologies. Looking at model quality in evolving model libraries, we focus on quality concerns related to reusability. In this paper, we put forward our proactive quality guidance approach for model evolution in model libraries. It uses an editing-time assessment linked to a lightweight quality model, corresponding metrics, and simplified reviews, all of which help to guide model evolution by means of quality gates that foster model reusability.

💡 Research Summary

The paper addresses the specific challenges of evolving models within model libraries, where the primary quality concern is reusability. Unlike general model evolution, the library context imposes strict boundaries on scope, versioning, and standardization, which the authors argue necessitates a tailored quality management approach. Their contribution is a proactive quality guidance framework composed of three tightly integrated components: a lightweight quality model, an edit‑time assessment mechanism, and a streamlined review process, all orchestrated through “quality gates” that act as checkpoints before a model can be added to or updated in the library.

The lightweight quality model distills the extensive body of model‑quality literature into a concise set of metrics that directly reflect reusability. These metrics include duplication rate of model elements, completeness of metadata, conformity to interface specifications, and the strength of inter‑model dependencies. Each metric is assigned a threshold that defines acceptable quality levels. By limiting the metric set to those most relevant for reuse, the model remains easy to understand and maintain, avoiding the overhead of heavyweight quality frameworks.
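To make the idea of a metric set with thresholds concrete, here is a minimal sketch in Python. The four metric names come from the summary above; the threshold values, the direction of each comparison, and the `duplication_rate` formula are illustrative assumptions, not values reported by the paper.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Metric:
    """One reusability metric with an acceptance threshold (hypothetical)."""
    name: str
    threshold: float
    higher_is_better: bool

    def passes(self, value: float) -> bool:
        # A metric passes when its value lies on the acceptable side of the threshold.
        if self.higher_is_better:
            return value >= self.threshold
        return value <= self.threshold


# Assumed thresholds, chosen only for illustration.
QUALITY_MODEL: List[Metric] = [
    Metric("duplication_rate", 0.10, higher_is_better=False),
    Metric("metadata_completeness", 0.90, higher_is_better=True),
    Metric("interface_conformity", 1.00, higher_is_better=True),
    Metric("dependency_strength", 0.30, higher_is_better=False),
]


def duplication_rate(element_names: List[str]) -> float:
    """Share of elements whose name repeats an earlier one (assumed definition)."""
    if not element_names:
        return 0.0
    return 1 - len(set(element_names)) / len(element_names)


def evaluate(values: Dict[str, float]) -> Dict[str, bool]:
    """Map each metric name to True (within threshold) or False (violated)."""
    return {m.name: m.passes(values[m.name]) for m in QUALITY_MODEL}


results = evaluate({
    "duplication_rate": duplication_rate(["Pump", "Valve", "Pump", "Sensor"]),
    "metadata_completeness": 0.95,
    "interface_conformity": 1.0,
    "dependency_strength": 0.2,
})
```

Keeping the model as plain data (a list of named thresholds) reflects the "lightweight" intent: adding or retiring a metric is a one-line change rather than a change to the assessment logic.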

The edit‑time assessment is implemented as a plug‑in for popular modeling tools. Whenever a user creates, modifies, or deletes a model element, the plug‑in instantly recomputes the relevant metrics and compares them against the predefined thresholds. If a threshold is violated, the tool issues a real‑time warning and, where possible, suggests corrective actions (e.g., refactoring duplicated elements or enriching missing metadata). This immediate feedback loop prevents quality debt from accumulating and keeps the development flow uninterrupted, because the calculations are deliberately lightweight and run locally.
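The feedback loop described above can be sketched as an event handler that recomputes metrics after every edit. This is a simplified illustration and not the authors' plug-in: the `Element` and `EditTimeAssessor` classes, the two thresholds, and the wording of the suggestions are all hypothetical, and a real modeling tool would supply its own change-notification API.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Element:
    """A model element reduced to a name and one metadata field (hypothetical)."""
    name: str
    description: str = ""


class EditTimeAssessor:
    # Assumed thresholds for illustration only.
    MAX_DUPLICATION = 0.10
    MIN_METADATA = 0.90

    def __init__(self) -> None:
        self.elements: List[Element] = []

    def on_edit(self, action: str, element: Element) -> List[str]:
        """Apply a create/modify/delete edit, then return current warnings."""
        if action == "create":
            self.elements.append(element)
        elif action == "delete":
            self.elements.remove(element)
        elif action == "modify":
            # Replace the first element that carries the same name.
            for i, existing in enumerate(self.elements):
                if existing.name == element.name:
                    self.elements[i] = element
                    break
        else:
            raise ValueError(f"unknown action: {action}")
        return self._check()

    def _check(self) -> List[str]:
        """Recompute the metrics and compare them against the thresholds."""
        if not self.elements:
            return []
        names = [e.name for e in self.elements]
        duplication = 1 - len(set(names)) / len(names)
        documented = sum(1 for e in self.elements if e.description)
        completeness = documented / len(self.elements)

        warnings = []
        if duplication > self.MAX_DUPLICATION:
            warnings.append("duplication too high: consider merging duplicated elements")
        if completeness < self.MIN_METADATA:
            warnings.append("metadata incomplete: add descriptions to undocumented elements")
        return warnings


assessor = EditTimeAssessor()
first = assessor.on_edit("create", Element("Pump", "Hydraulic pump"))
second = assessor.on_edit("create", Element("Pump"))   # duplicate, undocumented
third = assessor.on_edit("delete", Element("Pump"))    # removes the undocumented copy
```

Because each check only walks the in-memory element list, it stays cheap enough to run on every edit, which is the property the summary attributes to the local, lightweight calculations.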

The streamlined review process complements automation with minimal human intervention. After the edit‑time check, the model is presented to a reviewer who only needs to verify that the model passes all quality gates. The reviewer follows a concise checklist derived from the metric thresholds; if any gate fails, the model is automatically routed back to the author for remediation. This reduces the time and expertise required for traditional peer reviews while still guaranteeing a final human sign‑off.
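The routing logic of this review step can be sketched as a small decision function. The `Route` states and the `route_submission` function are hypothetical names introduced for illustration; the summary only states that failed gates send the model back to the author and that a reviewer gives the final sign-off.

```python
from enum import Enum
from typing import Dict, List, Tuple


class Route(Enum):
    BACK_TO_AUTHOR = "back_to_author"
    AWAIT_SIGN_OFF = "await_sign_off"
    ACCEPTED = "accepted"


def route_submission(
    gate_results: Dict[str, bool],
    reviewer_approved: bool = False,
) -> Tuple[Route, List[str]]:
    """Decide where a submitted model goes next.

    gate_results maps a quality-gate name to True (passed) or False (failed).
    Returns the route and the sorted names of any failed gates.
    """
    failed = sorted(name for name, passed in gate_results.items() if not passed)
    if failed:
        # Any failed gate sends the model back without involving the reviewer.
        return Route.BACK_TO_AUTHOR, failed
    if reviewer_approved:
        return Route.ACCEPTED, []
    # All gates pass, but the human sign-off is still outstanding.
    return Route.AWAIT_SIGN_OFF, []


rejected = route_submission({"duplication_rate": False, "metadata_completeness": True})
pending = route_submission({"duplication_rate": True, "metadata_completeness": True})
accepted = route_submission(
    {"duplication_rate": True, "metadata_completeness": True},
    reviewer_approved=True,
)
```

In this sketch the reviewer's approval is never consulted while a gate is failing, which mirrors the described division of labor: automation filters out non-conforming models, and the human only confirms models that already pass.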

To validate the approach, the authors conducted a case study in a medium‑sized enterprise that maintains a library of UML and SysML models used across several product lines. Over a six‑month period, the proactive framework was introduced and compared against the organization’s previous ad‑hoc quality checks. Quantitative results show a 30 % increase in model reuse rates, a 45 % drop in reported quality defects, and a 20 % reduction in average time required to prepare a model for library inclusion. Qualitative feedback from engineers highlighted the usefulness of instant feedback and the reduced burden of formal reviews.

The authors conclude that proactive, edit‑time quality guidance is both feasible and beneficial for model libraries. By focusing on a lightweight metric set, integrating real‑time assessment, and simplifying the review stage, the framework achieves a balance between rigor and agility. They also outline future work: refining metric extraction algorithms for higher precision, extending the quality model to support multi‑domain libraries (e.g., combining architectural and data models), and embedding quality gates into continuous integration pipelines so that every commit triggers an automated quality check. This vision promises a more sustainable evolution path for model libraries, ensuring that models remain high‑quality, reusable assets throughout their lifecycle.
