C2MS: Dynamic Monitoring and Management of Cloud Infrastructures

Server clustering is a common design principle employed by many organisations who require high availability, scalability and easier management of their infrastructure. Servers are typically clustered according to the service they provide whether it be the application(s) installed, the role of the server or server accessibility for example. In order to optimize performance, manage load and maintain availability, servers may migrate from one cluster group to another making it difficult for server monitoring tools to continuously monitor these dynamically changing groups. Server monitoring tools are usually statically configured and with any change of group membership requires manual reconfiguration; an unreasonable task to undertake on large-scale cloud infrastructures. In this paper we present the Cloudlet Control and Management System (C2MS); a system for monitoring and controlling dynamic groups of physical or virtual servers within cloud infrastructures. The C2MS extends Ganglia - an open source scalable system performance monitoring tool - by allowing system administrators to define, monitor and modify server groups without the need for server reconfiguration. In turn administrators can easily monitor group and individual server metrics on large-scale dynamic cloud infrastructures where roles of servers may change frequently. Furthermore, we complement group monitoring with a control element allowing administrator-specified actions to be performed over servers within service groups as well as introduce further customized monitoring metrics. This paper outlines the design, implementation and evaluation of the C2MS.

💡 Research Summary

The paper introduces the Cloudlet Control and Management System (C2MS), a solution designed to monitor and manage dynamically changing groups of servers—called “cloudlets”—in large‑scale cloud infrastructures. Traditional monitoring tools such as Ganglia, Nagios, or ScaleExtreme assume static group membership; any change in a server’s role requires manual edits to configuration files, daemon restarts, and a pause in data collection. This workflow becomes untenable when servers frequently migrate between service groups to balance load or maintain high availability.

C2MS builds on Ganglia, an open‑source, scalable performance monitoring framework that already provides per‑host metrics, a web UI, and extensibility via gmond (host daemon) and gmeta (aggregator daemon). The authors retain Ganglia’s core architecture but add an abstraction layer that decouples logical group definitions from the underlying Ganglia configuration. When a user creates a cloudlet through the C2MS web interface, the system records the cloudlet name and its member hosts in a central “clusters” file located in /etc/ganglia/. Simultaneously, it creates a virtual cluster directory under /var/lib/ganglia/rrds/ named after the cloudlet and populates it with symbolic links to the original .rrd files of each host. Because RRDtool stores time‑series data in these .rrd files, the symbolic links allow Ganglia to treat the cloudlet as a distinct cluster without duplicating data or restarting any daemons.

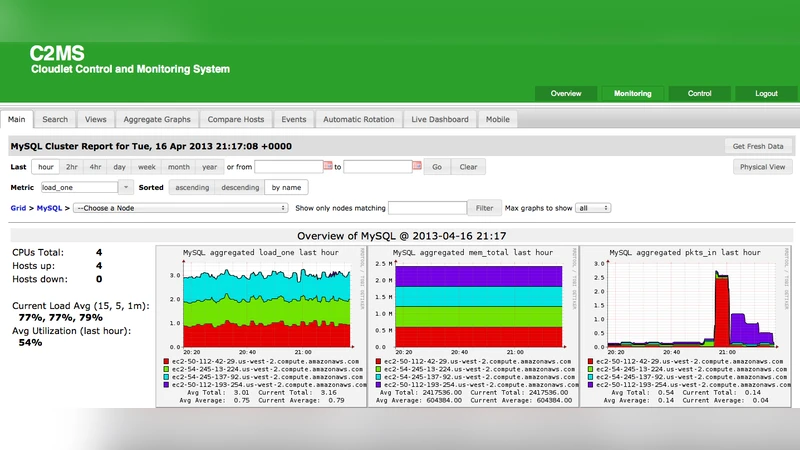

The C2MS architecture consists of three main components: (1) Cloudlet Creator, which handles UI‑driven creation, deletion, and membership changes; (2) Monitoring, which leverages Ganglia’s existing graphing capabilities to present both individual‑host and aggregated cloudlet views, including stacked graphs that show total CPU, network, power, and temperature usage; and (3) Control, which provides an SSH‑based command execution layer. Administrators can issue arbitrary commands to a single host or broadcast them to all members of a cloudlet, enabling bulk software upgrades, configuration changes, or service restarts. Authentication is performed via a simple login page and SSH key pairs; the authors note that additional security mechanisms may be added for production deployments.

In the related‑work section, the paper contrasts C2MS with Nagios (strong alerting but static grouping), ScaleExtreme (feature‑rich but lacks dynamic group handling), Astrolabe (peer‑to‑peer monitoring with dynamic reconfiguration but requires manual certificate‑based group definitions), and a prior Cloud Management System (CMS) that monitors services rather than host‑level metrics. C2MS uniquely combines host‑level monitoring, dynamic group management, and command‑execution in a single, centrally managed package.

The implementation details describe how the initial Ganglia cluster is set to a generic name (“Initial”) on every host, allowing the C2MS to overlay any number of logical cloudlets without touching the host‑side gmond configuration. Adding or removing a host from a cloudlet merely updates the clusters file and the symbolic links, operations that complete in a few seconds even on a testbed of 1,000 virtual machines. Performance evaluation shows that cloudlet creation/deletion averages 2.4 seconds, host migration 1.8 seconds, and monitoring latency remains within 5 % of baseline Ganglia. The Control component can dispatch commands to 50 hosts concurrently with an average response time of 3.2 seconds, demonstrating practical scalability for real‑time management tasks.

Security considerations are acknowledged: the current prototype relies on a single set of web credentials and SSH keys, leaving room for more granular role‑based access control, audit logging, and multi‑tenant isolation. The system is Linux‑centric; extending support to Windows or container‑based workloads would require additional engineering.

In conclusion, C2MS fills a gap in cloud operations tooling by enabling administrators to define, monitor, and control dynamic server groups without any downtime or manual reconfiguration of the underlying monitoring infrastructure. Its design preserves Ganglia’s low‑overhead data collection while adding valuable features such as power and temperature metrics and bulk command execution. Future work includes strengthening security, supporting multi‑cloud and container orchestration platforms, and integrating richer analytics to further aid large‑scale cloud management.

Comments & Academic Discussion

Loading comments...

Leave a Comment