Emotional Expression Classification using Time-Series Kernels

Estimation of facial expressions, as spatio-temporal processes, can take advantage of kernel methods if one considers facial landmark positions and their motion in 3D space. We applied support vector classification with kernels derived from dynamic t…

Authors: Andras Lorincz, Laszlo Jeni, Zoltan Szabo

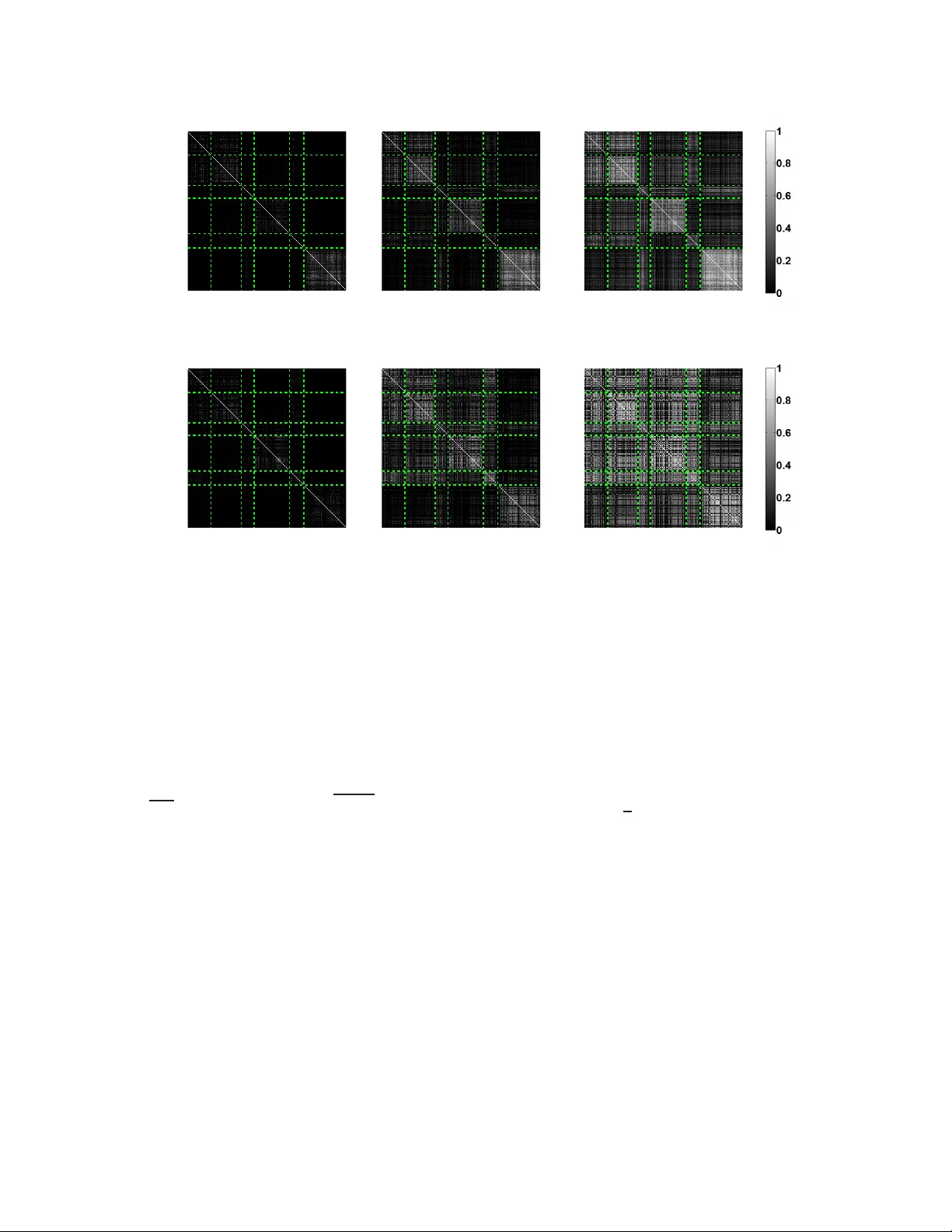

Emotional Expr ession Classification using Time-Seri es K er nels ∗ Andr ´ as L ˝ orincz 1 , L ´ aszl ´ o A. Jeni 2 , Zolt ´ an Szab ´ o 1 , Jeff rey F . Cohn 2, 3 , and T akeo Kanade 2 1 E ¨ otv ¨ os Lor ´ and Uni versity , Budapest, Hungary , {andras.lo rincz, szzoli}@elte.h u 2 Carnegie Mellon Uni versity , P ittsbur gh, P A, laszlo.jen i@ieee.org , t k@cs.cmu.edu 3 Univ ersity of Pittsbur gh, Pittsbur gh, P A, jeffcohn@c s.cmu.edu Abstract Estimation of facial expr essions, as spatio-temporal pr o- cesses, can take a dvantage of kernel methods if one consid- ers facial landmark positions and their motion in 3D space. W e applied sup port vector classifica tion with kernels de- rived fr om dyn amic time-warp ing similarity measur es. W e achieved over 99% accuracy – me asur ed by ar e a u nder R OC curve – using only the ’mo tion pattern’ of the PCA compr essed r epr esen tation of the marker point vector , th e so-called sh ape parameters. Beyond the classification of full motion pa tterns, several e xpr essions wer e r ecognized with over 90 % accu racy in as few as 5-6 frames fr om their onset, about 200 milliseconds. 1. Intr oduction Because th ey e nable mo del b ased pr ediction and timely reactions, the an alysis and iden tification of spatio- temporal processes, includ ing con curren t and interacting events, are of gr eat impo rtance in many a pplications. Spatio-temp oral processes have intriguing featu res. C onsider th ree exam- ples. Fir st is a featu re-length movie, wh ich is a set of time series of p ixel intensities in which the number of pixels is on the o rder of 1 00,00 0 and the values follow each other at a rate of 30 fps for a time interval of 2 h ours or so. Second are financial time series. Currency exchange r ates, stock s, and many othe r finance related data are h eavily af fected by both common underlying processes and by one another and exhibit large coupled fluctuatio ns over 9 orde rs o f m agni- tude [ 16 ]. Third, and a focus of the current paper, a re facial expression time series. Th e mo tion pattern s of landmark points of a face, such as m outh an d eye co rners com prise a ’landmark space’. The dyn amics of c hange in this space can reveal emotion, pain, and cognitive states, and regulate so- ∗ c 2013 IEEE . IEEE Internation al W orkshop on Analysis and Model- ing of Fac es and Gestures, Portland, Oregon, 28 June 2013 (acc epted). cial in teraction. For e ffecti ve human -comp uter interaction, automated facial expression analysis is impor tant. Figure 1. Overv iew of the system. In all three types of time series, i.e., movies, financial data, and facial expr essions, we are dealing with sp atio- temporal pro cesses. For each, kern el methods hold great 1 promise. The d emands for c haracterizin g such proc esses pose spe- cial challenges because very d ifferent signals may repre sent the same process when the process is vie wed from different distances (in the case of the movie), different ti me scales (market data), or different viewing angles ( facial expres- sions). Such inv ariances an d d istortions n eed be taken into account. Early attempts to use supp ort vector methods for t he pre- diction o f time series were very pro mising [ 15 ] ev en in the absence of alg orithms compensating f or temporal dis- tortions. In other areas, such as speech rec ognition , time warping alg orithms h av e b een developed early in o rder to match slo wer and faster s peech fragmen ts, see, e.g., [ 6 ] and the refer ences therein. Dy namic time warp ing is o ne of th e most efficient metho ds that offer the com parison of te mpo- rally distorted samples [ 18 ]. Recently , th e two method s, i.e., dy namic tim e warping and SVMs h av e been combined and sho w considerab le per- forman ce increases in the analysis of spatio- temporal sig- nals [ 5 , 4 ]. Efficient method s using independ ent compo- nent analysis [ 12 ], Haar filters [ 22 ], hidde n Markov models [ 1 , 2 , 20 ] have been applied for problems r elated to the esti- mation o f emotio ns and facial expression s. Here, we study the efficiency of novel dynamic time warping kernels [ 5 , 4 ] for emotional expression estimation. Our contributions are as follows: W e show that (1) tim e series kernel metho ds ar e highly prec ise for emotiona l ex- pression estimation using land mark data only and ( 2) they enable ea rly and r eliable estimation of expression a s soon as 5 frames from expression onset, i.e., around 200 ms. The paper is organ ized as follows. First, in th e Meth ods section we revie w how land mark points are observed in 3 dimensions, sketch the two spatio-temp oral kernels that we applied, and describe supp ort vector machine ( SVM) p rin- ciples. Section 3 is abou t ou r experimen tal stud ies. It is followed by our Discussion and Summary . 2. Methods 2.1. F a cial F eature P oint Loc alization T o lo calize a dense set of facial lan dmarks, Activ e Ap- pearance Models (AAM) [ 14 ] and Constrained Local Mod- els (CLM) [ 19 ] are often used . These metho ds register a dense parame terized shap e mode l to a n image such that its landmark s correspon d to consistent locations on the face. Of the two, person specific AAMs ha ve higher precision than CLMs, but they must be trained for each person be- fore use. On the oth er hand, CLM meth ods can be u sed for person-in depend ent f ace alignmen t b ecause of the localized region templates. In this work we use a 3D CLM method, where the shape model is defined by a 3D mesh and in particular the 3 D ver - tex locations of the mesh, called landmar k points. Consider the shape of a 3 D CLM as the coordin ates of 3D vertices that make up the mesh: x = [ x 1 ; y 1 ; z 1 ; . . . ; x M ; y M ; z M ] , (1) or , x = [ x 1 ; . . . ; x M ] , where x i = [ x i ; y i ; z i ] . W e h av e T samples: { x ( t ) } T t =1 . W e assume th at – apart fr om scale, rotation, and translation – all samp les { x ( t ) } T t =1 can be approx imated by means o f th e lin ear principal compon ent analysis (PCA). In the next subsection we b riefly describe the 3D Poin t Distribution Mo del and ho w th e CLM meth od estimates t he landmark positions. 2.1.1 Point Dis tribution Model The 3D p oint distribution mod el ( PDM) describes n on-rig id shape variations linearly and com poses it with a global rigid transform ation, placing the shape in the image frame: x i ( p ) = s PR ( ¯ x i + Φ i q ) + t , (2) ( i = 1 , . . . , M ) , where x i ( p ) denotes the 3D locatio n of th e i th landmark and p = { s, α, β , γ , q , t } denote s the pa rameters of th e model, wh ich con sist of a glob al scaling s , ang les of rotation in th ree dimen sions ( R = R 1 ( α ) R 2 ( β ) R 3 ( γ ) ), a translation t and non-rig id transfor- mation q . Here ¯ x i denotes the mean location o f the i th landmark (i. e. ¯ x i = [ ¯ x i ; ¯ y i ; ¯ z i ] and ¯ x = [ ¯ x 1 ; . . . ; ¯ x M ]) and P denotes t he projection matrix to 2D: P = 1 0 0 0 1 0 . (3) W e assume that the prior of the parameters follow a nor- mal distrib ution with mean 0 and variance Λ at a parameter vector q : p ( p ) ∝ N ( q ; 0 , Λ ) , (4) From x i points PCA provides ¯ x in ( 2 ) and Λ in ( 4 ). 2.1.2 Constrained Local Model CLM is con strained throug h the PCA of PDM. It works with local e xperts , whose opinion is considered independent and are multiplied to each other: J ( p ) = p ( p ) M Y i =1 p ( l i = 1 | x i ( p ) , I ) → min p , (5) where l i ∈ { − 1 , 1 } is a stochastic variable telling whether the i th marker is in its p osition or no t, p ( l i = 1 | x i ( p ) , I ) is the probability that for image I and for marker position x i (being a f unction of param eter p , i.e., for x i ( p ) ) the i th marker is in its position. The interested reader is referred to [ 19 ] for the details of the CLM algorithm. 1 2.2. T ime-se ries Kernels Kernel b ased classifiers, like any other classification scheme, shou ld be robust against in variances and distor- tions. Dynamic time warping, traditio nally solved by dy- namic progr amming, has been introduced to overcome tem- poral distortions and has been successfully com bined with kernel meth ods. Below , we describe tw o k ernels that we ap- plied in o ur numeric al studies: the Dy namic T ime W arpin g (DTW) kernel and the Global Alignment (GA) kernel. 2.2.1 Dynamic Time W arping K ernel Let X N be the set of discrete- time time series taking val- ues in an arbitrary space X . On e can try to align two time series x = ( x 1 , ..., x n ) and y = ( y 1 , ..., y m ) of len gths n and m , respecti vely , in v ario us ways by distorting them. An alignment π has len gth p and p ≤ n + m − 1 since the two series have n + m poin ts and they are matched at least at one po int of time. W e use the n otation o f [ 4 ]. An align- ment π is a pair of incr easing integral vectors ( π 1 , π 2 ) of length p such that 1 = π 1 (1) ≤ ... ≤ π 1 ( p ) = n and 1 = π 2 (1) ≤ ... ≤ π 2 ( p ) = m , with unitary incremen ts and no simultane ous repe titions. In turn, f or all indices 1 ≤ i ≤ p − 1 , the increm ent vector of π belongs to a set of 3 elementary moves as follows π 1 ( i + 1) − π 1 ( i ) π 2 ( i + 1) − π 2 ( i ) ∈ 0 1 , 1 0 , 1 1 (6) Coordinates of π are also known as warping functio ns. Let A ( n, m ) den ote the set of all alignm ents between two time series of length n and m . The simplest DT W ’distance’ between x a nd y is defined as D T W ( x, y ) def = min π ∈ A ( n,m ) D x,y ( π ) (7) Now , let | π | denote the length of alignment π . The cost can be defin ed by means of a local diver gence φ that measur es the discrep ancy between any two points x i and y j of vectors x and y . D x,y ( π ) def = | π | X i φ ( x π 1 ( i ) , y π 2 ( i ) ) (8) The squared Euclidean distance is often used to define the div ergence φ ( x, y ) = || x − y || 2 . Althoug h this measure is 1 W e used the CLM software of Saragih, which is ava ilabl e here https://github .com/kylemcdona ld/FaceTracker . symmetric, it does not satisfy the triangle inequ ality und er all con ditions – so it is not rigor ously a distance – and can- not be used directly to define a positive semi-definite ker- nel. This prob lem ca n be alleviated by pro jecting matrix D x,y ( π ) to a set of symm etric positive semi-d efinite m a- trices. There a re various metho ds for acco mplishing such approx imations. T hey called distance substitution [ 7 ]. W e applied the alter nating pro jection method of [ 8 ] that fin ds the n earest corre lation matrix . Den oting the n ew matrix by ˆ D T W ( x, y ) , the m odified DT W distance induces a positi ve semi-definite kernel as follo ws k DT W ( x, y ) = e − 1 t ˆ DT W ( x,y ) , (9) where t is a constan t. The full procedur e ca n b e summarized as f ollows: (1) take the samples, (2) co mpute the Euclidean distances for each sample pair, (3) build the matrix f rom the se sam ple pairs, (4) find th e n earest correlatio n matrix , (5 ) u se it to construct a kernel, an d (6) com pute the Gram matrix o f the support vector classification pro blem. Fig. 2 (a)-( c) show Gram matrices induce d by the pseu do-DTW kern el with different t parameters. 2.2.2 Global Alignment Ker nel The Glob al Alignm ent (GA) kernel assumes that the m in- imum value of alignm ents may be sensitive to peculiari- ties of the time series an d inten ds to take advantage of all alignments weighted exponen tially . It is defin ed as the sum of expon entiated and sign changed costs of the individual alignments: k GA ( x, y ) def = X π ∈ A ( n,m ) e − D x,y ( π ) . (10) Equation ( 10 ) can be rewritten by b reaking up the align - ment distances acc ording to th e local d i vergences: similar- ity function κ is induced by diver gence φ : k GA ( x, y ) def = X π ∈ A ( n,m ) | π | Y i = i e − φ ( x π 1 ( i ) ,y π 2 ( i ) ) (11) def = X π ∈ A ( n,m ) | π | Y i = i κ x π 1 ( i ) , y π 2 ( i ) , (12) where notation κ = e − φ was introduced fo r th e sake of simplicity . It has been argued that k GA runs over the whole spectrum o f th e costs an d g iv es rise to a smoother m easure than the minimum of the co sts, i.e., the DTW distan ce [ 5 ]. It h as b een shown in th e same pap er that k GA is p ositiv e definite pr ovided that κ/ (1 + κ ) is positive definite o n X . Furthermo re, the com putational effort is similar to that o f t = 2 Anger Disgust Fear Joy Sadness Surprise (a) t = 4 (b) t = 8 (c) σ = 16 Anger Disgust Fear Joy Sadness Surprise (d) σ = 32 (e) σ = 64 (f) Figure 2. Gram matrices induced by th e pseudo-DTW kernel (a-c) and the GA kernel (d-f) with different parameters. The rows and columns represent the time-series grouped by the emotion labels ( the boundaries of the dif ferent emotional sets are de noted with green d ashed lines). The pix el intensities in each cell sho w the simil arity between tw o time-series. the DTW d istance; it is O ( nm ) . Cuturi argu ed in [ 4 ] that global a lignment kernel ind uced Gr am matrix d o n ot tend to be diagon ally dom inated as long as the sequen ces to be compare d have similar lengths. In our numerical simulations, we used local kernel e − φ σ suggested by Cuturi, where φ σ def = 1 2 σ 2 || x − y || 2 + log 2 − e − || x − y || 2 2 σ 2 . (13) Fig. 2 (d)- (f) show Gram ma trices induced b y the GA kernel with dif f erent σ parameter s. 2.3. T ime-se ries Classification using SVM Suppor t V ecto r Machines ( SVMs) are very powerful f or binary and m ulti-class classification as well as f or regres- sion p roblems [ 3 ]. They are r obust against outliers. For two-class sep aration, SVM estimates the optima l separat- ing hyper-plane between the two class es by maximizing the margin between th e hyper-plane and clo sest p oints o f the classes. T he c losest poin ts of th e classes ar e called suppo rt vectors; the optima l separa ting h yper-plane lies at half dis- tance between them. W e are g iv en sample an d label p airs ( x ( i ) , y ( i ) ) with x ( i ) ∈ R m , y ( i ) ∈ {− 1 , 1 } , and i = 1 , ..., K . Here, for class ’1’ and for class ’2 ’ y ( i ) = 1 an d y ( i ) = − 1 , re- spectiv ely . W e also hav e a featu re m ap φ : R m → H , where H is a Hilber t-space. The kernel implicitly pe r- forms the do t produ ct calculations between mapp ed points: k ( x, y ) = h φ ( x ) , φ ( y ) i H . T he supp ort vector classification seeks to minimize the cost function min w ,b,ξ 1 2 w T w + C K X i =1 ξ i (14) y ( i ) ( w T φ ( x ( i ) ) + b ) ≥ 1 − ξ i , ξ i ≥ 0 , (15) where ξ i ( i = 1 , . . . , K ) are th e so- called slack variables that gener alize th e or iginal SVM con cept with separating hyper-planes to sof t-margin classifiers that have outliers that can not be separated. 3. Experiment s 3.1. Cohn-Kanade Extended Dataset In ou r simulations we used the Cohn -Kanade Extended Facial Expression (CK+) Database [ 13 ]. This d atabase was developed for automated facial image analysis and sy n- thesis and fo r p erceptual studies. The databa se is widely (a) (b) (c) (d) (e) (f) Figure 3. ROC curv es of the different emotion classifi ers: (a) anger , (b) disgust, (c) fear, (d) joy , (e) sadness and (f) surprise. T hick li nes: performance using all frames of the sequences. Thin lines: performance using the 6th frames of the sequences. Solid (dotted) line: results for pseud o DTW (GA) kernel. used to compare the performan ce of different models. The database contains 123 dif ferent subjects and 593 frontal im- age s equence s. From these, 118 subjects are annotated with the sev en universal emotion s (ang er , con tempt, disgu st, fear, happy , sad and surprise). Action units are also provided with this d atabase for the apex fr ame. The origin al Cohn- Kanade Facial Expr ession Datab ase distribution [ 11 ] had 486 F ACS-coded sequen ces f rom 97 subjects. CK+ has 593 posed sequences with full F A CS coding of the peak frames. A subset of actio n units were cod ed for presence or absence. For these sequences th e 3D landm arks and shape par ame- ters were provided by the CLM tracker itself. 3.2. Emotional Expression Classification In this set of exper iment we studied the two kernel m eth- ods on the CK+ d ataset. W e measured the per forman ces of the methods for emotion recognition. First, we tracked facial expressions with the CLM tracker and annotate d all im age sequences starting f rom the neu - tral expr ession to the pea k o f th e emotion. The CLM e sti- mates the rigid and non-rigid transformation s. W e r emoved the rigid ones from the faces and represen ted the sequences as mu lti-dimension al time-ser ies built from th e non -rigid shape parameters. W e c alculated Gram matrices using the pseudo DTW an d the GA kernels and perfor med leave-one-subje ct ou t cross validation to maximally utilize the av ailable set o f training data. For bo th kern els, we sear ched fo r th e best param e- ter ( t in the case of pseu do-DTW kernel a nd σ in the case of GA kernel) between 2 − 5 and 2 10 on a logarithmic scale with equidistant steps and selected th e parameter having the lowest mean classification erro r . The SVM regula rization parameter ( C ) was searched within 2 − 5 and 2 5 in a similar fashion. If the pseudo-DTW kernel based Gram matrix w a s not positi ve s emi-definite then we projected it to the nearest positive semi-definite matrix using the alternating pr ojec- tion method of [ 8 ]. The result of the classification is sh own in Fig. 3 . Perfor- mance is near ly 100 % for expressions with large d eforma- tions in th e facial f eatures, such as disgu st, happine ss and surprise. T o the best of o ur knowledge, classification perform ance with time- series kernels is better than th e best av ailab le re- sults to date, inclu ding spatio-tem poral ICA, boosted dy- namic featu res, and non- negativ e m atrix factorizatio n tech- niques. For detailed comparisons, see T a ble 1 . 3.3. Early Expression Cl assification Encour aged b y the results of t he first e x perimen t, we de- cided to co nstrain the m aximum length of th e sequen ces used in training and testing in ord er to estimate perfo rmance in the early phase of the emotion ev ents. W e cro pped the the time series between 2 and 16 fram es and tra ined kernel SMVs f or on e-vs-all emotio n classifica- T able 1. (a) Comparisons with hand-designed spatio-temp oral Gab or filters (W u et al. 2010 [ 21 ]), lea rned spatio-temporal ICA filters (Lon g et al. 2012 [ 12 ]) and Sparse Non-negati ve Matrix Factorization (NMF) filters (Jeni et al. 2013 [ 9 ]) on the fi rst 6 frames. (b) Comparisons with boosted dynamic features (Y ang et al. 2009 [ 22 ]) on the last frames of the sequences. (a) Method Anger Disg. Fear Joy Sadn. Surp. A ver age W u et al. [ 21 ] 0.829 0.677 0.667 0.877 0.784 0.879 0.786 Long et al. [ 12 ] 0.774 0.711 0.692 0.894 0.848 0.891 0.802 Jeni et al. [ 9 ] 0.817 0.908 0.774 0.938 0.865 0.886 0.865 This work, DTW 0.873 0.893 0.793 0.892 0.843 0.909 0. 867 This work, GA 0 .921 0.905 0.887 0.910 0. 871 0.930 0.904 (b) Method Anger Disg. Fear Joy Sadn. Surp. A ve rage Y ang et al. [ 22 ] 0.973 0.941 0.916 0.991 0.978 0.998 0.966 Long et al. [ 12 ] 0.933 0.988 0.964 0.993 0.991 0.999 0.978 Jeni et al. [ 9 ] 0.989 0.998 0.977 0.998 0.994 0.994 0.992 This work, DTW 0.991 0.994 0.987 0.999 0.995 0.996 0. 994 This work, GA 0 .986 0.993 0.986 1.000 0.984 0.997 0.991 (a) (b) (c) (d) (e) (f) Figure 4. Area Under R OC curve values of the dif ferent emotion classifiers: (a) anger , (b) disgust, (c) fear, (d) joy , (e) sadness and (f) surprise. Solid (dotted) line: results for the pseudo DTW (GA) kernel. tion. Figur e 4 shows the classification p erforma nce a s a function of the max imum length of the seque nces. Acco rd- ing to the figu res, 3 -to-4 f rames c an reach 80% A UC-ROC perfor mance, whereas 5-to-6 frames are sufficient f or about 90% perfor mance. 4. Discussion and Summary W e hav e stud ied ti me-series k ernel methods for th e anal- ysis of emo tional expressions. W e used the well k nown 3D CLM meth od with th e available op en source C++ im ple- mentation of Jason Saragih. Compared to previous results, we f ound superior p erform ances b oth on the first 6 fram es and on the last few frames of the sequences collected fr om the CK+ database. It is no table th at we used on ly shape informa tion and neglected the textural one , since the 3D CLM model can compensate for the head poses making the method robust against head pose variations [ 10 ]. The NMF m ethod [ 9 ] that deep ly exploits textural fea- tures co mes close to o ur metho d and on e expects that m ix- ing the two methods may un ite the advantages of the two a p- proach es, namely the rob ustness of the shape based method against pose v ariations and light condition s, and sometimes strong te xtural changes under small landmar k position vari- ations. Also, textural chan ges are less sensitive to the esti- mation noise of the landmark positions. W e achieved highly pro mising r esults at ear ly tim es of the ser ies: 3- to-4 frame s reach ed 80% A UC-R OC p erfor- mance, wher eas 5-to-6 frame s wer e sufficient fo r about 90% perfor mance. Such early detection enab les timely respo nse in hum an-com puter inte ractions and collab orations. Fur- thermor e, the early frames of the ser ies ha ve smaller A UC values and should make emotion estimation more robust. In sum, time -series kernels are very pro mising for em o- tion reco gnition. There is a nu mber of p otential im- provements to o ur metho d, such as (i) joined texture an d shape ba sed facial expression reco gnition usin g for exam- ple, pr obabilistic SVMs, and (ii) n ovel DTW optimization methods, like lower boun ding or the U CR suite ap proach [ 17 ] that c an make th e pro posed system tractable for real- time analysis. 5. Ackno wledgments The resear ch was carried out as p art of the EI TKIC 12- 1-201 2-000 1 p roject, which is supporte d by the Hun gar- ian Government, man aged by the National Dev e lopment Agency , financed by the Resear ch and T ec hnolog y In- novation Fund an d was perf ormed in coo peration with the EIT ICT Labs Budapest Associate Partner Group. ( www.ictlabs. elte.hu ) W e are gratef ul to Jason Saragih for providing his CLM code for our w ork. Refer ences [1] K. Bousmalis, L.-P . Morency , and M. Pantic. Modeling hid- den dynamics of multimodal cu es for spontaneous agreement and disagreement recognition. In Procee dings of the Ninth IEEE Internationa l Confer ence on Automatic F ace and Ges- tur e Recognition (FG 2011) , pages 746–752, 2011. 2 [2] K. Bousmalis, S. Zafeiriou, L.-P . Morency , and M. Pantic. Infinite hidden conditional random fields for human behav- ior analysis. IEEE Tr ansactions on Neural Networks and Learning Systems , 24(1):170 –177, 2013. 2 [3] C.-C. Chang and C.-J. Li n. LIBSVM: A library f or support vector machines. ACM Tr ansactions on Intelligent Systems and T echnolo gy , 2:27:1–2 7:27, 2011. S oftware available at http://www.cs ie.ntu.edu.tw / ˜ cjlin/libsvm . 4 [4] M. Cuturi. Fast global al ignment kernels. In Pr oceedings of the In ternational Con fer ence on Mac hine Learning , 2011. 2 , 3 , 4 [5] M. Cuturi, J.-P . V ert , . Birkenes, and T . Matsui. A kernel for time series based on global alignments. In Procee dings of the IEEE International Con fer ence on Acoustics, Speech and Signal Proce ssing (IC ASSP 2007) , volume 2, pages 413– 416, 2007. 2 , 3 [6] J. R. Dell er , J. G. Proakis, and J. H. Hansen. Discrete-time pr ocessing of speech signals . I EEE Ne w Y ork, NY , USA:, 2000. 2 [7] B. Haasdonk and C. Bahlmann. Learning with distance sub- stitution kernels. In P attern R ecog nition , pages 220–227. Springer , 2004. 3 [8] N. J. Higham. Computing the nearest correlation matrix a problem from finance. IMA Jou rnal of Numerical A nalysis , 22(3):329–3 43, 2002. 3 , 5 [9] L. A. Jeni, J. M. Girard, J. F . Cohn, and F . De La T orre. Continuous AU intensity estimation using l ocalized, sparse facial feature space. In 2nd In ternational W orkshop o n Emo- tion Repr esentation, Analysis and Synthesis in Continuous T ime and Space (EmoSP ACE) , 201 3. 6 [10] L. A. Jeni, A. L ˝ orincz, T . Nagy , Z. Palotai, J. Seb ˝ ok, Z. Szab ´ o, and D. T ak ´ acs. 3d shape esti mation i n video se- quences provides high precision ev aluation of facial expres- sions. I mag e and V ision Computing , 30(10):785–795, 2012. 6 [11] T . Kanade, J. F . Cohn, an d Y . T i an. Comprehen siv e database for facial expression analysis. In P r oceedings of the F ourth IEEE Internationa l Confer ence on Automatic F ace and Ges- tur e Recognition (FG 2000) , pages 46–53, 2000. 5 [12] F . Long, T . W u, J. R. Mov ellan, M. S. Bartlett, and G. L it- tlew ort. Learning spatiotemporal features by using i ndepen- dent component analysis with application to facial expres- sion recognition. Neur ocomputing , 93:126 – 132, 2012. 2 , 6 [13] P . Luc ey , J. F . Cohn, T . Kanade, J. Saragih, Z. Ambadar , and I. Matthews. The extended Cohn-Kanade dataset (CK+): A complete dataset for action unit and emotion-specified ex- pression. In Pro ceedings of the Computer V ision and P at- tern Recognition W orkshops (CVP RW 20 10) , pages 94–101, 2010. 4 [14] I. Matthews and S. Baker . Active appearance models revis- ited. International Jo urnal of Computer V ision , 60(2):135 – 164, 2004. 2 [15] K.-R. M ¨ uller , A. J. Smola, G. R ¨ atsch, B. Sch ¨ olko pf, J. Kohlmo rgen, and V . V apnik. Predicting time series with support vector machines. In Artificial Neural Networks- ICANN’97 , pages 999–100 4. Springer , 1997. 2 [16] T . Preis, J. J. Schneider , and H. E. Stanley . Switching pro- cesses in fi nancial markets. Pr oceedings of the National Academy of Sciences , 108(19):767 4–7678 , 2011. 1 [17] T . Rakthanmanon, B. Campana, A. Mueen, G. Bati sta, B. W estov er, Q. Zhu, J. Zakaria, and E. K eogh. Search- ing and mining tril lions of ti me series subsequences under dynamic time warping. In Proce edings of the 18th ACM SIGKDD international confere nce on Knowledge discovery and data mining , pages 262–27 0. A CM, 2012. 7 [18] H. Sakoe and S. Chiba. Dynamic programming algorithm optimization for spoken word recognition. Acoustics, S peech and Signal P r ocessing, IEE E T ransactions on , 26(1):43–49, 1978. 2 [19] J. M. Saragih, S. Lucey , and J. F . C ohn. Deformable model fitting by regularized landmark mean-shift. International J ournal of Computer V ision , 91(2):200–215, 2011. 2 , 3 [20] M. F . V alstar and M. Pantic. Combined support vector ma- chines an d hidden mark ov mod els for mod eling facial action temporal dyn amics. In Human –Computer Inter action , pages 118–12 7. Springer , 2007. 2 [21] T . W u, M. Bartlett, and J. R. Movellan. Facial expression recognition using gabor motion energy filters. In Computer V isi on and P attern Recognition W orkshops (CVPRW), 2010 IEEE Computer Society Confere nce on , pages 42–47, 2010. 6 [22] P . Y ang, Q. Liu, and D. N. Metaxas. Boosting encoded dynamic features for facial exp ression recognition. P att ern Recogn ition Lett ers , 30 (2):132–139 , 2009. 2 , 6

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment