Optimal Periodic Sensor Scheduling in Networks of Dynamical Systems

We consider the problem of finding optimal time-periodic sensor schedules for estimating the state of discrete-time dynamical systems. We assume that {multiple} sensors have been deployed and that the sensors are subject to resource constraints, whic…

Authors: Sijia Liu, Makan Fardad, Engin Masazade

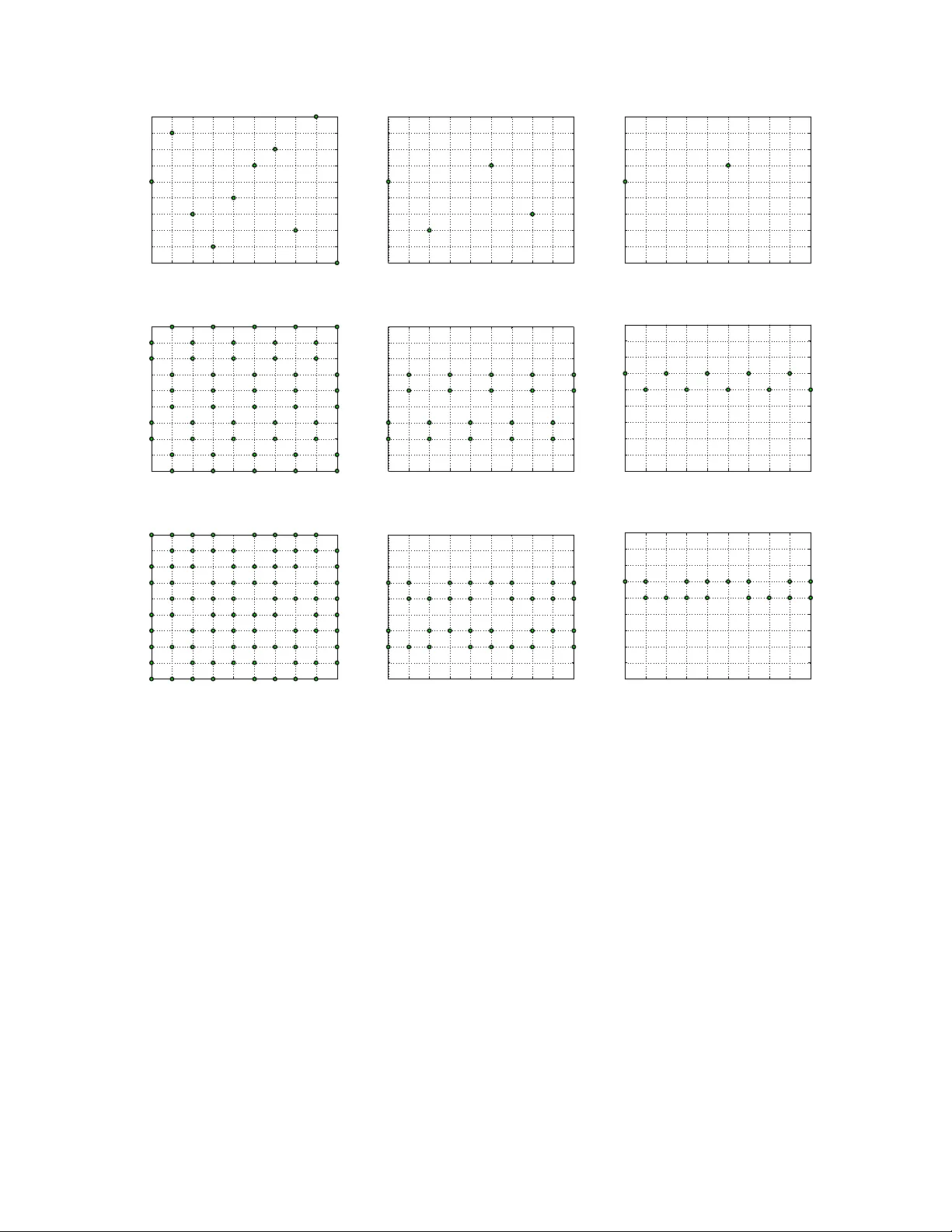

1 Optimal Periodic Sensor Scheduling in Networks of Dynamical Systems Sijia Liu, Student Member , IEEE, Makan Fardad, Member , IEEE, Engin Masazade, Member , IEEE, and Pramod K. V arshney , F ellow , IEEE Abstract W e consider the problem of finding optimal time-periodic sensor schedules for estimating the state of discrete-time dynamical systems. W e assume that multiple sensors have been deployed and that the sensors are subject to resource constraints, which limits the number of times each can be activ ated over one period of the periodic schedule. W e seek an algorithm that strikes a balance between estimation accuracy and total sensor activ ations over one period. W e make a correspondence between acti ve sensors and the nonzero columns of estimator gain. W e formulate an optimization problem in which we minimize the trace of the error cov ariance with respect to the estimator gain while simultaneously penalizing the number of nonzero columns of the estimator gain. This optimization problem is combinatorial in nature, and we employ the alternating direction method of multipliers (ADMM) to find its locally optimal solutions. Numerical results and comparisons with other sensor scheduling algorithms in the literature are provided to illustrate the effecti veness of our proposed method. Index T erms Dynamical systems, alternating direction method of multipliers, state estimation, sensor networks, sensor scheduling, sparsity . S. Liu, M. Fardad and P . K. V arshney are with the Department of Electrical Engineering and Computer Science, Syracuse Univ ersity , Syracuse, NY , 13244 USA e-mail: { sliu17, makan, varshney } @syr .edu. E. Masazade is with the Department of Electrical and Electronics Engineering, Y editepe Univ ersity , Istanbul, 34755, Turk ey e-mail: engin.masazade@yeditepe.edu.tr . The work of S. Liu and P . K. V arshney was supported by the U.S. Air Force Office of Scientific Research (AFOSR) under Grants F A9550-10-1-0263 and F A9550-10-1-0458. The work of M. Fardad was supported by the National Science Foundation under award CMMI-0927509. The work of E. Masazade was supported by the Scientic and T echnological Research Council of T urkey (TUBIT AK) under Grant 113E220. 2 I . I N T RO D U C T I O N W ireless sensor networks, consisting of a large number of spatially distributed sensors, have been used in a wide range of application areas such as en vironment monitoring, source localization and object tracking [1]–[3]. In a giv en region of interest, sensors observe the unkno wn state (e.g., field intensity or target location) which commonly ev olves as part of a linear dynamical system. A fusion center receiv es all the measurements and estimates the state over the entire spatial domain. Howe ver , due to the constraints on communication bandwidth and sensor battery life, it may not be desirable to have all the sensors report their measurements at all time instants. Therefore, the problem of sensor selection/scheduling arises, which seeks to activ ate different subsets of sensors at dif ferent time instants in order to attain an optimal tradeoff between estimation accuracy and energy use. Over the last decade, sensor selection/scheduling problems for state estimation of linear systems have been extensi vely studied in the literature [4]–[16], where sev eral variations of the problem hav e been addressed according to the types of cost functions, time horizons, heuristic algorithms, and ener gy and topology constraints. Many research efforts have focused on myopic sensor scheduling [4]–[7], where at e very instant the search is for the best sensors to be activ ated at the next time step (as opposed to a longer time horizon). Ho wev er, myopic selection strategies get trapped in local optima and perform poorly in some cases, such as sensor networks with sensing holes [11]. But if the length of time horizon becomes lar ge or infinite then finding an optimal non-myopic schedule is difficult, because the number of sensor sequences gro ws prohibitiv ely large as the time horizon grows. Therefore, some researchers hav e considered the problem of periodic sensor schedules on an infinite time horizon [14]–[18]. In [12], [13], periodicity in the optimal sensor schedule was observed ev en for finite time horizon problems in which a periodic schedule was not assumed a priori . A sufficient condition for the existence of periodicity for the sensor scheduling problem over an infinite time horizon was first suggested in [18]. Furthermore, in [14] it was prov ed that the optimal sensor schedule for an infinite horizon problem can be approximated arbitrarily well by a periodic schedule with a finite period. W e emphasize that the results in [14] are nonconstructiv e, in the sense that it is shown that the optimal sensor schedule is time- periodic but an algorithm for obtaining this schedule, or ev en the length of its period, is not provided. Although periodicity makes infinite horizon sensor scheduling problems tractable via the design of an optimal schedule over a finite period, it poses other challenges in problem formulation and optimization compared to con ventional sensor scheduling. In this paper , we seek a general framew ork to design optimal periodic sensor schedules subject to 3 measurement frequency constraints. Measurement frequency constraints imply that each sensor has a bound on the number of times it can be activ e over a time period of length K . Similar constraints have been considered in [8], [12], [19] and referred to as ener gy constraints, and transmission or communication bounds. T o achiev e our goal, we seek an optimal dynamic estimator, in the form of a time-periodic Kalman filter , that also respects the measurement frequency constraints. This can be interpreted as a design problem in which both the sensor acti v ation schedules, and the estimator gains used to combine the sensor measurements, are jointly optimized . T o allo w for additional design flexibility , we introduce into the optimization formulation sparsity-promoting penalty functions that encourage fewer measurements at e very time instant of the periodic horizon. This can be used to generate arbitrarily sparse sensor schedules that employ a minimal number of activ e sensors. The design of optimal periodic sensor schedules has been recently studied in [15]–[17]. In [15], the authors construct the optimal periodic schedule only for two sensors. For a multiple sensor scenario, the work of [16] studied the problem of periodic sensor scheduling by assuming the process noise to be very small, which results in a linear matrix inequality (LMI) problem. As a consequence of the assumption that the process noise is negligible, the ordering of the measurements does not factor into the solution of this LMI problem. Clearly , a sensor schedule in which the order of sensor activ ations is irrelev ant can not be optimal for some sensor scheduling problems. For example, it was shown in [20] that temporally staggered sensor schedules constitute the optimal sensing policy . In [17], a lower bound on the performance of scheduling sensors over an infinite time horizon is obtained, and then an open-loop periodic switching policy is constructed by using a doubly substochastic matrix. The authors sho w that the presented switching policy achiev es the best estimation performance as the period length goes to zero (and thus sensors are switched as fast as possible). In this paper , a comparison of both the performance and the computational complexity of our methodology with the existing work in [15]–[17] will be provided. The sensor scheduling frame work presented in this paper relies on making a one-to-one correspondence between every sensor and a column of the estimator gain. Namely , a sensor being off at a certain time instant is equi valent to the corresponding column of the estimator gain being identically zero. This idea has been exploited in our earlier work [21] on sparsity-promoting extended Kalman filtering, where sensors are scheduled only for the next time step and hav e no resources constraints in volv ed. Dif ferent from [21], we consider a periodic sensor scheduling problem on an infinite time horizon, where measurement frequency constraints and periodicity place further restrictions on the number of nonzero columns of the time-periodic Kalman filter gain matrices. 4 Counting and penalizing the number of nonzero columns of the estimator gain, which in this work is performed via the use of the cardinality function, results in combinatorial optimization problems that are intractable in general. It has been recently observed in [21]–[23] that the alternating direction method of multipliers (ADMM) is a powerful tool for solving optimization problems that include cardinality functions. Particularly , reference [22] considers the problem of finding optimal sparse state feedback gains and demonstrates the effecti veness of ADMM in finding such gains. Howe ver , different from [22], we extend the application of ADMM to account for the time periodicity . Furthermore, we incorporate measurement frequency constraints where subtle relationships between the sparsity-promoting parameter and the frequency parameter come into play . The main contributions of this paper can be summarized as follows. • W e de velop a general optimization framew ork for the joint design of optimal periodic sensor schedules (on an infinite time horizon) and optimal estimator (Kalman filter) gain matrices. • W e demonstrate that the optimal periodic Kalman filter gain matrices should satisfy a coupled sequence of periodic L yapunov recursions. W e introduce a new block-cyclic representation to trans- form the coupled matrix recursions into algebraic matrix equations. In particular , this allows the application of the efficient Anderson-Moore method in solving the optimization problem. • Through application of the alternating direction method of multipliers, we unco ver subtle relation- ships between the frequency constraint parameter , the sparsity-promoting parameter , and the sensor schedule. • W e present a comparison of both the performance and the computational complexity of our method- ology with other prominent work in the literature. W e demonstrate that our method performs as well or significantly better than these works, and is computationally efficient for sensor scheduling in problems with large-scale dynamical systems. The rest of the paper is organized as follows. In Section II, we motiv ate the problem of periodic sensor scheduling on an infinite time horizon. In Section III, we formulate the sparsity-promoting periodic sensor scheduling problem. In Section IV, we in voke the ADMM method, which leads to a pair of ef ficiently solv able subproblems. In Section V, we illustrate the effecti veness of our proposed approach through examples. Finally , in Section VI we summarize our work and discusses future research directions. 5 I I . P E R I O D I C I T Y O F I N FI N I T E H O R I Z O N S E N S O R S C H E D U L I N G Consider a discrete-time linear dynamical system e volving according to the equations x k +1 = Ax k + Bw k , (1) y k = Cx k + v k , (2) where x k ∈ R N is the state vector at time k , y k ∈ R M is the measurement vector whose m th entry corresponds to a scalar observation from sensor m , A , B , and C are matrices of appropriate dimensions. The inputs w k and v k are white, Gaussian, zero-mean random vectors with covariance matrices Q and R , respectively . Finally , we assume that ( A , C ) is detectable and ( A , Σ ) is stabilizable, where ΣΣ T = BQB T . For ease of describing the sensor schedule, we introduce the auxiliary binary variables ζ k,m ∈ { 0 , 1 } , to represent whether or not the m th sensor is activ ated at time k . The sensor schedule ov er an infinite time horizon can then be denoted by µ ∞ = [ ζ 1 , ζ 2 , . . . ] , where the v ector ζ k = [ ζ k, 1 , . . . , ζ k,M ] T indicates which sensors are activ e at time k . The performance of an infinite-horizon sensor schedule is then measured as follows [15], [16], J ( µ ∞ ) , lim K →∞ 1 K K X k =1 tr ( P k ) (3) where P k is the estimation error cov ariance at time k under the sensor schedule µ ∞ . Due to the combinatorial nature of the problem, it is intractable to find the optimal sensor schedule that minimizes the cost (3) in general [16]. In [18], it was suggested that the optimal sensor schedule can be treated as a time-periodic schedule ov er the infinite time horizon if the system (1)-(2) is detectable and stabilizable. Furthermore, in [14] it was proved that the optimal sensor schedule for an infinite horizon problem can be approximated arbitrarily well by a periodic schedule with a finite period, and that the error cov ariance matrix con verges to a unique limit cycle. In this case, the cost in (3) can be rewritten as J ( µ K ) = 1 K K − 1 X k =0 tr ( P k ) (4) where K is the length of the period and P k is the error cov ariance matrix at instant k of its limit cycle. In this work, similar to [14]–[16], we assume the length K of the period is gi ven. T o the best knowledge of the authors, it is still an open problem to find the optimal period length. 6 I I I . P RO B L E M F O R M U L AT I O N For the discrete-time linear dynamical system (1)–(2), we consider state estimators of the form ˆ x k +1 = A ˆ x k + L k ( y k − C ˆ x k ) = ( A − L k C ) ˆ x k + L k y k , where L k is the estimator gain (also known as the observer gain [24]) at time k . In what follows we aim to determine the matrices L k , k = 0 , 1 , . . . , by solving an optimization problem that, in particular , promotes the column sparsity of L k . W e define the estimation error co variance P k as P k = E { ( x k − ˆ x k )( x k − ˆ x k ) T } , where E is the expectation operator . 1 It is easy to show that P k satisfies the L yapunov recursion P k +1 = ( A − L k C ) P k ( A − L k C ) T + BQB T + L k RL T k . (5) Finally , partitioning the matrices L k and C into their respective columns and rows, we hav e L k C = h L k, 1 L k, 2 . . . L k,M i C T 1 C T 2 . . . C T M = L k, 1 C T 1 + L k, 2 C T 2 + · · · + L k,M C T M , (6) where we assume that each row of C characterizes the measurement of one sensor . Therefore, each column of the matrix L k can be thought of as corresponding to the measurement of a particular sensor . In estimation and inference problems using wireless sensor networks, minimizing the energy consump- tion of sensors is often desired [8], [19]. Therefore, we seek algorithms that schedule the turning on and of f of the sensors in order to strike a balance between energy consumption and estimation performance. Suppose, for example, that at time step k only the ν th sensor reports a measurement. In this case, it follo ws from (6) that L k C = L k,ν C T ν , where C T ν is the ν th row of C . This can also be interpreted as having the column vectors L k,m equal to zero for all m 6 = ν . Thus, hereafter we assume that the measurement matrix C is constant and the scheduling of the sensors is captured by the nonzero columns of the estimator gains L k , in the sense that if L k,m = 0 then at time k the m th sensor is not making a measurement. 1 In the system theory literature, ˆ x k and P k are often denoted by ˆ x k | k − 1 and P k | k − 1 ; here we use ˆ x k and P k for simplicity of notation. 7 As stated in Sec. II, in this work we search for optimal time-periodic sensor schedules, i.e., we seek optimal sequences { L k } k =0 , 1 ,...,K − 1 and { P k } k =0 , 1 ,...,K − 1 that satisfy L k + K = L k , P k + K = P k , (7) where K is a given period. Note that the choice of K is not a part of the optimization problem considered in this paper . As suggested in [14], one possible procedure for choosing K is to find the optimal sensor schedule for gradually-increasing values of K until the performance ceases to improve significantly . Furthermore, the condition on the periodicity of P k assumes that the system and estimator with L k + K = L k hav e been running for a long time so that P k has reached its steady-state limit cycle [14]. In this paper , we consider k = −∞ as the initial time and without loss of generality consider the design of L k ov er the period k = 0 , 1 , . . . , K − 1 , when the system has statistically settled into its periodic cycle. T o incorporate the energy constraints on individual sensors ov er a period of length K , we consider K − 1 X k =0 card k L k,m k 2 ≤ η m , m = 1 , 2 , . . . , M , (8) where η m denotes the measurement frequency bound. This implies that the m th sensor can make and transmit at most η m measurements ov er the period of length K . For simplicity , we assume η 1 = η 2 = . . . = η M = η . W e remark that the proposed sensor scheduling methodology in this article applies equally well to the case where the η i are not necessarily equal to each other . Next, we formulate the optimal periodic sensor scheduling problem considered in this work, and then elaborate on the details of our formulation. W e pose the optimal sensor scheduling problem as the optimization problem minimize K − 1 X k =0 tr( P k ) + γ K − 1 X k =0 g ( L k ) subject to L yapunov recursion (5) for k = 0 , 1 , . . . , K − 1 , periodicity condition (7), measurement frequency constraints (8), (9) where the matrices { L k } k =0 , ··· ,K − 1 are the optimization v ariables, card( · ) denotes the cardinality function which giv es the number of nonzero elements of its (vector) argument, and g ( L k ) := card h k L k, 1 k 2 k L k, 2 k 2 · · · k L k,M k 2 i . (10) Therefore g ( L k ) is equal to the number of nonzero columns of L k , also referred to as the column- cardinality of L k . The incorporation of the sparsity-promoting term g ( · ) in the objectiv e function en- courages the use of a small subset of sensors at each time instant. The positiv e scalar γ characterizes 8 the relati ve importance of the two conflicting terms in the objectiv e, namely the relative importance of achie ving good estimation performance versus activ ating a small number of sensors. Note that (9) is a combinatorial problem [25] and, for large systems, computationally intractable in general. Motiv ated by [22], in the next section we employ the alternating direction method of multipliers (ADMM) to solve (9). W e demonstrate that the application of ADMM leads to a pair of efficiently solv able subproblems. I V . O P T I M A L P E R I O D I C S E N S O R S C H E D U L I N G U S I N G A D M M In this section, we apply ADMM to the sensor scheduling problem (9). Our treatment uses ideas introduced in [22], where ADMM was used for the identification of optimal sparse state-feedback gains. W e extend the framew ork of [22] to account for the time periodicity of the estimator gains, their sparsity across both space and time, and the addition of measurement frequenc y constraints on individual sensors. W e begin by reformulating the optimization problem in (9) in a way that lends itself to the application of ADMM. For P k that satisfies the L yapunov recursion in (5), it is easy to sho w that P k = BQB T + L k − 1 RL T k − 1 + −∞ X n = k − 1 ( A − L k − 1 C ) · · · ( A − L n C ) · ( BQB T + L n − 1 RL T n − 1 ) · ( A − L n C ) T · · · ( A − L k − 1 C ) T . In voking the periodicity of L k , tr( P k ) can be expressed as a function f k of { L k } k =0 , ··· ,K − 1 so that the optimization problem (9) can be rewritten as minimize K − 1 X k =0 f k ( L 0 , · · · , L K − 1 ) + γ K − 1 X k =0 g ( L k ) subject to K − 1 X k =0 card k L k,m k 2 ≤ η , m = 1 , 2 , . . . , M . W e next introduce the indicator function corresponding to the constraint set of the abov e optimization problem as [23] I ( { L k } ) = 0 if P K − 1 k =0 card k L k,m k 2 ≤ η for m = 1 , 2 , . . . , M , + ∞ otherwise , (11) 9 where for notational simplicity we hav e used, and henceforth will continue to use, {·} instead of {·} k =0 ,...,K − 1 . Incorporating the indicator function into the objecti ve function, problem (9) is equiv alent to the unconstrained optimization problem minimize K − 1 X k =0 f k ( { L k } ) + γ K − 1 X k =0 g ( L k ) + I ( { L k } ) . Finally , we introduce the ne w set of v ariables { G k } , together with the new set of constraints L k = G k , k = 0 , 1 , . . . , K − 1 , and formulate minimize K − 1 X k =0 f k ( { L k } ) + γ K − 1 X k =0 g ( G k ) + I ( { G k } ) subject to L k = G k , k = 0 , 1 , . . . , K − 1 , (12) which is now in a form suitable for the application of ADMM. The augmented Lagrangian [22], [26] corresponding to optimization problem (12) is giv en by L ( { L k } , { G k } , { Λ k } ) = K − 1 X k =0 f k ( { L k } ) + γ K − 1 X k =0 g ( G k ) + I ( { G k } ) + K − 1 X k =0 tr[ Λ k ( L k − G k )] + ρ 2 K − 1 X k =0 || L k − G k || 2 F , (13) where the matrices { Λ k } are the Lagrange multipliers (also referred to as the dual variables), the scalar ρ > 0 is a penalty weight, and k · k F denotes the Frobenius norm of a matrix, k X k 2 F = tr( X T X ) . The ADMM algorithm can be described as follo ws [26]. For i = 0 , 1 , . . . , we iterati vely e xecute the follo wing three steps { L i +1 k } := arg min { L k } L ( { L k } , { G i k } , { Λ i k } ) , (14) { G i +1 k } := arg min { G k } L ( { L i +1 k } , { G k } , { Λ i k } ) , (15) Λ i +1 k := Λ i k + ρ ( L i +1 k − G i +1 k ) , k = 0 , 1 , . . . , K − 1 , (16) until both of the conditions P K − 1 k =0 k L i +1 k − G i +1 k k F ≤ , and P K − 1 k =0 k G i +1 k − G i k k F ≤ are satisfied. The rationale behind using ADMM can be described as follows [22]. The original noncon vex optimiza- tion problem (9) is dif ficult to solve due to the nondifferentiability of the sparsity-promoting function g . By defining the new set of variables { G k } , we effecti vely separate the original problem into an “ L - minimization” step (14) and a “ G -minimization” step (15), of which the former can be addressed using v ariational methods and descent algorithms and the latter can be solved analytically . 10 W e summarize our proposed method on periodic sensor scheduling in Algorithm 1. In the subsections that follow , we will elaborate on each of the steps in volv ed in the implementation of Algorithm 1 and the ex ecution of the minimization problems (14) and (15). Algorithm 1 ADMM-based sensor scheduling algorithm 1: Require: Choose ρ , . Initialize ADMM using { Λ 0 k } = { G 0 k } = { 0 } and { L 0 k } from (27). 2: for i = 0 , 1 , . . . do 3: Obtain { L i +1 k } using Algorithms 2-3. 4: Obtain { G i +1 k } using Algorithm 4. 5: Obtain { Λ i +1 k } using Λ i +1 k = Λ i k + ρ ( L i +1 k − G i +1 k ) , k = 0 , 1 , . . . , K − 1 . 6: until P K − 1 k =0 k L i +1 k − G i +1 k k F ≤ and P K − 1 k =0 k G i +1 k − G i k k F ≤ . 7: end for A. L -minimization using the Anderson-Moor e method In this section, we apply the Anderson-Moore method to the L -minimization step (14). The Anderson- Moore method is an iterati ve technique for solving systems of coupled matrix equations efficiently . W e refer the reader to [22] for a more detailed discussion of its applications and related references. In what follo ws, we extend the approach of [22] to account for the periodicity of the sensor schedule. Completing the squares with respect to { L k } in the augmented Lagrangian (13), the L -minimization step in (14) can be expressed as [22], [26] minimize K − 1 X k =0 f k ( { L k } ) + K − 1 X k =0 ρ 2 || L k − U i k || 2 F (17) where U i k := G i k − (1 /ρ ) Λ i k for k = 0 , 1 , . . . , K − 1 . For notational simplicity , henceforth we will use U k instead of U i k , where i indicates the iteration index. W e bring attention to the fact that, by defining the indicator function I in (11) and then splitting the optimization v ariables in (12), we hav e ef fectiv ely remov ed both sparsity penalties and energy constraints from the variables { L k } in the L -minimization problem (17). This is a key adv antage of applying ADMM to the sensor scheduling problem. 11 Recalling the definition of f k , problem (17) can be equiv alently written as minimize φ ( { L k } ) := K − 1 X k =0 tr( P k ) + K − 1 X k =0 ρ 2 || L k − U k || 2 F subject to L yapunov recursion (5) for k = 0 , 1 , . . . , K − 1 , periodicity condition (7). Proposition 1: The necessary conditions for the optimality of a sequence { L k } can be e xpr essed as the set of coupled matrix recur sions P k +1 = ( A − L k C ) P k ( A − L k C ) T + BQB T + L k RL T k V k = ( A − L k C ) T V k +1 ( A − L k C ) + I 0 = 2 V k +1 L k R − 2 V k +1 ( A − L k C ) P k C T + ρ ( L k − U k ) for k = 0 , . . . , K − 1 , where U k := G i k − (1 /ρ ) Λ i k and L K = L 0 , P K = P 0 . The expr ession on the right of the last equation is the gradient of φ with r espect to L k . Proof: See appendix A. Due to their coupling, it is a difficult exercise to solve the abov e set of matrix equations. W e thus employ the Anderson-Moore method [22], [27], which is an efficient technique for iterativ ely solving systems of coupled L yapunov and Sylv ester equations . W e note, ho wever , that the set of matrix equations gi ven in the proposition include (periodic) L yapunov recur sions rather than (time-independent) L yapunov equations. W e next apply what can be thought of as a lifting procedure [28] to take the periodicity out of these equations and place them in a form appropriate for the application of the Anderson-Moore method. Let T denote the following permutation matrix in block-cyclic form [29] T := 0 I I . . . . . . . . . I 0 12 where I is a N × N identity matrix, and define L := T diag { L k } = 0 L K − 1 L 0 . . . . . . L K − 2 0 , P := diag { P k } , V := diag { V k } , U := T diag { U k } , Q := diag { Q } , R := diag { R } , I := diag { I } , A := T diag { A } , B := diag { B } , C := diag { C } . In the sequel, we do not distinguish between the sequence { L k } and its cyclic form L , and will alternate between the two representations as needed. The recursive equations in the statement of Proposition 1 can no w be rewritten in the time-independent form P = ( A − LC ) P ( A − LC ) T + BQB T + LRL T (18) V = ( A − LC ) T V ( A − LC ) + I (19) 0 = 2 V LR − 2 V ( A − LC ) P C T + ρ ( L − U ) (20) Furthermore, defining ∇ Φ := T diag {∇ L k φ } = 0 ∇ L K − 1 φ ∇ L 0 φ . . . . . . . . . ∇ L K − 2 φ 0 it can be shown that ∇ Φ = 2 V LR − 2 V ( A − LC ) P C T + ρ ( L − U ) , (21) i.e., the right side of (20) gives the gradient direction for L , or equiv alently the gradient direction for each L k , k = 0 , 1 , . . . , K − 1 . W e briefly describe the implementation of the Anderson-Moore method as follows. For each iteration of this method, we first keep the value of L fixed and solve (18) and (19) for P and V , then keep P and V fixed and solve (20) for a new value L new of L . Proposition 2 shows that the difference ˜ L := L new − L between the values of L ov er two consecutiv e iterations constitutes a descent direction for φ ( { L k } ) ; see [22], [27] for related results. W e employ a line search [25] to determine the step-size s in L + s ˜ L in order 13 to accelerate the con ver gence to a stationary point of φ . W e also assume that there alw ays exists an L that satisfies the measurement frequency constraint and for which the spectrum of A − LC is contained inside the open unit disk; we elaborate on this condition in Section IV -C. These assumptions guarantee the existence of unique positiv e definite solutions P and V to Equations (18) and (19) [30]. Proposition 2: The dif ference ˜ L := L new − L constitutes a descent direction for φ ( { L k } ) , h∇ Φ , ˜ L i < 0 , (22) where h∇ Φ , ˜ L i := tr( ∇ Φ T ˜ L ) = P K − 1 k =0 tr( ∇ L k φ T L k ) . Moreover , h∇ Φ ( L ) , ˜ L i = 0 if and only if L is a stationary point of Φ , i.e., ∇ Φ ( L ) = 0 . Proof: The proof is similar to [31, Prop. 1 ] and omitted for bre vity . W e summarize the Anderson-Moore method for solving the L -minimization step in Algorithm 2. This algorithm calls on the Armijo rule [32], gi ven in Algorithm 3, to update L . Algorithm 2 L -minimization step (14), in the i th iteration of ADMM, using Anderson-Moore 1: If i = 0 , choose L 0 from (27). If i ≥ 1 , set L 0 equal to solution of (14) from previous ADMM iteration. 2: for t = 0 , 1 , . . . do 3: Set L = L t and solve (18), (19) to find P t , V t . 4: Set V = V t , P = P t and solve (20) to find ¯ L t . 5: Compute ˜ L t = ¯ L t − L t and update L t +1 = L t + s t ˜ L t , where s t gi ven by Armijo rule (see Algorithm 3). 6: until k∇ Φ ( L t ) k < . 7: end for Algorithm 3 Armijo rule for choosing step-size s t 1: Set s t = 1 and choose α, β ∈ (0 , 1) . 2: repeat 3: s t = β s t , 4: until φ ( L t + s t ˜ L t ) < φ ( L t ) + α s t tr ∇ Φ ( L t ) T ˜ L t . 14 B. G -minimization In this section, we consider the G -minimization step (15) and demonstrate that it can be solved analytically . In what follows, we extend the approach of [22] to account for the periodicity and energy constraints in the sensor schedule. Completing the squares with respect to { G k } in the augmented Lagrangian (13), the G -minimization step in (15) can be expressed as [22], [26] minimize γ K − 1 X k =0 g ( G k ) + ρ 2 K − 1 X k =0 || G k − S i k || 2 F subject to K − 1 X k =0 card k G k,m k 2 ≤ η , m = 1 , 2 , . . . , M , where S i k := L i +1 k + (1 /ρ ) Λ i k for k = 0 , 1 , . . . , K − 1 . For notational simplicity , henceforth we will use S k instead of S i k , where i indicates the iteration index. Recalling the definition of g from (10), and replacing || G k − S k || 2 F with P M m =1 k G k,m − S k,m k 2 2 yields the equiv alent optimization problem minimize ψ ( { G k } ) := M X m =1 K − 1 X k =0 γ card k G k,m k 2 + K − 1 X k =0 ρ 2 k G k,m − S k,m k 2 2 subject to K − 1 X k =0 card k G k,m k 2 ≤ η , m = 1 , 2 , . . . , M , where we hav e exploited the column-wise separability of g ( · ) and that of the Frobenius norm. W e form the matrix G m by picking out the m th column from each of the matrices in the set { G k } and stacking them, G m := h G 0 ,m G 1 ,m · · · G K − 1 ,m i . Then the G -minimization problem decomposes into the subproblems minimize ψ m ( G m ) := K − 1 X k =0 γ card k G k,m k 2 + K − 1 X k =0 ρ 2 k G k,m − S k,m k 2 2 subject to K − 1 X k =0 card k G k,m k 2 ≤ η , (23) which can be solved separately for m = 1 , 2 , . . . , M . T o solve problem (23) we rewrite the feasible set F of (23), F = G m : P K − 1 k =0 card k G k,m k 2 ≤ η , as the union F = F 0 ∪ F 1 ∪ · · · ∪ F η of the smaller sets F q , q = 0 , . . . , η , F q = G m : K − 1 X k =0 card k G k,m k 2 = q . 15 Let G q m denote a solution of minimize ψ m ( G m ) subject to G m ∈ F q . (24) Then a minimizer of (23) can be obtained by comparing ψ m ( G q m ) for q = 0 , . . . , η and choosing the one with the least value. The abov e procedure, together with finding the solution of (24), is made precise by the following proposition. Proposition 3: The solution of (23) is obtained by solving the sequence of minimization problems (24) for q = 0 , 1 , . . . , min { η , κ } , κ = P K − 1 k =0 card k S k,m k 2 . Furthermore, the solution of (24) is gi ven by G k,m = S k,m || S k,m || 2 ≥ || [ S m ] q || 2 and q 6 = 0 , 0 otherwise , for k = 0 , 1 , · · · , K − 1 , where S k := L i +1 k + (1 /ρ ) Λ i k , S m := [ S 0 ,m , · · · , S K − 1 ,m ] , [ S m ] q denotes the q th largest column of S m in the 2 -norm sense, and G k,m , S k,m denote the m th columns of G k , S k , respecti vely . Proof: See Appendix B. W e note that problem (23) can be solved via a sequence of equality constrained problems (24) whose analytical solution is determined by Proposition 3. Ho wev er, instead of solving min { η , κ } + 1 equality constrained problems, it is shown in Proposition 4 that the solution of the G -minimization problem (23) is determined by the magnitude of the sparsity-promoting parameter γ . Proposition 4: The solution G m of (23) is determined by solving one subproblem (24) based on the v alue of γ , G m = G 0 m ρ 2 k [ S m ] 1 k 2 2 < γ G 1 m ρ 2 k [ S m ] 2 k 2 2 < γ ≤ ρ 2 k [ S m ] 1 k 2 2 . . . . . . G min { η ,κ } m γ ≤ ρ 2 k [ S m ] min { η ,κ } k 2 2 (25) where G q m denotes a solution of (24) with q = 0 , 1 , . . . , min { η , κ } , and κ and [ S m ] q are defined as in Proposition 3. Proof: See Appendix C. It is clear from Proposition 4 that the parameter γ governs the column-sparsity of G m . For example, G m becomes the zero matrix as γ → ∞ , which corresponds to the scenario in which all sensors are always inactiv e. 16 T o reiterate, in order to solve the G -minimization problem (15), we first decompose it into the M subproblems (23) with separate optimization variables { G m } m =1 ,...,M . Each inequality constrained subproblem (23) is then solv ed via Proposition 4, in which the solution of the equality constrained problem (24) is determined by Proposition 3. W e summarize this procedure in Algorithm 4. Algorithm 4 G -minimization step (15) 1: Giv en η and S k = L i +1 k + 1 /ρ Λ i k , set κ = P K − 1 k =0 card k S k,m k 2 . 2: for m = 1 , . . . , M do 3: Set S m = [ S 0 ,m , · · · , S K − 1 ,m ] . 4: Solve (23) using Prop. 4 to obtain G m = G q m , where G q m is determined from Prop. 3. 5: end for 6: Use { G m } m =1 ,...,M to construct { G k } k =0 , 1 ,...,K − 1 . C. Con ver gence & Initialization of ADMM-based periodic sensor sc heduling The solution of ADMM for a noncon ve x problem generally yields a locally optimal point, and in general depends on the parameter ρ and the initial v alues of { L k } and { G k } [26]. In fact for a noncon ve x problem, such as the one considered here, ev en the conv ergence of ADMM is not guaranteed [26]. Our numerical experiments and those in other works such as [22] demonstrate that ADMM indeed works well when the v alue of ρ is chosen to be large. Howe ver , very large values of ρ make the Frobenius norm dominate the augmented Lagrangian (13) and thus lead to less emphasis on minimizing the estimation error . In order to select an appropriate v alue of ρ , certain extensions (e.g., varying penalty parameter) of the classical ADMM algorithm hav e been explored. The reader is referred to [26, Sec. 3 ]. T o initialize the estimator gain { L k } , we start with a feasible initializing sensor schedule. Such a schedule can be expressed in terms of the observ ation matrices ov er one period, namely , C ( k ) = [ ζ k, 1 C 1 , . . . , ζ k,M C M ] T for k = 0 , 1 , . . . , K − 1 , where the binary variable ζ k,m indicates whether or not the m th sensor is acti ve at time k . Note that the periodic sensor schedule { C ( k ) } uniquely determines the limit cycle of the periodic error cov ariance matrix [14]. W e express the periodic sensor schedule 17 { C ( k ) } in cyclic form C 0 := T diag { C ( k ) } = 0 C ( K − 1) C (0) . . . . . . C ( K − 2) 0 , and solve the follo wing algebraic Riccati equation for the cyclic form of { P k } P = Q + AP A T − AP C 0 T ( C 0 P C 0 T + R ) − 1 C 0 P A − 1 , (26) where P , Q , A and R have the same definitions as in Sec. IV -A. The Riccati equation (26) gives the optimal periodic estimator gain corresponding to a discrete-time system with given periodic observation matrices { C ( k ) } . Once the solution of (26) is found, the corresponding estimator gain in cyclic form is gi ven by [24] L 0 = AP C 0 T ( C 0 P C 0 T + R ) − 1 T 0 , (27) where T 0 has the same block-cyclic form of T but is instead formed using M × M identity matrices. It is not dif ficult to show that the matrix L 0 in (27) has the same sparsity pattern as C 0 . Thus, the sequence { L 0 k } obtained from L 0 respects the energy constraints and can be used to initialize ADMM. Furthermore, we assume that ( C 0 , A ) is observable, which guarantees that the spectrum of A − L 0 C 0 is contained inside the open unit disk and thus the initializing estimator gains { L 0 k } will be stabilizing. Finally , for simplicity { G k } is initialized to G k = 0 , k = 0 , 1 , . . . , K − 1 . D. Complexity analysis It has been shown that ADMM typically takes a few tens of iterations to con ver ge with modest accuracy for many applications [21]–[23], [26], [27]. The computational complexity of each iteration of ADMM is dominated by the L -minimization step, since the analytical solution of the G -minimization step can be directly obtained and the dual update is calculated by matrix addition. For the L -minimization subproblem, the descent Anderson-Moore method requires the solutions of two L yapunov equations (18)- (19) and one Sylvester equation (20) at each iteration. T o solve them, the Bartels-Ste wart method [33] yields the complexity O ( K 3 N 3 + K 3 M 3 + K 3 M N 2 + K 3 N M 2 ) , where K is the length of the period, M is the number of sensors and N is the dimension of the state vector . W e also note that the conv ergence of the Anderson-Moore method is guaranteed by Prop. 2, and it typically requires a small number of iterations because of the implementation of the Armijo rule. 18 For additional perspecti ve, we compare the computational complexity of our proposed methodology to a periodic sensor scheduling problem that is solved by semidefinite programming (SDP), for example as done in [16]. The complexity of SDP is approximated by O ( a 2 b 2 . 5 + ab 3 . 5 ) [34], where a and b denote the number of optimization variables and the size of the semidefinite matrix, respectively . For the linear matrix inequality (LMI) problem proposed in [16], the computation complexity is determined by a = N ( N + 1) / 2 + M and b = ( K + 1) N + M . Thus, problems in volving large-scale dynamical system with many state v ariables, result in large SDPs with computation complexity O ( N 6 . 5 ) . It can be seen that our approach reduces the computational complexity by a factor of N 3 . 5 compared to the LMI-based method of [16]. V . E X A M P L E : F I E L D E S T I M A T I O N O F A S PA T I A L LY E X T E N D E D S Y S T E M In order to demonstrate the effecti veness of our proposed periodic sensor scheduling algorithm, we consider the example of field monitoring. In this problem, sensors are deployed on a rectangular region to estimate the state of a dif fusion process described by the partial dif ferential equation [8], [19] ∂ ξ ( s , t ) ∂ t = ∇ 2 ξ ( s , t ) (28a) with Dirichlet boundary conditions ξ ( s , · ) = 0 s ∈ ∂ D (28b) where ξ ( s , t ) denotes the field (or state) value at location s and time t , ∇ 2 denotes the Laplace operator, and ∂ D denotes the boundary of a rectangular re gion of interest D . W e consider a spatially-discretized approximation of (28) and our aim is to estimate the state ov er the entire discrete lattice using a small number of sensors; see Fig. 1 for an e xample. W ith an abuse of notation, a simple discrete approximation of (28) can be generated by setting [8] ∇ 2 ξ ( s , t ) s =( i,j ) ≈ ξ ( i + 1 , j, t ) − 2 ξ ( i, j, t ) + ξ ( i − 1 , j, t ) h 2 + ξ ( i, j + 1 , t ) − 2 ξ ( i, j, t ) + ξ ( i, j − 1 , t ) h 2 , (29) for i = 0 , 1 , . . . , ` h and j = 0 , 1 , . . . , ` v , where ` h + 2 and ` v + 2 are the width and length of a rectangular region, respecti vely; for example, ` h = ` v = 4 in Fig. 1. In (29), h denotes the physical distance between the lattice points, and ξ ( − 1 , j, t ) = ξ ( ` h + 1 , j, t ) = ξ ( i, − 1 , t ) = ξ ( i, ` v + 1 , t ) = 0 for all indices i, j and time t . From (28) and (29), we can obtain the e volution equations d dt x ( t ) = A ∆ x ( t ) , where x ( t ) ∈ R N , N = ( ` h + 1) × ( ` v + 1) , denotes the state vector x ( t ) = [ ξ (0 , 0 , t ) , ξ (0 , 1 , t ) , . . . , ξ ( ` h , ` v , t )] T , and A ∆ 19 −1 0 1 2 3 4 5 −1 0 1 2 3 4 5 X − axis (m) Y − axis (m) Estimated Points Sensor Locations Boundary Points 1 3 7 8 2 5 4 10 9 6 Fig. 1: M = 10 sensors deployed in a 6 × 6 region. can be directly computed from (29). Finally , applying a discretization in time and introducing process noise (i.e., a spatio-temporal random field) into the e volution yields x k +1 = Ax k + w k . Here, x k is the state vector , w k is a white Gaussian process with zero mean and cov ariance matrix Q , A is the system transition matrix A = e A ∆ T , and T is the temporal sampling interv al. W e assume that M sensors, M < N , are deployed and make measurements of the state according to y k = Cx k + v k , where y k ∈ R M is the measurement vector , v k denotes the measurement noise which is a white Gaussian process with zero mean and cov ariance matrix R , and C is the M × N observation matrix. For example, the case where the m th row of C contains only one nonzero entry equal to 1 corresponds to the scenario in which the m th entry of y k represents measurements of the field at the location of the m th sensor . W e consider an instance in which M = 10 sensors are deployed to monitor N = 25 field points sho wn in Fig. 1. W e assume that each sensor can be selected at most η times, η ∈ { 1 , . . . , 10 } , during any period of length K = 10 . Furthermore, we select T = 0 . 5 , Q = 0 . 25 I , and R = I . The ADMM stopping tolerance is = 10 − 3 . In our computations, ADMM con ver ges for ρ ≥ 10 and the required number of ADMM iterations is approximately 20 . In Fig. 2, for our approach we present the estimation performance, namely the cumulative traces of error covariance matrices ov er one period, respecti vely as a function of the cumulative column-cardinality of { L k } and the measurement frequency bound η . In the left plot, we fix η = 5 and vary γ , which results 20 0 20 40 60 86 87 88 89 90 91 92 Column Cardinality of {L k } Trace of Estimation Error Covariance 0 5 10 82 83 84 85 86 87 88 89 90 91 92 Measurement Frequency Bound η η = 5 γ = 0 Fig. 2: Estimation performance obtained from our approach. Left plot: T radeoff between estimation performance and total number of sensors (in terms of column-cardinality of { L k } ) for a fixed η = 5 ; Right plot: Estimation performance as a function of measurement frequency bound η . in changes in the column-cardinality of { L k } and renders the trade-of f curve between the conflicting objecti ves of good estimation performance and minimal sensor usage. Numerical results demonstrate that as the column-cardinality of { L k } increases and more sensors are acti vated, the estimation performance improv es. In the right plot, we observe that the estimation performance is improved by increasing η . This is not surprising, as a larger value of η allows the (most informati ve) sensors to be activ e more frequently . 0 1 2 3 4 5 6 7 8 9 10 82 83 84 85 86 87 88 89 90 91 92 η : Measurement Frequency Bound Trace of Estimation Error Covariance Random Schedule ( γ = 0.15) Proposed Schedule ( γ = 0.15) Random Schedule ( γ = 0.1) Proposed Schedule ( γ = 0.1) Random Schedule ( γ = 0) Proposed Schedule ( γ = 0) Fig. 3: Performance comparison of random schedules versus our proposed schedule. 21 Next, we compare the estimation performance of our approach to that of random scheduling, where the latter method refers to randomly selected sensor schedules that satisfy the measurement frequency constraint and have the same total number of active sensors ov er one period as the schedule obtained from our approach. The performance of the random strategy is taken to be the av erage of the traces of error cov ariance matrices ov er 500 simulation trials. In Fig. 3, the estimation performance is presented as a function of the measurement frequency bound η for three different values of the sparsity-promoting parameter γ = 0 , 0 . 1 , 0 . 15 . Numerical results sho w that our approach significantly outperforms the random strategy for γ = 0 . 1 , 0 . 15 , as the former approach takes into account sensor acti vations over both time and space. For γ = 0 there is no penalty on sensor activ ations, and to achie ve the best estimation performance e very sensor is active η times per period (i.e., all sensors attain their measurement frequency bound). As a consequence, the performance gap between our approach and that of the random strategy is not as large for γ = 0 as it is for γ > 0 . In our numerical experiments for smaller versions of this example, where exhausti ve searches are feasible, we observed that our proposed method yields sensor schedules that are identical or close in performance to the globally optimal schedule found via an exhaustiv e search. In Fig. 4, we use ADMM to obtain the sensor schedule over a time period of length K = 10 for γ ∈ { 0 , 0 . 1 , 0 . 15 } and η ∈ { 1 , 5 , 8 } ; the subplots represent increasing values of γ from left to right and increasing values of η from top to bottom. In each subplot, the horizontal axis represents discrete time, the vertical axis represents sensor indices, and circles represent activ ated sensors. W e also observe that sensors selected at two consecutiv e time instances tend to be spatially distant from each other . For example, at time instants t = 1 , 2 , 3 , the acti ve sensors are 6 , 9 , 4 , respectiv ely . In Figs. 4-(I-a), (I-b), and (I-c), we assume η = 1 and vary the magnitude of the sparsity-promoting parameter γ . As seen in Fig. 4-(I-a), for γ = 0 e very sensor is selected e xactly once ov er K = 10 time steps. Figs. 4-(I-b) and (I-c) demonstrate that fewer sensors are selected as γ is further increased. This is to be expected, as the value of γ in (9) determines our emphasis on the column-cardinality of { L k } . In Figs. 4-(I-c), (II-c), and (III-c) for γ = 0 . 15 we compare the optimal time-periodic schedules for dif ferent v alues of the frequency bound η = 1 , 5 , 8 . Numerical results sho w that for γ = 0 . 15 the 6 th and 7 th sensor are selected. T o justify this selection, we note that these two sensors are located close to the center of the spatial region D ; see Fig. 1. Although we consider a random Gaussian field, the states at the boundary ∂ D are forced to take the value zero and the states closest to the center of D are subject to the largest uncertainty . Therefore, from the perspecti ve of entropy , the measurements taken from the sensors 6 and 7 are the most informativ e for the purpose of field estimation. As we increase η , we allo w such informativ e sensors to be activ e more frequently . 22 1 2 3 4 5 6 7 8 9 10 1 2 3 4 5 6 7 8 9 10 Time Instant Sensor Index 1 2 3 4 5 6 7 8 9 10 1 2 3 4 5 6 7 8 9 10 Time Instant Sensor Index 1 2 3 4 5 6 7 8 9 10 1 2 3 4 5 6 7 8 9 10 Time Instant Sensor Index (I-a) (I-b) (I-c) 1 2 3 4 5 6 7 8 9 10 1 2 3 4 5 6 7 8 9 10 Time Instant Sensor Index 1 2 3 4 5 6 7 8 9 10 1 2 3 4 5 6 7 8 9 10 Time Instant Sensor Index 1 2 3 4 5 6 7 8 9 10 1 2 3 4 5 6 7 8 9 10 Time Instant Sensor Index (II-a) (II-b) (II-c) 1 2 3 4 5 6 7 8 9 10 1 2 3 4 5 6 7 8 9 10 Time Instant Sensor Index 1 2 3 4 5 6 7 8 9 10 1 2 3 4 5 6 7 8 9 10 Time Instant Sensor Index 1 2 3 4 5 6 7 8 9 10 1 2 3 4 5 6 7 8 9 10 Time Instant Sensor Index (III-a) (III-b) (III-c) Fig. 4: I- Sensor scheduling schemes with measurement frequenc y bound η = 1 : (I-a) γ = 0 , (I-b) γ = 0 . 1 , (I-c) γ = 0 . 15 . II- Sensor scheduling schemes with measurement frequency bound η = 5 : (II-a) γ = 0 , (II-b) γ = 0 . 1 , (II-c) γ = 0 . 15 . III- Sensor scheduling schemes with measurement frequency bound η = 8 : (III-a) γ = 0 , (III-b) γ = 0 . 1 , (III-c) γ = 0 . 15 . Moreov er , the sensor schedule in Fig. 4-(II-c) verifies the optimality of the uniform stagg er ed sensing schedule for two sensors, a sensing strate gy whose optimality was prov en in [20] and [15, Proposition 5 . 2 ]. In addition, although the periodicity of the sensor schedule was a priori fixed at the value K = 10 , as η increases numerical results demonstrate repetitiv e patterns in the optimal sensor schedule. As seen in Figs. 4-(II-c) and (III-c), for η = 5 and η = 8 the sensor schedule repeats itself five times over 10 time steps and two times ov er 10 time steps, respectiv ely . This indicates that the v alue of the sensing period 23 K can be made smaller than 10 . Comparison with existing methods In this subsection, we compare the performance of our approach with that of methods proposed in [15]–[17] and an exhausti ve search that enumerates all possible measurement sequences. For tractability in the exhausti ve search, we consider a small random field where N = 4 and R = I . The values of other system parameters K , M , T and Q are specified in the following numerical examples. Also we set γ = 0 to make our approach comparable to the existing methods in [15]–[17]. The ADMM stopping tolerance is chosen as = 10 − 3 . 1 2 3 4 5 6 7 1 2 1 2 3 4 5 6 7 1 2 Sensor Index 1 2 3 4 5 6 7 1 2 Time Instant η 1 = 1, η 2 = 6 η 1 = 2, η 2 = 5 η 1 = 3, η 2 = 4 Fig. 5: The periodic sensor schedule of two sensors with K = 7 . In Fig. 5, we study a numerical example stated in [15] and present the sensor schedules obtained from our approach with K = 7 , M = 2 , T = 0 . 5 , Q = 0 . 25 I , η 1 + η 2 = 7 and η 1 ∈ { 1 , 2 , 3 } . W e observe that the sensor with smaller value of measurement frequency bound (in the present example, this corresponds to sensor one with η 1 ∈ { 1 , 2 , 3 } ) is scheduled as temporally uniformly as possible; the resulting periodic sensor schedules are in agreement with those obtained in [15, Prop. 5.2]. In Fig. 6, we compare the performance of our approach with the periodic sensor scheduling method in [16]; each plot in Fig. 6 represents the gap between the approach being considered and the globally optimal sensor schedule as a function of the process noise variance q , with K = M = 4 , T = 0 . 5 , and Q = q I . W e recall that the periodic sensor scheduling problem in [16] is formulated under the assumption of negligible process noise, and is solved using linear matrix inequalities (LMIs). The assumption that process noise is negligible results in the insensitivity of the performance objective to the order in which 24 10 −2 10 −1 10 0 10 1 0 0.2 0.4 0.6 0.8 1 1.2 1.4 1.6 1.8 Process Noise Variance q Trace of Estimation Error Covariance Performance Gap for the LMI−based Method of [18] Performance Gap for Our Approach Fig. 6: Performance gaps of the method in [16] and our approach with respect to the optimal schedule. sensors are activ ated. This assumption holds for deep space applications considered in [18], but is not a practical assumption in general. Fig. 6 demonstrates that for small values of q , our approach and that of [16] yield very similar performance. Ho wev er , for q > 0 . 1 our approach results in significant improvement in estimation performance. That is due to the fact that our optimization procedure takes into account the temporal ordering of sensor measurements. In Fig. 7, we compare the performance of our proposed sensor scheduling approach and the periodic switching policy of [17], where M = 4 and Q = 0 . 25 I . In Fig. 7-(a), each plot represents the gap between the approach being considered and the globally optimal sensor schedule for different values of the sampling interval T , where K = 4 , and T denotes the sampling time used to discretize the continuous-time system in (28). Since K is the period in discrete time, the period length in continuous time is gi ven by = K T . Simulation results sho w that both of the sensor scheduling methods achiev e the performance of the globally optimal sensor schedule as T → 0 . This is due to the fact that → 0 while T → 0 . And it has been shown in [17] that the best estimation performance is attained by using a periodic switching policy as → 0 (and thus sensors are switched as fast as possible). Howe ver , as T increases, our approach outperforms the periodic switching policy significantly , which indicates that the sensor schedules obtained from the method of [17] are inappropriate for scheduling sensors for discrete-time systems with moderate sampling rates. In Fig. 7-(b), we use the periodic switching polic y of [17] to obtain the optimal sensor schedule and compare its performance with our approach for the fixed sampling interval T = 0 . 5 and different values 25 10 −3 10 −2 1 2 3 4 5 6 7 8 9 10 x 10 −3 Sampling Interval T Trace of Estimation Error Covariance Performance Gap for the Periodic Switching Policy of [19] Performance Gap for Our Approach (a) 3 4 5 6 7 8 9 8.8 8.9 9 9.1 9.2 9.3 9.4 9.5 Period Length K Trace of Averaged Estimation Error Covariance Our Approach Periodic Switching Policy of [19] (b) Fig. 7: Performance comparison with the periodic switching policy of [17]: a) Performance gaps of the periodic switching policy of [17] and our approach with respect to the optimal schedule; b) Performance comparison between our approach and the periodic switching policy of [17] for dif ferent v alues of period lengths. of K . Fig. 7-(b) demonstrates that the periodic switching policy of [17] loses optimality as K increases. This is not surprising, since the optimality of the periodic switching policy is only guaranteed as the period length goes to zero. Therefore, to schedule sensors on a discrete-time system with a moderate sampling interval, such as T = 0 . 5 in the present example, our approach achiev es better estimation performance than the periodic switching policy of [17]. 26 V I . C O N C L U S I O N In this paper , we studied the problem of sensor scheduling for linear dynamical systems. W e proposed an algorithm that determines optimal time-periodic sensor schedules. In order to strike a balance between estimation accuracy and the number of sensor activ ations, the optimization problem aims to minimize the trace of the estimation error cov ariance matrices while penalizing the number of nonzero columns of the estimator gains. W e employed ADMM, which allows the optimization problem to be decomposed into subproblems that can either be solved efficiently using iterativ e numerical methods or solved analytically to obtain exact solutions. Our results showed that our approach outperforms previously av ailable periodic sensor scheduling algorithms. In this paper , we assumed that the period length of the periodic sensor schedule is fixed and is not an optimization variable. This leav es open the question of how to find the optimal period. Also, we characterized the sensor energy cost in terms of the number of times each sensor can be activ ated ov er a period. In future work, instead of a “hard” constraint on the measurement frequency bound, we could consider more practical ener gy models to take into account the cost of repetitiv ely selecting the informativ e sensors. Furthermore, in order to reduce the computation burden of the fusion center , de veloping a decentralized architecture where ADMM can be carried out in a distributed way and by the sensors themselves is another future research direction. A P P E N D I X A P RO O F O F P RO P O S I T I O N 1 The optimization problem (17) is equiv alent to minimize φ ( { L k } ) = K − 1 X k =0 tr( P k ) + ρ 2 K − 1 X k =0 tr[( L k − U k ) T ( L k − U k )] subject to P k +1 = ( A − L k C ) P k ( A − L k C ) T + BQB T + L k RL T k . ( L - Φ ) T o find the necessary conditions for optimality , we find the gradient of φ and set ∇ L k φ = 0 for k = 0 , 1 , · · · , K − 1 . W e begin by assuming an incremental change in the unknown variables { L k } and finding the resulting incremental change to the value of the objectiv e. Replacing L k with L k + δ L k and φ with φ + δ φ in the objecti ve function of ( L - Φ ) and collecting first order v ariation terms on both sides, we obtain δ φ = K − 1 X k =0 tr( δ P k ) + ρ 2 K − 1 X k =0 tr[( L k − U k ) T δ L k + δ L T k ( L k − U k )] . 27 W e note that for δ L k to constitute a legitimate v ariation of L k , it has to satisfy the periodicity property δ L k + K = δ L k . Similarly , replacing L k with L k + δ L k and P k with P k + δ P k in the constraint equation of ( L - Φ ) and collecting first-order variation terms on both sides, we obtain δ P k +1 = ( A − L k C ) δ P k ( A − L k C ) T + δ M k , where δ M k = − δ L k CP k ( A − L k C ) T − ( A − L k C ) P k C T δ L T k + δ L k RL T k + L k R δ L T k . The difficulty with finding the gradient of φ from the abo ve equation is the dependence of δ φ on δ P k , with the dependence of δ P k on δ L k being through a L yapunov recursion. In what follows, we aim to express P K − 1 k =0 tr( δ P k ) in terms of { δ L k } . It is easy to see that δ P k = δ M k − 1 + −∞ X n = k − 1 ( A − L k − 1 C ) · · · ( A − L n C ) · δ M n − 1 · ( A − L n C ) T · · · ( A − L k − 1 C ) T . T aking the trace of both sides of the equation and summing over k , we have K − 1 X k =0 tr( δ P k ) = K − 1 X k =0 tr( δ M k − 1 ) + K − 1 X k =0 −∞ X n = k − 1 tr[( A − L n C ) T · · · ( A − L k − 1 C ) T ( A − L k − 1 C ) · · · ( A − L n C ) δ M n − 1 ] , where we hav e used the property of the trace to change the order of the terms inside the square brackets. No w exploiting the periodicity properties L k + K = L k , δ L k + K = δ L k , P k + K = P k , which also imply the periodicity δ M k + K = δ M k of { δ M k } , the double sum in the last equation above can be rewritten to giv e K − 1 X k =0 tr( δ P k ) = K − 1 X n =0 tr { [ I + + ∞ X k = n +1 ( A − L n C ) T · · · ( A − L k − 1 C ) T I ( A − L k − 1 C ) · · · ( A − L n C )] δ M n − 1 } . T o help with the simplification of the abov e sums, we define the new matrix variable V n as V n = I + + ∞ X k = n +1 ( A − L n C ) T · · · ( A − L k − 1 C ) T I ( A − L k − 1 C ) · · · ( A − L n C ) . It can be seen that { V n } is periodic, V n + K = V n , and satisfies V n = ( A − L n C ) T V n +1 ( A − L n C ) + I . Returning to P K − 1 k =0 tr( δ P k ) and using the definition of V n , we obtain K − 1 X k =0 tr( δ P k ) = K − 1 X n =0 tr( V n δ M n − 1 ) = K − 1 X n =0 tr( V n +1 δ M n ) , 28 where the last equality results from the periodicity of { V n } and { δ M n } . Recalling that δ M n can be written explicity in terms of { δ L k } , we hav e thus achie ved our goal of expressing P K − 1 k =0 tr( δ P k ) in terms of { δ L k } . W e next carry out the last step required to find the gradient of φ . Replacing P K − 1 k =0 tr( δ P k ) with P K − 1 k =0 tr( V k +1 δ M k ) in the expression for δ φ , and using the definition of δ M k , we obtain δ φ = K − 1 X k =0 tr( V k +1 δ M k ) + ρ 2 K − 1 X k =0 tr[( L k − U k ) T δ L k + δ L T k ( L k − U k )] =2 K − 1 X k =0 tr[ − CP k ( A − L k C ) T V k +1 δ L k + RL T k V k +1 δ L k ] + ρ K − 1 X k =0 tr[( L k − U k ) T δ L k ] , where we hav e used the properties of the trace to arriv e at the last equality . Thus ∇ L k φ = [ − 2 CP k ( A − L k C ) T V k +1 + RL T k V k +1 + ρ ( L k − U k ) T ] T . Setting ∇ L k φ = 0 gi ves the necessary condition for optimality 0 = − 2 V k +1 ( A − L k C ) P k C T + 2 V k +1 L k R + ρ ( L k − U k ) , where P k and V k satisfy the recursion euqations P k +1 = ( A − L k C ) P k ( A − L k C ) T + BQB T + L k RL T k V k = ( A − L k C ) T V k +1 ( A − L k C ) + I . The proof is now complete. A P P E N D I X B P RO O F O F P RO P O S I T I O N 3 Problem (24) is equiv alent to minimize ψ m ( G m ) := K − 1 X k =0 γ card k G k,m k 2 + K − 1 X k =0 ρ 2 k G k,m − S k,m k 2 2 subject to K − 1 X k =0 card k G k,m k 2 = q , q ∈ { 0 , . . . , η } where G m := [ G 0 ,m , · · · , G K − 1 ,m ] . Similar to G m , we form the matrix S m by picking out the m th column from each of the matrices in the set { S k } and stacking them to obtain S m := [ S 0 ,m , · · · , S K − 1 ,m ] . W e define κ := P K − 1 k =0 card k S k,m k 2 , which giv es the column-cardinality of S m . It can be shown that if q = κ then the minimizer G κ m of problem (24) is S m , and ψ m ( G κ m ) = γ κ . If q > κ , we ha ve ψ m ( G m ) > ψ m ( G κ m ) for arbitrary v alues of G m ∈ F q since ψ m ( G m ) = γ q + ρ 2 k G m − S m k 2 F 29 which is greater than ψ m ( G κ m ) = γ κ . Therefore, the solution of (23) is only determined by solving the sequence of minimization problems (24) for q = 0 , 1 , . . . , min { η , κ } rather than q = 0 , 1 , · · · , η . For a gi ven q ∈ { 0 , 1 , min { η , κ }} , problem (24) can be written as minimize G m K − 1 X k =0 ρ 2 || G k,m − S k,m || 2 2 subject to K − 1 X k =0 card k G k,m k 2 = q . For q = 0 , the minimizer G q m of the optimization problem is 0 . For q 6 = 0 , it was demonstrated in [23, Appendix B] that the solution is obtained by projecting the minimizer ( G m = S m ) of the objectiv e function onto the constraint set P K − 1 k =0 card k G k,m k 2 = q . This gives G k,m = S k,m || S k,m || 2 ≥ || [ S m ] q || 2 0 || S k,m || 2 < || [ S m ] q || 2 , (30) for k = 0 , 1 , · · · , K − 1 , where [ S m ] q is the q th largest column of S m in the 2 -norm sense. The proof is now complete. A P P E N D I X C P RO O F O F P RO P O S I T I O N 4 According to Prop. 3, substituting the minimizer G q m of problem (24) into its objective function yields ψ m ( G q m ) = K − 1 X k =0 ρ 2 k G k,m − S k,m k 2 2 + K − 1 X k =0 γ card ( k G k,m k 2 ) = K − 1 X k =0 k / ∈ χ q ρ 2 k S k,m k 2 2 + γ q , where S k,m denotes the m th column of S k , χ q is a set that is composed by indices of the first q lar gest columns of S m (refer to Appendix B) in the 2 -norm sense, and χ 0 = ∅ . W e have ψ m ( G q m ) − ψ m ( G q +1 m ) = ρ 2 k [ S m ] q +1 k 2 2 − γ (31) for q = 0 , 1 , . . . , min { η , κ } − 1 , where [ S m ] q +1 denotes the ( q + 1) th largest column of S m in the 2 -norm sense. Since [ S m ] 1 ≥ [ S m ] 2 ≥ · · · ≥ [ S m ] min { η ,κ } , for γ ∈ ( ρ 2 k [ S m ] 1 k 2 2 , ∞ ) equation (31) yields ψ m ( G 0 m ) − ψ m ( G 1 m ) < 0 and ψ m ( G q m ) − ψ m ( G q +1 m ) < 0 for other q ∈ { 1 , . . . , min { η , κ } − 1 } . Therefore, the minimizer of (23) is giv en by G 0 m . Similarly , for γ ∈ ( ρ 2 k [ S m ] q +1 k 2 2 , ρ 2 k [ S m ] q k 2 2 ] equation (31) yields ψ m ( G l − 1 m ) − ψ m ( G l m ) ≥ 0 for l = 1 , . . . , q , and ψ m ( G l m ) − ψ m ( G l +1 m ) < 0 for l = q , . . . , min { η , κ } − 1 . 30 Therefore, the minimizer of (23) is given by G q m . Finally , we can write the solution of (23) in the form gi ven in the statement of Prop. 4. The proof is now complete. A C K N O W L E D G M E N T The authors ackno wledge Dr . Fu Lin and Prof. Mihailo R. Jov anovi ´ c for making a vailable their Matlab codes for sparsity-promoting linear quadratic re gulators ( lqrsp.m , a v ailable at http://www.ece.umn .edu/users/mihailo/software/lqrsp/index.html ). These files facilitated the authors in implementing the algorithms presented in this work for optimal periodic sensor scheduling. R E F E R E N C E S [1] R. Nowak, U. Mitra, and R. W illett, “Estimating inhomogeneous fields using wireless sensor networks, ” IEEE Journal on Selected Ar eas in Communications , vol. 22, no. 6, pp. 999–1006, Aug. 2004. [2] E. Masazade, R. Niu, P . K. V arshney , and M. Keskinoz, “Energy aware iterativ e source localization for wireless sensor networks, ” IEEE T ransactions on Signal Pr ocessing , vol. 58, no. 9, pp. 4824–4835, Sept. 2010. [3] E. Masazade, R. Niu, and P . K. V arshney , “Dynamic bit allocation for object tracking in wireless sensor networks, ” IEEE T ransactions on Signal Processing , vol. 60, no. 10, pp. 5048–5063, Oct. 2012. [4] E. Ertin, J. W . Fisher , and L. C. Potter , “Maximum mutual information principle for dynamic sensor query problems, ” in Pr oceedings of the 2nd International Confer ence on Information Processing in Sensor Networks , 2003, pp. 405–416. [5] H. W ang, G. Pottie, K. Y ao, and D. Estrin, “Entropy-based sensor selection heuristic for target localization, ” in Proceedings of the 3rd International Conference on Information Pr ocessing in Sensor Networks , 2004, pp. 36–45. [6] V . Gupta, T . Chung, B. Hassibi, and R. M. Murray , “Sensor scheduling algorithms requiring limited computation, ” in Pr oceedings of IEEE International Conference on Acoustics, Speech, and Signal Pr ocessing , 2004, vol. 3, pp. 825–828. [7] S. Joshi and S. Boyd, “Sensor selection via con vex optimization, ” IEEE T ransactions on Signal Pr ocessing , vol. 57, no. 2, pp. 451–462, Feb. 2009. [8] Y . Mo, R. Ambrosino, and B. Sinopoli, “Sensor selection strategies for state estimation in energy constrained wireless sensor networks, ” Automatica , vol. 47, no. 7, pp. 1330–1338, 2011. [9] X. Shen and P . K. V arshney , “Sensor selection based on generalized information gain for target tracking in large sensor networks, ” IEEE T ransactions on Signal Pr ocessing , vol. 62, no. 2, pp. 363–375, Jan. 2014. [10] A. S. Chhetri, D. Morrell, and A. Papandreou-Suppappola, “Efficient search strategies for non-myopic sensor scheduling in target tracking, ” in Proceedings of the 38 Asilomar Conference on Signals, Systems and Computers , 2004, vol. 2, pp. 2106–2110. [11] J. Liu, D. Petro vic, and F . Zhao, “Multi-step information-directed sensor querying in distributed sensor networks, ” in Pr oceedings of IEEE International Conference on Acoustics, Speech, and Signal Pr ocessing (ICASSP) , 2003, vol. 5, pp. 145–148. [12] P . Hovareshti, V . Gupta, and J. S. Baras, “Sensor scheduling using smart sensors, ” in Proceedings of the 46th IEEE Confer ence on Decision and Control , Dec. 2007, pp. 494–499. [13] M. P . V itus, W . Zhang, A. Abate, J. Hu, and C. J. T omlin, “On ef ficient sensor scheduling for linear dynamical systems, ” Automatica , vol. 48, no. 10, pp. 2482–2493, 2012. 31 [14] W . Zhang, M. P . V itus, J. Hu, A. Abate, and C. J. T omlin, “On the optimal solutions of the infinite-horizon linear sensor scheduling problem, ” in Proceedings of the 49th IEEE Conference on Decision and Contr ol , Dec. 2010, pp. 396–401. [15] L. Shi, P . Cheng, and J. Chen, “Optimal periodic sensor scheduling with limited resources, ” IEEE T ransactions on Automatic Contr ol , vol. 56, no. 9, pp. 2190–2195, Sept. 2011. [16] T . H. McLoughlin and M. Campbell, “Solutions to periodic sensor scheduling problems for formation flying missions in deep space, ” IEEE T ransactions on Aerospace and Electr onic Systems , v ol. 47, no. 2, pp. 1351–1368, April 2011. [17] J. L. Ny , E. Feron, and M. A. Dahleh, “Scheduling continuous-time Kalman filters, ” IEEE T ransactions on Automatic Contr ol , v ol. 56, no. 6, pp. 1381–1394, 2011. [18] E. Feron and C. Olivier , “T argets, sensors and infinite-horizon tracking optimality , ” in Pr oceedings of the 29th IEEE Confer ence on Decision and Control , 1990, vol. 4, pp. 2291–2292. [19] H. Zhang, J. Moura, and B. Krogh, “Dynamic field estimation using wireless sensor networks: Tradeof fs between estimation error and communication cost, ” IEEE T ransactions on Signal Pr ocessing , vol. 57, no. 6, pp. 2383–2395, June 2009. [20] R. Niu, P . K. V arshney , K. Mehrotra, and C. Mohan, “T emporally staggered sensors in multi-sensor target tracking systems, ” IEEE T ransactions on Aerospace and Electr onic Systems , v ol. 41, no. 3, pp. 794–808, July 2005. [21] E. Masazade, M. Fardad, and P . K. V arshney , “Sparsity-promoting extended Kalman filtering for target tracking in wireless sensor networks, ” IEEE Signal Pr ocessing Letters , vol. 19, no. 12, pp. 845–848, Dec. 2012. [22] F . Lin, M. Fardad, and M. R. Jovano vi ´ c, “Design of optimal sparse feedback gains via the alternating direction method of multipliers, ” IEEE T ransactions on Automatic Contr ol , vol. 58, pp. 2426–2431, 2013. [23] F . Liu, M. Fardad, and M. R. Jovano vi ´ c, “ Algorithms for leader selection in large dynamical networks: Noise-corrupted leaders, ” in Pr oceedings of the 50th IEEE Conference on Decision and Contr ol , Dec. 2011, pp. 2932–2937. [24] R. E. Kalman, “ A new approach to linear filtering and prediction problems, ” T ransactions of the ASME - Journal of Basic Engineering , v ol. 1, no. 82, pp. 35–45, 1960. [25] S. Boyd and L. V andenberghe, Con vex Optimization , Cambridge University Press, Cambridge, 2004. [26] S. Boyd, N. Parikh, E. Chu, B. Peleato, and J. Eckstein, “Distributed optimization and statistical learning via the alternating direction method of multipliers, ” F oundations and T rends in Machine Learning , vol. 3, no. 1, pp. 1–122, 2011. [27] F . Lin, M. Fardad, and M. R. Jov anovi ´ c, “ Augmented Lagrangian approach to design of structured optimal state feedback gains, ” IEEE T ransactions on Automatic Contr ol , vol. 56, no. 12, pp. 2923–2929, Dec. 2011. [28] T . Chen and B. Francis, Optimal Sampled-Data Contr ol Systems , Springer -V erlag, 1995. [29] H. Fassbender and D. Kressner , “Structured eigen value problems, ” GAMM-Mitt. , vol. 29, pp. 297–318, 2006. [30] T . Mori, N. Fukuma, and M. Kuwahara, “On the discrete Lyapunov matrix equation, ” IEEE T ransactions on Automatic Contr ol , v ol. 27, no. 2, pp. 463–464, Apr . 1982. [31] F . Lin, M. Fardad, and M. R. Jovano vi ´ c, “Design of optimal sparse feedback gains via the alternating direction method of multipliers, ” http://arxiv .org/abs/1111.6188v1 , 2011. [32] D. P . Bertsekas, Nonlinear Pro gramming , Athena Scientific, Belmont, MA, 1999. [33] R. H. Bartels and G. W . Ste wart, “Solution of the matrix equation AX + X B = C , ” Commun. ACM , vol. 15, no. 9, pp. 820–826, 1972. [34] A. Nemirovski, “Interior point polynomial time methods in conv ex programming, ” 2012 [Online], A vailable: http://www2.isye.gatec h.edu/ nemir ovs/Lect IPM.pdf .

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment