Subspace Properties of Network Coding and their Applications

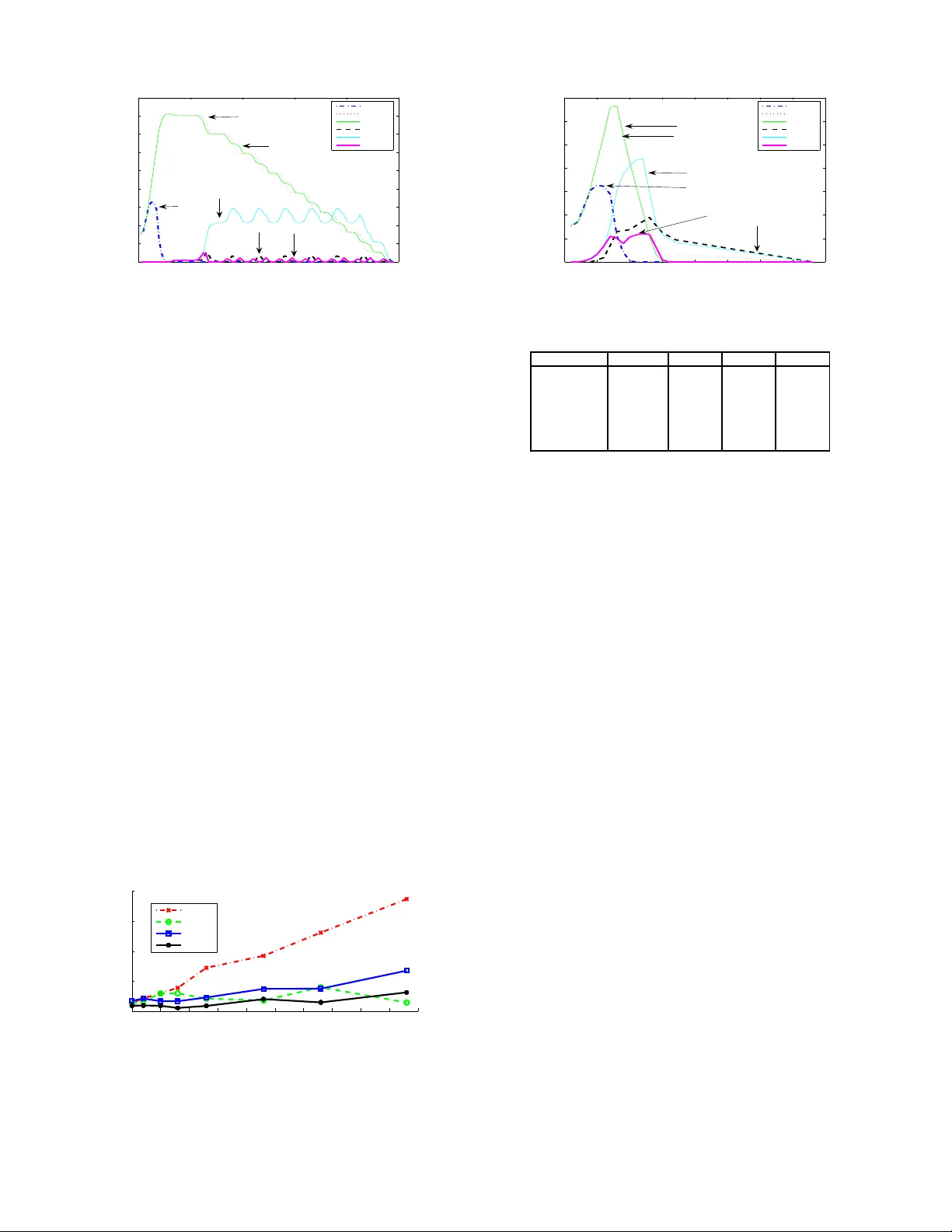

Systems that employ network coding for content distribution convey to the receivers linear combinations of the source packets. If we assume randomized network coding, during this process the network nodes collect random subspaces of the space spanned…
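To make the subspace view concrete, here is a toy sketch of randomized linear network coding. It is not the authors' construction, and it makes assumptions the abstract does not state. Arithmetic is over the prime field GF(257), chosen only for simplicity; deployed systems often use GF(2^8), which needs different arithmetic. The helper names `random_combination` and `rank_mod_p` are hypothetical. Each coded packet is sent with its coefficient vector, so the subspace a node collects is the span of the coefficient vectors it has received. Gaussian elimination mod p gives that subspace's dimension.

```python
import random

P = 257  # hypothetical prime field size, chosen for simple modular arithmetic


def random_combination(packets, p=P, rng=random):
    """Return (coeffs, coded): a random linear combination of the source packets over GF(p)."""
    k = len(packets)
    length = len(packets[0])
    coeffs = [rng.randrange(p) for _ in range(k)]
    # coded[j] = sum_i coeffs[i] * packets[i][j]  (mod p)
    coded = [sum(c * pkt[j] for c, pkt in zip(coeffs, packets)) % p for j in range(length)]
    return coeffs, coded


def rank_mod_p(rows, p=P):
    """Dimension of the row space of `rows` over GF(p), via Gauss-Jordan elimination."""
    m = [list(r) for r in rows]
    rank = 0
    ncols = len(m[0]) if m else 0
    for col in range(ncols):
        # Find a row at or below `rank` with a nonzero entry in this column.
        pivot = next((i for i in range(rank, len(m)) if m[i][col] % p != 0), None)
        if pivot is None:
            continue
        m[rank], m[pivot] = m[pivot], m[rank]
        inv = pow(m[rank][col], p - 2, p)  # modular inverse by Fermat; valid because p is prime
        m[rank] = [(x * inv) % p for x in m[rank]]
        # Eliminate this column from every other row.
        for i in range(len(m)):
            if i != rank and m[i][col] % p != 0:
                f = m[i][col]
                m[i] = [(a - f * b) % p for a, b in zip(m[i], m[rank])]
        rank += 1
        if rank == len(m):
            break
    return rank


if __name__ == "__main__":
    rng = random.Random(0)
    k, length = 4, 6
    source = [[rng.randrange(P) for _ in range(length)] for _ in range(k)]
    # A node that has heard 3 coded packets holds a subspace of dimension at most 3.
    received = [random_combination(source, rng=rng) for _ in range(3)]
    print("collected dimension:", rank_mod_p([c for c, _ in received]))
```

In this toy setting, a receiver can decode all k source packets once its collected subspace reaches dimension k. With random coefficients over a reasonably large field, each new packet is very likely to increase the dimension until that point.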

Authors: Mahdi Jafari Siavoshani, Christina Fragouli, Suhas Diggavi