Enabling and Optimizing Pilot Jobs using Xen based Virtual Machines for the HPC Grid Applications

The primary motivations for the uptake of virtualization have been resource isolation, capacity management and resource customization: isolation and capacity management allow providers to isolate users from the site and control their resource usage, while customization allows end-users to easily project the required environment onto a variety of sites. Various approaches have been taken to integrate virtualization with Grid technologies. In this paper, we propose an approach that combines virtualization with existing software infrastructure such as Pilot Jobs, with minimal change on the part of resource providers.

💡 Research Summary

The paper presents a novel integration of virtualization into the Pilot Job framework commonly used in high‑performance computing (HPC) grid environments. Pilot Jobs act as placeholders that acquire resources in advance and later execute user tasks, thereby improving scheduling efficiency and resource utilization. However, traditional implementations run directly on physical nodes, which limits isolation, capacity control, and environment customization. To address these shortcomings, the authors propose deploying each Pilot Job inside a lightweight Xen virtual machine (VM) while keeping changes to the existing grid middleware to a minimum.

The architecture consists of three main components: (1) a VM manager that interfaces with the existing Pilot Job scheduler, (2) a set of pre‑built VM images containing a minimal operating system and essential libraries, and (3) a resource‑control layer in the Xen hypervisor that enforces per‑VM quotas for CPU, memory, network bandwidth, and I/O. When a user submits a Pilot Job, the scheduler selects an appropriate VM image, requests Xen to instantiate the VM with the required resource limits, and then launches the Pilot Job inside that VM. This process is automated through a thin API layer, so users do not need to modify their job scripts or learn virtualization concepts.
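The instantiation step described above can be sketched as a function that turns a pilot job's resource request into a Xen domain configuration. This is only an illustration under stated assumptions: the `PilotVMRequest` class, the `render_xl_config` helper, the image path, and the bridge name are hypothetical and do not come from the paper. The sketch also assumes the `xl` toolstack configuration format (`type`, `cap`, `bootloader`, and the per-interface `rate` option), which may differ from the toolstack the authors used. Disk I/O quotas are not covered here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PilotVMRequest:
    """Hypothetical description of the VM that will host one pilot job."""
    name: str
    image_path: str        # pre-built guest disk image (assumed raw format)
    vcpus: int
    memory_mb: int
    cpu_cap_percent: int   # credit-scheduler cap; 100 means one physical CPU
    net_rate: str          # per-interface rate limit, e.g. "10Mb/s"
    bridge: str = "xenbr0"  # assumed host bridge name


def render_xl_config(req: PilotVMRequest) -> str:
    """Render an xl-style domain configuration enforcing the requested limits."""
    if req.vcpus < 1 or req.memory_mb < 1:
        raise ValueError("vcpus and memory_mb must be positive")
    if req.cpu_cap_percent < 0:
        raise ValueError("cpu_cap_percent must be non-negative")
    lines = [
        f'name = "{req.name}"',
        'type = "pv"',               # paravirtualized guest (assumes Xen 4.10+)
        'bootloader = "pygrub"',     # boot the kernel found inside the image
        f"vcpus = {req.vcpus}",
        f"memory = {req.memory_mb}",
        f"cap = {req.cpu_cap_percent}",
        # Positional disk syntax: target, format, virtual device, access mode.
        f"disk = ['{req.image_path},raw,xvda,rw']",
        f"vif = ['bridge={req.bridge},rate={req.net_rate}']",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    request = PilotVMRequest(
        name="pilot-001",
        image_path="/var/lib/xen/images/pilot-base.img",  # hypothetical path
        vcpus=2,
        memory_mb=2048,
        cpu_cap_percent=200,
        net_rate="10Mb/s",
    )
    print(render_xl_config(request))
```

A VM manager plug-in could write this text to a file and hand it to the toolstack to create the domain. Keeping the rendering step free of side effects makes it easy to check the limits before any VM is started.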

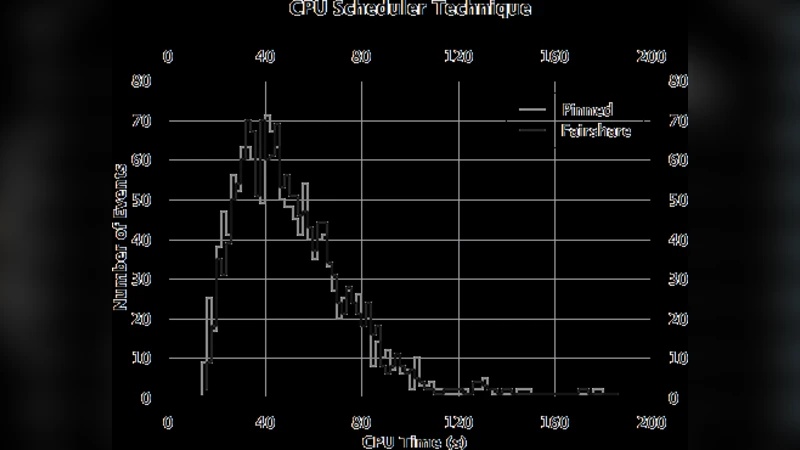

Performance considerations are central to the design. The authors choose Xen’s paravirtualization (PV) mode and heavily trim the VM image to keep boot times below ten seconds and memory overhead under 200 MB. They also employ a “copy‑on‑write” overlay mechanism that allows user‑specific software to be mounted at runtime without rebuilding the base image. Benchmarks with real grid workloads (e.g., ATLAS and LHCb simulations) and synthetic tests (HEP‑SPEC06, I/O‑intensive kernels) show that the VM‑based Pilot Jobs incur only a modest CPU overhead of 3–5 % and an I/O penalty of less than 12 % when using networked storage. In multi‑tenant scenarios, the hypervisor‑enforced isolation prevents a misbehaving job from exhausting shared resources, leading to higher overall system stability compared to bare‑metal Pilot Jobs.
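To make the reported overheads concrete, the following sketch estimates a pessimistic wall-clock time for a job moved from bare metal into a VM. It uses the upper ends of the figures quoted above (10 s boot, 5 % CPU overhead, 12 % I/O penalty). The linear model, the assumption that CPU and I/O phases do not overlap, and the function names are illustrative simplifications, not the paper's methodology.

```python
BOOT_SECONDS = 10.0   # upper bound on VM boot time quoted in the summary
CPU_OVERHEAD = 0.05   # upper end of the 3-5 % CPU overhead range
IO_OVERHEAD = 0.12    # upper bound on the networked-storage I/O penalty


def estimate_vm_runtime(cpu_seconds: float, io_seconds: float) -> float:
    """Pessimistic wall-clock estimate (seconds) for the job inside a VM.

    Assumes non-overlapping CPU and I/O phases and linear overheads.
    """
    if cpu_seconds < 0 or io_seconds < 0:
        raise ValueError("durations must be non-negative")
    return (
        BOOT_SECONDS
        + cpu_seconds * (1.0 + CPU_OVERHEAD)
        + io_seconds * (1.0 + IO_OVERHEAD)
    )


def relative_slowdown(cpu_seconds: float, io_seconds: float) -> float:
    """Fractional increase in runtime relative to the bare-metal baseline."""
    bare_metal = cpu_seconds + io_seconds
    if bare_metal <= 0:
        raise ValueError("baseline runtime must be positive")
    return estimate_vm_runtime(cpu_seconds, io_seconds) / bare_metal - 1.0


if __name__ == "__main__":
    # Hypothetical job: one hour of computation plus ten minutes of I/O.
    print(f"{estimate_vm_runtime(3600, 600):.0f} s")
    print(f"{relative_slowdown(3600, 600):.1%}")
```

For this hypothetical job the estimate is 10 + 3780 + 672 = 4462 s against a 4200 s baseline, a slowdown of roughly 6 %. The fixed boot cost matters mainly for short jobs, which is one reason a pilot that runs many tasks inside the same VM amortizes it well.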

Security benefits stem from the strong isolation provided by the hypervisor. Because user code runs inside a VM, malicious activity is confined to that VM and, short of a hypervisor vulnerability, cannot affect the host or other tenants. The authors also highlight the ease of snapshotting and rolling back VM images, which simplifies the management of diverse software environments and mitigates the “dependency hell” that often plagues HPC clusters.

From an operational standpoint, the solution leverages a central image repository combined with a content‑delivery network (CDN) to distribute VM images efficiently across geographically dispersed grid sites. Caching mechanisms reduce repeated transfers, and versioning allows administrators to track and revert image changes. The VM management module is implemented as a plug‑in to the existing Pilot Job scheduler, meaning that resource providers can adopt the approach without a wholesale redesign of their batch systems.
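The caching and versioning behavior described above can be illustrated with a small site-local cache that downloads an image only on a miss and verifies it against a checksum. This is a minimal sketch: the `fetch_image` function, the file naming scheme, and the caller-supplied `download` callable are hypothetical, and the summary does not say how the authors' repository identifies or validates images.

```python
import hashlib
from pathlib import Path
from typing import Callable


def sha256_of(path: Path) -> str:
    """Return the hex SHA-256 digest of a file, read in 1 MiB chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def fetch_image(
    name: str,
    version: str,
    expected_sha256: str,
    cache_dir: Path,
    download: Callable[[str, str, Path], None],
) -> Path:
    """Return a local path to the requested image version.

    `download(name, version, dest)` is a hypothetical callable that writes
    the image to `dest`; it is invoked only when the cache has no valid copy.
    """
    cache_dir.mkdir(parents=True, exist_ok=True)
    cached = cache_dir / f"{name}-{version}.img"
    if cached.exists() and sha256_of(cached) == expected_sha256:
        return cached  # cache hit: no transfer needed

    partial = cache_dir / f"{name}-{version}.img.part"
    download(name, version, partial)
    if sha256_of(partial) != expected_sha256:
        partial.unlink()
        raise ValueError(f"checksum mismatch for {name} {version}")
    partial.replace(cached)  # publish the verified file under its final name
    return cached
```

Keying the cache on both name and version lets several image versions coexist at a site, so reverting to an earlier version does not require another transfer. Downloading to a `.part` file first avoids exposing a half-written image to a concurrent VM start.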

The paper concludes that Xen‑based virtualization can be introduced into existing Pilot Job infrastructures with minimal disruption, delivering tangible gains in isolation, capacity management, and environment reproducibility while keeping performance penalties low. The authors suggest future work in three directions: (i) a systematic comparison with container‑based solutions such as Docker or Singularity, (ii) the development of dynamic scaling policies that automatically adjust VM resources based on workload characteristics, and (iii) extending the approach to other hypervisors (KVM, Hyper‑V) to broaden applicability. Such extensions would further bridge the gap between traditional grid computing and emerging cloud‑native paradigms, positioning Pilot Jobs as a flexible, secure, and scalable execution model for the next generation of scientific workloads.
