Modeling System Safety Requirements Using Input/Output Constraint Meta-Automata

Most recent software-related accidents have been system accidents. To validate the absence of system hazards caused by dysfunctional interactions, industry calls for approaches to modeling system safety requirements and interaction constraints among components and with environments (e.g., between humans and machines). This paper proposes a framework based on input/output constraint meta-automata, which restrict system behavior at the meta level. This approach can formally model safe interactions between a system and its environment or among its components. The framework differs from the traditional model-checking framework: it explicitly separates the tasks of product engineers and safety engineers, and it provides a top-down technique for modeling a system with safety constraints and for automatically composing a safe system that conforms to safety requirements. The contributions of this work include a formalization of system safety requirements and a way of automatically ensuring system safety.

💡 Research Summary

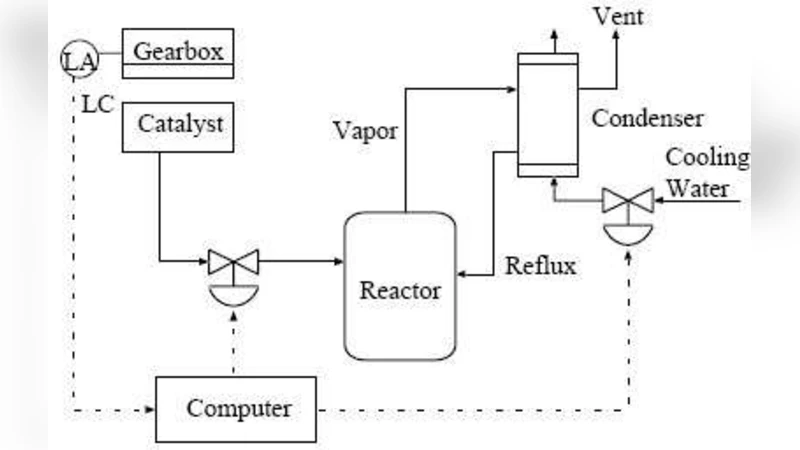

The paper addresses the growing prevalence of system‑level accidents in modern software‑intensive products, arguing that safety cannot be ensured merely by verifying the functional correctness of individual components. Instead, the interactions among components and between a system and its environment must be constrained to prevent hazardous emergent behavior. To this end, the authors introduce a formal framework based on Input/Output Constraint Meta‑Automata (I/O‑CMA). The framework consists of two hierarchical layers: the lower layer is a conventional automaton (e.g., DFA, LTS) that captures the functional behavior of the system, while the upper meta‑automaton specifies admissible sequences of input‑output event pairs. By defining safety requirements as constraints on these pairs, the meta‑automaton explicitly blocks unsafe interaction patterns such as “press accelerator then fail to apply brake” in an automotive context or “robot arm moves into human workspace without clearance” in collaborative robotics.
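To make the upper layer concrete, the following is a minimal sketch of a meta-automaton over input/output event pairs. It assumes that a constraint is encoded as a partial transition function and that any pair with no transition from the current meta state counts as forbidden. The class name `MetaAutomaton`, the method `admits`, and the event names in the brake policy are hypothetical illustrations, not the paper's formal definitions.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# An I/O event pair: (input from the environment, output of the system).
IOPair = tuple[str, str]


@dataclass
class MetaAutomaton:
    """Hypothetical encoding of an I/O constraint meta-automaton.

    Transitions are defined only for admissible I/O pairs; a pair with no
    transition from the current meta state is treated as forbidden.
    """

    initial: str
    delta: dict[tuple[str, IOPair], str] = field(default_factory=dict)

    def admits(self, trace: list[IOPair]) -> bool:
        """Return True if every I/O pair in the trace is allowed in sequence."""
        state = self.initial
        for pair in trace:
            nxt = self.delta.get((state, pair))
            if nxt is None:  # no admissible transition: the pattern is blocked
                return False
            state = nxt
        return True


# Hypothetical brake policy: while braking, the ECU must never open the throttle.
brake_policy = MetaAutomaton(
    initial="normal",
    delta={
        ("normal", ("accel_pedal", "throttle_up")): "normal",
        ("normal", ("brake_pedal", "apply_brake")): "braking",
        ("braking", ("brake_pedal", "apply_brake")): "braking",
        ("braking", ("release_brake", "idle")): "normal",
    },
)

safe_trace = [
    ("accel_pedal", "throttle_up"),
    ("brake_pedal", "apply_brake"),
    ("release_brake", "idle"),
    ("accel_pedal", "throttle_up"),
]
unsafe_trace = [("brake_pedal", "apply_brake"), ("accel_pedal", "throttle_up")]

print(brake_policy.admits(safe_trace))    # True
print(brake_policy.admits(unsafe_trace))  # False
```

Because the constraint is expressed over pairs rather than over the functional model's internal states, the same policy can be written by a safety engineer without knowing how the controller is implemented.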

The methodology proceeds as follows. First, product engineers construct the functional model of the system. Second, safety engineers formalize safety policies as I/O‑CMA constraints. Third, a composition operator automatically merges the functional model with the meta‑automaton, yielding a new system model that by construction satisfies all specified safety constraints. This top‑down approach separates the responsibilities of product and safety engineers, avoiding the role ambiguity that often hampers traditional model‑checking workflows. Moreover, the meta‑automaton can propagate constraints downward, ensuring that safety policies defined at a high architectural level are enforced consistently across all subordinate components.
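One way to picture the composition step is a synchronous product of the functional model with the meta-automaton that keeps only the transitions the meta-automaton admits. The sketch below assumes that reading; the paper's actual composition operator may be defined differently. The function `compose`, the type aliases, and the ECU model (which deliberately allows the throttle to open while braking) are hypothetical.

```python
from __future__ import annotations

from collections import deque

IOPair = tuple[str, str]
# Functional model (product engineer): state -> list of (I/O pair, next state).
Functional = dict[str, list[tuple[IOPair, str]]]
# Meta-automaton (safety engineer): (meta state, I/O pair) -> next meta state.
Meta = dict[tuple[str, IOPair], str]
Product = tuple[str, str]


def compose(func: Functional, f0: str, meta: Meta, m0: str):
    """Synchronous product that keeps only transitions the meta-automaton admits."""
    start: Product = (f0, m0)
    seen = {start}
    queue = deque([start])
    trans: dict[Product, list[tuple[IOPair, Product]]] = {}
    while queue:
        fs, ms = queue.popleft()
        kept = []
        for pair, f_next in func.get(fs, []):
            m_next = meta.get((ms, pair))
            if m_next is None:  # forbidden in this meta state: prune the transition
                continue
            target = (f_next, m_next)
            kept.append((pair, target))
            if target not in seen:  # explore only reachable product states
                seen.add(target)
                queue.append(target)
        trans[(fs, ms)] = kept
    return start, trans


# Hypothetical ECU model that (unsafely) lets the throttle open while braking.
ecu: Functional = {
    "idle": [(("accel_pedal", "throttle_up"), "driving"),
             (("brake_pedal", "apply_brake"), "stopping")],
    "driving": [(("accel_pedal", "throttle_up"), "driving"),
                (("brake_pedal", "apply_brake"), "stopping")],
    "stopping": [(("accel_pedal", "throttle_up"), "driving"),
                 (("release_brake", "idle"), "idle")],
}
brake_policy: Meta = {
    ("normal", ("accel_pedal", "throttle_up")): "normal",
    ("normal", ("brake_pedal", "apply_brake")): "braking",
    ("braking", ("brake_pedal", "apply_brake")): "braking",
    ("braking", ("release_brake", "idle")): "normal",
}

start, safe_model = compose(ecu, "idle", brake_policy, "normal")
# The throttle_up edge out of ("stopping", "braking") is pruned by the policy.
for state, edges in safe_model.items():
    print(state, [pair for pair, _ in edges])
```

Every path in the resulting model is, by construction, a trace the meta-automaton accepts. A fuller treatment would also need to handle states left with no enabled transition after pruning; this sketch does not.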

Compared with conventional model checking, which typically verifies safety properties after the system model has been built, the I/O‑CMA framework embeds safety directly into the model synthesis phase. This pre‑emptive guarantee reduces the need for extensive state‑space exploration and mitigates the state‑explosion problem. The authors demonstrate the approach with two case studies: an automotive electronic control unit interacting with driver inputs, and a collaborative industrial robot sharing a workspace with human operators. In both cases, the automatically composed safe system eliminated the hazardous scenarios while preserving normal functionality. Integration experiments with existing verification tools (e.g., SPIN, NuSMV) showed that the additional safety layer incurs modest computational overhead but yields significant reductions in verification time and engineering effort.
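For contrast, the following toy sketch shows the kind of post-hoc check that conventional model checking performs. It runs an explicit-state, breadth-first search over an already-built model, looks for a reachable hazardous transition, and returns a shortest counterexample trace. It is only an illustration: tools such as SPIN and NuSMV use far more sophisticated explicit-state or symbolic algorithms. The function `find_hazard`, the encoding of a hazard as a (state, I/O pair) transition, and the ECU model are hypothetical.

```python
from __future__ import annotations

from collections import deque

IOPair = tuple[str, str]
Functional = dict[str, list[tuple[IOPair, str]]]


def find_hazard(func: Functional, init: str, hazards: set[tuple[str, IOPair]]):
    """Breadth-first reachability check: return a shortest trace that ends by
    taking a hazardous (state, I/O pair) transition, or None if none is reachable."""
    parent: dict[str, tuple[str, IOPair] | None] = {init: None}
    queue = deque([init])
    while queue:
        state = queue.popleft()
        for pair, nxt in func.get(state, []):
            if (state, pair) in hazards:
                # Walk parent links back to the initial state to build the counterexample.
                trace = [pair]
                cur = state
                while parent[cur] is not None:
                    prev, via = parent[cur]
                    trace.append(via)
                    cur = prev
                return list(reversed(trace))
            if nxt not in parent:
                parent[nxt] = (state, pair)
                queue.append(nxt)
    return None


# Hypothetical ECU model that (unsafely) lets the throttle open while braking.
ecu: Functional = {
    "idle": [(("accel_pedal", "throttle_up"), "driving"),
             (("brake_pedal", "apply_brake"), "stopping")],
    "driving": [(("accel_pedal", "throttle_up"), "driving"),
                (("brake_pedal", "apply_brake"), "stopping")],
    "stopping": [(("accel_pedal", "throttle_up"), "driving"),
                 (("release_brake", "idle"), "idle")],
}
hazards = {("stopping", ("accel_pedal", "throttle_up"))}

counterexample = find_hazard(ecu, "idle", hazards)
print(counterexample)

# Manually repaired model: the hazardous edge is removed after the fact.
fixed: Functional = {**ecu, "stopping": [(("release_brake", "idle"), "idle")]}
print(find_hazard(fixed, "idle", hazards))  # None
```

In this workflow the hazard is found only after the model exists and must then be repaired by hand. Under the composition approach, the same transition would have been pruned during synthesis.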

In summary, the paper contributes a rigorous method for formalizing system safety requirements as input/output constraints, a systematic composition technique that guarantees compliance, and a clear division of labor between product and safety engineering teams. The approach is especially relevant for cyber‑physical systems, human‑machine interfaces, and any domain where complex component interactions can give rise to latent hazards. Future work is suggested on scaling the meta‑automaton to real‑time systems, optimizing the composition algorithm for large‑scale designs, and expanding industrial case studies to validate the framework in production environments.
