Nested Lattice Codes for Gaussian Relay Networks with Interference

In this paper, a class of relay networks is considered. We assume that, at a node, outgoing channels to its neighbors are orthogonal, while incoming signals from neighbors can interfere with each other. We are interested in the multicast capacity of these networks. As a subclass, we first focus on Gaussian relay networks with interference and find an achievable rate using a lattice coding scheme. It is shown that there is a constant gap between our achievable rate and the information theoretic cut-set bound. This is similar to the recent result by Avestimehr, Diggavi, and Tse, who showed such an approximate characterization of the capacity of general Gaussian relay networks. However, our achievability uses a structured code instead of a random one. Using the same idea used in the Gaussian case, we also consider linear finite-field symmetric networks with interference and characterize the capacity using a linear coding scheme.

💡 Research Summary

The paper investigates a class of relay networks in which each node’s outgoing links to its neighbors are orthogonal (e.g., separated in time, frequency, or code), while the incoming signals from those neighbors interfere with one another at the receiving node. This asymmetry—non‑interfering transmissions but interfering receptions—captures many practical wireless scenarios such as multi‑hop sensor or back‑haul networks. The authors focus on the multicast problem, where a single source wishes to deliver the same message to multiple destinations, and they aim to characterize the network’s capacity under this model.
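The channel model described above can be written compactly. The notation below is an assumption made for illustration (the symbols $h_{ij}$, $x_{ij}$, $z_j$, and $\mathcal{N}(j)$ are not taken from the paper): node $i$ sends a separate signal $x_{ij}$ on its orthogonal link to each neighbor $j$, and node $j$ observes the superposition of everything sent to it.

```latex
% Received signal at node j in the Gaussian case (illustrative notation):
%   N(j)   : set of neighbors with a link into node j
%   x_{ij} : signal node i sends on its dedicated (orthogonal) link to j
%   h_{ij} : channel gain of link i -> j
%   z_j    : additive Gaussian noise at node j
y_j = \sum_{i \in \mathcal{N}(j)} h_{ij}\, x_{ij} + z_j
```

Orthogonality shows up in the separate index pair on each transmitted signal $x_{ij}$; interference shows up in the sum over $i$.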

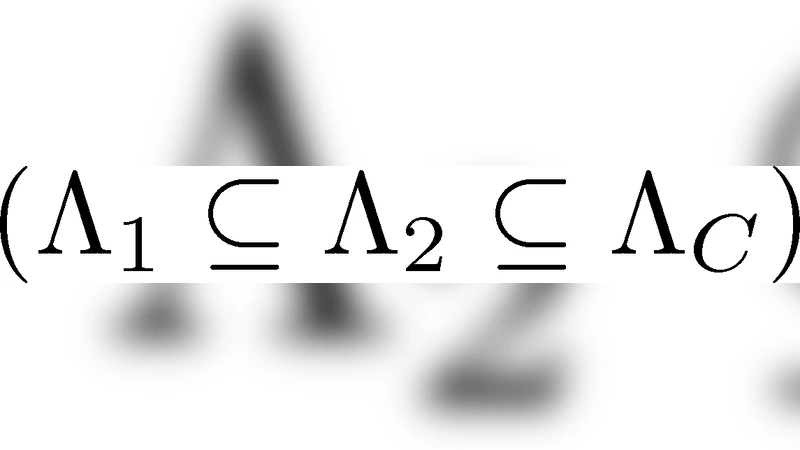

For the Gaussian version of the network, the authors propose an achievability scheme based on nested lattice codes. A nested lattice code pairs a coarse lattice, used for shaping (it enforces the power constraint), with a fine lattice whose points carry the information. Each transmitter maps its message to a point of the fine lattice and reduces it modulo the coarse lattice to satisfy the power constraint. Because a lattice is closed under addition, a sum of lattice codewords is again a lattice point, so the superposition received at a relay is a lattice point plus Gaussian noise. Each relay therefore performs a “compute‑and‑forward” operation: it decodes an integer linear combination of the transmitted lattice codewords rather than trying to recover each individual message. The relay then re‑encodes the decoded combination with the same lattice structure and forwards it to the next hop. This process repeats throughout the network, and each destination recovers the original message by solving the system of linear equations formed by the combinations it collects.
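The decode-the-sum step can be illustrated with a deliberately simplified toy. The sketch below is not the paper's construction: it assumes a one-dimensional nested pair (fine lattice Z, coarse lattice qZ), unit channel gains, no dithering, and small noise, and all function names are hypothetical. It only shows why a relay can recover the modulo-q sum of two messages without decoding either one.

```python
import numpy as np

def mod_lattice(x, q):
    """Reduce x modulo the coarse lattice qZ into the interval [-q/2, q/2)."""
    return (x + q / 2) % q - q / 2

def encode(msg, q):
    """Map symbols in {0, ..., q-1} to fine-lattice points (integers),
    reduced modulo the coarse lattice so that |x| <= q/2 (toy power constraint)."""
    return mod_lattice(np.asarray(msg, dtype=float), q)

def relay_decode(y, q):
    """Quantize to the nearest fine-lattice point (an integer), then reduce
    modulo q. The result is the sum of the messages mod q, not the messages."""
    nearest = np.round(y)
    return np.mod(nearest, q).astype(int)

# Toy run: two transmitters, one relay, unit gains (an assumption).
q = 7
m1 = np.array([0, 3, 6, 5])
m2 = np.array([1, 4, 6, 2])
x1, x2 = encode(m1, q), encode(m2, q)

rng = np.random.default_rng(0)
noise = 0.05 * rng.standard_normal(m1.shape)  # small enough to round away
y = x1 + x2 + noise                           # interference at the relay

print(relay_decode(y, q))   # decoded combination
print((m1 + m2) % q)        # what the relay is supposed to recover
```

A real scheme would use high-dimensional lattices, and non-integer channel gains would require additional scaling at the relay; the toy keeps only the closure-under-addition property that the paragraph above relies on.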

The central theoretical result is that the rate achieved by this lattice‑based scheme lies within a constant gap (independent of channel gains, noise variance, or network size) of the cut‑set upper bound on the multicast capacity. In other words, the scheme characterizes the capacity approximately, much as the recent result of Avestimehr, Diggavi, and Tse (ADT) does for general Gaussian relay networks, but it does so with an explicit structured code rather than a random code ensemble. The constant gap is typically on the order of one or two bits per channel use, which is negligible for high‑rate applications.
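In symbols, the statement has the following shape. The cut-set bound below is the standard one for multicast; the names $R_{\text{lattice}}$ and $\kappa$ are placeholders introduced here, and no specific value of $\kappa$ is implied.

```latex
% Cut-set upper bound on the multicast capacity C:
%   D      : set of destinations
%   \Omega : a cut, i.e. a set of nodes containing the source but not d
\bar{C} \;=\; \max_{p(x)} \; \min_{d \in D} \; \min_{\Omega}
        I\bigl(X_{\Omega};\, Y_{\Omega^{c}} \,\big|\, X_{\Omega^{c}}\bigr)

% Constant-gap statement: the achievable rate of the lattice scheme satisfies
\bar{C} - \kappa \;\le\; R_{\text{lattice}} \;\le\; C \;\le\; \bar{C}
```

Because $C$ is sandwiched between $R_{\text{lattice}}$ and $\bar{C}$, a bounded $\kappa$ pins the capacity down to within $\kappa$.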

Beyond the Gaussian setting, the authors extend the same conceptual framework to linear finite‑field symmetric networks. In this discrete model, each transmitted symbol belongs to a finite field (e.g., GF(2^q)), and the channel operation is a linear transformation over that field. Linear codes take the place of nested lattices, and each relay simply forwards the linear combination it receives. The authors prove that, in this case, the achievable rate exactly matches the cut‑set bound, thereby providing a full capacity characterization.
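A small sketch can make the finite-field picture concrete. It works over a prime field GF(p) rather than GF(2^q) to keep the arithmetic short, and the coefficient matrix, gains, and function names are all hypothetical: `receive` models interference as a field-linear sum, and `solve_mod_p` shows how a destination that knows the end-to-end coefficients could recover the message from the combinations it has collected.

```python
P = 5  # a small prime, so integers mod P form the field GF(5)

def receive(symbols, gains, p=P):
    """Interference at a node: a linear combination of incoming symbols over GF(p)."""
    return sum(h * x for h, x in zip(gains, symbols)) % p

def solve_mod_p(A, y, p=P):
    """Solve A m = y over GF(p) by Gauss-Jordan elimination.
    Returns the message m, or None if A lacks full column rank.
    Assumes the system is consistent."""
    n_rows, n_cols = len(A), len(A[0])
    # Augmented matrix [A | y], reduced mod p.
    M = [[a % p for a in row] + [b % p] for row, b in zip(A, y)]
    pivot_row = 0
    for col in range(n_cols):
        # Find a row at or below pivot_row with a nonzero entry in this column.
        pr = next((r for r in range(pivot_row, n_rows) if M[r][col] != 0), None)
        if pr is None:
            return None
        M[pivot_row], M[pr] = M[pr], M[pivot_row]
        inv = pow(M[pivot_row][col], -1, p)  # modular inverse (Python 3.8+)
        M[pivot_row] = [(v * inv) % p for v in M[pivot_row]]
        # Eliminate this column from every other row.
        for r in range(n_rows):
            if r != pivot_row and M[r][col] != 0:
                f = M[r][col]
                M[r] = [(a - f * b) % p for a, b in zip(M[r], M[pivot_row])]
        pivot_row += 1
    return [M[i][n_cols] for i in range(n_cols)]

# Hypothetical example: a two-symbol message and two collected combinations.
message = [2, 3]
A = [[1, 2],
     [3, 4]]                               # assumed end-to-end coefficients
y = [receive(message, row) for row in A]   # what the destination observes
print(solve_mod_p(A, y))                   # recovers the message when A is invertible
```

Decoding succeeds exactly when the coefficient matrix has full column rank over the field, which is the algebraic counterpart of the cut being able to carry the message.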

Key contributions of the paper can be summarized as follows:

- Model Definition – The paper formalizes a relay network with orthogonal outgoing links and interfering incoming links, a realistic abstraction for many wireless systems.

- Lattice‑Based Achievability – It introduces a nested‑lattice compute‑and‑forward scheme that exploits the linearity of lattice codes to handle interference without requiring each relay to decode individual messages.

- Constant‑Gap Approximation – It proves that the lattice scheme achieves rates within a constant gap of the information‑theoretic cut‑set bound, paralleling the approximate capacity characterization of ADT while offering a constructive, structured code.

- Finite‑Field Extension – By translating the approach to linear finite‑field networks, the paper obtains an exact capacity result for that class, demonstrating the versatility of the underlying linear‑coding principle.

- Practical Implications – The use of structured codes facilitates implementation (e.g., via low‑complexity lattice decoders) and aligns with physical‑layer network coding concepts, suggesting applicability to emerging 5G/6G multi‑hop, device‑to‑device, and IoT scenarios.

Overall, the work shows that structured coding—specifically nested lattice codes—can achieve near‑optimal performance in relay networks with interference, bridging the gap between information‑theoretic capacity approximations and practical coding strategies. Future research directions include reducing lattice decoding complexity, handling asymmetric power constraints, and extending the framework to networks with multiple sources or more general interference patterns.
